Humans are not a good target for calm technology.

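Most people believe technology should adapt to the way humans work and to their cognitive habits. The author, however, argues that humans are not a suitable target for "calm technology" because humans need interactions with a high cognitive load. This view challenges the principles of user-centered design and suggests that we should rethink the basic patterns of human-computer interaction.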

In 1977, social psychologist James Gibson coined the term “affordance” to denote “action possibilities provided to the actor by the environment”.[2] A decade later, Donald Norman introduced affordances to the field of object design in his well-known book The Psychology of Everyday Things (1988), after which the concept quickly made its way into all corners of the humanities and social sciences, including the study of Human-Computer Interaction (HCI).

Almost all beginners to RDF go through a sort of "identity crisis" phase, where they confuse people with their names, and documents with their titles. For example, it is common to see statements such as: <http://example.org/> dc:creator "Bob" . However, Bob is just a literal string, so how can a literal string write a document?

This could be trivially solved by extending the syntax to include some notation that has the semantics of a well-defined reference but the ergonomics of a quoted string. So if the notation used the sigil ~ (for example), then ~"Bob" could denote an implicitly defined entity that is, through some type-/class-specific mechanism, associated with the string "Bob".
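One way to read this proposal, sketched in Python with triples as plain tuples. The ~"Bob" sigil is the hypothetical notation from the note above; the `expand_sigil` helper, the blank-node labels, and the use of `foaf:name` as the "type-/class-specific mechanism" are all assumptions made for illustration, not part of any RDF syntax.

```python
from itertools import count

# Counter used to mint fresh blank-node labels (_:b0, _:b1, ...).
_blank_ids = count()

def expand_sigil(subject, predicate, name, naming_property="foaf:name"):
    """Expand a hypothetical `subject predicate ~"name"` statement.

    Rather than pointing the predicate at the literal itself, mint a blank
    node for the implied entity and attach the string to that node.
    """
    node = f"_:b{next(_blank_ids)}"
    return [
        (subject, predicate, node),            # the document was created by some entity
        (node, naming_property, f'"{name}"'),  # and that entity is named by the string
    ]

triples = expand_sigil("<http://example.org/>", "dc:creator", "Bob")
for s, p, o in triples:
    print(s, p, o, ".")
```

The author keeps the ergonomics of typing a quoted string, while the resulting graph says that an entity named "Bob" created the document, not that a string did.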

configuring xterm

Ugh. This is the real problem, I'd wager. Nobody wants to derp around configuring the Xresources to make xterm behave acceptably—i.e. just to reach parity with Gnome Terminal—if they can literally just open up Gnome Terminal and use that.

I say this as a Vim user. (Who doesn't do any of the souping-up/ricing that is commonly associated with Vim.)

It is worth considering an interesting idea, though: what if someone wrote a separate xterm configuration utility? Suppose it started out where the default would be to produce the settings that would most closely match the vanilla Gnome Terminal (or some other contemporary desktop default) experience, but showed you the exact same set of knobs that xterm's modern counterpart gives you (by way of its settings dialog) to tweak this behavior? And then beyond that you could fiddle with the "advanced" settings to exercise the full breadth of the sort of control that xterm gives you? Think Firefox preferences/settings/options vs. dropping down to about:config for your odd idiosyncrasy.

Since this is just an Xresources file, it would be straightforward to build this sort of frontend as an in-browser utility... (or a triple script, even).
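A rough sketch of the core of such a utility: map a handful of friendly "basic" knobs onto xterm resource names and pass "advanced" resources through untouched. The friendly names, the `render_xresources` function, and the starting values are assumptions for illustration (the values are placeholders, not a verified match for Gnome Terminal's defaults); the resource names on the right-hand side are standard xterm resources.

```python
# Friendly setting name -> xterm resource name.
# The friendly names are invented for this sketch.
BASIC_KNOBS = {
    "font": "faceName",
    "font_size": "faceSize",
    "background": "background",
    "foreground": "foreground",
    "scrollback_lines": "saveLines",
    "copy_to_clipboard": "selectToClipboard",
}

# Placeholder starting point; a real tool would tune these to match
# whichever desktop terminal it is imitating.
STARTING_VALUES = {
    "font": "Monospace",
    "font_size": 11,
    "background": "white",
    "foreground": "black",
    "scrollback_lines": 10000,
    "copy_to_clipboard": True,
}

def format_value(value):
    # bool must be checked first: in Python, bool is a subclass of int.
    if isinstance(value, bool):
        return "true" if value else "false"
    return str(value)

def render_xresources(basic=None, advanced=None):
    """Return Xresources text for the basic knobs plus raw advanced resources."""
    settings = {**STARTING_VALUES, **(basic or {})}
    lines = [
        f"XTerm*{BASIC_KNOBS[name]}: {format_value(value)}"
        for name, value in settings.items()
    ]
    # "Advanced" entries are already xterm resource names (the about:config tier).
    lines += [
        f"XTerm*{resource}: {format_value(value)}"
        for resource, value in (advanced or {}).items()
    ]
    return "\n".join(lines) + "\n"

output = render_xresources({"font_size": 14}, {"bellIsUrgent": True})
print(output)
```

The generated text could be saved to a file and loaded with `xrdb -merge`.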

Döring, Tanja, and Steffi Beckhaus. “The Card Box at Hand: Exploring the Potentials of a Paper-Based Tangible Interface for Education and Research in Art History.” In Proceedings of the 1st International Conference on Tangible and Embedded Interaction, 87–90. TEI ’07. New York, NY, USA: Association for Computing Machinery, 2007. https://doi.org/10.1145/1226969.1226986.

This looks fascinating with respect to note taking and the subsequent arranging, outlining, and use of notes, particularly in the human-computer interaction space for creating usable user interfaces.

Internet ‘algospeak’ is changing our language in real time, from ‘nip nops’ to ‘le dollar bean’ by [[Taylor Lorenz]]

shifts in language and meaning of words and symbols as the result of algorithmic content moderation

instead of slow semantic shifts, content moderation is actively pushing shifts of words and their meanings

article suggested by this week's Dan Allosso Book club on Pirate Enlightenment

Wordcraft Writers Workshop by Andy Coenen - PAIR, Daphne Ippolito - Brain Research, Ann Yuan - PAIR, Sehmon Burnam - Magenta

cross reference: ChatGPT

How important is the concrete syntax of their language, in contrast to the abstract concepts behind it? What I mean to say is: can a somewhat awkward concrete syntax be an obstacle when it comes to acceptance?

programs with type errors must still be specified to have a well-defined semantics

Use this to explain why Bernhardt's JS wat (or, really, folks' gut reaction to what they're seeing) is misleading.

Mark: Cathy Marshall at Xerox PARC originally started speaking about information gardening. She developed an early tool that’s the inspiration for the Tinderbox map view, in which you would have boxes but no lines. It was a spatial hypertext system, a system for connecting things by placing them near each other rather than drawing a line between them. Very interesting abstract representational problem, but also it turned out to be tremendously useful.

Cathy Marshall was an early digital gardener!

People should be able to teach their computers the meaning behind their data

His major work in recent years has been on information visualization, originating the treemap concept for hierarchical data.[30]

A mental model is what the user believes about the system at hand.

“Mental models are one of the most important concepts in human–computer interaction (HCI).”

— Nielsen Norman Group

Wahn, B., & Kingstone, A. (2020, April 30). Sharing task load with artificial – yet human-like – co-actors. https://doi.org/10.31234/osf.io/2am8y

Reflective Design Strategies

In addition to shaping our principles or objectives, our foundational influences and case studies have also helped us articulate strategies for reflective design. The first three strategies identified here speak to characteristics of designs that encourage reflection by users. The second group of strategies provides ways for reflecting on the process of design.

verbatim from subheads in this section

1. Provide for interpretive flexibility.

2. Give users license to participate.

3. Provide dynamic feedback to users.

4. Inspire rich feedback from users.

5. Build technology as a probe.

6. Invert metaphors and cross boundaries.

Some Reflective Design Challenges

The reflective design strategies offer potential design interventions but lack advice on how to evaluate them against each other.

"Designing for appropriation requires recognizing that users already interact with technology not just on a superficial, task-centered level, but with an awareness of the larger social and cultural embeddedness of the activity."

Principles of Reflective Design

verbatim from subheads in this section

Designers should use reflection to uncover and alter the limitations of design practice

Designers should use reflection to re-understand their own role in the technology design process.

Designers should support users in reflecting on their lives.

Technology should support skepticism about and reinterpretation of its own working.

Reflection is not a separate activity from action but is folded into it as an integral part of experience

Dialogic engagement between designers and users through technology can enhance reflection.

Reflective design, like reflection-in-action, advocates practicing research and design concomitantly, and not only as separate disciplines. We also subscribe to a view of reflection as a fully engaged interaction and not a detached assessment. Finally, we draw from the observation that reflection is often triggered by an element of surprise, where someone moves from knowing-in-action, operating within the status quo, to reflection-in-action, puzzling out what to do next or why the status quo has been disrupted

Influences from reflection-in-action for reflective design values/methods.

In this effort, reflection-in-action provides a ground for uniting theory and practice; whereas theory presents a view of the world in general principles and abstract problem spaces, practice involves both building within these generalities and breaking them down.

A more improvisational, intuitive and visceral process of rethinking/challenging the initial design frame.

Popular with HCI and CSCW designers

CTP is a key method for reflective design, since it offers strategies to bring unconscious values to the fore by creating technical alternatives. In our work, we extend CTP in several ways that make it particularly appropriate for HCI and critical computing.

Ways in which Sengers et al. propose extending CTP for HCI needs:

• incorporate both designer/user reflection on technology use and its design

• integrate reflection into design even when there is no specific "technical impasse" or metaphor breakdown

• driven by critical concerns, not simply technical problems

CTP synthesizes critical reflection with technology production as a way of highlighting and altering unconsciously-held assumptions that are hindering progress in a technical field.

Definition of critical technical practice.

This approach is grounded in AI rather than HCI

(verbatim from the paper) "CTP consists of the following moves:

• identifying the core metaphors of the field

• noticing what, when working with those metaphors, remains marginalized

• inverting the dominant metaphors to bring that margin to the center

• embodying the alternative as a new technology"

Ludic design promotes engagement in the exploration and production of meaning, providing for curiosity, exploration and reflection as key values. In other words, ludic design focuses on reflection and engagement through the experience of using the designed object.

Definition of ludic design.

Offers a more playful approach than critical design.

goal is to push design research beyond an agenda of reinforcing values of consumer culture and to instead embody cultural critique in designed artifacts. A critical designer designs objects not to do what users want and value, but to introduce both designers and users to new ways of looking at the world and the role that designed objects can play for them in it.

Definition of critical design.

This approach tends to be art-based and intentionally provocative, rather than a practical design method for instilling a certain sensibility in the technology design process.

value-sensitive design method (VSD). VSD provides techniques to elucidate and answer values questions during the course of a system's design.

Definition of value-sensitive design.

(verbatim from the paper)

*"VSD employs three methods:

• conceptual investigations drawing on moral philosophy, which identify stakeholders, fundamental values, and trade-offs among values pertinent to the design

• empirical investigations using social-science methods to uncover how stakeholders think about and act with respect to the values involved in the system

• technical investigations which explore the links between specific technical decisions and the values and practices they aid and hinder"*

From participatory design, we draw several core principles, most notably the reflexive recognition of the politics of design practice and a desire to speak to the needs of multiple constituencies in the design process.

Description of participatory design which has a more political angle than user-centered design, with which it is often equated in HCI

PD strategies tend to be used to support existing practices identified collaboratively by users and designers as a design-worthy project. While values clashes between designers and different users can be elucidated in this collaboration, the values which users and designers share do not necessarily go examined. For reflective design to function as a design practice that opens new cultural possibilities, however, we need to question values which we may unconsciously hold in common. In addition, designers may need to introduce values issues which initially do not interest users or make them uncomfortable.

Differences between participatory design practices and reflective design

We define 'reflection' as referring to critical reflection, or bringing unconscious aspects of experience to conscious awareness, thereby making them available for conscious choice. This critical reflection is crucial to both individual freedom and our quality of life in society as a whole, since without it, we unthinkingly adopt attitudes, practices, values, and identities we might not consciously espouse. Additionally, reflection is not a purely cognitive activity, but is folded into all our ways of seeing and experiencing the world.

Definition of critical reflection

Our perspective on reflection is grounded in critical theory, a Western tradition of critical reflection embodied in various intellectual strands including Marxism, feminism, racial and ethnic studies, media studies and psychoanalysis.

Definition of critical theory

Critical theory argues that our everyday values, practices, perspectives, and sense of agency and self are strongly shaped by forces and agendas of which we are normally unaware, such as the politics of race, gender, and economics. Critical reflection provides a means to gain some awareness of such forces as a first step toward possible change.

Critical theory in practice

We believe that, for those concerned about the social implications of the technologies we build, reflection itself should be a core technology design outcome for HCI. That is to say, technology design practices should support both designers and users in ongoing critical reflection about technology and its relationship to human life.

Critical reflection can/should support designers and users.

Outliers: All data sets have an expected range of values, and any actual data set also has outliers that fall below or above the expected range. (Space precludes a detailed discussion of how to handle outliers for statistical analysis purposes; see Barnett & Lewis, 1994, for details.) How to clean outliers strongly depends on the goals of the analysis and the nature of the data.

Outliers can signal either an unanticipated range of behavior or errors.
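Because the right treatment depends on the analysis, one cautious option is to flag out-of-range values instead of deleting them. A minimal sketch, assuming a hypothetical list of page dwell times in seconds and an expected range of 0 to 3600; both the data and the bounds are invented for illustration.

```python
def flag_outliers(values, low, high):
    """Pair each value with True if it lies inside the expected range [low, high]."""
    return [(v, low <= v <= high) for v in values]

# Hypothetical dwell times in seconds.
dwell_seconds = [12, 48, 3, 86400, 31, -5]

flagged = flag_outliers(dwell_seconds, low=0, high=3600)
outliers = [v for v, in_range in flagged if not in_range]
print(outliers)  # [86400, -5]
```

Here 86400 (a tab left open for a day) may be unanticipated but real behavior, while -5 can only be an error; keeping both flagged lets the analyst decide case by case.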

Understanding the structure of the data: In order to clean log data properly, the researcher must understand the meaning of each record, its associated fields, and the interpretation of values. Contextual information about the system that produced the log should be associated with the file directly (e.g., “Logging system 3.2.33.2 recorded this file on 12-3-2012”) so that if necessary the specific code that generated the log can be examined to answer questions about the meaning of the record before executing cleaning operations. The potential misinterpretations take many forms, which we illustrate with encoding of missing data and capped data values.

Context of the data collection and how it is structured is also a critical need.

Example: coding missing info as "0" risks misinterpretation; code it instead as NIL, NDN, or something else distinguishable from real data.
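A small worked example of why the encoding matters, using Python's `None` as the distinguishable marker. The dwell-time numbers are invented for illustration; the third record is the one with no measurement.

```python
from statistics import mean

# The same four hypothetical records, with the missing value coded two ways.
coded_as_zero = [30, 45, 0, 60]
coded_as_none = [30, 45, None, 60]

# With 0 as the marker, the missing record silently drags the average down.
mean_with_zero = mean(coded_as_zero)

# With None, missing records can be counted and excluded explicitly.
observed = [v for v in coded_as_none if v is not None]
missing_count = len(coded_as_none) - len(observed)
mean_observed = mean(observed)

print(mean_with_zero, mean_observed, missing_count)  # 33.75 45 1
```

With the zero encoding there is also no way to tell a missing measurement from a genuine zero-second visit.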

Data transformations: The goal of data-cleaning is to preserve the meaning with respect to an intended analysis. A concomitant lesson is that the data-cleaner must track all transformations performed on the data.

Changes to data during clean up should be annotated.

Incorporate metadata about the "chain of change" to accompany the written memo.
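One lightweight way to keep such a "chain of change" is to route every cleaning step through a helper that records what it did. This is only a sketch: the `apply_step` and `dedupe` helpers, the record layout, and the two cleaning steps are all hypothetical.

```python
def apply_step(records, log, description, transform):
    """Apply one cleaning step and append a note about its effect to the log."""
    cleaned = transform(records)
    log.append({
        "step": description,
        "rows_in": len(records),
        "rows_out": len(cleaned),
    })
    return cleaned

def dedupe(records):
    """Drop exact duplicate records, keeping the first occurrence."""
    seen, unique = set(), []
    for record in records:
        key = tuple(sorted(record.items()))
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique

# Hypothetical raw log records.
raw = [
    {"user": "a", "ms": 120},
    {"user": "b", "ms": -1},
    {"user": "a", "ms": 120},
]

change_log = []
step1 = apply_step(raw, change_log, "drop negative durations",
                   lambda rs: [r for r in rs if r["ms"] >= 0])
step2 = apply_step(step1, change_log, "drop exact duplicates", dedupe)

for entry in change_log:
    print(entry)
```

The resulting `change_log` can be saved alongside the cleaned data so that the memo and the data carry the same account of what was changed.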

Data Cleaning

A basic axiom of log analysis is that the raw data cannot be assumed to correctly and completely represent the data being recorded. Validation is really the point of data cleaning: to understand any errors that might have entered into the data and to transform the data in a way that preserves the meaning while removing noise. Although we discuss web log cleaning in this section, it is important to note that these principles apply more broadly to all kinds of log analysis; small datasets often have similar cleaning issues as massive collections. In this section, we discuss the issues and how they can be addressed.

How can logs possibly go wrong?

Logs suffer from a variety of data errors and distortions. The common sources of errors we have seen in practice include:

Common sources of errors:

• Missing events

• Dropped data

• Misplaced semantics (encoding log events differently)

In addition, real world events, such as the death of a major sports figure or a political event can often cause people to interact with a site differently. Again, be vigilant in sanity checking (e.g., look for an unusual number of visitors) and exclude data until things are back to normal.

Important consideration for temporal event RQs in refugee study -- whether external events influence use of natural disaster metaphors.

Recording accurate and consistent time is often a challenge. Web log files record many different timestamps during a search interaction: the time the query was sent from the client, the time it was received by the server, the time results were returned from the server, and the time results were received on the client. Server data is more robust but includes unknown network latencies. In both cases the researcher needs to normalize times and synchronize times across multiple machines. It is common to divide the log data up into “days,” but what counts as a day? Is it all the data from midnight to midnight at some common time reference point or is it all the data from midnight to midnight in the user’s local time zone? Is it important to know if people behave differently in the morning than in the evening? Then local time is important. Is it important to know everything that is happening at a given time? Then all the records should be converted to a common time zone.

Challenges of using time-based log data are similar to difficulties in the SBTF time study using Slack transcripts, social media, and Google Sheets
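The "what counts as a day?" question can be made concrete with a single event. In this sketch the timestamp and the user's offset (a fixed UTC-8) are invented for illustration; a real study would more likely use named time zones so that daylight saving shifts are handled.

```python
from datetime import datetime, timedelta, timezone

# Assumption: the user's local time is a fixed eight hours behind UTC.
USER_TZ = timezone(timedelta(hours=-8))

def day_common(ts):
    """Calendar day under a common reference clock (UTC)."""
    return ts.astimezone(timezone.utc).date()

def day_local(ts, user_tz):
    """Calendar day as the user experienced it."""
    return ts.astimezone(user_tz).date()

# One hypothetical log event, recorded by the server in UTC.
event = datetime(2012, 12, 4, 3, 30, tzinfo=timezone.utc)

utc_day = day_common(event)
local_day = day_local(event, USER_TZ)
local_hour = event.astimezone(USER_TZ).hour

print(utc_day, local_day, local_hour)  # 2012-12-04 2012-12-03 19
```

The same record lands in the early hours of December 4 on the common clock but in the evening of December 3 for the user, so the choice of reference changes both the day bucket and any morning-versus-evening comparison.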

Log Studies collect the most natural observations of people as they use systems in whatever ways they typically do, uninfluenced by experimenters or observers. As the amount of log data that can be collected increases, log studies include many different kinds of people, from all over the world, doing many different kinds of tasks. However, because of the way log data is gathered, much less is known about the people being observed, their intentions or goals, or the contexts in which the observed behaviors occur. Observational log studies allow researchers to form an abstract picture of behavior with an existing system, whereas experimental log studies enable comparisons of two or more systems.

Benefits of log studies:

• Complement other types of lab/field studies

• Provide a portrait of uncensored behavior

• Easy to capture at scale

Disadvantages of log studies:

• Lack of demographic data

• Non-random sampling bias

• Provide info on what people are doing but not their "motivations, success or satisfaction"

• Can lack needed context (software version, what is displayed on screen, etc.)

Ways to mitigate: Collecting, Cleaning and Using Log Data section

Two common ways to partition log data are by time and by user. Partitioning by time is interesting because log data often contains significant temporal features, such as periodicities (including consistent daily, weekly, and yearly patterns) and spikes in behavior during important events. It is often possible to get an up-to-the-minute picture of how people are behaving with a system from log data by comparing past and current behavior.

Bookmarked for time reference.

Mentions challenges of accounting for time zones in log data.
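The two partitions can be sketched over the same records with the standard library. The user IDs and timestamps below are invented for illustration and are assumed to be already normalized to one time zone.

```python
from collections import Counter, defaultdict
from datetime import datetime

# Hypothetical (user, timestamp) log records.
records = [
    ("u1", datetime(2012, 12, 3, 9, 15)),
    ("u2", datetime(2012, 12, 3, 21, 40)),
    ("u1", datetime(2012, 12, 4, 9, 5)),
    ("u3", datetime(2012, 12, 4, 10, 0)),
    ("u1", datetime(2012, 12, 4, 10, 30)),
]

events_per_day = Counter()       # partition by time
events_by_user = defaultdict(list)  # partition by user

for user, ts in records:
    events_per_day[ts.date()] += 1
    events_by_user[user].append(ts)

for day, n in sorted(events_per_day.items()):
    print(day, n)
for user, stamps in sorted(events_by_user.items()):
    print(user, len(stamps))
```

Comparing the latest day's count against earlier days is the simplest version of the past-versus-current comparison mentioned above; the per-user partition shows whether a jump comes from many users or from one heavy user.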

An important characteristic of log data is that it captures actual user behavior and not recalled behaviors or subjective impressions of interactions.

Logs can be captured on client-side (operating systems, applications, or special purpose logging software/hardware) or on server-side (web search engines or e-commerce)

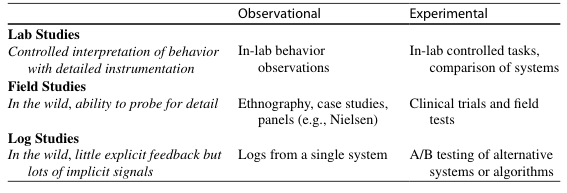

Table 1 Different types of user data in HCI research

Large-scale log data has enabled HCI researchers to observe how information diffuses through social networks in near real-time during crisis situations (Starbird & Palen, 2010), characterize how people revisit web pages over time (Adar, Teevan, & Dumais, 2008), and compare how different interfaces for supporting email organization influence initial uptake and sustained use (Dumais, Cutrell, Cadiz, Jancke, Sarin, & Robbins, 2003; Rodden & Leggett, 2010).

Wide variety of uses of log data

Behavioral logs are traces of human behavior seen through the lenses of sensors that capture and record user activity.

Definition of log data

The distinct sorts of questions asked of science and design manifest the different kinds of accountability that apply to each - that is, the expectations of what activities must be defended and how, and by extension the ways narratives (accounts) are legitimately formed about each endeavour. Science is defined by epistemological accountability, in which the essential requirement is to be able to explain and defend the basis of one’s claimed knowledge. Design, in contrast, works with aesthetic accountability, where ‘aesthetic’ refers to how satisfactory the composition of multiple design features are (as opposed to how ‘beautiful’ it might be). The requirement here is to be able to explain and defend – or, more typically, to demonstrate – that one’s design works.

Scientific accountability >> epistemological

Design accountability >> aesthetic

The issue of whether something ‘works’ goes beyond questions of technical or practical efficacy to address a host of social, cultural, aesthetic and ethical concerns.

Intent is the critical factor for design work, not its function.

To be sure, the topicality, novelty or potential benefits of a given line of research might help it attract notice and support, but scientific research fundamentally stands or falls on the thoroughness with which activities and reasoning can be tied together. You just can’t get in the game without a solid methodology.

Methodology is the critical factor for scientific study, not the result.