After backlash on social media, Meta abolished the internal leaderboard last week.

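Negative reaction on social media led Meta to cancel its internal leaderboard, an episode that shows how much influence social media has over corporate management decisions.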

Personally though, the only action I could take, and have, is run a blog on my domain which lets me truly own my thoughts and connections to people on the Web. Maybe you could do that too. In such little corners of the Internet, there are no algorithms shaping content based on hollow likes or reposts. And no public list of followers to race for. Just humans sharing stuff and interacting at will.

The value of running a blog in an internet without intent.

an X post today receives less than 3% of the views a single tweet delivered seven years ago.

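Surprisingly, a post on X today gets less than 3% of the views a single tweet got seven years ago. This steep decline reflects not only changes to the platform's algorithm but also a fundamental shift in how social media platforms distribute content, along with major changes in user behavior and platform priorities.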

We posted to Twitter (now known as X) five to ten times a day in 2018. Those tweets garnered somewhere between 50 and 100 million impressions per month.

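Surprisingly, EFF posted 5 to 10 tweets a day in 2018 and received 50 to 100 million impressions per month, whereas by 2024 its 2,500 posts were drawing only about 2 million impressions per month. This steep decline reflects the startling speed at which social media algorithms change and user attention shifts.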

People on X are the first to know.

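Surprisingly, this tweet implies that users on X get information earlier than users of other social media platforms. That may reflect the platform's distribution mechanics or the particular makeup of its user base, but no concrete evidence or data is offered to support the claim.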

In this respect, we join Fitzpatrick (2011) in exploring “the extent to which the means of media production and distribution are undergoing a process of radical democratization in the Web 2.0 era, and a desire to test the limits of that democratization”

Comment by Janneke_Adema: Comment by chrisaldrich: Something about this is reminiscent of WordPress' mission to democratize publishing. We can also compare it to Facebook, whose (stated) mission is to connect people, while its actual mission is to make money by seemingly radicalizing people to the extremes of our political spectrum.

This highlights the fact that, while many may look at content moderation on platforms like Facebook (removing voices, or deplatforming people like Donald J. Trump or Alex Jones) as an anti-democratic move, in fact it is not. Because of Facebook's active move to accelerate extreme ideas by pushing them algorithmically, it is the platform that is actively being un-democratic. Democratic behavior on Facebook would look like one voice, one account, and reach only commensurate with that person's standing in real life. Instead, the algorithmic timeline gives far outsized influence and reach to some of the most extreme voices on the platform. This is patently un-democratic.

Chris Aldrich's Hypothes.is List<br /> by [[Dan Allosso]]<br /> accessed on 2026-01-03T07:56:28

That is a situation we are now living through, and it is no coincidence that the democratic conversation is breaking down all over the world because the algorithms are hijacking it. We have the most sophisticated information technology in history and we are losing the ability to talk with each other to hold a reasoned conversation.

for - progress trap - social media - misinformation - AI algorithms hijacking and pretending to be human

What got us to the social media problems is everybody optimizing for a narrow metric of eyeballs at the expense of democracy and kids mental health and addiction and loneliness and no one knowing it. You know, being

for - social media - progress trap

Instead of writing Eileen and Rhiannon's texts off as derivative, we began to see them as contributions to an ongoing, intertextual conversation about such issues as friendship, loyalty, power, and sexuality.

As a form, fanfictions make intertextuality visible because they rely on readers' ability to see relationships between the fan-writer's stories and the original media sources.

What many people who brush fan fiction off as irrelevant tend to ignore is the vast understanding of a pre-existing setting needed to contextualize the writings made, as well as the effort and organization required to properly build off of such settings.

While the counters on these sites indicate that they did not receive many visits, Rhiannon did report that they were visited by friends she met online who lived as far away as North Carolina and New Mexico in the United States.

new tools for working with various modes of communication are producing a change in the way that young people are choosing to construct meaningful texts for themselves and others in their affinity spaces.

As more young people spend more time online, they are developing new ways to express themselves linguistically.

Because many young people growing up in a digital world will find their own reasons for becoming literate--reasons that go beyond reading and writing to acquire academic knowledge--it is important to remain open to changes in subject matter learning that will invite and extend the literacy practices they already possess and value.

In sum, these young people's penchant for creating online content that was easily distributed and used by others with similar interests was facilitated in part by their ability to remix multimodal texts, use new tools to show and tell, and rewrite their social identities.

The storylines through which young people exist in online spaces are highly social as are the literacy skills they employ.

Lanier, J. (2013). Who Owns the Future? Simon & Schuster. https://amzn.to/3YzotPZ

https://revolution.social/

poem Children Learn What They Live by Dorothy Nolte. There are variations of it, but the first line is essentially: If children live with criticism, they learn to condemn.

via Live with criticism, learn to condemn by [[Manton Reece]]

InCoWriMo is the short name for International Correspondence Writing Month, otherwise known as February. With an obvious nod to NaNoWriMo for the inspiration, InCoWriMo challenges you to hand-write and mail/deliver one letter, card, note or postcard every day during the month of February.

All should take special care to guard with great diligence the gates of their senses (especially the eyes, ears, and tongue) from all disorder, to preserve themselves in peace and true humility of their souls, and to show this by their silence when it should be kept and, when they must speak, by the discretion and edification of their words, the modesty of their countenance, the maturity of their walk, and all their movements, without giving any sign of impatience or pride. In all things they should try and desire to give the advantage to the others, esteeming them all in their hearts as if they were their superiors and showing outwardly, in an unassuming and simple religious manner, the respect and reverence appropriate to each one’s state, so that by consideration of one another they may thus grow in devotion and praise God our Lord, whom each one should strive to recognize in the other as in his image.

Great paragraph about relationship to things and to others

“Monet’s Garden” had a selfie station

selfie station... Instagram...

‘Instagrammable’

A way to promote the exhibit; the author argues that this is a common approach for established museums as well.

when i hear that kids are on their phones all day, i know that their parents are too.

Social media is the very reason truth is threatened — why our ability to see the world clearly is threatened. Any military expert will tell you that on a real battlefield NATO would be unbeatable to an increasingly weakened Russia. But in this unmoderated vulnerable social media space, our truth is an easy target. And that brings us to how we are under attack.

In its last report before Musk’s acquisition, in just the second half of 2021, Twitter suspended about 105,000 of the more than 5 million accounts reported for hateful conduct. In the first half of 2024, according to X, the social network received more than 66 million hateful-conduct reports, but suspended just 2,361 accounts. It’s not a perfect comparison, as the way X reports and analyzes data has changed under Musk, but the company is clearly taking action far less frequently.
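The comparison in the quote above can be made concrete with a little arithmetic. This is only a sketch using the figures as quoted: both report counts are stated as "more than", the 2021 figure counts reported accounts while the 2024 figure counts reports, and the quote itself warns that the two periods are not perfectly comparable. The variable and function names are illustrative.

```python
# Figures as quoted above; both denominators are lower bounds ("more than").
suspended_2021_h2 = 105_000      # accounts suspended, second half of 2021
reported_2021_h2 = 5_000_000     # accounts reported for hateful conduct
suspended_2024_h1 = 2_361        # accounts suspended, first half of 2024
reported_2024_h1 = 66_000_000    # hateful-conduct reports received


def suspension_rate(suspended: int, reported: int) -> float:
    """Suspensions as a fraction of what was reported."""
    return suspended / reported


rate_2021 = suspension_rate(suspended_2021_h2, reported_2021_h2)
rate_2024 = suspension_rate(suspended_2024_h1, reported_2024_h1)

print(f"2021 H2: {rate_2021:.2%} of reported accounts were suspended")
print(f"2024 H1: {rate_2024:.4%} of reports led to a suspension")
print(f"Ratio between the two rates: roughly {rate_2021 / rate_2024:.0f}x")
```

On these numbers the rate falls from about 2.1% to about 0.0036%, which is the sense in which the company is "taking action far less frequently".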

https://experiments.myhub.ai/ai4communities_post

Mathew Lowry experiment

People do not actually spend a lot of time browsing junk content,

The vast majority of people browsing social media streams via the web are doing just this: spending a lot of time browsing junk content.

While much of this "junk content" is for entertainment or some means of mental and/or emotional health, at root it becomes the opiate of the masses.

Know and Master Your Social Media Data Flow by [[Louis Gray]]

See commentary at https://boffosocko.com/2017/04/11/a-new-way-to-know-and-master-your-social-media-flow/

A Minimum Viable Ecosystem for collective intelligence by [[Mathew Lowry]]

Relation to Louis Gray's 2009 diagram/post: https://boffosocko.com/2017/04/11/a-new-way-to-know-and-master-your-social-media-flow/

term spectacle refers to

for - definition - the spectacle - context - the society of the spectacle - cacooning - the spectacle - social media - the spectacle

definition - the spectacle - context - the society of the spectacle - A society where images presented by mass media and mass entertainment not only dominate but replace real experiences with a superficial reality that is focused on appearances designed primarily to distract people from reality. This ultimately disconnects them from themselves and from those around them.

comment - How much does our interaction with the virtual reality of written symbols, audio, video, and two-dimensional images derived from our screens, both large and small, affect our direct experience of life? - When people are distracted by such manufactured entertainment, they have less time to devote to important issues and to connecting with real people - We can sit for hours in social isolation, ignoring our bodies' need for exercise and our emotional need for real social connection - We can ignore the real crises going on in the world and instead numb ourselves with contrived entertainment

Shneiderman’s design principles for creativity support tools

Ben Shneiderman's work is deeply influential in HCI; his work has assisted in creating strong connections between tech and creativity, especially when applied to fostering innovation.

His 2007 National Science Foundation-funded report on creativity support tools, led by UMD, provides a seminal overview of the definitions of creativity at that time.

What To Do With Substack? by [[Dan Allosso]]

The "recency" problem is difficult in general in social media which tends to accentuate it versus the rest of the open web which is more of a network.

On X, meanwhile, there is a self-propagating system known as “the culture war”. This game consists of trying to score points (likes and retweets) by attacking the enemy political tribe. Unlike in a regular war, the combatants can’t kill each other, only make each other angrier, so little is ever achieved, except that all players become stressed by constant bickering. And yet they persist in bickering, if only because their opponents do, in an endless state of mutually assured distraction.

On Instagram, the main self-propagating system is a beauty pageant. Young women compete to be as pretty as possible, going to increasingly extreme lengths: makeup, filters, fillers, surgery. The result is that all women begin to feel ugly, online and off.

These features turned social media into the world’s most addictive status game.

meta they just rolled out they're like hey if you want to pay a certain subscription we will show your stuff to your followers 00:03:14 on Instagram and Facebook

for - example - social media platforms bleeding content producers - Meta - Facebook - Instagram

Every event graph has a single root event with no parent

Weird. That means one user must start a topic. Whereas a topic like "Obama" could be started by multiple folks, not knowing about each other, later on discovering and interconnecting their reasoning, if they so wish.
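The contrast drawn in this note can be sketched with a toy event graph. This is not the protocol's data model or API; the dictionaries, event names, and `find_roots` helper below are hypothetical and only illustrate the difference between a graph with exactly one parentless event and one where two people start the same topic independently and link up later.

```python
from typing import Dict, List


def find_roots(parents: Dict[str, List[str]]) -> List[str]:
    """Return the events that have no parent, in sorted order."""
    return sorted(event for event, ps in parents.items() if not ps)


# Single-root graph: every event descends from one creation event.
single_root = {
    "create": [],
    "msg1": ["create"],
    "msg2": ["msg1"],
}

# Hypothetical alternative from the note: two independent starts,
# later joined by an event that references both threads.
merged_topic = {
    "alice_start": [],
    "bob_start": [],
    "alice_reply": ["alice_start"],
    "link": ["alice_reply", "bob_start"],
}

print(find_roots(single_root))
print(find_roots(merged_topic))
```

The first graph has one root and the second has two, which is the shape a single-root rule excludes.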

Extensible user management (inviting, joining, leaving, kicking, banning) mediated by a power-level based user privilege system

Additionally: community-based management, ban polls.

Alternative: per-user configuration of access. Let rooms be topics on which peers discuss. A friend can see what his friends and FOAFs are saying.

Pen pals with typewriters. Pre-Twitter/Facebook social media modality.

Introducing a network for thoughtful conversations by [[Jatan Mehta]]

Reply by writing a blog post

This has broadly been implemented by Tumblr and is a first class feature within the IndieWeb.

The system will check if the link being submitted has an associated RSS feed, particularly one with an explicit title field instead of just a date, and only then allow posting it. Blogs, many research journals, YouTube channels, and podcasts have RSS feeds to aid reading and distribution, whereas things like tweets, Instagram photos, and LinkedIn posts don’t. So that’s a natively available filter on the web for us to utilize.

Existence of an RSS feed could be used as a filter to remove large swaths of social media content which don't have them.
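The filter described above could be approximated in two steps: look for a feed advertised in the page's HTML, then check that the feed's items carry titles. The sketch below is an assumption about how such a check might work, not the system's actual implementation. It uses only the Python standard library, works on strings rather than fetching anything, and handles only RSS 2.0 (Atom is out of scope); the function names are made up for illustration.

```python
from html.parser import HTMLParser
from typing import List
import xml.etree.ElementTree as ET


class FeedLinkFinder(HTMLParser):
    """Collect hrefs of <link rel="alternate" type="application/rss+xml">."""

    def __init__(self) -> None:
        super().__init__()
        self.feeds: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        a = {name: (value or "") for name, value in attrs}
        rels = a.get("rel", "").lower().split()
        is_rss = a.get("type", "").lower() == "application/rss+xml"
        if "alternate" in rels and is_rss and a.get("href"):
            self.feeds.append(a["href"])


def discover_feeds(html: str) -> List[str]:
    """Return feed URLs advertised in the page, in document order."""
    finder = FeedLinkFinder()
    finder.feed(html)
    return finder.feeds


def rss_items_have_titles(feed_xml: str) -> bool:
    """True if the RSS 2.0 feed has items and each has a non-empty <title>."""
    root = ET.fromstring(feed_xml)
    items = root.findall("./channel/item")
    return bool(items) and all(
        (item.findtext("title") or "").strip() for item in items
    )


page = ('<html><head><link rel="alternate" type="application/rss+xml" '
        'href="/feed.xml"></head><body></body></html>')
feed = ("<rss version='2.0'><channel><title>Blog</title>"
        "<item><title>Hello</title></item></channel></rss>")

print(discover_feeds(page))
print(rss_items_have_titles(feed))
```

A submission would pass only if `discover_feeds` finds something and `rss_items_have_titles` holds for the feed it points to; a page with no advertised feed is rejected at the first step.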

ShareOpenly https://shareopenly.org/<br /> built by Ben Werdmuller

Ongweso Jr., Edward. “The Miseducation of Kara Swisher: Soul-Searching with the Tech ‘Journalist.’” The Baffler, March 29, 2024. https://thebaffler.com/latest/the-miseducation-of-kara-swisher-ongweso.

ᔥ[[Pete Brown]] in Exploding Comma

ᔥ[[Doug Belshaw]] in I am so tired of moving platforms

The Evaporative Cooling Effect describes the phenomenon that high value contributors leave a community because they cannot gain something from it, which leads to the decrease of the quality of the community. Since the people most likely to join a community are those whose quality is below the average quality of the community, these newcomers are very likely to harm the quality of the community. With the expansion of community, it is very hard to maintain the quality of the community.

via ref to Xianhang Zhang in Social Software Sundays #2 – The Evaporative Cooling Effect « Bumblebee Labs Blog [archived] who saw it

via [[Eliezer Yudkowsky]] in Evaporative Cooling of Group Beliefs

By its very nature, moderation is a form of censorship. You, as a community, space, or platform are deciding who and what is unacceptable. In Substack’s case, for example, they don’t allow pornography but they do allow Nazis. That’s not “free speech” but rather a business decision. If you’re making moderation based on financials, fine, but say so. Then platform users can make choices appropriately.

One could easily replace World War I and the idea of war here with social media/media and the essay broadly reads well today.

Untangling Threads by Erin Kissane

I dislike isolated events and disconnected details. I really hate statements, views, prejudices, and beliefs that jump at you suddenly out of mid-air.

Wells would really hate social media, which he seems to have perfectly defined with this statement.

Untangling Threads by Erin Kissane on 2023-12-21

This immediately brings up questions about the following: - founder effects and being overwhelmed by the scale of an eternal September - the communism of community interactions being subverted or bent to the purposes of (surveillance) capitalism (see @Graeber2011, Debt)

On the “Death” of the Typosphere, a Few Thoughts and Ideas by Ted Munk on 2018-06-02

TTSSASTT = To Type, Shoot Straight, and Speak the Truth…

In my book Technology’s Child: Digital Media’s Role in the Ages and Stages of Growing Up, I explore how the design of platforms and the way people engage with those designs helps to shape the cultures that emerge on different social media platforms. I propose three layers for understanding this process.

While social media emphasizes the show-off stuff — the vacation in Puerto Vallarta, the full kitchen remodel, the night out on the town — on blogs it still seems that people are sharing more than signalling.

Yes!

In contrast, media ecologists focus on understanding media as environments and how those environments affect society.

The World Wide Web takes on an ecological identity in that it is defined by the ecology of relationships exercised within, determining the "environmental" aspects of the online world. What of media ecology and its impact on earth's ecology? There are climate change ramifications simply in the use of social media itself, let alone the influences or behaviors associated with it: here is a carbon emissions calculator for seemingly "innocent" internet use:

Following the Not So Online<br /> by Ryan Barrett

Everyone is super ambitious and that creates a little bit of a toxic environment where people feel like it's a very comparative space

Competitive in what way? Grades? Jobs? Finances? Material things? Relationships?

"And I think social media turbocharged us all of this.

wow...tell me more.

Managing a half-dozen identities on a half-dozen platforms is too much work!

In both cases, it's up to us now to discipline ourselves to avoid the fats in junk food, and the breaking news and dopamine thrill-ride of social media.

A nice encapsulation of evolutionary challenges that humans are facing.

Television, radio, and all the sources of amusement and information that surround us in our daily lives are also artificial props. They can give us the impression that our minds are active, because we are required to react to stimuli from outside. But the power of those external stimuli to keep us going is limited. They are like drugs. We grow used to them, and we continuously need more and more of them. Eventually, they have little or no effect. Then, if we lack resources within ourselves, we cease to grow intellectually, morally, and spiritually. And when we cease to grow, we begin to die.

One could argue that Adler and Van Doren would lump social media into the sources of amusement category.

DiResta, Renee. “Free Speech Is Not the Same As Free Reach.” Wired, August 30, 2018. https://www.wired.com/story/free-speech-is-not-the-same-as-free-reach/.

for: annotate, annotate - social media, progress trap - social media

source: connectathon 2023 09 23

A social network for "organizing and sharing your knowledge".

T9 (text prediction):generative AI::handgun:machine gun

I do expect new social platforms to emerge that focus on privacy and ‘fake-free’ information, or at least they will claim to be so. Proving that to a jaded public will be a challenge. Resisting the temptation to exploit all that data will be extremely hard. And how to pay for it all? If it is subscriber-paid, then only the wealthy will be able to afford it.

Will members-only, perhaps subscription-based ‘online communities’ reemerge instead of ‘post and we’ll sell your data’ forms of social media? I hope so, but at this point a giant investment would be needed to counter the mega-billions of companies like Facebook!

David E. Williams, Spencer P. Greenhalgh. (2022). Pseudonymous academics: Authentic tales from the Twitter trenches. The Internet and Higher Education. Volume 55, October 2022 https://doi.org/10.1016/j.iheduc.2022.100870

The big tech companies, left to their own devices (so to speak), have already had a net negative effect on societies worldwide. At the moment, the three big threats these companies pose – aggressive surveillance, arbitrary suppression of content (the censorship problem), and the subtle manipulation of thoughts, behaviors, votes, purchases, attitudes and beliefs – are unchecked worldwide

In our early experiments, reported by The Washington Post in March 2013, we discovered that Google’s search engine had the power to shift the percentage of undecided voters supporting a political candidate by a substantial margin without anyone knowing.

What if, early in the morning on Election Day in 2016, Mark Zuckerberg had used Facebook to broadcast “go-out-and-vote” reminders just to supporters of Hillary Clinton? Extrapolating from Facebook’s own published data, that might have given Mrs. Clinton a boost of 450,000 votes or more, with no one but Mr. Zuckerberg and a few cronies knowing about the manipulation.

As Threads "soars", Bluesky and Mastodon are adopting algorithmic feeds. (TechCrunch) You will eat the bugs. You will live in the pod. You will read what we tell you. You will own nothing and we don't much care if you are happy.

Applying the WEF meme about pods and bugs to Threads inspiring Bluesky and one Mastodon app to push algorithmic feeds.

specific uses of the technology help develop what we call “relational confidence,” or the confidence that one has a close enough relationship to a colleague to ask and get needed knowledge. With greater relational confidence, knowledge sharing is more successful.

Not that an E2E rule precludes algorithmic feeds: remember, E2E is the idea that you see what you ask to see. If a user opts into a feed that promotes content that they haven't subscribed to at the expense of the things they explicitly asked to see, that's their choice. But it's not a choice that social media services reliably offer, which is how they are able to extract ransom payments from publishers.

I don't understand how you could audit this, unless you had to force a default of chronological presentation of posts etc.

the folly of endless bla, bla bla, people viewing the mind as a big boy, while in reality, it is a little boy who is undisciplined and goes on random rants and tangents, liking and disliking everything it sees on social-media

"After years of research, our engineers have created a revolution in social media technology: a Twitter clone on Instagram that offers the absolute worst of both worlds," said a VR headset-wearing Zuckerberg in an address to dozens of friends in the Metaverse. "At long last, you can read caustic hot takes written by talentless idiots, while still enjoying oppressive censorship and sepia-toned thirst traps from yoga pants models with obnoxious lip injections. You're welcome!"

Babylon Bee article with made up Mark Zuckerberg quote touting the virtues of Threads. This is some of the Bee's finest writing and not at all inaccurate.

(14:20-19:00) Dopamine Prediction Error is explained by Andrew Huberman in the following way: When we anticipate something exciting dopamine levels rise and rise, but when we fail it drops below baseline, decreasing motivation and drive immensely, sometimes even causing us to get sad. However, when we succeed, dopamine rises even higher, increasing our drive and motivation significantly... This is the idea that successes build upon each other, and why celebrating the "marginal gains" is a very powerful tool to build momentum and actually make progress. Surprise increases this effect even more: big dopamine hit, when you don't anticipate it.

Social media algorithms make heavy use of this principle and thereby enslave their users, particularly on infinite-scroll platforms such as TikTok... Your dopamine levels rise as you look for that one thing you like, but they drop because you don't always find that golden nugget. Then they rise once in a while when you do find it. This contrast creates an illusion of enjoyment and traps the user in an endless search for great content, especially when it's short-form. It makes you waste time very effectively. This is related to the success mindset of preferring delayed gratification over instant gratification.

It would be useful to reflect and introspect on your dopamine baseline and see what actually increases and decreases your dopamine, as well as whether these things help you achieve your ambitions. A high dopamine baseline (which means your dopamine circuit is getting used to big hits from things such as playing games, watching short-form content, or watching porn) decreases your ability to focus for long stretches (attention span) and, by extension, your ability to learn and eventually reach success. Studying and learning can actually be fun if your dopamine levels are managed properly, meaning you don't often engage in very high-dopamine activities. You want your brain to be used to the low amounts of dopamine that studying gives. A framework to help with this reflection would be Kolb's.

A short-term dopamine reset is to not use the tool or device for about half an hour to an hour (or do NSDR). However, this is not a long-term solution.

Nostr is a simple, open protocol that enables global, decentralized, and censorship-resistant social media.

Peter Kominski likes this generally.

Trakt Data Recovery. IMPORTANT: On December 11 at 7:30 pm PST our main database crashed and corrupted some of the data. We're deeply sorry for the extended downtime and we'll do better moving forward. Updates to our automated backups are already in place and they will be tested on an ongoing basis. Data prior to November 7 is fully restored. Watched history between November 7 and December 11 has been recovered. There is a separate message on your dashboard allowing you to review and import any recovered data. All other data (besides watched history) after November 7 has already been restored and imported. Some data might be permanently lost due to data corruption. Trakt API is back online as of December 20. Active VIP members will get 2 free months added to their expiration date.

From late 2022

https://atomicbooks.com/pages/john-waters-fan-mail

John Waters receives fan mail via Atomic Books in Baltimore, MD.

Benefits of sharing permanent notes

reply to u/bestlunchtoday at https://www.reddit.com/r/Zettelkasten/comments/12gadut/benefits_of_sharing_permanent_notes/

I love the diversity of ideas here! So many different ways to do it all and perspectives on the pros/cons. It's all incredibly idiosyncratic, just like our notes.

I probably default to a far extreme of sharing the vast majority of my notes openly to the public (at least the ones taken digitally which account for probably 95%). You can find them here: https://hypothes.is/users/chrisaldrich.

Not many people notice or care, but I do know that a small handful follow and occasionally reply to them or email me questions. One or two people actually subscribe to them via RSS, and at least one has said that they know more about me, what I'm reading, what I'm interested in, and who I am by reading these over time. (I also personally follow a handful of people and tags there myself.) Some have remarked at how they appreciate watching my notes over time and then seeing the longer writing pieces they were integrated into. Some novice note takers have mentioned how much they appreciate being able to watch such a process of note taking turned into composition as examples which they might follow. Some just like a particular niche topic and follow it as a tag (so if you were interested in zettelkasten perhaps?) Why should I hide my conversation with the authors I read, or with my own zettelkasten unless it really needed to be private? Couldn't/shouldn't it all be part of "The Great Conversation"? The tougher part may be having means of appropriately focusing on and sharing this conversation without some of the ills and attention economy practices which plague the social space presently.

There are a few notes here on this post that talk about social media and how this plays a role in making them public or not. I suppose that if I were putting it all on a popular platform like Twitter or Instagram then the use of the notes would be or could be considered more performative. Since mine are on what I would call a very quiet pseudo-social network, but one specifically intended for note taking, they tend to be far less performative in nature and the majority of the focus is solely on what I want to make and use them for. I have the opportunity and ability to make some private and occasionally do so. Perhaps if the traffic and notice of them became more prominent I would change my habits, but generally it has been a net positive to have put my sensemaking out into the public, though I will admit that I have a lot of privilege to be able to do so.

Of course for those who just want my longer form stuff, there's a website/blog for that, though personally I think all the fun ideas at the bleeding edge are in my notes.

Since some (u/deafpolygon, u/Magnifico99, and u/thiefspy; cc: u/FastSascha, u/A_Dull_Significance) have mentioned social media, Instagram, and journalists, I'll share a relevant old note with an example, which is also simultaneously an example of the benefit of having public notes to be able to point at, which u/PantsMcFail2 also does here with one of Andy Matuschak's public notes:

[Prominent] Journalist John Dickerson indicates that he uses Instagram as a commonplace: https://www.instagram.com/jfdlibrary/ here he keeps a collection of photo "cards" with quotes from famous people rather than photos. He also keeps collections there of photos of notes from scraps of paper as well as photos of annotations he makes in books.

It's reasonably well known that Ronald Reagan shared some of his personal notes and collected quotations with his speechwriting staff while he was President. I would say that this and other similar examples of collaborative zettelkasten or collaborative note taking and their uses would blunt u/deafpolygon's argument that shared notes (online or otherwise) are either just (or only) a wiki. The forms are somewhat similar, but not all exactly the same. I suspect others could add to these examples.

And of course if you've been following along with all of my links, you'll have found yourself reading not only these words here, but also reading some of a directed conversation with entry points into my own personal zettelkasten, which you can also query as you like. I hope it has helped to increase the depth and level of the conversation, should you choose to enter into it. It's an open enough one that folks can pick and choose their own path through it as their interests dictate.

Introducing Substack Notes<br /> by Hamish McKenzie, Chris Best, Jairaj Sethi

In Notes, writers will be able to post short-form content and share ideas with each other and their readers. Like our Recommendations feature, Notes is designed to drive discovery across Substack. But while Recommendations lets writers promote publications, Notes will give them the ability to recommend almost anything—including posts, quotes, comments, images, and links.

Substack slowly adding features and functionality to make them a full stack blogging/social platform... first long form, then short note features...

Also pushing in on Twitter's lunch as Twitter is having issues.

The sheer volume exceeds what book publication can handle; the complex, multidimensional structure of a networked information base cannot be reproduced in print; and finally, the dynamics of a steadily growing and constantly corrected body of material do not fit the rigid rhythm of book production, in which every expanded and corrected new edition involves immense effort. A book publication could only ever offer a snapshot of such a database, reduced to a particular perspective. That too can be very useful now and then, but it does not solve the problem of publishing the material as a whole.

link to https://hypothes.is/a/U95jEs0eEe20EUesAtKcuA

Is this phenomenon of "complex narratives" related to misinformation spread within the larger and more complex social network/online network? At small, local scales, people know how to handle data and information which is locally contextualized for them. On larger internet-scale communication social platforms this sort of contextualization breaks down.

For lack of a better word for this, let's temporarily refer to it as "complex narratives" to get a handle on it.

Related here is the horcrux problem of note taking or even social media. The mental friction of where did I put that thing? As a result, it's best to put it all in one place.

How can you build on a single foundation if you're in multiple locations? The primary (only?) benefit of multiple locations is redundancy in case of loss.

Ryan Holiday and Robert Greene are counter examples, though Greene's books are distinct projects generally while Holiday's work has a lot of overlap.

If Seneca or Martial were around today, they would probably write sarcastic epigrams about the very public exhibition of reading text messages and in-your-face displays of texting. Digital reading, like the perusing of ancient scrolls, constitutes an important statement about who we are. Like the public readers of Martial’s Rome, the avid readers of text messages and other forms of social media appear to be everywhere. Though in both cases the performers of reading are tirelessly constructing their self-image, the identity they aspire to establish is very different. Young people sitting in a bar checking their phones for texts are not making a statement about their refined literary status. They are signalling that they are connected and – most importantly – that their attention is in constant demand.

Internet ‘algospeak’ is changing our language in real time, from ‘nip nops’ to ‘le dollar bean’ by [[Taylor Lorenz]]

shifts in language and meaning of words and symbols as the result of algorithmic content moderation

instead of slow semantic shifts, content moderation is actively pushing shifts of words and their meanings

article suggested by this week's Dan Allosso Book club on Pirate Enlightenment

Could it be the shift from person-to-person communication (known in both directions) to massive broadcast that is driving issues with content moderation? When it's person to person, one can simply choose not to interact and put the person beyond their individual pale. This sort of shunning is much harder to do with larger mass publics at scale in broadcast mode.

How can bringing content moderation back down to the neighborhood scale help in the broadcast model?

In January, Kendra Calhoun, a postdoctoral researcher in linguistic anthropology at UCLA, and Alexia Fawcett, a doctoral student in linguistics at UC Santa Barbara, gave a presentation about language on TikTok. They outlined how, by self-censoring words in the captions of TikToks, new algospeak code words emerged.

follow up on this for the relevant forthcoming paper....

“It makes me feel like I need a disclaimer because I feel like it makes you seem unprofessional to have these weirdly spelled words in your captions,” she said, “especially for content that's supposed to be serious and medically inclined.”

Where's the balance for professionalism with respect to dodging the algorithmic filters for serious health-related conversations online?

But algorithmic content moderation systems are more pervasive on the modern Internet, and often end up silencing marginalized communities and important discussions.

What about non-marginalized toxic communities like Neo-Nazis?

Unlike other mainstream social platforms, the primary way content is distributed on TikTok is through an algorithmically curated “For You” page; having followers doesn’t guarantee people will see your content. This shift has led average users to tailor their videos primarily toward the algorithm, rather than a following, which means abiding by content moderation rules is more crucial than ever.

Social media has slowly moved away from communication between people who know each other to people who are farther apart in social spaces. Increasingly from 2021 onward, some platforms like TikTok have acted as distribution platforms and ignored explicit social connections like follower/followee in favor of algorithmic-only feeds that distribute content to people based on a variety of criteria, including the popularity of content and the readers' interests.

https://www.thecrimson.com/article/2023/2/2/donovan-forced-leave-hks/

This is a massive loss for HKS, but a potential major win for the school that picks the project up.

It seems to be a sad use of "rules" to shut down a project which may not jibe with an administration's perspective/needs.

Read on Fri 2023-02-03 at 7:14 PM

One can, after all, take the whole situation as an occasion to think about whether one really needs all of this. Is the benefit of social media so high that it justifies the price? That is a question I have been asking myself since I shut down my personal Twitter account, but at least for me it doesn't feel so wrong to no longer be present on Twitter, Mastodon & Co. Perhaps such a service simply had its time, and perhaps we overestimate the relevance of social media, and perhaps it would be good to keep more distance from it.

One can find utility in asking questions of their own note box, but why not also leverage the utility of a broader audience asking questions of it as well?!

One of the values of social media is that it allows you to practice or rehearse the potential value of ideas and to get potentially useful feedback on individual ideas which you may be aggregating into larger works.

We become what we behold, a game by Nicky Case.

A commentary on news cycles and social media.

social media platform

This technical jargon, in the context of Cohost.org, means "a website".

is zettelkasten gamification of note-taking?

reply to u/theinvertedform at https://www.reddit.com/r/Zettelkasten/comments/zkguan/is_zettelkasten_gamification_of_notetaking/

Social media and "influencers" have certainly grabbed onto the idea and squeezed with both hands. Broadly, while talking about their own versions of rules, tips, tricks, and tools, they've missed a massive history of the broader techniques which have pervaded the humanities for over 500 years. When one looks more deeply at the broader cross section of writers, educators, philosophers, and academics who have used variations on the idea of maintaining notebooks or commonplace books, it becomes a relative no-brainer that it is a useful tool. I touch on some of the history as well as some of the recent commercialization here: https://boffosocko.com/2022/10/22/the-two-definitions-of-zettelkasten/.

"Queer people built the Fediverse," she said, adding that four of the five authors of the ActivityPub standard identify as queer. As a result, protections against undesired interaction are built into ActivityPub and the various front ends. Systems for blocking entire instances with a culture of trolling can save users the exhausting process of blocking one troll at a time. If a post includes a “summary” field, Mastodon uses that summary as a content warning.

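As a rough illustration of the content-warning behavior described above, here is a minimal sketch of an ActivityStreams-style `Note` carrying a `summary` field. The helper names `make_note` and `render_collapsed` are hypothetical, and the rendering logic is only an assumption about how a client might collapse a post behind its warning; it is not Mastodon's actual code.

```python
def make_note(content, content_warning=None):
    """Build a minimal ActivityStreams-style Note as a plain dict."""
    note = {
        "@context": "https://www.w3.org/ns/activitystreams",
        "type": "Note",
        "content": content,
    }
    if content_warning:
        # A non-empty "summary" is what gets shown as the content warning.
        note["summary"] = content_warning
    return note


def render_collapsed(note):
    """Hypothetical client rendering: show the warning in place of the body."""
    warning = note.get("summary")
    if warning:
        return f"[CW: {warning}] (click to expand)"
    return note["content"]


if __name__ == "__main__":
    print(render_collapsed(make_note("Just a normal post")))
    print(render_collapsed(make_note("The ending is ...", "Film spoilers")))
```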
Investigating social structures through the use of networks or graphs. Networked structures are described in terms of nodes (individual actors, people, or things within the network) and the connections between nodes (edges or links). The focus is on relationships between actors in addition to the attributes of actors. Extensively used in mapping out social networks (Twitter, Facebook). Examples: Palantir, Analyst Notebook, MISP, and Maltego.

Drawing from negativity bias theory, CFM, ICM, and arousal theory, this study characterizes the emotional responses of social media users and verifies how emotional factors affect the number of reposts of social media content after two natural disasters (predictable and unpredictable disasters). In addition, results from defining the influential users as those with many followers and high activity users and then characterizing how they affect the number of reposts after natural disasters

Using actual fake-news headlines presented as they were seen on Facebook, we show that even a single exposure increases subsequent perceptions of accuracy, both within the same session and after a week. Moreover, this “illusory truth effect” for fake-news headlines occurs despite a low level of overall believability and even when the stories are labeled as contested by fact checkers or are inconsistent with the reader’s political ideology. These results suggest that social media platforms help to incubate belief in blatantly false news stories and that tagging such stories as disputed is not an effective solution to this problem.

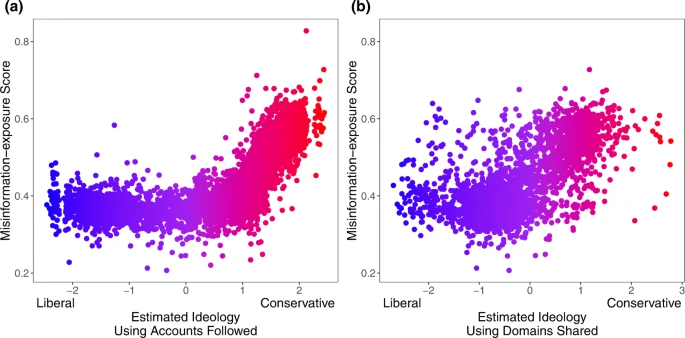

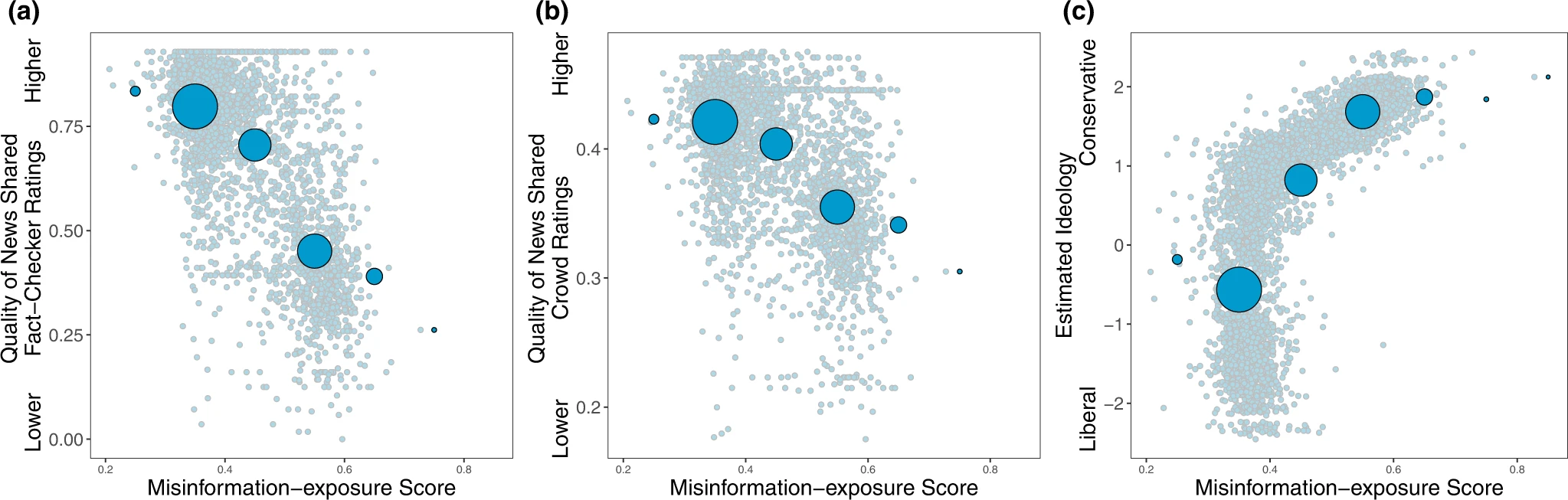

Furthermore, our results add to the growing body of literature documenting, at least at this historical moment, the link between extreme right-wing ideology and misinformation [8, 14, 24] (although, of course, factors other than ideology are also associated with misinformation sharing, such as polarization [25] and inattention [17, 37]).

Misinformation exposure and extreme right-wing ideology appear associated in this report. Others find that it is partisanship that predicts susceptibility.

We also find evidence of “falsehood echo chambers”, where users that are more often exposed to misinformation are more likely to follow a similar set of accounts and share from a similar set of domains. These results are interesting in the context of evidence that political echo chambers are not prevalent, as typically imagined.

And finally, at the individual level, we found that estimated ideological extremity was more strongly associated with following elites who made more false or inaccurate statements among users estimated to be conservatives compared to users estimated to be liberals. These results on political asymmetries are aligned with prior work on news-based misinformation sharing

This suggests the misinformation sharing elites may influence whether followers become more extreme. There is little incentive not to stoke outrage as it improves engagement.

(a) Political ideology is estimated using accounts followed [10]. (b) Political ideology is estimated using domains shared [30] (red: conservative, blue: liberal). Source data are provided as a Source Data file.

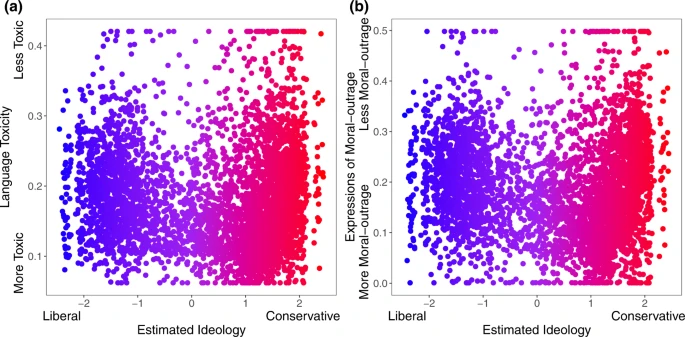

The relationship between estimated political ideology and (a) language toxicity and (b) expressions of moral outrage. Extreme values are winsorized by 95% quantile for visualization purposes. Source data are provided as a Source Data file.

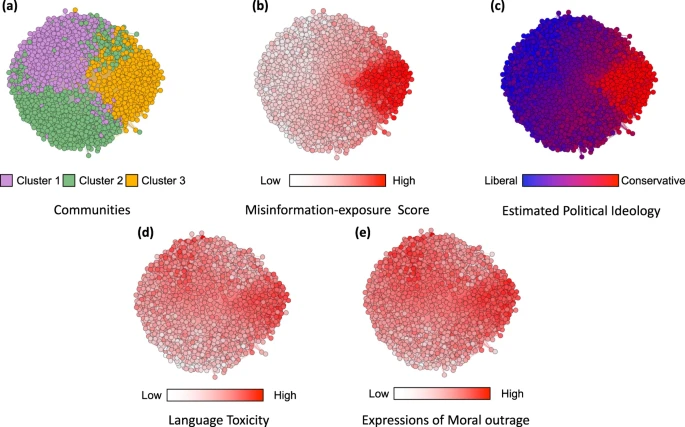

Nodes represent website domains shared by at least 20 users in our dataset and edges are weighted based on common users who shared them. (a) Separate colors represent different clusters of websites determined using community-detection algorithms [29]. (b) The intensity of the color of each node shows the average misinformation-exposure score of users who shared the website domain (darker = higher PolitiFact score). (c) Nodes’ color represents the average estimated ideology of the users who shared the website domain (red: conservative, blue: liberal). (d) The intensity of the color of each node shows the average use of language toxicity by users who shared the website domain (darker = higher use of toxic language). (e) The intensity of the color of each node shows the average expression of moral outrage by users who shared the website domain (darker = higher expression of moral outrage). Nodes are positioned using directed-force layout on the weighted network.

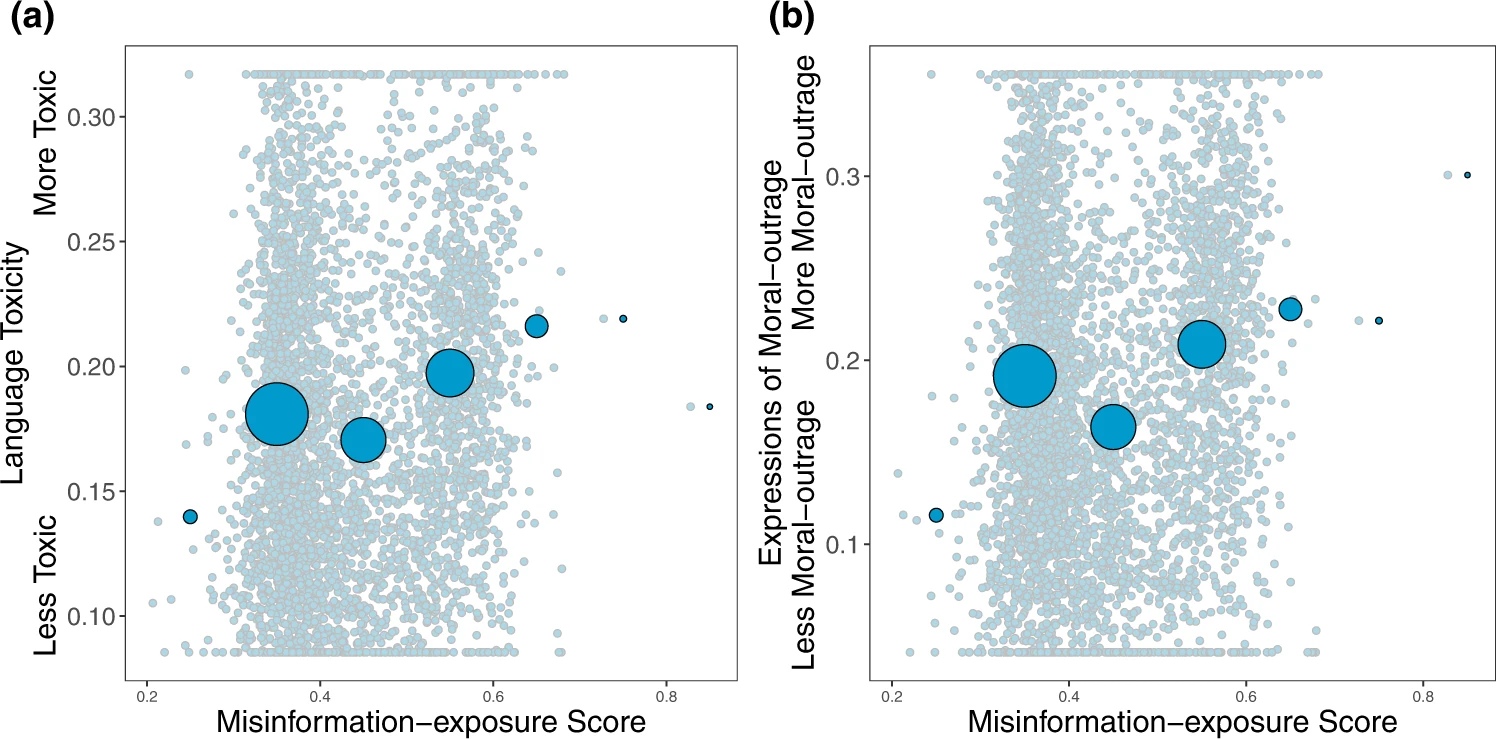

Shown is the relationship between users’ misinformation-exposure scores and (a) the toxicity of the language used in their tweets, measured using the Google Jigsaw Perspective API [27], and (b) the extent to which their tweets involved expressions of moral outrage, measured using the algorithm from ref. 28. Extreme values are winsorized by 95% quantile for visualization purposes. Small dots in the background show individual observations; large dots show the average value across bins of size 0.1, with size of dots proportional to the number of observations in each bin. Source data are provided as a Source Data file.
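
The two preprocessing steps named in this caption, winsorizing at the 95% quantile and averaging within bins of width 0.1, can be sketched as follows. This is a minimal illustration, not the authors' code: it assumes winsorizing means capping only the upper tail, uses linear interpolation for the quantile, and all function names are made up for the example.

```python
import math
from collections import defaultdict


def quantile(values, q):
    """Linear-interpolation quantile of a non-empty list (0 <= q <= 1)."""
    ordered = sorted(values)
    pos = q * (len(ordered) - 1)
    lo, hi = math.floor(pos), math.ceil(pos)
    frac = pos - lo
    return ordered[lo] * (1 - frac) + ordered[hi] * frac


def winsorize_upper(values, q=0.95):
    """Cap every value above the q-th quantile at that quantile (assumed one-sided)."""
    cap = quantile(values, q)
    return [min(v, cap) for v in values]


def binned_means(xs, ys, width=0.1):
    """Group points by x into bins of `width`; map bin start -> (mean y, count)."""
    groups = defaultdict(list)
    for x, y in zip(xs, ys):
        # Small epsilon guards against float error at bin edges (e.g. 0.3 / 0.1).
        index = math.floor(x / width + 1e-9)
        groups[index].append(y)
    return {
        round(index * width, 10): (sum(group) / len(group), len(group))
        for index, group in sorted(groups.items())
    }


if __name__ == "__main__":
    scores = [0.05, 0.07, 0.31, 0.36]      # x axis, e.g. exposure scores
    toxicity = winsorize_upper([1.0, 3.0, 5.0, 100.0])
    print(binned_means(scores, toxicity))  # the count would set the dot size
```

Keeping the count alongside each bin mean is what allows the large dots to be drawn in proportion to the number of observations.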

Shown is the relationship between users’ misinformation-exposure scores and (a) the quality of the news outlets they shared content from, as rated by professional fact-checkers [21], (b) the quality of the news outlets they shared content from, as rated by layperson crowds [21], and (c) estimated political ideology, based on the ideology of the accounts they follow [10]. Small dots in the background show individual observations; large dots show the average value across bins of size 0.1, with size of dots proportional to the number of observations in each bin.

Notice that Twitter’s account purge significantly impacted misinformation spread worldwide: the proportion of low-credible domains in URLs retweeted from U.S. dropped from 14% to 7%. Finally, despite not having a list of low-credible domains in Russian, Russia is central in exporting potential misinformation in the vax rollout period, especially to Latin American countries. In these countries, the proportion of low-credible URLs coming from Russia increased from 1% in vax development to 18% in vax rollout periods (see Figure 8 (b), Appendix).

Interestingly, the fraction of low-credible URLs coming from U.S. dropped from 74% in the vax development period to 55% in the vax rollout. This large decrease can be directly ascribed to Twitter’s moderation policy: 46% of cross-border retweets of U.S. users linking to low-credible websites in the vax development period came from accounts that have been suspended following the U.S. Capitol attack (see Figure 8 (a), Appendix).

Considering the behavior of users in no-vax communities, we find that they are more likely to retweet (Figure 3(a)), share URLs (Figure 3(b)), and especially URLs to YouTube (Figure 3(c)) than other users. Furthermore, the URLs they post are much more likely to be from low-credible domains (Figure 3(d)), compared to those posted in the rest of the networks. The difference is remarkable: 26.0% of domains shared in no-vax communities come from lists of known low-credible domains, versus only 2.4% of those cited by other users (p < 0.001). The most common low-credible websites among the no-vax communities are zerohedge.com, lifesitenews.com, dailymail.co.uk (considered right-biased and questionably sourced) and childrenshealthdefense.com (conspiracy/pseudoscience).

We applied two scenarios to compare how these regular agents behave in the Twitter network, with and without malicious agents, to study how much influence malicious agents have on the general susceptibility of the regular users. To achieve this, we implemented a belief value system to measure how impressionable an agent is when encountering misinformation and how its behavior gets affected. The results indicated similar outcomes in the two scenarios as the affected belief value changed for these regular agents, exhibiting belief in the misinformation. Although the change in belief value occurred slowly, it had a profound effect when the malicious agents were present, as many more regular agents started believing in misinformation.

Therefore, although the social bot individual is “small”, it has become a “super spreader” with strategic significance. As an intelligent communication subject in the social platform, it conspired with the discourse framework in the mainstream media to form a hybrid strategy of public opinion manipulation.

There were 120,118 epidemy-related tweets in this study, and 34,935 Twitter accounts were detected as bot accounts by Botometer, accounting for 29%. In all, 82,688 Twitter accounts were human, accounting for 69%; 2495 accounts had no bot score detected. In social network analysis, degree centrality is an index to judge the importance of nodes in the network. The nodes in the social network graph represent users, and the edges between nodes represent the connections between users. Based on the network structure graph, we may determine which members of a group are more influential than others. In 1979, American professor Linton C. Freeman published an article titled “Centrality in Social Networks: Conceptual Clarification” in Social Networks, formally proposing the concept of degree centrality [69]. Degree centrality denotes the number of times a central node is retweeted by other nodes (or other indicators; only retweets are involved in this study). Specifically, the higher the degree centrality is, the more influence a node has in its network. The measure of degree centrality includes in-degree and out-degree. Betweenness centrality is an index that describes the importance of a node by the number of shortest paths through it. Nodes with high betweenness centrality are in the “structural hole” position in the network [69]. This kind of account connects the group network lacking communication and can expand the dialogue space of different people. American sociologist Ronald S. Burt put forward the theory of a “structural hole” and said that if there is no direct connection between the other actors connected by an actor in the network, then the actor occupies the “structural hole” position and can obtain social capital through “intermediary opportunities”, thus having more advantages.
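
To make the two measures in this passage concrete, here is a small pure-Python sketch. It counts in-degree as "times retweeted", following the passage's definition, and computes unnormalized betweenness with Brandes' algorithm on an undirected, unweighted graph. The toy accounts and edge lists are invented for illustration, and the study itself may well have used a different tool or normalization.

```python
from collections import defaultdict, deque


def in_degree(retweets):
    """Count how often each account is retweeted.

    `retweets` is a list of (retweeter, original_author) pairs.
    """
    counts = defaultdict(int)
    for retweeter, author in retweets:
        counts[author] += 1
        counts[retweeter] += 0  # make sure every account appears in the result
    return dict(counts)


def betweenness(edges):
    """Unnormalized betweenness (Brandes) on an undirected, unweighted graph."""
    adj = defaultdict(set)
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)
    score = {node: 0.0 for node in adj}
    for source in adj:
        # Breadth-first search that counts shortest paths from `source`.
        dist = {source: 0}
        paths = defaultdict(float)
        paths[source] = 1.0
        preds = defaultdict(list)
        order = []
        queue = deque([source])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    paths[w] += paths[v]
                    preds[w].append(v)
        # Walk back from the farthest nodes, accumulating path dependencies.
        delta = defaultdict(float)
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += paths[v] / paths[w] * (1.0 + delta[w])
            if w != source:
                score[w] += delta[w]
    # Each undirected pair was counted once from each endpoint.
    return {node: value / 2.0 for node, value in score.items()}


if __name__ == "__main__":
    # Two tight groups joined only through "bridge" (a structural-hole position).
    ties = [("a1", "a2"), ("a1", "bridge"), ("a2", "bridge"),
            ("bridge", "b1"), ("bridge", "b2"), ("b1", "b2")]
    print(betweenness(ties))
    print(in_degree([("u1", "hub"), ("u2", "hub"), ("hub", "u3")]))
```

In the toy graph only `bridge` lies on shortest paths between the two groups, so it is the only node with non-zero betweenness, which matches the "structural hole" position described above.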

We analyzed and visualized Twitter data during the prevalence of the Wuhan lab leak theory and discovered that 29% of the accounts participating in the discussion were social bots. We found evidence that social bots play an essential mediating role in communication networks. Although human accounts have a more direct influence on the information diffusion network, social bots have a more indirect influence. Unverified social bot accounts retweet more, and through multiple levels of diffusion, humans are vulnerable to messages manipulated by bots, driving the spread of unverified messages across social media. These findings show that limiting the use of social bots might be an effective method to minimize the spread of conspiracy theories and hate speech online.

I want to insist on an amateur internet; a garage internet; a public library internet; a kitchen table internet.

Social media should be comprised of people from end to end. Corporate interests inserted into the process can only serve to dehumanize the system.

Robin Sloan is in the same camp as Greg McVerry and me.

Alas, lawmakers are way behind the curve on this, demanding new "online safety" rules that require firms to break E2E and block third-party de-enshittification tools: https://www.openrightsgroup.org/blog/online-safety-made-dangerous/

The online free speech debate is stupid because it has all the wrong focuses:

- Focusing on improving algorithms, not whether you can even get a feed of things you asked to see;
- Focusing on whether unsolicited messages are delivered, not whether solicited messages reach their readers;
- Focusing on algorithmic transparency, not whether you can opt out of the behavioral tracking that produces training data for algorithms;
- Focusing on whether platforms are policing their users well enough, not whether we can leave a platform without losing our important social, professional and personal ties;
- Focusing on whether the limits on our speech violate the First Amendment, rather than whether they are unfair: https://doctorow.medium.com/yes-its-censorship-2026c9edc0fd

This list is particularly good.

Proper regulation of end to end services would encourage the creation of filtering and other tools which would tend to benefit users rather than benefit the rent seeking of the corporations which own the pipes.

my best guess is it’s the moderation

I'd love it to be normal and everyday to not assume that when you post a message on your social network, every person is reading it in a similar UI, either to the one you posted from, or to the one everyone else is reading it in.

🤗

[https://a.gup.pe/ Guppe Groups] a group of bot accounts that can be used to aggregate social groups within the [[fediverse]] around a variety of topics like [[crafts]], books, history, philosophy, etc.

https://zephoria.medium.com/what-if-failure-is-the-plan-2f219ea1cd62

A lot has changed about our news media ecosystem since 2007. In the United States, it’s hard to overstate how the media is entangled with contemporary partisan politics and ideology. This means that information tends not to flow across partisan divides in coherent ways that enable debate.

Our media and social media systems have been structured along with the people who use them such that debate is stifled because information doesn't flow coherently across the political partisan divide.

Humans didn’t evolve to thrive in frictionless social networks with high fanout and velocity, and arguably we shouldn’t.

https://oulipo.social/about

Social media without the letter "e".

https://blog.erinshepherd.net/2022/11/a-better-moderation-system-is-possible-for-the-social-web/

The trust one must place in the creator of a blocklist is enormous, because the most dangerous failure mode isn’t that it doesn’t block who it says it does, but that it blocks who it says it doesn’t and they just disappear.

<small><cite class='h-cite via'>ᔥ <span class='p-author h-card'>Erin Alexis Owen Shepherd</span> in A better moderation system is possible for the social web (<time class='dt-published'>12/03/2022 11:10:32</time>)</cite></small>

“The damage commercial social media has done to politics, relationships and the fabric of society needs undoing.”

As users begin migrating to the noncommercial fediverse, they need to reconsider their expectations for social media — and bring them in line with what we expect from other arenas of social life. We need to learn how to become more like engaged democratic citizens in the life of our networks.

I have about fourteen or sixteen weeks to do this, so I'm breaking the course into an "intro" section that covers some basic stuff like affordances, and other insights into how tech functions. There's a section on AI which is nothing but critical appraisals on AI from a variety of areas. And there's a section on Social Media, which is the most well formed section in terms of readings.

https://zirk.us/@shengokai/109440759945863989

If the individuals in an environment don't understand or perceive the affordances available to them, can the interactions between them and the environment make it seem as if the environment possesses agency?

cross reference: James J. Gibson book The Senses Considered as Perceptual Systems (1966)

People often indicate that social media "causes" outcomes among groups of people who use it. E.g., social media (via algorithmic suggestions of fringe content) causes people to become radicalized.

The TTRG (time to reply guy) was getting so fast, that I can’t actually remember the last time I tweeted something helpful like a design or development tip. I just couldn’t be arsed, knowing some dickhead would be around to waste my time with whataboutisms and “will it scale”?

The Post analyzed data from ProPublica’s Represent tool, which tracks congressional Twitter activity.

11/30 Youth Collaborative

I went through some of the pieces in the collection. It is important to give a platform to the voices that are usually missing from the conversation.

Just a few similar initiatives that you might want to check out:

Storycorps - people can record their stories via an app

Project Voice - spoken word poetry

Living Library - sharing one's story

Freedom Writers - book and curriculum based on real-life stories

As part of the Election Integrity Partnership, my team at the Stanford Internet Observatory studies online rumors, and how they spread across the internet in real time.

https://socialmediaissues.net/

Website for Social Media Issues, A resource for Comm 182/282. A course offered by Howard Rheingold at Stanford, Autumn, 2013

An aggregation site with data about the broader Fediverse and projects within it.

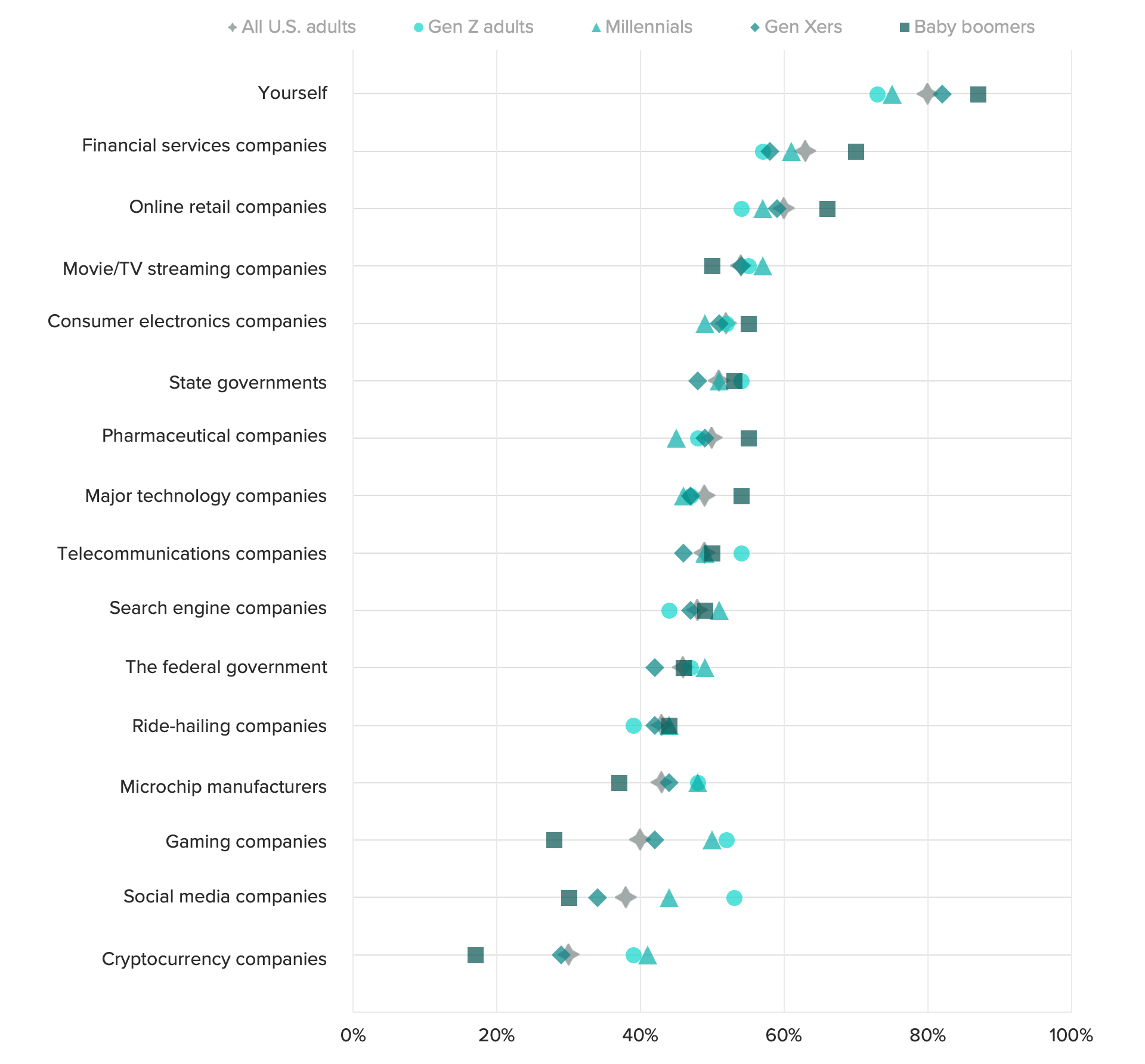

The notable exception: social media companies. Gen Zers are more likely to trust social media companies to handle their data properly than older consumers, including millennials, are.

For most categories of businesses, Gen Z adults are less likely to trust a business to protect the privacy of their data as compared to other generations. Social media is the one exception.

https://zettelkasten.social/about

Someone has registered the domain and it is hosted by masto.host, but not yet active as of 2022-11-13

Looking forward to many social media alternatives: Blue Sky, Matrix, and many others.

If wishing only made it happen...

meh... This looks dreadful...

Why not just use the built in rel-me verification available in Twitter directly with respect to individual websites?
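
For readers unfamiliar with rel-me, the idea is that a page links to one's other profiles with `rel="me"`, and a verifier checks that the link points back. Below is a minimal one-directional sketch using only Python's standard `html.parser`; the function names and the example URLs are hypothetical. A real verifier would fetch both pages and check both directions, which is omitted here.

```python
from html.parser import HTMLParser


class RelMeParser(HTMLParser):
    """Collect href values from <a> and <link> tags whose rel includes "me"."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag not in ("a", "link"):
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        href = attributes.get("href")
        if "me" in rel_values and href:
            self.links.append(href)


def rel_me_links(html):
    """Return every rel="me" link target found in the given HTML string."""
    parser = RelMeParser()
    parser.feed(html)
    return parser.links


def links_back(html, profile_url):
    """True if the page declares `profile_url` as a rel="me" link."""
    target = profile_url.rstrip("/")
    return any(link.rstrip("/") == target for link in rel_me_links(html))


if __name__ == "__main__":
    # Hypothetical personal homepage markup.
    page = '<a rel="me" href="https://example.social/@alice">Mastodon</a>'
    print(links_back(page, "https://example.social/@alice"))
```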

https://pruvisto.org/debirdify/

Tool for moving some of your Twitter data over to Mastodon or other parts of the Fediverse.

Any migration is likely to face many of the challenges previous platform migrations have faced: content loss, fragmented communities, broken social networks and shifted community norms.

By asking participants about their experiences moving across these platforms – why they left, why they joined and the challenges they faced in doing so – we gained insights into factors that might drive the success and failure of platforms, as well as what negative consequences are likely to occur for a community when it relocates.

DHS’s mission to fight disinformation, stemming from concerns around Russian influence in the 2016 presidential election, began taking shape during the 2020 election and over efforts to shape discussions around vaccine policy during the coronavirus pandemic. Documents collected by The Intercept from a variety of sources, including current officials and publicly available reports, reveal the evolution of more active measures by DHS. According to a draft copy of DHS’s Quadrennial Homeland Security Review, DHS’s capstone report outlining the department’s strategy and priorities in the coming years, the department plans to target “inaccurate information” on a wide range of topics, including “the origins of the COVID-19 pandemic and the efficacy of COVID-19 vaccines, racial justice, U.S. withdrawal from Afghanistan, and the nature of U.S. support to Ukraine.”

The U.S. Department of Homeland Security pivots from externally-focused terrorism to domestic social media monitoring.

A recent writer has called attention to a passage in Paxson's presidential address before the American Historical Association in 1938, in which he remarked that historians "needed Cheyney's warning . . . not to write in 1917 or 1918 what might be regretted in 1927 and 1928."

There are lessons in Frederic L. Paxson's 1938 address to the American Historical Association for today's social media culture and the growing realm of cancel culture when he remarked that historians "needed Cheyney's warning... not to write in 1917 or 1918 what might be regretted in 1927 and 1928."

Mastodon

https://mastodon.social/

Running Twitter is more complicated than you think.

Glasp is a startup competitor in the annotations space that appears to be a subsidiary web-based tool and response to a large portion of the recent spate of note taking applications.

Some of the first users and suggested users are names I recognize from this tools for thought space.

At first blush it looks like it's got a lot of the same features and functionality as Hypothes.is, but it also appears to have some slicker surfaces and user interface as well as a much larger emphasis on the social aspects (followers/following) and gamification (graphs for how many annotations you make, how often you annotate, streaks, etc.).

It could be an interesting experiment to watch the space and see how quickly it both scales as well as potentially reverts to the mean in terms of content and conversation given these differences. Does it become a toxic space via curation of the social features or does it become a toxic intellectual wasteland when it reaches larger scales?

What will happen to one's data (it does appear to be a silo) when the company eventually closes, shuts down, is acquihired, or otherwise goes away?

The team behind it is obviously aware of Hypothes.is as one of the first annotations presented to me is an annotation by Kei, a cofounder and PM at the company, on the Hypothes.is blog at: https://web.hypothes.is/blog/a-letter-to-marc-andreessen-and-rap-genius/

But this is true for Glasp. Science researchers/writers use it a lot on our service, too.—Kei

cc: @dwhly @jeremydean @remikalir

Edgerly noted that disinformation spreads through two ways: The use of technology and human nature. Click-based advertising, news aggregation, the process of viral spreading and the ease of creating and altering websites are factors considered under technology. “Facebook and Google prioritize giving people what they ‘want’ to see; advertising revenue (are) based on clicks, not quality,” Edgerly said. She noted that people have the tendency to share news and website links without even reading its content, only its headline. According to her, this perpetuates a phenomenon of viral spreading or easy sharing. There is also the case of human nature involved, where people are “most likely to believe” information that supports their identities and viewpoints, Edgerly cited. “Vivid, emotional information grabs attention (and) leads to more responses (such as) likes, comments, shares. Negative information grabs more attention than (the) positive and is better remembered,” she said. Edgerly added that people tend to believe in information that they see on a regular basis and those shared by their immediate families and friends.

Spreading misinformation and disinformation is really easy in this day and age because of how accessible information is and how much of it there is on the web. This is explained precisely by Edgerly. As noted in this part of the article, there is a business in the spread of disinformation, particularly in our country. There are people who pay what we call online trolls to spread disinformation and capitalize on how “chronically online” Filipinos are, among many other factors (e.g., most Filipinos’ information illiteracy due to poverty and lack of educational attainment, and how easy it is to interact with content we see online regardless of its authenticity). Disinformation also leads to misinformation through word of mouth. As stated by Edgerly in this article, “people tend to believe in information… shared by their immediate families and friends”; because it is human nature to trust information shared by loved ones, people who are not information literate will not question newly received information. Lastly, it most certainly does not help that social media algorithms nowadays rely on what users interact with; the more a user interacts with a certain kind of information, the more social media platforms will feed them that information. It does not help because not all social media websites have fact checkers, and users can freely spread disinformation if they choose to.

Trolls, in this context, are humans who hold accounts on social media platforms, more or less for one purpose: To generate comments that argue with people, insult and name-call other users and public figures, try to undermine the credibility of ideas they don’t like, and to intimidate individuals who post those ideas. And they support and advocate for fake news stories that they’re ideologically aligned with. They’re often pretty nasty in their comments. And that gets other, normal users, to be nasty, too.

Not only are programmed accounts created, but also troll accounts that propagate disinformation and spread fake news with the intent to cause havoc for everyone. In short, once they post a malicious comment, some people engage with it, which leads to more angry comments and disagreements. That is what they do: they provoke people into engaging with their comments so that those comments spread further and produce more fake news. These troll accounts are usually prominent during elections; in the Philippines, for example, some speculate that certain candidates have set up troll farms just to spread fake news across social media, which some people then engage with.

So, bots are computer algorithms (set of logic steps to complete a specific task) that work in online social network sites to execute tasks autonomously and repetitively. They simulate the behavior of human beings in a social network, interacting with other users, and sharing information and messages [1]–[3]. Because of the algorithms behind bots’ logic, bots can learn from reaction patterns how to respond to certain situations. That is, they possess artificial intelligence (AI).

In all honesty, since I don't usually dwell on technology, coding, and the like, I thought a "bot" was controlled by another user, an actual person. I never knew that bots are programmed and built to learn people's usual posting patterns, be it on Twitter, Facebook, or other social media platforms. I think it is important to properly understand how bots work in order to avoid misinformation and disinformation, especially during this time of heavy social media use.

Good overview article of some of the psychology research behind misinformation in social media spaces including bots, AI, and the effects of cognitive bias.

Probably worth mining the story for the journal articles and collecting/reading them.

Bots can also accelerate the formation of echo chambers by suggesting other inauthentic accounts to be followed, a technique known as creating “follow trains.”

We observed an overall increase in the amount of negative information as it passed along the chain—known as the social amplification of risk.

Could this be linked to my FUD thesis about decisions based on possibilities rather than realities?

We confuse popularity with quality and end up copying the behavior we observe.

Popularity ≠ quality in social media.

“Limited individual attention and online virality of low-quality information,” By Xiaoyan Qiu et al., in Nature Human Behaviour, Vol. 1, June 2017

The upshot of this paper seems to be "information overload alone can explain why fake news can become viral."

Running this simulation over many time steps, Lilian Weng of OSoMe found that as agents' attention became increasingly limited, the propagation of memes came to reflect the power-law distribution of actual social media: the probability that a meme would be shared a given number of times was roughly an inverse power of that number. For example, the likelihood of a meme being shared three times was approximately nine times less than that of its being shared once.
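A quick way to check the arithmetic in that example: if the probability of a meme being shared n times is proportional to n raised to the power -a, then "three shares is about nine times less likely than one share" corresponds to a = 2. The exponent 2 is inferred here from the quoted example, not stated in the article, and the function name and the cutoff of 1,000 shares are illustrative assumptions. A minimal sketch:

```python
def power_law_pmf(max_shares: int, exponent: float = 2.0) -> dict[int, float]:
    """Return P(n) proportional to n**(-exponent) for n = 1..max_shares.

    Both the exponent (2.0) and the cutoff are assumptions for illustration;
    the quoted passage only says the distribution is "roughly an inverse power".
    """
    weights = {n: n ** -exponent for n in range(1, max_shares + 1)}
    total = sum(weights.values())  # normalise so the probabilities sum to 1
    return {n: w / total for n, w in weights.items()}


pmf = power_law_pmf(1000)

# How much more likely is a single share than three shares?
ratio = pmf[1] / pmf[3]
print(f"P(1 share) / P(3 shares) = {ratio:.2f}")
```

The normalisation constant cancels in the ratio, so the result depends only on the exponent: with a = 2 the ratio is 3 squared, matching the "approximately nine times" in the passage.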

One of the first consequences of the so-called attention economy is the loss of high-quality information.

In the attention economy, social media is the equivalent of fast food. Just as we make time for fine dining or for healthier gourmet cooking at home, we need to make the time and effort to consume higher quality information sources. Books, journal articles, and longer forms of content, which receive more editing and review and take time and effort to produce, are better choices.

https://mleddy.blogspot.com/2005/05/tools-for-serious-readers.html

Interesting (now discontinued) reading list product from Levenger that in previous generations may have been covered by a commonplace book but was quickly replaced by digital social products (bookmark applications or things like Goodreads.com or LibraryThing.com).

Presently I keep a lot of this sort of data digitally myself using Calibre, Zotero, or both.

Indie sites can’t compete with that. And what good is hosting and controlling your own content if no one else looks at it? I’m driven by self-satisfaction and a lifelong archivist mindset, but others may not be similarly inclined. The payoffs here aren’t obvious in the short-term, and that’s part of the problem. It will only be when Big Social makes some extremely unpopular decision or some other mass exodus occurs that people lament about having nowhere else to go, no other place to exist. IndieWeb is an interesting movement, but it’s hard to find mentions of it outside of hippie tech circles. I think even just the way their “Getting Started” page is presented is an enormous barrier. A layperson’s eyes will 100% glaze over before they need to scroll. There is a lot of weird jargon and in-joking. I don’t know how to fix that either. Even as someone with a reasonably technical background, there are a lot of components of IndieWeb that intimidate me. No matter the barriers we tear down, it will always be easier to just install some app made by a centralised platform.

We’re trapped in a Never-Ending Now — blind to history, engulfed in the present moment, overwhelmed by the slightest breeze of chaos. Here’s the bottom line: You should prioritize the accumulated wisdom of humanity over what’s trending on Twitter.

Recency bias and social media will turn your daily inputs into useless, possibly rage-inducing, information.

Schafer, B. (2021, October 5). RT Deutsch Finds a Home with Anti-Vaccination Skeptics in Germany. Alliance For Securing Democracy. https://securingdemocracy.gmfus.org/rt-deutsch-youtube-antivaccination-germany/

Bevan, R. (2022, February 27). Discord Bans Covid-19 And Vaccine Misinformation. The Gamer. https://www.thegamer.com/discord-anti-vax-covid-19-misinformation-ban-community-guidelines/

Hunderte Menschen protestierten gegen Corona-Maßnahmen. (2021, November 28). www.t-online.de. https://www.t-online.de/-/91225726

Zaig, G. (n.d.). 20% of Americans believe microchips are inside COVID-19 vaccines—Study. The Jerusalem Post | JPost.Com. Retrieved July 21, 2021, from https://www.jpost.com/omg/20-percent-of-americans-believe-microchips-are-inside-covid-19-vaccine-study-674272

Harford, T. (2021, May 6). What magic teaches us about misinformation. https://www.ft.com/content/5cea69f0-7d44-424e-a121-78a21564ca35

How to report misinformation. (n.d.). Retrieved August 27, 2021, from https://www.who.int/campaigns/connecting-the-world-to-combat-coronavirus/how-to-report-misinformation-online