The AI-generated image of Neukgu had prompted Daejeon city government to issue an emergency text to residents, warning them of a wolf near the intersection.

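This description shows that the AI image played a direct role in misleading the authorities, raising concerns about the potential misuse of AI technology.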

That is a situation we are now living through, and it is no coincidence that the democratic conversation is breaking down all over the world because the algorithms are hijacking it. We have the most sophisticated information technology in history and we are losing the ability to talk with each other to hold a reasoned conversation.

for - progress trap - social media - misinformation - AI algorithms hijacking and pretending to be human

The more we flood the world with information, unless we make the effort to construct institutions that invest in truth, we'll be flooded by fiction and illusion and delusion and junk information.

for - quote - flooded with misinformation - Yuval Noah Harari - The more we flood the world with information, - unless we make the effort to construct institutions that invest in truth, - we'll be flooded by fiction, illusion, delusion and junk information.

First, he says, in the age of the typewriter—the twentieth century, more or less—there was a mythology that what was typewritten was true, that the machine somehow caused writers to bare their souls. This is a central idea of “The Iron Whim,”

via Darren Wershler-Henry's book

he is the most powerful person in the world himself he is the elite of all Elites so this elitist cabal if there were one he'd be a part of it right and and that's what breaks down their framework If there really were this deep state globalist cabal

for - youtube - Trump's Epstein Problem just got much worse! - polycrisis - misinformation - conspiracy theory - inconsistency with Trump now in power - If Deep State cabal existed and had all this power, why allow Trump to win? - Luke Beasley - 2025, Jan 30

Holy Moly. Zuckerberg goes all out Elon, joins hands with Trump and Meta content is heading to pretty much ‘anything goes’

for - post - LinkedIn - misinformation - Meta joins Elon Musk and Twitter no moderation - 2025, Jan 8

for - adjacency - climate crisis - raw milk - Bovaer food supplement for cows to lower methane emissions of farting cows - misinformation - far right conspiracy theories - deeper issue - blindspot revealed by anxiety - Deep Humanity - progress traps

for - misinformation - twitter - how the platform amplifies misinformation

during these 200 years the main effects of print on Europe was a wave of Wars of religion and witch hunts and things like that because the big best sellers of early modern Europe were not Copernicus and Galileo Galilei almost nobody read them the big best sellers were religious tracts and were uh witch hunting manuals

for - Gutenberg printing press - initial use - misinformation - witchhunting manuals were the best sellers - Yuval Noah Harari

The song's criticism on mass media is mainly related to sensationalism.

"Good" things are usually not sensational. They do not demand attention, hence why the code of known/unknown based on selectors for attention filters it out.

Reference Hans-Georg Moeller's explanations of Luhmann's mass media theory based on functionally differentiated systems theory.

Can also compare to Simone Weil's thoughts on collectives and opinion; organizations (thus most part of mass media) should not be allowed to form opinions as this is an act of the intellect, only residing in the individual. Opinion of any form meant to spread lies or parts of the truth rather than the whole truth should be disallowed according to her because truth is a foundational, even the most sacred, need for the soul.

People must be protected against misinformation.

A Lie Can Travel Halfway Around the World While the Truth Is Putting On Its Shoes by [[Quote Investigator]]

Venkataraman, Bina. “How Latino Influencers Are Fighting Misinformation in South Florida.” The Washington Post, May 30, 2024, sec. Opinion. https://www.washingtonpost.com/opinions/2024/05/30/misinformation-spanish-latinos-south-florida/.

the latest trend in investment to fight online rumors, which is far too focused on technological fixes for what is fundamentally a human problem.

Often, because misinformation is spread using technological means, we focus on using technology to dampen the spread of rumors. The root of the problem is really a human one, however, so it may help significantly to focus efforts on that locus.

Link to Swift quote about lies and truth: https://hypothes.is/a/Ys0x_m65Ee6GOPtD0OROJw

The South Florida influencers, for instance, heard a rumor circulating that the government had put microchips in the coronavirus vaccine so it could track people.

Notice that many fake news stories begin from a place of fear. This fear hijacks our brains, triggers fight-or-flight responses in our system I circuitry, and actively prevents the use of the rational parts of system II, which would quickly reveal problems in the information.

The formula: Empathize with a concern instead of shaming people or telling them they are wrong. And acknowledge — rather than ignore — the kernels of truth that make false claims seem convincing.

A formula for fighting misinformation: empathize, don't shame, acknowledge kernels of truth

Presumably this came out of the Information Futures Lab, but it would be nice to have the source and related research.

Information Futures Lab at Brown University

The Guardian has obtained a detailed document on the PR and disinformation strategy of the United Arab Emirates and COP28 president Sultan Al Jaber. The so-called sensitive issues include the expansion of the Emirates' oil and gas production. At the center of the strategy is evidently the effort to address the burning of fossil fuels as little as possible. https://www.theguardian.com/environment/2023/aug/01/leak-uae-presidency-un-climate-summit-oil-gas-emissions-yemen

Historians pilloried the show as historically illiterate, but they were missing the point. It wasn’t really about the past. It was a blueprint for the future.

Bad history can be paraded under the umbrella of propaganda, not to serve to tell the story of the past, but to tell a story about a coming future.

‘speak truth to power,’

simultaneously one should speak truth to the dis-empowered and dis-enfranchised to rise up against those who have power as a check to that power.

we have a media that needs to survive based on clicks and controversy and serving the most engaged people

for - quote - roots of misinformation, quote - roots of fake news, key insight - roots of misinformation

key insight - roots of misinformation - (see below)

quote - roots of misinformation - we have a media that needs to survive based on - clicks and - controversy and - serving the most engaged people - so they both sides the issues - they they lift up - facts and - lies - as equivalent in order to claim no bias but - that in itself is a bias because - it gives more oxygen to the - lies and - the disinformation - that is really dangerous to our society and - we are living through the impacts of - those errors and - that malpractice -done by media in America

for - misinformation - media misinformation

Because, scholars are annotators.

Recently saw some original Copernicus books "annotated" (read censored) by the church.

Ain’t I A Woman

Famously misquoted, the earliest most accurate transcription of her speech does not include the line "Ain't I a woman?" The closest line is "I am a woman's rights." https://www.thesojournertruthproject.com/compare-the-speeches/

Uncontrolled self-replication in these newer technologies runs a much greater risk: a risk of substantial damage in the physical world.

As a case in point, the self-replication of misinformation on social media networks has become a substantial physical risk in the early 21st century, causing not only swings in elections, but riots, takeovers, swings in the stock market (GameStop short squeeze, January 2021), and mob killings. It is incredibly difficult to create risk assessments for these sorts of future harms.

In biology, we see major damage to a wide variety of species as the result of uncontrolled self-replication. We call it cancer.

We also see programmed processes in biological settings including apoptosis and necrosis as means of avoiding major harms. What might these look like with respect to artificial intelligence?

Mind1, which refers to the neurocognitive activity that allows you to behave in the world.

for: hard problem of consciousness - UTok, question - consciousness - UTok mind 1a, Gregg Henriques

comment

Ashby's law of requisite variety may also be at play for overloading our system 1 heuristic abilities with respect to misinformation (particularly in high velocity social media settings). Switching context from system 1 to system 2 on a constant basis to fact check everything in our (new digital) immediate environment can be very mentally and emotionally taxing. This can result in both mental exhaustion as well as anxiety.

The disaster of misinformation: a review of research in social media

The article is considered a primary source because, while it refers to several other sources, it generates primary information on this particular subject. The study focuses on social media misinformation during the COVID-19 pandemic and aims to explore its characteristics, determinants, impacts, theoretical perspectives, and strategies for halting its spread. Authors Muhamed and Mathew explain how misinformation is propagated by several causes: anxiety, which drives efforts to fill in missing information; source ambiguity, where the trustworthiness of the origin of the information is unclear; personal involvement; social ties; confirmation bias, which reinforces preexisting beliefs; attractiveness of the platform; illiteracy; ease of sharing options; and device attachment. In terms of mitigation, the study suggests early communication to public officials and health professionals, use of scientific evidence for fact-checking, rumor refutation, and intervention on misinformation by experts, all of which are aligned to the same goal. The study draws on 28 sources to discuss misinformation using a systematic literature review. Tables and graphs accompany the sources and give an overview of the results. While the discussion is rich in explanations and details, the study is limited by its focus on specific domains.

https://context.center/topics/misinformation/#dealing-with-misinformation

https://checkpleasecc.notion.site/checkpleasecc/Check-Please-Starter-Course-ae34d043575e42828dc2964437ea4eed

Besides, as the vilest Writer has his Readers, so the greatest Liar has his Believers; and it often happens, that if a Lie be believ'd only for an Hour, it has done its Work, and there is no farther occasion for it. Falsehood flies, and Truth comes limping after it; so that when Men come to be undeceiv'd, it is too late, the Jest is over, and the Tale has had its Effect: Like a Man who has thought of a good Repartee, when the [...] like a Physician who has found out an infallible Medicine, after the Patient is dead

Falsehood flies, and Truth comes limping after it;<br /> —Jonathan Swift, “The Examiner, From Thursday Nov 2 to Thursday Nov 9, 1710.” In The Examiner [Afterw.] The Whig Examiner, edited by Joseph Addison and Jonathan Swift, Vol. 15. London: John Morphew, near Stationers Hall, 1710.

found via https://quoteinvestigator.com/2014/07/13/truth/

with variations on "A lie travels around the globe while the truth is putting on its shoes." attributed variously to Mark Twain, Jonathan Swift, Thomas Francklin, Fisher Ames, Thomas Jefferson, John Randolph, Charles Haddon Spurgeon, Winston Churchill, Terry Pratchett?

But the last question, What of it?, requires considerable restraint on the part of the reader. It is here that the situation we described earlier may occur, namely, the situation in which the reader says, "I cannot fault the author's conclusions, but I nevertheless disagree with them." This comes about, of course, because of the prejudgments that the reader is likely to have concerning the author's approach and his conclusions.

How to protect against these sorts of outcomes? Relation to identity and cognitive biases?

According to YouTube chief product officer Neal Mohan, 70 percent of views on YouTube are from recommendations—so the site’s algorithms are largely responsible for amplifying RT’s propaganda hundreds of millions of times.

These algorithms are invisible, but they have an outsized impact on shaping individuals’ experience online and society at large.

"... I am willing to believe that history is for the most part inaccurate and biased, but what is peculair to our own age is the abandonment of the idea that history could be truthfully written."—George Orwell

check source and verify text <br /> (8:42)

favela, also spelled favella

This is incorrect. "Favela" is NEVER spelled "favella". "Favela" is a Portuguese word. According to Portuguese spelling and pronunciation rules, a double-L should NOT be used in that word.

Also known as: favella

This is incorrect. "Favela" is not known as "favella".

Amazon has become a marketplace for AI-produced tomes that are being passed off as having been written by humans, with travel books among the popular categories for fake work.

Timmy Broderick in Evidence Undermines ‘Rapid Onset Gender Dysphoria’ Claims In Scientific American at 2023-08-24 (accessed: 2023-08-25 09:26:00)

The instinctual BS-meter is not enough. The next version of the ‘BS-meter’ will need to be technologically based. The tricks of misinformation have far outstripped the ability of people to reliably tell whether they are receiving BS or not – not to mention that it requires a constant state of vigilance that’s exhausting to maintain. I think that the ability and usefulness of the web to enable positive grassroots civic communication will be harnessed, moving beyond mailing lists and fairly static one-way websites.

Upton Sinclair ran for Governor of California in the 1930s, and the coverage he received from newspapers was unsympathetic. Yet, in 1934 some California papers published installments from his forthcoming book about the ill-fated campaign titled “I, Candidate for Governor, and How I Got Licked”. Emphasis added to excerpts by QI:[1] 1934 December 11, Oakland Tribune, I, Candidate for Governor and How I Got Licked by Upton Sinclair, Quote Page 19, Column 3, Oakland, California. (Newspapers_com) I used to say to our audiences: “It is difficult to get a man to understand something, when his salary depends upon his not understanding it!”

via https://quoteinvestigator.com/2017/11/30/salary/

Some underlying tension for the question of identity/misinformation and personal politics vis-a-vis work and one's identity generated via work.

https://twitter.com/TheGreenLineTO

Local storytelling creating identity.

Suggested by Aram Zucker-Scharff

The reporter concerned, a tweedy pipe smoker named James Gibbins, was later found to have concocted a myriad similarly implausible stories. Among them: A survey conducted at New York’s Algonquin Hotel showing that watching half an hour of As the World Turns had the same narcoleptic effect as downing three vodka-and-tonics. A bar in Laurel, Maryland, that kept a pet monkey, with free drinks given to the first customer on whom the creature sat. Pigeons in Cornwall seen sporting human heads. Mr. Gibbins was exposed as “The Faker of Fleet Street” in an article in the Washington Post, and he was relieved of his job as a Mail foreign correspondent.

The reiteration of slogans, the distortion of the news, the great storm of propaganda that beats upon the citizen twenty-four hours a day all his life long mean either that democracy must fall a prey to the loudest and most persistent propagandists or that the people must save themselves by strengthening their minds so that they can appraise the issues for themselves.

Things have not improved measurably since the 1950s apparently. The drumbeat has only gotten worse.

Climate misinformation in a climate of misinformation

United States biomedical researchers and pharmaceutical companies are conducting and paying African doctors to conduct unethical and illegal testing of human subjects. Nonconsensual research on human subjects is an atrocity that occurred in Tuskegee, Alabama, and in Guatemala for over forty years. Once outlawed in the U.S., medical researchers began experimenting on thousands of human research subjects without their consent in Cameroon, Ghana, Namibia, Nigeria, Uganda, South Africa, Zimbabwe and other African countries.

Content that is borderline makes it into a designated Spam folder, where masochists can read through it if they choose. And legitimate companies that use spammy email marketing tactics are penalized, so they’re incentivized to be on their best behavior.

I believe that seeing false news in the same light as spam is a better way to look at identifying the problem. This may help decrease some of the damage posed by false news for consumers who aren't well-versed in spotting disinformation on the internet. Most individuals can read an email in their inbox claiming that they won the million-dollar lottery and still classify it as spam because they recognize the all too familiar ploy. If we applied this approach to disinformation on social media, I believe it would help people become more familiar with the lies spread on the internet.

https://www.nytimes.com/live/2023/04/24/business/tucker-carlson-fox-news

Following the settlement with Dominion Voting Systems last week, Tucker Carlson is out at Fox News.

Lying press (German: Lügenpresse, lit. 'press of lies') is a pejorative and disparaging political term used largely for the printed press and the mass media at large. It is used as an essential part of propaganda and is thus usually dishonest or at least not based on careful research.

Now, I've made a number of documentaries about fake news. And what interests me is the first person to use the phrase mainstream media was Joseph Goebbels. And he, in one of his propaganda sheets, said “It's very important that you don't read the mainstream media because they'll tell you lies.” You must read the truth by the ramblings of his boss and his associated work. And you do have to watch this. This is a very, very well-established technique of fascists, is to tell you, don't read this stuff, read our stuff.<br /> —Ian Hislop, Editor, Private Eye Magazine 00:16:00, Satire in the Age of Murdoch and Trump, The Problem with Jon Stewart Podcast

He said one upside for publishers was that audiences might soon find it harder to know what information to trust on the web, so “they’ll have to go to trusted sources.”

That seems somewhat comically optimistic. Misinformation has spread rampantly online without the accelerant of AI.

The sheer volume exceeds the possibilities of book publication; the complex, multidimensional structure of a networked information base cannot be reproduced in print; and finally, the dynamics of a steadily growing and steadily corrected body of material do not fit the rigid rhythm of book production, in which every expanded and corrected new edition involves immense effort. A book publication could only ever offer a snapshot of such a database, reduced to a particular perspective. That too can be very useful now and then, but it does not solve the problem of publishing the material as a whole.

link to https://hypothes.is/a/U95jEs0eEe20EUesAtKcuA

Is this phenomenon of "complex narratives" related to misinformation spread within the larger and more complex social network/online network? At small, local scales, people know how to handle data and information which is locally contextualized for them. On larger internet-scale communication social platforms this sort of contextualization breaks down.

For a lack of a better word for this, let's temporarily refer to it as "complex narratives" to get a handle on it.

Title: Fox News producer files explosive lawsuits against the network, alleging she was coerced into providing misleading Dominion testimony

// - This is an example of how big media corporations can deceive the public and compromise the truth - It helps create a nation of misinformed people which destabilizes political governance - the workspace sounds toxic - the undertone of this story: the pathological transformation of media brought about by capitalism - it is the need for ratings, which is the indicator for profit in the marketing world, that has corrupted the responsibility to report truthfully - making money becomes the consumerist dream at the expense of all else of intrinsic value within a culture - knowledge is what enables culture to exist, modernity is based on cumulative cultural evolution - this is an example of NON-conscious cumulative cultural evolution or pathological cumulative cultural evolution

subtitle: why most climate scientists can’t tell the truth (in public) Author: Jackson Damien

This is a good article written from a psychotherapist's perspective.

People know it’s bad but not how bad. This gap in understanding remains wide enough for denialists and minimisers to legitimise inadequate action under the camouflage of empty eco-jargon and false optimism. This gap allows nations, corporations and individuals to remain distracted by short-term crises, which, however serious, pale into insignificance compared with the unprecedented threat of climate change.

And it constitutes an important but overlooked signpost in the 20th-century history of information, as ‘facts’ fell out of fashion but big data became big business.

Of course the hardest problem in big data has come to be realized as the issue of cleaning up messy and misleading data!

Deutsch’s index and his ‘facts’, then, seemed to his students to embody a moral value in addition to epistemological utility.

Beyond their epistemological utility do zettelkasten also "embody a moral value"? Jason Lustig argues that they may have in the teaching context of Gotthard Deutsch where the reliance on facts was of extreme importance for historical research.

Some of this is also seen in Scott Scheper's religious framing of zettelkasten method though here the aim has a different focus.

He tried to show that this ‘favorite topic’ of his, ‘insistence on exactness in chronological dates’, amounted to more than a trifling (Deutsch, 1915, 1905a). Deutsch compared such historical accuracy to that of a bookkeeper who might recall his ledger by memory. ‘People would look upon such an achievement’, he reflected, ‘as a freak, harmless, but of no particular value, in fact rather a waste of mental energy’ (Deutsch, 1916). However, he sought to show that these details mattered, no different from how ‘a difference in a ledger of one cent remains just as grievous as if it were a matter of $100,000’ (Deutsch, 1904a: 3).

Interesting statement about how much memory matters, though it's missing some gravitas somehow.

Is there more in the original source?

‘Anecdotes’, he concluded, ‘have their historic value, if properly tested’ – reflecting both his interest in details and also the need to ascertain whether they were true (Deutsch, 1905b).

“It makes me feel like I need a disclaimer because I feel like it makes you seem unprofessional to have these weirdly spelled words in your captions,” she said, “especially for content that's supposed to be serious and medically inclined.”

Where's the balance for professionalism with respect to dodging the algorithmic filters for serious health-related conversations online?

One of the most well-documented shortcomings of large language models is that they can hallucinate. Because these models have no direct knowledge of the physical world, they're prone to conjuring up facts out of thin air. They often completely invent details about a subject, even when provided a great deal of context.

PolitiFact - People are using coded language to avoid social media moderation. Is it working?<br /> by Kayla Steinberg<br /> November 4, 2021

Moran said the codes themselves may end up limiting the reach of misinformation. As they get more cryptic, they become harder to understand. If people are baffled by a unicorn emoji in a post about COVID-19, they might miss or dismiss the misinformation.

"The coded language is effective in that it creates this sense of community," said Rachel Moran, a researcher who studies COVID-19 misinformation at the University of Washington. People who grasp that a unicorn emoji means "vaccination" and that "swimmers" are vaccinated people are part of an "in" group. They might identify with or trust misinformation more, said Moran, because it’s coming from someone who is also in that "in" group.

A shared language and even more specifically a coded shared language can be used to create a sense of community or define an in group identity.

https://www.thecrimson.com/article/2023/2/2/donovan-forced-leave-hks/

This is a massive loss for HKS, but a potential major win for the school that picks the project up.

It seems to be a sad use of "rules" to shut down a project which may not jibe with an administration's perspective/needs.

Read on Fri 2023-02-03 at 7:14 PM

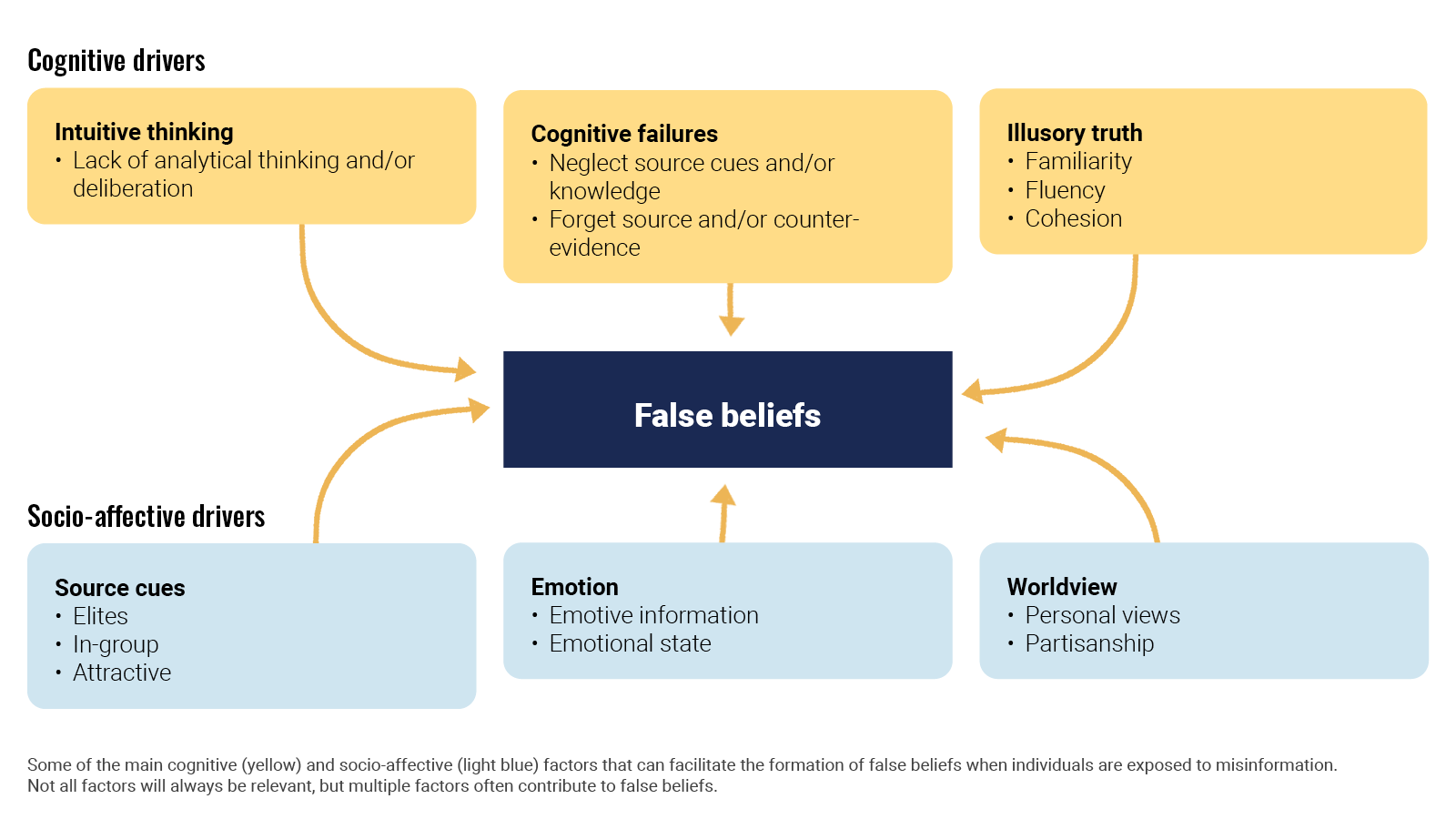

The uptake of mis- and disinformation is intertwined with the way our minds work. The large body of research on the psychological aspects of information manipulation explains why.

In an article for Nature Reviews Psychology, Ullrich K. H. Ecker et al looked at the cognitive, social, and affective factors that lead people to form or even endorse misinformed views. Ironically enough, false beliefs generally arise through the same mechanisms that establish accurate beliefs. It is a mix of cognitive drivers like intuitive thinking and socio-affective drivers. When deciding what is true, people are often biased to believe in the validity of information and to trust their intuition instead of deliberating. Also, repetition increases belief in both misleading information and facts.

Ecker, U.K.H., Lewandowsky, S., Cook, J. et al. (2022). The psychological drivers of misinformation belief and its resistance to correction.

Going a step further, Álex Escolà-Gascón et al investigated the psychopathological profiles that characterise people prone to consuming misleading information. After running a number of tests on more than 1,400 volunteers, they concluded that people with high scores in schizotypy (a condition not too dissimilar from schizophrenia), paranoia, and histrionism (more commonly known as dramatic personality disorder) are more vulnerable to the negative effects of misleading information. People who do not detect misleading information also tend to be more anxious, suggestible, and vulnerable to strong emotions.

Furthermore, our results add to the growing body of literature documenting—at least at this historical moment—the link between extreme right-wing ideology and misinformation [8,14,24] (although, of course, factors other than ideology are also associated with misinformation sharing, such as polarization [25] and inattention [17,37]).

Misinformation exposure and extreme right-wing ideology appear associated in this report. Others find that it is partisanship that predicts susceptibility.

We also find evidence of “falsehood echo chambers”, where users that are more often exposed to misinformation are more likely to follow a similar set of accounts and share from a similar set of domains. These results are interesting in the context of evidence that political echo chambers are not prevalent, as typically imagined

Aligned with prior work finding that people who identify as conservative consume [15], believe [24], and share more misinformation [8,14,25], we also found a positive correlation between users’ misinformation-exposure scores and the extent to which they are estimated to be conservative ideologically (Fig. 2c; b = 0.747, 95% CI = [0.727, 0.767], SE = 0.010, t(4332) = 73.855, p < 0.001), such that users estimated to be more conservative are more likely to follow the Twitter accounts of elites with higher fact-checking falsity scores. Critically, the relationship between misinformation-exposure score and quality of content shared is robust controlling for estimated ideology

highlights the need for public health officials to disseminate information via multiple media channels to increase the chances of accessing vaccine resistant or hesitant individuals.

Across the Irish and UK samples, similarities and differences emerged regarding those in the population who were more likely to be hesitant about, or resistant to, a vaccine for COVID-19. Three demographic factors were significantly associated with vaccine hesitance or resistance in both countries: sex, age, and income level. Compared to respondents accepting of a COVID-19 vaccine, women were more likely to be vaccine hesitant, a finding consistent with a number of studies identifying sex and gender-related differences in vaccine uptake and acceptance [37,38]. Younger age was also related to vaccine hesitance and resistance.

There were no significant differences in levels of consumption and trust between the vaccine accepting and vaccine hesitant groups in the Irish sample. Compared to vaccine hesitant responders, vaccine resistant individuals consumed significantly less information about the pandemic from television and radio, and had significantly less trust in information disseminated from newspapers, television broadcasts, radio broadcasts, their doctor, other health care professionals, and government agencies.

Censorship and Information Control During Information Revolutions: Exploring how new information technologies from the printing press to the digital age have stimulated new forms of censorship and information control.

https://voices.uchicago.edu/censorship/

Related YouTube channel/videos: https://www.youtube.com/channel/UCeNP7NIWmB70wFBv9QolYkg

The Gish gallop /ˈɡɪʃ ˈɡæləp/ is a rhetorical technique in which a person in a debate attempts to overwhelm their opponent by providing an excessive number of arguments with no regard for the accuracy or strength of those arguments. In essence, it is prioritizing quantity of one's arguments at the expense of quality of said arguments. The term was coined in 1994 by anthropologist Eugenie Scott, who named it after American creationist Duane Gish and argued that Gish used the technique frequently when challenging the scientific fact of evolution. It is similar to another debating method called spreading, in which one person speaks extremely fast in an attempt to cause their opponent to fail to respond to all the arguments that have been raised.

I'd always known this was a thing, but didn't have a word for it.

https://docs.google.com/document/d/163G79vq-mFWjIqMb9AzYGbr5Y8YMGcpbSzJRutO8tpw/edit

Howard Rheingold, et al. A Guide to Crap Detection Resources

Our familiarity with these elements makes the overall story seem plausible, even—or perhaps especially—when facts and evidence are in short supply.

Storytelling tropes play into our system one heuristics and cognitive biases by riding on the coattails of familiar story plotlines we've come to know and trust.

What are the ways out of this trap? Creating lists of tropes which should trigger our system one reactions to switch into system two thinking patterns? Can we train ourselves away from these types of misinformation?

As part of the Election Integrity Partnership, my team at the Stanford Internet Observatory studies online rumors, and how they spread across the internet in real time.

Something similar! Here it is: https://t.co/x1DPx9dm0P

— Renee DiResta (@noUpside) November 26, 2022

Lilienfeld, S. O., Sauvigné, K. C., Lynn, S. J., Cautin, R. L., Latzman, R. D., & Waldman, I. D. (2015). Fifty psychological and psychiatric terms to avoid: a list of inaccurate, misleading, misused, ambiguous, and logically confused words and phrases. Frontiers in Psychology. https://doi.org/10.3389/fpsyg.2015.01100

Trope, trope, trope, strung into a Gish Gallop.

One of the issues we see in the Sunday morning news analysis shows (Meet the Press, Face the Nation, et al.) is that there is usually a large amount of context collapse mixed with lack of general knowledge about the topics at hand compounded with large doses of Gish Gallop and F.U.D. (fear, uncertainty, and doubt).

a more nuanced view of context.

Almost every new technology goes through a moral panic phase where the unknown is used to spawn potential backlashes against it. Generally these disappear with time and familiarity with the technology.

Bicycles cause insanity, for example...

Why do medicine and vaccines not follow more of this pattern? Is it a general lack of science literacy that prevents them from becoming familiar for some?

What if instead of addressing individual pieces of misinformation reactively, we instead discussed the underpinnings — preemptively?

Perhaps we might more profitably undermine misinformation by dismantling the underlying tropes that underpin it?

And that, perhaps, is what we might get to via prebunking. Not so much attempts to counter or fact-check misinfo on the internet, but defanging the tropes that underpin the most recurringly manipulative claims so that the public sees, recognizes, & thinks:

And that, perhaps, is what we might get to via prebunking. Not so much attempts to counter or fact-check misinfo on the internet, but defanging the tropes that underpin the most recurringly manipulative claims so that the public sees, recognizes, & thinks:😬

— Renee DiResta (@noUpside) June 19, 2021

suspect evaded Colorado’s red flag gun law

If you read lower in the article you'll see that the headline is a blatant lie.

The Gov failed to prosecute a violent person, so AP spins it as if this guy "evaded" (which is an action).

One can't evade a law that is never applied against them.

Under President Joe Biden, the shifting focus on disinformation has continued. In January 2021, CISA replaced the Countering Foreign Influence Task force with the “Misinformation, Disinformation and Malinformation” team, which was created “to promote more flexibility to focus on general MDM.” By now, the scope of the effort had expanded beyond disinformation produced by foreign governments to include domestic versions. The MDM team, according to one CISA official quoted in the IG report, “counters all types of disinformation, to be responsive to current events.” Jen Easterly, Biden’s appointed director of CISA, swiftly made it clear that she would continue to shift resources in the agency to combat the spread of dangerous forms of information on social media.

These definitions from earlier in the article:

* misinformation (false information spread unintentionally)
* disinformation (false information spread intentionally)
* malinformation (factual information shared, typically out of context, with harmful intent)
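As a rough illustration, the three definitions can be read as a small decision rule over three questions: is the information false, is it spread intentionally, and is it shared with harmful intent? The sketch below is only one possible reading of the definitions quoted above. The names `InfoType` and `classify` are hypothetical and do not come from the article or from CISA.

```python
from enum import Enum


class InfoType(Enum):
    MISINFORMATION = "misinformation"
    DISINFORMATION = "disinformation"
    MALINFORMATION = "malinformation"
    NONE = "none of the three"


def classify(is_false: bool, spread_intentionally: bool, harmful_intent: bool) -> InfoType:
    """Map the three quoted definitions onto simple yes/no inputs.

    Assumption: each input is a judgment made by a human reviewer;
    nothing here detects falsity or intent automatically.
    """
    if is_false:
        # False information: what separates the two labels is intent.
        if spread_intentionally:
            return InfoType.DISINFORMATION
        return InfoType.MISINFORMATION
    if harmful_intent:
        # Factual information shared (typically out of context) to cause harm.
        return InfoType.MALINFORMATION
    return InfoType.NONE


if __name__ == "__main__":
    print(classify(is_false=True, spread_intentionally=False, harmful_intent=False).value)
    print(classify(is_false=True, spread_intentionally=True, harmful_intent=True).value)
    print(classify(is_false=False, spread_intentionally=True, harmful_intent=True).value)
```

Read this way, the hard part in practice is not the rule but its inputs: both falsity and intent have to be judged by someone before any label can be applied.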

Today, the people in politics who most often invoke the name of Jesus for their political causes tend to be the most merciless and judgmental, the most consumed by rage and fear and vengeance. They hate their enemies, and they seem to want to make more of them. They claim allegiance to the truth and yet they have embraced, even unwittingly, lies. They have inverted biblical ethics in the name of biblical ethics.

The Internet offers voters in any country an easily accessible and streamlined way to obtain election information, news, and updates. On the other side of that coin lies the opportunity for anti-democratic actors to grow and professionalize digital manipulation campaigns. In the Philippines, a 2020 Oxford Internet Institute survey found that government agencies, politicians, CSOs, and political parties had all personally conducted or hired private firms to conduct digital manipulation campaigns.

The fact that the Internet is accessible to virtually anyone today makes it among the most exploitable tools for politicians. However, that in itself does not explain why it became a pivotal element of the recent elections. Many Filipinos believe misinformation because they are unable to fact-check information on the Internet. Normally, fact-checking should be something individuals can do as a byproduct of critical thinking skills honed through their formal education. Unfortunately, the implementation of Philippine education is not conducive to the practice of critical thinking for a number of reasons, such as the lack of teachers and learning facilities. Moreover, socioeconomic factors prevent people from focusing on their education. Substandard education and an unstable economy may explain why, in addition to falling for misinformation, people choose to propagate it in exchange for financial stability, thus increasing the number of perpetrators that those with ulterior motives can use for their own ends.

Edgerly noted that disinformation spreads through two ways: The use of technology and human nature. Click-based advertising, news aggregation, the process of viral spreading and the ease of creating and altering websites are factors considered under technology. “Facebook and Google prioritize giving people what they ‘want’ to see; advertising revenue (are) based on clicks, not quality,” Edgerly said. She noted that people have the tendency to share news and website links without even reading its content, only its headline. According to her, this perpetuates a phenomenon of viral spreading or easy sharing. There is also the case of human nature involved, where people are “most likely to believe” information that supports their identities and viewpoints, Edgerly cited. “Vivid, emotional information grabs attention (and) leads to more responses (such as) likes, comments, shares. Negative information grabs more attention than (the) positive and is better remembered,” she said. Edgerly added that people tend to believe in information that they see on a regular basis and those shared by their immediate families and friends.

Spreading misinformation and disinformation is really easy in this day and age because of how accessible information is and how much of it there is on the web. Edgerly explains this precisely. As noted in this part of the article, there is a business in spreading disinformation, particularly in our country. There are people who pay what we call online trolls to spread disinformation and capitalize on how “chronically online” Filipinos are, among many other factors (e.g., most Filipinos’ information illiteracy due to poverty and lack of educational attainment, and how easy it is to interact with content we see online regardless of its authenticity). Disinformation also leads to misinformation through word of mouth. As Edgerly states in this article, “people tend to believe in information… shared by their immediate families and friends”; because it is human nature to trust information shared by loved ones, people who are not information literate will not question what they have newly received. Lastly, it certainly does not help that social media algorithms rely on what users interact with: the more a user interacts with certain information, the more of it social media platforms will feed them. Nor does it help that not all social media websites have fact-checkers, so users can freely spread disinformation if they choose to.

"In 2013, we spread fake news in one of the provinces I was handling," he says, describing how he set up his client's opponent. "We got the top politician's cell phone number and photo-shopped it, then sent out a text message pretending to be him, saying he was looking for a mistress. Eventually, my client won."

This statement from a man who claims to work for politicians as an internet troll and propagator of fake news was really striking, because it shows how fabricating something out of the blue can have a profound impact on elections, something that is supposed to be a democratic process. Now more than ever, mudslinging in popular information spaces like social media can easily sway public opinion (or confirm it). We have seen this during the election season, wherein Leni Robredo bore the brunt of outrageous rumors; one rumor I remember well was that Leni had supposedly once been married to an NPA member and had a kid with him. It is tragic that misinformation and disinformation are no longer a mere phenomenon, but a full-blown industry. It has a tight clutch on the decisions people make for the country, while also deeply affecting their values and beliefs.

Trolls, in this context, are humans who hold accounts on social media platforms, more or less for one purpose: To generate comments that argue with people, insult and name-call other users and public figures, try to undermine the credibility of ideas they don’t like, and to intimidate individuals who post those ideas. And they support and advocate for fake news stories that they’re ideologically aligned with. They’re often pretty nasty in their comments. And that gets other, normal users, to be nasty, too.

Not only are programmed accounts created, but so are troll accounts that propagate disinformation and spread fake news with the intent to cause havoc. In short, once they post a malicious comment, some people engage with it, which leads to more angry comments and disagreements. That is what they do: they provoke people into engaging with their comments so that those comments spread further and produce more fake news. These troll accounts are usually prominent during elections; in the Philippines, for example, some speculate that certain candidates have set up troll farms just to spread fake news across social media, which some people then engage with.

So, bots are computer algorithms (set of logic steps to complete a specific task) that work in online social network sites to execute tasks autonomously and repetitively. They simulate the behavior of human beings in a social network, interacting with other users, and sharing information and messages [1]–[3]. Because of the algorithms behind bots’ logic, bots can learn from reaction patterns how to respond to certain situations. That is, they possess artificial intelligence (AI).

In all honesty, since I don't usually dwell on technology, coding, and the like, I thought a "bot" was controlled by another user, an actual person. I never knew that it was programmed and created to learn the usual posting patterns of people, be it on Twitter, Facebook, or other social media platforms. I think it is important to properly understand how bots work in order to avoid misinformation and disinformation, especially during this time of prominent social media use.

On a volume basis, hydrogen is one of the least energy dense fuels. One liter of hydrogen contains only 25% of the energy of one liter of gasoline and only 20% of the energy of one liter of diesel fuel.

It's the vapors of gasoline and diesel fuel that burn, so measuring energy density by liquid volume for fuels that burn in vapor form, but not doing the same for hydrogen, is simply dishonest.

They all burn as a vapor and store and transport well as a liquid. If you compare them all based on the gas volume when they are burned, Hydrogen is still by far the most energy dense.

It's the capacity to store hydrogen that makes the other fuels fuel at all, so it's hard to improve on 100% hydrogen for doing that.
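For reference, the per-liter comparison in the quoted passage can be roughly reproduced from heating values and liquid densities. The sketch below uses approximate textbook figures that are assumptions, not numbers from the quoted article, and the `Fuel` class is a hypothetical helper. The exact percentages shift with the values chosen, so no expected output is shown.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fuel:
    name: str
    lhv_mj_per_kg: float     # lower heating value (approximate, assumed)
    density_kg_per_l: float  # density as a stored liquid (approximate, assumed)

    @property
    def mj_per_liter(self) -> float:
        """Energy per liter of stored liquid."""
        return self.lhv_mj_per_kg * self.density_kg_per_l


# Assumed round figures; published values vary by source and fuel grade.
HYDROGEN = Fuel("liquid hydrogen", 120.0, 0.071)
GASOLINE = Fuel("gasoline", 44.0, 0.745)
DIESEL = Fuel("diesel", 43.0, 0.832)


def volumetric_ratio(a: Fuel, b: Fuel) -> float:
    """Energy per liter of `a` as a fraction of energy per liter of `b`."""
    return a.mj_per_liter / b.mj_per_liter


if __name__ == "__main__":
    for fuel in (HYDROGEN, GASOLINE, DIESEL):
        print(f"{fuel.name}: {fuel.lhv_mj_per_kg:.0f} MJ/kg, {fuel.mj_per_liter:.1f} MJ/L")
    print(f"hydrogen vs gasoline, per liter: {volumetric_ratio(HYDROGEN, GASOLINE):.0%}")
    print(f"hydrogen vs diesel, per liter: {volumetric_ratio(HYDROGEN, DIESEL):.0%}")
```

Under these assumed values, hydrogen comes out highest per kilogram and lowest per liter of liquid, which is why the choice of basis matters so much to the argument.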

Could the maintenance of these myths actually be useful for particularly powerful constituencies? Does the continuation of these myths serve a purpose or function for other segments of the American population? If so, who and what might that be?

Good overview article of some of the psychology research behind misinformation in social media spaces including bots, AI, and the effects of cognitive bias.

Probably worth mining the story for the journal articles and collecting/reading them.

In a recent laboratory study, Robert Jagiello, also at Warwick, found that socially shared information not only bolsters our biases but also becomes more resilient to correction.

Even our ability to detect online manipulation is affected by our political bias, though not symmetrically: Republican users are more likely to mistake bots promoting conservative ideas for humans, whereas Democrats are more likely to mistake conservative human users for bots.

“Limited individual attention and online virality of low-quality information,” By Xiaoyan Qiu et al., in Nature Human Behaviour, Vol. 1, June 2017

The upshot of this paper seems to be "information overload alone can explain why fake news can become viral."

vaccines

Vaccines are "administered primarily to prevent disease." https://www.britannica.com/science/vaccine

Thusly, a "vaccine" that does not actually prevent disease is, by definition, not a vaccine but marketing spin aimed at enriching the manufacturer's shareholders.

We know that "vaccines" are not effective as vaccines because it is so common for people who are "fully vaxxed and boosted" to announce that they have become infected with the pathogen the "vaccine" is claimed to protect against.

The highly scientific reaction is to respond "Well, just imagine how bad it could have been if they weren't vaccinated" which is an unfalsifiable unscientific claim.

Schafer, B. (2021, October 5). RT Deutsch Finds a Home with Anti-Vaccination Skeptics in Germany. Alliance For Securing Democracy. https://securingdemocracy.gmfus.org/rt-deutsch-youtube-antivaccination-germany/

Warner, E. L., Barbati, J. L., Duncan, K. L., Yan, K., & Rains, S. A. (2022). Vaccine misinformation types and properties in Russian troll tweets. Vaccine. https://doi.org/10.1016/j.vaccine.2021.12.040

Laurent, C. de S., Murphy, G., Hegarty, K., & Greene, C. (2021). Measuring the effects of misinformation exposure on behavioural intentions. PsyArXiv. https://doi.org/10.31234/osf.io/2xngy

Bevan, R. (2022, February 27). Discord Bans Covid-19 And Vaccine Misinformation. The Gamer. https://www.thegamer.com/discord-anti-vax-covid-19-misinformation-ban-community-guidelines/

Caulfield, T. (2017, October 24). The Vaccination Picture by Timothy Caulfield. Penguin Random House Canada. https://www.penguinrandomhouse.ca/books/565776/the-vaccination-picture-by-timothy-caulfield/9780735234994

Notion – The all-in-one workspace for your notes, tasks, wikis, and databases. (n.d.). Notion. Retrieved 14 February 2022, from https://www.notion.so

With Only 26% of Pregnant People in the United States Vaccinated Against COVID-19, New Survey Sheds Light on the Reasons Why. (n.d.). Retrieved November 1, 2021, from https://www.mavenclinic.com/post/covid-19-vaccine-survey-pregnant-people?utm_content=185156625&utm_medium=social&utm_source=twitter&hss_channel=tw-2236392565

De Block Golding, D. (2021, April 7). Viral video contains several false pandemic claims. Full Fact. https://fullfact.org/health/viral-video-contains-several-false-pandemic-claims/

Roth, E. (2021, October 30). Facebook puts tighter restrictions on vaccine misinformation targeted at children. The Verge. https://www.theverge.com/2021/10/30/22754046/facebook-tighter-restrictions-vaccine-misinformation-children

Johnson, S. (2021, June 7). Spat at, abused, attacked: Healthcare staff face rising violence during Covid. The Guardian. http://www.theguardian.com/global-development/2021/jun/07/spat-at-abused-attacked-healthcare-staff-face-rising-violence-during-covid

DemTech | COVID-19 Misinformation Newsletter 24 August 2021. (2021, August 24). https://demtech.oii.ox.ac.uk/covid-19-misinformation-newsletter-24-august-2021/#continue

Cubbon, S. (2021, March 17). Fringe communities feed on RT coverage to undermine Covid-19 vaccinations. First Draft. https://firstdraftnews.org:443/articles/rt-fringe-undermine-covid-vaccinations/

Zaig, G. (n.d.). 20% of Americans believe microchips are inside COVID-19 vaccines—Study. The Jerusalem Post | JPost.Com. Retrieved July 21, 2021, from https://www.jpost.com/omg/20-percent-of-americans-believe-microchips-are-inside-covid-19-vaccine-study-674272

Harford, T. (2021, May 6). What magic teaches us about misinformation. https://www.ft.com/content/5cea69f0-7d44-424e-a121-78a21564ca35

Borger, J. (2021, October 29). Covid bioweapon claims ‘scientifically invalid’, US intelligence reports. The Guardian. https://www.theguardian.com/world/2021/oct/29/us-intelligence-report-covid-origins

How to report misinformation. (n.d.). Retrieved August 27, 2021, from https://www.who.int/campaigns/connecting-the-world-to-combat-coronavirus/how-to-report-misinformation-online

Swire-Thompson, B., Miklaucic, N., Wihbey, J., Lazer, D., & DeGutis, J. (2021). Backfire effects after correcting misinformation are strongly associated with reliability. PsyArXiv. https://doi.org/10.31234/osf.io/e3pvx

Swire-Thompson, B., Cook, J., Butler, L., Sanderson, J., Lewandowsky, S., & Ecker, U. (2021). Correction Format has a Limited Role when Debunking Misinformation. PsyArXiv. https://doi.org/10.31234/osf.io/gwxe4

Epstein, Z., Berinsky, A., Cole, R., Gully, A., Pennycook, G., & Rand, D. (2021). Developing an accuracy-prompt toolkit to reduce COVID-19 misinformation online. PsyArXiv. https://doi.org/10.31234/osf.io/sjfbn

Karan, A. (2022). We cannot afford to repeat these four pandemic mistakes. BMJ, 376, o631. https://doi.org/10.1136/bmj.o631

ReconfigBehSci. (2022, January 3). masking is not an “unevidenced intervention” and, at this point, it is outright disinformation to claim so. Sad coming from an academic at a respectable institution [Tweet]. @SciBeh. https://twitter.com/SciBeh/status/1478003733518819334

Meet the media startups making big money on vaccine conspiracies. (n.d.). Fortune. Retrieved December 23, 2021, from https://fortune.com/2021/05/14/disinformation-media-vaccine-covid19/

Petersen, M. B., Rasmussen, M. S., Lindholt, M. F., & Jørgensen, F. J. (2021). Pandemic Fatigue and Populism: The Development of Pandemic Fatigue during the COVID-19 Pandemic and How It Fuels Political Discontent across Eight Western Democracies. PsyArXiv. https://doi.org/10.31234/osf.io/y6wm4

Desai, S. C., & Reimers, S. (2022). Does explaining the origins of misinformation improve the effectiveness of a given correction? PsyArXiv. https://doi.org/10.31234/osf.io/fxkzc

Kahne and Bowyer (2017) exposed thousands of young people in California to some true messages and some false ones, similar to the memes they may see on social media

Many U.S. educators believe that increasing political polarization combine with the hazards of misinformation and disinformation in ways that underscore the need for learners to acquire the knowledge and skills required to navigate a changing media landscape (Hamilton et al. 2020a)

Find common ground. Clear away the kindling. Provide context...don't de-platform.

You have three options:

1. Continue fighting fires with hordes of firefighters (in this analogy, fact-checkers).
2. Focus on the arsonists (the people spreading the misinformation) by alerting the town they're the ones starting the fire (banning or labeling them).
3. Clear the kindling and dry brush (teach people to spot lies, think critically, and ask questions).

Right now, we do a lot of #1. We do a little bit of #2. We do almost none of #3, which is probably the most important and the most difficult. I’d propose three strategies for addressing misinformation by teaching people to ask questions and spot lies.

Simply put, the threat of "misinformation" being spread at scale is not novel or unique to our generation—and trying to slow the advances of information sharing is futile and counter-productive.

It’s worth reiterating precisely why: The very premise of science is to create a hypothesis, put that hypothesis up to scrutiny, pit good ideas against bad ones, and continue to test what you think you know over and over and over. That’s how we discovered tectonic plates and germs and key features of the universe. And oftentimes, it’s how we learn from great public experiments, like realizing that maybe paper or reusable bags are preferable to plastic.

develop a hypothesis, and pit different ideas against one another

All of these approaches tend to be built on an assumption that misinformation is something that can and should be censored. On the contrary, misinformation is a troubling but necessary part of our political discourse. Attempts to eliminate it carry far greater risks than attempts to navigate it, and trying to weed out what some committee or authority considers "misinformation" would almost certainly restrict our continued understanding of the world around us.

To start, it is worth defining “misinformation”: Simply put, misinformation is “incorrect or misleading information.” This is slightly different from “disinformation,” which is “false information deliberately and often covertly spread (by the planting of rumors) in order to influence public opinion or obscure the truth.” The notable difference is that disinformation is always deliberate.

Five biggest myths about the COVID-19 vaccines, debunked. (n.d.). Fortune. Retrieved April 29, 2022, from https://fortune.com/2021/10/02/five-biggest-myths-covid-19-vaccines/

Michael Armstrong [@ArmstrongGN]. (2021, September 29). NBA player says he doesn’t need vaccine… 40-thousand likes and 1.4 million views. Scientist/doctor corrects NBA player… 4-thousand likes. We’re so screwed… [Tweet]. Twitter. https://twitter.com/ArmstrongGN/status/1443052037160251392

Dr. Deepti Gurdasani [@dgurdasani1]. (2021, October 30). A very disturbing read on the recent JCVI minutes released. They seem to consider immunity through infection in children advantageous, discussing children as live “booster” vaccines for adults. I would expect this from anti-vaxx groups, not a scientific committee. [Tweet]. Twitter. https://twitter.com/dgurdasani1/status/1454383106555842563

Sir Karam Bales ✊ 🇺🇦. (2022, January 29). 1/🧵Some questionable stats, studies and statements over past 6 week Examples and evaluation First of all its worth looking at the impact some studies and articles have had in recent months https://t.co/o83T5fJW2N [Tweet]. @karamballes. https://twitter.com/karamballes/status/1487240863835119617

Kolina Koltai, PhD [@KolinaKoltai]. (2021, September 27). When you search ‘Covid-19’ on Amazon, the number 1 product is from known antivaxxer Dr. Mercola. 4 out of the top 8 items are either vaccine opposed/linked to conspiratorial narratives about covid. Amazon continues to be a venue for vaccine misinformation. Https://t.co/rWHhZS8nPl [Tweet]. Twitter. https://twitter.com/KolinaKoltai/status/1442545052954202121

ReconfigBehSci on Twitter: “RT @CaulfieldTim: Incredible how ‘natural immunity’ topic continually misrepresented by #antivaxx community. The usual suspects (e.g., th…” / Twitter. (n.d.). Retrieved January 21, 2022, from https://twitter.com/SciBeh/status/1474060743217754115

Katherine Ognyanova. (2022, February 15). Americans who believe COVID vaccine misinformation tend to be more vaccine-resistant. They are also more likely to distrust the government, media, science, and medicine. That pattern is reversed with regard to trust in Fox News and Donald Trump. Https://osf.io/9ua2x/ (5/7) https://t.co/f6jTRWhmdF [Tweet]. @Ognyanova. https://twitter.com/Ognyanova/status/1493596109926768645

Nerd, G. M.-K. H. (2021, December 22). Of Course Unvaccinated People Should Get Medical Care. Medium. https://gidmk.medium.com/of-course-unvaccinated-people-should-get-medical-care-34b26ae7eaa4

Dr. Jonathan N. Stea. (2021, January 25). Covid-19 misinformation? We’re over it. Pseudoscience? Over it. Conspiracies? Over it. Want to do your part to amplify scientific expertise and evidence-based health information? Join us. 🇨🇦 Follow us @ScienceUpFirst. #ScienceUpFirst https://t.co/81iPxXXn4q. Https://t.co/mIcyJEsPXe [Tweet]. @jonathanstea. https://twitter.com/jonathanstea/status/1353705111671869440

Kit Yates. (2021, September 27). This is absolutely despicable. This bogus “consent form” is being sent to schools and some are unquestioningly sending it out with the real consent form when arranging for vaccination their pupils. Please spread the message and warn other parents to ignore this disinformation. Https://t.co/lHUvraA6Ez [Tweet]. @Kit_Yates_Maths. https://twitter.com/Kit_Yates_Maths/status/1442571448112013319

John West 🕯💙. (2021, February 5). Today is a good day In terms of one less source of misinformation https://t.co/VGUvcqkMWV [Tweet]. @JohnWest_JAWS. https://twitter.com/JohnWest_JAWS/status/1357814722482089985

ReconfigBehSci. (2021, February 17). The global infodemic has driven trust in all news sources to record lows with social media (35%) and owned media (41% the least trusted; traditional media (53%) saw largest drop in trust at 8 points globally. Https://t.co/C86chd3bb4 [Tweet]. @SciBeh. https://twitter.com/SciBeh/status/1362022502743105541

ReconfigBehSci. (2021, February 17). The Covid-19 pandemic has accelerated the erosion of trust around the world: Significant drop in trust in the two largest economies: The U.S. (40%) and Chinese (30%) governments are deeply distrusted by respondents from the 26 other markets surveyed. 1/2 https://t.co/C86chd3bb4 [Tweet]. @SciBeh. https://twitter.com/SciBeh/status/1362021569476894726

A_thankless_task. (2021, September 27). @judysimpson222 @KatyMcconkey @beverleyturner Just for anyone interested in why this is a piece of crap. Https://t.co/2XmuuImYzy [Tweet]. @AThankless. https://twitter.com/AThankless/status/1442586893502140417

James 💙 Neill—😷 🇪🇺🇮🇪🇬🇧🔶 on Twitter: “The domain sending that fake NHS vaccine consent hoax form to schools has been suspended. Excellent work by @martincampbell2 and fast co-operation by @kualo 👍 FYI @fascinatorfun @Kit_Yates_Maths @dgurdasani1 @AThankless https://t.co/pbAgNfkbEs” / Twitter. (n.d.). Retrieved November 22, 2021, from https://twitter.com/jneill/status/1442784873014566913

ReconfigBehSci. (2020, November 10). Now #scibeh2020: Presentation and Q&A with Martha Scherzer, senior risk communication and community engagement (RCCE) Consultant at the World Health Organization https://t.co/Gsr66BRGcJ [Tweet]. @SciBeh. https://twitter.com/SciBeh/status/1326148149870809089

Matthew Hodson. (2021, February 14). Doctors @vanessa_apea and @crageshri busting myths about COVID-19 vaccination. #aidsmapLIVE https://t.co/AgJemVJXWV [Tweet]. @Matthew_Hodson. https://twitter.com/Matthew_Hodson/status/1360856207242768384

Mike Caulfield. (2021, March 10). One of the drivers of Twitter daily topics is that topics must be participatory to trend, which means one must be able to form a firm opinion on a given subject in the absence of previous knowledge. And, it turns out, this is a bit of a flaw. [Tweet]. @holden. https://twitter.com/holden/status/1369551099489779714

Amy Maxmen, PhD. (2020, August 26). 🙄The CDC’s only substantial communication with the public in the pandemic is through its MMW Reports. But the irrelevant & erroneous 1st line of this latest report suggests political meddling to me. (The WHO doesn’t declare pandemics. They declare PHEICs, which they did Jan 30) https://t.co/Y1NlHbQIYQ [Tweet]. @amymaxmen. https://twitter.com/amymaxmen/status/1298660729080356864

ReconfigBehSci on Twitter: ‘RT @MDaware: But 3 people had URI symptoms in november https://t.co/JcAom73VzM’ / Twitter. (n.d.). Retrieved 21 June 2021, from https://twitter.com/SciBeh/status/1402049602015023106

Mills, M. C., & Sivelä, J. (2021). Should spreading anti-vaccine misinformation be criminalised? BMJ, 372, n272. https://doi.org/10.1136/bmj.n272

Stefan Simanowitz. (2021, March 18). 1/. The PM claims that the govt “stuck to the science like glue” But this is not true At crucial times they ignored the science or concocted pseudo-scientific justifications for their actions & inaction This thread, & the embedded threads, set them out https://t.co/dhXqkSL1bz [Tweet]. @StefSimanowitz. https://twitter.com/StefSimanowitz/status/1372460227619135493

Adam Kucharski. (2020, December 13). I’ve turned down a lot of COVID-related interviews/events this year because topic was outside my main expertise and/or I thought there were others who were better placed to comment. Science communication isn’t just about what you take part in – it’s also about what you decline. [Tweet]. @AdamJKucharski. https://twitter.com/AdamJKucharski/status/1338079300097077250

The Troll Zoo. (2021, May 4). 3. As an example, this popular post amended the headline of a Guardian story, to say that Devi Sridhar had claimed that ‘coronavirus can infect camels’. Https://t.co/6lRPYNZgdQ [Tweet]. @TrollZoo. https://twitter.com/TrollZoo/status/1389547190863994882

ReconfigBehSci on Twitter: ‘RT @MollyJongFast: One of the most powerful conservative celebrities is actively working against vaccinations https://t.co/Kz8hJuLQtK’ / Twitter. (n.d.). Retrieved 16 June 2021, from https://twitter.com/SciBeh/status/1389984427158110216

ReconfigBehSci. (2021, April 23). I’m starting the critical examination of the success of behavioural science in rising to the pandemic challenge over the last year with the topic of misinformation comments and thoughts here and/or on our reddits 1/2 https://t.co/sK7r3f7mtf [Tweet]. @SciBeh. https://twitter.com/SciBeh/status/1385631665175896070

Youyang Gu. (2021, May 25). Is containing COVID-19 a requirement for preserving the economy? My analysis suggests: Probably not. In the US, there is no correlation between Covid deaths & changes in unemployment rates. However, blue states are much more likely to have higher increases in unemployment. 🧵 https://t.co/JrikBtawEb [Tweet]. @youyanggu. https://twitter.com/youyanggu/status/1397230156301930497

Dr. Syra Madad. (2021, February 7). What we hear most often “talk to your health care provider if you have any questions/concerns on COVID19 vaccines” Vs Where many are actually turning to for COVID19 vaccine info ⬇️ This is also why it’s so important for the media to report responsibly based on science/evidence [Tweet]. @syramadad. https://twitter.com/syramadad/status/1358509900398272517

EU DisinfoLab. (2021, March 9). 🗣️In this week’s #IWD2021 update: We highlight initiatives promoting gender equality and women safeguarding our digital information ecosystem. We also acknowledging the rise in online GBV and the threat of gendered disinformation. ▶️https://disinfo.eu/outreach/our-newsletter/disinfo-update-09-03-2021 #WomeninOSINT [Tweet]. @DisinfoEU. https://twitter.com/DisinfoEU/status/1369198151639441411

David Leonhardt. (2021, February 19). For weeks, the public messages about vaccines have been more negative than the facts warrant. Now we are seeing the cost: A large percentage of Americans wouldn’t take a vaccine if offered one. 🧵... [Tweet]. @DLeonhardt. https://twitter.com/DLeonhardt/status/1362767520764203011

ReconfigBehSci on Twitter. (n.d.). Twitter. Retrieved 8 November 2021, from https://twitter.com/SciBeh/status/1444360973750444032

Multiple issues with @scotgov assessment of vaccine passports. 1. No evidence that passports will decrease cases at venues (just an infographic!). This is a complex modelling issue that must also account for waning immunity and possibility of more unvaxxed in other settings

ReconfigBehSci on Twitter: ‘@Holdmypint @ollysmithtravel @AllysonPollock Omicron might be changing things- the measure has to be evaluated relative to the situation in Austria at the time, not Ireland 3 months later with a different variant’ / Twitter. (n.d.). Retrieved 25 March 2022, from https://twitter.com/SciBeh/status/1487130621696741388

Carl T. Bergstrom. (2022, January 11). Curious if this what @Twitter meant when they talked about their commitment to combat covid disinformation. https://t.co/sxrhNVTFW8 [Tweet]. @CT_Bergstrom. https://twitter.com/CT_Bergstrom/status/1480760362496446464

ReconfigBehSci [@SciBeh]. (2022, January 28). @ollysmithtravel @AllysonPollock that is a policy alternative one could consider- whether it’s more or less effective, more or less equitable, or even implementable in the current Austrian health care framework would need careful consideration.... None of that saves the argument in the initial tweet [Tweet]. Twitter. https://twitter.com/SciBeh/status/1487043954654818316

ReconfigBehSci. (2022, February 4). RT @CT_Bergstrom: An unusual degree of self-awareness from the twitter sidebar. https://t.co/kqQBcscd4r [Tweet]. @SciBeh. https://twitter.com/SciBeh/status/1489492019928080386

Gideon M-K; Health Nerd. (2022, March 2). Focused Protection From the Great Barrington Declaration Never Made Sense. Medium. https://gidmk.medium.com/focused-protection-from-the-great-barrington-declaration-never-made-sense-416b86ac5f06

Social Media Conversations in Support of Herd Immunity are Driven by Bots. (n.d.). Federation Of American Scientists. Retrieved March 31, 2022, from https://fas.org/blogs/fas/2020/10/social-media-conversations-in-support-of-herd-immunity-are-driven-by-bots/

ReconfigBehSci. (2022, March 14). RT @jitsuvax: https://hackmd.io/@scibehC19vax/home Short update to the @jitsuvax and @SciBeh COVID-19 Communication Handbook. 🥪 Using the Fact-Sandwich… [Tweet]. @SciBeh. https://twitter.com/SciBeh/status/1503641642129145857

🦇Amy Lasky, MD 🦇. (2021, October 8). If anyone likes anecdotes and has concerns about the covid vaccine and fertility—Let me tell you without question it does NOT cause infertility 👀 [Tweet]. @AmyLaskyMD. https://twitter.com/AmyLaskyMD/status/1446569443035914248

ReconfigBehSci on Twitter: ‘this really is now a disinformation account. I retweeted posts earlier in the pandemic as part of a balanced spread of opinion. But this will be the last one...’ / Twitter. (n.d.). Retrieved 29 March 2022, from https://twitter.com/SciBeh/status/1478485258395951108

Eric Nelson. (2021, November 20). RFK jr’s book alleging Bill Gates funded fake negative hydroxycholoroquine studies to rob us of a Covid miracle cure is now #1 on Amazon. [Tweet]. @literaryeric. https://twitter.com/literaryeric/status/1462065394802429960

Prof. Christina Pagel 🇺🇦. (2021, December 7). This is what it feels like again https://xkcd.com/2278/ https://t.co/q6XyUTYiPe [Tweet]. @chrischirp. https://twitter.com/chrischirp/status/1468184343399084034

ReconfigBehSci. (2022, January 6). RT @GidMK: Perhaps unsurprisingly, this is an absolutely awful study filled with issues and numeric mistakes https://t.co/hvEv5gMMn2 [Tweet]. @SciBeh. https://twitter.com/SciBeh/status/1478987492552589314

Prof. Gavin Yamey MD MPH. (2021, December 29). Good things sometimes do happen One of the world’s worst peddlers of dangerous vaccine disinformation His supporters will scream “censorship!” but I for one am happy that his horrific nonsense about vaccines won’t feature on Twitter https://t.co/9DvateIuDG [Tweet]. @GYamey. https://twitter.com/GYamey/status/1476283673376956422

Prof Peter Hotez MD PhD. (2021, December 30). When the antivaccine disinformation crowd declares twisted martyrdom when bumped from social media or condemned publicly: They contributed to the tragic and needless loss of 200,000 unvaccinated Americans since June who believed their antiscience gibberish. They’re the aggressors [Tweet]. @PeterHotez. https://twitter.com/PeterHotez/status/1476393357006065670

Abdul-Jabbar, K. (2021, November 8). Aaron Rodgers Didn’t Just Lie [Substack newsletter]. Kareem Abdul-Jabbar. https://kareem.substack.com/p/aaron-rodgers-didnt-just-lie

ReconfigBehSci. (2021, December 20). RT @CaulfieldTim: Timothy Caulfield: Misinformation – Vaccines, Vaccine Hesitancy & Media https://youtu.be/wQSIo1AmQMw via @CARPNews @Zoomer… [Tweet]. @SciBeh. https://twitter.com/SciBeh/status/1472984068291764224

How Fauci fooled America | Opinion. (2021, November 1). Newsweek. https://www.newsweek.com/how-fauci-fooled-america-opinion-1643839

Myths about COVID-19 vaccination. (n.d.). HackMD. Retrieved March 23, 2022, from https://hackmd.io/@scibehC19vax/misinfo_myths

John Bye. (2022, January 6). Despite repeatedly being proven wrong by subsequent events, covid disinformation groups like HART have constantly been given a platform on TV and radio throughout the pandemic. Even after #hartleaks revealed many of their members to be anti-vax conspiracy cranks. 🧵2: Broadcast https://t.co/I3unq04gij [Tweet]. @_johnbye. https://twitter.com/_johnbye/status/1479202308139409413

ReconfigBehSci on Twitter: ‘RT @amymaxmen: Link to the meeting: https://t.co/3UH1R8fblN’ / Twitter. (n.d.). Retrieved 22 March 2022, from https://twitter.com/SciBeh/status/1486268859741052930

Bad Vaccine Takes. (2022, January 26). This account is fake https://t.co/r5SXmzuPQj [Tweet]. @BadVaccineTakes. https://twitter.com/BadVaccineTakes/status/1486445924071129097

‘Freedom convoy’ forums find new focus: Disinformation about Russia-Ukraine war. (n.d.). Global News. Retrieved 21 March 2022, from https://globalnews.ca/news/8659667/ukraine-russia-convoy-misinformation-conspiracy/

Quinn, E. K., Fenton, S., Ford-Sahibzada, C. A., Harper, A., Marcon, A. R., Caulfield, T., Fazel, S. S., & Peters, C. E. (2022). COVID-19 and Vitamin D Misinformation on YouTube: Content Analysis. JMIR Infodemiology, 2(1), e32452. https://doi.org/10.2196/32452

Arechar, A. A., Allen, J. N. L., Berinsky, A., Cole, R., Epstein, Z., Garimella, K., … Rand, D. G. (2022, February 11). Understanding and Reducing Online Misinformation Across 16 Countries on Six Continents. https://doi.org/10.31234/osf.io/a9frz

Kim Reynolds ending COVID disaster declaration, shutting down vaccination and case count websites. (n.d.). Des Moines Register. Retrieved March 14, 2022, from https://www.desmoinesregister.com/story/news/politics/2022/02/03/iowa-governor-kim-reynolds-end-covid-disaster-policies-vaccination-case-websites-omicron/6653655001/

ReconfigBehSci. (2022, February 15). RT @Ryanair: We’re not an airline but we do fly planes #Djokovic https://t.co/wivO3L2dTp [Tweet]. @SciBeh. https://twitter.com/SciBeh/status/1493901621578936324

George Washington University. (n.d.). Facebook’s vaccine misinformation policy reduces anti-vax information. Retrieved March 7, 2022, from https://medicalxpress.com/news/2022-03-facebook-vaccine-misinformation-policy-anti-vax.html

“One man’s dirty trickster is another man’s freedom fighter,” he wrote in his 2018 book “Stone’s Rules,” a collection of career lessons including how voters will believe a “big lie” if it is kept simple and repeated often enough.

The Family Soup Company. (2022, February 19). A lesson in how misinformation becomes fact in too many minds. Thread: Meet @SaraCarterDC. Her bio says she’s an award winning correspondent who works with @FoxNews. Three hours ago, Sara tweeted that someone in the occupier demo died after police on horses pushed through. 1/ https://t.co/fpaYDctQVn [Tweet]. @mypoortiredsoul. https://twitter.com/mypoortiredsoul/status/1494912722156331008

Fidalgo, P. (2022, February 22). How the Hell Did It Get This Bad? Timothy Caulfield Battles the Infodemic, March 3 | Center for Inquiry. https://centerforinquiry.org/news/how-the-hell-did-it-get-this-bad-timothy-caulfield-battles-the-infodemic-march-3/

Dan Freedman, DO. (2022, February 19). No, the CDC did not quietly revise language development guidelines to hide mask induced delays. This is misinformation. The change is based on a 15 year update on the 2004 recs & a lit review performed in 2019 with the explicit goal of identifying higher risk kids. 1/ https://t.co/TlV76bIb7n [Tweet]. @dfreedman7. https://twitter.com/dfreedman7/status/1494846691752751104

Tyler Black, MD. (2022, January 25). /1 Hi Lucy and your colleagues. Your advocacy toolkit contains poorly sourced, contexted, and biased information on mental health during the pandemic/schooling. And I have receipts too! (Thread) #urgencyofnormal https://t.co/JeWKE0iGn1 [Tweet]. @tylerblack32. https://twitter.com/tylerblack32/status/1486111652076527623