It demonstrated incredible generalization. Without any retraining, TRINITY transferred zero-shot to four unseen tasks

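The authors stress that their system generalizes zero-shot to new tasks without any retraining. This contrasts sharply with the mainstream practice of fine-tuning AI models for specific tasks, and it advances a counterintuitive view of generalization.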

non-expert humans comfortably exceed 60%

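[Insight] A 120-fold gap between humans and machines means that current gains in AI reasoning are "optimization over known patterns" rather than "genuine generalization of inductive reasoning." This directly challenges every product claim that "AI is already close to human level": expectations for AGI timelines need to be recalibrated, not adjusted incrementally.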

The fact that the RL model has larger improvements on Levenshtein Distance and Added Cognitive Complexity than on Pass@1 is further evidence that it is not just memorizing corruption reversals but has actually generalized to minimal editing.

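Most people assume that reinforcement learning models can only memorize specific cases, but the authors find that on the minimal-editing task the RL model not only memorizes but also generalizes to a broader range of scenarios.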
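For readers unfamiliar with the Levenshtein Distance metric mentioned in the quote, here is a minimal sketch of the standard dynamic-programming edit distance. It is the textbook algorithm, not the paper's evaluation code; the function name `levenshtein` and the character-level granularity are assumptions made for illustration.

```python
def levenshtein(a: str, b: str) -> int:
    """Character-level edit distance (insertions, deletions, substitutions)."""
    # prev[j] holds the distance between the processed prefix of a and b[:j].
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]  # distance from a[:i] to the empty string
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(
                prev[j] + 1,         # delete ca
                curr[j - 1] + 1,     # insert cb
                prev[j - 1] + cost,  # substitute, or keep a matching character
            ))
        prev = curr
    return prev[-1]
```

A one-character fix such as `levenshtein("return a - b", "return a + b")` gives 1, while a full rewrite of the same line would score much higher, which is why a low distance is read as a sign of minimal editing.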

Learning fields turns S-parameter extrapolation into something closer to an in-distribution task.

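A very thought-provoking point. Traditional ML models often fail on unseen structures because, viewed through S-parameters, the prediction is "extrapolation." But the underlying electromagnetic fields always obey the same Maxwell's equations. By learning the fields, the model captures universal physical laws and thereby turns an apparent "extrapolation" into physics-based "interpolation," challenging the preconception that ML can only interpolate.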

We find internal representations of emotion concepts, which encode the broad concept of a particular emotion and generalize across contexts and behaviors it might be linked to.

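The study finds that "emotion concept vectors" exist inside Claude and generalize across contexts: the same "fear" vector activates both when fear is expressed directly and when a dangerous situation is merely implied. This suggests the model has learned abstract concepts of emotions rather than surface patterns, closely mirroring how human neuroscience understands emotion. It is surprising that such structure emerges spontaneously.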

How do we know all this? My work focuses on tests of adult knowledge — what adults retain after graduation. The general pattern is that grown-ups have shockingly little academic knowledge. College graduates know about what you’d expect high school graduates to know; high school graduates know about what you’d expect dropouts to know; dropouts know next to nothing. This doesn’t mean that these students never knew more; it just means that only a tiny fraction of what they learn durably stays in their heads.

Week 9 - In this paragraph the author says that he works on testing adults' knowledge and what they have retained after graduation. He states that knowledge levels are below where they are expected to be. I believe he is making a generalization here. The sample size of his research is not included, which leaves many undefined variables; the results may give a confirming impression that would not hold if the study were expanded. While he may have the credentials for it, statistics are not shown or given context, and therefore I do not find the inference appropriate.

here are several ways I have found useful to invite the sociological imagination:

C. Wright Mills delineates a rough definition of "sociological imagination" which could be thought of as a framework within tools for thought:

1. Combinatorial creativity
2. Diffuse thinking, flâneur
3. Changing perspective (how would x see this?). Writing dialogues is a useful method to accomplish this. (He doesn't state it, but acting as a devil's advocate is a useful technique here as well.)
4. Collecting and laying out all the multiple viewpoints and arguments on a topic. (This might presume the method of devil's advocate I mentioned above 😀)
5. Play and exploration with words and terms
6. Watching levels of generality and breaking things down into smaller constituent parts or building blocks. (This also might benefit from abstracting ideas from one space to another.)
7. Categorization or casting ideas into types
8. Cross-tabulating and creation of charts, tables, and diagrams or other visualizations
9. Comparative cases and examples - finding examples of an idea in other contexts and time settings for comparison and contrast
10. Extreme types and opposites (or polar types) - coming up with the most extreme examples of comparative cases or opposites of one's idea. (cross reference: Compass Points https://hypothes.is/a/Di4hzvftEeyY9EOsxaOg7w and thinking routines). This includes creating dimensions of study on an object - what axes define it? What indices can one find data or statistics on?
11. Create historical depth - examples may be limited in number, so what might exist in the historical record to provide depth.

Of course, there is a utopian aspect to abolitionist thinking.

I believe this is a generalization the author is making. Nothing is utopian, especially policies in a society or government.

This isn't to say that on a case by case basis there aren't modules that are grossly overcomplicated.

Hasty generalization usually follows the pattern:

Hasty generalization is the fallacy of examining just one or very few examples or studying a single case, and generalizing that to be representative of the whole class of objects or phenomena.

While other monads will embody different logical processes, and some may have extra properties, all of them will have three similar components (directly or indirectly) that follow the basic outline of this example.

Just saying “snaps are slow” is not helpful to anyone. Because frankly, they’re not. Some might be, but others aren’t. Using blanket statements which are wildly inaccurate will not help your argument. Bring data to the discussion, not hearsay or hyperbole.

Blanket statements are never useful. They are nebulous and often send the wrong message. They seed doubt and mistrust and are usually intended to make a grand point about how right the person making the statement might be. They tend to be self-serving even when outwardly it doesn’t appear that way. In other words, we make blanket statements because we want to make a point that makes us look right and therefore look good in the position we are taking.

A blanket statement is a sentence that assumes as truth that something applies to absolutely everything it is discussing. As an example: All people get angry. And the difficulty with such a statement is that the vast majority of the cases, the sentence simply isn't accurate. As we know, people, such as monks, who work to control their emotions, simply DON'T get angry. So it is a comment made to convince one of the validity of an argument when the statement itself has no validity.

A blanket statement is a vague and noncommittal statement asserting a premise without providing evidence (such as specific numbers).

Professionally our methods of transmitting and reviewing the results of research are generations old and by now are totally inadequate for their purpose. If the aggregate time spent in writing scholarly works and in reading them could be evaluated, the ratio between these amounts of time might well be startling. Those who conscientiously attempt to keep abreast of current thought, even in restricted fields, by close and continuous reading might well shy away from an examination calculated to show how much of the previous month's efforts could be produced on call. Mendel's concept of the laws of genetics was lost to the world for a generation because his publication did not reach the few who were capable of grasping and extending it; and this sort of catastrophe is undoubtedly being repeated all about us, as truly significant attainments become lost in the mass of the inconsequential.

Specialization, although necessary, has rendered it impossible to stay up to date with the advances of a field.

Guo, L., Boocock, J., Tome, J. M., Chandrasekaran, S., Hilt, E. E., Zhang, Y., Sathe, L., Li, X., Luo, C., Kosuri, S., Shendure, J. A., Arboleda, V. A., Flint, J., Eskin, E., Garner, O. B., Yang, S., Bloom, J. S., Kruglyak, L., & Yin, Y. (2020). Rapid cost-effective viral genome sequencing by V-seq. BioRxiv, 2020.08.15.252510. https://doi.org/10.1101/2020.08.15.252510

Speelman, C., & McGann, M. (2020). Statements about the Pervasiveness of Behaviour Require Data about the Pervasiveness of Behaviour [Preprint]. PsyArXiv. https://doi.org/10.31234/osf.io/bxzm4

Morey, R.A., Haswell, C.C., Stjepanović, D. et al. Neural correlates of conceptual-level fear generalization in posttraumatic stress disorder. Neuropsychopharmacol. (2020). https://doi.org/10.1038/s41386-020-0661-8

Are All Layers Created Equal?

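This Google paper has two simple ideas. The first is to take a trained network and reset the parameters of each layer, one layer at a time, to their initial values from before training, then observe how robust each layer is. The second builds on the first by resampling those initial parameters from some distribution and checking the effect. The rigorous experimental validation is what is most worth learning from in this paper. [not so simple after all]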

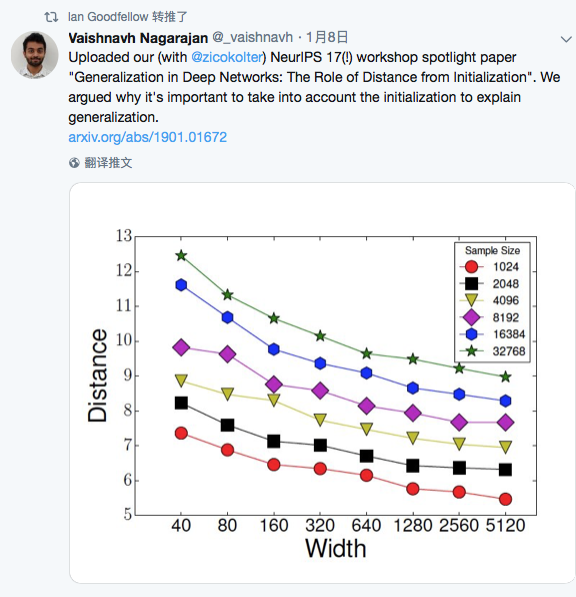

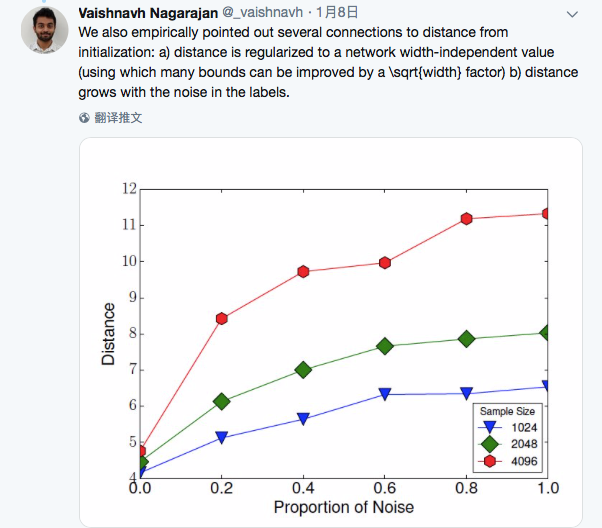
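The per-layer reset experiment described for "Are All Layers Created Equal?" can be sketched roughly as follows. This is a minimal illustration, not the paper's code: the function name `reinit_robustness`, the dict-of-arrays parameter layout, and the toy two-layer linear "network" are all assumptions made for the example.

```python
import numpy as np

def reinit_robustness(trained, initial, evaluate):
    """Reset one layer at a time to its initial weights and record the score drop.

    trained, initial: dicts mapping a layer name to its weight array (hypothetical layout).
    evaluate: callable taking such a dict and returning a score (higher is better).
    """
    baseline = evaluate(trained)
    drops = {}
    for name in trained:
        probe = dict(trained)        # shallow copy, so other layers keep trained weights
        probe[name] = initial[name]  # swap in this layer's pre-training weights only
        drops[name] = baseline - evaluate(probe)
    return baseline, drops

# Toy stand-in for a network: y = w2 @ w1, scored by negative squared error to a target of 6.
def toy_score(params):
    return -float((params["w2"] @ params["w1"] - 6.0).item() ** 2)

toy_trained = {"w1": np.array([[2.0]]), "w2": np.array([[3.0]])}
toy_initial = {"w1": np.array([[1.0]]), "w2": np.array([[3.0]])}
toy_baseline, toy_drops = reinit_robustness(toy_trained, toy_initial, toy_score)
```

In this toy case resetting `w1` hurts the score while resetting `w2` does not, which mirrors the kind of per-layer difference the experiment looks for. The second idea, resampling the initial values from a distribution, would only change what is passed as `initial`.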

Generalization in Deep Networks: The Role of Distance from Initialization

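Goodfellow retweeted this paper.

The authors emphasize how important a model's initialization parameters are for explaining its generalization ability!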

Generalization and Equilibrium in Generative Adversarial Nets (GANs)

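Reposted from author Yi Zhang's answer on Zhihu: https://www.zhihu.com/question/60374968/answer/189371146

Talk my advisor gave at Simons: https://www.youtube.com/watch?v=V7TliSCqOwI

This is probably the first work to seriously study theoretical guarantees of GANs.

The techniques used are fairly simple, but they yield some insightful conclusions: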

Given only training samples and no knowledge of the true data distribution, will the generator's distribution converge to the true data distribution?

The answer is no. Suppose the discriminator has p parameters; then a generator using O(p log p) samples can fool the discriminator, even with infinitely many training data.

This is very counterintuitive, because for ordinary learning machines, getting more data always helps.

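Almost every GAN paper mentions that the GAN training procedure is a two-player game that computes a Nash equilibrium, but no one mentions whether this equilibrium exists.

It is well known that with pure strategies an equilibrium does not always exist. Unfortunately, pure strategies are exactly what the GAN setup uses.

It is therefore natural to extend GANs to mixed strategies, so that an equilibrium always exists.

In practice we can only use finitely supported mixed strategies, that is, train multiple generators and multiple discriminators simultaneously. With this method we obtained a much higher Inception score than DCGAN on CIFAR-10.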
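The claim that a pure-strategy equilibrium need not exist, while a mixed one does, is easy to check on a toy zero-sum game. The sketch below uses matching pennies, which is a textbook example and not the GAN game from the paper; the function names `pure_equilibria` and `is_mixed_equilibrium` are made up for illustration.

```python
import numpy as np

# Row player's payoff in matching pennies; the column player receives the negative (zero-sum).
A = np.array([[1.0, -1.0],
              [-1.0, 1.0]])

def pure_equilibria(A):
    """Return all (row, col) pairs where neither player gains by deviating alone."""
    found = []
    for i in range(A.shape[0]):
        for j in range(A.shape[1]):
            row_ok = A[i, j] >= A[:, j].max()  # row player maximizes A
            col_ok = A[i, j] <= A[i, :].min()  # column player minimizes A
            if row_ok and col_ok:
                found.append((i, j))
    return found

def is_mixed_equilibrium(A, p, q, tol=1e-9):
    """Check that no pure deviation improves on the mixed profile (p, q)."""
    value = p @ A @ q
    row_best = (A @ q).max()  # best the row player can get against q
    col_best = (p @ A).min()  # lowest payoff the column player can force against p
    return bool(row_best <= value + tol and col_best >= value - tol)

uniform = np.array([0.5, 0.5])
no_pure = pure_equilibria(A)                         # empty list for this game
mixed_ok = is_mixed_equilibrium(A, uniform, uniform)  # True for the uniform mix
```

Here every pure profile leaves one player wanting to switch, but the uniform mixture is stable. A finitely supported mixed strategy in the GAN setting plays the analogous role, with a weighted set of generators and discriminators in place of the two coin faces.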

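By analyzing GANs' generalization, we found that the GAN training objective does not encourage diversity, which is why mode collapse is so often observed. Yet so far no paper has rigorously defined diversity or analyzed how severe mode collapse is across models.

This paper does not discuss that point much. We have a follow-up paper that estimates the diversity of various GAN models experimentally; it will go up on arXiv in the next day or two.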

Interpreting Adversarial Robustness: A View from Decision Surface in Input Space

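It is commonly held that the flatter the loss surface around a local minimum in parameter space, the better the generalization. This paper, however, visualizes a kind of decision boundary and argues that signs of adversarial robustness can already be seen in the original input space. (This conclusion probably still needs broad replication; I would not trust it without trying it myself, since it lacks a theoretical basis. [pitiful])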

An analytic theory of generalization dynamics and transfer learning in deep linear networks

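This is a theory paper on generalization error and transfer learning. I have not yet understood the experimental details, but the conclusions are significant: it proposes a new analytic theory and finds that what the network learns first, and what generalization at early stopping depends on, is task structure rather than network size! This also explains why real data is learned faster than random data, and it seems that a better non-GD optimization strategy exists.

There are also transfer experiments involving SNR, which say that one can transfer from high SNR to low SNR...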

Stochastic Gradient Descent Optimizes Over-parameterized Deep ReLU Networks

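This paper has essentially the same premise as several others from the same month (1811.03804/1811.03962/1811.04918) [going crazy]. I imagine the author was falling apart inside while writing it [laugh-cry] and hurried to stress that the assumptions are different.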

An Information-Theoretic View for Deep Learning

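This is a paper on DL theory with a real highlight: within the scope of the expected generalization error, it gives a theoretical upper bound on the number of CNN layers! The myth of "the deeper the better" will eventually have to meet its limits.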

Generalization Error in Deep Learning

A review paper on the generalization ability of deep learning models. Not bad... just very theoretical... and very general...

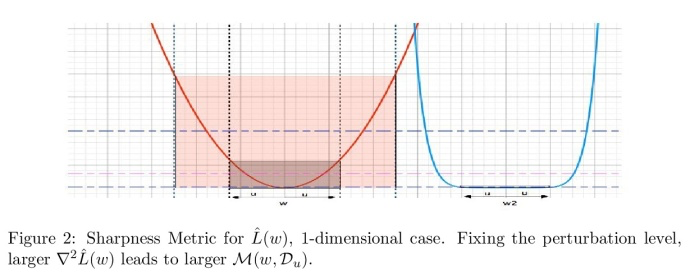

Identifying Generalization Properties in Neural Networks

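According to the authors, they prove that a model's generalization ability is indeed related to the Hessian matrix, and they propose a new metric to quantify generalization. The interesting part is the figure (below), which clearly shows that flatter local minima correspond to better generalization.

Summary comment from NATURE.AI:

This paper discusses the mathematical factors that are attributed to a deep network's generalisation ability. We have written a 2-minute summary that breaks down the key mathematical framework that underlies this paper.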

Learning and Generalization in Overparameterized Neural Networks, Going Beyond Two Layers

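The whole paper is mathematical derivation, aiming to show that overparameterized 2- and 3-layer networks can learn sufficiently well and generalize well, even under assumptions such as simple optimization strategies (SGD-like). (FYI: the paper flows smoothly, is direct, and follows standard conventions; it is a pleasure to read.)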

Approximate Fisher Information Matrix to Characterise the Training of Deep Neural Networks

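An approximate Fisher information matrix characterization of deep neural network training (convergence/generalization performance), which can automatically tune mini-batch size and learning rate.

An interesting paper. It derives new quantities from the Fisher matrix to measure model behavior during training and uses them to optimize mini-batch sizes and learning rates. | The figures in the paper are also very nicely drawn. | The authors argue that the conventional wisdom of gradually increasing batch size is only partially true, and that gradually decreasing it may improve the model's convergence and generalization.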

The most valuable insights are both general and surprising. F = ma for example.

Why is this surprising? It is a definition. A force is what causes an acceleration.

I must speak honestly about the things that I believe -- the things that we, as Americans, believe

I think that this would be Hasty Generalization because he is saying what he believes is what all Americans believe. He is using this to bring together America as one.

And in examining his life and his words, I'm sure we both realize we have more work to do to promote equality in our own countries -- to reduce discrimination based on race in our own countries. And in Cuba, we want our engagement to help lift up the Cubans who are of African descent -- (applause) -- who’ve proven that there’s nothing they cannot achieve when given the chance.

It is ironic that he speaks of eliminating discrimination based on race, but then insinuates that Cubans specifically of African descent have proven to be superior in overcoming adversity. I could be wrong.