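💥 [Shocking] The geopolitical risk to AI infrastructure has, for the first time, moved from "theory" to "actual loss": Iranian drone strikes hit AWS facilities in the UAE and Bahrain, and full recovery is expected to take months. The significance goes beyond AWS's physical losses: the incident ends the naive assumption that "data centers are safe." The SLAs, disaster-recovery strategies, and geographic-distribution decisions of every cloud-native AI product now need to include "armed conflict" in their risk models. This is the AI infrastructure event of 2026 that should least be ignored.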

- Last 7 days

-

arstechnica.com arstechnica.com

- Apr 2026

-

-

What Happens When You Give an AI Agent Your AWS Credentials

- The Bottleneck Problem: While AI can write application code in minutes, the infrastructure required to run it (Terraform/HCL) often creates a manual bottleneck, requiring human review and slow deployment cycles.

- Risks of Direct Access: Giving AI agents full AWS/Terraform access is dangerous because:

- Full API Surface: Agents can inadvertently create public databases, unencrypted buckets, or overly permissive IAM roles.

- Review Burden: As AI output scales, human reviewers cannot keep up with thousands of lines of generated HCL, leading to "misconfiguration creep."

- The Failure of "Policy-as-Code": Tools like OPA or Checkov are "blocklist" approaches. They require humans to anticipate every dangerous configuration, which is difficult given AWS's 1,500+ resource types.

- Infrastructure-from-Code (IfC) Solution: Instead of giving agents access to the cloud API, developers give them a "bounded interface" using typed primitives (e.g., `new SQLDatabase`).

- Benefits of Bounded Interfaces:

- No Credentials Needed: The agent never touches AWS keys; it only declares what the app needs in the application code.

- Automatic Security: The platform handles the "how" (private subnets, encryption, least-privilege IAM) based on pre-set organizational standards.

- Type-Safety: Errors (like a missing database migration) are caught by the compiler during development, not after a failed deployment.

- Architectural Visibility: Because the infrastructure is derived from code, the platform can automatically generate up-to-date architecture maps, making it easier for humans to audit the agent's work.
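The "bounded interface" idea above can be illustrated with a minimal Python sketch. Everything here is hypothetical: `SQLDatabase`, `ORG_DEFAULTS`, and `provision_plan` are illustrative names, not the API of any real Infrastructure-from-Code platform. The point is only that the agent can declare what it needs, while security-relevant settings come from the platform and are not part of the interface.

```python
from dataclasses import dataclass

# Hypothetical organizational standards, owned by the platform team.
# They are not fields of the primitive, so an agent cannot override them.
ORG_DEFAULTS = {
    "storage_encrypted": True,
    "publicly_accessible": False,
    "subnet_type": "private",
}


@dataclass(frozen=True)
class SQLDatabase:
    """Hypothetical typed primitive: the only thing the agent may declare."""
    name: str
    engine: str = "postgres"


def provision_plan(db: SQLDatabase) -> dict:
    """Expand a declaration into a full resource spec using platform defaults."""
    if not db.name.isidentifier():
        raise ValueError(f"invalid database name: {db.name!r}")
    return {"name": db.name, "engine": db.engine, **ORG_DEFAULTS}


# The agent writes only this line; no cloud credentials are involved.
orders_db = SQLDatabase("orders")

plan = provision_plan(orders_db)
print(plan)
```

Because `publicly_accessible` is not a field of `SQLDatabase`, a call such as `SQLDatabase("orders", publicly_accessible=True)` fails with a `TypeError` at construction time instead of producing a public database.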

-

- Mar 2026

-

alexeyondata.substack.com alexeyondata.substack.com

-

How I Dropped Our Production Database and Now Pay 10% More for AWS

- The author accidentally dropped their production database while using an AI agent (Claude Code) to manage AWS infrastructure via Terraform.

- The incident occurred because the author attempted to merge two separate projects into one, ignoring the AI’s advice to keep them separate to save on VPC costs.

- The AI agent generated a Terraform plan that included deleting existing resources to recreate them under the new unified structure.

- The author authorized a `terraform apply` and subsequently a `terraform destroy` without carefully reviewing the plan, mistakenly believing the agent was only cleaning up temporary resources.

- Because the author had not set up external backups and the automated RDS snapshots were deleted along with the instance, all data was initially lost.

- AWS Support was miraculously able to recover a snapshot, though the author now pays 10% more for AWS due to implementing more robust (and expensive) backup and security measures.

- The "lesson learned" highlights the dangers of "vibe engineering"—relying on AI agents to execute destructive commands without human oversight or a deep understanding of the underlying tools.

Hacker News Discussion

- Negligence Over AI Risk: Many commenters argue that the issue wasn't the AI itself, but the author's decision to bypass standard safety procedures, such as reviewing `terraform plan` before execution.

- Critique of "Vibe Engineering": Users criticized the trend of letting LLMs handle infrastructure (IaC) without the human operator understanding the deterministic tools they are using.

- Infrastructure Over-engineering: Several participants pointed out that the project seemed over-engineered with AWS and Terraform when a simple VPS or SQLite database might have sufficed and been easier to manage.

- AWS Data Recovery: Former AWS employees expressed surprise that support could recover the data, noting that AWS typically treats a user-initiated deletion as a final security command to wipe the data.

- The Importance of Staging: A recurring theme was that major migrations should be tested in a staging environment first; running unverified AI-generated scripts directly against production was labeled as "insanity."
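The plan review that commenters asked for can be partly mechanized. The sketch below is not from the article; it assumes a plan saved with `terraform plan -out=tfplan` and rendered with `terraform show -json tfplan`, whose JSON lists each resource under `resource_changes` with a `change.actions` array. The sample plan and its resource addresses are made up for illustration.

```python
import json


def destructive_changes(plan: dict) -> list[str]:
    """Return 'address: actions' for every resource the plan would delete or replace."""
    flagged = []
    for rc in plan.get("resource_changes", []):
        actions = rc.get("change", {}).get("actions", [])
        # A replacement shows up as ["delete", "create"] or ["create", "delete"].
        if "delete" in actions:
            flagged.append(f"{rc['address']}: {'/'.join(actions)}")
    return flagged


# Hypothetical excerpt of `terraform show -json tfplan` output.
sample_plan = json.loads("""
{
  "resource_changes": [
    {"address": "aws_vpc.main", "change": {"actions": ["no-op"]}},
    {"address": "aws_db_instance.prod", "change": {"actions": ["delete", "create"]}},
    {"address": "aws_s3_bucket.scratch", "change": {"actions": ["delete"]}}
  ]
}
""")

flagged = destructive_changes(sample_plan)
for line in flagged:
    print("DESTRUCTIVE:", line)
```

A check like this does not replace reading the plan, but it could act as a gate that refuses to run `terraform apply` until a human has acknowledged each flagged resource.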

-

- Jan 2026

-

nesbitt.io nesbitt.io

-

Europe shouldn’t try to build its own AWS. Instead, governments should use procurement power to enforce interoperability standards.

(Recap of David Eaves's argument:) The answer lies not in replicating similar types of organisations (like AWS) but in interoperability. I tend to agree. The problem with hyperscalers is the hyper and the scale. We don't do that for internet infrastructure either. Cf. [[The Path to a Sovereign Tech Stack is Via a Commodified Tech Stack]]

-

S3 API became a de facto standard

Cf. [[S3 API How Amazon’s Storage Protocol Became the Industry Standard]]

-

-

-

On the s3 API, simple storage service. A proprietary protocol by Amazon, that is now mostly the standard for cloud storage services, used by other vendors

-

-

www.computerworld.com www.computerworld.com

-

AWS European cloud service launch raises questions over sovereignty. not really though. It's quite obvious what kind of attempt this is. There are plenty that now feel nervous but will opt for anything that lets them say with plausible deniability that they did something without going through the actual work of a full transition

-

While there are questions around the implications of the ownership structure, AWS’s European Sovereign Cloud still provides a greater level of sovereignty compared to its standard public cloud service in terms of enabling regulatory compliance, according to analysts. This could appeal to European organizations, they said, depending on their sovereignty requirements.

for companies perhaps, not for public sector in any way though. precisely bc the core problem is not 'regulatory compliance' (and doesn't that imply their current public cloud is a bit shit then?)

-

-

www.nrc.nl www.nrc.nl

-

Good on NRC to point out that Amazon is not answering the key question, and the CEO saying things like "I'm not the person to best answer your question" is rich too.

-

-

-

Amazon sovereignty washing. It is all irrelevant bc Amazon as owner is US-ian

-

-

www.dnsbelgium.be www.dnsbelgium.be

-

We are aware that AWS, as the largest player, cannot be exactly matched, but we are confident that we do not have to compromise on security and availability with a European counterpart.

this is the sticking point, and true. You cannot expect a plug and play replacement. Analyse the components, make a new necklace of the different pieces.

-

That is not currently planned. We primarily want our services within Europe to run on European infrastructure with a view to digital independence, greater control and as little vendor lock-in as possible.

not moving off US tech infra completely. Yet, European services on European infra is the goal. The nameserver infra is global, they say, so is that the carve-out, or are there other US vendors with EU datacenters in play?

-

The most crucial services run redundantly, both within AWS and in a Belgian data centre. In the event of a serious incident at AWS, zone signing, zone distribution and the database can be deployed immediately.

They have a redundant set-up. Meaning they could leave anytime, just need a new redundancy plan then. Makes it more realistic they will move next year.

-

Everything related to registering and managing the .be, .vlaanderen and .brussels domains runs within AWS, except for the name servers. The latter are the “GPS” servers that ensure that when you type in a domain name, you are sent to the correct IP address. These are spread across the globe.

Client facing interfaces run on AWS, registration, management of domains etc. Name servers are a global network. Makes it quite a bit less dramatic (both the before and after)

-

It is important that the nameserver infrastructure, which has never run on AWS, is not interrupted

the nameserver infra never ran on AWS.

-

Peter Vergote, legal advisor at DNS Belgium: "Our data and processes at AWS are located on European soil, spread across multiple data centres. This is perfectly in line with European legislation such as GDPR and NIS2 .

That is technically true, but not in practice. The 2018 Cloud Act makes all EU reg moot points from the US perspective. That makes any American involvement in your stack by def a breach of NIS2, GDPR and other digital and data related regs.

-

The migration away from AWS is still in its early stages. The market is currently being surveyed. The transition will begin in 2027 and is expected to be completed in the second half of 2027.

The decision is made, but transition still in early planning phases. To be completed 2027. Market survey ongoing, meaning there's no new contractor in sight as yet.

-

At the same time, DNS Belgium wants to inspire other organisations in Belgium and Europe with its AWS exit. Technological dependence on, or possible access and influence by non-European players, is a growing concern among Belgian companies.

DNS Belgium wants to set an example. It is also responding to concerns of their customers.

-

Since 2017, the system that processes the registrations of .be domain names has been located in AWS's European data centres.

DNS Belgium is on AWS since 2017, in EU data centers but that means nothing, definitely not since 2018

-

- Dec 2025

-

pierce.dev pierce.dev

-

I'm not advocating that everyone should self-host everything. But the pendulum has swung too far toward managed services. There's a large sweet spot where self-hosting makes perfect sense, and more teams should seriously consider it.

Start small. If you're paying more than $200/month for RDS, spin up a test server and migrate a non-critical database. You might be surprised by how straightforward it is.

The future of infrastructure is almost certainly more hybrid than it's been recently: managed services where they add genuine value, self-hosted where they're just expensive abstractions. Postgres often falls into the latter category.

Footnotes

1) They're either just hosting a vanilla postgres instance that's tied to the deployed hardware config, or doing something opaque with edge deploys and sharding. In the latter case they near guarantee your DB will stay highly available but costs can quickly spiral out of control.

2) Maybe up to billions at this point.

3) Even on otherwise absolutely snail speed hardware.

4) This was Jeff Bezos's favorite phrase during the early AWS days, and it stuck.

5) Similar options include OVH, Hetzner dedicated instances, or even bare metal from providers like Equinix.

6) AWS RDS & S3 has had several major outages over the years. The most memorable was the 2017 US-East-1 outage that took down half the internet.

Cloud hosting can become an expensive abstraction layer quickly. I also think there's an entire generation of coders / engineers who treat silo'd cloudhosting as a given, without considering other options and their benefits. Large window for selfhosting in which postgres almost always falls

-

When self-hosting doesn't make sense

I'd argue self-hosting is the right choice for basically everyone, with the few exceptions at both ends of the extreme:

- If you're just starting out in software & want to get something working quickly with vibe coding, it's easier to treat Postgres as just another remote API that you can call from your single deployed app

- If you're a really big company and are reaching the scale where you need trained database engineers to just work on your stack, you might get economies of scale by just outsourcing that work to a cloud company that has guaranteed talent in that area. The second full freight salaries come into play, outsourcing looks a bit cheaper.

- Regulated workloads (PCI-DSS, FedRAMP, HIPAA, etc.) sometimes require a managed platform with signed BAAs or explicit compliance attestations.

Sees use for silo'd postgres hosting at the extremes of the spectrum: when you start without knowledge and are vibecoding, so you can treat the database as just another API; and when you are a megacorp (outsourcing quickly looks cheaper if you would otherwise have to pay multiple FTE salaries) and/or have to prove regulatory compliance.

-

I helped prove this to myself when I migrated off RDS. I took a pg_dump of my RDS instance, restored it to a self-hosted server with identical specs, and ran my application's test suite. Performance was identical. In some cases, it was actually better because I could tune parameters that RDS locks down.

This reads like what I did wrt Gmail 2014 and Amazon ebooks 2025. [[Leaving a walled garden or silo 20160820203833]] is listing the bits it does and originally improved for you, then rebuild outside the silo based on same components. Not difficult but needs bit of focus.

-

But the actual database engine? It's the same Postgres running the same SQL queries with the same performance characteristics.

Underneath the wrappers it is all the same.

-

The value proposition is operational: they handle the monitoring, alerting, backup verification, and incident response. It's also a production ready configuration at minute zero of your first deployment.

The value proposition is twofold: 1. operational handling (monitoring, backups all that) 2. production ready config out of the box (which feels like fast progress, but also locks you in ofc)

-

For the most part managed database services aren't running some magical proprietary technology. They're just running the same open-source Postgres you can download with some operational tooling wrapped around it. Take AWS RDS. Under the hood, it's:

- Standard Postgres compiled with some AWS-specific monitoring hooks

- A custom backup system using EBS snapshots

- Automated configuration management via Chef/Puppet/Ansible

- Load balancers and connection pooling (PgBouncer)

- Monitoring integration with CloudWatch

- Automated failover scripting

AWS RDS is not much more than open-source Postgres with operational tooling that is not itself complex.

-

Fast forward to 2025 and I hope the pendulum might be swinging back. RDS pricing has grown considerably more aggressive. A db.r6g.xlarge instance (4 vCPUs, 32GB RAM) now costs $328/month before you add storage, backups, or multi-AZ deployment. For that price, you could rent a dedicated server with 32 cores and 256GB of RAM.

Amazon database servers have become much more expensive since 2015. You could run a dedicated full server for similar money.

-

The real shift happened around 2015 when cloud adoption accelerated. Companies started to view any infrastructure management as "undifferentiated heavy lifting" [4]. Running your own database became associated with legacy thinking. A new orthodoxy emerged of focusing on your application logic and letting AWS handle the infrastructure.

This post places the shift to hyperscaler dependency in 2015, when (I presume: software) companies began to view any involvement in digital infrastructure management as hassle.

-

-

doc.anytype.io doc.anytype.io

-

Our backup nodes are located in Switzerland, and we use AWS (Amazon Web Services).

Anytype uses Swiss data centers for backup nodes, and uses AWS to get stuff there. Everything is encrypted so in that sense not problematic, but still it means Amazon holds the off-switch.

-

- Nov 2025

-

www.youtube.com www.youtube.com

-

AWS is 10x slower than a dedicated server for the same price

- Video Title: AWS is 10x slower than a dedicated server for the same price

- Core Argument: Cloud providers, particularly AWS, charge significantly more for base-level compute instances than traditional Virtual Private Server (VPS) providers while delivering substantially less performance. The video argues that horizontal scaling is often unnecessary for 95% of businesses.

- Comparison Setup: The video compared an entry-level AWS instance (EC2 and ECS Fargate) with a similarly specced VPS (1 vCPU, 2 GB RAM) from a popular German provider (Hetzner, referred to as HTNA in the video) using the Sysbench tool.

- AWS EC2 Results: The base EC2 instance cost almost 3 times more than the VPS but delivered poor performance:

- CPU: Approximately 20% of the VPS performance.

- Memory: Only 7.74% of the VPS performance.

- AWS ECS Fargate Results: Using the "serverless" Fargate option, setup was complex and involved many AWS services (ECS, ECR, IAM).

- Cost: The instance was 6 times more expensive than the VPS.

- Performance: Performance improved over EC2 but was still slower and less consistent: 23% (CPU), 80% (Memory), and 84% (File I/O) of the VPS's performance, with fluctuations up to 18%.

- Cost Efficiency: A dedicated VPS server with 4vCPU and 16 GB of RAM was found to be cheaper than the 1 vCPU ECS Fargate task used in the test.

- Conclusion: For a similar price point, a dedicated server is about 10 times faster than an equivalent AWS cloud instance. The video concludes that AWS's dominance is due to its large marketing spend, not superior technical or cost efficiency. A real-world example cited is Lichess, which supports 5.2 million chess games per day on a single dedicated server [00:12:06].

Hacker News Discussion

The discussion was split between criticizing the video's methodology and debating the fundamental value proposition of hyperscale cloud providers versus traditional hosting.

- Criticism of Methodology: Several top comments argued the video was a "low effort 'ha ha AWS sucks' video" with an "AWFUL analysis." Critics suggested the author did not properly configure or understand ECS/Fargate and that comparing the lowest-end shared instances isn't a "proper comparison," which should involve mid-range hardware and careful configuration.

- The Value of AWS Services: Many users defended AWS by stating that customers rarely choose it just for the base EC2 instance price. The true value lies in the managed ecosystem of services like RDS, S3, EKS, ELB, and Cognito, which abstract away operational complexity and allow large customers to negotiate off-list pricing.

- Complexity and Cost Rebuttals: Counter-arguments highlighted that managing AWS complexity often requires hiring expensive "cloud wizards" (Solutions Architects or specialized DevOps staff), shifting the high cost of a SysAdmin team to high cloud management costs. Anecdotes about sudden huge AWS bills and complex debugging were common.

- The "Nobody Gets Fired" Factor: The most common justification for choosing AWS, even at a higher cost, is risk aversion and the avoidance of personal liability. If a core AWS region (like US-East-1) goes down, it's a shared industry failure, but if a self-hosted server fails, the admin is solely responsible for fixing it at 3 a.m.

- Alternative Recommendations: The discussion frequently validated the use of non-hyperscale providers like Hetzner and OVH for significant cost savings and comparable reliability for many non-"cloud native" workloads.

-

-

-

How when AWS was down, we were not

Brief summary

Authress avoided downtime during the AWS us-east-1 outage by implementing a multi-region, redundant infrastructure with automated DNS failover using custom health checks, edge-optimized routing, and robust anomaly detection, backed by rigorous testing and incremental deployments to minimize risk and impact. Their system design assumes failure is inevitable and focuses on quick detection, seamless failover, and minimizing single points of failure through automation and continuous validation.

Long summary

- Authress experienced a major AWS us-east-1 outage affecting DynamoDB and other critical AWS services.

- They run infrastructure in us-east-1 due to customer location demands, despite known risks.

- AWS services like CloudFront, Certificate Manager, Lambda@Edge, and IAM control planes are centralized in us-east-1, impacting availability during incidents.

- Aiming for a 5-nines SLA (99.999% uptime) requires more than relying on AWS SLAs alone, which are insufficient.

- Simple single-region architectures fail to meet high reliability due to frequent AWS incidents.

- Authress recognizes "everything fails all the time" and designs systems assuming failure.

- Retry strategies are mathematically analyzed; third-party components must have at least 99.7% reliability to be usable.

- Multi-region redundant infrastructure with DNS failover via AWS Route 53 health checks enables automatic failover.

- Custom health checks validate actual service health beyond default DNS checks.

- Edge-optimized architecture using CloudFront and Lambda@Edge improves latency and provides better failover options.

- DynamoDB Global Tables replicate data across regions to support failover.

- Rigorous testing and validation, including application-level tests, mitigate risks of bugs in production.

- Incremental deployment (customer buckets) limits impact by rolling out changes gradually.

- Asynchronous validation tests check consistency across databases after deployments.

- Anomaly detection is used to identify meaningful incidents impacting business logic, beyond mere HTTP error codes.

- Customer support feedback is integrated into incident detection to catch undetected or gray failures.

- Security measures include rate limiting, AWS WAF with IP reputation lists, and blocking suspicious high-volume requests.

- Resource exhaustion prevention is critical, with rate limiting implemented at multiple infrastructure layers.

- Differences in Infrastructure as Code (IaC) deployments across regions and the edge make consistency challenging.

- Despite all these measures, achieving a true 5-nines SLA is extremely challenging but remains a core commitment.
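The 99.7% figure in the retry bullet can be sanity-checked with a short calculation. This is a sketch under a strong assumption that the article's own derivation may not share: attempts fail independently, so the chance that all `n` attempts fail is `(1 - p) ** n`. Under that assumption, a dependency that succeeds 99.7% of the time reaches five nines with a single retry.

```python
def success_probability(p: float, attempts: int) -> float:
    """Probability that at least one of `attempts` independent tries succeeds."""
    return 1.0 - (1.0 - p) ** attempts


def min_reliability(target: float, attempts: int) -> float:
    """Smallest per-attempt success rate that reaches `target` within `attempts` tries."""
    return 1.0 - (1.0 - target) ** (1.0 / attempts)


FIVE_NINES = 0.99999

for attempts in (1, 2, 3):
    reached = success_probability(0.997, attempts)
    print(f"p=0.997, attempts={attempts}: {reached:.7f} "
          f"({'meets' if reached >= FIVE_NINES else 'misses'} five nines)")

print(f"break-even reliability for 2 attempts: {min_reliability(FIVE_NINES, 2):.5f}")
```

The independence assumption is the weak point: during a regional outage failures are correlated, so real-world gains from retries will be smaller than this calculation suggests.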

Summary of HN discussion

https://news.ycombinator.com/item?id=45955565

- The discussion highlights concerns about automation and Infrastructure as Code (IaC) being potential failure points, emphasizing the challenge of safely updating these systems.

- Rollbacks are rarely automatic; often, knowing in advance to avoid certain rollouts is preferable as automated rollbacks can worsen failures.

- Simple, less complex infrastructure changes are preferred to reduce human error, which is the leading cause of incidents.

- There is skepticism about the reliability of Route 53 failover in practice, with concerns about its failure modes and the complexity of multi-cloud DNS failover.

- Some contributors suggest modular IaC approaches (Pulumi, Terragrunt) for safer, repeatable deployments but warn about added complexity.

- Retry logic in failures is criticized; retries may not improve reliability linearly due to correlated failures and overall system overload during outages.

- Latency and client timeout constraints limit the practical number of retries possible.

- DNS is acknowledged as a single point of failure with caching and failover timing challenges.

- Multi-cloud failover at DNS level is complex, costly, and not widely implemented due to infrastructure and coordination requirements.

- Gray failures (where the system reports healthy but customers experience issues) and the difficulty in knowing real incident impact without customer feedback are noted.

- Customer support is critical in incident detection since automated systems cannot catch every failure.

- Detailed monitoring via CloudFront and telemetry helps identify actual service issues during outages.

- Overall, the theme is the difficulty in achieving perfect reliability, the importance of simplicity, and the need for layered detection and response strategies to manage failures.

-

-

www.linkedin.com www.linkedin.com

-

How we slashed our EKS costs by 43% with one simple scheduler tweak 🚀

- AWS EKS costs can escalate due to massive, parallel workloads in life sciences/drug development (e.g., genomic sequencing, molecular modeling).

- Default Kubernetes scheduler uses `leastAllocated` strategy, spreading pods across many nodes for fairness/high availability.

- `leastAllocated` strategy causes many partially utilized nodes, preventing autoscalers from scaling down idle nodes, increasing costs.

- `mostAllocated` scheduling strategy "packs" pods onto fewer nodes, maximizing utilization and enabling autoscalers like Karpenter to remove idle nodes.

- Switching to `mostAllocated` can reduce runtime costs significantly (e.g., ~10% in UAT, 43% in PROD environments).

- Custom scheduler deployment on AWS EKS requires creating a service account, `ClusterRoleBindings`, `RoleBinding`, a `ConfigMap` with the `mostAllocated` scoring strategy, and a deployment with a matching Kubernetes version container image.

- Resource weights can prioritize packing of expensive resources (e.g., high weight on GPUs for ML workloads).

- Testing in non-production environments is recommended before full rollout.

- Implementing `mostAllocated` scheduling can dramatically optimize costs by enabling cluster autoscalers to shut down unused nodes.
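To see why packing frees nodes, here is a toy simulation of the two strategies. It is a deliberate simplification and not the kube-scheduler's actual scoring code: it considers CPU only, ignores weights and other plugins, and the node and pod sizes are made up.

```python
def schedule(pod_cpus, node_capacity, node_count, strategy):
    """Place pods one by one and return the CPU allocated on each node."""
    used = [0.0] * node_count
    for cpu in pod_cpus:
        # Only nodes with enough free CPU are candidates.
        candidates = [i for i in range(node_count) if used[i] + cpu <= node_capacity]
        if not candidates:
            raise RuntimeError("no node can fit this pod")
        if strategy == "mostAllocated":
            chosen = max(candidates, key=lambda i: used[i])  # fullest node that fits
        else:  # "leastAllocated"
            chosen = min(candidates, key=lambda i: used[i])  # emptiest node
        used[chosen] += cpu
    return used


def nodes_in_use(used):
    """Count nodes that host at least one pod."""
    return sum(1 for u in used if u > 0)


pods = [1.0, 1.0, 1.0, 1.0]  # four pods requesting 1 CPU each
spread = schedule(pods, node_capacity=4.0, node_count=4, strategy="leastAllocated")
packed = schedule(pods, node_capacity=4.0, node_count=4, strategy="mostAllocated")

print("leastAllocated:", spread, "nodes in use:", nodes_in_use(spread))
print("mostAllocated: ", packed, "nodes in use:", nodes_in_use(packed))
```

In this toy case spreading keeps all four nodes busy at 25% each, while packing fills one node and leaves three empty, which is the situation in which an autoscaler can remove nodes.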

-

-

tim.siosm.fr tim.siosm.fr

-

Description of moving away from Spotify to Qobuz. Spotify is Swedish but in the spotlight for hosting ICE ads in the USA at the moment. Qobuz is French, but hosts their stuff on AWS.

-

-

www.qobuz.com www.qobuz.com

-

Qobuz is a possible Spotify alternative. Qobuz is a French company (Xandrie s.a.), service started in 2008. They host their stuff on Amazon AWS though.

-

- Oct 2025

-

docs.aws.amazon.com docs.aws.amazon.com

-

You can access Amazon S3 from your VPC using gateway VPC endpoints. After you create the gateway endpoint, you can add it as a target in your route table for traffic destined from your VPC to Amazon S3.

Access AWS S3 from your VPC using Gateway Endpoints, not a bucket policy.
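A minimal sketch of creating such a gateway endpoint with boto3's `create_vpc_endpoint`. The VPC ID, route table ID, and region below are placeholders, and the helper names are my own. `boto3` is imported lazily so the parameter builder can be inspected without AWS credentials; the actual call needs credentials and the relevant EC2 permissions.

```python
def gateway_endpoint_params(vpc_id, region, route_table_ids):
    """Build the arguments for an S3 gateway endpoint in the given VPC."""
    return {
        "VpcEndpointType": "Gateway",
        "VpcId": vpc_id,
        "ServiceName": f"com.amazonaws.{region}.s3",
        # Route tables that should receive a route to S3 via the endpoint.
        "RouteTableIds": list(route_table_ids),
    }


def create_s3_gateway_endpoint(vpc_id, region, route_table_ids):
    """Create the endpoint and return its ID (requires AWS credentials)."""
    import boto3  # lazy import: only needed when actually calling AWS

    ec2 = boto3.client("ec2", region_name=region)
    response = ec2.create_vpc_endpoint(
        **gateway_endpoint_params(vpc_id, region, route_table_ids)
    )
    return response["VpcEndpoint"]["VpcEndpointId"]


# Placeholder identifiers for illustration only.
params = gateway_endpoint_params(
    "vpc-0123456789abcdef0", "eu-west-1", ["rtb-0123456789abcdef0"]
)
print(params)
```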

-

- Jul 2025

-

aws.amazon.com aws.amazon.com

-

Automating complex document processing: How Onity Group built an intelligent solution using Amazon Bedrock

-

- Mar 2025

-

repost.aws repost.aws

-

The main difference between the Amazon EKS-optimized AMI (amazon-eks-node-1.29) and the Bottlerocket AMI (bottlerocket-aws-k8s-1.29) lies in their purpose

See the summary below this highlight

-

-

aws.amazon.com aws.amazon.com

-

Reduce container startup time on Amazon EKS with Bottlerocket data volume

-

Introduction

- Containers are widely used for scalable applications but face challenges with startup times for large images (e.g., AI/ML workloads).

- Pulling large images from Amazon Elastic Container Registry (ECR) can take several minutes, impacting performance.

- Bottlerocket, an AWS open-source Linux OS optimized for containers, offers a solution to reduce container startup time.

-

Solution Overview

- Bottlerocket's data volume feature allows prefetching container images locally, eliminating the need for downloading during startup.

- Prefetching is achieved by creating an Amazon Elastic Block Store (EBS) snapshot of Bottlerocket's data volume and mapping it to new Amazon EKS nodes.

- Steps to implement:

- Spin up an Amazon EC2 instance with Bottlerocket AMI.

- Pull application images from the repository.

- Create an EBS snapshot of the data volume.

- Map the snapshot to Amazon EKS node groups.

-

Benefits of Bottlerocket

- It separates OS and container data volumes, ensuring consistency and security during updates.

- Prefetched images significantly reduce startup times for large containers.

-

Implementation Walkthrough

- Step 1: Build EBS Snapshot

- Automate snapshot creation using a script.

- Prefetch images like Jupyter-PyTorch and Kubernetes pause containers.

- Export the snapshot ID for use in node group configuration.

- Step 2: Setup Amazon EKS Cluster

- Create two node groups:

- `no-prefetch-mng`: Without prefetched images.

- `prefetch-mng`: With prefetched images mapped via EBS snapshot.

- Step 3: Deploy Pods

- Test deployment on both node groups.

- Prefetched nodes start pods in just 3 seconds, compared to 49 seconds without prefetching.

-

Results

- Prefetching reduced container startup time from 49 seconds to 3 seconds, improving efficiency and user experience.

-

Further Enhancements

- Use Karpenter for automated scaling with Bottlerocket nodes.

- Automate snapshot creation in CI pipelines using GitHub Actions.

-

Cleaning Up

- Delete AWS resources (EKS cluster, Cloud9 environment, EBS snapshots) to avoid charges after testing.

-

Conclusion

- Bottlerocket's data volume prefetching dramatically enhances container startup performance for large workloads on Amazon EKS.

-

-

- Nov 2024

-

python.plainenglish.io python.plainenglish.io

-

Deploying Machine Learning Models with Flask and AWS Lambda: A Complete Guide

In essence, this article is about:

1) Training a sample model and uploading it to an S3 bucket:

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.linear_model import LogisticRegression
import joblib

# Load the Iris dataset
iris = load_iris()
X, y = iris.data, iris.target

# Split the data into training and testing sets
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# Train the logistic regression model
model = LogisticRegression(max_iter=200)
model.fit(X_train, y_train)

# Save the trained model to a file
joblib.dump(model, 'model.pkl')
```

- Creating a sample Zappa config. Because AWS Lambda doesn’t natively support Flask, we need to use Zappa, a tool that helps deploy WSGI applications (like Flask) to AWS Lambda:

```json
{
  "dev": {
    "app_function": "app.app",
    "exclude": ["boto3", "dateutil", "botocore", "s3transfer", "concurrent"],
    "profile_name": null,
    "project_name": "flask-test-app",
    "runtime": "python3.10",
    "s3_bucket": "zappa-31096o41b"
  },
  "production": {
    "app_function": "app.app",
    "exclude": ["boto3", "dateutil", "botocore", "s3transfer", "concurrent"],
    "profile_name": null,
    "project_name": "flask-test-app",
    "runtime": "python3.10",
    "s3_bucket": "zappa-31096o41b"
  }
}
```

- Writing a sample Flask app:

```python
import boto3
import joblib
import numpy as np
from flask import Flask, jsonify, request

# Initialize the Flask app
app = Flask(__name__)

# S3 client to download the model
s3 = boto3.client('s3')

# Download the model from S3 when the app starts
s3.download_file('your-s3-bucket-name', 'model.pkl', '/tmp/model.pkl')
model = joblib.load('/tmp/model.pkl')


@app.route('/predict', methods=['POST'])
def predict():
    # Get the data from the POST request
    data = request.get_json(force=True)

    # Convert the data into a numpy array
    input_data = np.array(data['input']).reshape(1, -1)

    # Make a prediction using the model
    prediction = model.predict(input_data)

    # Return the prediction as a JSON response
    return jsonify({'prediction': int(prediction[0])})


if __name__ == '__main__':
    app.run(debug=True)
```

- Deploying this app to production (to AWS) with `zappa deploy production`, and later updating it with `zappa update production`.

- We should get a URL like `https://xyz123.execute-api.us-east-1.amazonaws.com/production`, which we can query:

`curl -X POST -H "Content-Type: application/json" -d '{"input": [5.1, 3.5, 1.4, 0.2]}' https://xyz123.execute-api.us-east-1.amazonaws.com/production/predict`

-

- Aug 2024

-

brooker.co.za brooker.co.za

-

Aurora Serverless packs a number of database instances onto a single physical machine (each isolated inside its own virtual machine using AWS’s Nitro Hypervisor). As these databases shrink and grow, resources like CPU and memory are reclaimed from shrinking workloads, pooled in the hypervisor, and given to growing workloads

Oh, wow, so the workload themselves are dynamically scaling up and down "vertically" as opposed to "horizontally" - I think this is a bit like dynamically changing the size of Docker containers that are running the databases while they're running

-

-

bitsand.cloud bitsand.cloud

-

Slashing Data Transfer Costs in AWS by 99%

The essence of cutting AWS data transfer costs by 99% is to use Amazon S3 as an intermediary for data transfers between EC2 instances in different Availability Zones (AZs). Instead of direct transfers, which incur significant costs, you upload the data to S3 (free upload), and then download it within the same region (free download). By keeping the data in S3 only temporarily, you minimize storage costs, drastically reducing overall transfer expenses.
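A rough cost comparison shows where the saving comes from. This is only a sketch: the per-GB prices below are assumptions (not taken from the annotated article), S3 request charges are ignored, and the function names are made up for illustration.

```python
# Assumed prices, for illustration only; check current AWS pricing.
CROSS_AZ_PER_GB = 0.02            # assumed: $0.01/GB out plus $0.01/GB in
S3_STORAGE_PER_GB_MONTH = 0.023   # assumed S3 Standard storage price
HOURS_PER_MONTH = 730


def direct_cost(gb: float) -> float:
    """Cost of sending data directly between instances in different AZs."""
    return gb * CROSS_AZ_PER_GB


def via_s3_cost(gb: float, hours_stored: float) -> float:
    """Cost of parking the data in S3 for a short time (storage only)."""
    return gb * S3_STORAGE_PER_GB_MONTH * hours_stored / HOURS_PER_MONTH


def saving_fraction(gb: float, hours_stored: float) -> float:
    """Fraction of the direct-transfer cost avoided by going through S3."""
    return 1 - via_s3_cost(gb, hours_stored) / direct_cost(gb)
```

Under these assumed prices, the shorter the object lives in S3, the closer the saving gets to 100%, which is why deleting the intermediate object promptly matters.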

-

- Jul 2024

-

-

This is a good policy, as unbundled REC purchases have a bad reputation when they are used across regions and countries as a cheap substitute for actual investments. But here they are being purchased to match investments in new generating capacity.

This is an interesting framing. I haven't seen it presented like this before. Is there a way to "re-bundle" them?

-

- Jun 2024

-

spacelift.io spacelift.io

-

Neither of the methods shown above are ideal in environments where you require several clusters or need them to be provisioned in a consistent way by multiple people.

In this case, IaC is favored over using EKS directly or manually deploying on EC2

-

Running a cluster directly on EC2 also gives you the choice of using any available Kubernetes distribution, such as Minikube, K3s, or standard Kubernetes as deployed by Kubeadm.

-

EKS is popular because it’s so simple to configure and maintain. You don’t need to understand the details of how Kubernetes works or how Nodes are joined to your cluster and secured. The EKS service automates cluster management procedures, leaving you free to focus on your workloads. This simplicity can come at a cost, though: you could find EKS becomes in-flexible as you grow, and it might be challenging to migrate from if you switch to a different cloud provider.

Why use EKS

-

The EKS managed Kubernetes engine isn’t included in the free tier. You’ll always be billed $0.10 per hour for each cluster you create, in addition to the EC2 or Fargate costs associated with your Nodes. The basic EKS charge only covers the cost of running your managed control plane. Even if you don’t use EKS, you’ll still need to pay to run Kubernetes on AWS. The free tier gives you access to EC2 for 750 hours per month on a 12-month trial, but this is restricted to the t2.micro and t3.micro instance types. These only offer 1 GiB of RAM so they’re too small to run most Kubernetes distributions.

Cost of EKS

-

Some of the other benefits of Kubernetes on AWS include

Benefits of using Kubernetes on AWS:

- scalability

- cost efficiency

- high availability

-

- Apr 2024

-

-

Lesson 3: When executing a lot of requests to S3, make sure to explicitly specify the AWS region.

-

Lesson 2: Adding a random suffix to your bucket names can enhance security.

-

Lesson 1: Anyone who knows the name of any of your S3 buckets can ramp up your AWS bill as they like.

The author was charged over $1300 after two days of using an S3 bucket, because an open-source tool shipped with a default bucket name in its config that happened to match his bucket name.

Luckily, after everything AWS made an exception and he did not have to pay the bill.
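Lesson 2 can be sketched in a few lines. This is a minimal example, not the author's code: the helper name and the prefix are hypothetical, and the only S3 rule it checks is the 3 to 63 character length limit on bucket names.

```python
import secrets


def bucket_name_with_suffix(prefix: str, suffix_bytes: int = 8) -> str:
    """Return `prefix` plus a random hex suffix, so the name is hard to guess."""
    suffix = secrets.token_hex(suffix_bytes)  # two hex characters per byte
    name = f"{prefix}-{suffix}".lower()
    # S3 bucket names must be between 3 and 63 characters long.
    if not 3 <= len(name) <= 63:
        raise ValueError(f"bucket name has invalid length: {len(name)}")
    return name
```

A random suffix does not make a bucket private; it only makes it unlikely that a stranger (or a tool's default config) ends up pointing at your bucket name.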

-

-

-

To address the issues of CAS, Karpenter uses a different approach. Karpenter directly interacts with the EC2 Fleet API to manage EC2 instances, bypassing the need for autoscaling groups.

Karpenter

-

The problem occurs when you want to move the pod to another node, in cases such as cluster rebalancing, spot interruptions, and other events. This is because the EBS volumes are zonal bound and can only be attached to EC2 instances within the zone they were originally provisioned in. This is a key limitation that CAS is not able to take into account when provisioning a new node.

Key limitation of CAS

-

Since Karpenter can schedule nodes quicker, it will most often win this race and provide a new node for the pending workload. CAS will still attempt to create a new node, however will be slower and will most likely have to remove the node after some time, due to emptiness. This brings unnecessary costs to your cloud bill

-

It’s worth mentioning that Cluster Autoscaler and Karpenter can co-exist within the same cluster.

-

- Feb 2024

-

docs.aws.amazon.com docs.aws.amazon.com

-

At a minimum, each ADR should define the context of the decision, the decision itself, and the consequences of the decision for the project and its deliverables

ADR sections from the example:

* Title
* Status
* Date
* Context
* Decision
* Consequences
* Compliance
* Notes

-

- Jan 2024

-

-

LocalStack is a cloud service emulator that runs AWS services solely on your laptop without connecting to a remote cloud provider.

https://www.localstack.cloud/

-

-

aws.amazon.com aws.amazon.com

-

DynamoDB Streams best practices

-

- Nov 2023

-

aws.amazon.com aws.amazon.com

-

You can now run Amazon EKS clusters on a Kubernetes version for up to 26 months from the time the version is generally available on Amazon EKS.

-

-

boavizta.org boavizta.org

-

It should be noted that in France, regulations do not allow this market-based approach when reporting company level CO2e emissions : “The assessment of the impact of electricity consumption in the GHG emissions report is carried out on the basis of the average emission factor of the electrical network (…) The use of any other factor is prohibited. There is therefore no discrimination by [electricity] supplier to be established when collecting the data.” (Regulatory method V5-BEGES decree).

Companies are barred from using market based approaches for reporting?

How does it work for Amazon then?

-

- Sep 2023

-

start.jcolemorrison.com start.jcolemorrison.com

-

VPC Subnets belong to a Network ACL that determines if traffic is allowed / denied entry and exit to the ENTIRE subnet

-

- Aug 2023

-

aws.amazon.com aws.amazon.com

-

AWS infrastructure changes also emit events with a payload. We can catch and enrich these events to make decisions in downstream services.
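A sketch of what "catch and enrich" could look like in a Lambda handler, assuming the event is an EC2 instance state-change notification. The `OWNERS` lookup and the "needs attention" rule are hypothetical and stand in for whatever downstream logic applies.

```python
# Hypothetical ownership lookup; in practice this might come from tags or a CMDB.
OWNERS = {"i-0123456789abcdef0": "payments-team"}


def enrich(event: dict) -> dict:
    """Pull the useful fields out of the event and add owner information."""
    detail = event.get("detail", {})
    instance_id = detail.get("instance-id")
    return {
        "source": event.get("source"),
        "detail_type": event.get("detail-type"),
        "instance_id": instance_id,
        "state": detail.get("state"),
        "owner": OWNERS.get(instance_id, "unknown"),
    }


def handler(event, context):
    """Lambda entry point: enrich the event and flag terminations (illustrative rule)."""
    enriched = enrich(event)
    enriched["needs_attention"] = enriched["state"] == "terminated"
    return enriched
```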

-

- Jun 2023

-

aws.amazon.com aws.amazon.com

- May 2023

-

arstechnica.com arstechnica.com

-

Amazon has a new set of services that include an LLM called Titan and corresponding cloud/compute services, to roll your own chatbots etc.

-

- Mar 2023

-

tomaszdudek.substack.com tomaszdudek.substack.com

-

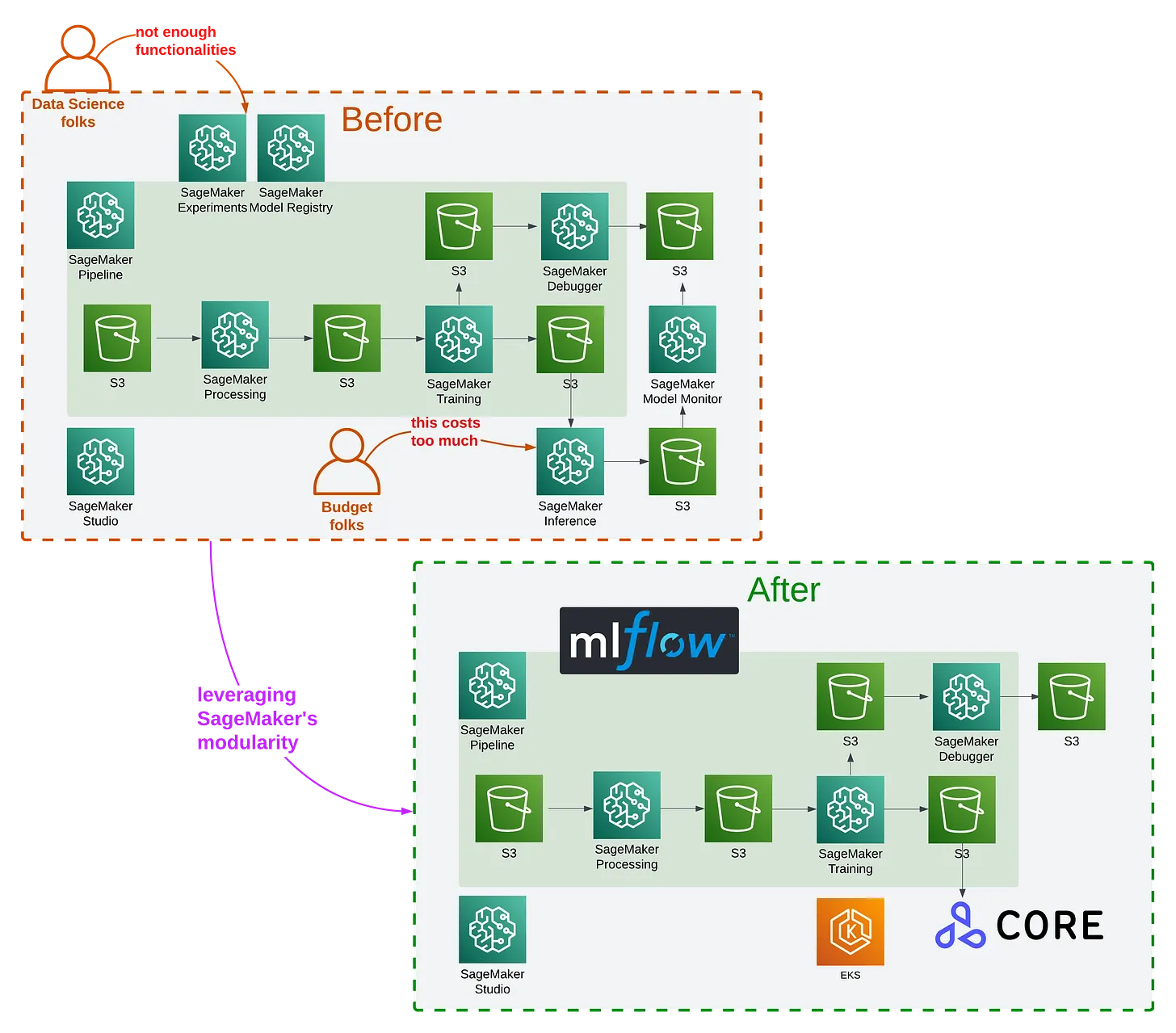

You can freely replace SageMaker services with other components as your project grows and potentially outgrows SageMaker.

-

- Jan 2023

-

www.proud2becloud.com www.proud2becloud.com

-

Configure Fargate tasks as GitLab runners, with many code samples.

-

- Oct 2022

-

-

transferring data across Availability zones within the same region is also a good way to save money

1) data transferring tip on AWS

-

When you use a private IP address, you are charged less when compared to a public IP address or Elastic IP address.

2) data transferring tip on AWS

-

But when you transfer data from one Amazon region to another, AWS charges you for that. It depends on the AWS region you are and this is the real deciding factor. For example, if you are in the US West(Oregon) region, you have to shell out $0.080/GB whereas in Asia Pacific (Seoul) region it bumps up to $0.135/GB.

Transferring data in AWS between separate regions is quite costly

-

When you transfer data between Amazon EC2, Amazon Redshift, Amazon RDS, Amazon Network Interfaces, and Amazon Elasticache, you have to pay zero charges if they are within the same Availability Zone.

Transferring data in AWS within the same AZ is free

-

When you transfer data from the internet to AWS, it is free of charge. AWS services like EC2 instances, S3 storage, or RDS instances, when you transfer data from the Internet into these you don’t have to pay any charge for it. However, if you transfer data using an Elastic IPv4 address or peered VPC using an IPv6 address you will be charged $0.01/GB whenever you transfer data into an EC2 instance. The real catch is when you transfer data out of any of the AWS services. This is where AWS charges you money depending on the area you have chosen and the amount of data you are transferring. Some regions have higher charges than others.

Data transfer costs on AWS
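Taking the per-GB figures quoted in these annotations at face value, a small lookup makes the differences easy to compare. The rate table and function name are illustrative, and the prices are the article's, so they may be out of date.

```python
# Per-GB rates as quoted in the annotated article (may be outdated).
RATES_PER_GB = {
    "same_az": 0.0,                        # same Availability Zone: free
    "inbound_from_internet": 0.0,          # internet to AWS: free
    "inter_region_us_west_oregon": 0.080,
    "inter_region_ap_seoul": 0.135,
}


def transfer_cost(gb: float, kind: str) -> float:
    """Return the transfer cost in USD for `gb` gigabytes of the given kind."""
    return round(gb * RATES_PER_GB[kind], 2)
```

For example, `transfer_cost(1000, "inter_region_ap_seoul")` prices one terabyte leaving the Seoul region at the quoted rate.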

-

-

levelup.gitconnected.com levelup.gitconnected.com

-

we made sure to implement fail safes at each stage of the migration to make sure we could fall back if something were to go wrong. It’s also why we tested on a small scale before proceeding with the rest of the migration.

While planning a big migration, make sure to have a fall back plan

-

- We mirrored PostgreSQL shards storing cached_urls tables in Cassandra
- We switched service.prerender.io to Cloudflare load balancer to allow dynamic traffic distribution
- We set up new EU private-recache servers
- We keep performing stress tests to solve any performance issues

Steps of phase 3 migration

-

“The true hidden price for AWS is coming from the traffic cost, they sell a reasonably priced storage, and it’s even free to upload it. But when you get it out, you pay an enormous cost.

AWS may be reasonably priced, but moving data out will cost a lot (e.g. $0.080/GB in the US West, or $0.135/GB in the Asia Pacific)!

-

In the last four weeks, we moved most of the cache workload from AWS S3 to our own Cassandra cluster.

Moving from AWS S3 to their own Cassandra cluster

-

After testing whether Prerender pages could be cached in both S3 and minio, we slowly diverted traffic away from AWS S3 and towards minio.

Moving from AWS S3 towards minio

-

Phase 1 mostly involved setting up the bare metal servers and testing the migration on a small and more manageable setting before scaling. This phase required minimal software adaptation, which we decided to run on KVM virtualization on Linux.

Migration from AWS to on-prem started by:

- setting up bare metal servers

- testing

- adapting software to run on KVM virtualization on Linux

-

The solution? Migrate the cached pages and traffic onto Prerender’s own internal servers and cut our reliance on AWS as quickly as possible.

When the Prerender team moved from AWS to on-prem, they cut the data storage and traffic cost from $1,000,000 to $200,000

-

- Aug 2022

-

aws.amazon.com aws.amazon.com

-

A data lake is different, because it stores relational data from line of business applications, and non-relational data from mobile apps, IoT devices, and social media.

A data lake vs. a data warehouse

-

The data structure, and schema are defined in advance to optimize for fast SQL queries, where the results are typically used for operational reporting and analysis. Data is cleaned, enriched, and transformed so it can act as the “single source of truth”

Data warehouse

-

- Jun 2022

-

catalog.us-east-1.prod.workshops.aws catalog.us-east-1.prod.workshops.aws

- Nov 2021

-

aws.amazon.com aws.amazon.com

-

We implemented a bash script to be installed in the master node of the EMR cluster, and the script is scheduled to run every 5 minutes. The script monitors the clusters and sends a CUSTOM metric EMR-INUSE (0=inactive; 1=active) to CloudWatch every 5 minutes. If CloudWatch receives 0 (inactive) for some predefined set of data points, it triggers an alarm, which in turn executes an AWS Lambda function that terminates the cluster.

A solution for terminating idle EMR clusters; however, EMR now supports an auto-termination policy out of the box
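The alarm condition described in the quote (a run of consecutive "inactive" data points) can be sketched in plain Python. In the original setup CloudWatch evaluates this; the function below only mimics the rule, and its name and parameters are hypothetical.

```python
def should_terminate(datapoints: list, required_idle_points: int) -> bool:
    """Return True if the most recent `required_idle_points` values are all 0.

    `datapoints` holds EMR-INUSE samples in time order (0=inactive, 1=active).
    """
    if required_idle_points <= 0 or len(datapoints) < required_idle_points:
        return False
    recent = datapoints[-required_idle_points:]
    return all(value == 0 for value in recent)
```

With one sample every 5 minutes, `required_idle_points=12` would correspond to roughly an hour of inactivity.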

-

- Oct 2021

-

cloudcasts.io cloudcasts.io

-

So, while DELETE operations are free, LIST operations (to get a list of objects) are not free (~$.005 per 1000 requests, varying a bit by region).

Deleting buckets on S3 is not free. If you use either the Web Console or the AWS CLI, it will execute one LIST call per 1,000 objects
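A back-of-the-envelope estimate of that LIST cost, using the approximate price from the quote. The function name is made up, and the price varies by region.

```python
import math

LIST_PRICE_PER_1000_REQUESTS = 0.005  # approximate figure from the quote above
OBJECTS_PER_LIST_CALL = 1000          # one LIST call returns up to 1,000 objects


def list_cost_for_delete(object_count: int) -> float:
    """Estimate the USD cost of the LIST calls needed to empty a bucket."""
    list_calls = math.ceil(object_count / OBJECTS_PER_LIST_CALL)
    return list_calls * LIST_PRICE_PER_1000_REQUESTS / 1000
```

The cost only becomes noticeable at very large object counts, since each LIST call covers a thousand objects.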

-

-

www.oreilly.com www.oreilly.com

-

few battle-hardened options, for instance: Airflow, a popular open-source workflow orchestrator; Argo, a newer orchestrator that runs natively on Kubernetes, and managed solutions such as Google Cloud Composer and AWS Step Functions.

Current top orchestrators:

- Airflow

- Argo

- Google Cloud Composer

- AWS Step Functions

-

- Sep 2021

- Aug 2021

-

docs.docker.com docs.docker.com

-

docker compose up

Deploy to AWS with docker compose.

-

- Jun 2021

- Apr 2021

-

docs.aws.amazon.com docs.aws.amazon.com

-

A VPN connection or an AWS Direct Connect connection to a corporate network

This won't work!

-

- Mar 2021

-

aws.amazon.com aws.amazon.com

-

Werner Vogels, Amazon CTO, notes that one of the lessons we have learned at Amazon is to expect the unexpected. He reminds us that failures are a given, and as a consequence it’s desirable to build systems that embrace failure as a natural occurrence. Coding around these failures is important, but undifferentiated, work that improves the integrity of the solution being delivered. However, it takes time away from investing in differentiating code.

This is an annotation I made.

-

When asked to define the role of the teacher, for example, Reggio educators do not begin in the way typical to

This is an annotation I made.

-

-

aws.amazon.com aws.amazon.com

-

Another application that demands extreme reliability is the configuration of foundational components from AWS, such as Network Load Balancers. When a customer makes a change to their Network Load Balancer, such as adding a new instance or container as a target, it is often critical and urgent. The customer might be experiencing a flash crowd and needs to add capacity quickly. Under the hood, Network Load Balancers run on AWS Hyperplane, an internal service that is embedded in the Amazon Elastic Compute Cloud (EC2) network. AWS Hyperplane could handle configuration changes by using a workflow. So, whenever a customer makes a change, the change is turned into an event and inserted into a workflow that pushes that change out to all of the AWS Hyperplane nodes that need it. They can then ingest the change.

This article clearly describes how AWS ELB works

-

- Feb 2021

- Jan 2021

-

-

Zappos created models to predict customer apparel sizes, which are cached and exposed at runtime via microservices for use in recommendations.

Another company, Virtusize, does the same kind of size prediction and recommendation

-

-

protonmail.com protonmail.com

-

Note: If your DNS does not allow you to add “@” as the hostname, please try leaving this field blank when you enter the ProtonMail verification information.

If you're using AWS Route53, the console will silently accept the @ for the host, but WILL BE INVALID. You will need to follow this guidance to complete DNS configuration for ProtonMail.

-

- Nov 2020

-

docs.aws.amazon.com docs.aws.amazon.com

-

The details of what goes into a policy vary for each service, depending on what actions the service makes available, what types of resources it contains, and so on.

This means that some kinds of validation cannot be done on write. For example, I've been able to write Resource values that contain invalid characters.

-

- Sep 2020

- Aug 2020

-

-

Archive your AWS data to reduce storage cost

By archiving data on AWS we can reduce costs by up to 97%

-

- Jun 2020

-

www.savjee.be www.savjee.be

-

The best all-around performer is AWS CloudFront, followed closely by GitHub Pages. Not only do they have the fastest response times (median), they’re also the most consistent. They are, however, closely followed by Google Cloud Storage. Interestingly, there is very little difference between a regional and multi-regional bucket. The only reason to pick a multi-regional bucket would be the additional uptime guarantee. Cloudflare didn’t perform as well I would’ve expected.

Results of static web hosting benchmark (May 2020):

- AWS CloudFront

- GitHub Pages

- Google Cloud Storage

-

- May 2020

-

adayinthelifeof.nl adayinthelifeof.nl

-

Amazon Machine Learning Deprecated. Use SageMaker instead.

Instead of Amazon Machine Learning use Amazon SageMaker

-

-

medium.com medium.com

-

My friends ask me if I think Google Cloud will catch up to its rivals. Not only do I think so — I’m positive five years down the road it will surpass them.

GCP more popular than AWS in 2025?

-

So if GCP is so much better, why so many more people use AWS?

Why so many people use AWS:

- they were first

- aggressive expansion of product line

- following the crowd

- fear of not getting a job based on GCP

- fear that GCP may be abandoned by Google

-

As I mentioned I think that AWS certainly offers a lot more features, configuration options and products than GCP does, and you may benefit from some of them. Also AWS releases products at a much faster speed. You can certainly do more with AWS, there is no contest here. If for example you need a truck with a server inside or a computer sent over to your office so you can dump your data inside and return it to Amazon, then AWS is for you. AWS also has more flexibility in terms of location of your data centres.

Advantages of AWS over GCP:

- a lot more features (but are they necessary for you?)

- a lot more configuration options

- a lot more products

- releases products at a much faster speed

- you can simply do more with AWS

- offers AWS Snowmobile

- more flexibility in terms of your data centres

-

Both AWS and GCP are very secure and you will be okay as long as are not careless in your design. However GCP for me has an edge in the sense that everything is encrypted by default.

Encryption is on by default in GCP

-

I felt that performance was almost always better in GCP, for example copying from instances to buckets in GCP is INSANELY fast

Performance-wise, GCP also seems to beat AWS

-

AWS charges substantially more for their services than GCP does, but most people ignore the real high cost of using AWS, which is; expertise, time and manpower.

AWS is more costly and requires more time and manpower than GCP

-

GCP provides a smaller set of core primitives that are global and work well for lots of use cases. Pub/Sub is probably the best example I have for this. In AWS you have SQS, SNS, Amazon MQ, Kinesis Data Streams, Kinesis Data Firehose, DynamoDB Streams, and maybe another queueing service by the time you read this post. 2019 Update: Amazon has now released another streaming service: Amazon Managed Streaming Kafka.

Pub/Sub of GCP might be enough to replace most (all?) of the following Amazon products: SQS, SNS, Amazon MQ, Kinesis Data Streams, Kinesis Data Firehose, DynamoDB Streams, Amazon Managed Streaming Kafka

-

At the time of writing this, there are 169 AWS products compared to 90 in GCP.

AWS has more products than GCP, but that's not necessarily good since some of them nearly duplicate each other

-

Spinning an EKS cluster gives you essentially a brick. You have to spin your own nodes on the side and make sure they connect with the master, which is a lot of work for you to do on top of the promise of “managed”

Managing Kubernetes in AWS (EKS) also isn't as effective as in GCP (GKE)

-

You can forgive the documentation in AWS being a nightmare to navigate for being a mere reflection of the confusing mess that is trying to describe. Whenever you are trying to solve a simple problem far too often you end up drowning in reference pages, the experience is like asking for a glass of water and being hosed down with a fire hydrant.

Great documentation is contextual, not referential (like AWS's)

-

Jeff Bezos is an infamous micro-manager. He micro-manages every single pixel of Amazon’s retail site. He hired Larry Tesler, Apple’s Chief Scientist and probably the very most famous and respected human-computer interaction expert in the entire world, and then ignored every goddamn thing Larry said for three years until Larry finally — wisely — left the company. Larry would do these big usability studies and demonstrate beyond any shred of doubt that nobody can understand that frigging website, but Bezos just couldn’t let go of those pixels, all those millions of semantics-packed pixels on the landing page. They were like millions of his own precious children. So they’re all still there, and Larry is not.

A case for why the AWS interface doesn't look the way it should

-

The AWS interface looks like it was designed by a lonesome alien living in an asteroid who once saw a documentary about humans clicking with a mouse. It is confusing, counterintuitive, messy and extremely overcrowded.

:)

-

After you login with your token you then need to create a script to give you a 12 hour session, and you need to do this every day, because there is no way to extend this.

One of the complications when we want to use the AWS CLI with 2FA (not the case with GCP)
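The daily script mentioned in the quote might look roughly like this. It is a sketch that assumes a boto3 STS client is passed in (for example `boto3.client("sts")`); the helper name and the returned environment-variable mapping are my own choices, not something from the article.

```python
def mfa_session_env(sts_client, mfa_serial: str, token_code: str, hours: int = 12) -> dict:
    """Request temporary credentials with an MFA code and return them as env vars.

    `sts_client` is expected to behave like a boto3 STS client.
    """
    response = sts_client.get_session_token(
        DurationSeconds=hours * 3600,
        SerialNumber=mfa_serial,  # ARN or serial number of the MFA device
        TokenCode=token_code,     # current code shown by the MFA device
    )
    creds = response["Credentials"]
    return {
        "AWS_ACCESS_KEY_ID": creds["AccessKeyId"],
        "AWS_SECRET_ACCESS_KEY": creds["SecretAccessKey"],
        "AWS_SESSION_TOKEN": creds["SessionToken"],
    }
```

Exporting the returned values in a shell lets the AWS CLI use the temporary session until it expires.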

-

In GCP you have one master account/project that you can use to manage the rest of your projects, you log in with your company google account and then you can set permissions to any project however you want.

Setting up account permissions for projects is far better in GCP than in AWS

-

It’s not that AWS is harder to use than GCP, it’s that it is needlessly hard; a disjointed, sprawl of infrastructure primitives with poor cohesion between them.

AWS management isn't as straightforward as GCP's

-

-

aws.amazon.com aws.amazon.com

-

A portfolio is a collection of products, together with configuration information. Portfolios help manage product configuration, and who can use specific products and how they can use them. With AWS Service Catalog, you can create a customized portfolio for each type of user in your organization and selectively grant access to the appropriate portfolio.

-

-

aws.amazon.com aws.amazon.com

-

Amazon Kinesis Data Streams (KDS) is a massively scalable and durable real-time data streaming service. KDS can continuously capture gigabytes of data per second from hundreds of thousands of sources such as website clickstreams, database event streams, financial transactions, social media feeds, IT logs, and location-tracking events.

Firehose is different; it delivers to destinations such as:

- Elasticsearch (ES)

- S3

- Redshift

-

-

aws.amazon.com aws.amazon.com

-

Amazon Kinesis Data Firehose is the easiest way to reliably load streaming data into data lakes, data stores and analytics tools. It can capture, transform, and load streaming data into Amazon S3, Amazon Redshift, Amazon Elasticsearch Service, and Splunk,

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

DynamoDB Streams enables solutions such as these, and many others. DynamoDB Streams captures a time-ordered sequence of item-level modifications in any DynamoDB table and stores this information in a log for up to 24 hours.

Records item-level changes to a DynamoDB table
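A sketch of how a consumer (for instance a Lambda function) might read those item-level modifications. The function name is hypothetical, and whether `OldImage` and `NewImage` are present depends on the stream's view type.

```python
def summarize_changes(event: dict) -> list:
    """Turn a DynamoDB Streams event into a list of simple change summaries."""
    changes = []
    for record in event.get("Records", []):
        ddb = record.get("dynamodb", {})
        changes.append({
            "event": record.get("eventName"),  # INSERT, MODIFY, or REMOVE
            "keys": ddb.get("Keys"),
            "old": ddb.get("OldImage"),        # may be absent, depending on view type
            "new": ddb.get("NewImage"),        # may be absent, depending on view type
        })
    return changes
```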

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

AWS OpsWorks Stacks uses Chef cookbooks to handle tasks such as installing and configuring packages and deploying apps.

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

Your Amazon Athena query performance improves if you convert your data into open source columnar formats, such as Apache Parquet

For Athena query performance on S3 data, use columnar formats

-

-

aws.amazon.com aws.amazon.com

-

Amazon Macie is a security service that uses machine learning to automatically discover, classify, and protect sensitive data in AWS.

-

-

aws.amazon.com aws.amazon.com

-

Amazon AppStream 2.0 is a fully managed application streaming service. You centrally manage your desktop applications on AppStream 2.0 and securely deliver them to any computer.

for streaming apps

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

A VPC endpoint enables you to privately connect your VPC to supported AWS services and VPC endpoint services powered by AWS PrivateLink without requiring an internet gateway, NAT device, VPN connection, or AWS Direct Connect connection. Instances in your VPC do not require public IP addresses to communicate with resources in the service. Traffic between your VPC and the other service does not leave the Amazon network.

-

-

-

Endpoint policies are currently supported by CodeBuild, CodeCommit, ELB API, SQS, SNS, CloudWatch Logs, API Gateway, SageMaker notebooks, SageMaker API, SageMaker Runtime, Cloudwatch Events and Kinesis Firehose.

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

Using VPC endpoint policies A VPC endpoint policy is an IAM resource policy that you attach to an endpoint when you create or modify the endpoint. If you do not attach a policy when you create an endpoint, we attach a default policy for you that allows full access to the service. If a service does not support endpoint policies, the endpoint allows full access to the service. An endpoint policy does not override or replace IAM user policies or service-specific policies (such as S3 bucket policies). It is a separate policy for controlling access from the endpoint to the specified service.
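As an illustration, an endpoint policy narrower than the default full-access one might be built like this. It is a sketch: the helper name, the `Sid`, and the bucket name are hypothetical, and the policy only shows the general shape of an S3 endpoint policy.

```python
import json


def read_only_bucket_endpoint_policy(bucket_name: str) -> str:
    """Build an endpoint policy that only allows reads from a single bucket."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [{
            "Sid": "AllowReadFromOneBucket",
            "Effect": "Allow",
            "Principal": "*",
            "Action": ["s3:GetObject", "s3:ListBucket"],
            "Resource": [
                f"arn:aws:s3:::{bucket_name}",
                f"arn:aws:s3:::{bucket_name}/*",
            ],
        }],
    }
    return json.dumps(policy, indent=2)
```

As the quote notes, this sits alongside IAM and bucket policies rather than replacing them, so a request has to be allowed by all of them.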

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

An interface VPC endpoint (interface endpoint) enables you to connect to services powered by AWS PrivateLink.

Lets you connect to AWS services privately from within a VPC

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

You can associate a health check with an alias record instead of or in addition to setting the value of Evaluate Target Health to Yes. However, it's generally more useful if Route 53 responds to queries based on the health of the underlying resources—the HTTP servers, database servers, and other resources that your alias records refer to. For example, suppose the following configuration:

aws

evaluate target health

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

For a non-proxy integration, you must set up at least one integration response, and make it the default response, to pass the result returned from the backend to the client. You can choose to pass through the result as-is or to transform the integration response data to the method response data if the two have different formats. For a proxy integration, API Gateway automatically passes the backend output to the client as an HTTP response. You do not set either an integration response or a method response.

integration vs method response
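With a Lambda proxy integration, the function itself has to return the HTTP-shaped result that API Gateway passes through. A minimal sketch follows; the greeting logic is made up, while the `statusCode`, `headers`, and `body` keys are the ones the proxy format expects.

```python
import json


def handler(event, context):
    """Lambda proxy handler: return the status code, headers, and a string body."""
    # queryStringParameters can be None when the request has no query string.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"hello, {name}"}),
    }
```

In a non-proxy integration the function could return any payload, and the mapping to status codes and body format would be configured in the integration response instead.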

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

Set up method response status code The status code of a method response defines a type of response. For example, responses of 200, 400, and 500 indicate successful, client-side error and server-side error responses, respectively.

method response status code

-

-

aws.amazon.com aws.amazon.com

-

AWS Glue is a fully managed extract, transform, and load (ETL) service that makes it easy for customers to prepare and load their data for analytics.

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

AWS Organizations terminology and concepts

organization

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

You can use organizational units (OUs) to group accounts together to administer as a single unit. This greatly simplifies the management of your accounts.

AWS organizational unit (OU)

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

What is AWS Elastic Beanstalk?

AWS PaaS

-

Because AWS Elastic Beanstalk performs an in-place update when you update your application versions, your application can become unavailable to users for a short period of time. You can avoid this downtime by performing a blue/green deployment, where you deploy the new version to a separate environment, and then swap CNAMEs of the two environments to redirect traffic to the new version instantly.

CNAME swap

-

-

aws.amazon.com aws.amazon.com

-

Using AWS SCT to convert objects (tables, indexes, constraints, functions, and so on) from the source commercial engine to the open-source engine. Using AWS DMS to move data into the appropriate converted objects and keep the target database in complete sync with the source. Doing this takes care of the production workload while the migration is ongoing.

DMS vs SCT

data migration service vs schema conversion tool

DMS on its own is used when the source and target database engines are the same; SCT handles the schema conversion when they differ

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

When an instance is stopped and restarted, the Host affinity setting determines whether it's restarted on the same, or a different, host.

The host affinity setting helps manage Dedicated Hosts

-

-

www.amazonaws.cn www.amazonaws.cn

-

| Available Internet Connection | Theoretical Min. Number of Days to Transfer 100TB at 80% Network Utilization | When to Consider AWS Snowball? |
| --- | --- | --- |
| T3 (44.736Mbps) | 269 days | 2TB or more |
| 100Mbps | 120 days | 5TB or more |
| 1000Mbps | 12 days | 60TB or more |

when snowball

1000Mbps 12 days 60TB
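The transfer times can be approximated with simple arithmetic. This sketch assumes decimal units (1 TB = 10^12 bytes, 1 Mbps = 10^6 bits per second); the results land near the quoted figures but do not reproduce all of them exactly, so AWS presumably rounds or uses slightly different assumptions.

```python
def days_to_transfer(terabytes: float, link_mbps: float, utilization: float = 0.8) -> float:
    """Estimate how many days it takes to push `terabytes` over a link."""
    bits = terabytes * 1e12 * 8                     # assumes decimal terabytes
    effective_bps = link_mbps * 1e6 * utilization   # usable bits per second
    seconds = bits / effective_bps
    return seconds / 86_400                         # seconds per day
```

For example, `days_to_transfer(100, 1000)` gives the estimate for the 1000Mbps case.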

-

-

-

Amazon WorkSpaces is a managed, secure Desktop-as-a-Service (DaaS) solution. You can use Amazon WorkSpaces to provision either Windows or Linux desktops in just a few minutes and quickly scale to provide thousands of desktops to workers across the globe.

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

The AWS Security Token Service (STS) is a web service that enables you to request temporary, limited-privilege credentials for AWS Identity and Access Management (IAM) users or for users that you authenticate (federated users)

aws resource

-

-

-

AWS Batch enables developers, scientists, and engineers to easily and efficiently run hundreds of thousands of batch computing jobs on AWS. AWS Batch dynamically provisions the optimal quantity and type of compute resources (e.g., CPU or memory optimized instances) based on the volume and specific resource requirements of the batch jobs submitted.

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

For example, assume that you have a load balancer configuration that you use for most of your stacks. Instead of copying and pasting the same configurations into your templates, you can create a dedicated template for the load balancer. Then, you just use the resource to reference that template from within other templates.

nested stack

-

-

aws.amazon.com aws.amazon.com

-

AWS CloudHSM is a cloud-based hardware security module (HSM) that enables you to easily generate and use your own encryption keys on the AWS Cloud.

-

-

docs.alfresco.com docs.alfresco.com

-

Expedited retrieval allows you to quickly access your data when you need to have almost immediate access to your information. This retrieval type can be used for archives up to 250MB. Expedited retrieval usually completes within 1 and 5 minutes.

https://aws.amazon.com/glacier/faqs/

Three types of retrieval

Expedited: 1 to 5 minutes

-

-

www.megaport.com www.megaport.com

-

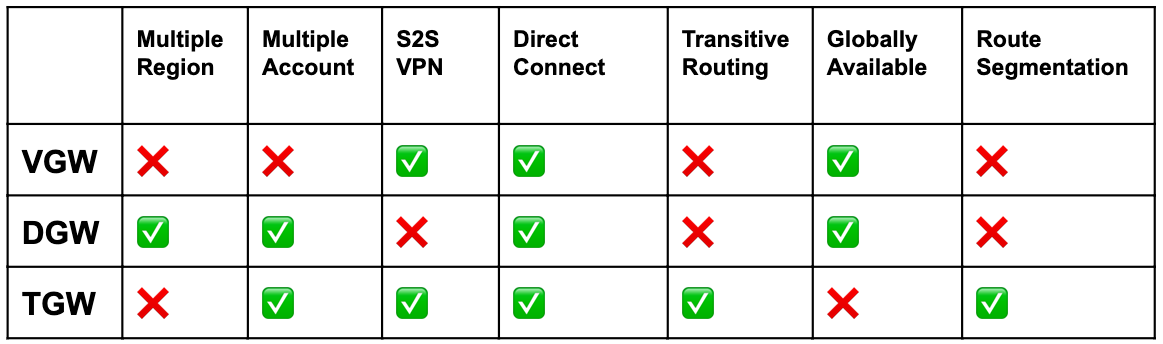

TGW coupled with AWS Resource Access Manager will allow you to use a single Transit Gateway across multiple AWS accounts, however, it’s still limited to a single region.

TGW: shared across multiple accounts

-

Direct Connect Gateway – DGW DGW builds upon VGW capabilities adding the ability to connect VPCs in one region to a Direct Connect in another region. CIDR addresses cannot overlap. In addition, traffic will not route from VPC-A to the Direct Connect Gateway and to VPC-B. Traffic will have to route from the VPC-A —> Direct Connect —-> Data Centre Router —-> Direct Connect —> VPC-B.

Beyond VGW: connects VPCs to a Direct Connect in another region.

-

Virtual Private Gateway – VGW The introduction of the VGW introduced the ability to let multiple VPCs, in the same region, on the same account, share a Direct Connect. Prior to this, you’d need a Direct Connect Private Virtual Interface (VIF) for each VPC, establishing a 1:1 correlation, which didn’t scale well both in terms of cost and administrative overhead. VGW became a solution that reduced the expense of requiring new Direct Connect circuits for each VPC as long as both VPCs were in the same region, on the same account. This construct can be used with either Direct Connect or the Site-to-Site VPN.

VGW: saves Direct Connect cost by using one connection for all VPCs in the same region and account

-

AWS VGW vs DGW vs TGW


-

-

docs.aws.amazon.com docs.aws.amazon.com

-

In general, bucket owners pay for all Amazon S3 storage and data transfer costs associated with their bucket. A bucket owner, however, can configure a bucket to be a Requester Pays bucket. With Requester Pays buckets, the requester instead of the bucket owner pays the cost of the request and the data download from the bucket. The bucket owner always pays the cost of storing data.

Requester Pays
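
A small sketch of how a requester might download from a Requester Pays bucket. It assumes a boto3 S3 client is passed in as `s3_client`; the helper name `download_as_requester` is hypothetical. The `RequestPayer="requester"` argument is how the caller acknowledges that they will be charged for the request and the download.

```python
def download_as_requester(s3_client, bucket: str, key: str) -> bytes:
    """Fetch an object while agreeing to pay the request and transfer cost.

    `s3_client` is assumed to be a boto3 S3 client, for example
    boto3.client("s3"). The request must carry the requester-pays flag.
    """
    response = s3_client.get_object(
        Bucket=bucket,
        Key=key,
        RequestPayer="requester",  # the caller accepts the charges
    )
    return response["Body"].read()
```

The bucket owner still pays for storage; only the request and data transfer costs move to the caller.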

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

When CloudFront receives a request, you can use a Lambda function to generate an HTTP response that CloudFront returns directly to the viewer without forwarding the response to the origin. Generating HTTP responses reduces the load on the origin, and typically also reduces latency for the viewer.

Can be helpful for authentication, e.g. rejecting unauthenticated requests at the edge
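
As a rough illustration of the auth use case, here is a sketch of a Lambda@Edge viewer-request handler in Python that answers directly when no `Authorization` header is present. It only checks that the header exists; validating the credential is left out, and the response text is an assumption.

```python
def handler(event, context):
    """Return a 401 from the edge, or pass the request on to the origin."""
    request = event["Records"][0]["cf"]["request"]
    headers = request.get("headers", {})

    # CloudFront lower-cases header names in the event's headers map.
    if "authorization" not in headers:
        # Generated response: CloudFront returns it without calling the origin.
        return {
            "status": "401",
            "statusDescription": "Unauthorized",
            "headers": {
                "www-authenticate": [
                    {"key": "WWW-Authenticate", "value": "Basic"}
                ],
            },
            "body": "Authentication required",
        }

    # Returning the request object lets CloudFront continue as usual.
    return request
```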

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

Amazon S3 event notifications are designed to be delivered at least once. Typically, event notifications are delivered in seconds but can sometimes take a minute or longer.

S3 event notifications can take a minute or longer to arrive

BTW: S3 object-level API calls are not available to CloudWatch Events directly; they are recorded by CloudTrail data events.
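
Because delivery is at least once, a consumer may see the same notification twice. A minimal sketch of an idempotent consumer follows; the in-memory `seen` set is an assumption for illustration (a real consumer would likely need a durable store), and `process_once` is a hypothetical helper name.

```python
def process_once(event: dict, seen: set, handle) -> int:
    """Call `handle` once per distinct S3 event record; return the count handled."""
    handled = 0
    for record in event.get("Records", []):
        s3 = record["s3"]
        # Bucket, key and sequencer together identify one change to one object.
        dedupe_key = (
            s3["bucket"]["name"],
            s3["object"]["key"],
            s3["object"].get("sequencer"),
        )
        if dedupe_key in seen:
            continue  # duplicate delivery, already processed
        seen.add(dedupe_key)
        handle(record)
        handled += 1
    return handled
```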

-

-

aws.amazon.com aws.amazon.com

-

By default, Amazon Redshift has excellent tools to back up your cluster via snapshot to Amazon Simple Storage Service (Amazon S3). These snapshots can be restored in any AZ in that region or transferred automatically to other regions for disaster recovery. Amazon Redshift can even prioritize data being restored from Amazon S3 based on the queries running against a cluster that is still being restored.

A Redshift cluster runs in a single AZ; recovery relies on snapshots restored to another AZ or region

-

-

aws.amazon.com aws.amazon.com

-

For this setup, do the following: 1. Create a custom AWS Identity and Access Management (IAM) policy and execution role for your Lambda function. 2. Create Lambda functions that stop and start your EC2 instances. 3. Create CloudWatch Events rules that trigger your function on a schedule. For example, you could create a rule to stop your EC2 instances at night, and another to start them again in the morning.
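
The three steps above can be sketched as a pair of Lambda handlers built from one factory. This assumes a boto3 EC2 client is supplied as `ec2_client`; the factory name, the instance IDs and the schedule times are hypothetical.

```python
# Example CloudWatch Events schedule expressions (times are UTC); assumed values.
STOP_SCHEDULE = "cron(0 22 ? * MON-FRI *)"   # stop at 22:00 on weekdays
START_SCHEDULE = "cron(0 6 ? * MON-FRI *)"   # start at 06:00 on weekdays


def make_handler(ec2_client, instance_ids, action):
    """Build a Lambda handler that stops or starts the given instances.

    `ec2_client` is assumed to be a boto3 EC2 client, for example
    boto3.client("ec2"); the execution role must allow
    ec2:StopInstances and ec2:StartInstances.
    """
    if action not in ("stop", "start"):
        raise ValueError(f"unsupported action: {action}")

    def handler(event, context):
        if action == "stop":
            ec2_client.stop_instances(InstanceIds=instance_ids)
        else:
            ec2_client.start_instances(InstanceIds=instance_ids)
        return {"action": action, "instances": list(instance_ids)}

    return handler
```

One rule with `STOP_SCHEDULE` would target the stop handler and a second rule with `START_SCHEDULE` would target the start handler.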

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

FIFO queues also provide exactly-once processing but have a limited number of transactions per second (TPS):

Standard queues do not guarantee exactly-once processing (delivery is at least once)

-

- Apr 2020

-

stackoverflow.com stackoverflow.com

-

One way to put it is this: LSI - allows you to perform a query on a single Hash-Key while using multiple different attributes to "filter" or restrict the query. GSI - allows you to perform queries on multiple Hash-Keys in a table, but costs extra in throughput, as a result.

Secondary index: LSI vs GSI

-

-

content.aws.training content.aws.training

-

Cognito authorizers–Amazon Cognito user pools provide a set of APIs that you can integrate into your application to provide authentication. User pools are intended for mobile or web applications where you handle user registration and sign-in directly in the application. To use an Amazon Cognito user pool with your API, you must first create an authorizer of the COGNITO_USER_POOLS authorizer type, and then configure an API method to use that authorizer. After a user is authenticated against the user pool, they obtain an Open ID Connect token, or OIDC token, formatted in a JSON web token. Users who have signed in to your application will have tokens provided to them by the user pool. Then, your application can use that token to inject information into a header in subsequent API calls that you make against your API Gateway endpoint. The API call succeeds only if the required token is supplied and the supplied token is valid. Otherwise, the client isn't authorized to make the call, because the client did not have credentials that could be authorized.

-

IAM authorizers–All requests are required to be signed using the AWS Version 4 signing process (also known as SigV4). The process uses your AWS access key and secret key to compute an HMAC signature using SHA-256. You can obtain these keys as an AWS Identity and Access Management (IAM) user or by assuming an IAM role. The key information is added to the Authorization header, and behind the scenes, API Gateway takes that signed request, parses it, and determines whether or not the user who signed the request has the IAM permissions to invoke your API.
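
The HMAC step of SigV4 can be sketched with the standard library alone. This covers only the signing-key derivation and the final signature; building the canonical request and the string to sign is omitted, and the function names are hypothetical.

```python
import hashlib
import hmac


def _hmac_sha256(key: bytes, message: str) -> bytes:
    return hmac.new(key, message.encode("utf-8"), hashlib.sha256).digest()


def derive_signing_key(secret_key: str, date_stamp: str,
                       region: str, service: str) -> bytes:
    """Derive the SigV4 signing key from the secret key by chained HMACs.

    `date_stamp` is the request date as YYYYMMDD.
    """
    k_date = _hmac_sha256(("AWS4" + secret_key).encode("utf-8"), date_stamp)
    k_region = _hmac_sha256(k_date, region)
    k_service = _hmac_sha256(k_region, service)
    return _hmac_sha256(k_service, "aws4_request")


def sign(signing_key: bytes, string_to_sign: str) -> str:
    """Return the hex signature that goes into the Authorization header."""
    return hmac.new(
        signing_key, string_to_sign.encode("utf-8"), hashlib.sha256
    ).hexdigest()
```

For API Gateway the service name in the derivation is `execute-api`. In practice an AWS SDK does all of this for you; the sketch is only meant to show what "compute an HMAC signature using SHA-256" involves.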

-

Lambda authorizers–A Lambda authorizer is simply a Lambda function that you can write to perform any custom authorization that you need. There are two types of Lambda authorizers: token and request parameter. When a client calls your API, API Gateway verifies whether a Lambda authorizer is configured for the API method. If it is, API Gateway calls the Lambda function. In this call, API Gateway supplies the authorization token (or the request parameters, based on the type of authorizer), and the Lambda function returns a policy that allows or denies the caller’s request. API Gateway also supports an optional policy cache that you can configure for your Lambda authorizer. This feature increases performance by reducing the number of invocations of your Lambda authorizer for previously authorized tokens. And with this cache, you can configure a custom time to live (TTL). To make it easy to get started with this method, you can choose the API Gateway Lambda authorizer blueprint when creating your authorizer function from the Lambda console.
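
A minimal sketch of a token-type Lambda authorizer. The token comparison is a stand-in for real validation, and the token value and `principalId` are hypothetical; the shape of the returned policy is what API Gateway expects.

```python
# Placeholder secret for illustration only; real code would verify a JWT
# or look the token up in a trusted store.
EXPECTED_TOKEN = "allow-me"


def authorizer_handler(event, context):
    """Return an IAM policy that allows or denies the incoming API call."""
    token = event.get("authorizationToken", "")
    effect = "Allow" if token == EXPECTED_TOKEN else "Deny"
    return {
        "principalId": "example-user",  # hypothetical caller identifier
        "policyDocument": {
            "Version": "2012-10-17",
            "Statement": [
                {
                    "Action": "execute-api:Invoke",
                    "Effect": effect,
                    # methodArn identifies the API method being called.
                    "Resource": event["methodArn"],
                }
            ],
        },
    }
```

If the policy cache is enabled, API Gateway reuses this result for the same token until the configured TTL expires.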

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

DynamoDB supports two types of secondary indexes: Global secondary index — An index with a partition key and a sort key that can be different from those on the base table. A global secondary index is considered "global" because queries on the index can span all of the data in the base table, across all partitions. A global secondary index is stored in its own partition space away from the base table and scales separately from the base table. Local secondary index — An index that has the same partition key as the base table, but a different sort key. A local secondary index is "local" in the sense that every partition of a local secondary index is scoped to a base table partition that has the same partition key value.
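
To make the difference concrete, here is a sketch of the keyword arguments one might pass to a boto3 `create_table` call. The `Orders` table, its attributes and the index names are all hypothetical.

```python
def orders_table_definition() -> dict:
    """Table with one LSI (same partition key) and one GSI (different key)."""
    return {
        "TableName": "Orders",
        "BillingMode": "PAY_PER_REQUEST",
        "AttributeDefinitions": [
            {"AttributeName": "customer_id", "AttributeType": "S"},
            {"AttributeName": "order_id", "AttributeType": "S"},
            {"AttributeName": "order_date", "AttributeType": "S"},
            {"AttributeName": "status", "AttributeType": "S"},
        ],
        "KeySchema": [
            {"AttributeName": "customer_id", "KeyType": "HASH"},
            {"AttributeName": "order_id", "KeyType": "RANGE"},
        ],
        # LSI: same partition key as the table, different sort key.
        "LocalSecondaryIndexes": [
            {
                "IndexName": "ByCustomerAndDate",
                "KeySchema": [
                    {"AttributeName": "customer_id", "KeyType": "HASH"},
                    {"AttributeName": "order_date", "KeyType": "RANGE"},
                ],
                "Projection": {"ProjectionType": "ALL"},
            }
        ],
        # GSI: its own partition key, so queries span all base-table partitions.
        "GlobalSecondaryIndexes": [
            {
                "IndexName": "ByStatusAndDate",
                "KeySchema": [
                    {"AttributeName": "status", "KeyType": "HASH"},
                    {"AttributeName": "order_date", "KeyType": "RANGE"},
                ],
                "Projection": {"ProjectionType": "ALL"},
            }
        ],
    }
```

With a boto3 client this would be used as `client.create_table(**orders_table_definition())`. Note that local secondary indexes can only be defined when the table is created.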

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

Amazon SQS supports dead-letter queues, which other queues (source queues) can target for messages that can't be processed (consumed) successfully. Dead-letter queues are useful for debugging your application or messaging system because they let you isolate problematic messages to determine why their processing doesn't succeed.
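
A source queue points at its dead-letter queue through a `RedrivePolicy` attribute. The sketch below builds that attribute; the helper name, the ARN and the receive count of 5 are assumptions for illustration.

```python
import json


def redrive_attributes(dlq_arn: str, max_receive_count: int = 5) -> dict:
    """Build the queue attributes that attach a dead-letter queue.

    After a message has been received `max_receive_count` times without
    being deleted, SQS moves it to the queue identified by `dlq_arn`.
    """
    policy = {
        "deadLetterTargetArn": dlq_arn,
        "maxReceiveCount": max_receive_count,
    }
    # The policy is passed as a JSON string, not as a nested structure.
    return {"RedrivePolicy": json.dumps(policy)}


attributes = redrive_attributes(
    "arn:aws:sqs:us-east-1:123456789012:orders-dlq"  # placeholder ARN
)
print(attributes)
```

With a boto3 client the result would be passed as `sqs.set_queue_attributes(QueueUrl=..., Attributes=attributes)` on the source queue.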

-

-

aws.amazon.com aws.amazon.com

-

Amazon Lex is a service for building conversational interfaces into any application using voice and text

-

-

www.examtopics.com www.examtopics.com

-

A company runs a memory-intensive analytics application using on-demand Amazon EC2 C5 compute optimized instance. The application is used continuously and application demand doubles during working hours. The application currently scales based on CPU usage. When scaling in occurs, a lifecycle hook is used because the instance requires 4 minutes to clean the application state before terminating. Because users reported poor performance during working hours, scheduled scaling actions were implemented so additional instances would be added during working hours. The Solutions Architect has been asked to reduce the cost of the application. Which solution is MOST cost-effective?

Should be A here, because C5 is 40% cheaper than R5

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

When a user in an AWS account creates a blockchain network on Amazon Managed Blockchain, they also create the first member in the network. This first member has no peer nodes associated with it until you create them. After you create the network and the first member, you can use that member to create an invitation proposal for other members in the same AWS account or in other AWS accounts. Any member can create an invitation proposal.

About members of a Managed Blockchain network

-

-

aws.amazon.com aws.amazon.com

-

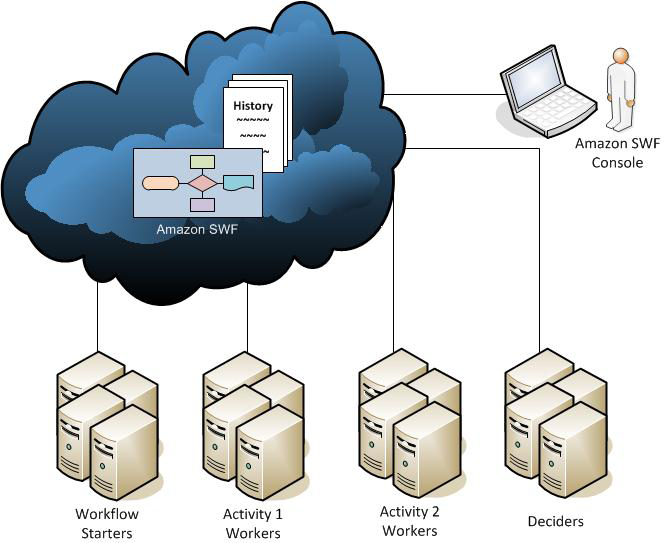

AWS Step Functions is a fully managed service that makes it easy to coordinate the components of distributed applications and microservices using visual workflows. Instead of writing a Decider program, you define state machines in JSON. AWS customers should consider using Step Functions for new applications. If Step Functions does not fit your needs, then you should consider Amazon Simple Workflow (SWF)
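
For a sense of what "define state machines in JSON" looks like, here is a sketch of a two-step workflow in the Amazon States Language, built as a Python dict. The state names and Lambda ARNs are hypothetical.

```python
import json

# Two sequential tasks; the state machine itself replaces the SWF decider.
state_machine = {
    "Comment": "Hypothetical two-step order workflow",
    "StartAt": "ProcessOrder",
    "States": {
        "ProcessOrder": {
            "Type": "Task",
            # Placeholder ARN for the function that does the work.
            "Resource": "arn:aws:lambda:us-east-1:123456789012:function:process-order",
            "Next": "NotifyCustomer",
        },
        "NotifyCustomer": {
            "Type": "Task",
            "Resource": "arn:aws:lambda:us-east-1:123456789012:function:notify-customer",
            "End": True,
        },
    },
}

# The JSON string is what would be supplied as the state machine definition.
definition = json.dumps(state_machine, indent=2)
print(definition)
```

The ordering logic lives in the `Next` and `End` fields, which is the role a Decider program plays in SWF.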

-

Workers are programs that interact with Amazon SWF to get tasks, process received tasks, and return the results. The decider is a program that controls the coordination of tasks,

SWF worker and decider

-

-

medium.com medium.com

-

SQS is a fully managed message queuing service that enables you to decouple and scale microservices, distributed systems, and serverless applications. SQS eliminates the complexity and overhead associated with managing and operating message oriented middleware, and empowers developers to focus on differentiating work. Using SQS, you can send, store, and receive messages between software components at any volume, without losing messages or requiring other services to be available. SWF helps developers build, run, and scale background jobs that have parallel or sequential steps. You can think of Amazon SWF as a fully-managed state tracker and task coordinator in the Cloud.

-

-

stackoverflow.com stackoverflow.com

-

SNS is a distributed publish-subscribe system. Messages are pushed to subscribers as and when they are sent by publishers to SNS. SQS is distributed queuing system. Messages are NOT pushed to receivers. Receivers have to poll or pull messages from SQS.

-

-

aws.amazon.com aws.amazon.com

-

Amazon SimpleDB passes on to you the financial benefits of Amazon’s scale. You pay only for resources you actually consume. For Amazon SimpleDB, this means data store reads and writes are charged by compute resources consumed by each operation, and you aren’t billed for compute resources when you aren’t actively using them (i.e. making requests).

-

-

stackoverflow.com stackoverflow.com

-

While SimpleDB has scaling limitations, it may be a good fit for smaller workloads that require query flexibility. Amazon SimpleDB automatically indexes all item attributes and thus supports query flexibility at the cost of performance and scale.

SimpleDB vs DynamoDB

-

-

aws.amazon.com aws.amazon.com

-

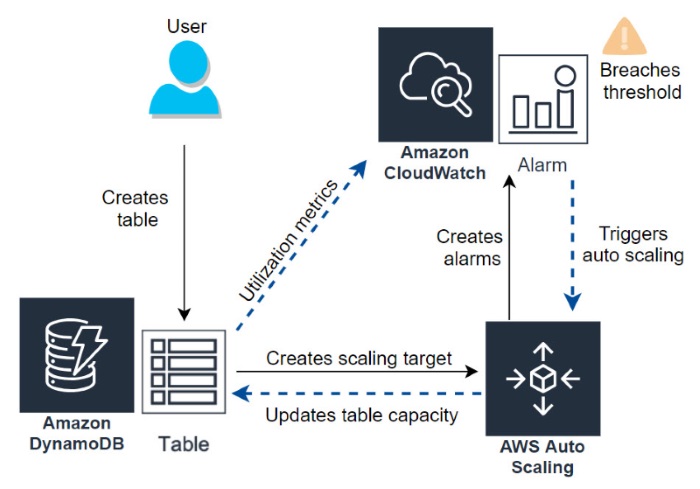

When you create a DynamoDB table, auto scaling is the default capacity setting, but you can also enable auto scaling on any table that does not have it active

-

-

docs.aws.amazon.com docs.aws.amazon.com

-

An elastic network interface (referred to as a network interface in this documentation) is a logical networking component in a VPC that represents a virtual network card.

-

-

docs.aws.amazon.com docs.aws.amazon.com

-