Author response:

The following is the authors’ response to the previous reviews

Responses to Editors:

We appreciate the editors’ concern regarding the difficulty of disentangling the contributions of tightly-coupled brain regions to the speech-gesture integration process, particularly given the close temporal and spatial proximity of the stimulation windows and the potential for prolonged disruption. We agree that stimulation techniques such as transcranial magnetic stimulation (TMS) can evoke or modulate neuronal activity both locally within the target region and in remote connected areas of the network, and that this complex interaction makes it more challenging to draw clear conclusions about the causal relationship between stimulation and cognitive function. However, we believe that cause-and-effect relationships in cognitive neuroscience studies using non-invasive brain stimulation (NIBS) can still be robustly established if key assumptions are explicitly tested and confounding factors are rigorously controlled (Bergmann & Hartwigsen, 2021, J Cogn Neurosci).

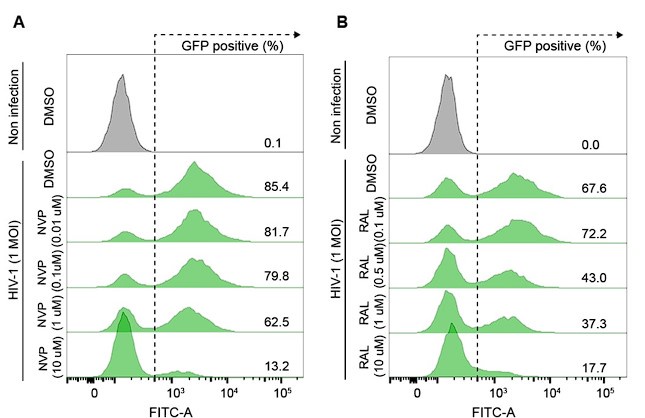

In our experiment, we addressed these concerns by including a sham TMS condition, an irrelevant control task, and multiple control time points. The results showed that TMS selectively disrupted the IFG-pMTG interaction during specific time windows of the task related to gesture-speech semantic congruency, but not in the sham TMS condition or the control task (gender congruency effect) (Zhao et al., 2021, JN). This selective disruption provides strong evidence for a causal link between IFG-pMTG connectivity and gesture-speech integration in the targeted time window.

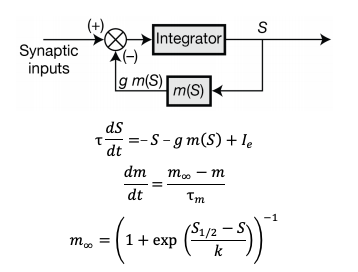

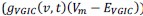

Regarding the potential for transient artifacts from TMS, we acknowledge that previous research has demonstrated that single-pulse TMS induces brief artifacts (0–10 ms) due to direct depolarization of cortical neurons, which momentarily disrupts electrical activity in the stimulated area (Romero et al., 2019, NC). However, in the case of paired-pulse TMS (ppTMS), the interaction between the first and second pulses is more complex. The first pulse increases membrane conductance in the target neurons via shunting inhibition mediated by GABAergic interneurons. This effectively lowers neuronal membrane resistance, “leaking” excitatory current and diminishing the depolarization induced by the second pulse, leading to a reduction in excitability during the paired-pulse interval. This mechanism suppresses the excitatory response to the second pulse, which is reflected in a reduced motor evoked potential (MEP) (Paulus & Rothwell, 2016, J Physiol).

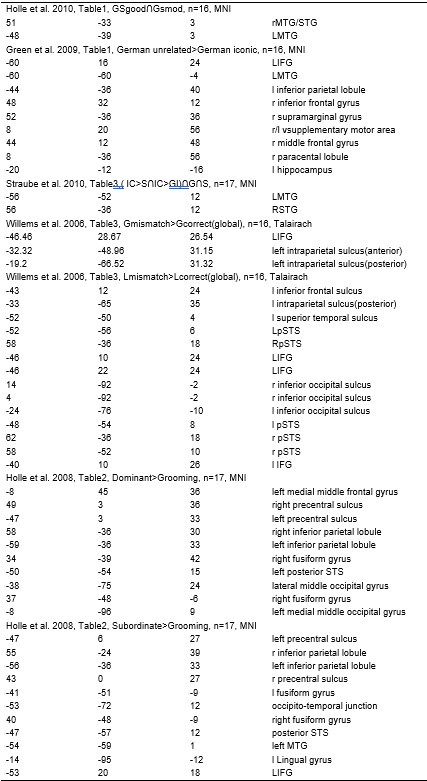

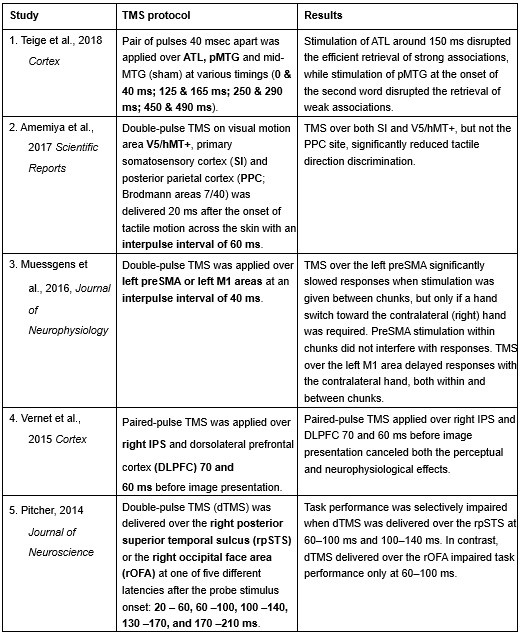

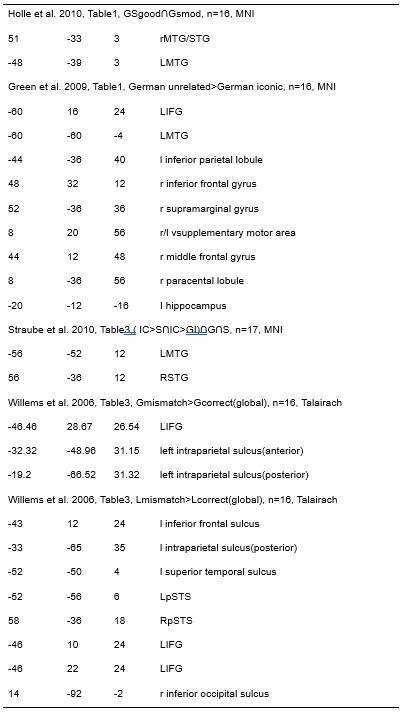

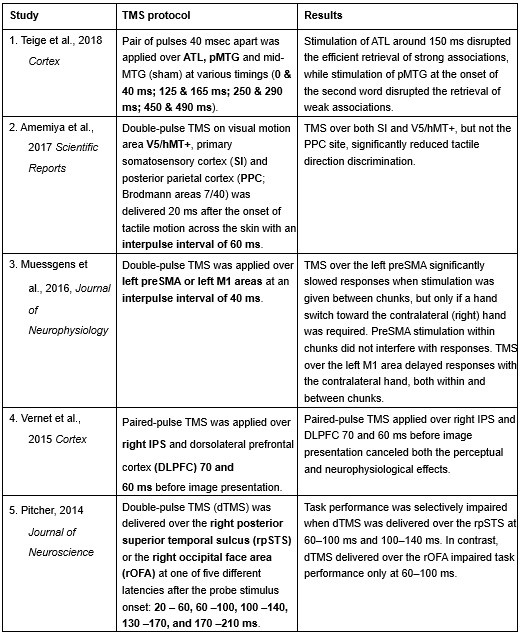

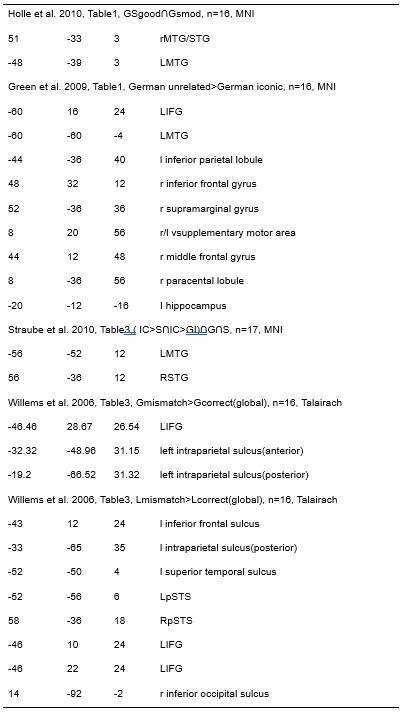

Furthermore, ppTMS has been widely used in previous studies to infer causal temporal relationships and explore the neural contributions of both structurally and functionally connected brain regions, across timescales as brief as 3–60 ms. We have reviewed several studies that employed paired-pulse TMS to investigate neural dynamics in regions such as the tongue and lip areas of the primary motor cortex (M1), as well as high-level semantic regions like the pMTG, PFC, and ATL (Table 1). These studies consistently demonstrate the methodological rigor and precision of double-pulse TMS in elucidating the temporal dynamics between different brain regions within short temporal windows.

Given these precedents and the evidence provided, we respectfully assert the validity of the methods employed in our study. We therefore kindly request the editors to reconsider the assessment that “the methods are insufficient for studying tightly-coupled brain regions over short timescales.” We hope that the editors’ concerns about the complexities of TMS-induced effects have been adequately addressed, and that our study’s design and results provide a clear and convincing causal argument for the role of IFG-pMTG in gesture-speech integration.

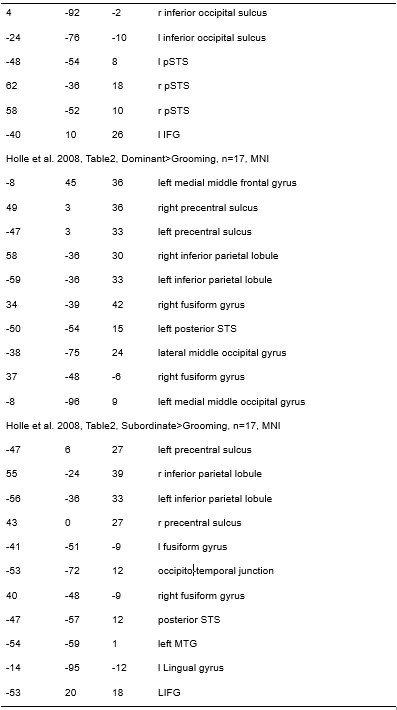

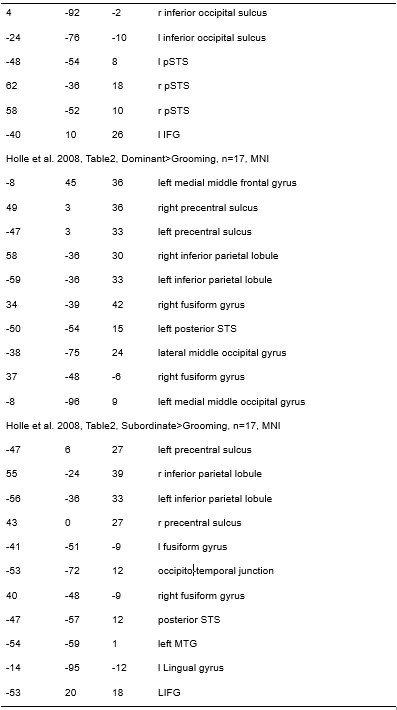

Author response table 1.

Double-pulse TMS studies on brain regions over 3–60 ms time intervals

Reference

Teige, C., Mollo, G., Millman, R., Savill, N., Smallwood, J., Cornelissen, P. L., & Jefferies, E. (2018). Dynamic semantic cognition: Characterising coherent and controlled conceptual retrieval through time using magnetoencephalography and chronometric transcranial magnetic stimulation. Cortex, 103, 329-349.

Amemiya, T., Beck, B., Walsh, V., Gomi, H., & Haggard, P. (2017). Visual area V5/hMT+ contributes to perception of tactile motion direction: a TMS study. Scientific reports, 7(1), 40937.

Muessgens, D., Thirugnanasambandam, N., Shitara, H., Popa, T., & Hallett, M. (2016). Dissociable roles of preSMA in motor sequence chunking and hand switching—a TMS study. Journal of Neurophysiology, 116(6), 2637-2646.

Vernet, M., Brem, A. K., Farzan, F., & Pascual-Leone, A. (2015). Synchronous and opposite roles of the parietal and prefrontal cortices in bistable perception: a double-coil TMS–EEG study. Cortex, 64, 78-88.

Pitcher, D. (2014). Facial expression recognition takes longer in the posterior superior temporal sulcus than in the occipital face area. Journal of Neuroscience, 34(27), 9173-9177.

Bardi, L., Kanai, R., Mapelli, D., & Walsh, V. (2012). TMS of the FEF interferes with spatial conflict. Journal of cognitive neuroscience, 24(6), 1305-1313.

D’Ausilio, A., Bufalari, I., Salmas, P., & Fadiga, L. (2012). The role of the motor system in discriminating normal and degraded speech sounds. Cortex, 48(7), 882-887.

Pitcher, D., Duchaine, B., Walsh, V., & Kanwisher, N. (2010). TMS evidence for feedforward and feedback mechanisms of face and body perception. Journal of Vision, 10(7), 671-671.

Gagnon, G., Blanchet, S., Grondin, S., & Schneider, C. (2010). Paired-pulse transcranial magnetic stimulation over the dorsolateral prefrontal cortex interferes with episodic encoding and retrieval for both verbal and non-verbal materials. Brain Research, 1344, 148-158.

Kalla, R., Muggleton, N. G., Juan, C. H., Cowey, A., & Walsh, V. (2008). The timing of the involvement of the frontal eye fields and posterior parietal cortex in visual search. Neuroreport, 19(10), 1067-1071.

Pitcher, D., Garrido, L., Walsh, V., & Duchaine, B. C. (2008). Transcranial magnetic stimulation disrupts the perception and embodiment of facial expressions. Journal of Neuroscience, 28(36), 8929-8933.

Bergmann, T. O., & Hartwigsen, G. (2021). Inferring causality from noninvasive brain stimulation in cognitive neuroscience. Journal of Cognitive Neuroscience, 33(2), 195-225. https://doi.org/10.1162/jocn_a_01591

Romero, M. C., Davare, M., Armendariz, M., et al. (2019). Neural effects of transcranial magnetic stimulation at the single-cell level. Nature Communications, 10, 2642. https://doi.org/10.1038/s41467-019-10638-7

Paulus, W., & Rothwell, J. C. (2016). Membrane resistance and shunting inhibition: where biophysics meets state-dependent human neurophysiology. The Journal of Physiology, 594(10), 2719-2728. https://doi.org/10.1113/JP271452. PMID: 26940751; PMCID: PMC4865581.

Staat, C., Gattinger, N., & Gleich, B. (2022). PLUSPULS: A transcranial magnetic stimulator with extended pulse protocols. HardwareX, 13. https://doi.org/10.1016/j.ohx.2022.e00380

Zhao, W., Li, Y., and Du, Y. (2021). TMS reveals dynamic interaction between inferior frontal gyrus and posterior middle temporal gyrus in gesture-speech semantic integration. The Journal of Neuroscience, 10356-10364. https://doi.org/10.1523/jneurosci.1355-21.2021.

Reviewer #1 (Public review):

Summary:

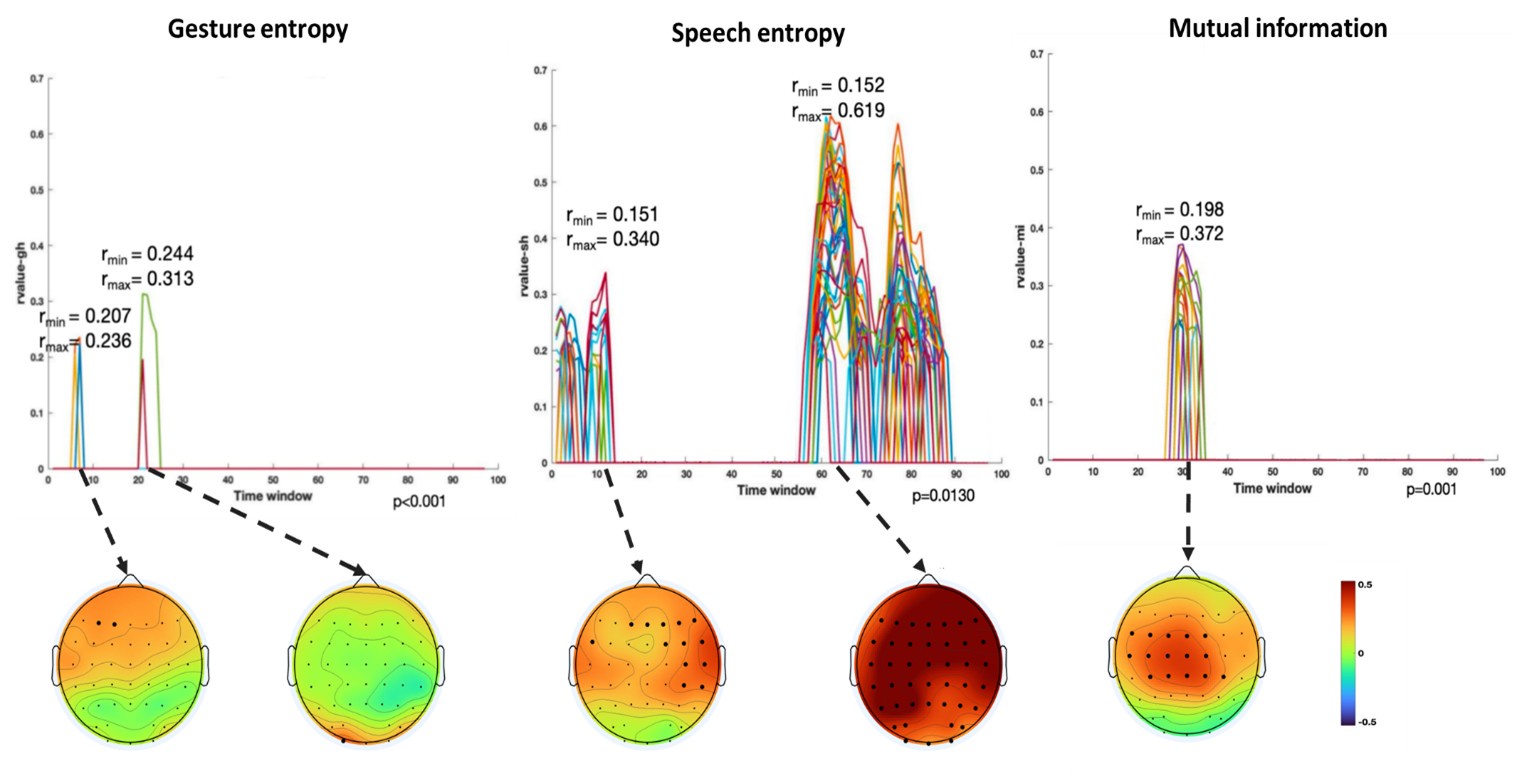

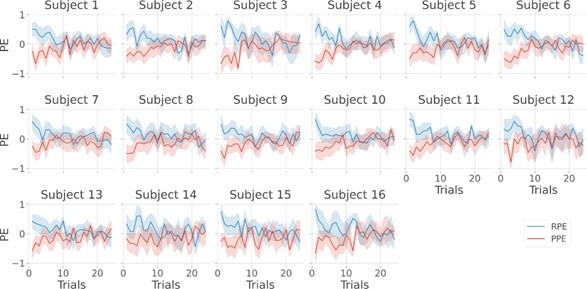

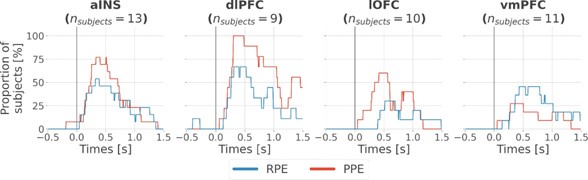

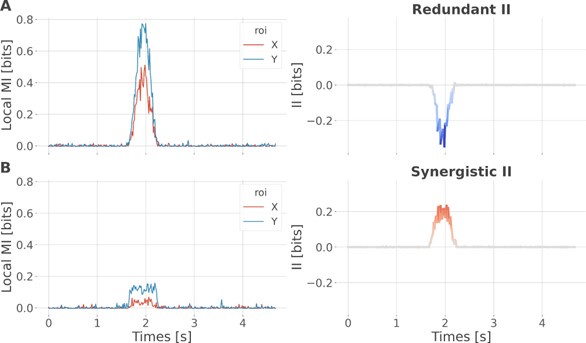

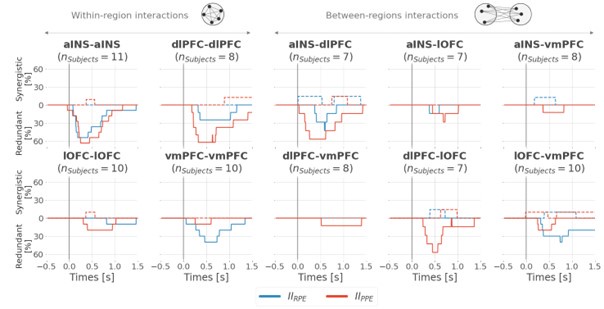

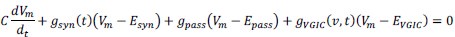

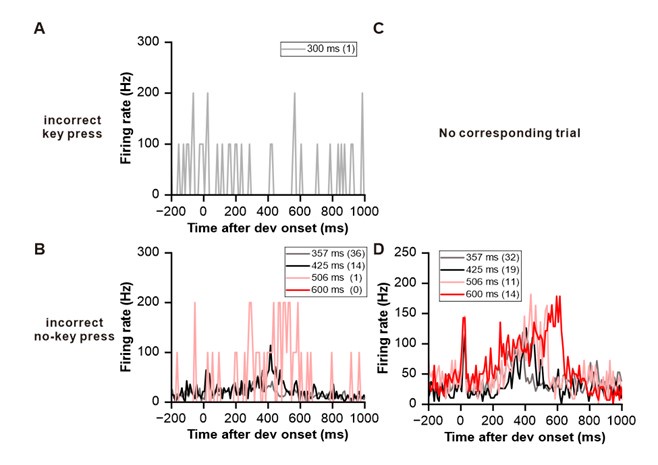

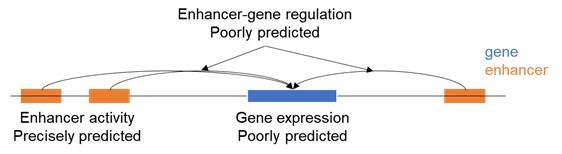

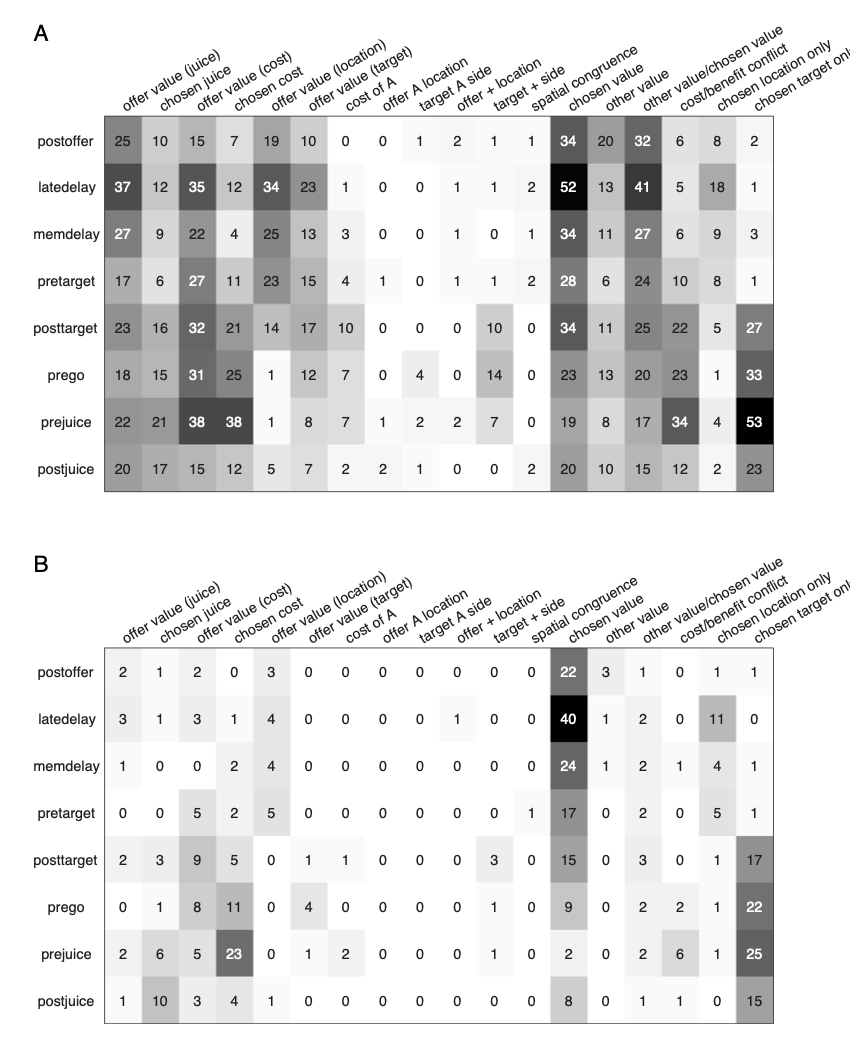

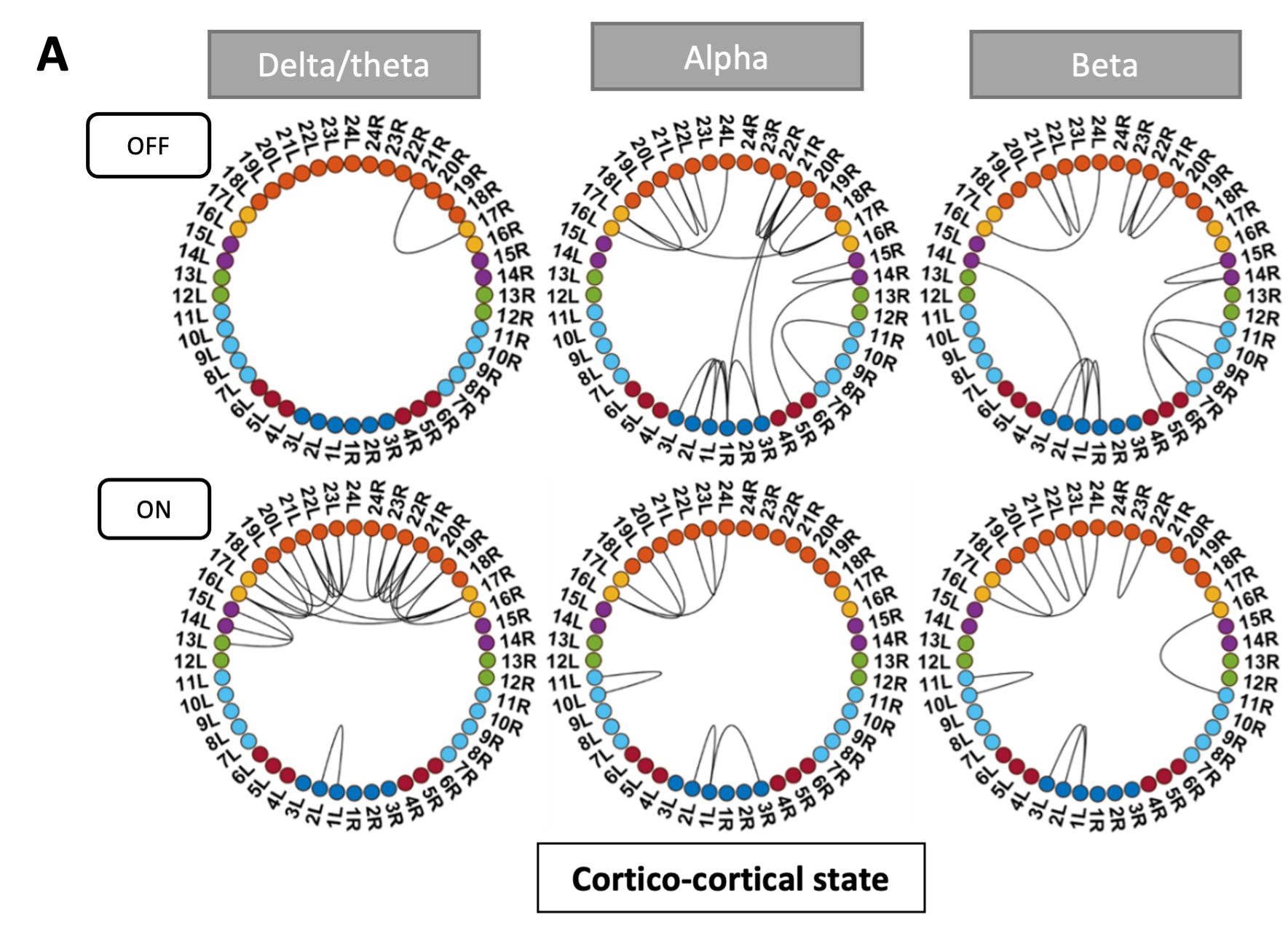

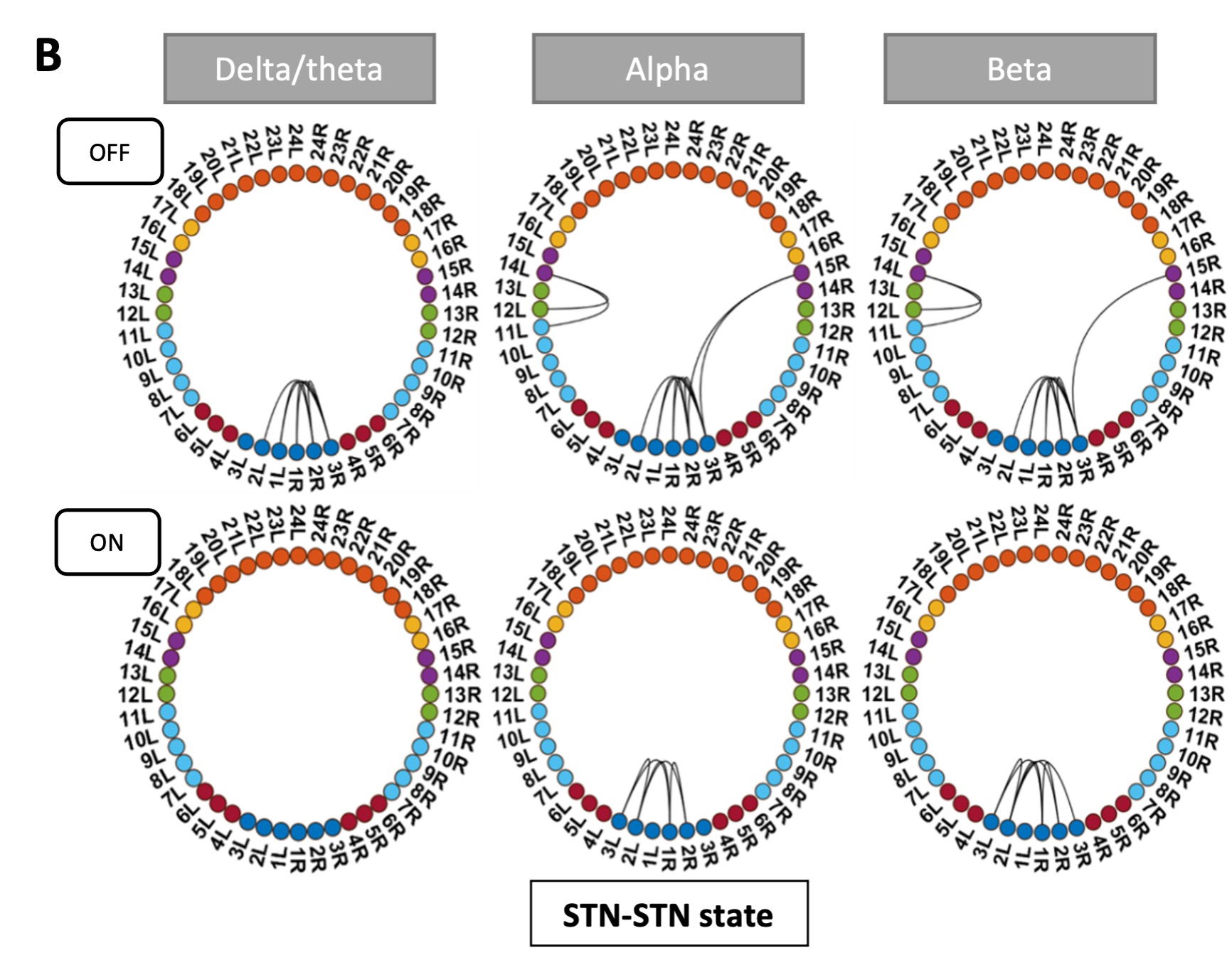

The authors quantified information in gesture and speech, and investigated the neural processing of speech and gestures in pMTG and LIFG, depending on their informational content, in 8 different time-windows, and using three different methods (EEG, HD-tDCS and TMS). They found that there is a time-sensitive and staged progression of neural engagement that is correlated with the informational content of the signal (speech/gesture).

Strengths:

A strength of the paper is that the authors attempted to combine three different methods to investigate speech-gesture processing.

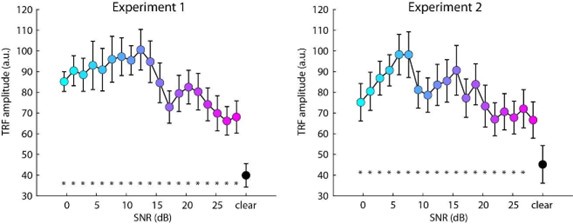

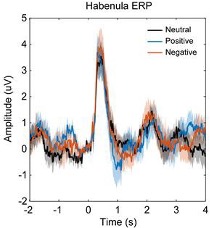

We sincerely thank the reviewer for recognizing our efforts in conducting three experiments to explore the neural activity linked to the amount of information processed during multisensory gesture-speech integration. In Experiment 1, we observed that the extent of inhibition in the pMTG and LIFG was closely linked to the overlapping gesture-speech responses, as quantified by mutual information. Building on the established roles of the pMTG and LIFG in our previous study (Zhao et al., 2021, JN), we then expanded our investigation to determine whether the dynamic neural engagement between the pMTG and LIFG during gesture-speech processing was also associated with the quality of the information. This hypothesis was further validated through high-temporal resolution EEG, where we examined ERP components related to varying information contents. Notably, we observed a close time alignment between the ERP components and the time windows of the TMS effects, which were associated with the same informational matrices in gesture-speech processing.

Weaknesses:

(1) One major issue is that there is a tight anatomical coupling between pMTG and LIFG. Stimulating one area could therefore also result in stimulation of the other area (see Silvanto and Pascual-Leone, 2008). I therefore think it is very difficult to tease apart the contribution of these areas to the speech-gesture integration process, especially considering that the authors stimulate these regions in time windows that are very close to each other in both time and space (and the disruption might last longer over time).

Response 1: We greatly appreciate the reviewer’s careful consideration. We trust that the explanation provided above has clarified this issue (see Response to Editors for details).

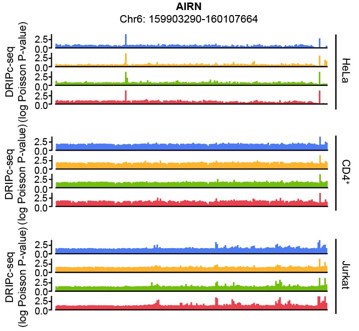

(2) Related to this point, it is unclear to me why the HD-TDCS/TMS is delivered in set time windows for each region. How did the authors determine this, and how do the results for TMS compare to their previous work from 2018 and 2023 (which describes a similar dataset+design)? How can they ensure they are only targeting their intended region since they are so anatomically close to each other?

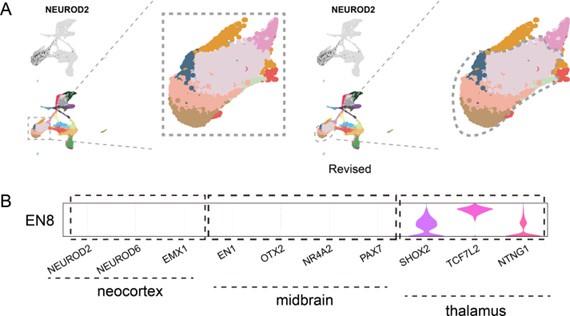

Response 2: The current study builds on a series of investigations that systematically examined the temporal and spatial dynamics of gesture-speech integration. In our earlier work (Zhao et al., 2018, J. Neurosci), we demonstrated that interrupting neural activity in the IFG or pMTG using TMS selectively disrupted the semantic congruency effect (reaction time costs due to semantic incongruence), without affecting the gender congruency effect (reaction time costs due to gender incongruence). These findings identified the IFG and pMTG as critical hubs for gesture-speech integration. This informed the brain regions selected for subsequent studies.

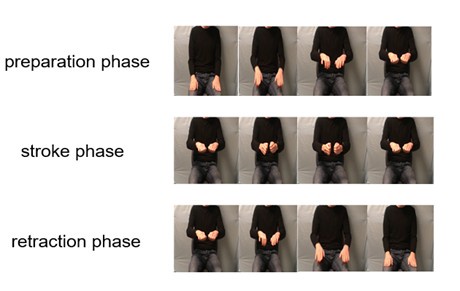

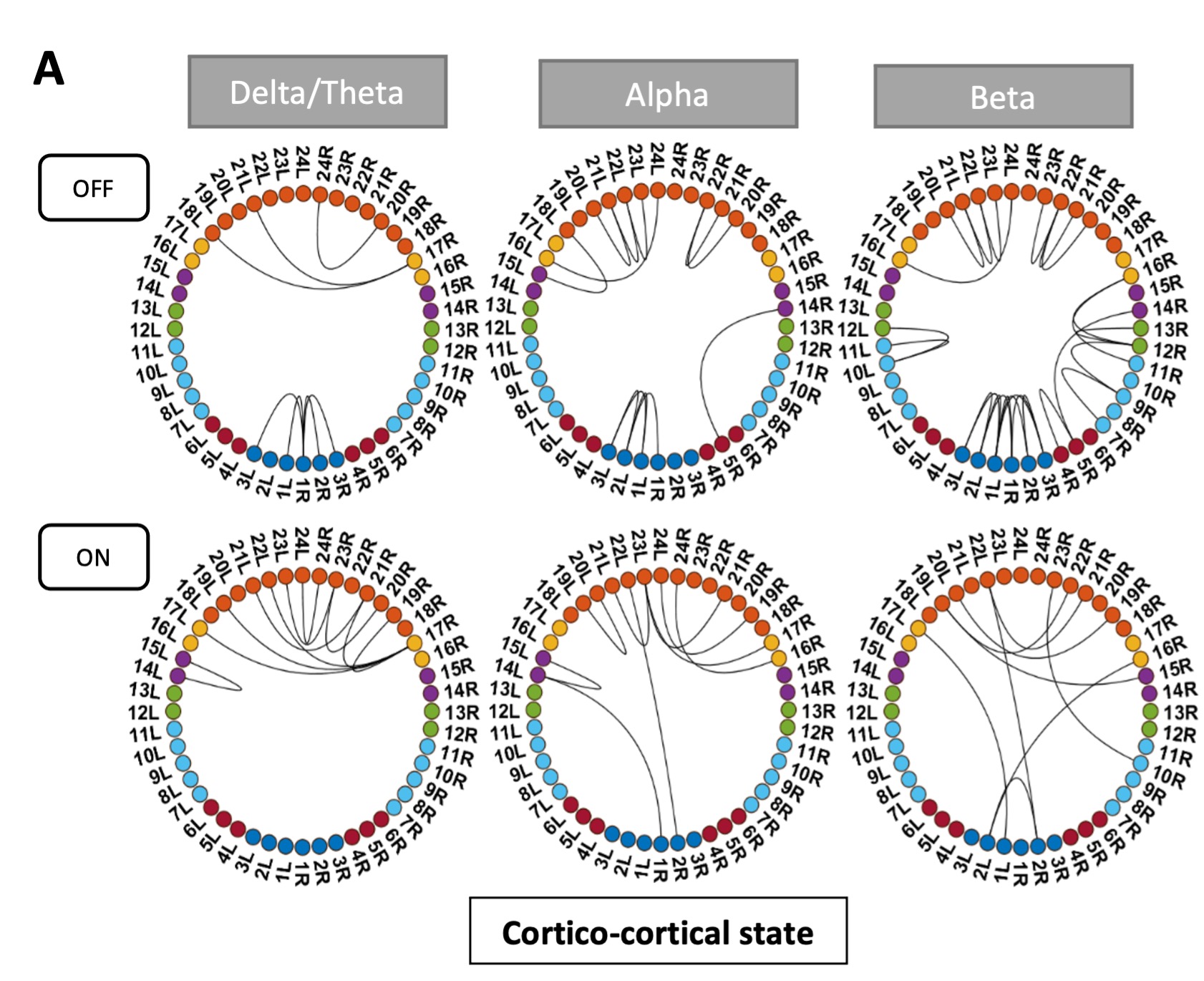

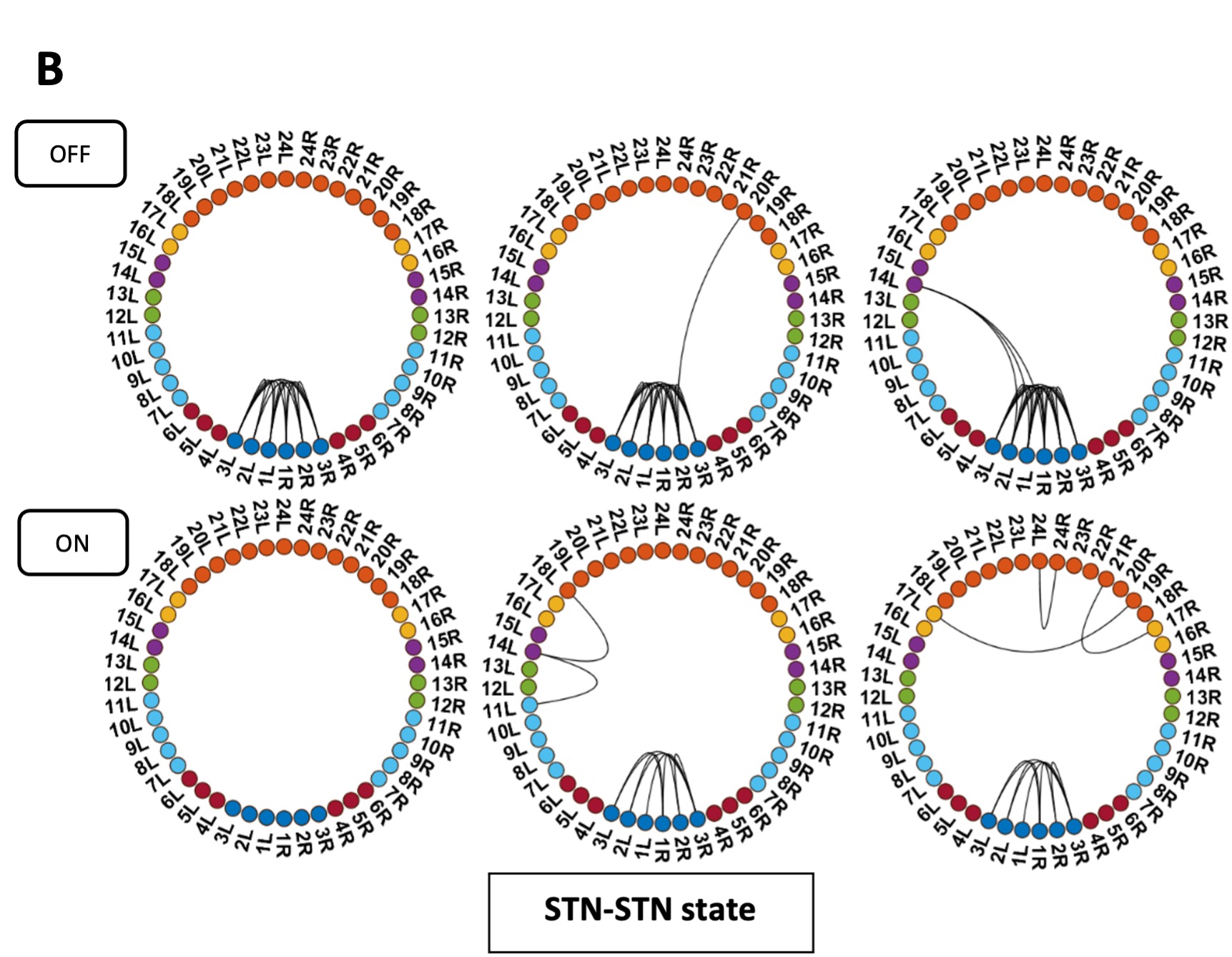

In Zhao et al. (2021, J. Neurosci), we employed a double-pulse TMS protocol, delivering stimulation within one of eight 40-ms time windows, to further examine the temporal involvement of the IFG and pMTG. The results revealed time-window-selective disruptions of the semantic congruency effect, confirming the dynamic and temporally staged roles of these regions during gesture-speech integration.

In Zhao et al. (2023, Frontiers in Psychology), we investigated the semantic predictive role of gestures relative to speech by comparing two experimental conditions: (1) gestures preceding speech by a fixed interval of 200 ms, and (2) gestures preceding speech at its semantic identification point. We observed time-window-selective disruptions of the semantic congruency effect in the IFG and pMTG only in the second condition, leading to the conclusion that gestures exert a semantic priming effect on co-occurring speech. These findings underscored the semantic advantage of gesture in facilitating speech integration, further refining our understanding of the temporal and functional interplay between these modalities.

The design of the current study—including the choice of brain regions and time windows—was directly informed by these prior findings. Experiment 1 (HD-tDCS) targeted the entire gesture-speech integration process in the IFG and pMTG to assess whether neural activity in these regions, previously identified as integration hubs, is modulated by changes in informativeness from both modalities (i.e., entropy) and their interactions (mutual information, MI). The results revealed a gradual inhibition of neural activity in both areas as MI increased, evidenced by a negative correlation between MI and the tDCS inhibition effect in both regions. Building on this, Experiments 2 and 3 employed double-pulse TMS and ERPs to further assess whether the engaged neural activity was both time-sensitive and staged. These experiments also evaluated the contributions of various sources of information, revealing correlations between information-theoretic metrics and time-locked brain activity, providing insights into the ‘gradual’ nature of gesture-speech integration.

We acknowledge that the rationale for the design of the current study was not fully articulated in the original manuscript. In the revised version, we have provided a more comprehensive and coherent explanation of the logic behind the three experiments, as well as their alignment with our previous findings, in Lines 75-102:

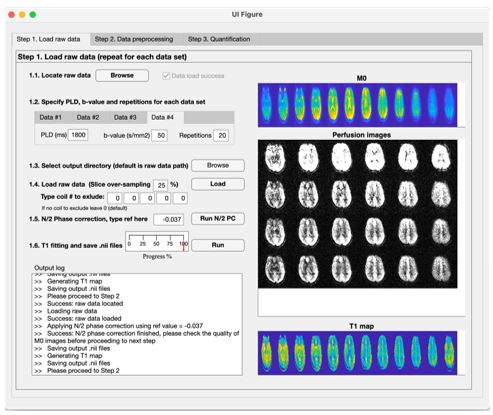

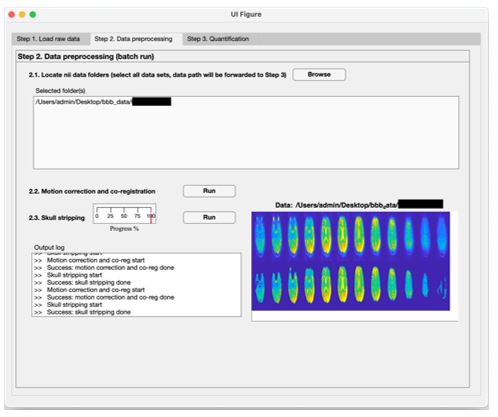

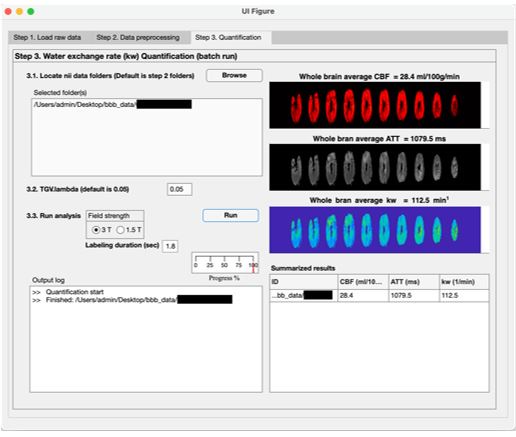

‘To investigate the neural mechanisms underlying gesture-speech integration, we conducted three experiments to assess how neural activity correlates with distributed multisensory integration, quantified using information-theoretic measures of MI. Additionally, we examined the contributions of unisensory signals in this process, quantified through unisensory entropy. Experiment 1 employed high-definition transcranial direct current stimulation (HD-tDCS) to administer Anodal, Cathodal and Sham stimulation to either the IFG or the pMTG. HD-tDCS induces membrane depolarization with anodal stimulation and membrane hyperpolarization with cathodal stimulation[26], thereby increasing or decreasing cortical excitability in the targeted brain area, respectively. This experiment aimed to determine whether the overall facilitation (Anodal-tDCS minus Sham-tDCS) and/or inhibition (Cathodal-tDCS minus Sham-tDCS) of these integration hubs is modulated by the degree of gesture-speech integration, as measured by MI.

Given the differential involvement of the IFG and pMTG in gesture-speech integration, shaped by top-down gesture predictions and bottom-up speech processing [23], Experiment 2 was designed to further assess whether the activity of these regions was associated with relevant informational matrices. Specifically, we applied inhibitory chronometric double-pulse transcranial magnetic stimulation (TMS) to specific temporal windows associated with integration processes in these regions[23], assessing whether the inhibitory effects of TMS were correlated with unisensory entropy or the multisensory convergence index (MI).

Experiment 3 complemented these investigations by focusing on the temporal dynamics of neural responses during semantic processing, leveraging event-related potentials (ERPs) with high temporal resolution. This experiment investigated how distinct information contributors modulated specific ERP components associated with semantic processing. These components included early sensory effects such as the P1 and N1–P2[27,28], the N400 semantic conflict effect[14,28,29], and the late positive component (LPC) reconstruction effect[30,31]. By integrating these ERP findings with results from Experiments 1 and 2, Experiment 3 aimed to provide a more comprehensive understanding of how gesture-speech integration is modulated by neural dynamics.’

Although the IFG and pMTG are anatomically close, the consistent differentiation of their respective roles, as evidenced by our experiments across various time windows (TWs) and supported by previous research (see Response to Editors for details), reinforces the validity of the stimulation effects observed in our study.

References

Zhao, W.Y., Riggs, K., Schindler, I., and Holle, H. (2018). Transcranial magnetic stimulation over left inferior frontal and posterior temporal cortex disrupts gesture-speech integration. Journal of Neuroscience 38, 1891-1900. 10.1523/Jneurosci.1748-17.2017.

Zhao, W., Li, Y., and Du, Y. (2021). TMS reveals dynamic interaction between inferior frontal gyrus and posterior middle temporal gyrus in gesture-speech semantic integration. The Journal of Neuroscience, 10356-10364. https://doi.org/10.1523/jneurosci.1355-21.2021.

Zhao, W. (2023). TMS reveals a two-stage priming circuit of gesture-speech integration. Front Psychol 14, 1156087. 10.3389/fpsyg.2023.1156087.

Bikson, M., Inoue, M., Akiyama, H., Deans, J.K., Fox, J.E., Miyakawa, H., and Jefferys, J.G.R. (2004). Effects of uniform extracellular DC electric fields on excitability in rat hippocampal slices. J Physiol-London 557, 175-190. 10.1113/jphysiol.2003.055772.

Federmeier, K.D., Mai, H., and Kutas, M. (2005). Both sides get the point: hemispheric sensitivities to sentential constraint. Memory & Cognition 33, 871-886. 10.3758/bf03193082.

Kelly, S.D., Kravitz, C., and Hopkins, M. (2004). Neural correlates of bimodal speech and gesture comprehension. Brain and Language 89, 253-260. 10.1016/s0093-934x(03)00335-3.

Wu, Y.C., and Coulson, S. (2005). Meaningful gestures: Electrophysiological indices of iconic gesture comprehension. Psychophysiology 42, 654-667. 10.1111/j.1469-8986.2005.00356.x.

Fritz, I., Kita, S., Littlemore, J., and Krott, A. (2021). Multimodal language processing: How preceding discourse constrains gesture interpretation and affects gesture integration when gestures do not synchronise with semantic affiliates. J Mem Lang 117, 104191. 10.1016/j.jml.2020.104191.

Gunter, T.C., and Weinbrenner, J.E.D. (2017). When to take a gesture seriously: On how we use and prioritize communicative cues. J Cognitive Neurosci 29, 1355-1367. 10.1162/jocn_a_01125.

Ozyurek, A., Willems, R.M., Kita, S., and Hagoort, P. (2007). On-line integration of semantic information from speech and gesture: Insights from event-related brain potentials. J Cognitive Neurosci 19, 605-616. 10.1162/jocn.2007.19.4.605.

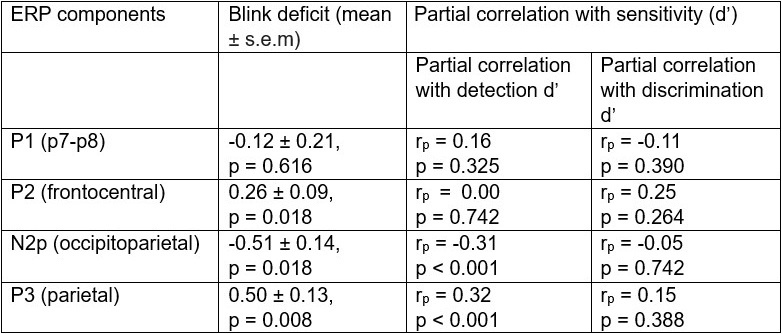

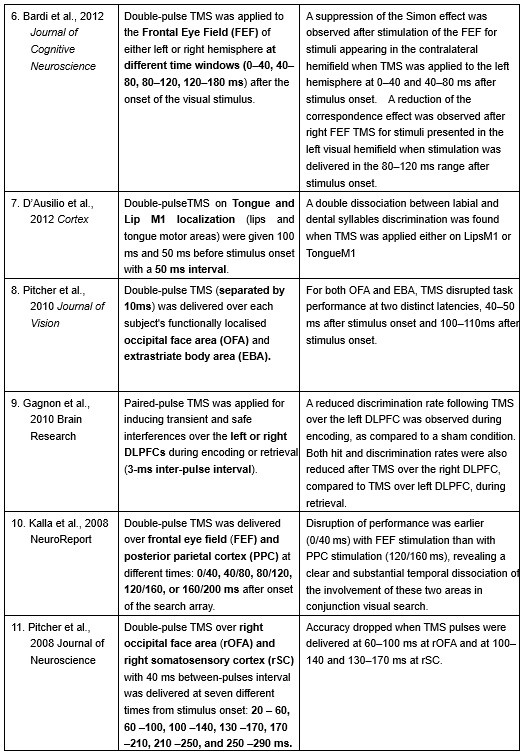

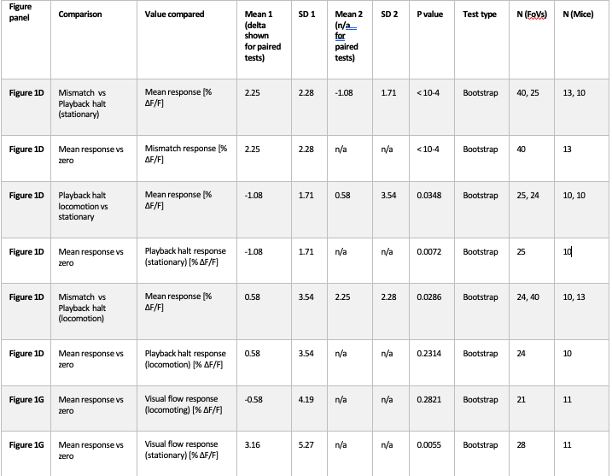

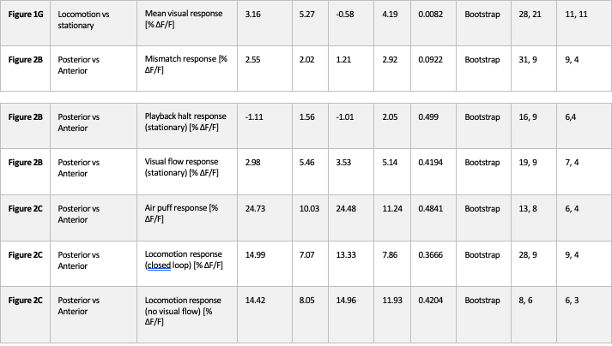

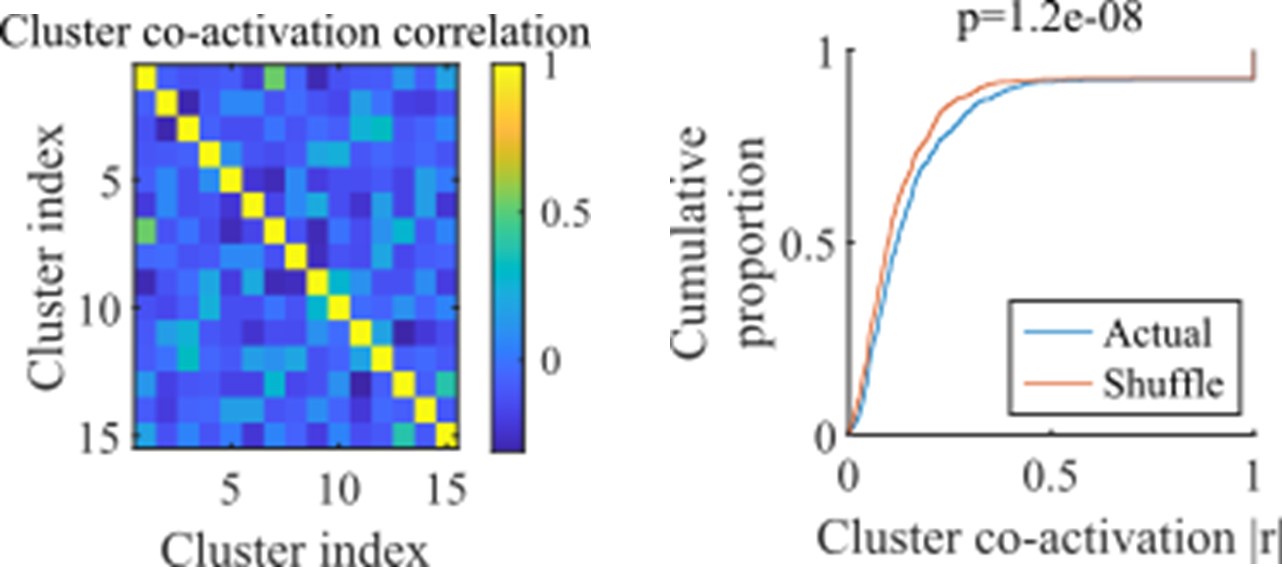

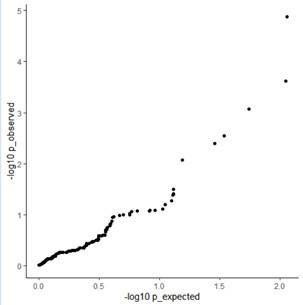

(3) As the EEG signal is often not normally distributed, I was wondering whether the authors checked the assumptions for their Pearson correlations. The authors could perhaps better choose to model the different variables to see whether MI/entropy could predict the neural responses. How did they correct the many correlational analyses that they have performed?

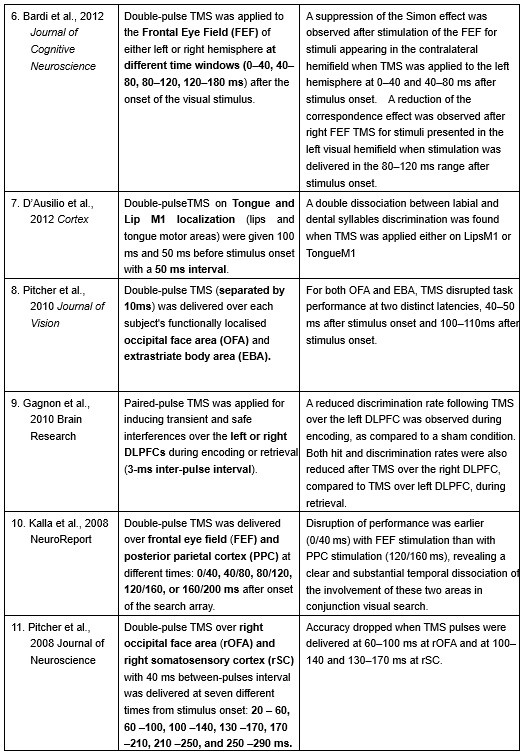

Response 3: We greatly appreciate the reviewer’s thoughtful comments.

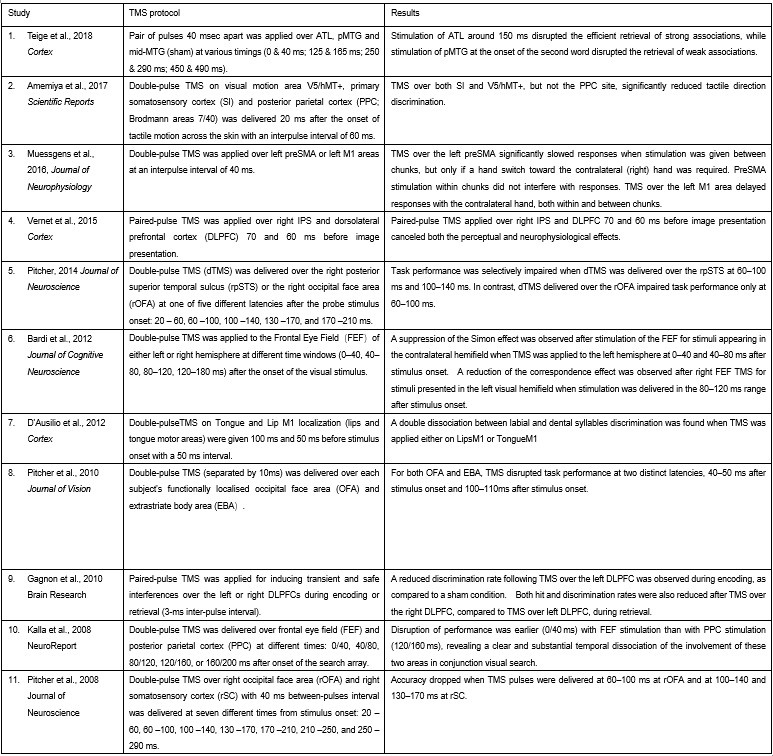

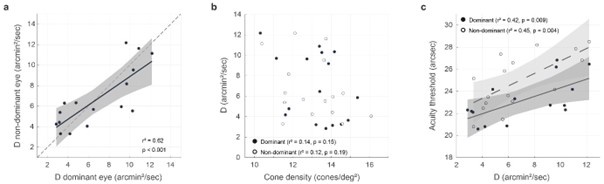

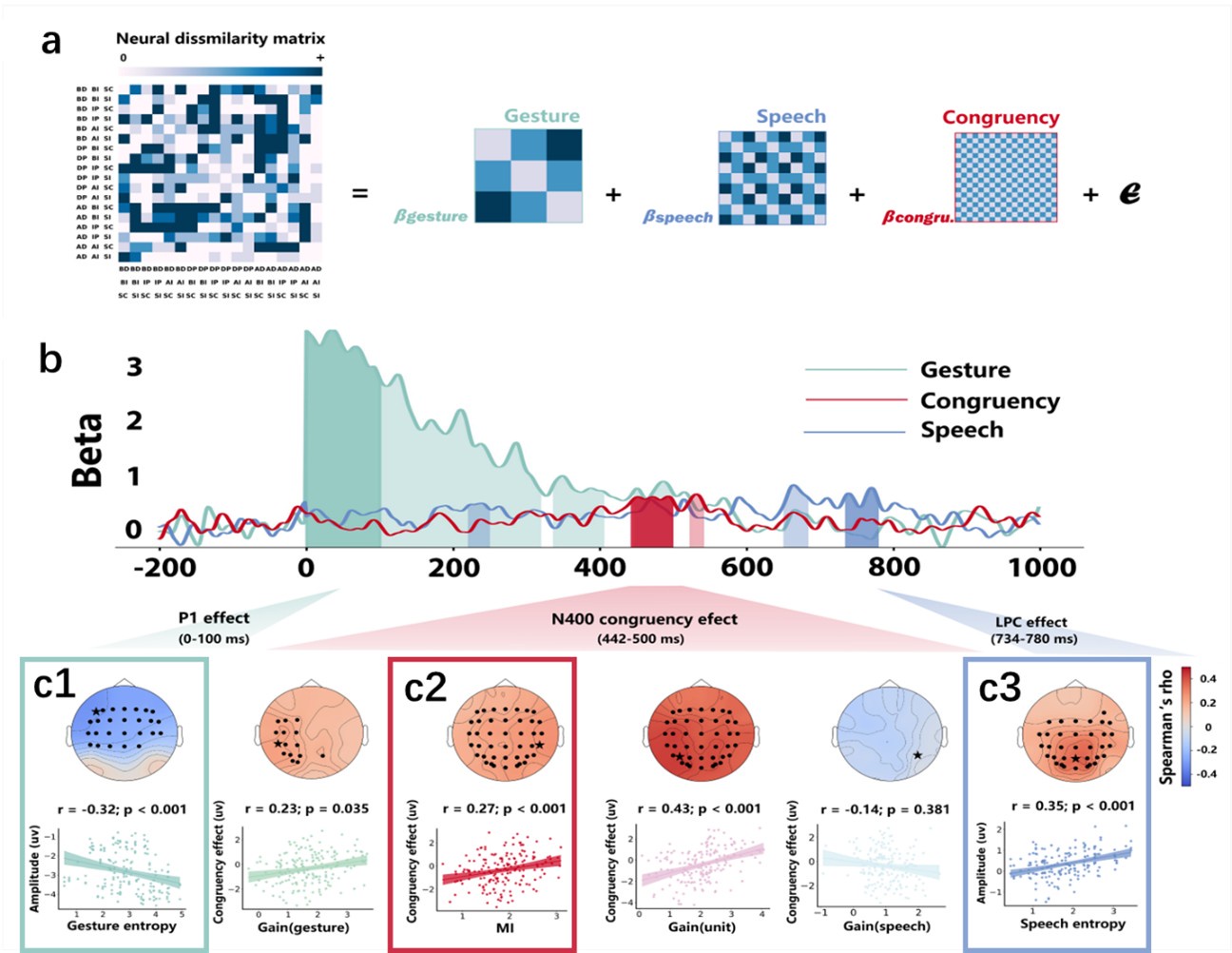

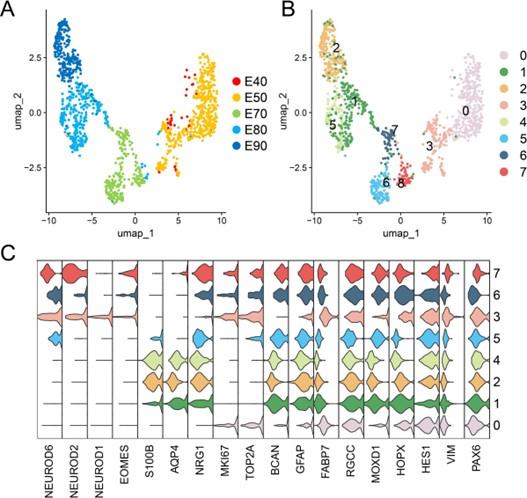

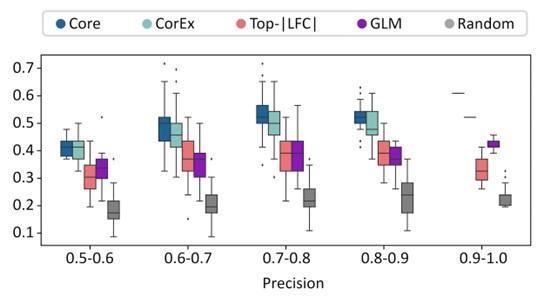

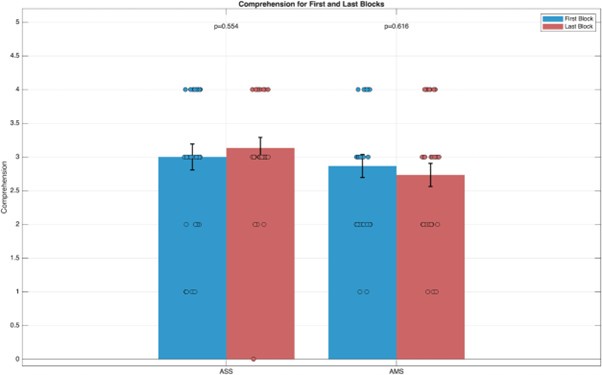

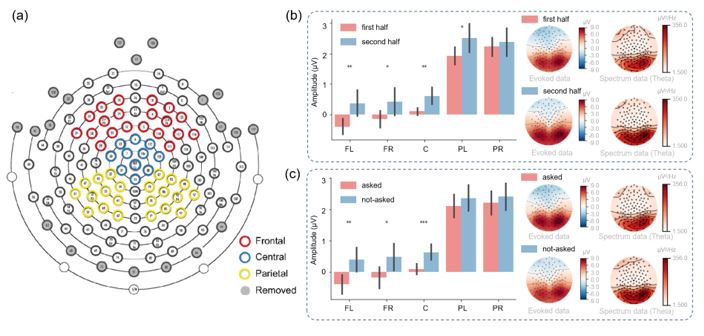

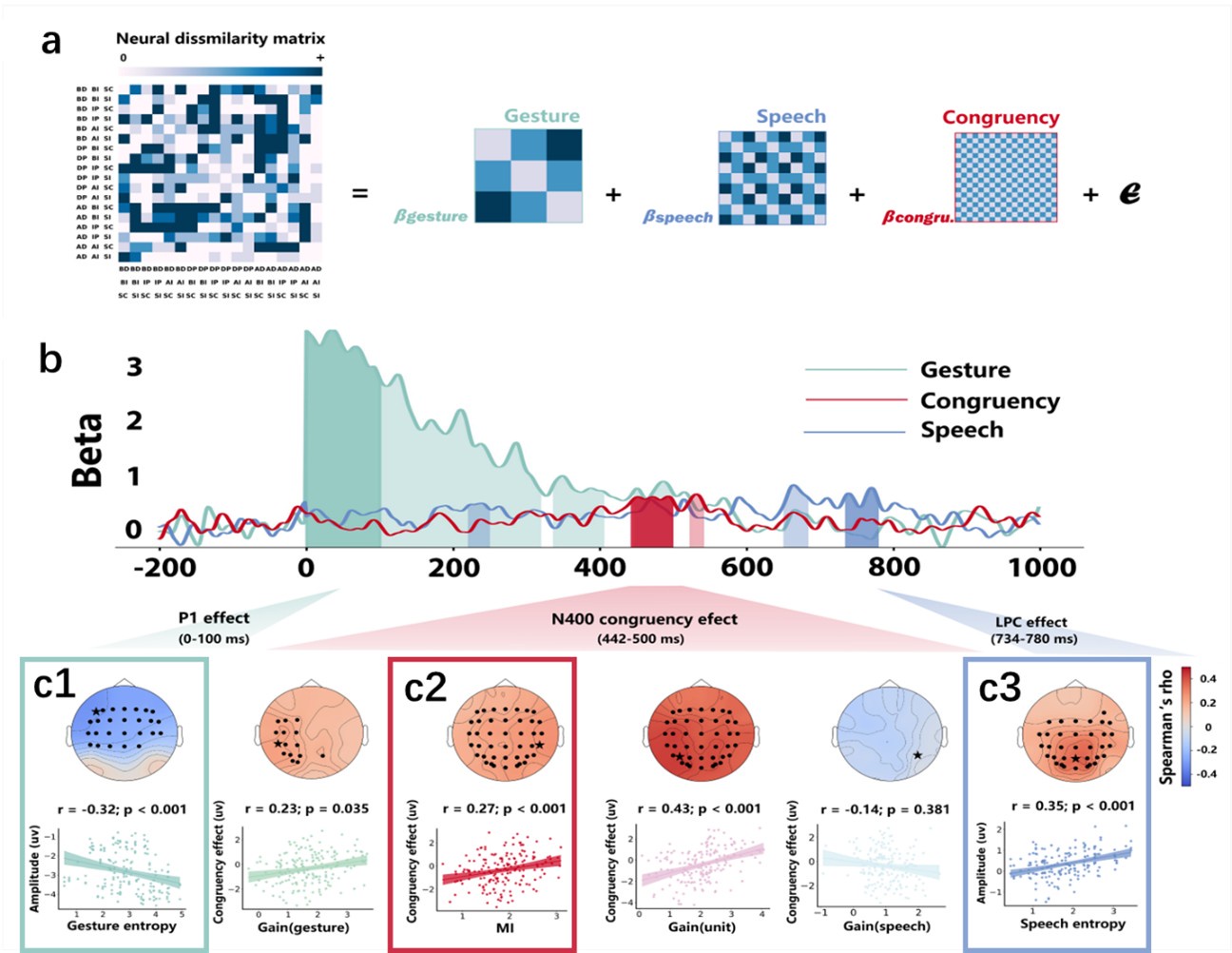

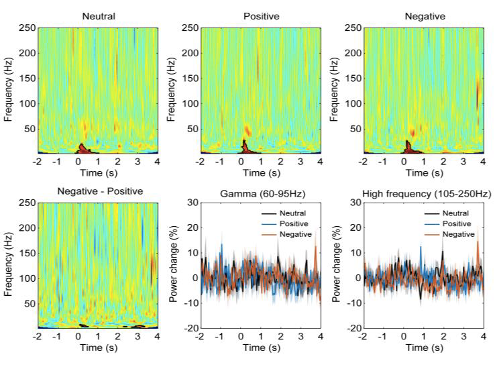

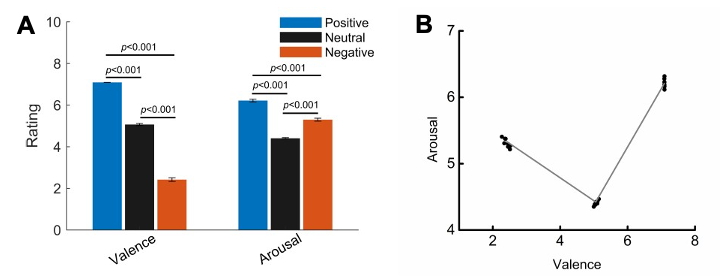

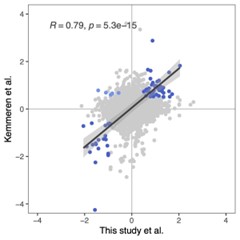

(1) Regarding the normality of the EEG signals and the use of Pearson correlation: in Figure 5 of the manuscript, we have already included normal distribution curves to illustrate the relationships between the average ERP amplitudes across each ROI or elicited cluster and the three information models.

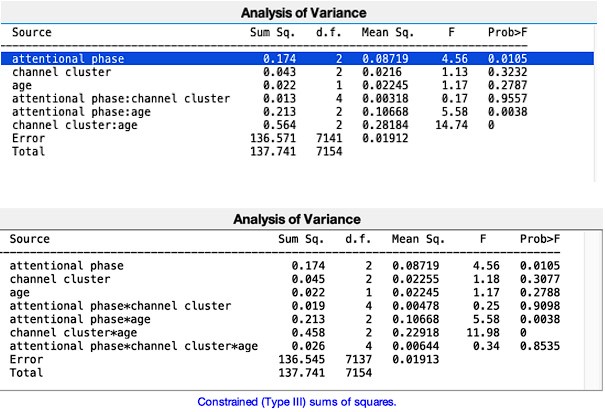

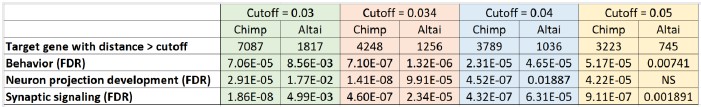

Additionally, we performed the Shapiro-Wilk test, a widely accepted method for assessing normality, on both the MI/entropy and averaged ERP data. The p-values for all three combinations were greater than 0.05, so the hypothesis of normality was not rejected for any of the bivariate combinations (Author response table 2).

Author response table 2.

Shapiro-Wilk results of the bivariate normality test

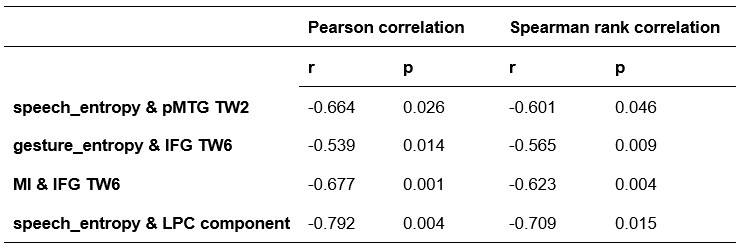

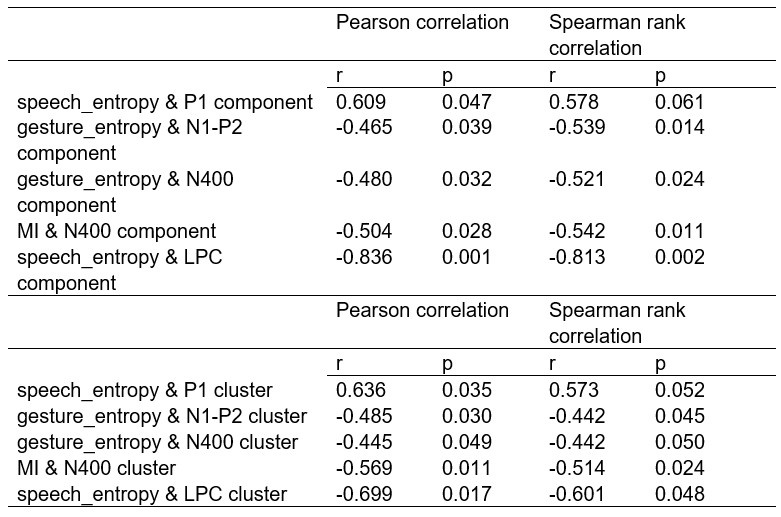

To further consolidate the relationship between entropy/MI and the various ERP components, we also conducted a Spearman rank correlation analysis (Author response tables 3-5). While the correlation between speech entropy and ERP amplitude in the P1 component yielded a p-value of 0.061, all other results were consistent with those obtained from the Pearson correlation analysis across the three experiments. Therefore, our conclusion that progressive neural responses reflected the degree of information remains robust. Because the Spearman rank and Pearson correlation analyses yielded similar results, we opted to report the Pearson correlation coefficients throughout the manuscript to maintain consistency.
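The checks described above (a normality test on each variable, followed by Pearson and Spearman correlations) can be sketched as follows. This is a minimal illustration on simulated data, not the analysis script used for the manuscript; the variable names, the item count, and the simulated effect size are assumptions made only for this example.

```python
import numpy as np
from scipy import stats

# Hypothetical data: one information value (e.g., MI) and one mean ERP
# amplitude per stimulus item. Values are simulated for illustration only.
rng = np.random.default_rng(0)
n_items = 20
info_metric = rng.normal(loc=1.0, scale=0.3, size=n_items)
erp_amplitude = -0.8 * info_metric + rng.normal(scale=0.2, size=n_items)


def check_and_correlate(x, y, alpha=0.05):
    """Run Shapiro-Wilk on each variable, then Pearson and Spearman correlations."""
    _, p_x = stats.shapiro(x)
    _, p_y = stats.shapiro(y)
    r, p_r = stats.pearsonr(x, y)
    rho, p_rho = stats.spearmanr(x, y)
    return {
        # True when normality is not rejected for either variable
        "normality_not_rejected": bool(p_x > alpha and p_y > alpha),
        "pearson": (float(r), float(p_r)),
        "spearman": (float(rho), float(p_rho)),
    }


result = check_and_correlate(info_metric, erp_amplitude)
print(result)
```

Reporting both coefficients side by side, as in Author response tables 3-5, makes it easy to see whether a conclusion depends on the normality assumption.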

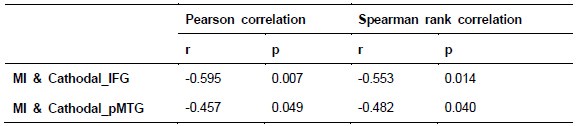

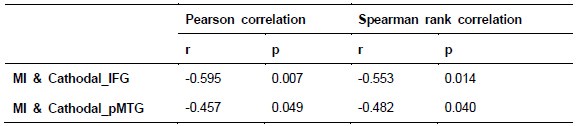

Author response table 3.

Comparison of Pearson and Spearman results in Experiment 1

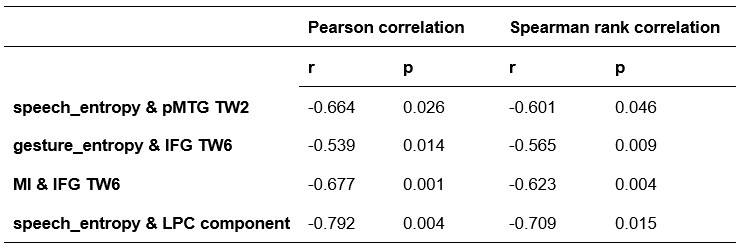

Author response table 4.

Comparison of Pearson and Spearman results in Experiment 2

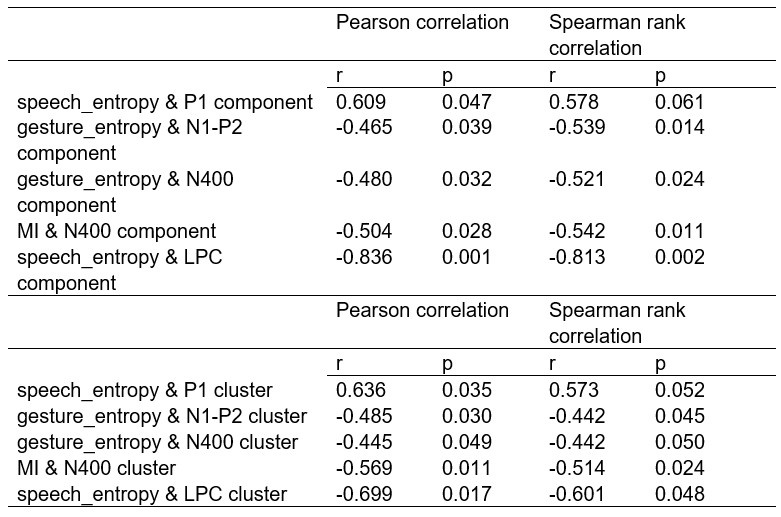

Author response table 5.

Comparison of Pearson and Spearman results in Experiment 3

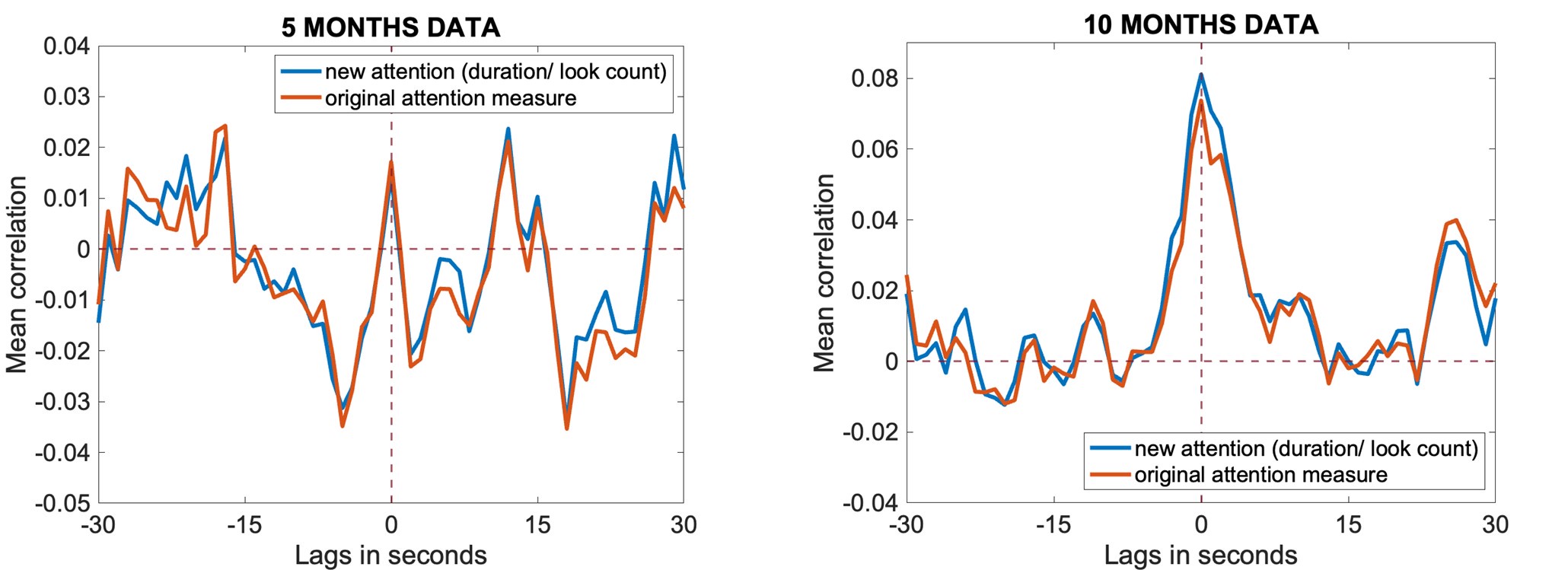

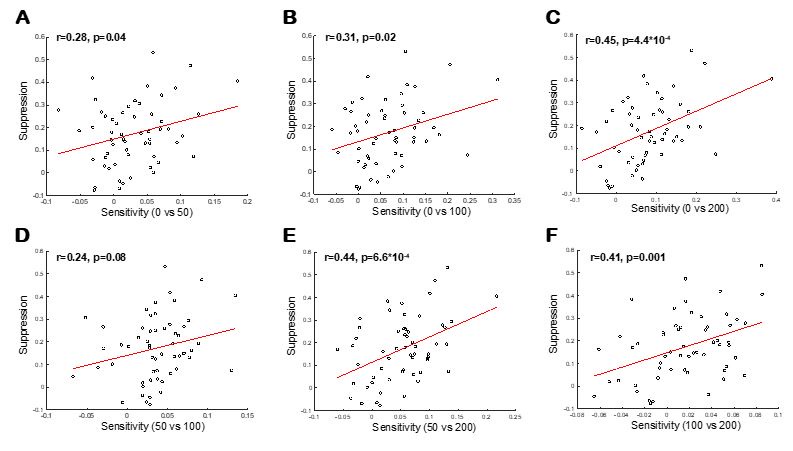

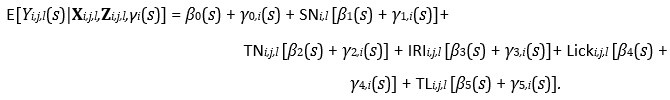

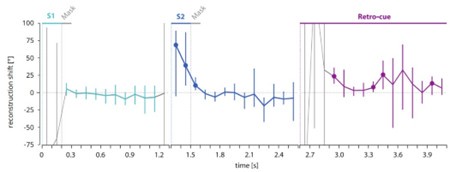

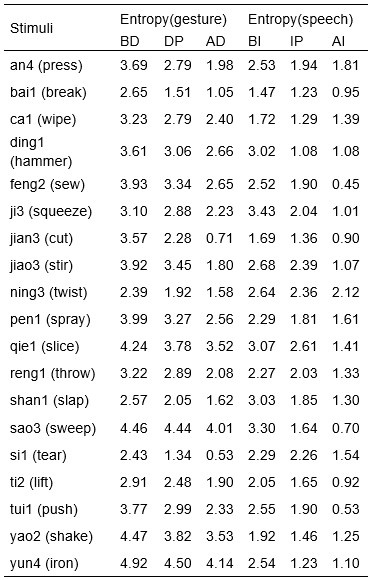

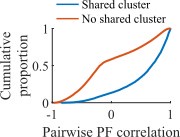

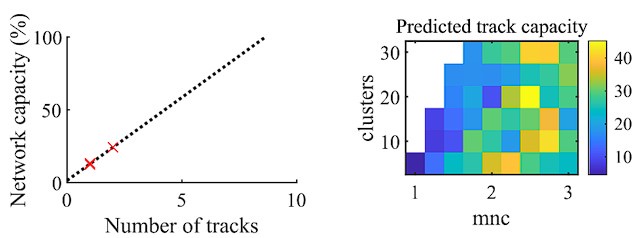

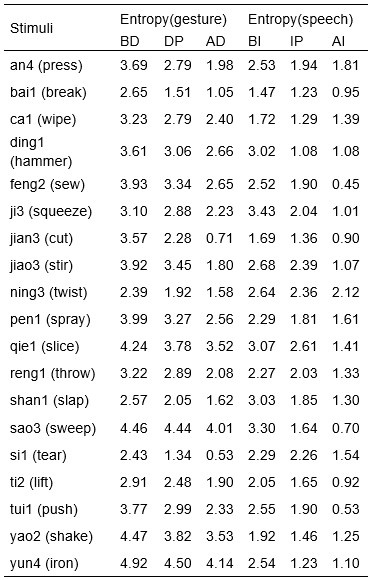

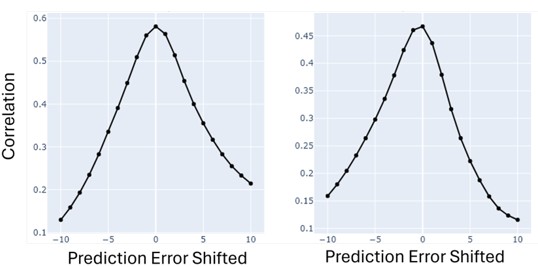

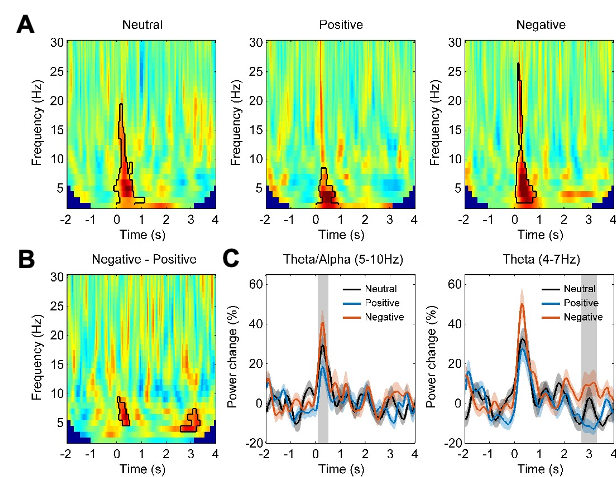

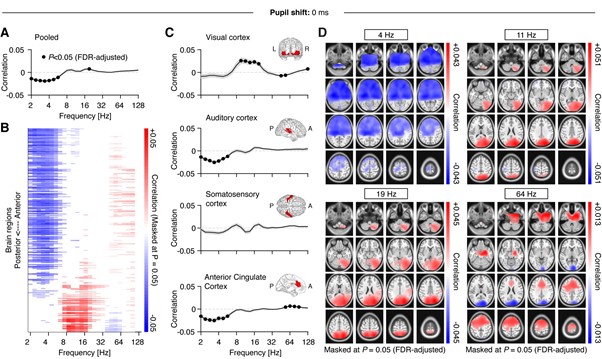

(2) Regarding the reviewer’s comment ‘choose to model the different variables to see whether MI/entropy could predict the neural responses’, we employed Representational Similarity Analysis (RSA) (Popal et al., 2019) with MI and entropy as continuous variables. This analysis aimed to build a model to predict neural responses based on these feature metrics.

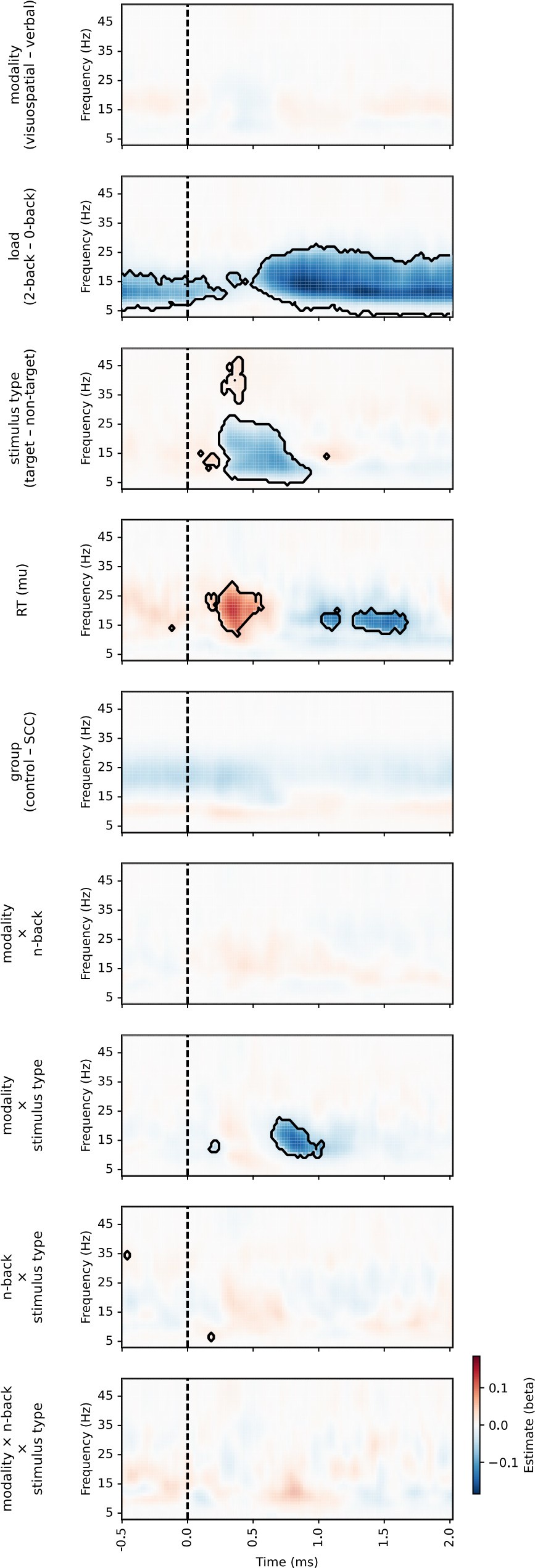

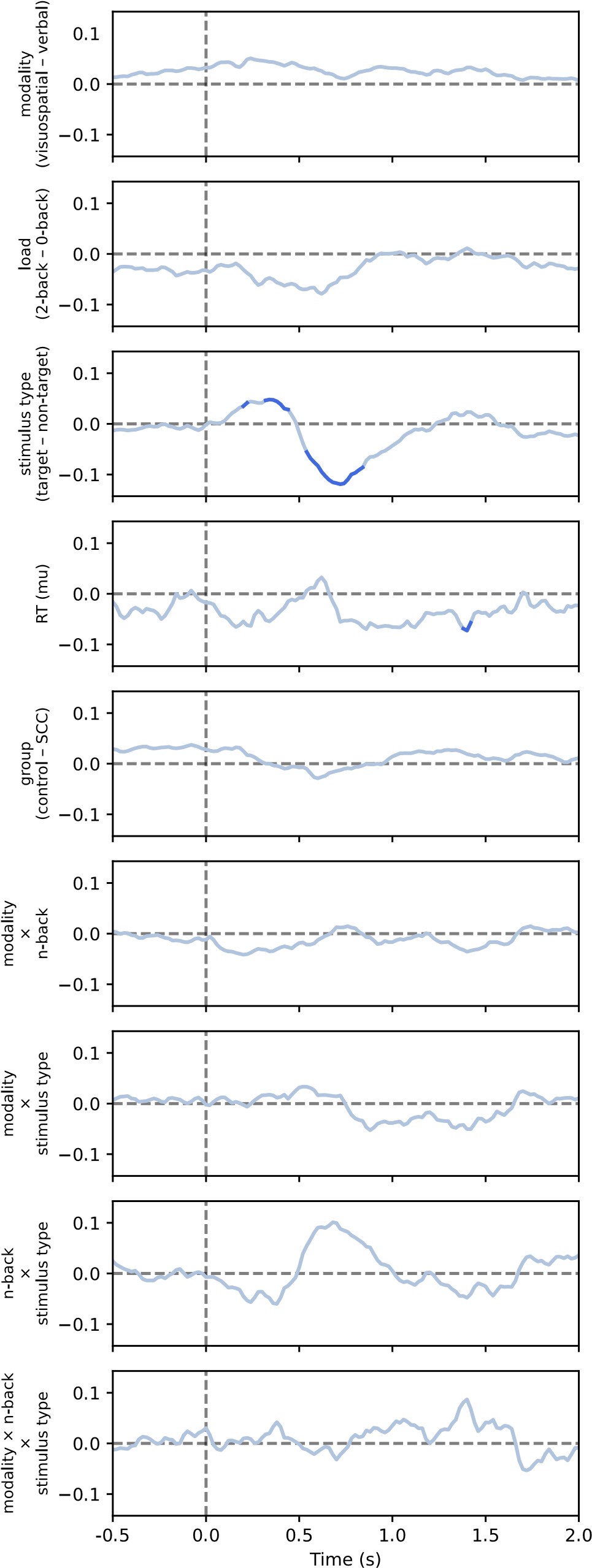

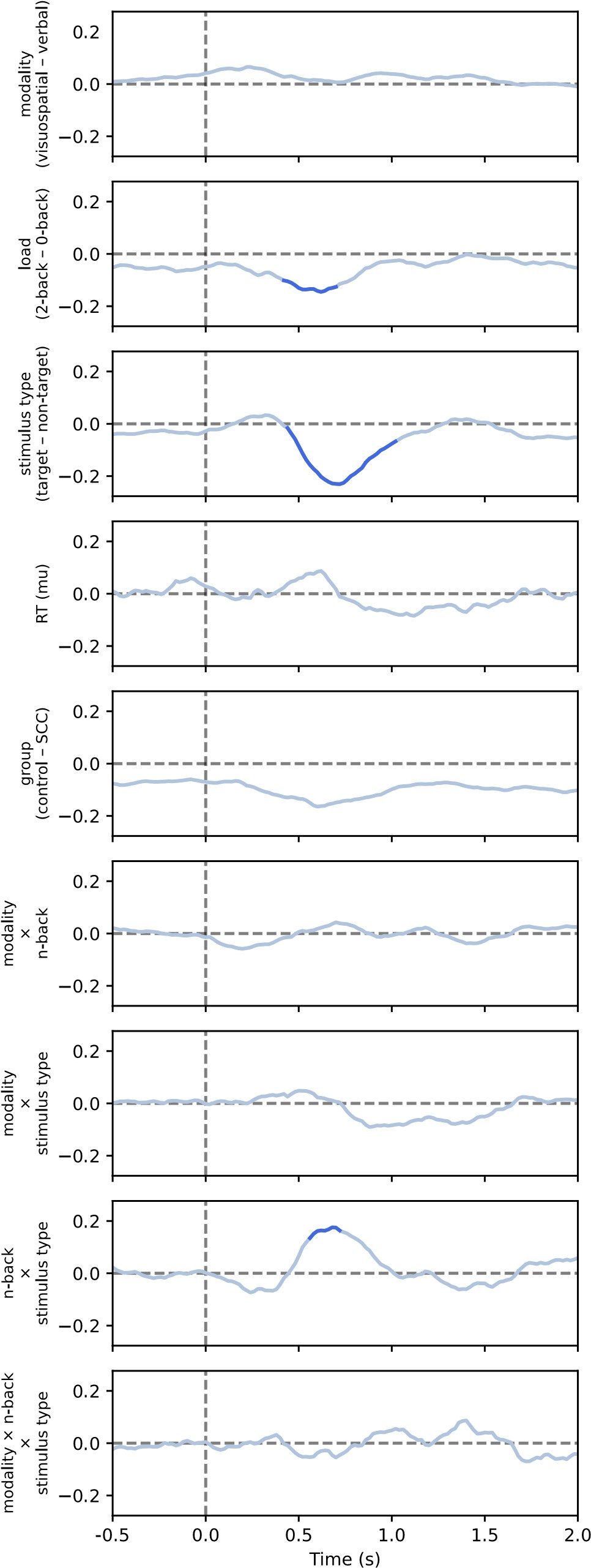

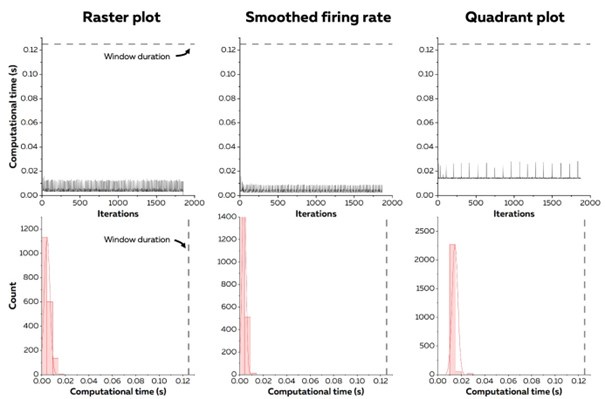

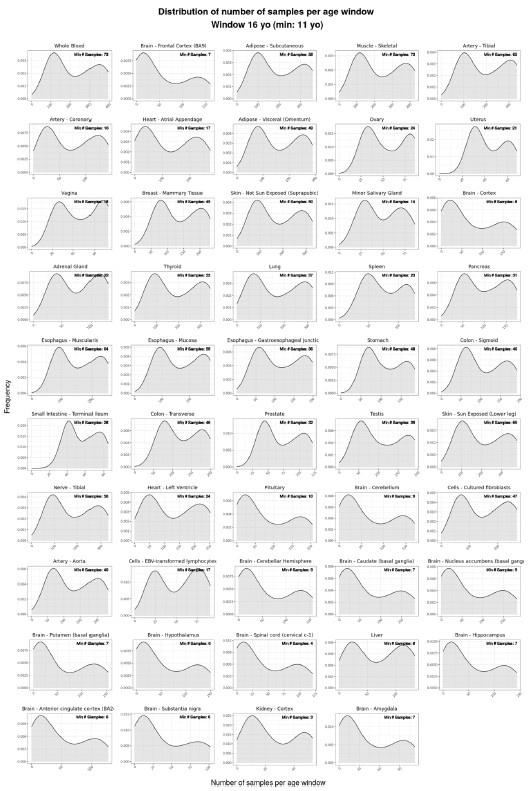

To capture dynamic temporal features indicative of different stages of multisensory integration, we segmented the EEG data into overlapping time windows (40 ms in duration with a 10 ms step size). The 40 ms window was chosen based on the TMS protocol used in Experiment 2, which also employed a 40 ms time window. The 10 ms step size (equivalent to 5 time points) was used to detect subtle shifts in neural responses that might not be captured by larger time windows, allowing for a more granular analysis of the temporal dynamics of neural activity.
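The windowing arithmetic above can be made concrete with a short sketch. It assumes a 500 Hz sampling rate (implied by a 10 ms step being 5 time points) and a 1000 ms epoch (an assumption chosen because it yields 97 windows); the function name and the random data are hypothetical.

```python
import numpy as np

SAMPLING_RATE_HZ = 500  # assumed: a 10 ms step equal to 5 samples implies 2 ms per sample
WINDOW_MS, STEP_MS = 40, 10


def sliding_windows(epoch, sfreq=SAMPLING_RATE_HZ, window_ms=WINDOW_MS, step_ms=STEP_MS):
    """Cut a (channels x samples) epoch into overlapping windows.

    Returns an array of shape (channels, samples_per_window, n_windows).
    """
    win = int(round(window_ms * sfreq / 1000))   # 40 ms -> 20 samples
    step = int(round(step_ms * sfreq / 1000))    # 10 ms -> 5 samples
    n_samples = epoch.shape[1]
    starts = np.arange(0, n_samples - win + 1, step)
    return np.stack([epoch[:, s:s + win] for s in starts], axis=-1)


# Hypothetical epoch: 42 channels, 500 samples (1000 ms at 500 Hz)
epoch = np.random.default_rng(0).normal(size=(42, 500))
windows = sliding_windows(epoch)
print(windows.shape)  # (42, 20, 97)
```

With these assumptions, window starts run from sample 0 to sample 480 in steps of 5, which gives the 97 windows of 20 time points each.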

Following segmentation, the EEG data were reshaped into a four-dimensional matrix (42 channels × 20 time points × 97 time windows × 20 features). To construct a neural similarity matrix, we averaged the EEG data across time points within each channel and each time window. The resulting matrix was then processed using the pdist function to compute pairwise distances between all pairs of data points. This allowed us to calculate correlations between the neural matrix and three feature similarity matrices, which were constructed in a similar manner. These three matrices corresponded to (1) gesture entropy, (2) speech entropy, and (3) mutual information (MI). This approach enabled us to quantify how well the neural responses corresponded to the semantic dimensions of gesture and speech stimuli at each time window.
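A minimal sketch of this RSA step is given below. The distance metric (Euclidean) and the use of a Spearman correlation between dissimilarity vectors are assumptions, as the response does not specify them; SciPy's `pdist` stands in for the pdist function mentioned above, and the array sizes are reduced for speed.

```python
import numpy as np
from scipy.spatial.distance import pdist
from scipy.stats import spearmanr


def rsa_timecourse(eeg, feature):
    """Correlate neural and feature dissimilarities per channel and window.

    eeg:     array of shape (channels, time_points, windows, items)
    feature: array of shape (items,), e.g. MI or entropy per item
    Returns an array of shape (channels, windows).
    """
    mean_amp = eeg.mean(axis=1)  # average over time points -> (channels, windows, items)
    feature_rdm = pdist(feature[:, None], metric="euclidean")  # all item pairs
    n_ch, n_win, _ = mean_amp.shape
    out = np.empty((n_ch, n_win))
    for c in range(n_ch):
        for w in range(n_win):
            neural_rdm = pdist(mean_amp[c, w][:, None], metric="euclidean")
            rho, _ = spearmanr(neural_rdm, feature_rdm)
            out[c, w] = rho
    return out


# Hypothetical data: 4 channels, 20 time points, 6 windows, 20 items
rng = np.random.default_rng(0)
feature = rng.normal(size=20)
eeg = rng.normal(size=(4, 20, 6, 20))
eeg[0, :, 0, :] += 5 * feature[None, :]  # plant a feature-related signal in one cell
rsa_map = rsa_timecourse(eeg, feature)
print(rsa_map.shape, round(float(rsa_map[0, 0]), 3))
```

In this toy case only the cell with the planted signal shows a high correlation, which is the kind of channel-by-window map that the subsequent permutation and clustering steps operate on.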

To determine the significance of the correlations between neural activity and feature matrices, we conducted a permutation test with 1,000 iterations. In this procedure, we randomized the data or feature matrices and recalculated the correlations repeatedly, generating a null distribution against which the observed correlation values were compared. A correlation was considered statistically significant if the observed value exceeded the null distribution threshold (p < 0.05). This permutation approach helps mitigate the risk of spurious correlations, supporting the robustness of the relationships between the neural data and feature matrices.
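The permutation logic can be sketched as follows for a single channel and time window. This is an illustrative version under stated assumptions: item labels of the feature vector are shuffled, the test is one-sided, and the p-value uses the common (count + 1) / (n + 1) convention; none of these details are specified in the response, and all names are hypothetical.

```python
import numpy as np
from scipy.spatial.distance import pdist
from scipy.stats import spearmanr


def permutation_p_value(neural_values, feature_values, n_perm=1000, seed=0):
    """One-sided permutation p-value for the neural/feature RDM correlation."""
    rng = np.random.default_rng(seed)
    neural_rdm = pdist(neural_values[:, None])
    observed, _ = spearmanr(neural_rdm, pdist(feature_values[:, None]))
    null = np.empty(n_perm)
    for i in range(n_perm):
        # Shuffle item labels, then rebuild the feature dissimilarity vector
        shuffled = rng.permutation(feature_values)
        null[i], _ = spearmanr(neural_rdm, pdist(shuffled[:, None]))
    # Proportion of null correlations at least as large as the observed one
    p_value = (np.sum(null >= observed) + 1) / (n_perm + 1)
    return float(observed), float(p_value)


# Hypothetical data: 20 items, neural amplitude related to the feature
rng = np.random.default_rng(1)
feature_values = rng.normal(size=20)
neural_values = feature_values + rng.normal(scale=0.1, size=20)
observed_r, p_value = permutation_p_value(neural_values, feature_values)
print(round(observed_r, 3), p_value)
```

Shuffling item labels rather than individual dissimilarities keeps the internal structure of each dissimilarity vector intact, which is the usual choice for this kind of test.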

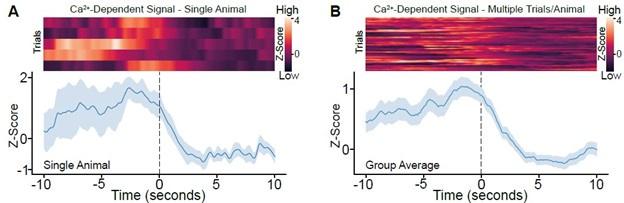

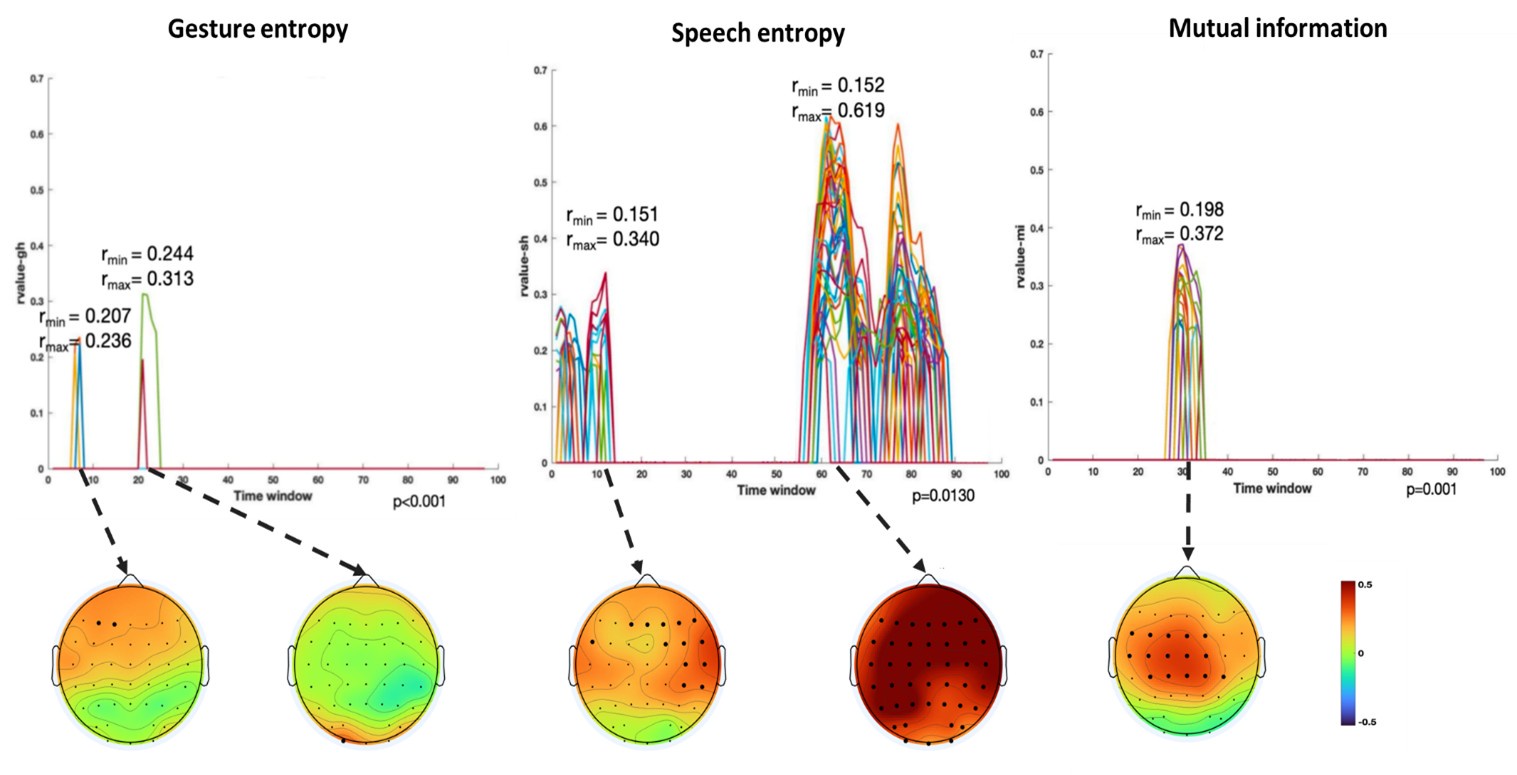

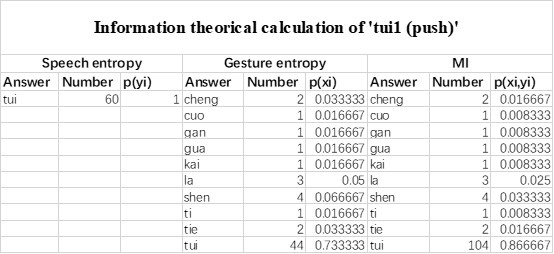

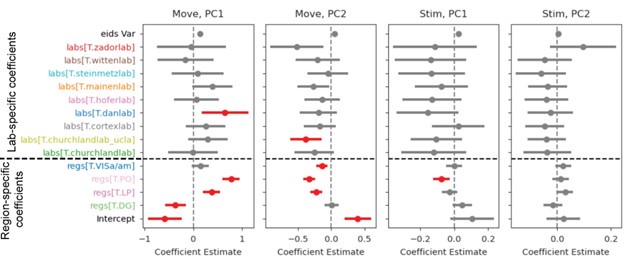

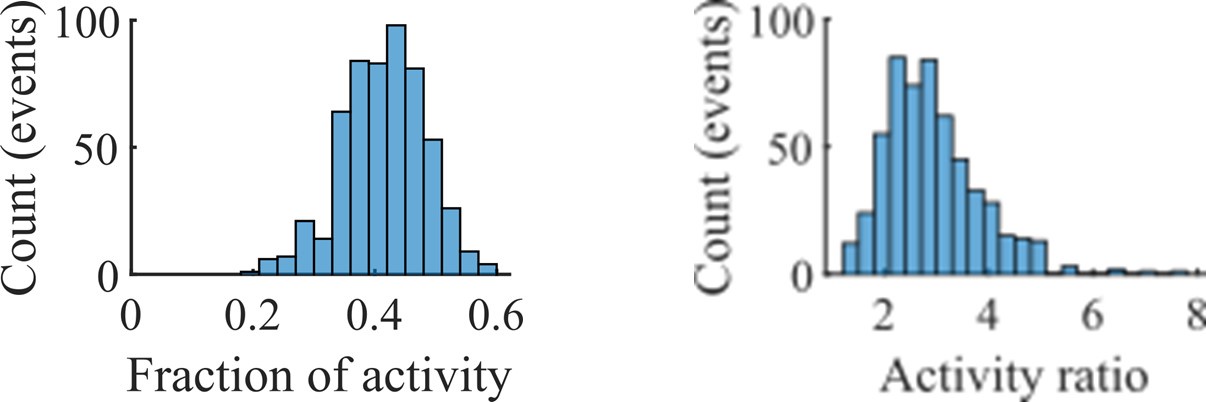

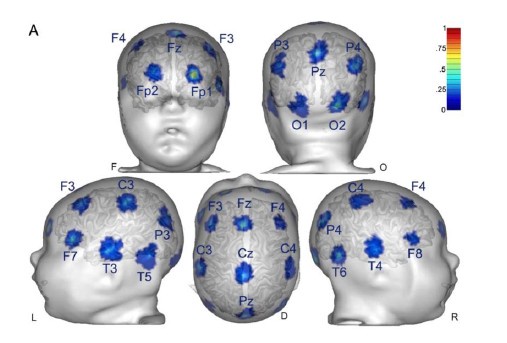

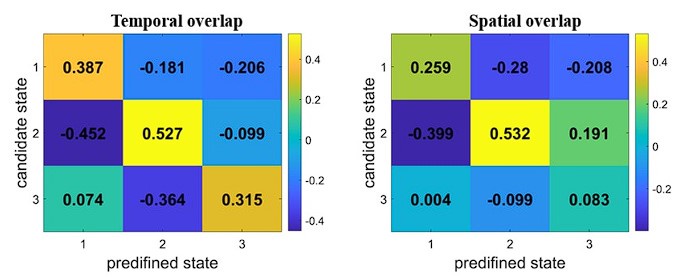

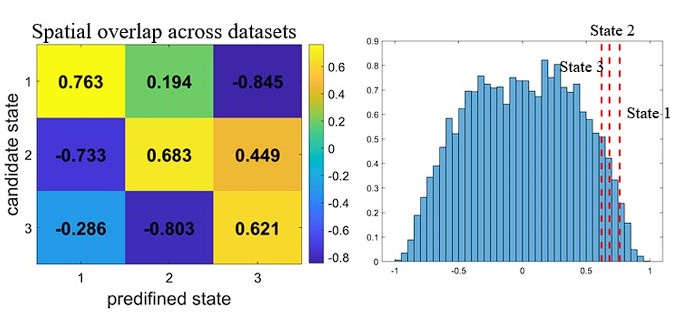

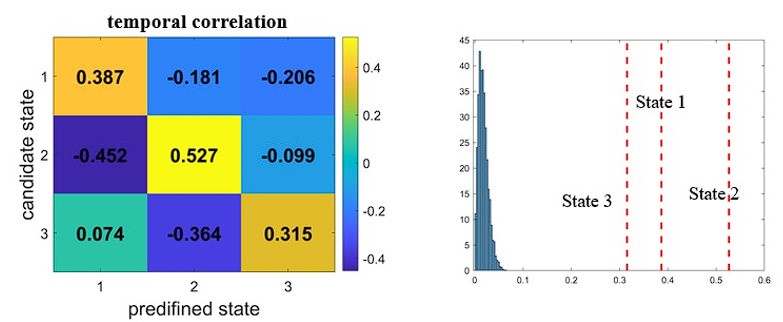

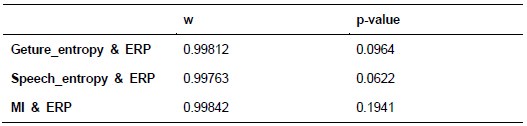

Finally, significant correlations were subjected to clustering analysis, which grouped similar neural response patterns across time windows and channels. This clustering allowed us to identify temporal and spatial patterns in the neural data that consistently aligned with the semantic features of gesture and speech stimuli, thus revealing the dynamic integration of these multisensory modalities across time. Results are as follows:

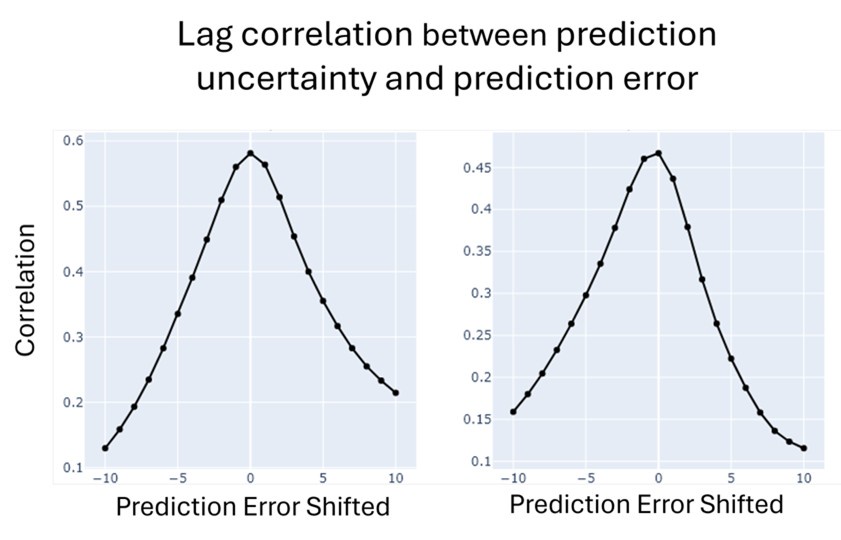

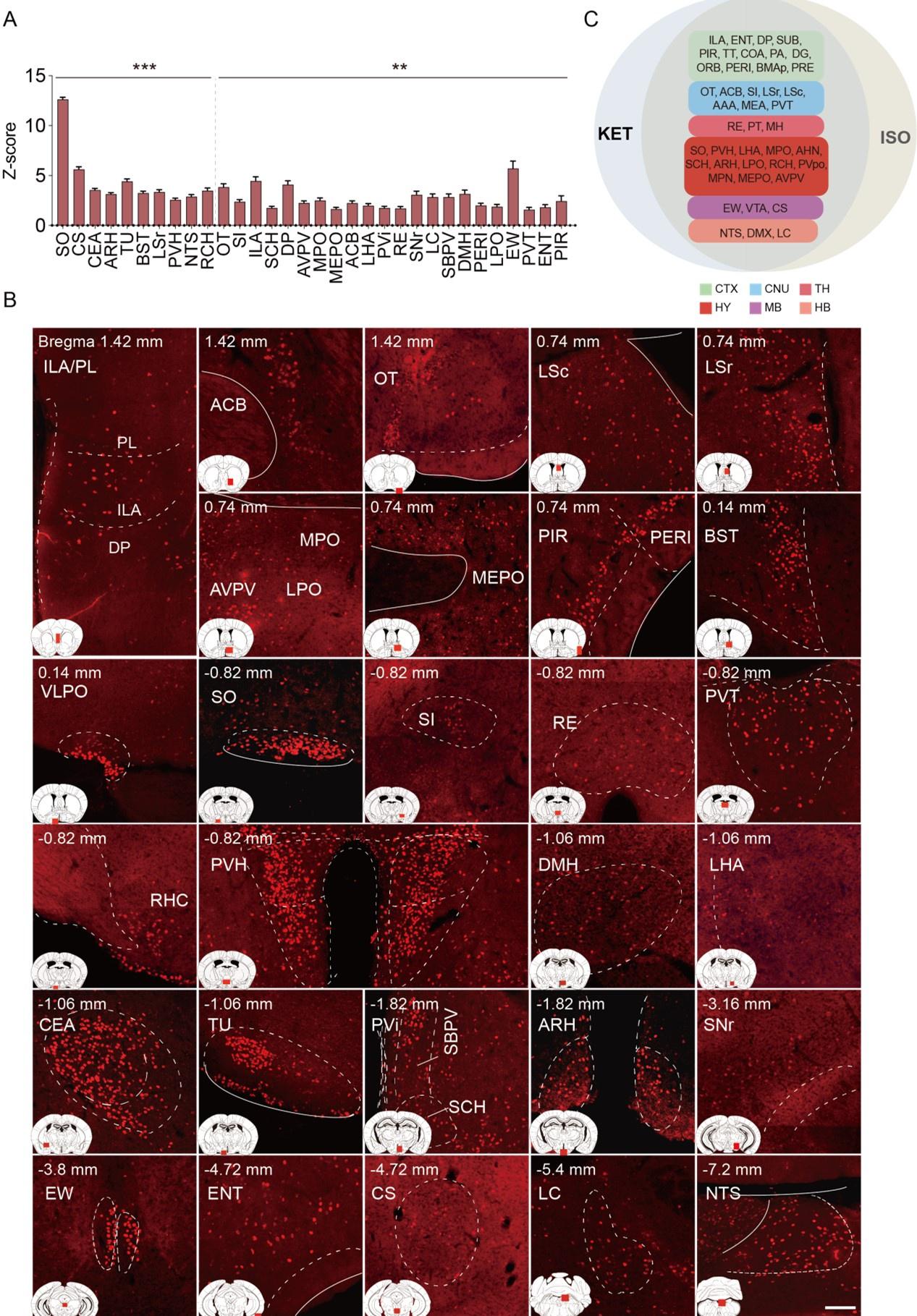

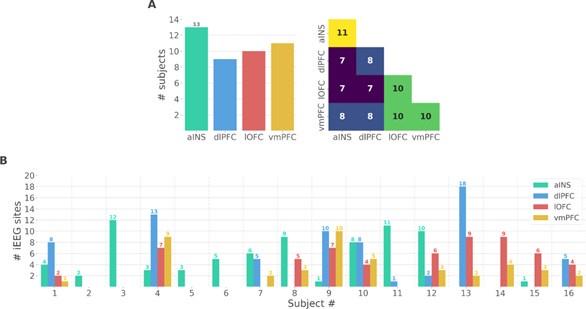

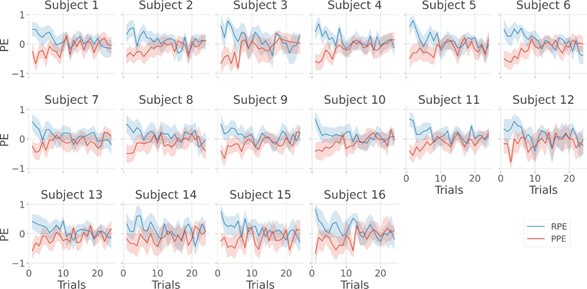

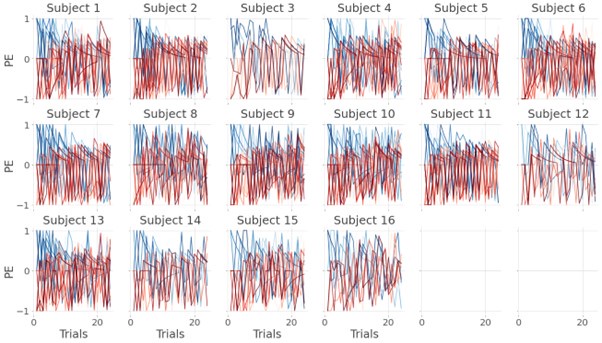

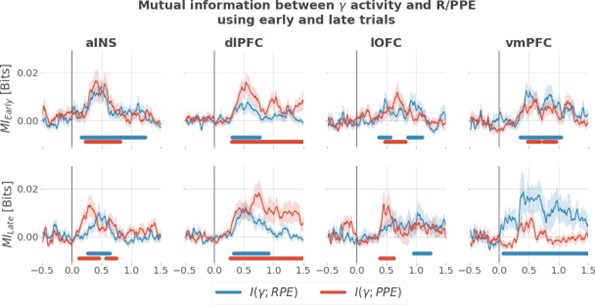

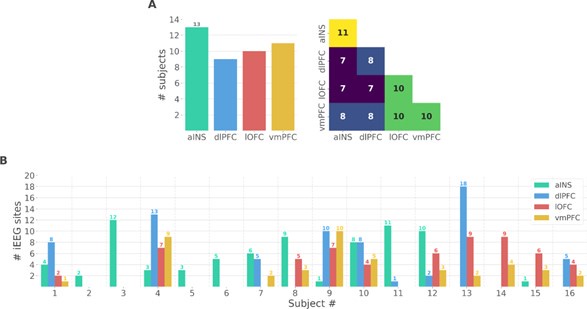

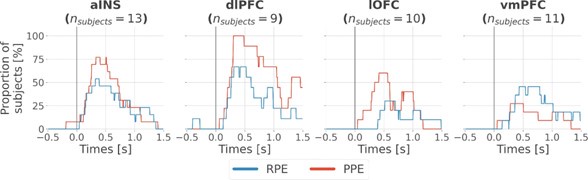

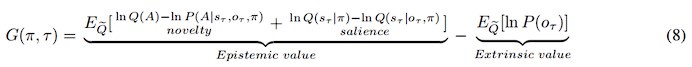

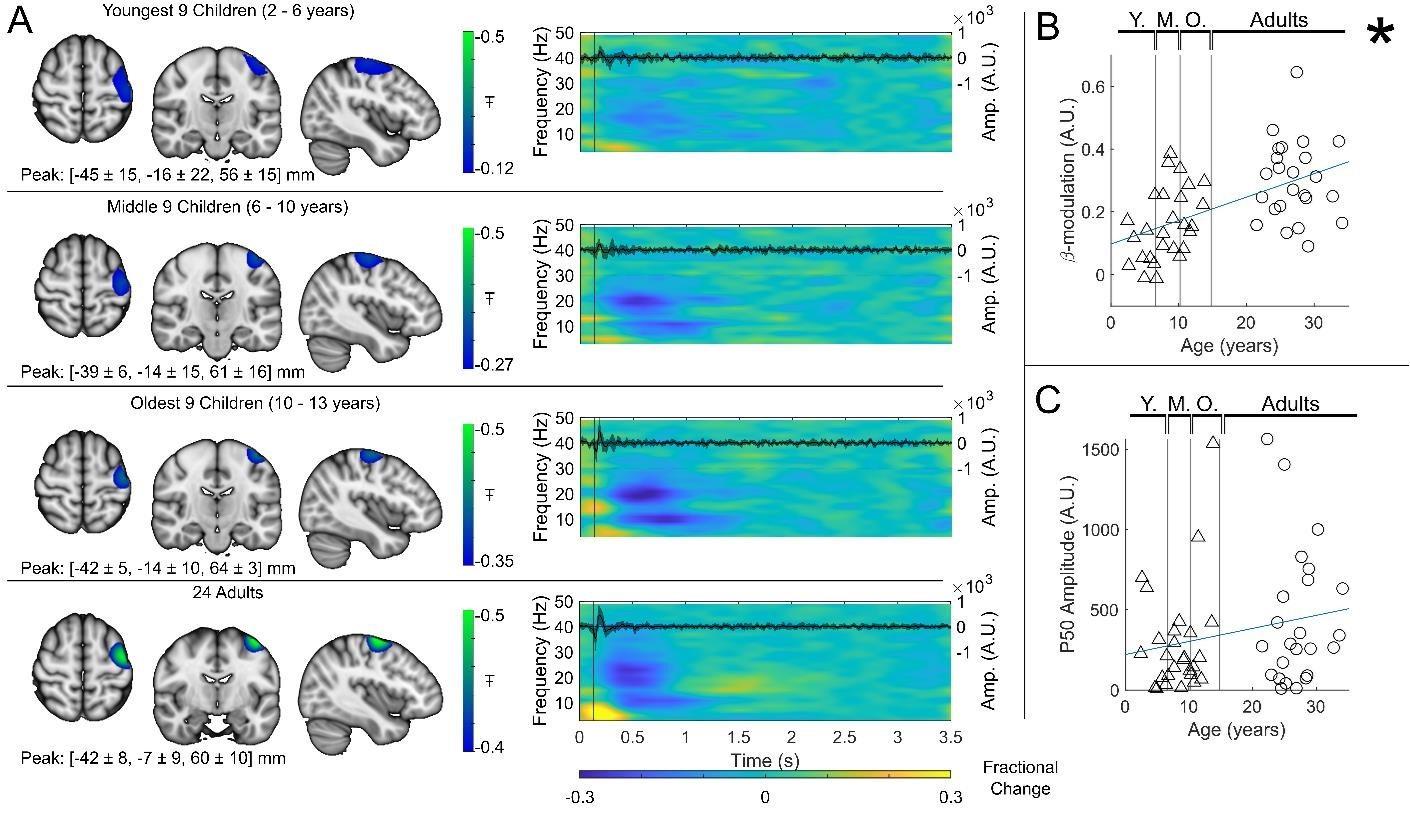

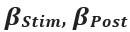

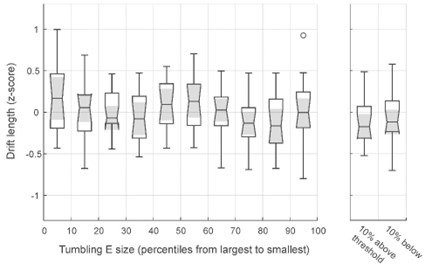

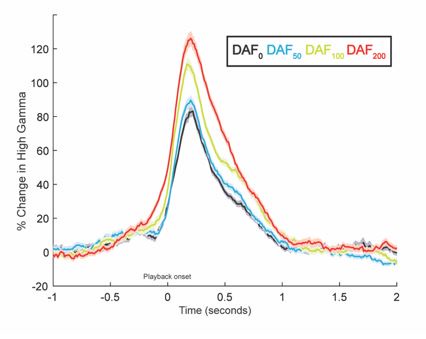

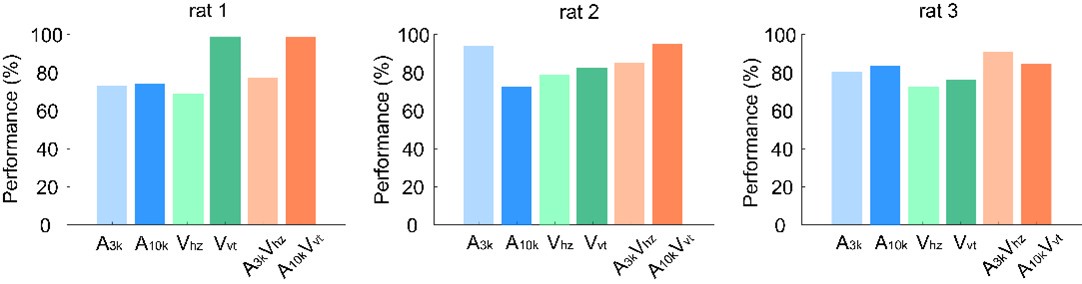

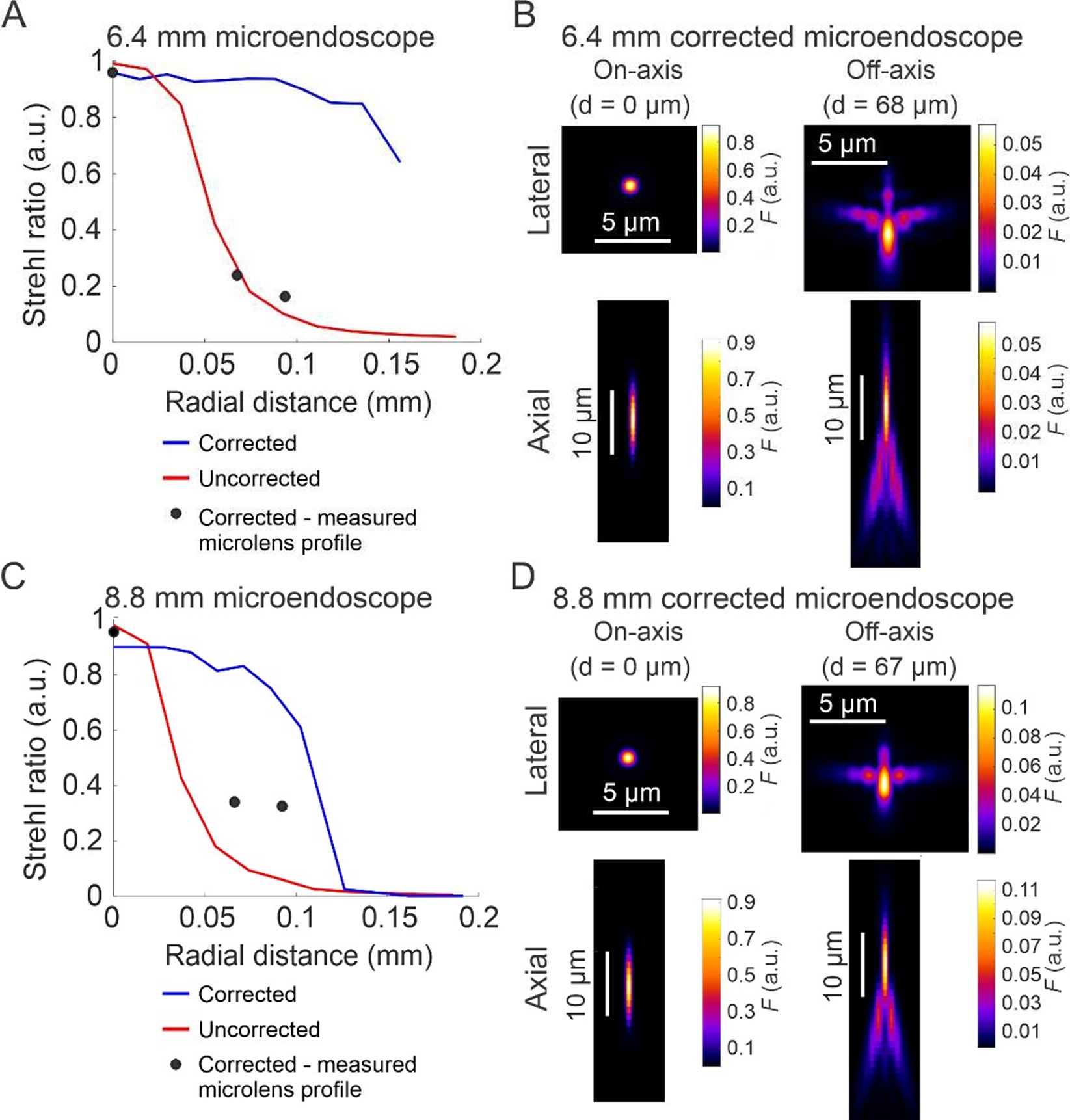

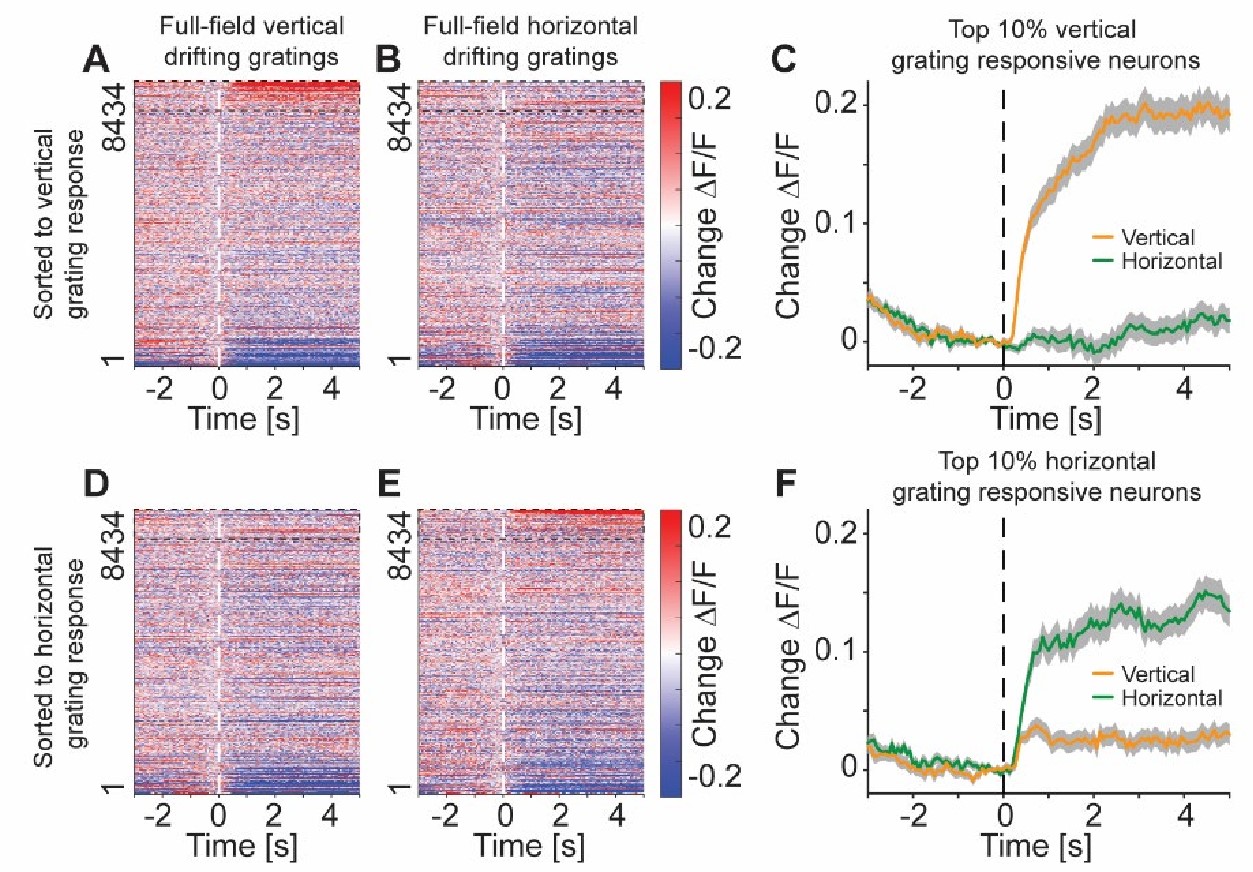

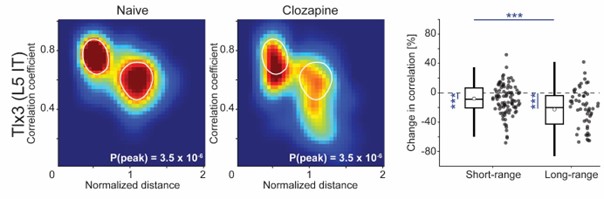

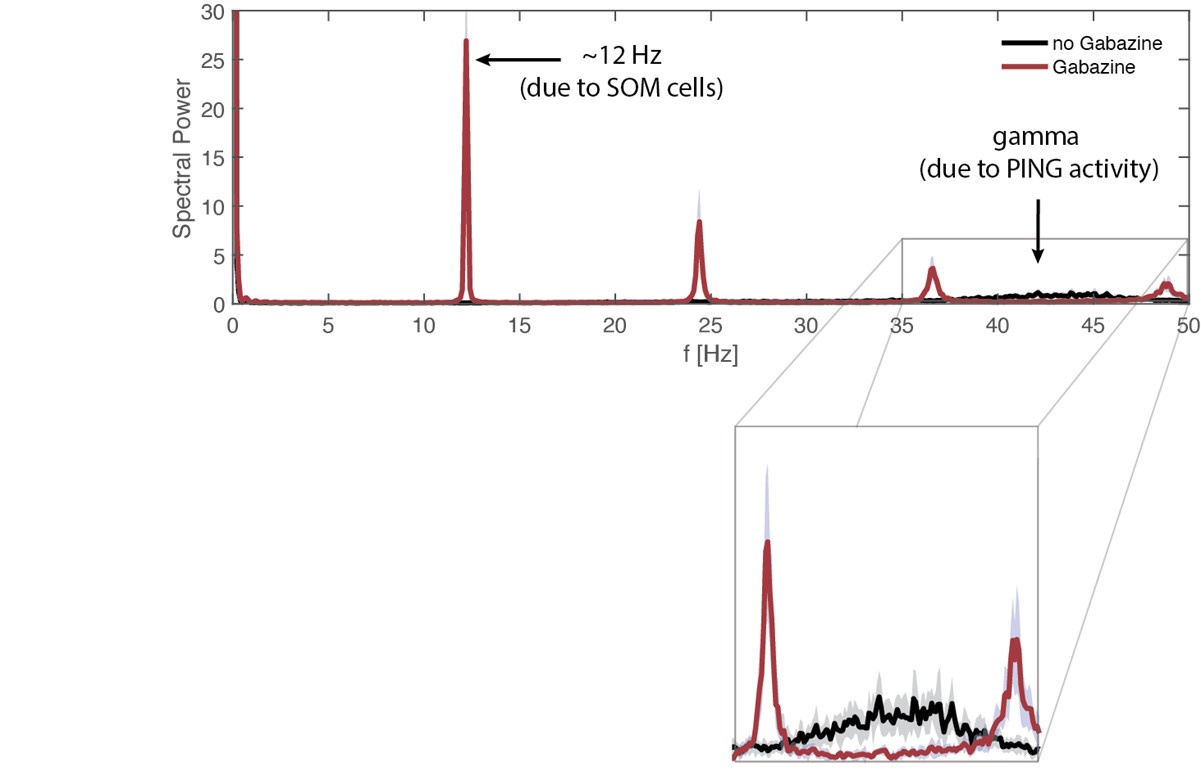

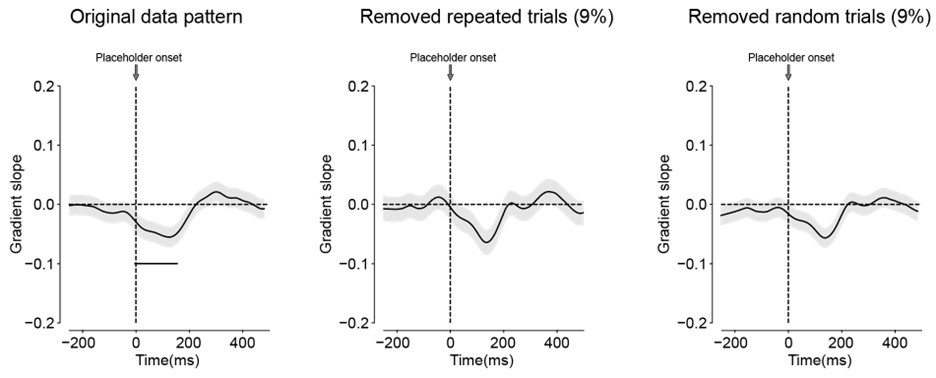

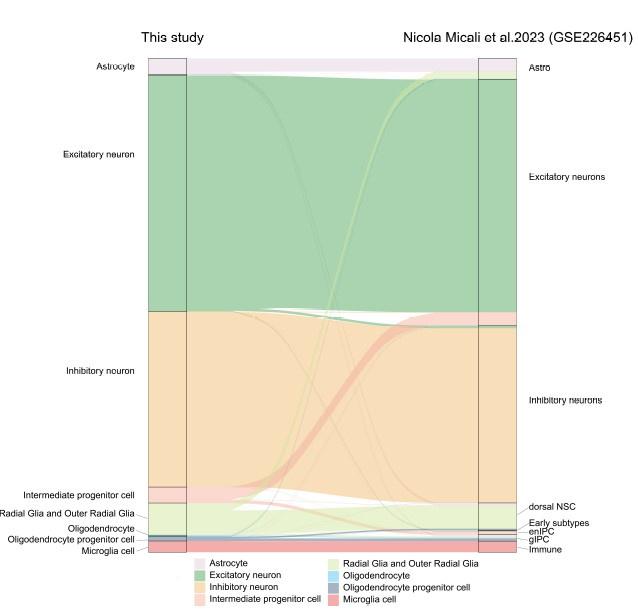

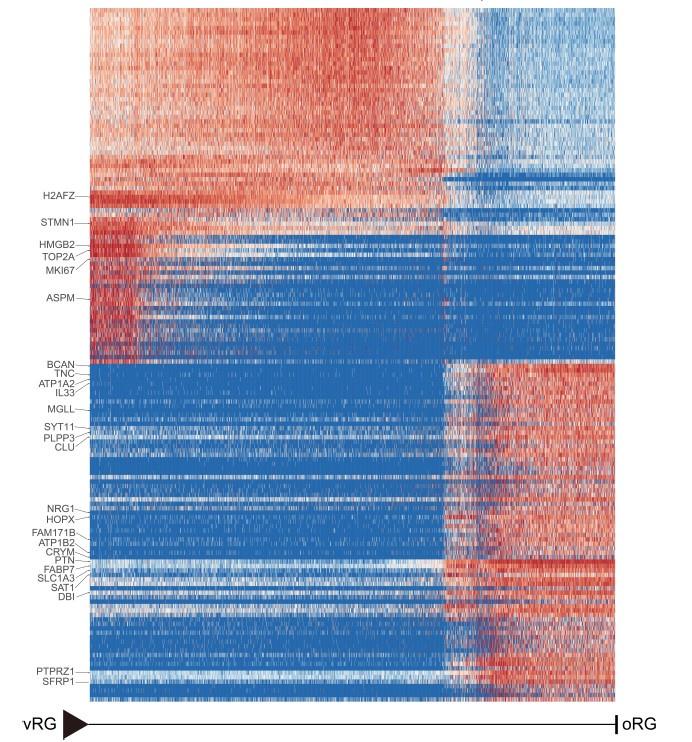

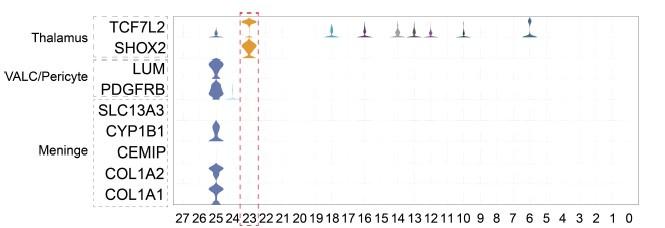

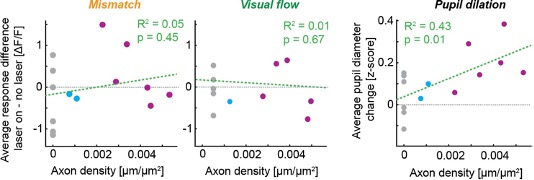

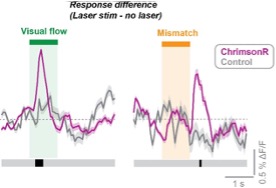

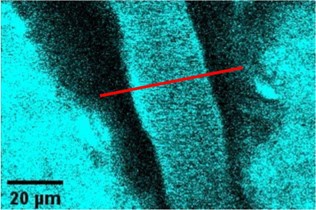

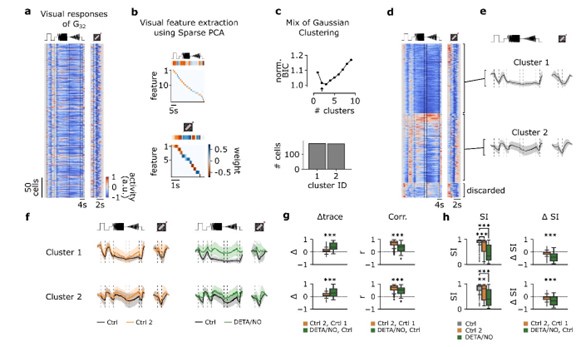

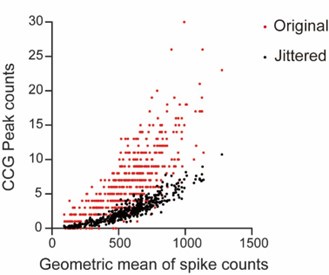

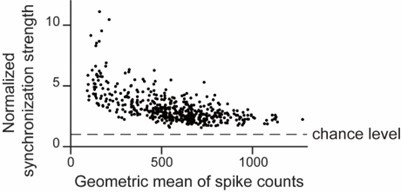

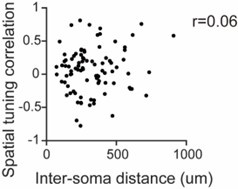

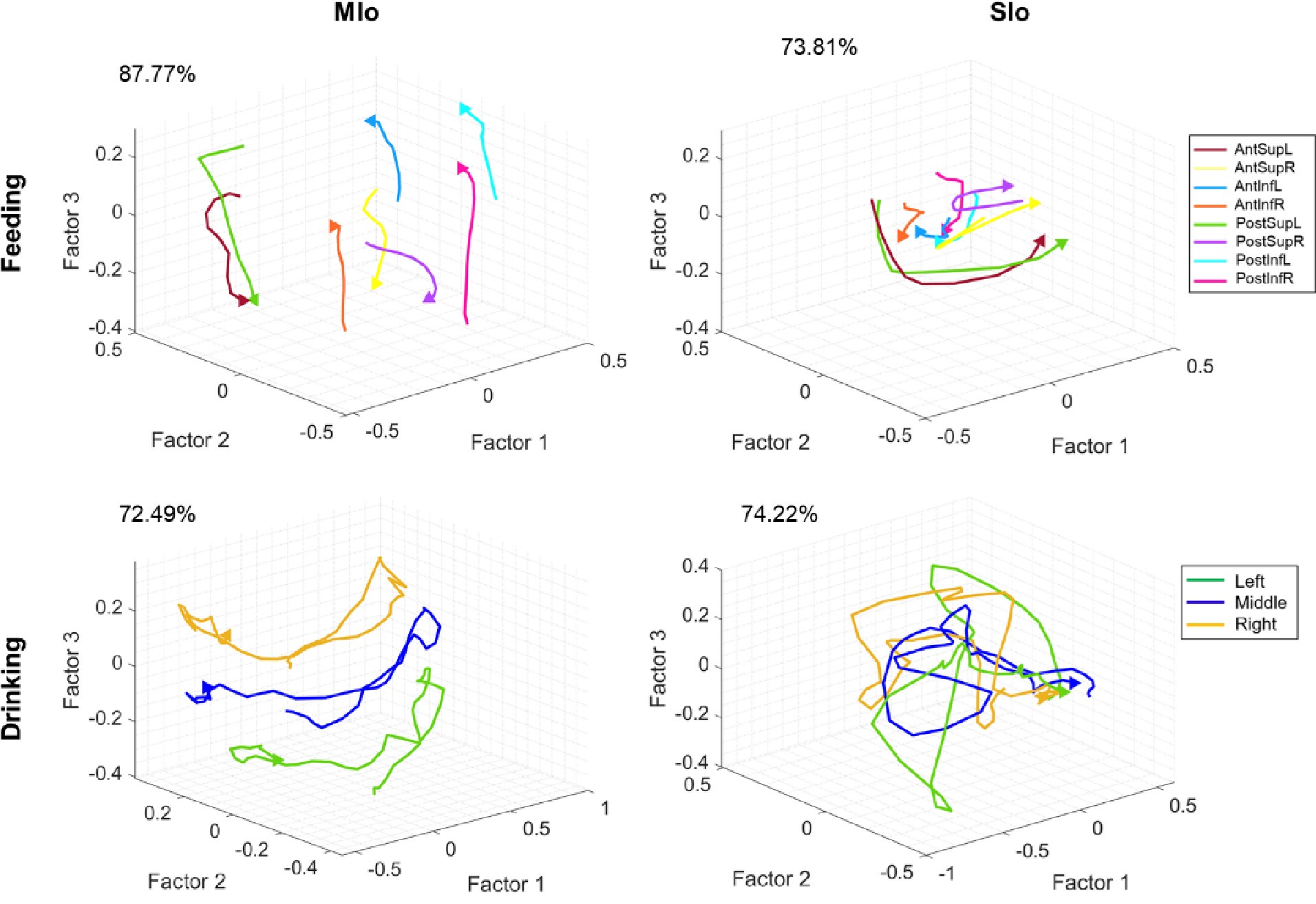

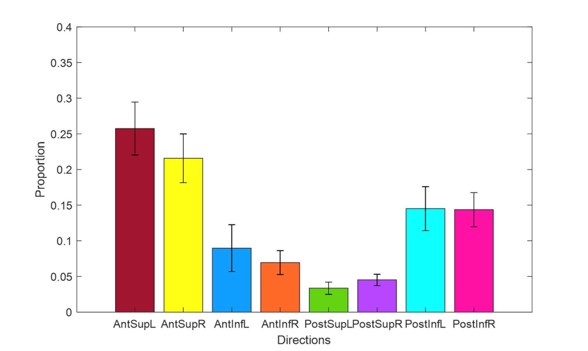

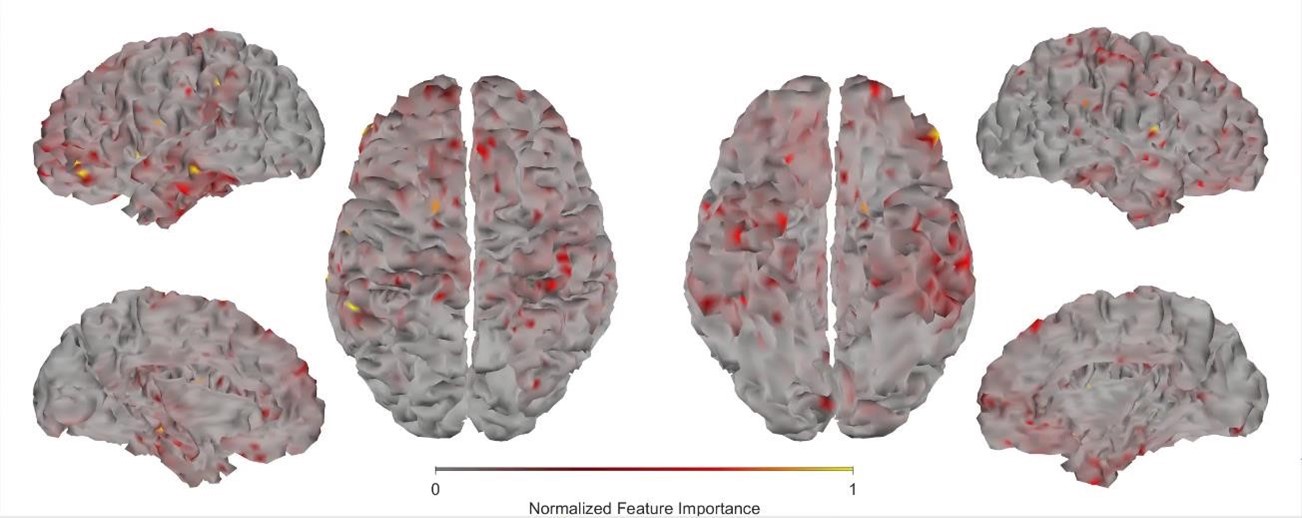

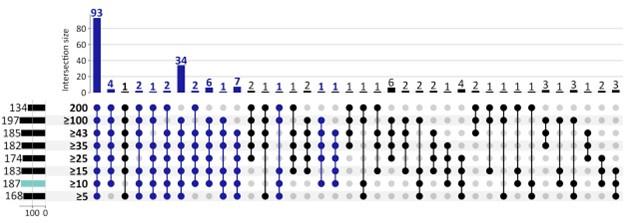

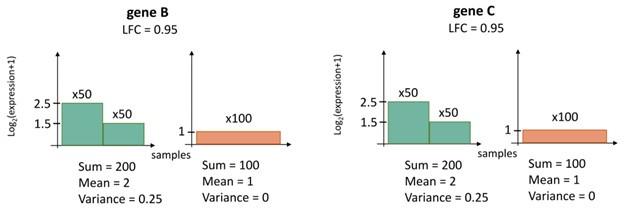

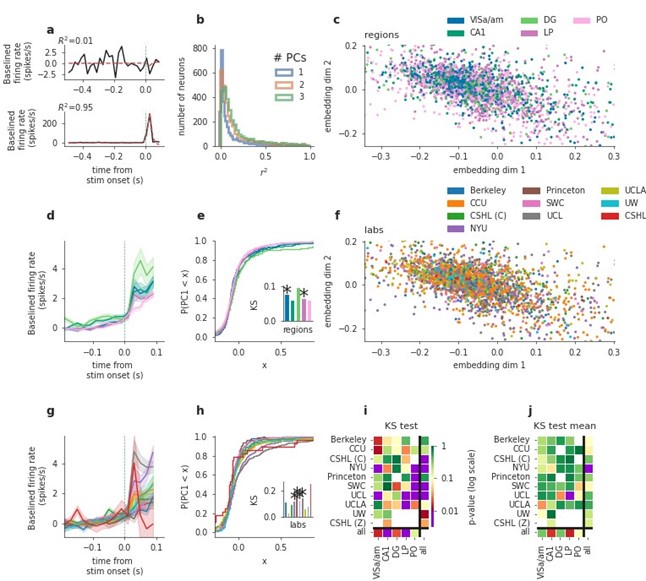

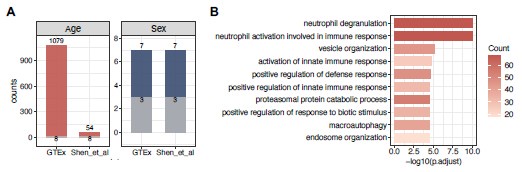

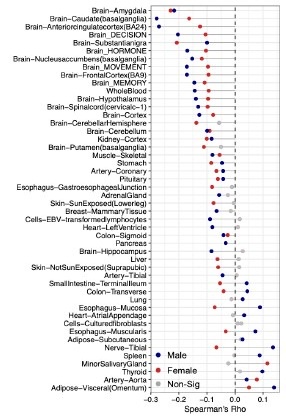

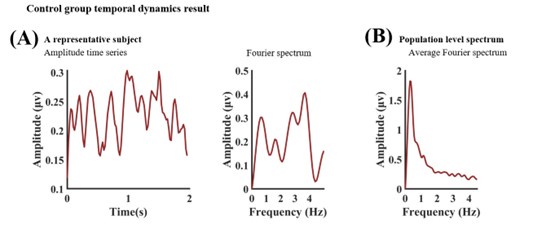

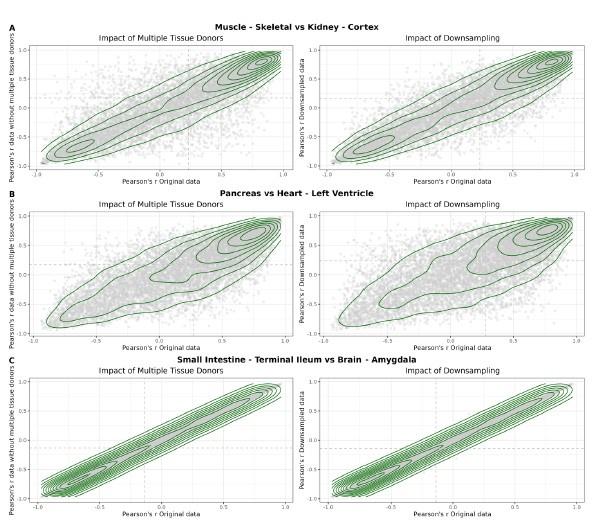

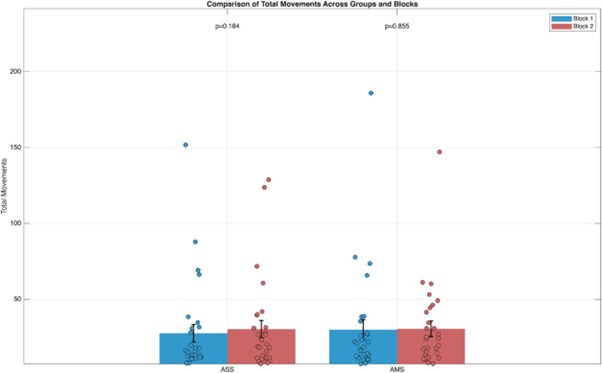

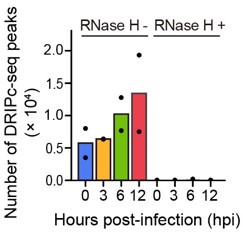

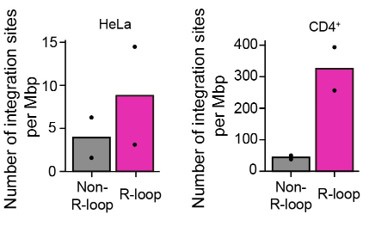

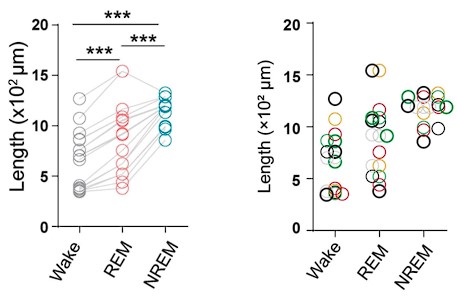

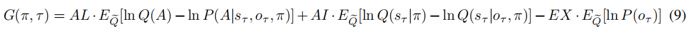

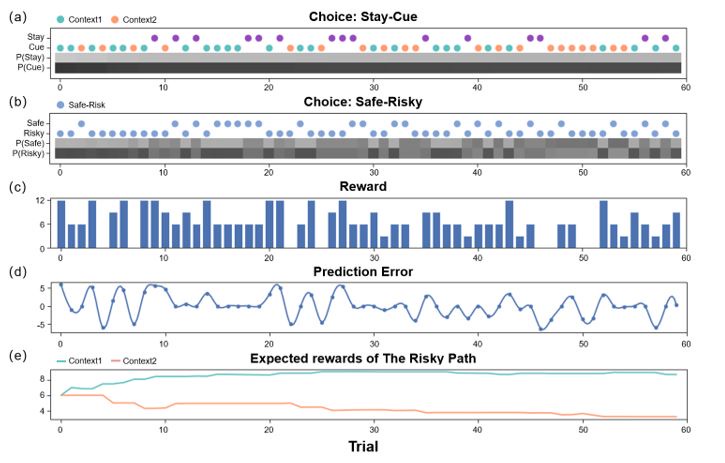

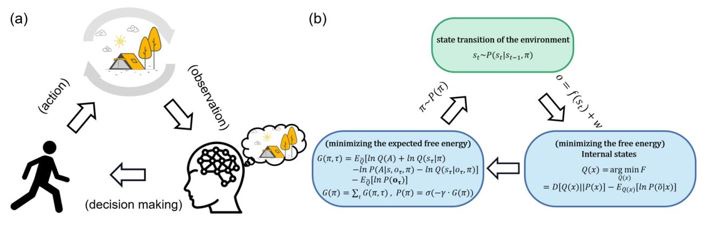

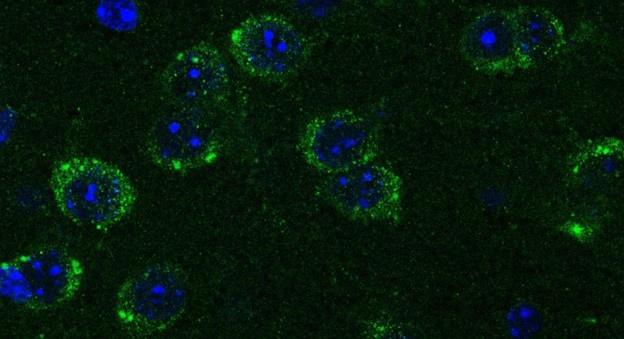

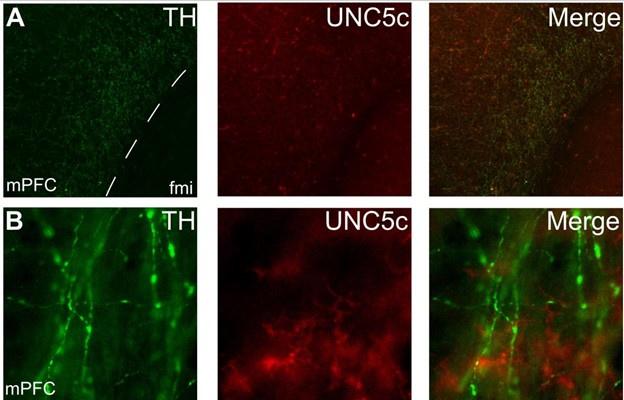

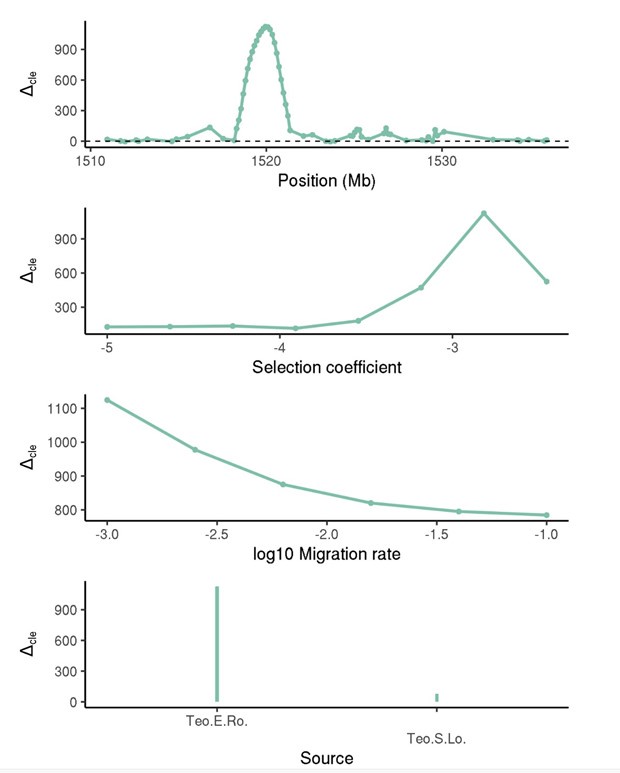

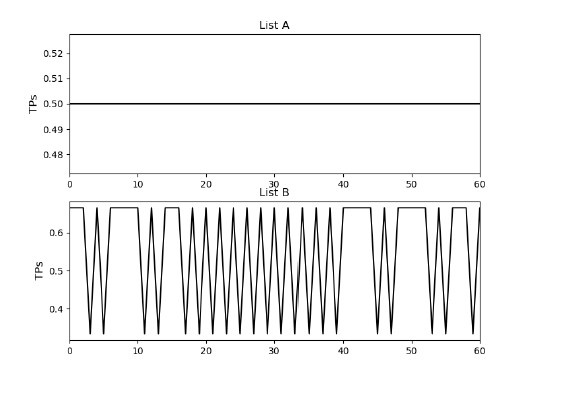

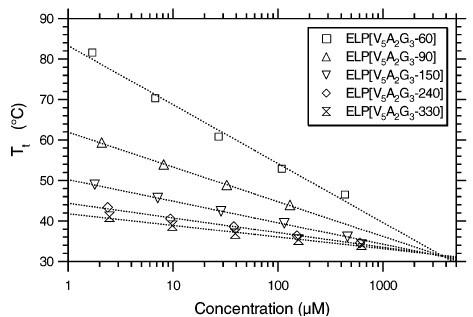

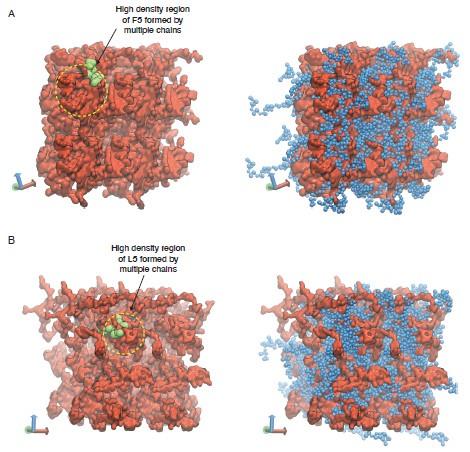

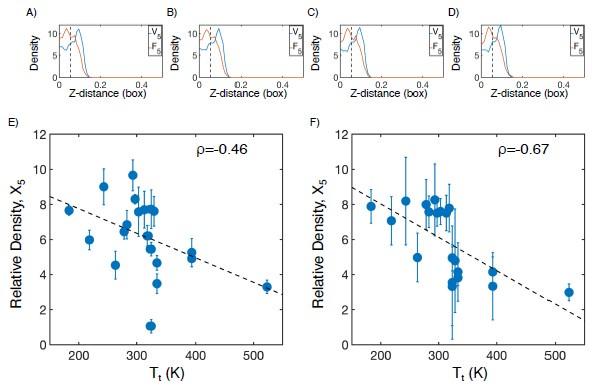

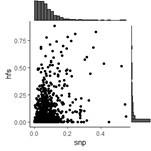

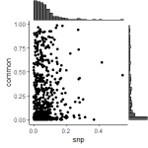

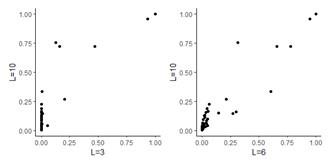

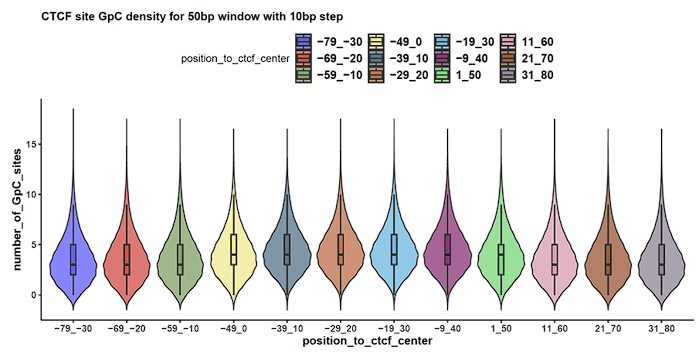

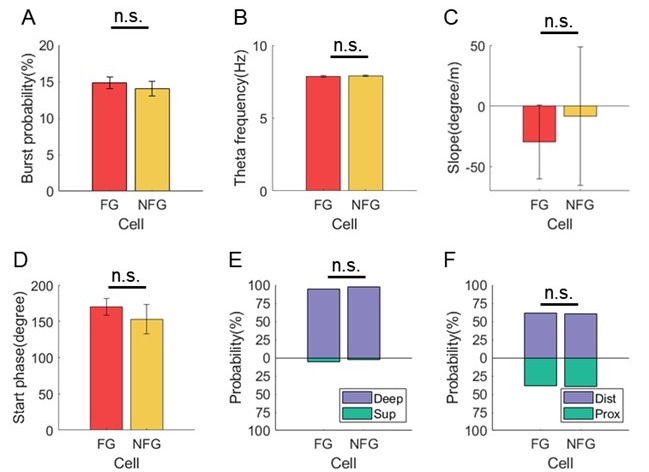

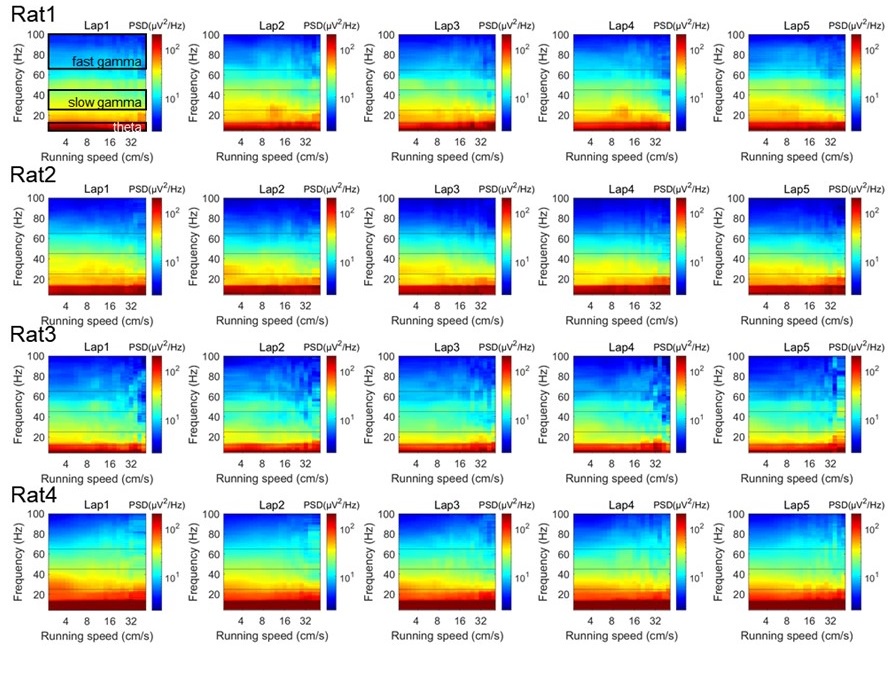

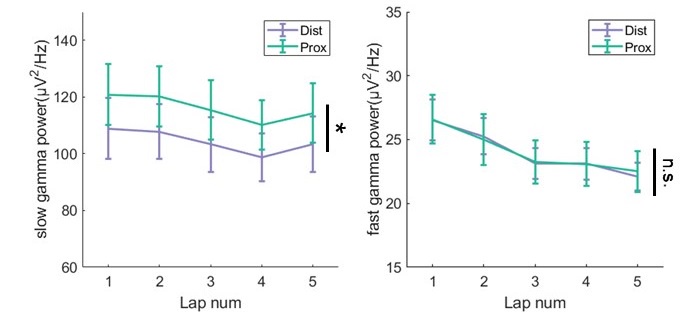

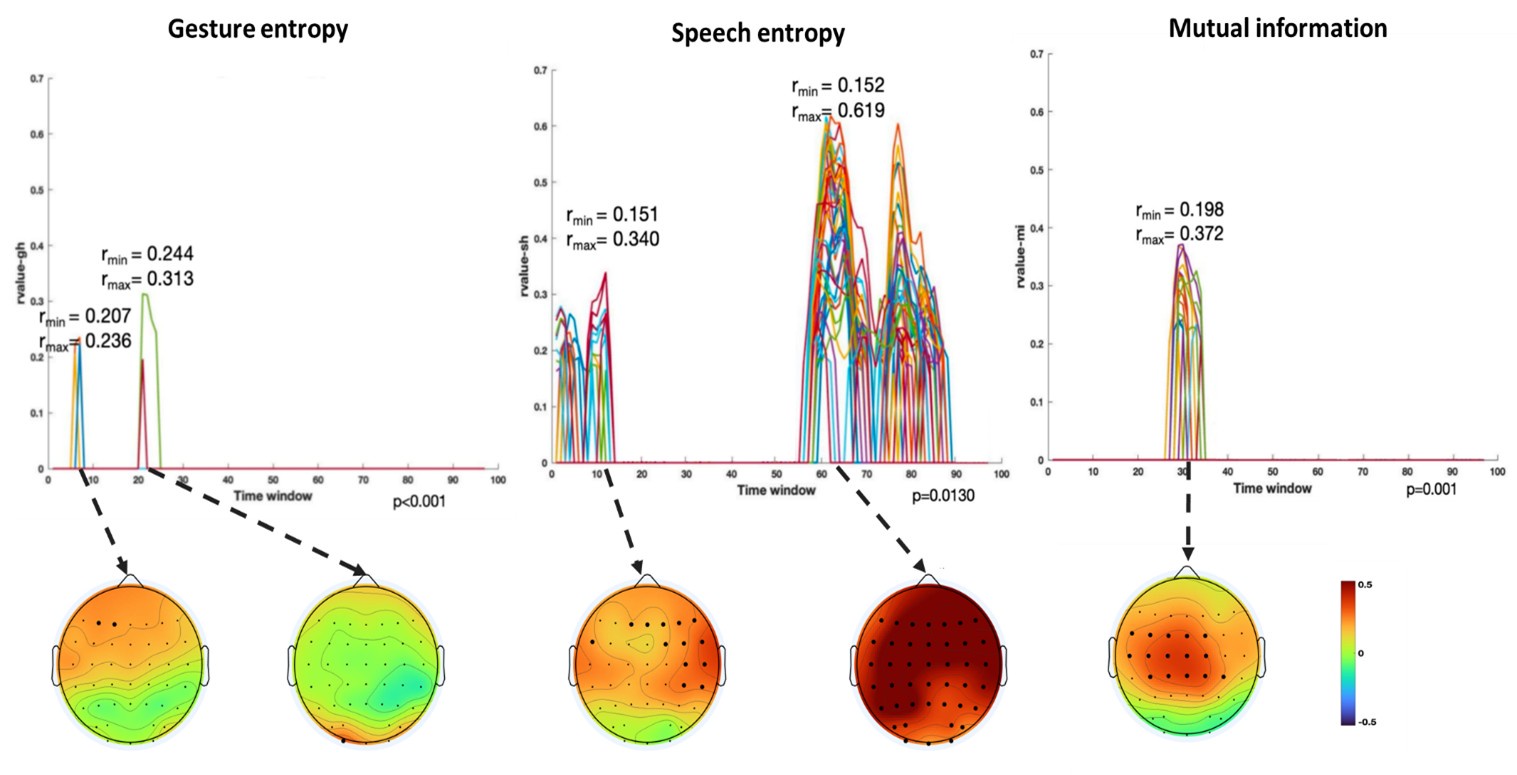

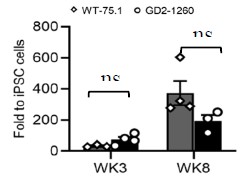

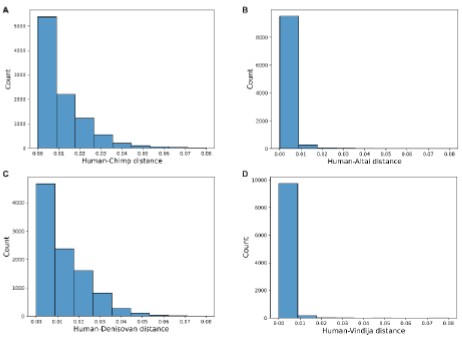

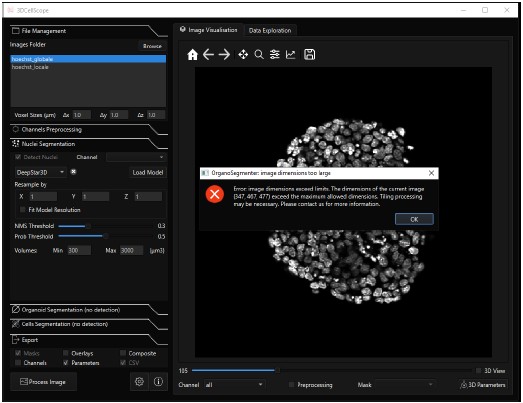

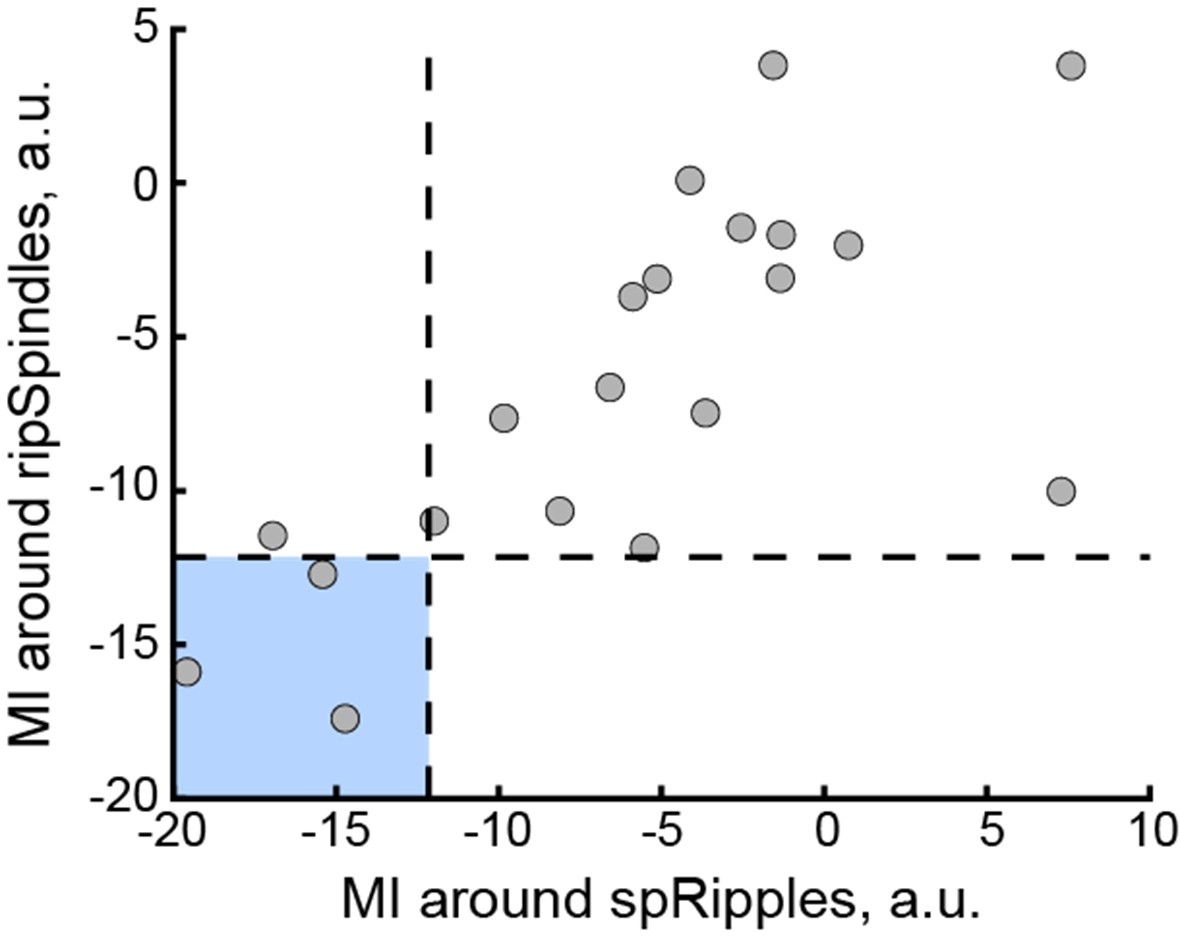

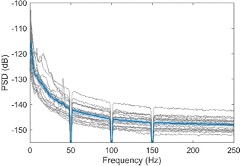

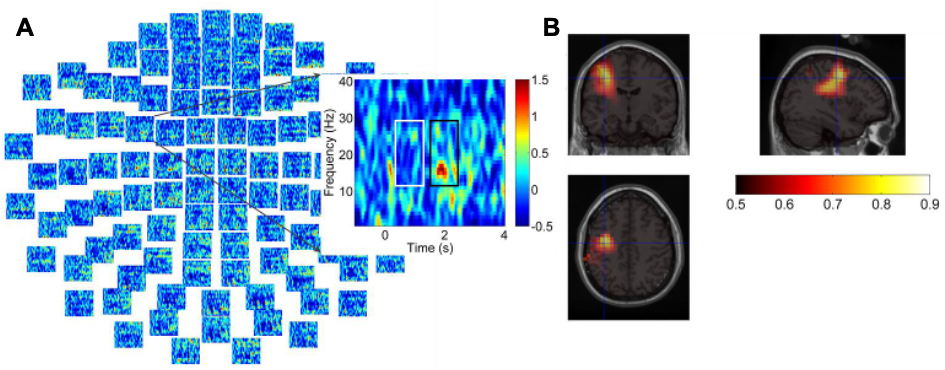

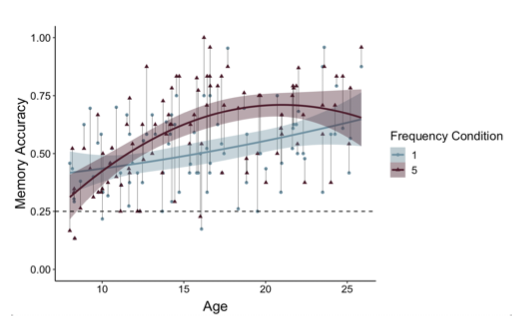

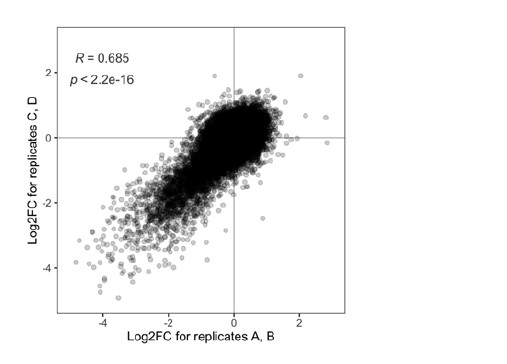

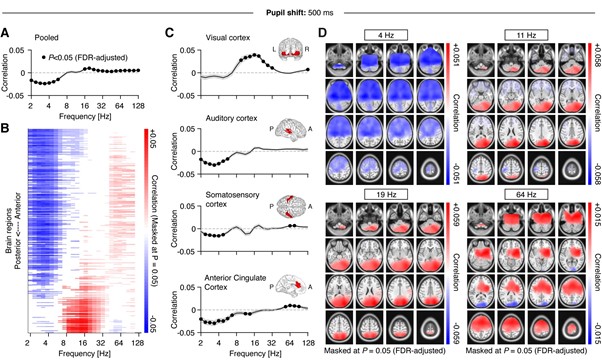

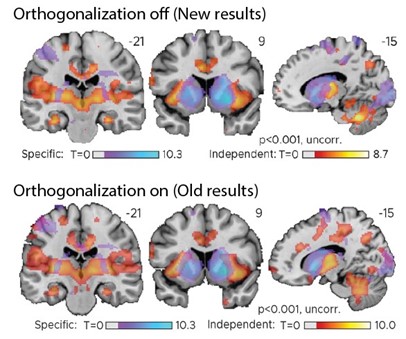

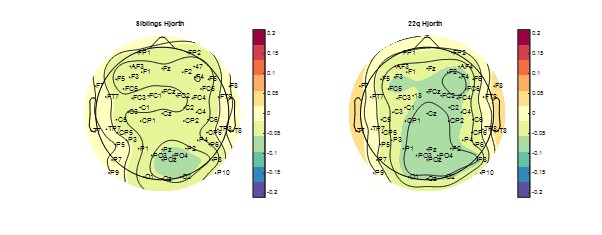

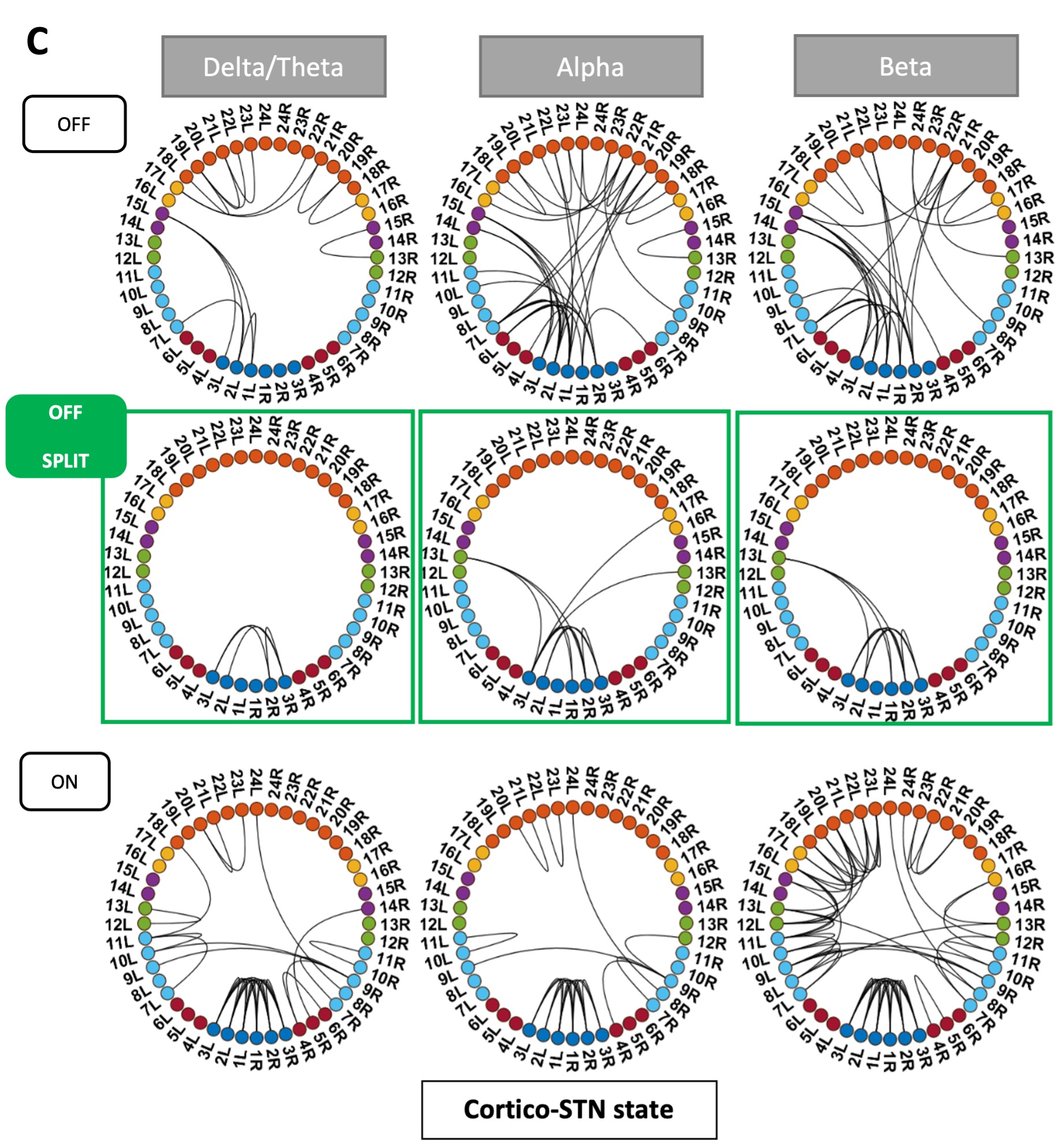

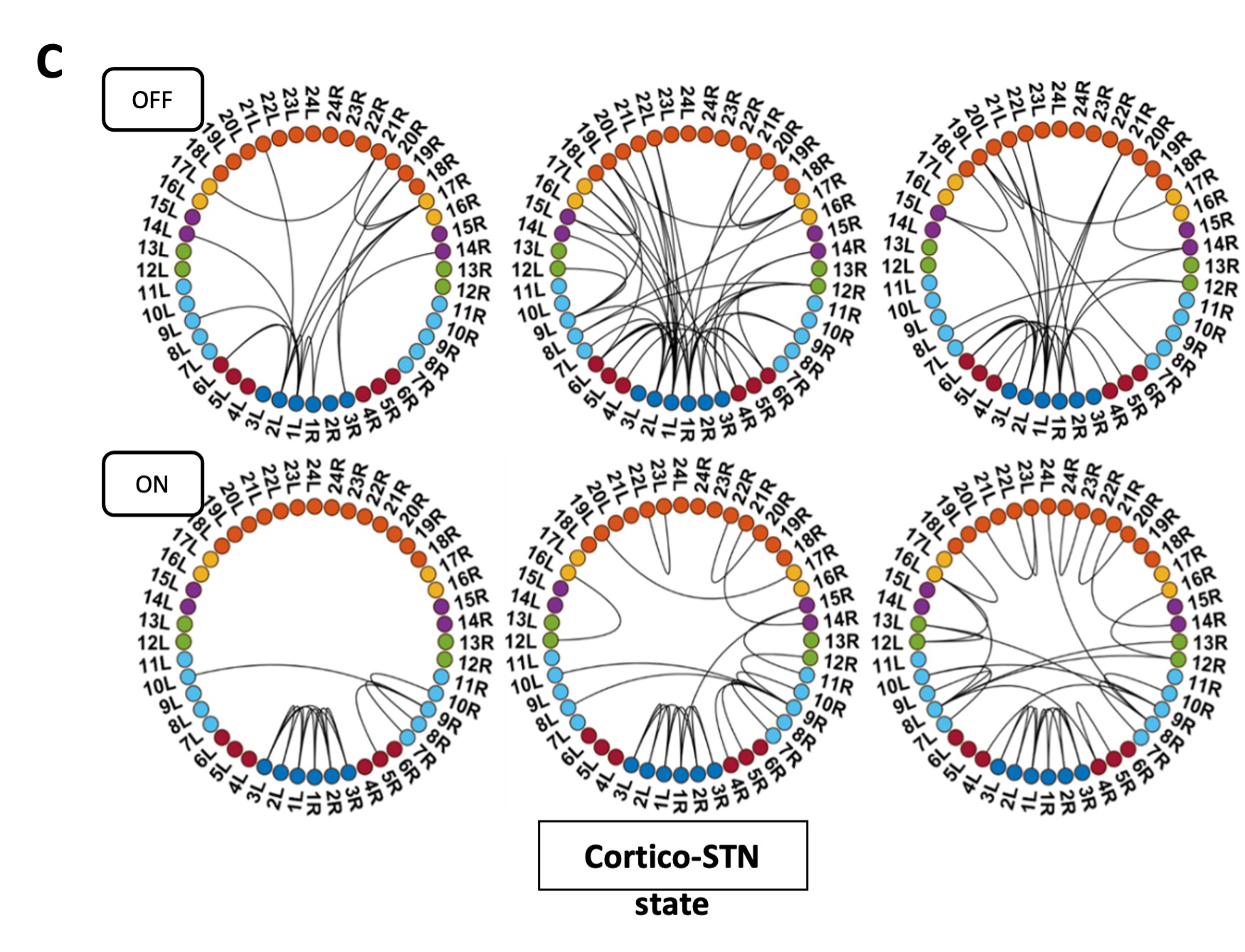

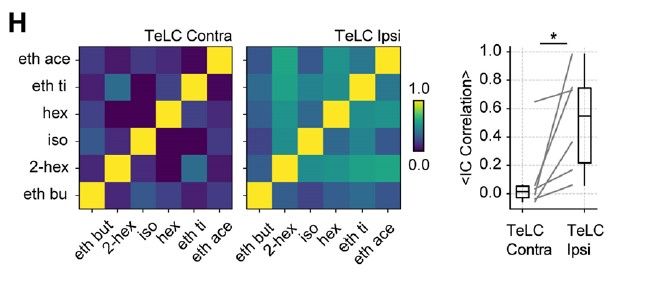

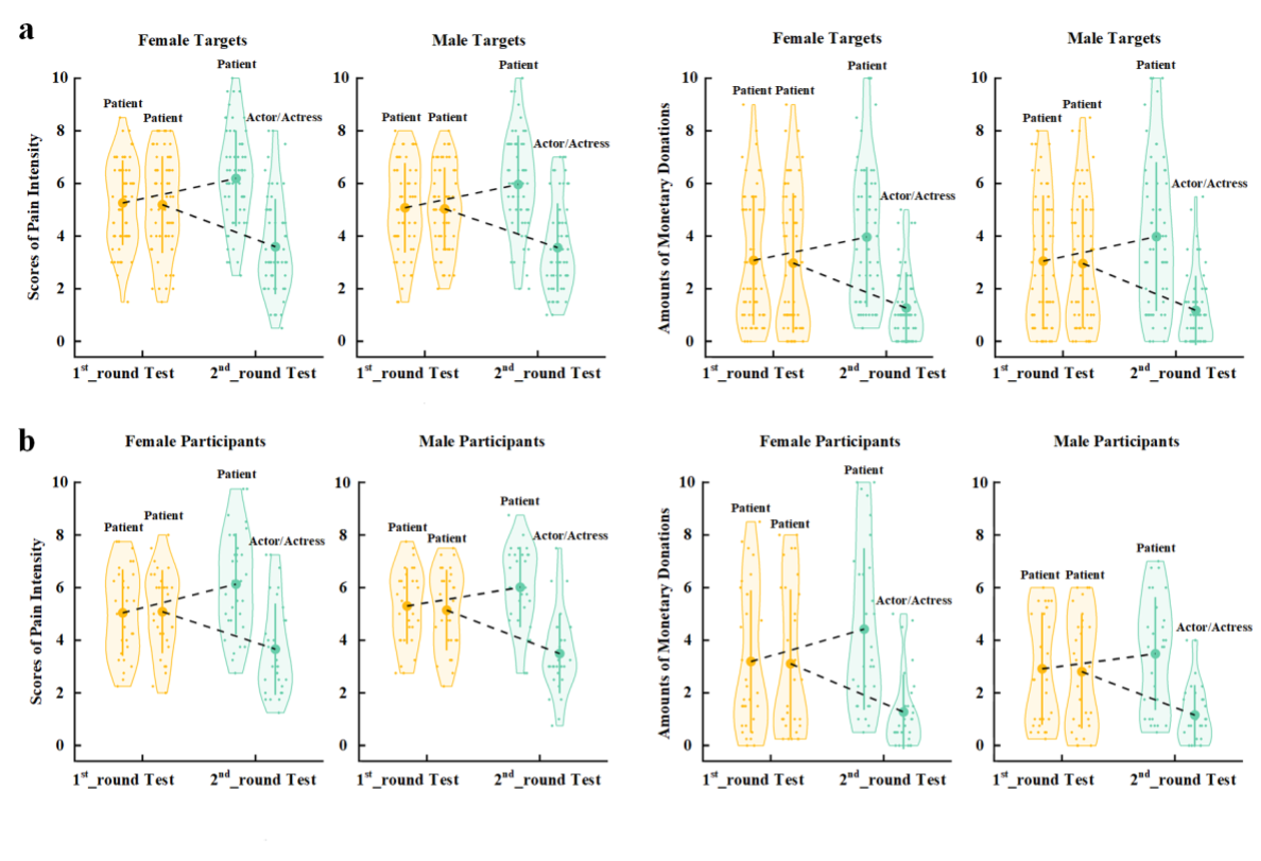

(1) Two significant clusters were identified for gesture entropy (Author response image 1 left). The first cluster was observed between 60-110 ms (channels F1 and F3), with correlation coefficients (r) ranging from 0.207 to 0.236 (p < 0.001). The second cluster was found between 210-280 ms (channel O1), with r-values ranging from 0.244 to 0.313 (p < 0.001).

(2) For speech entropy (Author response image 1 middle), significant clusters were detected in both early and late time windows. In the early time windows, the largest significant cluster was found between 10-170 ms (channels F2, F4, F6, FC2, FC4, FC6, C4, C6, CP4, and CP6), with r-values ranging from 0.151 to 0.340 (p = 0.013), corresponding to the P1 component (0-100 ms). In the late time windows, the largest significant cluster was observed between 560-920 ms (across the whole brain, all channels), with r-values ranging from 0.152 to 0.619 (p = 0.013).

(3) For mutual information (MI) (Author response image 1 right), a significant cluster was found between 270-380 ms (channels FC1, FC2, FC3, FC5, C1, C2, C3, C5, CP1, CP2, CP3, CP5, FCz, Cz, and CPz), with r-values ranging from 0.198 to 0.372 (p = 0.001).

Author response image 1.

Results of RSA analysis.

These additional findings suggest that even using a different modeling approach, neural responses, as indexed by feature metrics of entropy and mutual information, are temporally aligned with distinct ERP components and ERP clusters, as reported in the current manuscript. This alignment serves to further consolidate the results, reinforcing the conclusion we draw. Considering the length of the manuscript, we did not include these results in the current manuscript.

(3) In terms of the correction of multiple comparisons, in Experiment 1, two separate participant groups were recruited for HD-tDCS applied over either the IFG or pMTG. FDR correction was performed separately for each group, resulting in six comparisons for each brain region (three information matrices × two tDCS effects: anodal-sham or cathodal-sham). In Experiment 2, six comparisons (three information matrices × two sites: IFG or pMTG) were submitted for FDR correction. In Experiment 3, FDR correction was applied to the seven regions of interest (ROIs) within each component, resulting in five comparisons.
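For readers unfamiliar with FDR correction, the sketch below applies the Benjamini-Hochberg procedure to a family of six comparisons. The choice of the Benjamini-Hochberg variant is an assumption, since the response only states that FDR correction was used, and the p-values are invented for illustration.

```python
import numpy as np


def fdr_bh(p_values, alpha=0.05):
    """Benjamini-Hochberg FDR correction.

    Returns a boolean rejection mask and the adjusted p-values,
    both in the original order of the input.
    """
    p = np.asarray(p_values, dtype=float)
    m = p.size
    order = np.argsort(p)
    # Scale each sorted p-value by m / rank
    ranked = p[order] * m / np.arange(1, m + 1)
    # Enforce monotonicity, working down from the largest p-value
    adjusted_sorted = np.minimum.accumulate(ranked[::-1])[::-1]
    adjusted = np.empty(m)
    adjusted[order] = np.clip(adjusted_sorted, 0.0, 1.0)
    return adjusted <= alpha, adjusted


# Hypothetical family of six comparisons (e.g., three metrics x two sites)
p_vals = [0.001, 0.008, 0.039, 0.041, 0.20, 0.60]
reject, p_adjusted = fdr_bh(p_vals)
print(reject.tolist())
print(np.round(p_adjusted, 4).tolist())
```

In this example the two smallest p-values survive correction, while 0.039 and 0.041, which would pass an uncorrected 0.05 threshold, do not.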

Reference:

Wilk, M.B. (2015). The Shapiro Wilk And Related Tests For Normality.

Popal, H., Wang, Y., & Olson, I. R. (2019). A guide to representational similarity analysis for social neuroscience. Social cognitive and affective neuroscience, 14(11), 1243-1253.

(4) The authors use ROIs for their different analyses, but it is unclear why and on the basis of what these regions are defined. Why not consider all channels without making them part of an ROI, by using a method like the one described in my previous comment?

Response 4: For the EEG data, we conducted both a traditional ROI analysis and a cluster-based permutation approach. The ROIs were defined based on well-established work (Habets et al., 2011), allowing for hypothesis-driven testing of specific regions. In addition, we employed a cluster-based permutation method, which is data-driven and helps enhance robustness while addressing multiple comparisons. This method serves as a complement to the hypothesis-driven ROI analysis, offering an exploratory, unbiased perspective. Notably, the results from both approaches were consistent, reinforcing the reliability of our findings.

To make the methods more accessible to a broader audience, we clarified the relationship between these approaches in the revised manuscript in Lines 267-270: ‘To consolidate the data, we conducted both a traditional region-of-interest (ROI) analysis, with ROIs defined based on a well-established work[40], and a cluster-based permutation approach, which utilizes data-driven permutations to enhance robustness and address multiple comparisons.’

Additionally, we conducted an RSA analysis without defining specific ROIs, considering all channels in the analysis. This approach yielded consistent results, further validating the robustness of our findings across different analysis methods. See Response 3 for details.

Reference:

Habets, B., Kita, S., Shao, Z.S., Ozyurek, A., and Hagoort, P. (2011). The Role of Synchrony and Ambiguity in Speech-Gesture Integration during Comprehension. J Cognitive Neurosci 23, 1845-1854. 10.1162/jocn.2010.21462

(5) The authors describe that they have divided their EEG data into a "lower half" and a "higher half" (lines 234-236), based on entropy scores. It is unclear why this is necessary, and I would suggest just using the entropy scores as a continuous measure.

Response 5: To identify ERP components or spatiotemporal clusters that demonstrated significant semantic differences, we split each model into higher and lower halves based on entropy scores. This division allowed us to capture distinct levels of information processing and explore how different levels of entropy or mutual information (MI) related to neural activity. Specifically, the goal was to highlight the gradual activation process of these components and clusters as they correlate with changes in information content. Remarkably, consistent results were observed between the ERP components and clusters, providing robust evidence that semantic information conveyed through gestures and speech significantly influenced the amplitude of these components or clusters. Moreover, the semantic information was shown to be highly sensitive, varying in tandem with these amplitude changes.
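The split described in this response can be illustrated with a minimal sketch. It assumes one entropy score and one mean amplitude per item; the function name and the handling of an odd item count (the middle item is dropped) are hypothetical choices, not details taken from the manuscript.

```python
import numpy as np

def median_split_means(entropy, amplitude):
    """Mean amplitude for the lower and higher halves of items ranked by entropy."""
    entropy = np.asarray(entropy, dtype=float)
    amplitude = np.asarray(amplitude, dtype=float)
    order = np.argsort(entropy, kind="stable")  # item indices from low to high entropy
    half = len(order) // 2                      # assumes at least two items
    lower, higher = order[:half], order[-half:]
    return amplitude[lower].mean(), amplitude[higher].mean()
```

The continuous alternative suggested by the reviewer would instead correlate `entropy` with `amplitude` across all items, for example with `np.corrcoef(entropy, amplitude)[0, 1]`.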

Reviewer #2 (Public review):

Comment:

Summary:

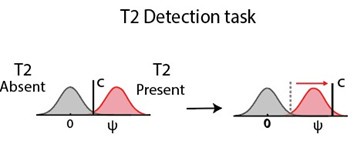

The study is an innovative and fundamental study that clarified important aspects of brain processes for integration of information from speech and iconic gesture (i.e., gesture that depicts action, movement, and shape), based on tDCS, TMS, and EEG experiments. They evaluated their speech and gesture stimuli in information-theoretic ways and calculated how informative speech is (i.e., entropy), how informative gesture is, and how much shared information speech and gesture encode. The tDCS and TMS studies found that the left IFG and pMTG, the two areas that were activated in fMRI studies on speech-gesture integration in the previous literature, are causally implicated in speech-gesture integration. The size of tDCS and TMS effects are correlated with the entropy of the stimuli or mutual information, which indicates that the effects stem from the modulation of information decoding/integration processes. The EEG study showed that various ERP (event-related potential, e.g., N1-P2, N400, LPC) effects that have been observed in speech-gesture integration experiments in the previous literature, are modulated by the entropy of speech/gesture and mutual information. This makes it clear that these effects are related to information decoding processes. The authors propose a model of how the speech-gesture integration process unfolds in time, and how IFG and pMTG interact with each other in that process.

Strengths:

The key strength of this study is that the authors used information theoretic measures of their stimuli (i.e., entropy and mutual information between speech and gesture) in all of their analyses. This made it clear that the neuro-modulation (tDCS, TMS) affected information decoding/integration and ERP effects reflect information decoding/integration. This study used tDCS and TMS methods to demonstrate that left IFG and pMTG are causally involved in speech-gesture integration. The size of tDCS and TMS effects are correlated with information-theoretic measures of the stimuli, which indicate that the effects indeed stem from disruption/facilitation of the information decoding/integration process (rather than generic excitation/inhibition). The authors' results also showed a correlation between information-theoretic measures of stimuli with various ERP effects. This indicates that these ERP effects reflect the information decoding/integration process.

We sincerely thank the reviewer for recognizing our efforts and the innovation of employing information-theoretic measures to elucidate the brain processes underlying the multisensory integration of gesture and speech.

Weaknesses:

The "mutual information" cannot fully capture the interplay of the meaning of speech and gesture. The mutual information is calculated based on what information can be decoded from speech alone and what information can be decoded from gesture alone. However, when speech and gesture are combined, a novel meaning can emerge, which cannot be decoded from a single modality alone. For example, a person produces a gesture of writing something with a pen, while saying "He paid". The speech-gesture combination can be interpreted as "paying by signing a cheque". It is highly unlikely that this meaning is decoded when people hear speech only or see gestures only. The current study cannot address how such speech-gesture integration occurs in the brain, and what ERP effects may reflect such a process. Future studies can classify different types of speech-gesture integration and investigate neural processes that underlie each type. Another important topic for future studies is to investigate how the neural processes of speech-gesture integration change when the relative timing between the speech stimulus and the gesture stimulus changes.

We greatly appreciate Reviewer 2’s thoughtful concern regarding whether "mutual information" adequately captures the interplay between the meanings of speech and gesture. We would like to clarify that the materials used in the present study involved gestures that were performed without actual objects, paired with verbs that precisely describe the corresponding actions. For example, a hammering gesture was paired with the verb “hammer”, and a cutting gesture was paired with the verb “cut”. In this design, all gestures conveyed redundant information relative to the co-occurring speech, creating significant overlap between the information derived from speech alone and that from gesture alone.

We understand the reviewer’s concern about cases where gestures and speech might provide complementary, rather than redundant, information. To address this, we have developed an alternative metric for quantifying information gains contributed by supplementary multisensory cues, which will be explored in a subsequent study. However, for the present study, we believe that the observed overlap in information serves as a key indicator of multisensory convergence, a central focus of our investigation.

Regarding the reviewer’s concern about how neural processes of speech-gesture integration may change with varying relative timing between speech and gesture stimuli, we would like to highlight findings from our previous study (Zhao, 2023, Frontiers in Psychology). In that study, we explored the semantic predictive role of gestures relative to speech under two timing conditions: (1) gestures preceding speech by a fixed interval of 200 ms, and (2) gestures preceding speech at its semantic identification point. Interestingly, only in the second condition did we observe time-window-selective disruptions of the semantic congruency effect in the IFG and pMTG. This led us to conclude that gestures play a semantic priming role for co-occurring speech. Building on this, we designed the present study with gestures deliberately preceding speech at its semantic identification point to reflect this semantic priming relationship. Additionally, ongoing research in our lab is exploring gesture and speech interactions in natural conversational settings to investigate whether the neural processes identified here remain consistent across varying contexts.

To address potential concerns and ensure clarity regarding the limitations of the MI measurement, we have included a discussion of this in the revised manuscript in Lines 543-547: ‘Furthermore, MI quantifies overlap in gesture-speech integration, primarily when gestures convey redundant meaning. Consequently, the conclusions drawn in this study are constrained to contexts in which gestures serve to reinforce the meaning of the speech. Future research should aim to explore the neural responses in cases where gestures convey supplementary, rather than redundant, semantic information.’ This is followed by a clarification of the timing relationship between gesture and speech: ‘Note that the sequential cortical involvement and ERP components discussed above are derived from a deliberate alignment of speech onset with gesture DP, creating an artificial priming effect with gesture semantically preceding speech. Caution is advised when generalizing these findings to the spontaneous gesture-speech relationships, although gestures naturally precede speech[34].’ (Lines 539-543).

Reviewer #3 (Public review):

In this useful study, Zhao et al. try to extend the evidence for their previously described two-step model of speech-gesture integration in the posterior Middle Temporal Gyrus (pMTG) and Inferior Frontal Gyrus (IFG). They repeat some of their previous experimental paradigms, but this time quantifying Information-Theoretical (IT) metrics of the stimuli in a Stroop-like paradigm purported to engage speech-gesture integration. They then correlate these metrics with the disruption of what they claim to be an integration effect observable in reaction times during the tasks following brain stimulation, as well as documenting the ERP components in response to the variability in these metrics.

The integration of multiple methods, like tDCS, TMS, and ERPs to provide converging evidence renders the results solid. However, their interpretation of the results should be taken with care, as some critical confounds, like difficulty, were not accounted for, and the conceptual link between the IT metrics and what the authors claim they index is tenuous and in need of more evidence. In some cases, the difficulty making this link seems to arise from conceptual equivocation (e.g., their claims regarding 'graded' evidence), whilst in some others it might arise from the usage of unclear wording in the writing of the manuscript (e.g. the sentence 'quantitatively functional mental states defined by a specific parser unified by statistical regularities'). Having said that, the authors' aim is valuable, and addressing these issues would render the work a very useful approach to improve our understanding of integration during semantic processing, being of interest to scientists working in cognitive neuroscience and neuroimaging.

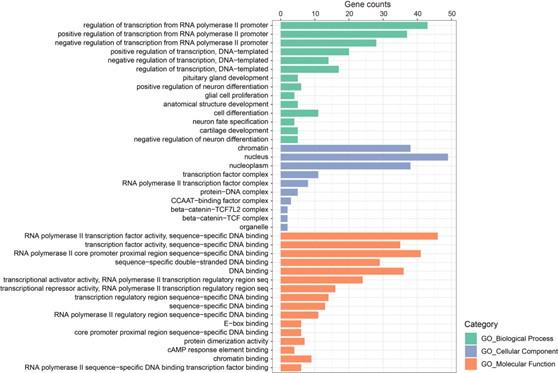

The main hurdle to achieving the aims set by the authors is the presence of the confound of difficulty in their IT metrics. Their measure of entropy, for example, being derived from the distribution of responses of the participants to the stimuli, will tend to be high for words or gestures with multiple competing candidate representations (this is what would presumptively give rise to the diversity of responses in high-entropy items). There is ample evidence implicating IFG and pMTG as key regions of the semantic control network, which is critical during difficult semantic processing when, for example, semantic processing must resolve competition between multiple candidate representations, or when there are increased selection pressures (Jackson et al., 2021). Thus, the authors' interpretation of Mutual Information (MI) as an index of integration is inextricably contaminated with difficulty arising from multiple candidate representations. This casts doubt on the claims of the role of pMTG and IFG as regions carrying out gesture-speech integration as the observed pattern of results could also be interpreted in terms of brain stimulation interrupting the semantic control network's ability to select the best candidate for a given context or respond to more demanding semantic processing.

Response 1: We sincerely thank the reviewer for pointing out the confound of difficulty. The primary aim of this study is to investigate whether the degree of activity in the established integration hubs, IFG and pMTG, is influenced by the information provided by gesture-speech modalities and/or their interactions. While we provided evidence for the differential involvement of the IFG and pMTG by delineating their dynamic engagement across distinct time windows of gesture-speech integration and associating these patterns with unisensory information and their interaction, we acknowledge that the mechanisms underlying these dynamics remain open to interpretation. Specifically, whether the observed effects stem from difficulties in semantic control processes, as suggested by the reviewer, or from resolving information uncertainty, as quantified by entropy, falls outside the scope of the current study. Importantly, we view these two interpretations as complementary rather than mutually exclusive, as both may be contributing factors. Nonetheless, we agree that addressing this question is a compelling avenue for future research.

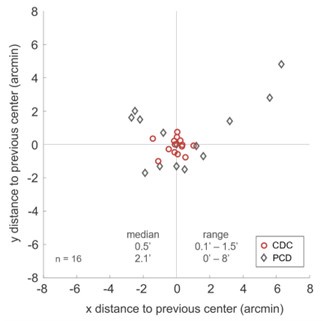

In the revised manuscript, we have included an additional analysis to assess whether the confounding effects of lexical or semantic control difficulty—specifically, the number of available responses—affect the neural outcomes. To address this, we performed partial correlation analyses, controlling for the number of responses.

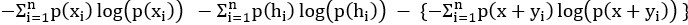

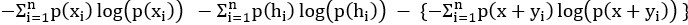

We would like to clarify an important distinction between the measure of entropy derived from the distribution of responses and the concept of response diversity. Entropy, in our analysis, is computed based on the probability distribution of each response, as captured by the information entropy formula. In contrast, response diversity refers to the simple count of different responses provided. Mutual Information (MI), by its nature, is also an entropy measure, quantifying the overlap in responses. For reference, although we observed a high correlation between the three information matrices and the number of responses (gesture entropy & gesture response number: r = 0.976, p < 0.001; speech entropy & speech response number: r = 0.961, p < 0.001; MI & total response number: r = 0.818, p < 0.001), it is crucial to emphasize that these metrics capture different aspects of the semantic information represented. In the revised manuscript, we have provided a table detailing both entropy and response numbers for each stimulus, to allow for greater transparency and clarity.
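The distinction drawn in this paragraph can be made concrete with a small worked example. The sketch below is illustrative only: the base-2 logarithm, the function names, and the toy response lists are assumptions rather than details of the manuscript's computation.

```python
import math
from collections import Counter

def shannon_entropy(responses):
    """Entropy (in bits, assuming log base 2) of the response probability distribution."""
    counts = Counter(responses)
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())

def response_number(responses):
    """Response diversity: the simple count of distinct responses."""
    return len(set(responses))

# Two hypothetical items with the same number of distinct responses (two each)
even = ["cut", "cut", "saw", "saw"]    # responses split evenly
skewed = ["cut", "cut", "cut", "saw"]  # one response dominates
```

Here both items have a response number of 2, yet `shannon_entropy(even)` is 1 bit while `shannon_entropy(skewed)` is lower, because entropy weights each response by its probability. The two measures therefore tend to be highly correlated across items without being identical.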

Furthermore, we have added a comprehensive description of the partial correlation analysis conducted across all three experiments in the methodology section: for Experiment 1, please refer to Lines 213–222: ‘To account for potential confounds related to multiple candidate representations, we conducted partial correlation analyses between the tDCS effects and gesture entropy, speech entropy, and MI, controlling for the number of responses provided for each gesture and speech, as well as the total number of combined responses. Given that HD-tDCS induces overall disruption at the targeted brain regions, we hypothesized that the neural activity within the left IFG and pMTG would be progressively affected by varying levels of multisensory convergence, as indexed by MI. Moreover, we hypothesized that the modulation of neural activity by MI would differ between the left IFG and pMTG, as reflected in the differential modulation of response numbers in the partial correlations, highlighting their distinct roles in semantic processing[37].’

Experiment 2: ‘To control for potential confounds, partial correlations were also performed between the TMS effects and gesture entropy, speech entropy, and MI, controlling for the number of responses for each gesture and speech, as well as the total number of combined responses. By doing this, we can determine how the time-sensitive contribution of the left IFG and pMTG to gesture–speech integration was affected by gesture and speech information distribution.’ (Lines 242–246).

Experiment 3: ‘Additionally, partial correlations were conducted, accounting for the number of responses for each respective metric’ (Lines 292–293).
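The partial correlations described for the three experiments can be illustrated with a minimal sketch. It assumes a single covariate (for example, the number of responses) and uses the residual method; the function name and this particular formulation are hypothetical and do not reproduce the software actually used in the manuscript.

```python
import numpy as np

def partial_corr(x, y, covar):
    """Correlation between x and y after regressing the covariate out of both."""
    x, y, covar = (np.asarray(v, dtype=float) for v in (x, y, covar))
    # Design matrix with an intercept and the covariate.
    design = np.column_stack([np.ones_like(covar), covar])
    res_x = x - design @ np.linalg.lstsq(design, x, rcond=None)[0]
    res_y = y - design @ np.linalg.lstsq(design, y, rcond=None)[0]
    return np.corrcoef(res_x, res_y)[0, 1]
```

If a raw correlation between a stimulation effect and an information metric is significant but the corresponding partial correlation is not, the shared variance is attributable, at least in part, to the covariate. That is the reasoning applied to the results reported below.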

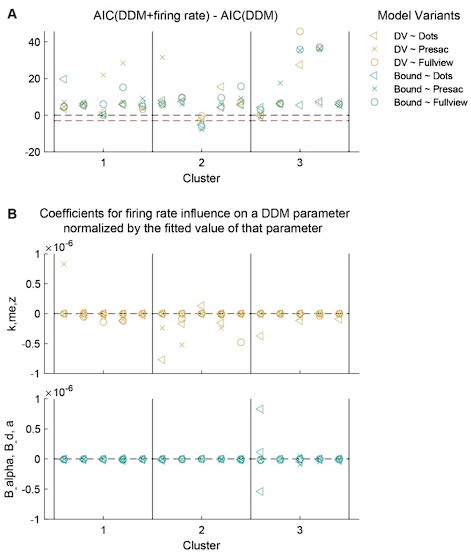

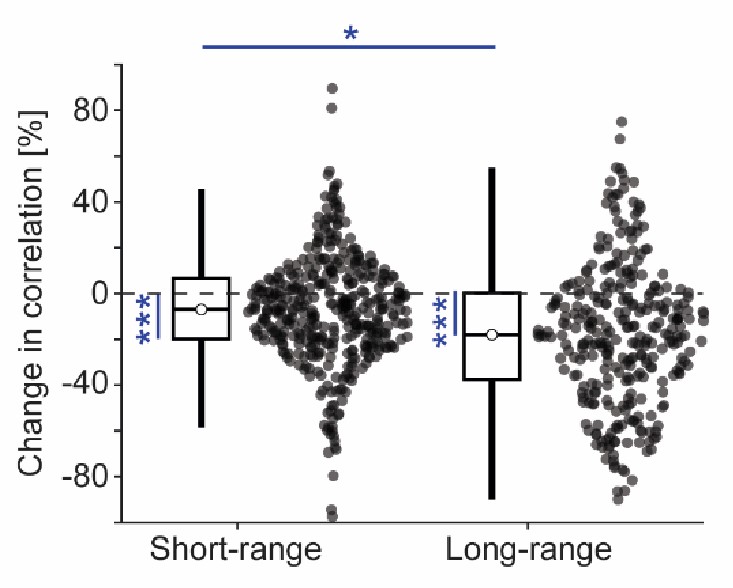

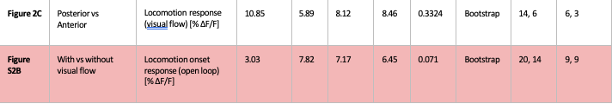

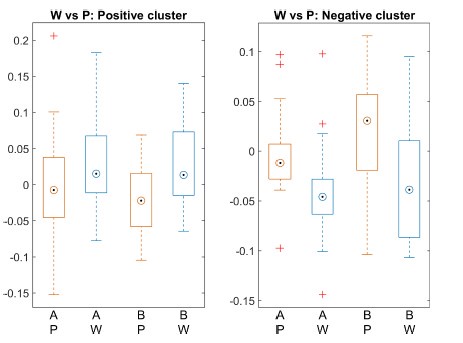

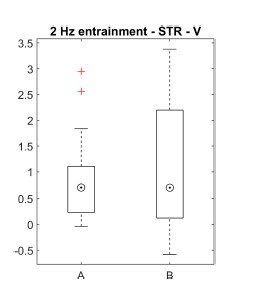

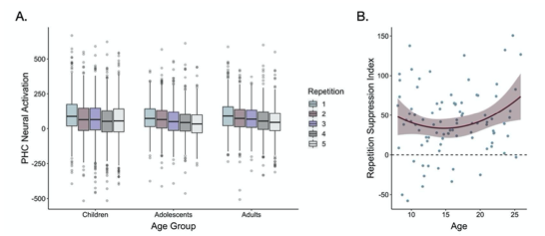

As anticipated by the reviewer, we observed a consistent modulation of response numbers across both regions as well as across the four ERP components and associated clusters. The detailed results are presented below:

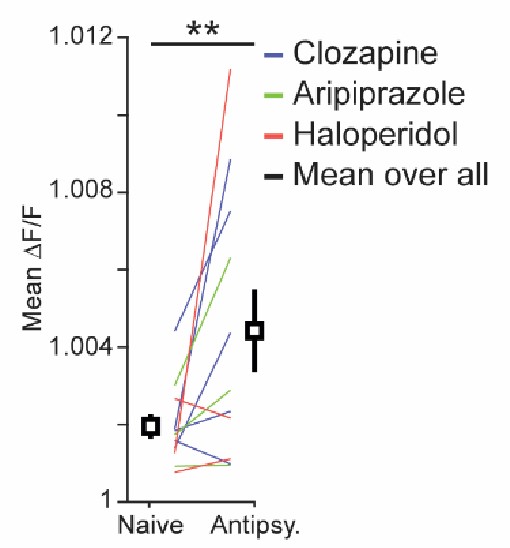

Experiment 1: ‘However, partial correlation analysis, controlling for the total response number, revealed that the initially significant correlation between the Cathodal-tDCS effect and MI was no longer significant (r = -0.303, p = 0.222, 95% CI = [-0.770, 0.164]). This suggests that the observed relationship between Cathodal-tDCS and MI may be confounded by semantic control difficulty, as reflected by the total number of responses. Specifically, the reduced activity in the IFG under Cathodal-tDCS may be driven by variations in the difficulty of semantic control rather than a direct modulation of MI.’ (Lines 310-316) and ‘Importantly, the reduced activity in the pMTG under Cathodal-tDCS was not influenced by the total response number, as indicated by the non-significant correlation (r = -0.253, p = 0.295, 95% CI = [-0.735, 0.229]). This finding was further corroborated by the unchanged significance in the partial correlation between Cathodal-tDCS and MI, when controlling for the total response number (r = -0.472, p = 0.048, 95% CI = [-0.903, -0.041]).’ (Lines 324-328).

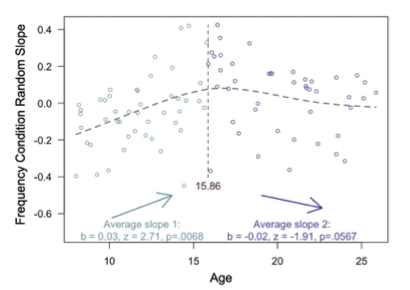

Experiment 2: ‘Notably, inhibition of pMTG activity in TW2 was not influenced by the number of speech responses (r = -0.539, p = 0.087, 95% CI = [-1.145, 0.067]). However, the number of speech responses did affect the modulation of speech entropy on the pMTG inhibition effect in TW2. This was evidenced by the non-significant partial correlation between pMTG inhibition and speech entropy when controlling for speech response number (r = -0.218, p = 0.545, 95% CI = [-0.563, 0.127]).

In contrast, the interrupted IFG activity in TW6 appeared to be consistently influenced by the confound of semantic control difficulty. This was reflected in the significant correlations with gesture response number (r = -0.480, p = 0.032, 95% CI = [-0.904, -0.056]), speech response number (r = -0.729, p = 0.011, 95% CI = [-1.221, -0.237]), and total response number (r = -0.591, p = 0.008, 95% CI = [-0.993, -0.189]). Additionally, partial correlation analyses revealed non-significant relationships between interrupted IFG activity in TW6 and gesture entropy (r = -0.369, p = 0.120, 95% CI = [-0.810, -0.072]), speech entropy (r = -0.455, p = 0.187, 95% CI = [-1.072, 0.162]), and MI (r = -0.410, p = 0.091, 95% CI = [-0.856, -0.036]) when controlling for response numbers.’ (Lines 349-363)

Experiment 3: ‘To clarify potential confounds of semantic control difficulty, partial correlation analyses were conducted to examine the relationship between the elicited ERP components and the relevant information matrices, controlling for response numbers. Results consistently indicated modulation by response numbers in the relationship of ERP components with the information matrix, as evidenced by the non-significant partial correlations between the P1 amplitude (P1 component over ML: r = -0.574, p = 0.082, 95% CI = [-1.141, -0.007]) and the P1 cluster (r = -0.503, p = 0.138, 95% CI = [-1.102, 0.096]) with speech entropy; the N1-P2 amplitude (N1-P2 component over LA: r = -0.080, p = 0.746, 95% CI = [-0.554, 0.394]) and N1-P2 cluster (r = -0.179, p = 0.464, 95% CI = [-0.647, 0.289]) with gesture entropy; the N400 amplitude (N400 component over LA: r = 0.264, p = 0.247, 95% CI = [-0.195, 0.723]) and N400 cluster (r = 0.394, p = 0.095, 95% CI = [-0.043, 0.831]) with gesture entropy; the N400 amplitude (N400 component over LA: r = -0.134, p = 0.595, 95% CI = [-0.620, 0.352]) and N400 cluster (r = -0.034, p = 0.894, 95% CI = [-0.524, 0.456]) with MI; and the LPC amplitude (LPC component over LA: r = -0.428, p = 0.217, 95% CI = [-1.054, 0.198]) and LPC cluster (r = -0.202, p = 0.575, 95% CI = [-0.881, 0.477]) with speech entropy.’ (Lines 424-438)

Based on the above results, we conclude that there is a dynamic interplay between the difficulty of semantic representation and the control pressures that shape the resulting neural responses. Furthermore, while the role of the IFG in control processes remains consistent, the present study reveals a more segmented role for the pMTG. Specifically, although the pMTG is well-established in the processing of distributed speech information, the integration of multisensory convergence, as indexed by MI, did not elicit the same control-related modulation in pMTG activity. A comprehensive discussion of the control process in shaping neural responses, as well as the specific roles of the IFG and pMTG in this process, is provided in the Discussion section (Lines 493-511): ‘Given that control processes are intrinsically integrated with semantic processing50, a distributed semantic representation enables dynamic modulation of access to and manipulation of meaningful information, thereby facilitating flexible control over the diverse possibilities inherent in a concept. Accordingly, an increased number of candidate responses amplifies the control demands necessary to resolve competing semantic representations. This effect was observed in the present study, where the association of the information matrix with the tDCS effect in IFG, the inhibition of pMTG activity in TW2, disruption of IFG activity in TW6, and modulation of four distinct ERP components collectively demonstrated that response quantity modulated neural activity. These results underscore the intricate interplay between the difficulty of semantic representation and the control pressures that shape the resulting neural responses.

The IFG and pMTG, central components of the semantic control network, have been extensively implicated in previous research 50-52. While the role of the IFG in managing both unisensory information and multisensory convergence remains consistent, as evidenced by the confounding difficulty results across Experiments 1 and 2, the current study highlights a more context-dependent function for the pMTG. Specifically, although the pMTG is well-established in the processing of distributed speech information, the multisensory convergence, indexed by MI, did not evoke the same control-related modulation in pMTG activity. These findings suggest that, while the pMTG is critical to semantic processing, its engagement in control processes is likely modulated by the specific nature of the sensory inputs involved.’

Reference:

Tesink, C.M.J.Y., Petersson, K.M., van Berkum, J.J.A., van den Brink, D., Buitelaar, J.K., and Hagoort, P. (2009). Unification of speaker and meaning in language comprehension: An fMRI study. J Cognitive Neurosci 21, 2085-2099. 10.1162/jocn.2008.21161

Jackson, R.L. (2021). The neural correlates of semantic control revisited. Neuroimage 224, 117444. 10.1016/j.neuroimage.2020.117444.

Jefferies, E. (2013). The neural basis of semantic cognition: converging evidence from neuropsychology, neuroimaging and TMS. Cortex 49, 611-625. 10.1016/j.cortex.2012.10.008.

Noonan, K.A., Jefferies, E., Visser, M., and Lambon Ralph, M.A. (2013). Going beyond inferior prefrontal involvement in semantic control: evidence for the additional contribution of dorsal angular gyrus and posterior middle temporal cortex. J Cogn Neurosci 25, 1824-1850. 10.1162/jocn_a_00442.

In terms of conceptual equivocation, the use of the term 'graded' by the authors seems to be different from the usage commonly employed in the semantic cognition literature (e.g., the 'graded hub hypothesis', Rice et al., 2015). The idea of a graded hub in the controlled semantic cognition framework (i.e., the anterior temporal lobe) refers to a progressive degree of abstraction or heteromodal information as you progress through the anatomy of the region (i.e., along the dorsal-to-ventral axis). The authors, on the other hand, seem to refer to 'graded manner' in the context of a correlation of entropy or MI and the change in the difference between Reaction Times (RTs) of semantically congruent vs incongruent gesture-speech. The issue is that the discourse through parts of the introduction and discussion seems to conflate both interpretations, and the ideas in the main text do not correspond to the references they cite. This is not overall very convincing. What is it exactly the authors are arguing about the correlation between RTs and MI indexes? As stated above, their measure of entropy captures the spread of responses, which could also be a measure of item difficulty (more diverse responses imply fewer correct responses, a classic index of difficulty). Capturing the diversity of responses means that items with high entropy scores are also likely to have multiple candidate representations, leading to increased selection pressures. Regions like pMTG and IFG have been widely implicated in difficult semantic processing and increased selection pressures (Jackson et al., 2021). How is this MI correlation evidence of integration that proceeds in a 'graded manner'? The conceptual links between these concepts must be made clearer for the interpretation to be convincing.

Response 2: Regarding the concern of conceptual equivocation, we would like to emphasize that this study represents the first attempt to focus on the relationship between information quantity and neural engagement, a question addressed in three experiments. Experiment 1 (HD-tDCS) targeted the entire gesture-speech integration process in the IFG and pMTG to assess whether neural activity in these regions, previously identified as integration hubs, is modulated by changes in informativeness from both modalities (i.e., entropy) and their interactions (MI). The results revealed a gradual inhibition of neural activity in both areas as MI increased, evidenced by a negative correlation between MI and the tDCS inhibition effect in both regions. Building on this, Experiments 2 and 3 employed double-pulse TMS and ERPs to further assess whether the engaged neural activity was both time-sensitive and staged. These experiments also evaluated the contributions of various sources of information, revealing correlations between information-theoretic metrics and time-locked brain activity, providing insights into the ‘gradual’ nature of gesture-speech integration.

Therefore, the incremental engagement of the IFG and pMTG integration hubs as a function of the informativeness of gesture and speech during multisensory integration differs from the "graded hub," which refers to anatomical distribution. We sincerely apologize for this oversight. In the revised manuscript, we have revised the relevant passage to remove this equivocation (Lines 44-60): ‘Consensus acknowledges the presence of 'convergence zones' within the temporal and inferior parietal areas [1], or the 'semantic hub' located in the anterior temporal lobe[2], pivotal for integrating, converging, or distilling multimodal inputs. Contemporary theories frame the semantic processing as a dynamic sequence of neural states[3], shaped by systems that are finely tuned to the statistical regularities inherent in sensory inputs[4]. These regularities enable the brain to evaluate, weight, and integrate multisensory information, optimizing the reliability of individual sensory signals[5]. However, sensory inputs available to the brain are often incomplete and uncertain, necessitating adaptive neural adjustments to resolve these ambiguities [6]. In this context, neuronal activity is thought to be linked to the probability density of sensory information, with higher levels of uncertainty resulting in the engagement of a broader population of neurons, thereby reflecting the brain’s adaptive capacity to handle diverse possible interpretations[7,8]. Although the role of 'convergence zones' and 'semantic hubs' in integrating multimodal inputs is well established, the precise functional patterns of neural activity in response to the distribution of unified multisensory information, along with the influence of unisensory signals, remain poorly understood.

To this end, we developed an analytic approach to directly probe the cortical engagement during multisensory gesture-speech semantic integration.’

Furthermore, in the Discussion section, we have replaced the term 'graded' with 'incremental' (Line 456). Additionally, we have included a discussion on the progressive nature of neural engagement, as evidenced by the correlation between RTs and MI indices in Lines 483-492: ‘The varying contributions of unisensory gesture-speech information and the convergence of multisensory inputs, as reflected in the correlation between distinct ERP components and TMS time windows (TMS TWs), are consistent with recent models suggesting that multisensory processing involves parallel detection of modality-specific information and hierarchical integration across multiple neural levels[4,48]. These processes are further characterized by coordination across multiple temporal scales[49]. Building on this, the present study offers additional evidence that the multi-level nature of gesture-speech processing is statistically structured, as measured by information matrix of unisensory entropy and multisensory convergence index of MI, the input of either source would activate a distributed representation, resulting in progressively functioning neural responses.’

Reference:

Damasio, H., Grabowski, T.J., Tranel, D., Hichwa, R.D., and Damasio, A.R. (1996). A neural basis for lexical retrieval. Nature 380, 499-505. 10.1038/380499a0.

Patterson, K., Nestor, P.J., and Rogers, T.T. (2007). Where do you know what you know? The representation of semantic knowledge in the human brain. Nature Reviews Neuroscience 8, 976-987. 10.1038/nrn2277.

Brennan, J.R., Stabler, E.P., Van Wagenen, S.E., Luh, W.M., and Hale, J.T. (2016). Abstract linguistic structure correlates with temporal activity during naturalistic comprehension. Brain and Language 157, 81-94. 10.1016/j.bandl.2016.04.008.

Benetti, S., Ferrari, A., and Pavani, F. (2023). Multimodal processing in face-to-face interactions: A bridging link between psycholinguistics and sensory neuroscience. Front Hum Neurosci 17, 1108354. 10.3389/fnhum.2023.1108354.

Noppeney, U. (2021). Perceptual Inference, Learning, and Attention in a Multisensory World. Annu Rev Neurosci 44, 449-473. 10.1146/annurev-neuro-100120-085519.

Ma, W.J., and Jazayeri, M. (2014). Neural coding of uncertainty and probability. Annu Rev Neurosci 37, 205-220. 10.1146/annurev-neuro-071013-014017.

Fischer, B.J., and Pena, J.L. (2011). Owl's behavior and neural representation predicted by Bayesian inference. Nat Neurosci 14, 1061-1066. 10.1038/nn.2872.

Ganguli, D., and Simoncelli, E.P. (2014). Efficient sensory encoding and Bayesian inference with heterogeneous neural populations. Neural Comput 26, 2103-2134. 10.1162/NECO_a_00638.

Meijer, G.T., Mertens, P.E.C., Pennartz, C.M.A., Olcese, U., and Lansink, C.S. (2019). The circuit architecture of cortical multisensory processing: Distinct functions jointly operating within a common anatomical network. Prog Neurobiol 174, 1-15. 10.1016/j.pneurobio.2019.01.004.

Senkowski, D., and Engel, A.K. (2024). Multi-timescale neural dynamics for multisensory integration. Nat Rev Neurosci 25, 625-642. 10.1038/s41583-024-00845-7.

Reviewer #2 (Recommendations for the authors):

I have a number of small suggestions to make the paper easier to understand.

We sincerely thank the reviewer for their careful reading and thoughtful consideration. All suggestions have been thoroughly addressed and incorporated into the revised manuscript.

(1) Lines 86-87, please clarify whether "chronometric double-pulse TMS" should lead to either excitation or inhibition of neural activities

Double-pulse TMS elicits inhibition of neural activities (see responses to editors), which has been clarified in the revised manuscript in Lines 90-93: ‘we applied inhibitory chronometric double-pulse transcranial magnetic stimulation (TMS) to specific temporal windows associated with integration processes in these regions[23], assessing whether the inhibitory effects of TMS were correlated with unisensory entropy or the multisensory convergence index (MI)’

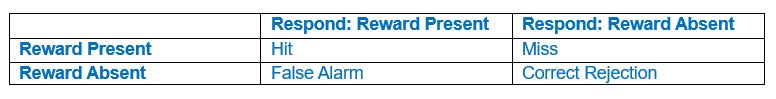

(2) Line 106 "validated by replicating the semantic congruencey effect". Please specify what the task was in the validation study.

The description of the validation task has been added in Lines 116-119: ‘To validate the stimuli, 30 participants were recruited to replicate the multisensory index of semantic congruency effect, hypothesizing that reaction times for semantically incongruent gesture-speech pairs would be significantly longer than those for congruent pairs.’

(3) Line 112. "30 subjects". Are they Chinese speakers?

Yes, all participants in the present study, including those in the pre-tests, are native Chinese speakers.

(4) Line 122, "responses for each item" Please specify whether you mean here "the comprehensive answer" as you defined in 118-119.

Yes, and this information has been added in Lines 136-137: ‘comprehensive responses for each item were converted into Shannon's entropy (H)’

(5) Line 163 "one of three stimulus types (Anodal, Cathodal or Sham)". Please specify whether the order of the three conditions was counterbalanced across participants. Or, whether the order was fixed for all participants.

The order of the three conditions was counterbalanced across participants. A clearer description has been added to the revised manuscript in Lines 184-189: ‘Participants were divided into two groups, with each group undergoing HD-tDCS stimulation at different target sites (IFG or pMTG). Each participant completed three experimental sessions, spaced one week apart, during which 480 gesture-speech pairs were presented across various conditions. In each session, participants received one of three types of HD-tDCS stimulation: Anodal, Cathodal, or Sham. The order of stimulation site and type was counterbalanced using a Latin square design to control for potential order effects.’
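As an illustration of the counterbalancing described above, the following is a minimal sketch of a cyclic Latin square over the three stimulation types. The function names and the rule of assigning participants to rows by index are hypothetical, and the sketch covers stimulation type only; it is not the assignment procedure actually used in the study.

```python
from typing import List


def latin_square_orders(conditions: List[str]) -> List[List[str]]:
    """Build a cyclic Latin square: row i is the condition list rotated by i.

    Each condition then appears exactly once in every session position.
    """
    n = len(conditions)
    return [conditions[i:] + conditions[:i] for i in range(n)]


def session_order(participant_index: int, conditions: List[str]) -> List[str]:
    """Pick a row of the square for a participant (hypothetical assignment rule)."""
    orders = latin_square_orders(conditions)
    return orders[participant_index % len(orders)]


STIMULATION_TYPES = ["Anodal", "Cathodal", "Sham"]

if __name__ == "__main__":
    for participant in range(6):
        print(participant, session_order(participant, STIMULATION_TYPES))
```

With three conditions, every block of three consecutive participants covers all three rows, so each stimulation type occurs equally often in the first, second, and third session.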

(6) Line 191-192, "difference in reaction time between semantic incongruence and semantic congruent pairs)" Here, please specify which reaction time was subtracted from which one. This information is very crucial; without it, you cannot interpret your graphs.

(17) Figure 3. Figure caption for (A). "The semantic congruence effect was calculated as the reaction time difference between...". You need to specify which condition was subtracted from what condition; otherwise, you cannot interpret this figure. "difference" is too ambiguous.

Corrections have been made in the revised manuscript in Lines 208-211: ‘Neural responses were quantified based on the effects of HD-tDCS (active tDCS minus sham tDCS) on the semantic congruency effect, defined as the difference in reaction times between semantic incongruent and congruent conditions (Rt(incongruent) - Rt(congruent))’ and Lines 796-798: ‘The semantic congruency effect was calculated as the reaction time (RT) difference between semantically incongruent and semantically congruent pairs (Rt(incongruent) - Rt(congruent))’.
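The two subtractions quoted above can be sketched as follows. This is an illustrative example only: the function names are hypothetical, the use of mean reaction times per condition is an assumption, and the numbers in the usage example are invented.

```python
from statistics import mean
from typing import Sequence


def semantic_congruency_effect(rt_incongruent: Sequence[float],
                               rt_congruent: Sequence[float]) -> float:
    """Rt(incongruent) - Rt(congruent), using mean RT per condition (assumption)."""
    return mean(rt_incongruent) - mean(rt_congruent)


def stimulation_effect(effect_active: float, effect_sham: float) -> float:
    """Active tDCS minus sham tDCS, applied to the congruency effect."""
    return effect_active - effect_sham


if __name__ == "__main__":
    # Invented reaction times in milliseconds, for illustration only.
    sham = semantic_congruency_effect([640.0, 660.0], [590.0, 610.0])
    cathodal = semantic_congruency_effect([630.0, 650.0], [600.0, 620.0])
    print(stimulation_effect(cathodal, sham))
```

Under this sign convention, a positive congruency effect means slower responses to incongruent pairs, and a negative stimulation effect means the congruency effect was smaller under active stimulation than under sham.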

(7) Line 363 "progressive inhibition of IFG and pMTG by HD-tDCS as the degree of gesture-speech interaction, indexed by MI, advanced." This sentence is very hard to follow. I don't understand what part of the data in Figure 3 speaks to "inhibition of IFG". And what is "HD-tDCS"? I think it is easier to read if you talk about correlation (not "progressive" and "advanced").

High-Definition transcranial direct current stimulation (HD-tDCS) was applied to modulate the activity of pMTG and IFG, with cathodal stimulation inducing inhibitory effects and anodal stimulation facilitating neural activity. In Figure 3, we examined the relationship between the tDCS effects on pMTG and IFG and the three information metrics (gesture entropy, speech entropy, and MI). Our results revealed significant correlations between MI and the cathodal-tDCS effects in both regions. We acknowledge that the original phrasing may have been unclear, and in the revised manuscript we have provided a more explicit explanation in Lines 443-445: ‘Our results, for the first time, revealed that the inhibition effect of cathodal-tDCS on the pMTG and IFG correlated with the degree of gesture-speech multisensory convergence, as indexed by MI’.

(8) Lines 367-368 I don't understand why gesture is top down and speech is bottom up. Is that because gesture precedes speech (gesture is interpretable at the point of speech onset)?

Yes, since we employed a semantic priming paradigm by aligning speech onset with the gesture comprehension point, we interpret the gesture-speech integration process as an interaction between the top-down prediction from gestures and the bottom-up processing of speech. In the revised manuscript, we have provided a clearer and more coherent description that aligns with the results. Lines 445-449: ‘Moreover, the gradual neural engagement was found to be time-sensitive and staged, as evidenced by the selectively interrupted time windows (Experiment 2) and the distinct correlated ERP components (Experiment 3), which were modulated by different information contributors, including unisensory entropy or multisensory MI’

(9) Line 380 - 381. Can you spell out "TW" and "IP"?

(16) Line 448, NIBS, Please spell out "NIBS".

"TW" has been spelled out in Line 459: ‘time windows (TW)’, and "IP" in Line 460: ‘identification point (IP)’. The term "NIBS" was replaced with "HD-tDCS and TMS" to specify the techniques employed more clearly: ‘Consistent with this, the present study provides robust evidence, through the application of HD-tDCS and TMS, that the integration hubs for gesture and speech, the pMTG and IFG, operate in an incremental manner.’ (Lines 454-457).

(10) Line 419, The higher certainty of gesture => The higher the certainty of gesture is

(13) Line 428, "a larger MI" => "a larger MI is"

(12) Line 427-428, "the larger overlapped neural populations" => "the larger, the overlapped neural populations"

Changes have been made in Line 522: ‘The higher the certainty of gesture is’, Line 531: ‘a larger MI is’, and Line 530: ‘the larger, overlapped neural populations’.

(11) Line 423 "Greater TMS effect over the IFG" Can you describe the TMS effect?

The TMS effect is now described as ‘Greater TMS inhibitory effect’ (Line 526).

(14) Line 423 "reweighting effect" What is this? Please describe (and say which experiment it is about).

A clearer description has been provided in Lines 535-538: ‘As speech entropy increases, indicating greater uncertainty in the information provided by speech, more cognitive effort is directed towards selecting the targeted semantic representation. This leads to enhanced involvement of the IFG and a corresponding reduction in LPC amplitude’.

(15) Line 437 "the graded functionality of every disturbed period is not guaranteed" (I don't understand this sentence).

A clearer description has been provided in Lines 552-557: ‘Additionally, not all influenced TWs exhibited significant associations with entropy and MI. While HD-tDCS and TMS may impact functionally and anatomically connected brain regions[55,56], whether the absence of influence in certain TWs can be attributed to compensation by other connected brain areas, such as angular gyrus[57] or anterior temporal lobe[58], warrants further investigation. Therefore, caution is needed when interpreting the causal relationship between inhibition effects of brain stimulation and information-theoretic metrics (entropy and MI).’

References:

Humphreys, G. F., Lambon Ralph, M. A., & Simons, J. S. (2021). A Unifying Account of Angular Gyrus Contributions to Episodic and Semantic Cognition. Trends in neurosciences, 44(6), 452–463. https://doi.org/10.1016/j.tins.2021.01.006

Bonner, M. F., & Price, A. R. (2013). Where is the anterior temporal lobe and what does it do?. The Journal of neuroscience : the official journal of the Society for Neuroscience, 33(10), 4213–4215. https://doi.org/10.1523/JNEUROSCI.0041-13.2013

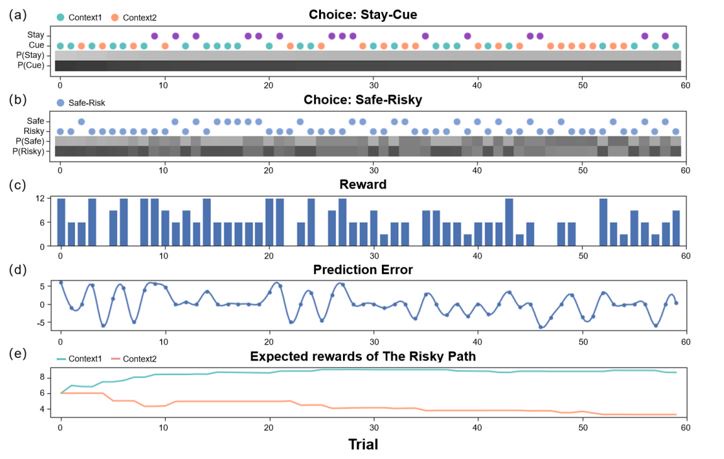

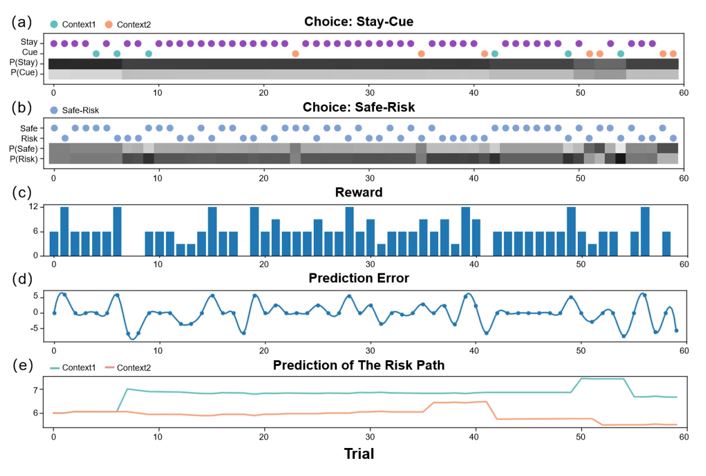

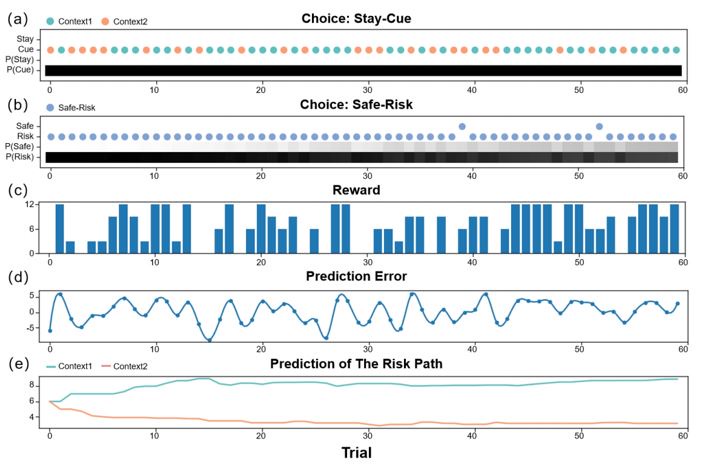

(18) Figure 4. "TW1", "TW2", etc. are not informative. Either replace them with the actual manuscript or add manuscript information (either in the graph itself or in the figure title).

Information has been added to the figure title, ‘Figure 4. TMS impacts on semantic congruency effect across various time windows (TW).’ (Line 804), and a detailed description of each time window has been included in Lines 805-807: ‘(A) Five time windows (TWs) showing selective disruption of gesture-speech integration were chosen: TW1 (-120 to -80 ms relative to speech identification point), TW2 (-80 to -40 ms), TW3 (-40 to 0 ms), TW6 (80 to 120 ms), and TW7 (120 to 160 ms).’

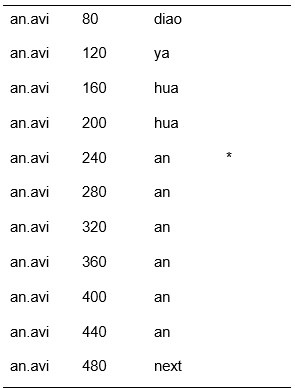

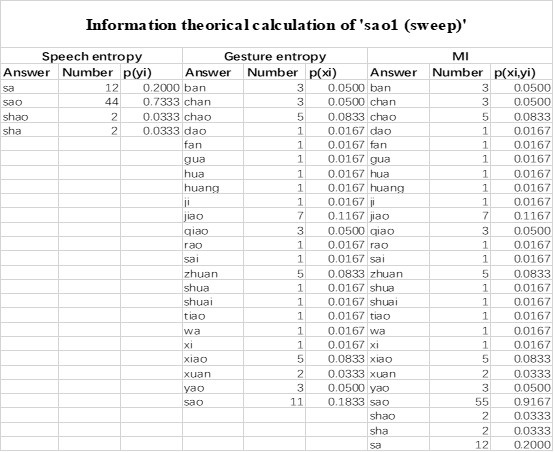

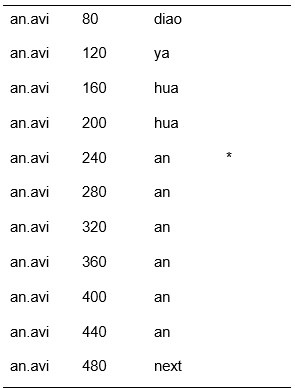

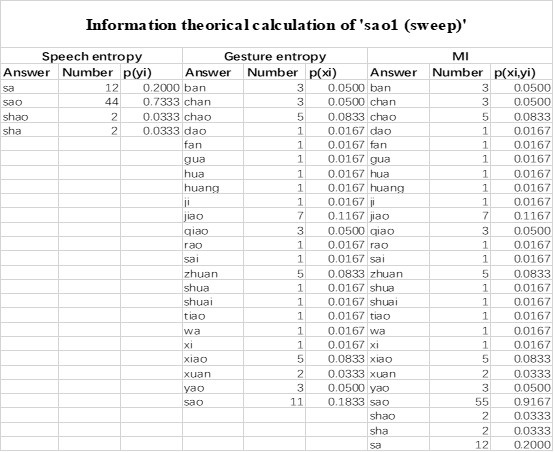

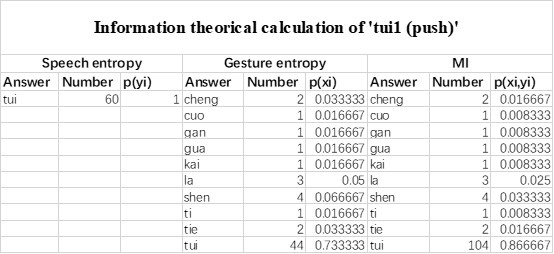

(19) Table 2C.

The last column is titled "p(xi, yi)". I don't understand why the authors use this label for this column.

In the formula, at the very end, there is "p(xi|yi). I wonder why it is p(xi|yi), as opposed to p(yi|xi).

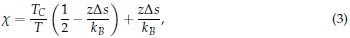

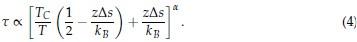

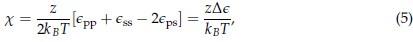

Mutual Information (MI) was calculated by subtracting the entropy of the combined gesture-speech dataset (Entropy(gesture + speech)) from the sum of the individual entropies of gesture and speech (Entropy(gesture) + Entropy(speech)). Thus, p(xi,yi) was intended to describe the entropy of the combined dataset. We acknowledge the potential ambiguity in the original description, and in the revised manuscript we have changed the notation p(xi,yi) to ‘p(xi+yi)’ in Table 2C (Line 848) and updated the corresponding MI equation. We have also provided a clear description of the MI calculation in Lines 143-146: ‘MI was used to measure the overlap between gesture and speech information, calculated by subtracting the entropy of the combined gesture-speech dataset (Entropy(gesture + speech)) from the sum of their individual entropies (Entropy(gesture) + Entropy(speech)) (see Appendix Table 2C)’.


Reviewer #3 (Recommendations for the authors):

(1) The authors should try and produce data showing that the confound of difficulty due to the number of lexical or semantic representations is not underlying high-entropy items if they wish to improve the credibility of their claim that the disruption of the congruency effect is due to speech-gesture integration. Additionally, they should provide more evidence either in the form of experiments or references to better justify why mutual information is an index for integration in the first place.

Response 1: An additional analysis has been conducted to assess whether the number of lexical or semantic representations affects the neural outcomes; please see Response 1 in the Responses to Reviewer 3 (public review) for details.

Mutual information (MI), a concept rooted in information theory, quantifies the reduction in uncertainty about one signal when the other is known, thereby capturing the statistical dependence between them. MI is calculated as the difference between the individual entropies of each signal and their joint entropy, which reflects the total uncertainty when both signals are considered together. This metric aligns with the core principle of multisensory integration: different modalities reduce uncertainty about each other by providing complementary, predictive information. Higher MI values signify that the integration of sensory signals results in a more coherent and unified representation, while lower MI values indicate less integration or greater divergence between the modalities. As such, MI serves as a robust and natural index for assessing the degree of multisensory integration.
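For reference, the textbook form of the relationship described above can be written as follows. The symbols X (gesture responses) and Y (speech responses) and the base-2 logarithm are notational assumptions made here for illustration; the manuscript's own notation is given in its Appendix Table 2.

```latex
H(X) = -\sum_{i} p(x_i)\,\log_2 p(x_i), \qquad
H(Y) = -\sum_{i} p(y_i)\,\log_2 p(y_i)

I(X;Y) = H(X) + H(Y) - H(X,Y)
```

When the two signals are statistically independent, H(X,Y) = H(X) + H(Y) and the mutual information is zero; any statistical dependence lowers the joint entropy and yields a positive value.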

To date, the use of MI as an index of integration has been limited, with one notable study by Tremblay et al. (2016), cited in the manuscript, using pointwise MI to quantify the extent to which two syllables mutually constrain each other. While MI has been extensively applied in natural language processing to measure the co-occurrence strength between words (e.g., Lin et al., 2012), its application as an index of multisensory convergence—particularly in the context of gesture-speech integration as employed in this study—is novel. In the revised manuscript, we have clarified the relationship between MI and multisensory convergence: ‘MI assesses share information between modalities[25],indicating multisensory convergence and acting as an index of gesture-speech integration’ (Lines 73-74).

Also, in our study, we calculated MI as per its original definition, by subtracting the entropy of the combined gesture-speech dataset from the sum of the individual entropies of gesture and speech. The detailed calculation method is provided in Lines 136-152: ‘To quantify information content, comprehensive responses for each item were converted into Shannon's entropy (H) as a measure of information richness (Figure 1A bottom). With no significant gender differences observed in both gesture (t(20) = 0.21, p = 0.84) and speech (t(20) = 0.52, p = 0.61), responses were aggregated across genders, resulting in 60 answers per item (Appendix Table 2). Here, p(xi) and p(yi) represent the distribution of 60 answers for a given gesture (Appendix Table 2B) and speech (Appendix Table 2A), respectively. High entropy indicates diverse answers, reflecting broad representation, while low entropy suggests focused lexical recognition for a specific item (Figure 2B). MI was used to measure the overlap between gesture and speech information, calculated by subtracting the entropy of the combined gesture-speech dataset (Entropy(gesture + speech)) from the sum of their individual entropies (Entropy(gesture) + Entropy(speech)) (see Appendix Table 2C). For specific gesture-speech combinations, equivalence between the combined entropy and the sum of individual entropies (gesture or speech) indicates absence of overlap in response sets. Conversely, significant overlap, denoted by a considerable number of shared responses between gesture and speech datasets, leads to a noticeable discrepancy between combined entropy and the sum of gesture and speech entropies. Elevated MI values thus signify substantial overlap, indicative of a robust mutual interaction between gesture and speech.’
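The entropy step quoted above can be illustrated with a minimal sketch. The function names, the base-2 logarithm, and the example answers are assumptions made for illustration. The entropy of the combined gesture-speech dataset is taken as an input here, because its exact construction is specified in Appendix Table 2C rather than in this response.

```python
import math
from collections import Counter
from typing import Iterable


def shannon_entropy(answers: Iterable[str]) -> float:
    """Shannon entropy (in bits) of the empirical distribution of answers."""
    counts = Counter(answers)
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def mutual_information(h_gesture: float, h_speech: float, h_combined: float) -> float:
    """Entropy(gesture) + Entropy(speech) - Entropy(gesture + speech)."""
    return h_gesture + h_speech - h_combined


if __name__ == "__main__":
    # Invented responses: 60 answers per item, as in the quoted procedure.
    gesture_answers = ["cut"] * 30 + ["saw"] * 30   # two equally likely answers
    speech_answers = ["cut"] * 60                   # a single unanimous answer
    print(shannon_entropy(gesture_answers))  # higher entropy: diverse answers
    print(shannon_entropy(speech_answers))   # zero entropy: focused recognition
```

In this toy case the gesture item carries one bit of uncertainty while the speech item carries none, matching the description that diverse answers yield high entropy and focused answers yield low entropy.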

Additional examples are outlined in Appendix Table 2 (Lines 841-848).

This novel application of MI as a multisensory convergence index offers new insights into how different sensory modalities interact and integrate to shape semantic processing.

Reference:

Tremblay, P., Deschamps, I., Baroni, M., and Hasson, U. (2016). Neural sensitivity to syllable frequency and mutual information in speech perception and production. Neuroimage 136, 106-121. 10.1016/j.neuroimage.2016.05.018

Lin, W., Wu, Y., & Yu, L. (2012). Online Computation of Mutual Information and Word Context Entropy. International Journal of Future Computer and Communication, 167-169.

(2) Finally, if the authors wish to address the graded hub hypothesis as posited by the controlled semantic cognition framework (e.g., Rice et al., 2015), they would have to stimulate a series of ROIs progressing gradually through the anatomy of their candidate regions showing the effects grow along this spline, more than simply correlate MI with RT differences.

Response 2: We appreciate the reviewer’s thoughtful consideration. The incremental engagement of the integration hub of IFG and pMTG along with the informativeness of gesture and speech during multisensory integration is different from the concept of "graded hub," which refers to anatomical distribution. See Response 2 in the Responses to Reviewer 3 (public review) for details.

(3) The authors report significant effects with p values as close to the threshold as p=0.49 for the pMTG correlation in Experiment 1, for example. How confident are the authors these results are reliable and not merely their 'statistical luck'? Especially in view of sample sizes that hover around 22-24 participants, which have been called into question in the field of non-invasive brain stimulation (e.g., Mitra et al, 2021)?

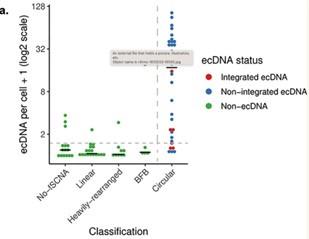

Response 3: In Experiment 1, a total of 52 participants were assigned to two groups, each undergoing HD-tDCS stimulation over either the inferior frontal gyrus (IFG) or posterior middle temporal gyrus (pMTG), yielding 26 participants per group for correlation analysis. Power analysis, conducted using G*Power, indicated that a sample size of 26 participants per group would provide sufficient power (0.8) to detect a large effect size (0.5) at an alpha level of 0.05, justifying the chosen sample size. To control for potential statistical artifacts, we compared the results to those from the unaffected control condition.

In Experiment 1, participants performed a gender categorization task, in which they responded as accurately and quickly as possible to the gender of the voice they heard, while gender congruency (e.g., a male gesture paired with a male voice or a female gesture with a male voice) was manipulated. This manipulation served as a direct control, enabling the investigation of automatic and implicit semantic interactions between gesture and speech. This information was provided in the manuscript in Lines 167-172: ‘An irrelevant factor of gender congruency (e.g., a man making a gesture combined with a female voice) was created[22,23,35]. This involved aligning the gender of the voice with the corresponding gender of the gesture in either a congruent (e.g., male voice paired with a male gesture) or incongruent (e.g., male voice paired with a female gesture) manner. This approach served as a direct control mechanism, facilitating the investigation of the automatic and implicit semantic interplay between gesture and speech[35]’. Correlation analyses were conducted to examine the HD-tDCS effects on gender congruency (reaction times for gender-incongruent minus gender-congruent trials). No significant correlations were found for either the IFG (Cathodal-tDCS effect with MI: r = 0.102, p = 0.677; Anodal-tDCS effect with MI: r = 0.178, p = 0.466) or the pMTG (Cathodal-tDCS effect with MI: r = -0.201, p = 0.410; Anodal-tDCS effect with MI: r = -0.232, p = 0.338).

Moreover, correlations between the HD-tDCS effect on semantic congruency and gesture entropy, speech entropy, and mutual information (MI) were examined, yielding p-values of 0.290, 0.725, and 0.049, respectively.

The absence of an HD-tDCS effect on gender congruency, coupled with the lack of significant correlations with the other information metrics, supports the robustness of the significant finding at p = 0.049.

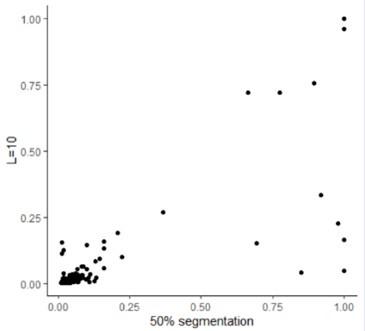

(4) The distributions of entropy for gestures and speech are very unequal. Whilst entropy for gestures has high variability, (.12-4.3), that of speech is very low (ceiling effect?) with low variance. Can the authors comment on whether they think this might have affected their analyses or results in any way? For example, do they think this could be a problem when calculating MI, which integrates both measures? L130-131.