продуктами

приложениями?

продуктами

приложениями?

от

неразрывный пробел

при

неразрывный пробел

продуктами

приложениями?

или

неразрывный пробел

на

неразрывный пробел

не

неразрывный пробел

Kaspersky

Я бы написала "Возможности Kaspersky Secure Connection" или "Что может Kaspersky Secure Connection?"

с

неразрывный пробел

Author Response

Reviewer #2 (Public Review):

Here, a simple model of cerebellar computation is used to study the dependence of task performance on input type: it is demonstrated that task performance and optimal representations are highly dependent on task and stimulus type. This challenges many standard models which use simple random stimuli and concludes that the granular layer is required to provide a sparse representation. This is a useful contribution to our understanding of cerebellar circuits, though, in common with many models of this type, the neural dynamics and circuit architecture are not very specific to the cerebellum, the model includes the feedforward structure and the high dimension of the granule layer, but little else. This paper has the virtue of including tasks that are more realistic, but by the paper’s own admission, the same model can be applied to the electrosensory lateral line lobe and it could, though it is not mentioned in the paper, be applied to the dentate gyrus and large pyramidal cells of CA3. The discussion does not include specific elements related to, for example, the dynamics of the Purkinje cells or the role of Golgi cells, and, in a way, the demonstration that the model can encompass different tasks and stimuli types is an indication of how abstract the model is. Nonetheless, it is useful and interesting to see a generalization of what has become a standard paradigm for discussing cerebellar function.

We appreciate the Reviewer’s positive comments. Regarding the simplifications of our model, we agree that we have taken a modeling approach that abstracts away certain details to permit comparisons across systems. We now include an in-depth discussion of our simplifying assumptions (Assumptions & Extensions section in the Discussion) and have further noted the possibility that other biophysical mechanisms we have not accounted for may also underlie differences across systems.

Our results predict that qualitative differences in the coding levels of cerebellum-like systems, across brain regions or across species, reflect an optimization to distinct tasks (Figure 7). However, it is also possible that differences in coding level arise from other physiological differences between systems.

Reviewer #3 (Public Review):

1) The paper by Xie et al is a modelling study of the mossy fiber-to-granule cell-to-Purkinje cell network, reporting that the optimal type of representations in the cerebellar granule cell layer depends on the type task. The paper stresses that the findings indicate a higher overall bias towards dense representations than stated in the literature, but it appears the authors have missed parts of the literature that already reported on this. While the modelling and analysis appear mathematically solid, the model is lacking many known constraints of the cerebellar circuitry, which makes the applicability of the findings to the biological counterpart somewhat limited.

We thank the Reviewer for suggesting additional references to include in our manuscript, and for encouraging us to extend our model toward greater biological plausibility and more critically discuss simplifying assumptions we have made. We respond to both the comment about previous literature and about applicability to cerebellar circuitry in detail below.

2) I have some concerns with the novelty of the main conclusion, here from the abstract: ’Here, we generalize theories of cerebellar learning to determine the optimal granule cell representation for tasks beyond random stimulus discrimination, including continuous input-output transformations as required for smooth motor control. We show that for such tasks, the optimal granule cell representation is substantially denser than predicted by classic theories.’ Stated like this, this has in principle already been shown, i.e. for example: Spanne and Jo¨rntell (2013) Processing of multi-dimensional sensorimotor information in the spinal and cerebellar neuronal circuitry: a new hypothesis. PLoS Comput Biol. 9(3):e1002979. Indeed, even the 2 DoF arm movement control that is used in the present paper as an application, was used in this previous paper, with similar conclusions with respect to the advantage of continuous input-output transformations and dense coding. Thus, already from the beginning of this paper, the novelty aspect of this paper is questionable. Even the conclusion in the last paragraph of the Introduction: ‘We show that, when learning input-output mappings for motor control tasks, the optimal granule cell representation is much denser than predicted by previous analyses.’ was in principle already shown by this previous paper.

We thank the Reviewer for drawing our attention to Spanne and Jo¨rntell (2013). Our study shares certain similarities with this work, including the consideration of tasks with smooth input-output mappings, such as learning the dynamics of a two-joint arm. However, our study differs substantially, most notably the fact that we focus our study on parametrically varying the degree of sparsity in the granule cell layer to determine the circumstances under which dense versus sparse coding is optimal. To the best of our ability, we can find no result in Spanne and J¨orntell (2013) that indicates the performance of a network as a function of average coding level. Instead, Spanne and Jo¨rntell (2013) propose that inhibition from Golgi cells produces heterogeneity in coding level which can improve performance, which is an interesting but complementary finding to ours. We therefore do not believe that the quantitative computations of optimal coding level that we present are redundant with the results of this previous study. We also note that a key contribution of our study is mathemetical analysis of the inductive bias of networks with different coding levels which supports our conclusions.

We have included a discussion of Spanne and Jo¨rntell (2013) and (2015) in the revised version of our manuscript:

"Other studies have considered tasks with smooth input-output mappings and low-dimensional inputs, finding that heterogeneous Golgi cell inhibition can improve performance by diversifying individual granule cell thresholds (Spanne and J¨orntell, 2013). Extending our model to include heterogeneous thresholds is an interesting direction for future work. Another proposal states that dense coding may improve generalization (Spanne and Jo¨rntell, 2015). Our theory reveals that whether or not dense coding is beneficial depends on the task."

3) However, the present paper does add several more specific investigations/characterizations that were not previously explored. Many of the main figures report interesting new model results. However, the model is implemented in a highly generic fashion. Consequently, the model relates better to general neural network theory than to specific interpretations of the function of the cerebellar neuronal circuitry. One good example is the findings reported in Figure 2. These represent an interesting extension to the main conclusion, but they are also partly based on arbitrariness as the type of mossy fiber input described in the random categorization task has not been observed in the mammalian cerebellum under behavior in vivo, whereas in contrast, the type of input for the motor control task does resemble mossy fiber input recorded under behavior (van Kan et al 1993).

We agree that the tasks we consider in Figure 2 are simplified compared to those that we consider elsewhere in the paper. The choice of random mossy fiber input was made to provide a comparison to previous modeling studies that also use random input as a benchmark (Marr 1969, Albus 1971, Brunel 2004, Babadi and Sompolinsky 2014, Billings 2014, LitwinKumar et al., 2017). This baseline permits us to specifically evaluate the effects of lowdimensional inputs (Figure 2) and richer input-output mappings (Figure 2, Figure 7). We agree with the Reviewer that the random and uncorrelated mossy fiber activity that has been extensively used in previous studies is almost certainly an unrealistic idealization of in vivo neural activity—this is a motivating factor for our study, which relaxes this assumption and examines the consequences. To provide additional context, we have updated the following paragraph in the main text Results section:

"A typical assumption in computational theories of the cerebellar cortex is that inputs are randomly distributed in a high-dimensional space (Marr, 1969; Albus, 1971; Brunel et al., 2004; Babadi and Sompolinsky, 2014; Billings et al., 2014; Litwin-Kumar et al., 2017). While this may be a reasonable simplification in some cases, many tasks, including cerebellumdependent tasks, are likely best-described as being encoded by a low-dimensional set of variables. For example, the cerebellum is often hypothesized to learn a forward model for motor control (Wolpert et al., 1998), which uses sensory input and motor efference to predict an effector’s future state. Mossy fiber activity recorded in monkeys correlates with position and velocity during natural movement (van Kan et al., 1993). Sources of motor efference copies include motor cortex, whose population activity lies on a lowdimensional manifold (Wagner et al., 2019; Huang et al., 2013; Churchland et al., 2010; Yu et al., 2009). We begin by modeling the low dimensionality of inputs and later consider more specific tasks."

4) The overall conclusion states: ‘Our results....suggest that optimal cerebellar representations are task-dependent.’ This is not a particularly strong or specific conclusion. One could interpret this statement as simply saying: ‘if I construct an arbitrary neural network, with arbitrary intrinsic properties in neurons and synapses, I can get outputs that depend on the intensity of the input that I provide to that network.’ Further, the last sentence of the Introduction states: ‘More broadly, we show that the sparsity of a neural code has a task-dependent influence on learning...’ This is very general and unspecific, and would likely not come as a surprise to anyone interested in the analysis of neural networks. It doesn’t pinpoint any specific biological problem but just says that if I change the density of the input to a [generic] network, then the learning will be impacted in one way or another.

We agree with the Reviewer that our conclusions are quite general, and we have removed the final sentence as we agree it was unspecific. However, we disagree with the Reviewer’s paraphrasing of our results.

First, we do not select arbitrary intrinsic properties of neurons and synapses. Rather, we construct a simplified model with a key quantity, the neuronal threshold, that we vary parametrically in order to assess the effect of the resulting changes in the representation on performance. Second, we do not vary the intensity/density of inputs provided to the network – this is fixed throughout our study for all key comparisons we perform. Instead, we vary the density (coding level) of the expansion layer representation and quantify its effect on inductive bias and generalization. Finally, our study’s key contribution is an explanation of the heterogeneity in average coding level observed across behaviors and cerebellum-like systems. We go beyond the empirical statement that there is a dependence of performance on the parameter that we vary by developing an analytical theory. Our theory describes the performance of the class of networks that we study and the properties of learning tasks that determine the optimal expansion layer representation.

To clarify our main contributions, we have updated the final paragraph of the Introduction. We have also removed the sentence that the Reviewer objects to, as it was less specific than the other points we make here.

"We propose that these differences can be explained by the capacity of representations with different levels of sparsity to support learning of different tasks. We show that the optimal level of sparsity depends on the structure of the input-output relationship of a task. When learning input-output mappings for motor control tasks, the optimal granule cell representation is much denser than predicted by previous analyses. To explain this result, we develop an analytic theory that predicts the performance of cerebellum-like circuits for arbitrary learning tasks. The theory describes how properties of cerebellar architecture and activity control these networks’ inductive bias: the tendency of a network toward learning particular types of input-output mappings (Sollich, 1998; Jacot et al., 2018; Bordelon et al., 2020; Canatar et al., 2021; Simon et al., 2021). The theory shows that inductive bias, rather than the dimension of the representation alone, is necessary to explain learning performance across tasks. It also suggests that cerebellar regions specialized for different functions may adjust the sparsity of their granule cell representations depending on the task."

5) The interpretation of the distribution of the mossy fiber inputs to the granule cells, which would have a crucial impact on the results of a study like this, is likely incorrect. First, unlike the papers that the authors cite, there are many studies indicating that there is a topographic organization in the mossy fiber termination, such that mossy fibers from the same inputs, representing similar types of information, are regionally co-localized in the granule cell layer. Hence, there is no support for the model assumption that there is a predominantly random termination of mossy fibers of different origins. This risks invalidating the comparisons that the authors are making, i.e. such as in Figure 3. This is a list of example papers, there are more: van Kan, Gibson and Houk (1993) Movement-related inputs to intermediate cerebellum of the monkey. Journal of Neurophysiology. Garwicz et al (1998) Cutaneous receptive fields and topography of mossy fibres and climbing fibres projecting to cat cerebellar C3 zone. The Journal of Physiology. Brown and Bower (2001) Congruence of mossy fiber and climbing fiber tactile projections in the lateral hemispheres of the rat cerebellum. The Journal of Comparative Neurology. Na, Sugihara, Shinoda (2019) The entire trajectories of single pontocerebellar axons and their lobular and longitudinal terminal distribution patterns in multiple aldolase C-positive compartments of the rat cerebellar cortex. The Journal of Comparative Neurology.

6) The nature of the mossy fiber-granule cell recording is also reviewed here: Gilbert and Miall (2022) How and Why the Cerebellum Recodes Input Signals: An Alternative to Machine Learning. The Neuroscientist. Further, considering the re-coding idea, the following paper shows that detailed information, as it is provided by mossy fibers, is transmitted through the granule cells without any evidence of re-coding: Jo¨rntell and Ekerot (2006) Journal of Neuroscience; and this paper shows that these granule inputs are powerfully transmitted to the molecular layer even in a decerebrated animal (i.e. where only the ascending sensory pathways remains) Jo¨rntell and Ekerot 2002, Neuron.

We agree that there is strong evidence for a topographic organization in mossy fiber to granule cell connectivity at the microzonal level. We thank the Reviewer for pointing us to specific examples. We acknowledge that our simplified model does not capture the structure of connectivity observed in these studies.

However, the focus of our model is on cerebellar neurons presynaptic to a single Purkinje cell. Random or disordered distribution of inputs at this local scale is compatible with topographic organization at the microzonal scale. Furthermore, while there is evidence of structured connections at the local scale, models with random connectivity are able to reproduce the dimensionality of granule cell activity within a small margin of error (Nguyen et al., 2022). Finally, our finding that dense codes are optimal for learning slowly varying tasks is consistent with evidence for the lack of re-coding – for such tasks, re-coding may absent because it is not required.

We have dedicated a section on this issue in the Assumptions and Extensions portion of our Discussion:

"Another key assumption concerning the granule cells is that they sample mossy fiber inputs randomly, as is typically assumed in Marr-Albus models (Marr, 1969; Albus, 1971; LitwinKumar et al., 2017; Cayco-Gajic et al., 2017). Other studies instead argue that granule cells sample from mossy fibers with highly similar receptive fields (Garwicz et al., 1998; Brown and Bower, 2001; J¨orntell and Ekerot, 2006) defined by the tuning of mossy fiber and climbing fiber inputs to cerebellar microzones (Apps et al., 2018). This has led to an alternative hypothesis that granule cells serve to relay similarly tuned mossy fiber inputs and enhance their signal-to-noise ratio (Jo¨rntell and Ekerot, 2006; Gilbert and Chris Miall, 2022) rather than to re-encode inputs. Another hypothesis is that granule cells enable Purkinje cells to learn piece-wise linear approximations of nonlinear functions (Spanne and J¨orntell, 2013). However, several recent studies support the existence of heterogeneous connectivity and selectivity of granule cells to multiple distinct inputs at the local scale (Huang et al., 2013; Ishikawa et al., 2015). Furthermore, the deviation of the predicted dimension in models constrained by electron-microscopy data as compared to randomly wired models is modest (Nguyen et al., 2022). Thus, topographically organized connectivity at the macroscopic scale may coexist with disordered connectivity at the local scale, allowing granule cells presynaptic to an individual Purkinje cell to sample heterogeneous combinations of the subset of sensorimotor signals relevant to the tasks that Purkinje cell participates in. Finally, we note that the optimality of dense codes for learning slowly varying tasks in our theory suggests that observations of a lack of mixing (J¨orntell and Ekerot, 2002) for such tasks are compatible with Marr-Albus models, as in this case nonlinear mixing is not required."

7) I could not find any description of the neuron model used in this paper, so I assume that the neurons are just modelled as linear summators with a threshold (in fact, Figure 5 mentions inhibition, but this appears to be just one big lump inhibition, which basically is an incorrect implementation). In reality, granule cells of course do have specific properties that can impact the input-output transformation, PARTICULARLY with respect to the comparison of sparse versus dense coding, because the low-pass filtering of input that occurs in granule cells (and other neurons) as well as their spike firing stochasticity (Saarinen et al (2008). Stochastic differential equation model for cerebellar granule cell excitability. PLoS Comput. Biol. 4:e1000004) will profoundly complicate these comparisons and make them less straight forward than what is portrayed in this paper. There are also several other factors that would be present in the biological setting but are lacking here, which makes it doubtful how much information in relation to the biological performance that this modelling study provides: What are the types of activity patterns of the inputs? What are the learning rules? What is the topography? What is the impact of Purkinje cell outputs downstream, as the Purkinje cell output does not have any direct action, it acts on the deep cerebellar nuclear neurons, which in turn act on a complex sensorimotor circuitry to exert their effect, hence predictive coding could only become interpretable after the PC output has been added to the activity in those circuits. Where is the differentiated Golgi cell inhibition?

Thank you for these critiques. We have made numerous edits to improve the presentation of the details of our model in the main text of the manuscript. Indeed, granule cells in the main text are modeled as linear sums of mossy fiber inputs with a threshold-linear activation function. A more detailed description of the model for granule cells can now be found in Equation 1 in the Results section:

"The activity of neurons in the expansion layer is given by: h = φ(Jeffx − θ), (1) where φ is a rectified linear activation function φ(u) = max(u,0) applied element-wise. Our results also hold for other threshold-polynomial activation functions. The scalar threshold θ is shared across neurons and controls the coding level, which we denote by f, defined as the average fraction of neurons in the expansion layer that are active."

Most of our analyses use the firing rate model we describe above, but several Supplemental Figures show extensions to this model. As we mention in the Discussion, our results do not depend on the specific choice of nonlinearity (Figure 2-figure supplement 2). We have also considered the possibility that the stochastic nature of granule cell spikes could impact our measures of coding level. In Figure 7-figure supplement 1 we test the robustness of our main conclusion using a spiking model where we model granule cell spikes with Poisson statistics. When measuring coding level in a population of spiking neurons, a key question is at what time window the Purkinje cell integrates spikes. For several choices of integration time windows, we show that dense coding remains optimal for learning smooth tasks. However, we agree with the Reviewer that there are other biological details our model does not address. For example, our spiking model does not capture some of the properties the Saarinen et al. (2008) model captures, including random sub-threshold oscillations and clusters of spikes. Modeling biophysical phenomena at this scale is beyond the scope of our study. We have added this reference to the relevant section of the Discussion:

"We also note that coding level is most easily defined when neurons are modeled as rate, rather than spiking units. To investigate the consistency of our results under a spiking code, we implemented a model in which granule cell spiking exhibits Poisson variability and quantify coding level as the fraction of neurons that have nonzero spike counts (Figure 7-figure supplement 1; Figure 7C). In general, increased spike count leads to improved performance as noise associated with spiking variability is reduced. Granule cells have been shown to exhibit reliable burst responses to mossy fiber stimulation (Chadderton et al., 2004), motivating models using deterministic responses or sub-Poisson spiking variability. However, further work is needed to quantitatively compare variability in model and experiment and to account for more complex biophysical properties of granule cells (Saarinen et al., 2008)."

A second concern the Reviewer raises is our implementation of Golgi cell inhibition as a homogeneous rather than heterogeneous input onto granule cells. In simplified models, adding heterogeneous inhibition does not dramatically change the qualitative properties of the expansion layer representation, in particular the dimensionality of the representation (Billings et al., 2014, Cayco-Gajic et al., 2017, Litwin-Kumar et al., 2017). We have added a section about inhibition to our Discussion:

"We also have not explicitly modeled inhibitory input provided by Golgi cells, instead assuming such input can be modeled as a change in effective threshold, as in previous studies (Billings et al., 2014; Cayco-Gajic et al., 2017; Litwin-Kumar et al., 2017). This is appropriate when considering the dimension of the granule cell representation (Litwin-Kumar et al., 2017), but more work is needed to extend our model to the case of heterogeneous inhibition."

Regarding the mossy fiber inputs, as we state in response to paragraph 3, we agree with the Reviewer that the random and uncorrelated mossy fiber activity that has been used in previous studies is an unrealistic idealization of in vivo neural activity. One of the motivations for our model was to relax this assumption and examine the consequences: we introduce correlations in the mossy fiber activity by projecting low-dimensional patterns into the mossy fiber layer (Figure 1B):

"A typical assumption in computational theories of the cerebellar cortex is that inputs are randomly distributed in a high-dimensional space (Marr, 1969; Albus, 1971; Brunel et al., 2004; Babadi and Sompolinsky, 2014; Billings et al., 2014; Litwin-Kumar et al., 2017). While this may be a reasonable simplification in some cases, many tasks, including cerebellumdependent tasks, are likely best-described as being encoded by a low-dimensional set of variables. For example, the cerebellum is often hypothesized to learn a forward model for motor control (Wolpert et al., 1998), which uses sensory input and motor efference to predict an effector’s future state. Mossy fiber activity recorded in monkeys correlates with position and velocity during natural movement (van Kan et al., 1993). Sources of motor efference copies include motor cortex, whose population activity lies on a low-dimensional manifold (Wagner et al., 2019; Huang et al., 2013; Churchland et al., 2010; Yu et al., 2009). We begin by modeling the low dimensionality of inputs and later consider more specific tasks.

We therefore assume that the inputs to our model lie on a D-dimensional subspace embedded in the N-dimensional input space, where D is typically much smaller than N (Figure 1B). We refer to this subspace as the “task subspace” (Figure 1C)."

The Reviewer also mentions the learning rule at granule cell to Purkinje cell synapses. We agree that considering online, climbing-fiber-dependent learning is an important generalization. We therefore added a new supplemental figure investigating whether we would still see a difference in optimal coding levels across tasks if online learning were used instead of the least squares solution (Figure 7-figure supplement 2). Indeed, we observed a similar task dependence as we saw in Figure 2F. We have added a new paragraph in the Discussion under Assumptions and Extensions describing our rationale and approach in detail:

"For the Purkinje cells, our model assumes that their responses to granule cell input can be modeled as an optimal linear readout. Our model therefore provides an upper bound to linear readout performance, a standard benchmark for the quality of a neural representation that does not require assumptions on the nature of climbing fiber-mediated plasticity, which is still debated. Electrophysiological studies have argued in favor of a linear approximation (Brunel et al., 2004). To improve the biological applicability of our model, we implemented an online climbing fiber-mediated learning rule and found that optimal coding levels are still task-dependent (Figure 7-figure supplement 2). We also note that although we model several timing-dependent tasks (Figure 7), our learning rule does not exploit temporal information, and we assume that temporal dynamics of granule cell responses are largely inherited from mossy fibers. Integrating temporal information into our model is an interesting direction for future investigation."

Finally, regarding the function of the Purkinje cell, our model defines a learning task as a mapping from inputs to target activity in the Purkinje cell and is thus agnostic to the cell’s downstream effects. We clarify this point when introducing the definition of a learning task:

"In our model, a learning task is defined by a mapping from task variables x to an output f(x), representing a target change in activity of a readout neuron, for example a Purkinje cell. The limited scope of this definition implies our results should not strongly depend on the influence of the readout neuron on downstream circuits."

8) The problem of these, in my impression, generic, arbitrary settings of the neurons and the network in the model becomes obvious here: ‘In contrast to the dense activity in cerebellar granule cells, odor responses in Kenyon cells, the analogs of granule cells in the Drosophila mushroom body, are sparse...’ How can this system be interpreted as an analogy to granule cells in the mammalian cerebellum when the model does not address the specifics lined up above? I.e. the ‘inductive bias’ that the authors speak of, defined as ‘the tendency of a network toward learning particular types of input-output mappings’, would be highly dependent on the specifics of the network model.

We agree with the Reviewer that our model makes several simplifying assumptions for mathematical tractability. However, we note that our study is not the first to draw analogies between cerebellum-like systems, including the mushroom body (Bell et al., 2008; Farris, 2011). All the systems we study feature a sparsely connected, expanded granule-like layer that sends parallel fiber axons onto densely connected downstream neurons known to exhibit powerful synaptic plasticity, thus motivating the key architectural assumptions of our model. We have constrained anatomical parameters of the model using data as available (Table 1). However, we agree with the Reviewer that when making comparisons across species there is always a possibility that differences are due to physiological mechanisms we have not fully understood or captured with a model. As such, we can only present a hypothesis for these differences. We have modified our Discussion section on this topic to clearly state this.

"Our results predict that qualitative differences in the coding levels of cerebellum-like systems, across brain regions or across species, reflect an optimization to distinct tasks (Figure 7). However, it is also possible that differences in coding level arise from other physiological differences between systems."

9) More detailed comments: Abstract: ‘In these models [Marr-Albus], granule cells form a sparse, combinatorial encoding of diverse sensorimotor inputs. Such sparse representations are optimal for learning to discriminate random stimuli.’ Yes, I would agree with the first part, but I contest the second part of this statement. I think what is true for sparse coding is that the learning of random stimuli will be faster, as in a perceptron, but not necessarily better. As the sparsification essentially removes information, it could be argued that the quality of the learning is poorer. So from that perspective, it is not optimal. The authors need to specify from what perspective they consider sparse representations optimal for learning.

This is an important point that we would like to clarify. It is not the case that sparse coding simply speeds up learning. In our study and many related works (Barak et al. 2013; Babadi and Sompolinsky 2014; Litwin-Kumar et al. 2017), learning performance is measured based on the generalization ability of the network – the ability to predict correct labels for previously unseen inputs. As our study and previous studies show, sparse codes are optimal in the sense that they minimize generalization error, independent of any effect on learning speed. To communicate this more effectively, we have added the following sentence to the first paragraph of the Introduction:

"Sparsity affects both learning speed (Cayco-Gajic et al., 2017), and generalization, the ability to predict correct labels for previously unseen inputs (Barak et al., 2013; Babadi and Sompolinsky, 2014; Litwin-Kumar et al., 2017)."

10) Introduction: ‘Indeed, several recent studies have reported dense activity in cerebellar granule cells in response to sensory stimulation or during motor control tasks (Knogler et al., 2017; Wagner et al., 2017; Giovannucci et al., 2017; Badura and De Zeeuw, 2017; Wagner et al., 2019), at odds with classic theories (Marr, 1969; Albus, 1971).’ In fact, this was precisely the issue that was addressed already by Jo¨rntell and Ekerot (2006) Journal of Neuroscience. The conclusion was that these actual recordings of granule cells in vivo provided essentially no support for the assumptions in the Marr-Albus theories.

In our reading, the main finding of J¨orntell and Ekerot (2006) is that individual granule cells are activated by mossy fibers with overlapping receptive fields driven by a single type of somatosensory input. However, there is also evidence of nonlinear mixed selectivity in granule cells in support of the re-coding hypothesis (Huang et al., 2013; Ishikawa et al., 2015). Jo¨rntell and Ekerot (2006) also suggest that the granule cell layer shares similar topographic organization as mossy fibers, organized into microzones. The existence of topographic organization does not invalidate Marr-Albus theories. As we have suggested earlier, a local combinatorial expansion can coexist with a global topographic organization.

We have described these considerations in the Assumptions and Extensions portion of the Discussion:

"Another key assumption concerning the granule cells is that they sample mossy fiber inputs randomly, as is typically assumed in Marr-Albus models (Marr, 1969; Albus, 1971; LitwinKumar et al., 2017; Cayco-Gajic et al., 2017). Other studies instead argue that granule cells sample from mossy fibers with highly similar receptive fields (Garwicz et al., 1998; Brown and Bower, 2001; J¨orntell and Ekerot, 2006) defined by the tuning of mossy fiber and climbing fiber inputs to cerebellar microzones (Apps et al., 2018). This has led to an alternative hypothesis that granule cells serve to relay similarly tuned mossy fiber inputs and enhance their signal-to-noise ratio (Jo¨rntell and Ekerot, 2006; Gilbert and Chris Miall, 2022) rather than to re-encode inputs. Another hypothesis is that granule cells enable Purkinje cells to learn piece-wise linear approximations of nonlinear functions (Spanne and J¨orntell, 2013). However, several recent studies support the existence of heterogeneous connectivity and selectivity of granule cells to multiple distinct inputs at the local scale (Huang et al., 2013; Ishikawa et al., 2015). Furthermore, the deviation of the predicted dimension in models constrained by electron-microscopy data as compared to randomly wired models is modest (Nguyen et al., 2022). Thus, topographically organized connectivity at the macroscopic scale may coexist with disordered connectivity at the local scale, allowing granule cells presynaptic to an individual Purkinje cell to sample heterogeneous combinations of the subset of sensorimotor signals relevant to the tasks that Purkinje cell participates in. Finally, we note that the optimality of dense codes for learning slowly varying tasks in our theory suggests that observations of a lack of mixing (J¨orntell and Ekerot, 2002) for such tasks are compatible with Marr-Albus models, as in this case nonlinear mixing is not required."

We have also included the Jo¨rntell and Ekerot (2006) study as a citation in the Introduction:

"Indeed, several recent studies have reported dense activity in cerebellar granule cells in response to sensory stimulation or during motor control tasks (Jo¨rntell and Ekerot, 2006; Knogler et al., 2017; Wagner et al., 2017; Giovannucci et al., 2017; Badura and De Zeeuw, 2017; Wagner et al., 2019), at odds with classic theories (Marr, 1969; Albus, 1971)."

11) Results: 1st para: There is no information about how the granule cells are modelled.

We agree that this should information should have been more readily available. We now more completely describe the model in the main text. Our model for granule cells can be found in Equation 1 in the Results section and also the Methods (Network Model):

"The activity of neurons in the expansion layer is given by: h = φ(Jeffx − θ), (2)

where φ is a rectified linear activation function φ(u) = max(u,0) applied element-wise. Our results also hold for other threshold-polynomial activation functions. The scalar threshold θ is shared across neurons and controls the coding level, which we denote by f, defined as the average fraction of neurons in the expansion layer that are active."

12) 2nd para: ‘A typical assumption in computational theories of the cerebellar cortex is that inputs are randomly distributed in a high-dimensional space.’ Yes, I agree, and this is in fact in conflict with the known topographical organization in the cerebellar cortex (see broader comment above). Mossy fiber inputs coding for closely related inputs are co-localized in the cerebellar cortex. I think for this model to be of interest from the point of view of the mammalian cerebellar cortex, it would need to pay more attention to this organizational feature.

As we discuss in our response to paragraphs 5 and 6, we see the random distribution assumption at the local scale (inputs presynaptic to a single Purkinje cell) as being compatible with topographic organization occurring at the microzone scale. Furthermore, as discussed earlier, we specifically model low-dimensional input as opposed to the random and high-dimensional inputs typically studied in prior models.

"A typical assumption in computational theories of the cerebellar cortex is that inputs are randomly distributed in a high-dimensional space (Marr, 1969; Albus, 1971; Brunel et al., 2004; Babadi and Sompolinsky, 2014; Billings et al., 2014; Litwin-Kumar et al., 2017). While this may be a reasonable simplification in some cases, many tasks, including cerebellumdependent tasks, are likely best-described as being encoded by a low-dimensional set of variables. For example, the cerebellum is often hypothesized to learn a forward model for motor control (Wolpert et al., 1998), which uses sensory input and motor efference to predict an effector’s future state. Mossy fiber activity recorded in monkeys correlates with position and velocity during natural movement (van Kan et al., 1993). Sources of motor efference copies include motor cortex, whose population activity lies on a low-dimensional manifold (Wagner et al., 2019; Huang et al., 2013; Churchland et al., 2010; Yu et al., 2009). We begin by modeling the low dimensionality of inputs and later consider more specific tasks. We therefore assume that the inputs to our model lie on a D-dimensional subspace embedded in the N-dimensional input space, where D is typically much smaller than N (Figure 1B). We refer to this subspace as the “task subspace” (Figure 1C)."

References

Albus, J.S. (1971). A theory of cerebellar function. Mathematical Biosciences 10, 25–61.

Apps, R., et al. (2018). Cerebellar Modules and Their Role as Operational Cerebellar Processing Units. Cerebellum 17, 654–682.

Babadi, B. and Sompolinsky, H. (2014). Sparseness and expansion in sensory representations. Neuron 83, 1213–1226.

Badura, A. and De Zeeuw, C.I. (2017). Cerebellar granule cells: dense, rich and evolving representations. Current Biology 27, R415–R418.

Barak, O., Rigotti, M., and Fusi, S. (2013). The sparseness of mixed selectivity neurons controls the generalization–discrimination trade-off. Journal of Neuroscience 33, 3844– 3856.

Bell, C.C., Han, V., and Sawtell, N.B. (2008). Cerebellum-like structures and their implications for cerebellar function. Annual Review of Neuroscience 31, 1–24.

Billings, G., Piasini, E., Lo˝rincz, A., Nusser, Z., and Silver, R.A. (2014). Network structure within the cerebellar input layer enables lossless sparse encoding. Neuron 83, 960–974.

Bordelon, B., Canatar, A., and Pehlevan, C. (2020). Spectrum dependent learning curves in kernel regression and wide neural networks. International Conference on Machine Learning 1024–1034.

Brown, I.E. and Bower, J.M. (2001). Congruence of mossy fiber and climbing fiber tactile projections in the lateral hemispheres of the rat cerebellum. Journal of Comparative Neurology 429, 59–70.

Brunel, N., Hakim, V., Isope, P., Nadal, J.P., and Barbour, B. (2004). Optimal information storage and the distribution of synaptic weights: perceptron versus Purkinje cell. Neuron 43, 745–757.

Canatar, A., Bordelon, B., and Pehlevan, C. (2021). Spectral bias and task-model alignment explain generalization in kernel regression and infinitely wide neural networks. Nature Communications 12, 1–12.

Cayco-Gajic, N.A., Clopath, C., and Silver, R.A. (2017). Sparse synaptic connectivity is required for decorrelation and pattern separation in feedforward networks. Nature Communications 8, 1–11.

Chadderton, P., Margrie, T.W., and Ha¨usser, M. (2004). Integration of quanta in cerebellar granule cells during sensory processing. Nature 428, 856–860.

Churchland, M.M., et al. (2010). Stimulus onset quenches neural variability: a widespread cortical phenomenon. Nature Neuroscience 13, 369–378.

Farris, S.M. (2011). Are mushroom bodies cerebellum-like structures? Arthropod structure & development 40, 368–379.

Garwicz, M., Jorntell, H., and Ekerot, C.F. (1998). Cutaneous receptive fields and topography of mossy fibres and climbing fibres projecting to cat cerebellar C3 zone. The Journal of Physiology 512 ( Pt 1), 277–293.

Gilbert, M. and Chris Miall, R. (2022). How and Why the Cerebellum Recodes Input Signals: An Alternative to Machine Learning. The Neuroscientist 28, 206–221.

Giovannucci, A., et al. (2017). Cerebellar granule cells acquire a widespread predictive feedback signal during motor learning. Nature Neuroscience 20, 727–734.

Huang, C.C., et al. (2013). Convergence of pontine and proprioceptive streams onto multimodal cerebellar granule cells. eLife 2, e00400.

Ishikawa, T., Shimuta, M., and Ha¨usser, M. (2015). Multimodal sensory integration in single cerebellar granule cells in vivo. eLife 4, e12916.

Jacot, A., Gabriel, F., and Hongler, C. (2018). Neural tangent kernel: Convergence and generalization in neural networks. Advances in Neural Information Processing Systems 31.

Jo¨rntell, H. and Ekerot, C.F. (2002). Reciprocal Bidirectional Plasticity of Parallel Fiber Receptive Fields in Cerebellar Purkinje Cells and Their Afferent Interneurons. Neuron 34, 797–806.

Jorntell, H. and Ekerot, C.F. (2006). Properties of Somatosensory Synaptic Integration in Cerebellar Granule Cells In Vivo. Journal of Neuroscience 26, 11786–11797.

Knogler, L.D., Markov, D.A., Dragomir, E.I., Stih, V., and Portugues, R. (2017). Senso-ˇ rimotor representations in cerebellar granule cells in larval zebrafish are dense, spatially organized, and non-temporally patterned. Current Biology 27, 1288–1302.

Litwin-Kumar, A., Harris, K.D., Axel, R., Sompolinsky, H., and Abbott, L.F. (2017). Optimal degrees of synaptic connectivity. Neuron 93, 1153–1164. Marr, D. (1969). A theory of cerebellar cortex. Journal of Physiology 202, 437–470.

Nguyen, T.M., et al. (2022). Structured cerebellar connectivity supports resilient pattern separation. Nature 1–7.

Saarinen, A., Linne, M.L., and Yli-Harja, O. (2008). Stochastic Differential Equation Model for Cerebellar Granule Cell Excitability. PLOS Computational Biology 4, e1000004.

Simon, J.B., Dickens, M., and DeWeese, M.R. (2021). A theory of the inductive bias and generalization of kernel regression and wide neural networks. arXiv: 2110.03922.

Sollich, P. (1998). Learning curves for Gaussian processes. Advances in Neural Information Processing Systems 11.

Spanne, A. and Jo¨rntell, H. (2013). Processing of Multi-dimensional Sensorimotor Information in the Spinal and Cerebellar Neuronal Circuitry: A New Hypothesis. PLOS Computational Biology 9, e1002979.

Spanne, A. and Jo¨rntell, H. (2015). Questioning the role of sparse coding in the brain. Trends in Neurosciences 38, 417–427.

van Kan, P.L., Gibson, A.R., and Houk, J.C. (1993). Movement-related inputs to intermediate cerebellum of the monkey. Journal of Neurophysiology 69, 74–94.

Wagner, M.J., Kim, T.H., Savall, J., Schnitzer, M.J., and Luo, L. (2017). Cerebellar granule cells encode the expectation of reward. Nature 544, 96–100.

Wagner, M.J., et al. (2019). Shared cortex-cerebellum dynamics in the execution and learning of a motor task. Cell 177, 669–682.e24.

Wolpert, D.M., Miall, R.C., and Kawato, M. (1998). Internal models in the cerebellum. Trends in Cognitive Sciences 2, 338–347.

Yu, B.M., et al. (2009). Gaussian-process factor analysis for low-dimensional single-trial analysis of neural population activity. Journal of Neurophysiology 102, 614–635.

docker compose --profile download up --build

如果是windows wsl ,命令前需要添加 sudo

Tissue clearing is currently revolutionizing neuroanatomy by enabling organ-level imaging with cellular resolution. However, currently available tools for data analysis require a significant time investment for training and adaptation to each laboratory’s use case, which limits productivity. Here, we present FriendlyClearMap, an integrated toolset that makes ClearMap1 and ClearMap2’s CellMap pipeline easier to use, extends its functions, and provides Docker Images from which it can be run with minimal time investment. We also provide detailed tutorials for each step of the pipeline.For more precise alignment, we add a landmark-based atlas registration to ClearMap’s functions as well as include young mouse reference atlases for developmental studies. We provide alternative cell segmentation method besides ClearMap’s threshold-based approach: Ilastik’s Pixel Classification, importing segmentations from commercial image analysis packages and even manual annotations. Finally, we integrate BrainRender, a recently released visualization tool for advanced 3D visualization of the annotated cells.As a proof-of-principle, we use FriendlyClearMap to quantify the distribution of the three main GABAergic interneuron subclasses (Parvalbumin+, Somatostatin+, and VIP+) in the mouse fore- and midbrain. For PV+ neurons, we provide an additional dataset with adolescent vs. adult PV+ neuron density, showcasing the use for developmental studies. When combined with the analysis pipeline outlined above, our toolkit improves on the state-of-the-art packages by extending their function and making them easier to deploy at scale.

This work has been peer reviewed in GigaScience (see https://doi.org/10.1093/gigascience/giad035 ), which carries out open, named peer-review. These reviews are published under a CC-BY 4.0 license and were as follows:

Reviewer Chris Armit

This Technical Note paper describes "FriendlyClearMap: An optimized toolkit for mouse brain mapping and analysis".

Whereas the core concept of a data analysis tool to assist in spatial mapping of cleared mouse tissues is perfectly reasonable, there are multiple issues with the documentation that renders this toolkit very difficult to use. I detail below some of the issues I have encountered.

GitHub repositoryThe installation instructions are missing from the following GitHub repository: https://github.com/MoritzNegwer/FriendlyClearMap-scriptsThe closest reference I could find to installation instructions is the following: "Please see the Appendices 1-3 of our <X_upcoming> publication for detailed instructions on how to use the pipelines. <X_protocols.io goes here>"Step-bystep installation instructions should be included in the GitHub repository. In addition, the authors should add the protocols.io links to their GitHub repository.

Protocols.ioThe installation instructions are missing from the following protocols.io links:Run Clearmap 1 docker dx.doi.org/10.17504/protocols.io.eq2lynnkrvx9/v1Run Clearmap 2 docker dx.doi.org/10.17504/protocols.io.yxmvmn9pbg3p/v1Both of these protocols include the following instruction:* "Then, download the docker container from our repository: XXX docker container goes here"In the documentation, the authors need to unambiguously refer to the specific Docker container that a user needs to install for each software tool.

Test Data I could not find the test data in the form of image stacks that would be needed to test the FriendlyClearMap protocols. Figure 1 refers to 16-bit TIFF image stacks, and I presume these to be the input data that is needed for the image analysis pipelines described in the manuscript. The authors should provide details of the test imaging dataset, including links if necessary to where the image stacks data can be downloaded, in the 'Data Availability' section of the manuscript.

Platform / Operating SystemsIn the 'Data Availability' section of the manuscript, the authors specify that the Operating Systems are "platform-independent". However, the protocols.io documents lists a set of requirements for Windows and LINUX, but not for MacOS. The authors should provide installation instructions and system requirements for MacOS.I reject this manuscript on the grounds that, due to lack of appropriate documentation and installation instructions, the software tool is too difficult to use and therefore has extremely low reuse potential.

This thread is locked.

Yet another example of why it's dumb for Microsoft to lock Community threads. This is in the Bing search results as the top article for my issue with 1,911 views. Since 2011 though, there have been new developments! The new Media Player app in Windows 10 natively supports Zune playlist files! Since the thread is locked, I can't put this news in a place where others following my same search path will find it.

Guess that's why it makes sense to use Hypothes.is 🤷♂️

s the first GUI-based personal

So with the Apple lisa being the first I can see a few things that are simlar to today. Icons, little images that one can select with describing text. Windows with closing buttons and other stuff like that. I think it probably flopped because of the price. Lisa 1983.

still need to know what's your graphics card! If it's Intel and doesn't have GL drivers on Windows, then your issue is a dupe of the fact that we need to use ANGLE in your case (known issue).

CONSIDERATION

I tested this on my HP TouchSmart tx2 laptop which runs Windows 7 and that machine loads the sites such as https://cvs.khronos.org/svn/repos/registry/trunk/public/webgl/sdk/demos/google/particles/index.html fine.

ISSUE_REPRODUCTION

Seen while running Mozilla/5.0 (Windows; Windows NT 6.1; WOW64; rv:2.0b3) Gecko/20100805 Firefox/4.0b3. I tried in 32 bit mode and the same thing happens. STR: 1. Enable WebGL with the about:config pref 2. Load the site in the URL, or one of the demos from http://www.ambiera.com/copperlicht/demos.html Expected: it would work as it does using Windows XP and Vista using the same build Actual: I get an error saying that "This demo requires a WebGL-enabled browser" I have seen Bug 560975 and I do not have those prefs set in about:config since I am using a new profile.

PROBLEM_DESCRIPTION

Author Response

Reviewer #2 (Public Review):

1) The authors in reality do not analyze oscillations themselves in this manuscript but only the power of signals filtered at determined frequency bands. This is particularly misleading when the authors talk about "spindles". Spindles are classically defined as a thalamico-cortical phenomenon, not recorded from hippocampus LFPs. Thus, the fact that you filter the signal in the same frequency range matching cortical spindles does not mean you are analyzing spindles. The terminology, therefore, is misleading. I would recommend the authors to change spindles to "beta", which at least has been reported in the hippocampus, although in very particular behavioral circumstances. However, one must note that the presence of power in such bands does not guarantee one is recording from these oscillations. For example, the "fast gamma" band might be related to what is defined as fast gamma nested in theta, but it might also be related to ripples in sleep recordings. The increase of "spindle" power in sleep here is probably related to 1/f components arising from the large irregular activity of slow wave sleep local field potentials. The authors should avoid these conceptual confusions in the manuscript, or show that these band power time courses are in fact matching the oscillations they refer to (for example, their spindle band is in fact reflecting increased spindle occurrence).

We thank the reviewer for allowing us to clarify this subject. We completely agree with concerns raised in the comments. To avoid any confusion, we have replaced throughout the manuscript the word ‘spindle’ with ‘beta’.

2) The shuffling procedure to control for the occupancy difference between awake and sleep does not seem to be sufficient. From what I understand, this shuffling is not controlling for the autocorrelation of each band which would be the main source of bias to be accounted for in this instance. Thus, time shifts for each band would be more appropriate. Further, the controls for trial durations should be created using consecutive windows. If you randomly sample sleep bins from distant time points you are not effectively controlling for the difference in duration between trial types. Finally, it is not clear from the text if the UMAP is recomputed for each duration-matched control. This would be a rigorous control as it would remove the potential bias arising from the unbalance between awake and sleep data points, which could bias the subspace to be more detailed for the LFP sleep features. It is very likely the results will hold after these controls, given it is not surprising that sleep is a more diverse state than awake, but it would be good practice to have more rigorous controls to formalize these conclusions.

We are grateful to the reviewer for suggesting alternative analysis. We have used this direction, to create surrogate datasets obtained by time shifting each band and obtained their respective UMAP projections (see modified Figure 2D). Additionally, as suggested, for duration-matched controls, we have selected consecutive windows, rather than random points (Figure 2 – figure supplement 1C). UMAP projections were obtained for each duration-matched control and occupancy was computed. The text in the method section has been modified to indicate the analysis. As expected, the results were identical.

3) Lots of the observations made from the state space approach presented in this manuscript lack any physiological interpretation. For example, Figure 4F suggests a shift in the state space from Sleep1 to Sleep2. The authors comment there is a change in density but they do not make an effort to explain what the change means in terms of brain dynamics. It seems that the spectral patterns are shifting away from the Delta X Spindle region (concluding this by looking at Fig4B) which could be potentially interesting if analyzed in depth. What is the state space revealing about the brain here? It would be important to interpret the changes revealed by this method otherwise what are we learning about the brain from these analyses? This is similar to the results presented in Figure 5, which are merely descriptions of what is seen in the correlation matrix space. It seems potentially interesting that non-REM seems to be split into two clusters in the UMAP space. What does it mean for REM that delta band power in pyramidal and lm layers is anti-correlated to the power within the mid to fast gamma range? What do the transition probabilities shown in Figures 6B and C suggest about hippocampal functioning? The authors just state there are "changes" but they don't characterize these systematically in terms of biology. Overall, the abstract multivariate representation of the neural data shown here could potentially reveal novel dynamics across the awake-sleep cycle, but in the current form of this manuscript, the observations never leave the abstract level.

We thank the reviewer for allowing us to clarify this aspect of the manuscript. We have now edited the main text to include considerations on the biological relevance of the findings of Figure 4, 5 and 6.

Additions to figure 4: In particular, non-REM states in sleep2 tended to concentrate in a region of increased power in the delta and beta bands, which could be the results of increased interactions with cortical activity modulated in the same range. It is also likely that such effect was induced by the exposure to relevant behavioral experience. In fact, changes in density of individual oscillations after learning have been reported using traditional analytical methods and are thought to support memory consolidation (Bakker et al., 2015; Eschenko et al., 2008, 2006). Nevertheless, while traditional methods provide information about individual components, the novel approach used here provides additional information about the combinatorial shift in the dynamics of network oscillations after learning or exploration. Thus, it provides the basis for identifying how coordinated activity among different oscillations supports memory consolidation processes, as those occurring during non-REM sleep after exploration, which cannot be elucidated using traditional analytical methods.

Additions to figure 5: Gamma segregation and delta decoupling offer a picture of hippocampal REM sleep as being more akin to awake locomotion (with the major difference of a stronger medium gamma presence) while also suggesting a substantial independence from cortical slow oscillations. On the other hand, the across-scale coherence of non-REM sleep is consistent with this sleep stage being dominated by brain-wide collective fluctuations engaging oscillations at every range. Distinct cross frequency coupling among various individual pairs of oscillations such as theta-gamma, delta-gamma etc., have been already reported (Bandarabadi et al., 2019; Clemens et al., 2009; Hammer et al., 2021; Scheffzük et al., 2011). However, computing cross frequency coupling on the state space provides the additional information on how multiple oscillations, obtained from distinct CA1 hippocampal layers (stratum pyramidale, stratum radiatum and stratum lacunosum moleculare), are coupled with each other during distinct states of sleep and wakefulness. Furthermore, projecting the correlation matrices on 2D plane, provides a compact tool that allows to visualize the cross-frequency interactions among various hippocampal oscillations. Altogether, this approach reveals the complex nature of coupling dynamics occurring in hippocampus during distinct behavioral states

Additions to Figure 6: We found that transitions occurring from REM-to-REM sleep and non-REM-to-non-REM sleep (intra-state transitions) are more vulnerable to plasticity after exploration as compared to inter-state transitions (such as non-REM to REM, REM-to-intermediate etc.) (Fig 6E, F). These changes in intra-state transitions were observed to be beyond randomness (Fig S9 E, F) indicating a specificity in plastic changes in state transitions after exploration. In particular, while the average REM period duration is unaltered after exploration (Fig 4G), REM temporal structure is reorganized. In fact, increased probability of REM to REM transitions indicates a significant prolongation of REM bout duration. Similarly, the increase in non-REM to non-REM transition probability reflects an increased duration of non-REM bouts. Therefore, environment exploration was accompanied by an increased separation between REM and non-REM periods, possibly as a response to increased computational demands. More in general, the network state space allows to characterize the state transitions in hippocampus and how they are affected by novel experience or learning. By observing the state transition patterns, this analytical framework allows to detect and identify state-specific changes in the hippocampal oscillatory dynamics, beyond the possibilities offered by more traditional univariate and bivariate methods. We next investigated how fast the network flows on the state space and assessed whether the speed is uniform, or it exhibits specific region-dependent characteristics.

Reviewer #3 (Public Review):

1) My primary concern is to provide clear evidence that this approach will provide key insights of high physiological significance, especially for readers who may think the traditional approaches are advantageous (for example due to their simplicity). I think the authors' findings of distinct sleep state signatures or altered organization of the NLG3-KO mouse could serve this purpose. However, right now the physiological significance of these results is unclear. For example, do these sleep state signatures predict later behavior performance, or is altered organization related to other functional impairments in the disease model? Do neurons with distinct sleep state signatures form distinct ensembles and code for related information?

We are thankful to the reviewer for raising a very interesting line of questioning regarding sleep signatures and distinct ensemble. In this study, we show that sleep state signatures can predict how individual cells may participate in information processing during open field exploration. However, further analysis exploring the recruitment of neuronal ensembles are in preparation for another manuscript and is beyond the scope of this article.

We have further modified the description of the results (as also suggested by other reviewers) to highlight the key advantages of this approach over traditional methods.

Regarding functional impairment: as described in the manuscript, the altered organization in animal model of autism could possibly due to alterations in cellular and synaptic mechanisms as those described in previous reports (Modi et al 2019, Foldy et al 2013)

2) For cells with different mean firing rates during exploration: is that because they are putative fast-spiking interneurons and pyramidal cells? From the reported mean firing rates, I think some of these cells are interneurons. Since mean firing rates are well known to vary with cell type, this should be addressed. For example, the sleep state signatures may be distinct for different putative pyramidal cells and interneurons. This would be somewhat expected considering prior work that has shown different cell types have different oscillatory coupling characteristics. I think it would be more interesting to determine if pyramidal cells had distinct sleep state signatures and, if so, whether pyramidal cells from the same sleep state signature have similar properties like they code for similar things or commonly fire together in an ensemble ms the number of cells in Fig. 8 may be limited for this analysis. The authors could use the hc-11 data in addition, which was also tested in this work.

We thank the reviewer for suggesting this additional analysis to better describe the data. To this end, we have added an additional Figure in supplementary data (analysis of hc11 dataset: Figure Figure 8 – figure supplement 3), to demonstrate that interneurons and pyramidal cells have distinct sleep signatures. These findings are in agreement with dataset presented in Figure 8D, E.

As shown in the manuscript, the spatial firing (sparsity) has large variability for cells having similar network signatures (Fig 8E). Thus, additional parameters beside oscillations may be involved in cells encoding. Different network state spaces are required to be explored in future studies to further understand this phenomenon in detail.

We agree that investigating neuronal ensembles and state space are an interesting direction to follow. In another study (in preparation) which are investigating in detail the recruitment of neuronal ensemble by oscillatory state space. Thus, those findings are beyond the scope of this introductory article.

3) Example traces are needed to show how LFPs change over the state-space. Example traces should be included for key parts of the state-space in Figures 2 and 3.

We thank the reviewer for this key insight on data representation. Example traces of how LFP varies on the state space have been added (see Figure 4 – figure supplement 1).

4) What is the primary rationale for 200ms time bins? Is this time scale sufficient to capture the slow dynamics of delta rhythm (1-5Hz) with a maximum of 1s duration?

Time scale of binning depends on the scale of investigation. We also replicated the results with different time bins (such as 50 ms and 1 seconds) and the results are identical. For delta rhythms, with 200 ms time bins, the dynamics will be captured across multiple bins. Additionally, the binned power time series are also smoothed before obtaining projections.

5) Since oscillatory frequency and power are highly associated with running speed, how does speed vary over the state space. Is the relationship between speed and state-space similar to the results of previous studies for theta (Slawinska and Kasicki, Brain Res 1998; Maurer et al, Hippocampus 2005) and gamma oscillations (Ahmed and Mehta J. Neurosci 2012; Kemere et al PLOS ONE 2013), or does it provide novel insights?

We thank the reviewer for highlighting this crucial link between oscillation and locomotion. While various articles have focused on individual oscillations, the combinatorial effects of multiple oscillations from multiple brain areas in regulating the speed of the animal during exploration is definitely worth exploring with this novel approach. These set of results will be introduced in another study, currently in preparation.

6) The separation of 9 states (Fig. 6ABC) seems arbitrary, where state 1 (bin 1) is never visited. I suggest plotting the density distribution of the data in Fig. 2A or Fig. 6A to better determine how many states are there within the state space. For example, five peaks in such a density plot might suggest five states. Alternately, clustering methods could be useful to determine how the number of states.

We thank the reviewer for this this useful suggestion. We agree that additional clustering methods can be used to identify non-canonical sleep states. These are currently being explored in our lab and will be part of future studies. As for this dataset, the density plots are available in figure 4E, which determines how many states are in each part of the state space.

7) The results in Fig. 4G are very interesting and suggest more variation of sub-states during non REM periods in sleep1 than in sleep2. What might explain this difference? Was it associated with more frequent ripple events occurring in sleep2?

The reviewer is right in looking for the source of the decreased of state variability in sleep2. Considering the distribution of relative frequency power in the state space, the higher concentration in sleep 2 corresponds to higher content in the slower delta and spindle frequency bands, rather than the higher frequencies of SWRs. This result can be interpreted in the light of enhanced cortical activity (which is known to heavily recruit those bands) and possibly of enhanced cortical-hippocampal communication following relevant behavioral experience. In fact, it is also necessary to mention that with our recording setup we cannot rule out the effects of volume conductance completely, and thus we cannot exclude that the increase in the delta and spindle bands in the hippocampus were a spurious effect of purely cortical frequency modulations.

8) The state transition results in Fig. 6 are confusing because they include two fundamentally different timescales: fast transitions between oscillatory states and slow dynamics of sleep states. I recommend clarifying the description in the results and the figure caption. Furthermore, how can an animal transition between the same sleep state (Fig. 6EF)? Would they both be in a single sleep state?

The transitions capture the fast oscillatory scales (as they are investigated over a timeframe of 1 second). The sleep stages (REM, non-REM etc.) are used as labels from which the states originate on the state space. This allows us to characterize fast oscillatory dynamics in various sleep stages.

Regarding same state transition: An increase in same state transition probability corresponds to increase in prolongation of that particular state, thereby altering the temporal structure of a given sleep state.

Reviewer #2 (Public Review):

Modi and colleagues describe a multivariate framework to analyze local field potentials, which is specifically applied to CA1 data in this work. Multivariate approaches are welcome in the field and the effort of the authors should be appreciated. However, I found the analyses presented here are too superficial and do not seem to bring new insights into hippocampal dynamics. Further, some surrogate methods used are not necessarily controlling for confounding variables. These concerns are further detailed below.

1. The authors in reality do not analyze oscillations themselves in this manuscript but only the power of signals filtered at determined frequency bands. This is particularly misleading when the authors talk about "spindles". Spindles are classically defined as a thalamico-cortical phenomenon, not recorded from hippocampus LFPs. Thus, the fact that you filter the signal in the same frequency range matching cortical spindles does not mean you are analyzing spindles. The terminology, therefore, is misleading. I would recommend the authors to change spindles to "beta", which at least has been reported in the hippocampus, although in very particular behavioral circumstances. However, one must note that the presence of power in such bands does not guarantee one is recording from these oscillations. For example, the "fast gamma" band might be related to what is defined as fast gamma nested in theta, but it might also be related to ripples in sleep recordings. The increase of "spindle" power in sleep here is probably related to 1/f components arising from the large irregular activity of slow wave sleep local field potentials. The authors should avoid these conceptual confusions in the manuscript, or show that these band power time courses are in fact matching the oscillations they refer to (for example, their spindle band is in fact reflecting increased spindle occurrence).

2. The shuffling procedure to control for the occupancy difference between awake and sleep does not seem to be sufficient. From what I understand, this shuffling is not controlling for the autocorrelation of each band which would be the main source of bias to be accounted for in this instance. Thus, time shifts for each band would be more appropriate. Further, the controls for trial durations should be created using consecutive windows. If you randomly sample sleep bins from distant time points you are not effectively controlling for the difference in duration between trial types. Finally, it is not clear from the text if the UMAP is recomputed for each duration-matched control. This would be a rigorous control as it would remove the potential bias arising from the unbalance between awake and sleep data points, which could bias the subspace to be more detailed for the LFP sleep features. It is very likely the results will hold after these controls, given it is not surprising that sleep is a more diverse state than awake, but it would be good practice to have more rigorous controls to formalize these conclusions.

3. Lots of the observations made from the state space approach presented in this manuscript lack any physiological interpretation. For example, Figure 4F suggests a shift in the state space from Sleep1 to Sleep2. The authors comment there is a change in density but they do not make an effort to explain what the change means in terms of brain dynamics. It seems that the spectral patterns are shifting away from the Delta X Spindle region (concluding this by looking at Fig4B) which could be potentially interesting if analyzed in depth. What is the state space revealing about the brain here? It would be important to interpret the changes revealed by this method otherwise what are we learning about the brain from these analyses? This is similar to the results presented in Figure 5, which are merely descriptions of what is seen in the correlation matrix space. It seems potentially interesting that non-REM seems to be split into two clusters in the UMAP space. What does it mean for REM that delta band power in pyramidal and lm layers is anti-correlated to the power within the mid to fast gamma range? What do the transition probabilities shown in Figures 6B and C suggest about hippocampal functioning? The authors just state there are "changes" but they don't characterize these systematically in terms of biology. Overall, the abstract multivariate representation of the neural data shown here could potentially reveal novel dynamics across the awake-sleep cycle, but in the current form of this manuscript, the observations never leave the abstract level.

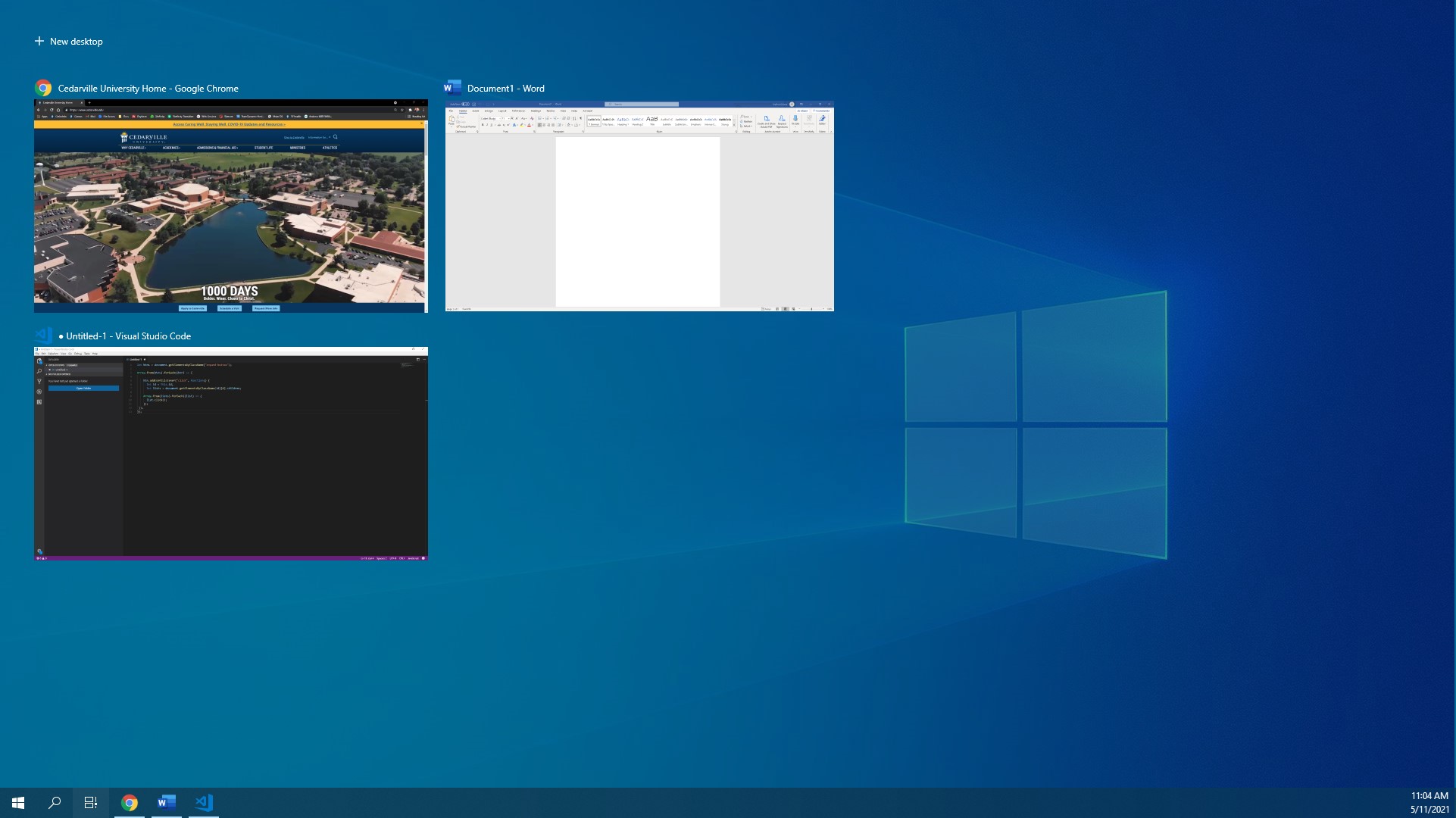

Press and hold the [Windows] key > Click the [Tab] key once. A row of screen shots representing all the open applications will appear.

[Window][Tab]

Windows Switch Between Open Windows Applications

Exclamation

It's not exclaimation, it's default beep.

need more work on Windows

Future work is needed.

Blackboard Collaborate Blackboard Collaborate works best with the Google Chrome browser, you can download and install this free. Chrome is available on Windows, Mac OS, Android (Google Play Store) and iOS (App Store) devices. Join a Collaborate Session You will be invited to the session via a link. A Blackboard Collaborate guest link looks like this, https://ca.bbcollab.com/guest/9b3e2fdd4ffd4bffb0b22fa76bd98f96 . This link is a test session in my Test course. You can join this at a time to suit you and explore Collaborate as a participant. When you click on the link it will open the session in your Browser. Add your name, then click the ‘Join Session’ button. Once you enter the Collaborate room, there are some functions to be aware of. Collaborate Panel Click the purple tab, bottom right, to open it Click the Settings cog wheel icon bottom right of the expanded panel, this is where you check your audio and video. Scroll down to set session notifications settings Click the Speech bubble icon bottom left. In here you can type your comments or conversation whilst the audio-visual presentation is going on. Session Controls There’s a row of four buttons bottom centre. My Status and Settings – click to check your status and give emoji feedback. Mute or unmute your microphone. Switch on or off your webcam video. Raise your hand to let the lecturer know you wish to speak. You should get a practice or test session with academic staff before the actual teaching session. This will help you understand the parts of the tool you need to interact with. This should be minimal, the academic will manage the session itself. Mobile device access to Collaborate Blackboard Collaborate allows you to connect to sessions with an Apple iPad, or iPhone, Android or Windows devices via a browser. A video guide to Collaborate on mobile devices opens in YouTube (18.06 mins) [Transcript]. You can do these things with Blackboard Collaborate on a mobile browser View the Whiteboard. View an Application on another user’s desktop. Join breakout rooms. Send and receive chat messages with the entire room. Listen to other speakers and speak to the room. Respond to polls. Collaborate Accessibility For the best Collaborate experience with your screen reader use Chrome and JAWS on a Windows system. On a Mac use Safari and VoiceOver. Windows 10 – Chrome with JAWS: Provisional Windows 7 – Chrome with JAWS: Compatible MacOS – Safari with VoiceOver: Certified MacOS – Chrome with VoiceOver: Provisional There are some useful keyboard shortcuts in Blackboard Collaborate, including: To turn the microphone on and off, press Alt + M in Windows. On a Mac, press Option + M. To turn the camera on and off, press Alt + C in Windows. On a Mac, press Option + C. To raise and lower your hand, press Alt + H in Windows. On a Mac, press Option + H. For detailed support for accessibility with Collaborate visit Blackboard Help

delete

Windows 11 is introducing a new app called Dev Home, currently in preview, specifically designed for developers. As the name implies, Dev Home aims to enhance developers’ productivity and streamline their workflow in software creation.

When will it come?

Women lined the rooftop and windows of the ten-story building and jumped, landing in a “mangled, bloody pulp.”

That sounds like a terrible sight to witness.

Suggested by Cato Minor<br /> Mac, Windows, Linux, iOS, Android

Windows 11 optional updates: Here why you should wait Status is online Joshua Bevis We help London based businesses transform their IT

Reviewer #3 (Public Review):

In their manuscript, Schneider et al. aim to develop voyAGEr, a web-based tool that enables the exploration of gene expression changes over age in a tissue- and sex-specific manner. The authors achieved this goal by calculating the significance of gene expression alterations within a sliding window, using their unique algorithm, Shifting Age Range Pipeline for Linear Modelling (ShARP-LM), as well as tissue-level summaries that calculated the significance of the proportion of differentially expressed genes by the windows and calculated enrichments of pathways for showing biological relevance. Furthermore, the authors examined the enrichment of cell types, pathways, and diseases by defining the co-expressed gene modules in four selected tissues. The voyAGEr was developed as a discovery tool, providing researchers with easy access to the vast amount of transcriptome data from the GTEx project. Overall, the research design is unique and well-performed, with interesting results that provide useful resources for the field of human genetics of aging. I have a few questions and comments, which I hope the authors can address.