The social media platform X (formerly Twitter) claimed record-breaking traffic numbers for the 2024 Super Bowl. However, further cybersecurity research found that 75% of the traffic X had touted was fake, while other social media platforms showed much lower rates. CHEQ's data also showed that 32% of X's visitors were bots. Elon Musk now faces advertiser concerns over his recent comments, which pose challenges for the platform's future.

- Jan 2025

-

social-media-ethics-automation.github.io

-

docdrop.org

-

The order built on the basis of the Leader-Arbiter mechanism leads to the personification of power. This mechanism of rule has made it easier to carry out market reforms and to resolve conflicts between influential groups. At the same time, however, it has become a serious obstacle to developing solid democratic institutions and transparent politics, leading to the formation of networks of informal ties and “shadow rules of the game” rather than a political system based on clear rules and the separation of powers.

The Leader-Arbiter mechanism in Russia helped push through market reforms but hindered the development of democratic institutions. Relying on "shadow rules" produced informal networks rather than rule-based policy, which undermined democratic progress and reinforced authoritarian practices. This passage suggests that political reform was difficult for a number of reasons: one was the informal networking, and another was the internal divisions it caused within the elite.

-

-

www.nytimes.com

-

The artist strives not to collect the most toys, rack up virtual kills or race to the jackpot square but simply to be in the game, map its corners, make time stretch — and maybe figure out a way to hack this world, change the rules and free us all. For victory is just a blip. The best games never end.

Finding out everything about the world... no, that's not right. The artist makes art to understand their own existence, not to rack up points. Maybe I should follow this path. This is a clever analogy from C. Thu Nguyen.

-

-

social-media-ethics-automation.github.io

-

On the other hand, some bots are made with the intention of harming, countering, or deceiving others. For example, people use bots to spam advertisements at people. You can use bots as a way of buying fake followers [c8], or making fake crowds that appear to support a cause (called Astroturfing [c9]). As one example, in 2016, Rian Johnson, who was in the middle of directing Star Wars: The Last Jedi, got bombarded by tweets that all originated in Russia (likely making at least some use of bots).

I've had my own experiences with antagonistic bots. In high school, a few of my friends used Kahoot bots to flood games with fake players. There was a website where you would enter the Kahoot game code and the bots would join by themselves.

-

-

www.dreamco.com

-

the gamestate—a collection of all relevant virtual information that may change during play

game state

-

MDA: Using the mechanics-dynamics-aesthetics model of game development, designers create aesthetic models for various types of gameplay. Aesthetics don’t refer to the looks of the game but rather to the emotional response the designer and development team hope to evoke in the players through the game dynamics. If mechanics are the rules and dynamics are the play of the game, then aesthetics are typically the fun (or lack thereof) experienced by playing. Designers ask themselves which aesthetic they hope to achieve, define the dynamics that would lead to this feeling, and then create the mechanics to produce the desired dynamics.

MDA in gaming

-

COMMON TERMS IN GAME DESIGN

COMMON TERMS IN GAME DESIGN ARE FOUND HERE

-

An activity with rules. It is a form of play often but not always involving conflict, either with other players, with the game system itself, or with randomness/fate/luck. Most games have goals, but not all (for example, The Sims and SimCity). Most games have defined start and end points, but not all (for example, World of Warcraft and Dungeons & Dragons). Most games involve decision making on the part of the players, but not all (for example, Candy Land and Chutes and Ladders). A video game is a game (as defined above) that uses a digital video screen of some kind, in some way.

What is a video game?

-

Yet its rules, simple as they are, allow for a depth of strategy so great that it is still played heavily today.

What keeps people playing a game

-

-

-

One of the best sources of information in the game world is the game itself. Game state can be transcribed into text so that an SLM can reason about the game world.

What is the mapping between the Game World and the Real world?
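The passage says game state can be transcribed into text so that a small language model can reason about it. The sketch below illustrates that idea in Python, treating game state as the information that may change during play. The `GameState` fields, the `describe` helper, and the sentence template are all hypothetical, and nothing here uses or imitates an NVIDIA ACE API.

```python
from dataclasses import dataclass, field


@dataclass
class GameState:
    """A toy game state: mutable information that can change during play.

    All fields are hypothetical, chosen only for illustration.
    """
    turn: int
    player_hp: int
    player_pos: tuple[int, int]
    inventory: list[str] = field(default_factory=list)
    visible_enemies: dict[str, tuple[int, int]] = field(default_factory=dict)


def describe(state: GameState) -> str:
    """Transcribe the state into plain sentences that could go into an SLM prompt."""
    items = ", ".join(state.inventory) if state.inventory else "empty"
    lines = [
        f"Turn {state.turn}.",
        f"You are at {state.player_pos} with {state.player_hp} HP.",
        f"Inventory: {items}.",
    ]
    if state.visible_enemies:
        # Sort by name so the same state always produces the same text.
        for name, pos in sorted(state.visible_enemies.items()):
            lines.append(f"A {name} is visible at {pos}.")
    else:
        lines.append("No enemies are visible.")
    return "\n".join(lines)


if __name__ == "__main__":
    state = GameState(turn=3, player_hp=12, player_pos=(4, 7),
                      inventory=["torch"], visible_enemies={"goblin": (5, 7)})
    print(describe(state))
```

The output is deterministic because enemies are listed in sorted order. This matters if the text is reused as a prompt and compared across turns.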

-

to incorporate ACE autonomous game characters into their titles.

So, would all autonomous game characters have the same strategies and personalities arising from NVIDIA's implementation, regardless of the game or platform? Interesting.

-

-

hawkeyecollege.simplesyllabus.com

-

1/19/25 Chapter 10 READING: Wrapping and Taping Techniques

I am excited to learn how to tape because before every soccer practice and game I have to get my ankle taped.

-

-

usrussiarelations.org

-

American Red Cross

To prepare you for your next trivia game night: who started the American Red Cross, and what is its purpose?

-

-

social-media-ethics-automation.github.io

-

Before electronic computers were generally available, when scientists wanted the results of some calculations, they sometimes hired “computers” [b114], which were people trained to perform the calculations.

This concept reminds me of an interesting plot point in The Three-Body Problem. In the novel's Three Body virtual game, a simplified "human computer" is described. Suppose three people face each other, each holding either a red or a white light. If a person sees that both of the others hold white lights, he holds up a white light as well; otherwise, he holds up a red light. This arrangement works like a logic gate, representing the binary system used in computers.
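The "human computer" rule described above behaves like an AND logic gate. The minimal Python sketch below assumes that white encodes 1 and red encodes 0. It models one person as the output, who watches the other two as inputs. The function names are illustrative and do not come from the novel.

```python
from itertools import product

WHITE, RED = "white", "red"


def human_gate(left: str, right: str) -> str:
    """One 'person': holds white only if both watched neighbours hold white."""
    return WHITE if left == WHITE and right == WHITE else RED


def to_bit(light: str) -> int:
    # Assumption: white encodes binary 1 and red encodes binary 0.
    return 1 if light == WHITE else 0


def truth_table() -> list[tuple[int, int, int]]:
    """Enumerate every input combination and the resulting output light."""
    return [(to_bit(a), to_bit(b), to_bit(human_gate(a, b)))
            for a, b in product([RED, WHITE], repeat=2)]


if __name__ == "__main__":
    for a, b, out in truth_table():
        print(f"{a} AND {b} = {out}")
```

The output is white (1) only when both inputs are white, which is the AND truth table. Chaining such people together is how the novel's army of soldiers builds up a larger computer.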

-

social-media-ethics-automation.github.io

-

Some platforms are used for sharing text and pictures (e.g., Facebook, Twitter, LinkedIn, WeChat, Weibo, QQ), some for sharing video (e.g., YouTube, TikTok), some for sharing audio (e.g., Clubhouse), some for sharing fanfiction (e.g., Fanfiction.net, AO3), some for gathering and sharing knowledge (e.g., Wikipedia, Quora, StackOverflow), and some for sharing erotic content (e.g., OnlyFans).

I think some games could also be regarded as social media. For instance, I used to play Game for Peace, a mobile shooting game in China. In this app, players team up and work together to be the last ones standing, so discussion and communication are very frequent. Sometimes the relationships go beyond being teammates: some people use the game to find a girlfriend or boyfriend, and some players make money by selling their services to other players. So I think some gaming platforms should also be included as a kind of social media.

-

-

today.tamu.edu

-

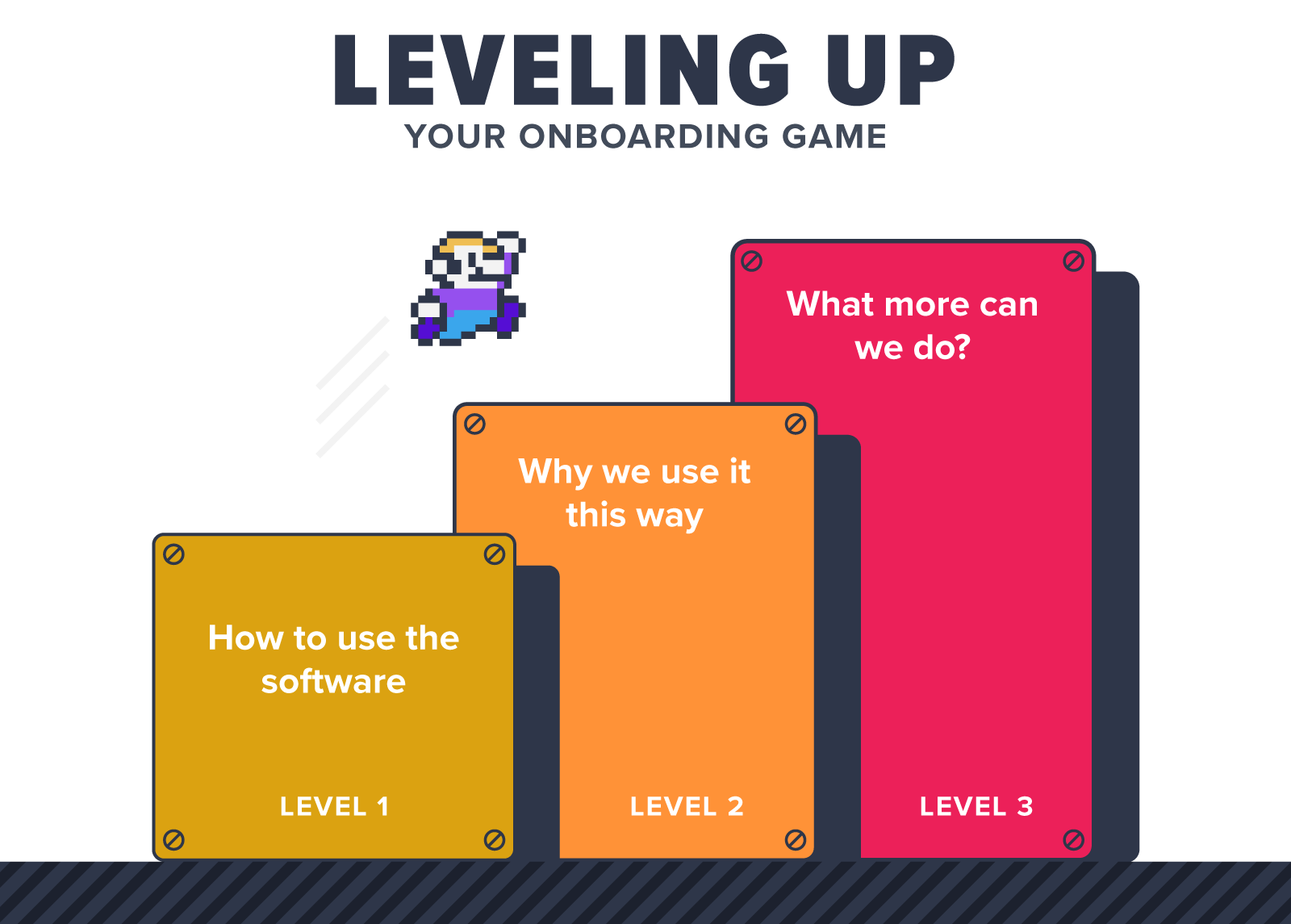

Games are a natural way to allow students to fail in a safe way, learn from failures and try again until they succeed. Some games, like Burnout Paradise, make failure fun. In the game, players can crash their cars – and the more spectacular the crash, the higher the points. This allows players to essentially learn from their mistakes, correct them and try again. The late video game theorist and author Jesper Juul wrote in his book, “The Art of Failure,” that losing in video games is part of what makes games so engaging. Failing in a game makes the player feel inadequate, yet the player can immediately redeem themselves and improve their skills.

Failure in video games not only adds a challenge that makes games more fun; the difficulty can also teach people to devise new strategies and make important decisions in the game, which can improve real-life skills.

-

The use of video games in the classroom is nothing new. Many people who went to school in the 1970s through the 1990s may recall the iconic video game The Oregon Trail, which made its debut in a classroom in 1971.

"The Oregon Trail" was a massive success in both education and entertainment. in addition, several other edutainment games have been made for ages 3-8, most being very popular

-

-

www.are.na

-

these communities are trying to sift through the layers of the world to see what else might have been left behind at the code level by the developers during the making of the game.

intertextual (esp wrt code); marginalia; SKAM — transmedia emergent storytelling

-

Spectator Mode becomes a way to analyze the game world from the outside in, to instantly uncover the secret paths and tunnels in the ground below, to look at the gameworld from within a block, or from under the bedrock looking up through pools of lava, hidden diamonds, and glowing skeletons.

in what ways do you understand / come to learn a system, a world, an infrastructure? cf. position/where one is situated across an AI tech stack

-

-

nicholasmuellner.com

-

But the uneasy fascination of the adjacent, vacant landscape rested in its unexpected insistence on presence. Suddenly, this primitive attempt at virtuality

cf Travess Smalley: https://www.are.na/editorial/where-boundary-breaks

-

-

biaiscognitifs.innovation.maif

-

www.biorxiv.org

-

Author response:

The following is the authors’ response to the previous reviews.

Reviewer #1 (Public review):

As a starting point, the authors discuss the so-called "additive partitioning" (AP) method proposed by Loreau & Hector in 2001. The AP is the result of a mathematical rearrangement of the definition of overyielding, written in terms of relative yields (RY) of species in mixtures relative to monocultures. One term, the so-called complementarity effect (CE), is proportional to the average RY deviations from the null expectations that plants of both species "do the same" in monocultures and mixtures. The other term, the selection effect (SE), captures how these RY deviations are related to monoculture productivity. Overall, CE measures whether relative biomass gains differ from zero when averaged across all community members, and SE, whether the "relative advantage" species have in the mixture, is related to their productivity. In extreme cases, when all species benefit, CE becomes positive.

This is not true; positive CE does not require positive RY deviations of all species. CE is positive as long as the average RY deviation is greater than 0. In a 2-species mixture, for example, if the RY deviation of one species is -0.2 and that of the other species is +0.3, CE would still be positive. Positive CE can be associated with a negative NE (net biodiversity effect) when the more productive species has a negative RY deviation that is smaller in magnitude than the positive RY deviation of the less productive species. Therefore, the reviewer's suggestion that “This is intuitively compatible with the idea that niche complementarity mitigates competition (CE>0)” is not correct.
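The two-species argument can be checked numerically with the Loreau & Hector (2001) partition:

ΔY = N·mean(ΔRY)·mean(M) + N·cov(ΔRY, M)

The first term is CE and the second is SE. The minimal Python sketch below implements that formula. The yields are hypothetical: monocultures of 10 and 2, mixture yields of 3 and 1.6, and equal planting proportions. They were chosen only to reproduce the RY deviations of -0.2 and +0.3 mentioned in the response, and they are not data from the manuscript.

```python
from statistics import fmean


def additive_partition(mono, mix, props):
    """Loreau & Hector (2001) additive partitioning of the net biodiversity effect.

    mono  -- monoculture yield M_i of each species
    mix   -- observed yield of each species in the mixture
    props -- planted proportion of each species (its expected relative yield)
    Returns (net_effect, complementarity_effect, selection_effect).
    """
    n = len(mono)
    # Deviation of observed from expected relative yield: dRY_i = Y_i / M_i - p_i
    d_ry = [y / m - p for y, m, p in zip(mix, mono, props)]
    mean_dry, mean_m = fmean(d_ry), fmean(mono)
    # CE = N * mean(dRY) * mean(M)
    ce = n * mean_dry * mean_m
    # SE = N * cov(dRY, M), using the population covariance (divide by N)
    cov = sum((d - mean_dry) * (m - mean_m) for d, m in zip(d_ry, mono)) / n
    se = n * cov
    # Net effect computed directly: observed total minus expected total
    net = sum(mix) - sum(p * m for p, m in zip(props, mono))
    return net, ce, se


if __name__ == "__main__":
    # Hypothetical yields: dRY = -0.2 for the productive species, +0.3 for the other.
    net, ce, se = additive_partition(mono=[10, 2], mix=[3, 1.6], props=[0.5, 0.5])
    print(f"NE = {net:.2f}, CE = {ce:.2f}, SE = {se:.2f}")
```

With these numbers, CE is positive (0.6) while SE is -2.0, so the net effect is negative (-1.4). CE + SE equals NE by construction. This is the situation the response describes: a positive CE coexisting with a negative NE.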

When large species have large relative productivity increases, SE becomes positive. This is intuitively compatible with the idea that niche complementarity mitigates competition (CE>0), or that competitively superior species dominate mixtures and thereby drive overyielding (SE>0).

The use of the word “mitigate” implies that the effects of niche complementarity and competition act in opposite directions, which is not true for biodiversity experiments based on a replacement design. We explained this in detail in our first responses to the reviewers.

However, it is very important to understand that CE and SE capture the "statistical structure" of RY that underlies overyielding. Specifically, CE and SE are not the ultimate biological mechanisms that drive overyielding, and never were meant to be. CE also does not describe niche complementarity. Interpreting CE and SE as directly quantifying niche complementarity or resource competition is simply wrong, although it sometimes is done. The criticism of the AP method thus in large part seems unwarranted. The alternative methods the authors discuss (lines 108-123) are based on very similar principles.

We agree. However, if CE and SE are not meant to represent biological mechanisms, as the reviewer suggests, then the argument “This is intuitively compatible with the idea that niche complementarity mitigates competition (CE>0), or that competitively superior species dominate mixtures and thereby drive overyielding (SE>0)” would be invalid.

Lines 108-123 do not describe our method.

The authors now set out to develop a method that aims at linking response patterns to "more true" biological mechanisms.

Assuming that "competitive dominance" is key to understanding mixture productivity, because "competitive interactions are the predominant type of interspecific relationships in plants", the authors introduce "partial density" monocultures, i.e. monocultures that have the same planting density for a species as in a mixture. The idea is that using these partial density monocultures as a reference would allow for isolating the effect of competition by the surrounding "species matrix".

The authors argue that "To separate effects of competitive interactions from those of other species interactions, we would need the hypothesis that constituent species share an identical niche but differ in growth and competitive ability (i.e., absence of positive/negative interactions)." - I think the term interaction is not correctly used here, because clearly competition is an interaction, but the point made here is that this would be a zero-sum game.

We did not say that competition is not an interaction.

The authors use the ratio of productivity of partial density and full-density monocultures, divided by planting density, as a measure of "competitive growth response" (abbreviated as MG). This is the extra growth a plant individual produces when intraspecific competition is reduced.
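The reviewer's verbal definition of MG can be written as a one-line function. This Python sketch covers only that reading. It assumes planting density is expressed as a fraction of full density (for example, 0.5 for a species in a two-species, equal-proportion replacement mixture). The function name is hypothetical, and the manuscript's exact equation may differ.

```python
def competitive_growth_response(partial_yield: float, full_yield: float,
                                density: float) -> float:
    """MG = (partial-density yield / full-density yield) / density.

    `density` is assumed to be the partial monoculture's planting density as a
    fraction of full density. Because per-plant yield at partial density is
    partial_yield / (density * N) and at full density is full_yield / N, a
    value above 1 means each plant grew more when conspecific density was reduced.
    """
    if not 0 < density <= 1:
        raise ValueError("density must be a fraction in (0, 1]")
    if full_yield <= 0:
        raise ValueError("full-density yield must be positive")
    return (partial_yield / full_yield) / density


if __name__ == "__main__":
    # Hypothetical numbers: a half-density monoculture yielding 7 vs. 10 at full density.
    print(competitive_growth_response(7, 10, 0.5))
```

In this hypothetical case, MG is about 1.4, meaning each plant produced roughly 40% more biomass when intraspecific competition was halved.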

Here, I see two issues: first, this rests on the assumption that there is only "one mode" of competition if two species use the same resources, which may not be true, because intraspecific and interspecific competition may differ. Of course, one can argue that then somehow "niches" are different, but such a niche definition would be very broad and go beyond the "resource set" perspective the authors adopt. Second, this value will heavily depend on timing and the relationship between maximum initial growth rates and competitive abilities at high stand densities.

True. Research findings indicate that the biodiversity effects detected with AP are not constant.

The authors then define relative competitive ability (RC), this time simply using monoculture biomass as a measure of competitive ability. To express this biomass in a standardized way, they express it as the difference from the mean of the other species and then divide by the maximum monoculture biomass of all species.
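Written out, the RC definition summarized here is:

RC_i = (M_i − mean of M_j over the other species j) / max_j M_j

The Python sketch below follows the reviewer's verbal description only. The function name is hypothetical, and the manuscript's exact formula may differ.

```python
from statistics import fmean


def relative_competitive_ability(mono: list[float]) -> list[float]:
    """RC_i = (M_i - mean of the other species' monoculture biomass) / max_j M_j.

    Follows the reviewer's summary of the method; requires at least two species.
    """
    if len(mono) < 2:
        raise ValueError("need monoculture biomass for at least two species")
    top = max(mono)
    rc = []
    for i, m in enumerate(mono):
        others = mono[:i] + mono[i + 1:]  # every species except i
        rc.append((m - fmean(others)) / top)
    return rc


if __name__ == "__main__":
    # Hypothetical monoculture biomasses for three species.
    print(relative_competitive_ability([10, 6, 2]))
```

With these hypothetical biomasses, the result is [0.6, 0.0, -0.6]. Every RC value shares the same denominator, so adding or removing the most productive species rescales all species' RC at once.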

I have two concerns here: first, if competitive ability is the capability of a species to preempt resources from a pool also accessed by another species, as the authors argued before, then this seems wrong because one would expect that a species can simply be more productive because it has a broader niche space that it exploits. This contradicts the very narrow perspective on competitive ability the authors have adopted. This also is difficult to reconcile with the idea that specialist species with a narrow niche would outcompete generalist species with a broad niche.

Competitive ability is not necessarily associated with species niche space. Both generalist and specialist species can be more productive at a particular study site, as long as they are more capable of obtaining resources from a local pool. Remember, biodiversity experiments are conducted at a site of particular conditions, not across a range of species niche space at landscape level.

Second, I am concerned by the mathematical form. Standardizing by the maximum makes the scaling dependent on a single value.

As explained in lines 370-376, the mathematical form is a linear approximation, because the relationship between competitive growth responses and species' relative competitive ability is generally unknown but likely nonlinear. Once that relationship is determined in future research, the scaling factor will no longer be needed.

As a final step, the authors calculate a "competitive expectation" for a species' biomass in the mixture, by scaling deviations from the expected yield by the product MG ⨯ RC. This would mean a species does better in a mixture when (1) it benefits most from a conspecific density reduction, and (2) has a relatively high biomass.

Put simply, the assumption would be that if a species is productive in monoculture (high RC), it effectively does not "see" the competitors and then grows like it would be the sole species in the community, i.e. like in the partial density monoculture.

Overall, I am not very convinced by the proposed method.

Comments on revised version:

Only minimal changes were made to the manuscript, and they do not address the main points that were raised.

Reviewer #2 (Public review):

This manuscript by Tao et al. reports on an effort to better specify the underlying interactions driving the effects of biodiversity on productivity in biodiversity experiments. The authors are especially concerned with the potential for competitive interactions to drive positive biodiversity-ecosystem functioning relationships by driving down the biomass of subdominant species. The authors suggest a new partitioning schema that utilizes a suite of partial density treatments to capture so-called competitive ability. While I agree with the authors that understanding the underlying drivers of biodiversity-ecosystem functioning relationships is valuable - I am unsure of the added value of this specific approach for several reasons.

No responses.

Comments on revised version:

The authors changed only one minor detail in response to the last round of reviews.

Reviewer #3 (Public review):

Summary:

This manuscript claims to provide a new null hypothesis for testing the effects of biodiversity on ecosystem functioning. It reports that the strength of biodiversity effects changes when this different null hypothesis is used. This main result is rather inevitable. That is, one expects a different answer when using a different approach. The question then becomes whether the manuscript's null hypothesis is both new and an improvement on the null hypothesis that has been in use in recent decades.

Our approach adopts two hypotheses: a null hypothesis, which is also used in the additive partitioning model, and a new competitive hypothesis. The null hypothesis assumes that inter- and intra-species interactions are the same, while the competitive hypothesis assumes that species differ in competitive ability and growth rate. Therefore, our approach is an extension of the current approach. Our approach separates the effects of competitive interactions from those of other species interactions, while the current approach does not.

Strengths:

In general, I appreciate studies like this that question whether we have been doing it all wrong and I encourage consideration of new approaches.

Weaknesses:

Despite many sweeping critiques of previous studies and bold claims of novelty made throughout the manuscript, I was unable to find new insights. The manuscript fails to place the study in the context of the long history of literature on competition and biodiversity and ecosystem functioning.

We have explained in our first responses that competition and biodiversity effects are studied in different experimental approaches, i.e., additive and replacement designs. Results from one approach are not compatible with those from the other. For example, competition effect with additive design is negative but generally positive with replacement design that is used extensively in biodiversity experiments. We have considered species competitive ability, density-growth relationship, and different effects of competitive interactions between additive and replacement design, while the current method does not reflect any of those.

The Introduction claims the new approach will address deficiencies of previous approaches, but after reading further I see no evidence that it addresses the limitations of previous approaches noted in the Introduction. Furthermore, the manuscript does not reproducibly describe the methods used to produce the results (e.g., in Table 1) and relies on simulations, claiming experimental data are not available when many experiments have already tested these ideas and not found support for them.

We used simulation data, as partial density monocultures are generally not available in previous biodiversity experiments.

Finally, it is unclear to me whether rejecting the 'new' null hypothesis presented in the manuscript would be of interest to ecologists, agronomists, conservationists, or others.

Our null hypothesis is the same as the null hypothesis in additive partitioning, which assumes that inter- and intra-species interactions are the same, while our competitive hypothesis assumes that species differ in competitive ability and growth rate. Rejecting the null hypothesis means that inter- and intra-species interactions are different, whereas rejecting the competitive hypothesis indicates the existence of positive/negative species interactions. This would be interesting to everyone.

Comments on revised version:

Please see review comments on the previous version of this manuscript. The authors have not revised their manuscript to address most of the issues previously raised by reviewers.

No responses.

Recommendations for the authors:

Reviewer #1 (Recommendations for the authors):

Do take reviews seriously. Even if you think the reviewers all are wrong and did not understand your work, then this seems to indicate that it was not clearly presented.

Reviewer #2 (Recommendations for the authors):

I can understand that the authors are perhaps frustrated with what they perceive as a basic misunderstanding of their goals and approach. This misunderstanding, however, provides an opportunity to clarify. I believe that the authors have tried to clarify in rebutting our statements but would do better to clarify in the manuscript itself. If we reviewers, who are deeply invested in this field, don't understand the approach and its value, then it is likely that many readers will not either.

Additive partitioning has been publicly questioned several times since the method was conceived in 2001. Our work provides an alternative.

-

-

www.atelier-collaboratif.com

-

Briefing Doc: Exploring Facilitation Techniques

This document summarizes the key concepts and facilitation methods presented in the "Kit de Facilitation" (https://www.atelier-collaboratif.com/telechargements/kit-de-facilitation.pdf).

Main Themes:

The importance of preparation:

Before each workshop, a reflection phase is essential to define the objective and identify the participants and the expected deliverables.

Risks and possible solutions should also be anticipated. The "Kit" provides an "ORGANISATION" board to structure this preparation (p. 4).

Diversity of practices:

The kit presents a range of "practice cards" (p. 5), classified by difficulty level (Improve, Prioritize/Decide, Break the ice, Generate ideas, etc.), that offer varied techniques for each stage of a collaborative workshop.

The importance of collective intelligence:

Most of the techniques presented aim to stimulate everyone's active participation, encourage the sharing of ideas, and foster the co-construction of solutions.

Key Ideas and Facts:

The facilitator's role:

The facilitator plays a central role in a workshop's success. The facilitator guides the group, makes sure activities run smoothly, and takes care to create an environment conducive to collaboration.

The use of visual tools:

Tools such as sticky notes, boards, and cards are frequently used to help visualize ideas, structure discussions, and make collective decisions.

The importance of feedback:

Several techniques (e.g., ROTI Agile, Perfection Game) make it possible to collect feedback from participants, which is crucial for continuously improving workshops.

Examples of Techniques and Quotations:

The 4L Retrospective (p. 6):

Used to take stock of an activity through the analogy of a car. Participants identify on cards "what holds us back" (the wind) and "what drives us forward" (the engine).

Gommettocratie (p. 9):

A simple, visual prioritization technique.

Participants vote for the ideas that appeal to them most by sticking colored dots on them.

The Paper Social Network (p. 11):

A playful game to break the ice and let participants get to know each other.

World Café (p. 18):

"Invented in 2009 by Jim Benson and Jeremy Lightsmith".

This technique encourages dialogue and the exchange of ideas on several topics in small groups.

Impact Mapping (p. 24):

Used to "visually represent the impacts and hypotheses of a product's development".

Point of View (POV) Method (p. 25):

"The point of view is the perception of the problem as seen by the user." This method helps center the work on users' needs.

Conclusion:

The "Kit de Facilitation" is a valuable resource for anyone who wants to run effective collaborative workshops.

It offers a wide variety of techniques and tools for each stage of the process, from preparation to implementing decisions.

-

- Dec 2024

-

4thgenerationcivilization.substack.com

-

The Everywheres are on the contrary nomadic elements that are willing to be of service to cosmo-local productive economic alliances, seeding various locales with the trans-local experience, both of other locales they may have visited, but also of the network itself.

Seductive analogy. Reminds me of Daniel Schmachtenberger's Game A (civilizational problems of competition) and Game B (beyond competition), which creates a simple US vs. THEM framing for convening like-minded activists, but I'm not sure it describes the virtual landscape well enough for implementers seeking to develop and deploy new type(s) of competence.

-

-

arxiv.org

-

Reviewer #1 (Public review):

Summary:

Zhang et al. addressed the question of whether advantageous and disadvantageous inequality aversion can be vicariously learned and generalized. Using an adapted version of the ultimatum game (UG), in three phases, participants first gave their own preference (baseline phase), then interacted with a "teacher" to learn their preference (learning phase), and finally were tested again on their own (transfer phase). The key measure is whether participants exhibited similar choice preferences (i.e., rejection rate and fairness rating) influenced by the learning phase, by contrasting their transfer phase and baseline phase. Through a series of statistical modeling and computational modeling, the authors reported that both advantageous and disadvantageous inequality aversion can indeed be learned (Study 1), and even be generalised (Study 2).

Strengths:

This study is very interesting; it directly adapts the lab's previous work on the observational learning effect on disadvantageous inequality aversion to test both advantageous and disadvantageous inequality aversion. The social transmission of action, emotion, and attitude has only recently started to be examined, so this research is timely. The use of computational modeling is mostly appropriate and well motivated. Study 2, which examined vicarious inequality aversion in conditions where feedback was never provided, is interesting and important for strengthening the reported effects. Both studies properly justify their sample sizes.

Weaknesses:

Despite the strengths, a few conceptual aspects and analytical decisions have to be explained, justified, or clarified.

INTRODUCTION/CONCEPTUALIZATION

(1) Two terms seem to be used interchangeably in this work, although they should not be: vicarious/observational learning vs. preference learning. For vicarious learning, individuals observe others' actions (and optionally also the consequences resulting directly from those actions), whereas, for preference learning, individuals predict, or act on behalf of, the others' actions, and then receive feedback on whether that prediction is correct. In the current work, the experiment seems to be more about preference learning and prediction, and less so about vicarious learning. The introduction and setup are framed heavily around vicarious learning, and later the terms vicarious learning and preference learning are used rather interchangeably in the text. I think the authors should either tone down the focus on vicarious learning or discuss how the two differ. Some of the references here may be helpful: Charpentier et al., Neuron, 2020; Olsson et al., Nature Reviews Neuroscience, 2020; Zhang & Glascher, Science Advances, 2020

EXPERIMENTAL DESIGN

(2) For each offer type, the experiment "added a uniformly distributed noise in the range of (-10, 10)". I wonder what this looks like: only integers such as 25:75, or values with decimal points? More importantly, is it possible for either the 70:30 or the 90:10 option, after the noise is added, to generate an 80:20 split shown to participants? If so, in the later analyses, when participants saw the 80:20 split, which condition did this trial belong to: 70:30 or 90:10? And is this noise added only in the learning phase, or also in the baseline/transfer phases? This requires some clarification.

(3) For the offer conditions (90:10, 70:30, 50:50, 30:70, 10:90) - are they randomized? If so, how is it done? Is it randomized within each participant, and/or also across participants (such that each participant experienced different trial sequences)? This is important, as the order especially for the learning phase can largely impact the preference learning of the participants.
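The overlap question in point (2) can be checked with a few lines of Python. This sketch assumes integer-valued noise applied to the proposer's share. The excerpt does not say whether the "(-10, 10)" endpoints can actually be drawn, so that is left as a parameter. The function names are hypothetical.

```python
def reachable_shares(base: int, width: int = 10, inclusive: bool = True) -> set[int]:
    """Proposer shares that integer noise can produce from a base offer.

    inclusive=True allows noise in [-width, width]; inclusive=False allows
    only (-width, width), i.e. -width + 1 .. width - 1.
    """
    low, high = (-width, width) if inclusive else (-width + 1, width - 1)
    return {base + d for d in range(low, high + 1)}


def ambiguous_shares(a: int, b: int, **kwargs) -> set[int]:
    """Displayed proposer shares that could have come from either base offer."""
    return reachable_shares(a, **kwargs) & reachable_shares(b, **kwargs)


if __name__ == "__main__":
    for a, b in [(90, 70), (70, 50)]:
        for inclusive in (True, False):
            shared = sorted(ambiguous_shares(a, b, inclusive=inclusive))
            print(f"{a}:{100 - a} vs {b}:{100 - b}, inclusive={inclusive}: {shared}")
```

Consider inclusive integer bounds first. An 80:20 display is then reachable from both 90:10 and 70:30, and a 60:40 display from both 70:30 and 50:50. This is exactly the labeling ambiguity the reviewer raises. With exclusive bounds, the ranges do not overlap. With continuous (decimal) noise, an exact tie has probability zero, but displays close to 80:20 can still come from either source.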

STATISTICAL ANALYSIS & COMPUTATIONAL MODELING

(4) For the Study 1 DI offer types (90:10, 70:30), the rejection rate for the DI-AI averse condition looks consistently higher than that for the DI averse condition (i.e., the blue line is above the yellow line). Is this difference significant? If so, why? Since this is a between-subject design, I would not anticipate such a result (especially at baseline). Also, for the LME results (e.g., Table S3), only interactions were reported, not the main effects.

(5) I do not particularly find this analysis appealing: "we examined whether participants' changes in rejection rates between Transfer and Baseline, could be explained by the degree to which they vicariously learned, defined as the change in punishment rates between the first and last 5 trials of the Learning phase." Naturally, the participants' behavior in the first 5 trials in the learning phase will be similar to those in the baseline; and their behavior in the last 5 trials in the learning phase would echo those at the transfer phase. I think it would be stronger to link the preference learning results to the change between the baseline and transfer phase, eg, by looking at the difference between alpha (beta) at the end of the learning phase and the initial alpha (beta).

(6) I wonder whether the data from the baseline and transfer phases could also be modeled using a simple Fehr-Schmidt model. This way, the change in alpha/beta between the baseline and transfer phases could also be examined.

(7) I quite liked Study 2, which tests the generalization effect, and I expected the computational modeling to be adapted to reflect this idea directly. Indeed, the authors wrote, "[...] given that this model [...] assumes the sort of generalization of preferences between offer types [...]". But where exactly does the preference-learning model assume this generalization? In the methods, the modeling seems to concern only Study 1. Did the authors adapt their model to accommodate Study 2? The authors also ran simulations of the learning phase in Study 2 (Figure 6). How, if at all, did the preferences update for the offers on which no feedback was given (90:10 and 10:90)? Extending and unpacking the computational modeling results for Study 2 would be very helpful for the paper.
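Point (6) suggests fitting a simple Fehr-Schmidt model to the baseline and transfer data. The Python sketch below shows one way this could look. Only the Fehr-Schmidt utility and its two parameters come from the model itself: alpha weights disadvantageous inequity and beta weights advantageous inequity. Everything else is assumed for illustration:

- the participant is the responder;
- a rejection pays both players 0;
- choices follow a logistic rule with an inverse temperature `tau`;
- parameters are fitted by grid-search maximum likelihood;
- all function names are hypothetical.

Fitting each phase separately and comparing the fitted alpha and beta would give the baseline-to-transfer change the review asks about.

```python
import math


def fs_utility(own, other, alpha, beta):
    """Two-player Fehr-Schmidt utility of accepting the split (own, other)."""
    return own - alpha * max(other - own, 0) - beta * max(own - other, 0)


def logistic(z):
    """Numerically stable 1 / (1 + exp(-z))."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def p_reject(own, other, alpha, beta, tau):
    # ASSUMPTION: rejecting gives both players 0 (utility 0), and choice is a
    # logistic function of the utility difference scaled by tau.
    return logistic(-tau * fs_utility(own, other, alpha, beta))


def neg_log_likelihood(trials, alpha, beta, tau, eps=1e-12):
    """trials: iterable of (own, other, rejected) tuples."""
    nll = 0.0
    for own, other, rejected in trials:
        p = p_reject(own, other, alpha, beta, tau)
        p = min(max(p, eps), 1.0 - eps)  # guard against log(0)
        nll -= math.log(p if rejected else 1.0 - p)
    return nll


def fit_fehr_schmidt(trials, alphas, betas, taus):
    """Grid-search maximum likelihood; returns (nll, alpha, beta, tau)."""
    best = None
    for alpha in alphas:
        for beta in betas:
            for tau in taus:
                nll = neg_log_likelihood(trials, alpha, beta, tau)
                if best is None or nll < best[0]:
                    best = (nll, alpha, beta, tau)
    return best


if __name__ == "__main__":
    # Toy data (not from the study), as (own, other) from the responder's view,
    # so the 90:10 offer is (10, 90). This responder rejects only when own < 50.
    offers = [(10, 90), (30, 70), (50, 50), (70, 30), (90, 10)]
    trials = [(own, other, own < 50) for own, other in offers] * 10
    print(fit_fehr_schmidt(trials, [0.0, 0.25, 0.5, 1.0, 2.0],
                           [0.0, 0.5], [0.05, 0.1, 0.2]))
```

The toy responder rejects only disadvantageous offers. For that data the grid search settles on alpha = 2.0, beta = 0.0 and tau = 0.2, a corner of the grid. A real analysis would use a finer grid, or a numerical optimizer, fitted once on baseline trials and once on transfer trials.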

-

-

www.kickstarter.com www.kickstarter.com

-

www.kickstarter.com www.kickstarter.com

-

www.youtube.com www.youtube.com

-

for - TED Talk - YouTube - A word game to convey any language - Ajit Narayanan - potential source - Deep Humanity - BEing journeys in language - appreciation of inhabiting the symbolosphere // - Summary - An interesting idea of teasing out the data structure behind language - This could be a rich area to explore for Deep Humanity language BEing journeys, helping people gain a deeper appreciation of their own amazing language abilities - as well as an appreciation of how much of our lives we spend in the (relative) symbolosphere

-


Ajit Narayanan:

A word game to communicate in any language

-

supposing I was a writer, say, for a newspaper or for a magazine. I could create content in one language, FreeSpeech, and the person who's consuming that content, the person who's reading that particular information could choose any engine, and they could read it in their own mother tongue, in their native language

for - freespeech can be used as an international language translator - data structure of thought - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan

-

when you want to use Google, you go into Google search, and you type in English, and it matches the English with the English. What if we could do this in FreeSpeech instead? I have a suspicion that if we did this, we'd find that algorithms like searching, like retrieval, all of these things, are much simpler and also more effective, because they don't process the data structure of speech. Instead they're processing the data structure of thought

for - indyweb dev - question - alternative to AI Large Language Models? - Is indyweb functionality the same as Freespeech functionality? - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan - data structure of thought - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan

-

language is really the brain's invention to convert this rich, multi-dimensional thought on one hand into speech on the other hand.

for - key insight - ideas are multidimensional - speech is one dimensional - language is one dimensional - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan

-

the dream, the hope, the vision, really, is that when they learn English this way, they learn it with the same proficiency as their mother tongue.

for - investigate - question - Does this other app that allows learning another language with the proficiency of a child exist? - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan

-

there were a group of scientists that were trying to understand how the brain processes language, and they found something very interesting. They found that when you learn a language as a child, as a two-year-old, you learn it with a certain part of your brain, and when you learn a language as an adult -- for example, if I wanted to learn Japanese

for - research study - language - children learning their mother tongue use a different part of the brain than adults learning another language - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan

-

if I wasn't an English speaker, if I was speaking in some other language, this map would actually hold true in any language. So long as the questions are standardized, the map is actually independent of language. So I call this FreeSpeech

for - app - Free Speech - permutations of pictures that can create meaning without using language - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan

-

grammar is incredibly powerful, because grammar is this one component of language which takes this finite vocabulary that all of us have and allows us to convey an infinite amount of information, an infinite amount of ideas. It's the way in which you can put things together in order to convey anything you want to

for - the power of grammar - infinite permutations of meaning using a finite set of symbols - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan

Tags

- research study - language - children learning their mother tongue use a different part of the brain than adults learning another language - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan

- the power of grammar - infinite permutations of meaning using a finite set of symbols - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan

- potential source - Deep Humanity - BEing journeys in language

- app - Free Speech - permutations of pictures that can create meaning without using language - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan

- investigate - question - Does this other app that allows learning another language with the proficiency of a child exist? - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan

- TED Talk - YouTube - A word game to convey any language - Ajit Narayanan

- indyweb dev - question - alternative to AI Large Language Models? - Is indyweb functionality the same as Freespeech functionality? - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan

- data structure of thought - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan

- appreciation of inhabiting the symbolosphere

- freespeech can be used as an international language translator - data structure of thought - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan

- key insight - ideas are multidimensional - speech is one dimensional - language is one dimensional - from TED Talk - YouTube - A word game to convey any language - Ajit Narayanan


-

-

www.youtube.com www.youtube.com

-

for - climate crisis - impact of Trump tariff strategy - increasing economic and carbon inequality and precarity for the masses - from - Youtube - Trump wants to crash to benefit the ultra wealthy - Trump's planning to crash the global economy - Richard J Murphy - 2024, Dec

// - SUMMARY - Richard J Murphy gives us a big picture of Trump's objective in his calculated tariff strategy - It is not senseless, nor is it the strategy of a madman - On the contrary, it is a very calculated and maniacal strategy that will significantly increase the wealth of the elites - By imposing high tariffs, he will bring about a global economic crash - As in the 2008 and 2020 crashes, central banks will print trillions of dollars and hand out bailouts - It is the elites who will receive these bailouts and inflate the value of their assets - This will - substantially increase the wealth of the rich - substantially increase the precarity of the vast majority of people - increase global inequality - financial inequality and - carbon inequality - This increased precarity is bad news for the climate crisis, as a precarious population has less flexibility to reduce its carbon footprint and is more dependent than ever on whatever jobs and resources it still has - Given that we know the elites' hidden strategy, can we, the people, intervene in any way? - We need to understand how elites see the world - The entire worldview of externalizing investment as a game of accumulation must be understood deeply - in order to find leverage points for rapid system change

//


-

-

granta.com granta.com

-

The ‘sapiosexual type’ is more sophisticated than its predecessors and becoming prevalent with highly educated women. Creating these characters convincingly is no easy task. Otome game companies often hire female writers from top universities with diverse backgrounds, equipped with knowledge from a range of disciplines. ‘He’ should be able to comment on a Shakespearean sonnet or quantum physics in a magisterial way, if prompted.

what

-

Iconic games such as Genshin Impact (developed by the Shanghai game company miHoYo, whose slogan is ‘Tech Otakus Save the World’) are featuring more and more charming male characters to appeal to female players, which is seen as a betrayal of its origins. Many male players refuse to play with male characters – going so far as to deliberately drop them dead – and vow to boycott the game until this supposed mistake is rectified. What they don’t grasp is that they need to outspend female and gay players to regain some bargaining power. Petulantly railing against the ‘pink tax’ won’t get them what they want.

What a shitty use of the passive voice, universalizing the next sentence's "many male players".

-

-

onethingnewsletter.substack.com onethingnewsletter.substack.com

-

Parasocial relationships are the name of the game. When people call for a Joe Rogan of the left, it seems like they don’t realize that one of the reasons he is so powerful is that he is many of his listeners’ best friend. People spend hours and hours a day with him; his show and its extended universe have become an on-demand loneliness killing service. The power (and value) of that relationship is unmatched. Puck is a parasocial publication, that’s why you hear the tentpole writers’ voices in solo podcasts.

I want to read more about parasocial media patterns pre-broadcast media. You can't tell me that there weren't forms

-

-

Local file Local file

-

To pretend that this multi-level game can be flattened out into a merely technical question is naïve. That becomes clear when it enters the patently political phase and people fight over the legislative and regulative details.

Technical question doesn't work - Foucault

-

-

www.modernisation.gouv.fr www.modernisation.gouv.fr

-

www.youtube.com www.youtube.com

-

Timed summary of the highlights of the webinar "Tobacco among young people: how to support them in quitting? | Crips IDF"

This summary shows the breadth of the webinar, which covers the context of tobacco use among young people, new products and their associated risks, the link with mental health, and the various support strategies.

The testimony from the association Repère adds a concrete and inspiring dimension, while the presentation of the Crips tools gives professionals a range of resources for running prevention and awareness sessions.

0:00 - 1:30: Introduction and presentation of the speakers

- Géraldine, an addiction nurse and tobacco specialist, and Estella Furau, project officer for addiction at Crips Île-de-France, introduce themselves and describe their professional backgrounds.

1:30 - 8:00: Context of tobacco use among young people

- Discussion of how to define the "young" audience and of the specific features of the different age groups.

- The importance of becoming aware of one's addiction in order to begin the process of quitting.

- Differences in access to resources and in smoking prevalence across social backgrounds.

- Good news: smoking prevalence among young people is declining, but vigilance is needed regarding new products (vapes, puffs...).

- Positive impact of public policies (smoke-free places, higher cigarette pack prices) on the decline in smoking.

- Debate on banning smoking on café terraces.

- Differences in support between a young patient and an older patient: young people find it harder to recognize their addiction.

- The importance of supporting young people in what they ask for, whether that is cutting down or quitting completely.

- Presentation of Tabac info service, a quit-support tool.

8:00 - 15:00: New products and associated risks

- Increasing use of vapes among young people, especially the puff (disposable e-cigarette), which has become a trendy item.

- Concerns about the puff: marketing aimed at young people, and the risks of dependence and of moving on to cigarettes.

- The ban on disposable puffs: an effective measure?

- Young people's lack of awareness of the risks of shisha (tobacco, nicotine, combustion...).

- Difficulty of addressing shisha use, which is often experienced as a collective activity.

- Cannabis experimentation is declining, but cannabis remains the most widely used illicit drug among young people.

- The importance of addressing co-use and addiction transfer.

- Advice on managing cannabis use: harm reduction and referral to specialized services.

15:00 - 22:00: Link between mental health and tobacco use

- Alarming statistics on high school students' mental well-being: 49% do not have a good level of mental well-being.

- Impact of tobacco on mental health: depression can be a sign of nicotine withdrawal.

- Signs of depression, or even suicidal thoughts, may appear when someone quits smoking too abruptly.

- The importance of distinguishing depression induced by quitting from pre-existing depression.

22:00 - 27:00: Advice for professionals, relatives and young smokers

- Advice for professionals: patience, humility, and the importance of passing on information about available resources.

- Advice for relatives: do not force the smoker to quit; offer support and let them know what help is available.

- Advice for young smokers: turn to a professional; quitting is achievable.

- The importance of countering negative perceptions of seeking help and of tobacco specialists.

- The role of pharmacists, midwives, physiotherapists and others in supporting quitting and harm reduction.

- The importance of harm reduction and of reimbursement for nicotine replacement therapy.

27:00 - 33:00: Presentation of quit-support programs for young people

- Mois sans tabac (Tobacco-Free Month): a national campaign for awareness and quit support.

- Tabado: an evidence-based quit-support program for vocational high schools and CFAs (apprentice training centers), currently suspended.

- Desclic Stop Tabac: a program similar to Tabado for agricultural schools.

- Facilitated discussion programs: Ligue contre le cancer, Crips Île-de-France.

33:00 - 44:00: Testimony from the association Repère and presentation of a "Mois sans tabac" workshop project

- Marie Durantis, a specialized educator, presents her "Mois sans tabac" workshop project, run with young people and adults.

- The importance of a playful, educational approach: brainstorming, questionnaires, games, poster-making...

- Participation in the Ligue contre le cancer's "Mois sans tabac" challenge.

- A visit to the "Tabac" escape game at the Cité des Sciences.

- Positive outcome of the project: it fosters group cohesion, prevention and awareness.

44:00 - End: Presentation of Crips facilitation tools for discussing tobacco with young people

- Tirtaclop: a tool for discussing cutting down on tobacco and for identifying needs and pleasures.

- Infox: a card game of true-or-false statements to start a debate and assess knowledge.

- PICT Prévention Tabac: a Pictionary-style game for exploring tobacco-related topics in a playful way.

- Escape Game Tabac (card-game version and room version): a playful tool covering every aspect of quitting tobacco.

- "Chicha" tool: a card game for exploring the contexts of shisha use and identifying risky behaviors.

- Modérateur de forum (Forum moderator): a role-play game for deconstructing preconceptions and encouraging critical thinking.

- The "À J" game: a card game for analyzing risky situations related to tobacco and alcohol use.


-

-

learning.amplify.com learning.amplify.com

-

According to all known laws of aviation,

there is no way a bee should be able to fly.

Its wings are too small to get its fat little body off the ground.

The bee, of course, flies anyway

because bees don't care what humans think is impossible.

Yellow, black. Yellow, black. Yellow, black. Yellow, black.

Ooh, black and yellow! Let's shake it up a little.

Barry! Breakfast is ready!

Coming!

Hang on a second.

Hello?

Barry?

Adam?

Can you believe this is happening?

I can't. I'll pick you up.

Looking sharp.

Use the stairs. Your father paid good money for those.

Sorry. I'm excited.

Here's the graduate. We're very proud of you, son.

A perfect report card, all B's.

Very proud.

Ma! I got a thing going here.

You got lint on your fuzz.

Ow! That's me!

Wave to us! We'll be in row 118,000.

Bye!

Barry, I told you, stop flying in the house!

Hey, Adam.

Hey, Barry.

Is that fuzz gel?

A little. Special day, graduation.

Never thought I'd make it.

Three days grade school, three days high school.

Those were awkward.

Three days college. I'm glad I took a day and hitchhiked around the hive.

You did come back different.

Hi, Barry.

Artie, growing a mustache? Looks good.

Hear about Frankie?

Yeah.

You going to the funeral?

No, I'm not going.

Everybody knows, sting someone, you die.

Don't waste it on a squirrel. Such a hothead.

I guess he could have just gotten out of the way.

I love this incorporating an amusement park into our day.

That's why we don't need vacations.

Boy, quite a bit of pomp… under the circumstances.

Well, Adam, today we are men.

We are!

Bee-men.

Amen!

Hallelujah!

Students, faculty, distinguished bees,

please welcome Dean Buzzwell.

Welcome, New Hive City graduating class of…

…9:15.

That concludes our ceremonies.

And begins your career at Honex Industries!

Will we pick our job today?

I heard it's just orientation.

Heads up! Here we go.

Keep your hands and antennas inside the tram at all times.

Wonder what it'll be like? A little scary. Welcome to Honex, a division of Honesco

and a part of the Hexagon Group.

This is it!

Wow.

Wow.

We know that you, as a bee, have worked your whole life

to get to the point where you can work for your whole life.

Honey begins when our valiant Pollen Jocks bring the nectar to the hive.

Our top-secret formula

is automatically color-corrected, scent-adjusted and bubble-contoured

into this soothing sweet syrup

with its distinctive golden glow you know as…

Honey!

That girl was hot.

She's my cousin!

She is?

Yes, we're all cousins.

Right. You're right.

At Honex, we constantly strive

to improve every aspect of bee existence.

These bees are stress-testing a new helmet technology.

What do you think he makes? Not enough. Here we have our latest advancement, the Krelman.

What does that do? Catches that little strand of honey that hangs after you pour it. Saves us millions.

Can anyone work on the Krelman?

Of course. Most bee jobs are small ones. But bees know

that every small job, if it's done well, means a lot.

But choose carefully

because you'll stay in the job you pick for the rest of your life.

The same job the rest of your life? I didn't know that.

What's the difference?

You'll be happy to know that bees, as a species, haven't had one day off

in 27 million years.

So you'll just work us to death?

We'll sure try.

Wow! That blew my mind!

"What's the difference?" How can you say that?

One job forever? That's an insane choice to have to make.

I'm relieved. Now we only have to make one decision in life.

But, Adam, how could they never have told us that?

Why would you question anything? We're bees.

We're the most perfectly functioning society on Earth.

You ever think maybe things work a little too well here?

Like what? Give me one example.

I don't know. But you know what I'm talking about.

Please clear the gate. Royal Nectar Force on approach.

Wait a second. Check it out.

Hey, those are Pollen Jocks! Wow. I've never seen them this close.

They know what it's like outside the hive.

Yeah, but some don't come back.

Hey, Jocks! Hi, Jocks! You guys did great!

You're monsters! You're sky freaks! I love it! I love it!

I wonder where they were. I don't know. Their day's not planned.

Outside the hive, flying who knows where, doing who knows what.

You can't just decide to be a Pollen Jock. You have to be bred for that.

Right.

Look. That's more pollen than you and I will see in a lifetime.

It's just a status symbol. Bees make too much of it.

Perhaps. Unless you're wearing it and the ladies see you wearing it.

Those ladies? Aren't they our cousins too?

Distant. Distant.

Look at these two.

Couple of Hive Harrys. Let's have fun with them. It must be dangerous being a Pollen Jock.

Yeah. Once a bear pinned me against a mushroom!

He had a paw on my throat, and with the other, he was slapping me!

Oh, my! I never thought I'd knock him out. What were you doing during this?

Trying to alert the authorities.

I can autograph that.

A little gusty out there today, wasn't it, comrades?

Yeah. Gusty.

We're hitting a sunflower patch six miles from here tomorrow.

Six miles, huh? Barry! A puddle jump for us, but maybe you're not up for it.

Maybe I am. You are not! We're going 0900 at J-Gate.

What do you think, buzzy-boy? Are you bee enough?

I might be. It all depends on what 0900 means.

Hey, Honex!

Dad, you surprised me.

You decide what you're interested in?

Well, there's a lot of choices. But you only get one. Do you ever get bored doing the same job every day?

Son, let me tell you about stirring.

You grab that stick, and you just move it around, and you stir it around.

You get yourself into a rhythm. It's a beautiful thing.

You know, Dad, the more I think about it,

maybe the honey field just isn't right for me.

You were thinking of what, making balloon animals?

That's a bad job for a guy with a stinger.

Janet, your son's not sure he wants to go into honey!

Barry, you are so funny sometimes. I'm not trying to be funny. You're not funny! You're going into honey. Our son, the stirrer!

You're gonna be a stirrer? No one's listening to me! Wait till you see the sticks I have.

I could say anything right now. I'm gonna get an ant tattoo!

Let's open some honey and celebrate!

Maybe I'll pierce my thorax. Shave my antennae.

Shack up with a grasshopper. Get a gold tooth and call everybody "dawg"!

I'm so proud.

We're starting work today! Today's the day. Come on! All the good jobs will be gone.

Yeah, right.

Pollen counting, stunt bee, pouring, stirrer, front desk, hair removal…

Is it still available? Hang on. Two left! One of them's yours! Congratulations! Step to the side.

What'd you get? Picking crud out. Stellar! Wow!

Couple of newbies?

Yes, sir! Our first day! We are ready!

Make your choice.

You want to go first? No, you go. Oh, my. What's available?

Restroom attendant's open, not for the reason you think.

Any chance of getting the Krelman? Sure, you're on. I'm sorry, the Krelman just closed out.

Wax monkey's always open.

The Krelman opened up again.

What happened?

A bee died. Makes an opening. See? He's dead. Another dead one.

Deady. Deadified. Two more dead.

Dead from the neck up. Dead from the neck down. That's life!

Oh, this is so hard!

Heating, cooling, stunt bee, pourer, stirrer,

humming, inspector number seven, lint coordinator, stripe supervisor,

mite wrangler. Barry, what do you think I should… Barry?

Barry!

All right, we've got the sunflower patch in quadrant nine…

What happened to you? Where are you?

I'm going out.

Out? Out where?

Out there.

Oh, no!

I have to, before I go to work for the rest of my life.

You're gonna die! You're crazy! Hello?

Another call coming in.

If anyone's feeling brave, there's a Korean deli on 83rd

that gets their roses today.

Hey, guys.

Look at that. Isn't that the kid we saw yesterday? Hold it, son, flight deck's restricted.

It's OK, Lou. We're gonna take him up.

Really? Feeling lucky, are you?

Sign here, here. Just initial that.

Thank you. OK. You got a rain advisory today,

and as you all know, bees cannot fly in rain.

So be careful. As always, watch your brooms,

hockey sticks, dogs, birds, bears and bats.

Also, I got a couple of reports of root beer being poured on us.

Murphy's in a home because of it, babbling like a cicada!

That's awful. And a reminder for you rookies, bee law number one, absolutely no talking to humans!

All right, launch positions!

Buzz, buzz, buzz, buzz! Buzz, buzz, buzz, buzz! Buzz, buzz, buzz, buzz!

Black and yellow!

Hello!

You ready for this, hot shot?

Yeah. Yeah, bring it on.

Wind, check.

Antennae, check.

Nectar pack, check.

Wings, check.

Stinger, check.

Scared out of my shorts, check.

OK, ladies,

let's move it out!

Pound those petunias, you striped stem-suckers!

All of you, drain those flowers!

Wow! I'm out!

I can't believe I'm out!

So blue.

I feel so fast and free!

Box kite!

Wow!

Flowers!

This is Blue Leader. We have roses visual.

Bring it around 30 degrees and hold.

Roses!

30 degrees, roger. Bringing it around.

Stand to the side, kid. It's got a bit of a kick.

That is one nectar collector!

Ever see pollination up close? No, sir. I pick up some pollen here, sprinkle it over here. Maybe a dash over there,

a pinch on that one. See that? It's a little bit of magic.

That's amazing. Why do we do that?

That's pollen power. More pollen, more flowers, more nectar, more honey for us.

Cool.

I'm picking up a lot of bright yellow. Could be daisies. Don't we need those?

Copy that visual.

Wait. One of these flowers seems to be on the move.

Say again? You're reporting a moving flower?

Affirmative.

That was on the line!

This is the coolest. What is it?

I don't know, but I'm loving this color.

It smells good. Not like a flower, but I like it.

Yeah, fuzzy.

Chemical-y.

Careful, guys. It's a little grabby.

My sweet lord of bees!

Candy-brain, get off there!

Problem!

Guys! This could be bad. Affirmative.

Very close.

Gonna hurt.

Mama's little boy.

You are way out of position, rookie!

Coming in at you like a missile!

Help me!

I don't think these are flowers.

Should we tell him? I think he knows. What is this?!

Match point!

You can start packing up, honey, because you're about to eat it!

Yowser!

Gross.

There's a bee in the car!

Do something!

I'm driving!

Hi, bee.

He's back here!

He's going to sting me!

Nobody move. If you don't move, he won't sting you. Freeze!

He blinked!

Spray him, Granny!

What are you doing?!

Wow… the tension level out here is unbelievable.

I gotta get home.

Can't fly in rain.

Can't fly in rain.

Can't fly in rain.

Mayday! Mayday! Bee going down!

Ken, could you close the window please?

Ken, could you close the window please?

Check out my new resume. I made it into a fold-out brochure.

You see? Folds out.

Oh, no. More humans. I don't need this.

What was that?

Maybe this time. This time. This time. This time! This time! This…

Drapes!

That is diabolical.

It's fantastic. It's got all my special skills, even my top-ten favorite movies.

What's number one? Star Wars?

Nah, I don't go for that…

…kind of stuff.

No wonder we shouldn't talk to them. They're out of their minds.

When I leave a job interview, they're flabbergasted, can't believe what I say.

There's the sun. Maybe that's a way out.

I don't remember the sun having a big 75 on it.

I predicted global warming.

I could feel it getting hotter. At first I thought it was just me.

Wait! Stop! Bee!

Stand back. These are winter boots.

Wait!

Don't kill him!

You know I'm allergic to them! This thing could kill me!

Why does his life have less value than yours?

Why does his life have any less value than mine? Is that your statement?

I'm just saying all life has value. You don't know what he's capable of feeling.

My brochure!

There you go, little guy.

I'm not scared of him. It's an allergic thing.

Put that on your resume brochure.

My whole face could puff up.

Make it one of your special skills.

Knocking someone out is also a special skill.

Right. Bye, Vanessa. Thanks.

Vanessa, next week? Yogurt night?

Sure, Ken. You know, whatever.

You could put carob chips on there.

Bye.

Supposed to be less calories.

Bye.

I gotta say something.

She saved my life. I gotta say something.

All right, here it goes.

Nah.

What would I say?

I could really get in trouble.

It's a bee law. You're not supposed to talk to a human.

I can't believe I'm doing this.

I've got to.

Oh, I can't do it. Come on!

No. Yes. No.

Do it. I can't.

How should I start it? "You like jazz?" No, that's no good.

Here she comes! Speak, you fool!

Hi!

I'm sorry.

You're talking. Yes, I know. You're talking!

I'm so sorry.

No, it's OK. It's fine. I know I'm dreaming.

But I don't recall going to bed.

Well, I'm sure this is very disconcerting.

This is a bit of a surprise to me. I mean, you're a bee!

I am. And I'm not supposed to be doing this,

but they were all trying to kill me.

And if it wasn't for you…

I had to thank you. It's just how I was raised.

That was a little weird.

I'm talking with a bee. Yeah. I'm talking to a bee. And the bee is talking to me!

I just want to say I'm grateful. I'll leave now.

Wait! How did you learn to do that? What? The talking thing.

Same way you did, I guess. "Mama, Dada, honey." You pick it up.

That's very funny. Yeah. Bees are funny. If we didn't laugh, we'd cry with what we have to deal with.

Anyway…

Can I…

…get you something?

Like what? I don't know. I mean… I don't know. Coffee?

I don't want to put you out.

It's no trouble. It takes two minutes.

It's just coffee.

I hate to impose.

Don't be ridiculous!

Actually, I would love a cup.

Hey, you want rum cake?

I shouldn't.

Have some.

No, I can't.

Come on!

I'm trying to lose a couple micrograms.

Where? These stripes don't help. You look great!

I don't know if you know anything about fashion.

Are you all right?

No.

He's making the tie in the cab as they're flying up Madison.

He finally gets there.

He runs up the steps into the church. The wedding is on.

And he says, "Watermelon? I thought you said Guatemalan.

Why would I marry a watermelon?"

Is that a bee joke?

That's the kind of stuff we do.

Yeah, different.

So, what are you gonna do, Barry?

About work? I don't know.

I want to do my part for the hive, but I can't do it the way they want.

I know how you feel.

You do? Sure. My parents wanted me to be a lawyer or a doctor, but I wanted to be a florist.

Really? My only interest is flowers. Our new queen was just elected with that same campaign slogan.

Anyway, if you look…

There's my hive right there. See it?

You're in Sheep Meadow!

Yes! I'm right off the Turtle Pond!

No way! I know that area. I lost a toe ring there once.

Why do girls put rings on their toes?

Why not?

It's like putting a hat on your knee.

Maybe I'll try that.

You all right, ma'am?

Oh, yeah. Fine.

Just having two cups of coffee!

Anyway, this has been great. Thanks for the coffee.

Yeah, it's no trouble.

Sorry I couldn't finish it. If I did, I'd be up the rest of my life.

Are you…?

Can I take a piece of this with me?

Sure! Here, have a crumb.

Thanks! Yeah. All right. Well, then… I guess I'll see you around.

Or not.

OK, Barry.

And thank you so much again… for before.

Oh, that? That was nothing.

Well, not nothing, but… Anyway…

This can't possibly work.

He's all set to go. We may as well try it.

OK, Dave, pull the chute.

Sounds amazing. It was amazing! It was the scariest, happiest moment of my life.

Humans! I can't believe you were with humans!

Giant, scary humans! What were they like?

Huge and crazy. They talk crazy.

They eat crazy giant things. They drive crazy.

Do they try and kill you, like on TV?

Some of them. But some of them don't.

How'd you get back?

Poodle.

You did it, and I'm glad. You saw whatever you wanted to see.

You had your "experience." Now you can pick out your job and be normal.

Well… Well? Well, I met someone.

You did? Was she Bee-ish?

A wasp?! Your parents will kill you!

No, no, no, not a wasp.

Spider?

I'm not attracted to spiders.

I know it's the hottest thing, with the eight legs and all.

I can't get by that face.

So who is she?

She's… human.

No, no. That's a bee law. You wouldn't break a bee law.

Her name's Vanessa. Oh, boy. She's so nice. And she's a florist!

Oh, no! You're dating a human florist!

We're not dating.

You're flying outside the hive, talking to humans that attack our homes

with power washers and M-80s! One-eighth a stick of dynamite!

She saved my life! And she understands me.

This is over!

Eat this.

This is not over! What was that?

They call it a crumb. It was so stingin' stripey! And that's not what they eat. That's what falls off what they eat!

You know what a Cinnabon is? No. It's bread and cinnamon and frosting. They heat it up…

Sit down!

…really hot!

Listen to me! We are not them! We're us. There's us and there's them!

Yes, but who can deny the heart that is yearning?

There's no yearning. Stop yearning. Listen to me!

You have got to start thinking bee, my friend. Thinking bee!

Thinking bee. Thinking bee. Thinking bee! Thinking bee! Thinking bee! Thinking bee!

There he is. He's in the pool.

You know what your problem is, Barry?

I gotta start thinking bee?

How much longer will this go on?

It's been three days! Why aren't you working?

I've got a lot of big life decisions to think about.

What life? You have no life! You have no job. You're barely a bee!

Would it kill you to make a little honey?

Barry, come out. Your father's talking to you.

Martin, would you talk to him?

Barry, I'm talking to you!

You coming?

Got everything?

All set!

Go ahead. I'll catch up.

Don't be too long.

Watch this!

Vanessa!

We're still here. I told you not to yell at him. He doesn't respond to yelling!

Then why yell at me? Because you don't listen! I'm not listening to this.

Sorry, I've gotta go.

Where are you going? I'm meeting a friend. A girl? Is this why you can't decide?

Bye.

I just hope she's Bee-ish.

They have a huge parade of flowers every year in Pasadena?

To be in the Tournament of Roses, that's every florist's dream!

Up on a float, surrounded by flowers, crowds cheering.

A tournament. Do the roses compete in athletic events?

No. All right, I've got one. How come you don't fly everywhere?

It's exhausting. Why don't you run everywhere? It's faster.

Yeah, OK, I see, I see. All right, your turn.

TiVo. You can just freeze live TV? That's insane!

You don't have that?

We have Hivo, but it's a disease. It's a horrible, horrible disease.

Oh, my.

Dumb bees!

You must want to sting all those jerks.

We try not to sting. It's usually fatal for us.

So you have to watch your temper.

Very carefully. You kick a wall, take a walk,

write an angry letter and throw it out. Work through it like any emotion:

Anger, jealousy, lust.

Oh, my goodness! Are you OK?

Yeah.

What is wrong with you?! It's a bug. He's not bothering anybody. Get out of here, you creep!

What was that? A Pic 'N' Save circular?

Yeah, it was. How did you know?

It felt like about 10 pages. Seventy-five is pretty much our limit.

You've really got that down to a science.

I lost a cousin to Italian Vogue. I'll bet. What in the name of Mighty Hercules is this?

How did this get here? Cute Bee, Golden Blossom,

Ray Liotta Private Select?

Is he that actor?

I never heard of him.

Why is this here?

For people. We eat it.

You don't have enough food of your own?

Well, yes.

How do you get it?

Bees make it.

I know who makes it!

And it's hard to make it!

There's heating, cooling, stirring. You need a whole Krelman thing!

It's organic. It's our-ganic! It's just honey, Barry.

Just what?!

Bees don't know about this! This is stealing! A lot of stealing!

You've taken our homes, schools, hospitals! This is all we have!

And it's on sale?! I'm getting to the bottom of this.

I'm getting to the bottom of all of this!

Hey, Hector.

You almost done? Almost. He is here. I sense it.

Well, I guess I'll go home now

and just leave this nice honey out, with no one around.

You're busted, box boy!

I knew I heard something. So you can talk!

I can talk. And now you'll start talking!

Where you getting the sweet stuff? Who's your supplier?

I don't understand. I thought we were friends.

The last thing we want to do is upset bees!

You're too late! It's ours now!

You, sir, have crossed the wrong sword!

You, sir, will be lunch for my iguana, Ignacio!

Where is the honey coming from?

Tell me where!

Honey Farms! It comes from Honey Farms!

Crazy person!

What horrible thing has happened here?

These faces, they never knew what hit them. And now

they're on the road to nowhere!

Just keep still.

What? You're not dead?

Do I look dead? They will wipe anything that moves. Where you headed?

To Honey Farms. I am onto something huge here.

I'm going to Alaska. Moose blood, crazy stuff. Blows your head off!

I'm going to Tacoma.

And you? He really is dead. All right.

Uh-oh!

What is that?!

Oh, no!

A wiper! Triple blade!

Triple blade?

Jump on! It's your only chance, bee!

Why does everything have to be so doggone clean?!

How much do you people need to see?!

Open your eyes! Stick your head out the window!

From NPR News in Washington, I'm Carl Kasell.

But don't kill no more bugs!

Bee!

Moose blood guy!!

You hear something?

Like what?

Like tiny screaming.

Turn off the radio.

Whassup, bee boy?

Hey, Blood.

Just a row of honey jars, as far as the eye could see.

Wow!

I assume wherever this truck goes is where they're getting it.

I mean, that honey's ours.

Bees hang tight. We're all jammed in. It's a close community.

Not us, man. We on our own. Every mosquito on his own.

What if you get in trouble? You a mosquito, you in trouble. Nobody likes us. They just smack. See a mosquito, smack, smack!

At least you're out in the world. You must meet girls.

Mosquito girls try to trade up, get with a moth, dragonfly.

Mosquito girl don't want no mosquito.

You got to be kidding me!

Mooseblood's about to leave the building! So long, bee!

Hey, guys! Mooseblood! I knew I'd catch y'all down here. Did you bring your crazy straw?

We throw it in jars, slap a label on it, and it's pretty much pure profit.

What is this place?

A bee's got a brain the size of a pinhead.

They are pinheads!

Pinhead.

Check out the new smoker. Oh, sweet. That's the one you want. The Thomas 3000!

Smoker?

Ninety puffs a minute, semi-automatic. Twice the nicotine, all the tar.

A couple breaths of this knocks them right out.

They make the honey, and we make the money.

"They make the honey, and we make the money"?

Oh, my!

What's going on? Are you OK?

Yeah. It doesn't last too long.

Do you know you're in a fake hive with fake walls?

Our queen was moved here. We had no choice.

This is your queen? That's a man in women's clothes!

That's a drag queen!

What is this?

Oh, no!

There's hundreds of them!

Bee honey.

Our honey is being brazenly stolen on a massive scale!

This is worse than anything bears have done! I intend to do something.

Oh, Barry, stop.

Who told you humans are taking our honey? That's a rumor.

Do these look like rumors?

That's a conspiracy theory. These are obviously doctored photos.

How did you get mixed up in this?

He's been talking to humans.

What? Talking to humans?! He has a human girlfriend. And they make out!

Make out? Barry!

We do not.

You wish you could. Whose side are you on? The bees!

I dated a cricket once in San Antonio. Those crazy legs kept me up all night.

Barry, this is what you want to do with your life?

I want to do it for all our lives. Nobody works harder than bees!

Dad, I remember you coming home so overworked

your hands were still stirring. You couldn't stop.

I remember that.

What right do they have to our honey?

We live on two cups a year. They put it in lip balm for no reason whatsoever!

Even if it's true, what can one bee do?

Sting them where it really hurts.

In the face! The eye!

That would hurt. No. Up the nose? That's a killer.

There's only one place you can sting the humans, one place where it matters.

Hive at Five, the hive's only full-hour action news source.

No more bee beards!

With Bob Bumble at the anchor desk.

Weather with Storm Stinger.

Sports with Buzz Larvi.

And Jeanette Chung.

Good evening. I'm Bob Bumble. And I'm Jeanette Chung. A tri-county bee, Barry Benson,

intends to sue the human race for stealing our honey,

packaging it and profiting from it illegally!

Tomorrow night on Bee Larry King,

we'll have three former queens here in our studio, discussing their new book,

Classy Ladies, out this week on Hexagon.

Tonight we're talking to Barry Benson.

Did you ever think, "I'm a kid from the hive. I can't do this"?

Bees have never been afraid to change the world.

What about Bee Columbus? Bee Gandhi? Bejesus?

Where I'm from, we'd never sue humans.

We were thinking of stickball or candy stores.

How old are you?

The bee community is supporting you in this case,

which will be the trial of the bee century.

You know, they have a Larry King in the human world too.

It's a common name. Next week…

He looks like you and has a show and suspenders and colored dots…

Next week…

Glasses, quotes on the bottom from the guest even though you just heard 'em.

Bear Week next week! They're scary, hairy and here live.

Always leans forward, pointy shoulders, squinty eyes, very Jewish.

In tennis, you attack at the point of weakness!

It was my grandmother, Ken. She's 81.