Agents and CDC streams are powerful together because they split the work well.

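Most people might assume that an AI agent should handle every task on its own, including fetching and processing data. The author instead proposes a counterintuitive division of labor: the AI focuses on interpreting logic and adapting it, while the database engine focuses on continuous evaluation and precise updates. This division challenges the prevailing view that AI agents should handle everything end to end.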

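Most people believe AI agents should be responsible for executing tasks from end to end. The author argues instead that agents and database engines should cooperate: the agent interprets new information and adjusts the logic, while the database continuously applies that logic and emits precise updates. This division of labor challenges the mainstream view that AI agents should be fully autonomous.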

With change data capture (CDC), the system emits a stream of precise updates: inserts, updates, deletes, each tied to specific records.

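Most people assume an AI agent needs to actively query data systems to get information. The author proposes a counterintuitive approach: have the database push change events to the agent rather than having the agent poll or query. This pattern turns the agent from an active querier into a passive responder, fundamentally changing the interaction model.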
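To make the push model concrete, here is a minimal sketch, assuming an in-memory table rather than a real database: every insert, update, and delete emits an event tied to one specific key, and the subscriber (standing in for an agent) only reacts to those events instead of polling. `ChangeTable`, `subscribe`, and the event shape are hypothetical names for illustration, not the API of any CDC tool.

```javascript
// In-memory table that emits CDC-style change events to subscribers.
// Hypothetical sketch: names and event shape are illustrative only.
class ChangeTable {
  constructor() {
    this.rows = new Map();   // primary key -> row
    this.subscribers = [];   // callbacks that receive change events
  }

  subscribe(fn) {
    this.subscribers.push(fn);
  }

  emit(op, key, before, after) {
    const event = { op, key, before, after };
    for (const fn of this.subscribers) fn(event);
  }

  insert(key, row) {
    if (this.rows.has(key)) throw new Error(`duplicate key ${key}`);
    this.rows.set(key, row);
    this.emit('insert', key, null, row);
  }

  update(key, changes) {
    const before = this.rows.get(key);
    if (!before) throw new Error(`no row ${key}`);
    const after = { ...before, ...changes };
    this.rows.set(key, after);
    this.emit('update', key, before, after);
  }

  delete(key) {
    const before = this.rows.get(key);
    if (!before) throw new Error(`no row ${key}`);
    this.rows.delete(key);
    this.emit('delete', key, before, null);
  }
}

// The "agent" only reacts to pushed events; it never polls the table.
const orders = new ChangeTable();
const seen = [];
orders.subscribe((e) => seen.push(`${e.op}:${e.key}`));

orders.insert(1, { status: 'new' });
orders.update(1, { status: 'shipped' });
orders.delete(1);
console.log(seen); // [ 'insert:1', 'update:1', 'delete:1' ]
```

Each event carries both the before and after images, so a consumer can react to exactly what changed without re-reading the table.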

Type Deck: Index Cards<br /> by Steven Heller, Rick Landers<br /> https://www.amazon.com/Type-Deck-Index-Steven-Heller/dp/0500420793

https://www.instagram.com/p/DTP7yMkCUCQ/

Background video contains a variety of card index filing cabinets as well as an example thumbing through a subject index with a card:

U.S. - Education - Colleges - Columbia<br /> Homosexual's bulletin board<br /> (see US - people - homosexuals 13-229-L

“INFORMATION RETRIEVAL” 1961 IBM BUSINESS COMPUTER PROMO MAINFRAME PUNCHCARD COMPUTERS SM10435<br /> by [[Periscope Film]] on YouTube <br /> accessed on 2026-01-04T15:56:12

Some great visuals hiding in here.<br /> Starts out with details for properly threading a film projector<br /> keywords - indexing methods<br /> Key Word in Context (KWIC)<br /> inverted file (aka lookup file)<br /> Notice this is a few years after Desk Set (1957)<br /> Selective dissemination of information<br /> Fake company name: Alamer
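The Key Word in Context (KWIC) method from the film is simple enough to sketch. This minimal generator assumes plain-text titles and a small hand-picked stop-word list (both made up here): each significant word becomes an entry, entries are sorted by keyword, and the left context is right-aligned so the keywords line up in one column, as on a printed KWIC index.

```javascript
// Hypothetical stop-word list: words too common to be worth indexing.
const STOP_WORDS = new Set(['a', 'an', 'and', 'for', 'in', 'of', 'the', 'to']);

// Build KWIC lines: one entry per significant word, sorted by that word, with the
// left context right-aligned so every keyword starts in the same column.
function kwic(titles) {
  const entries = [];
  for (const title of titles) {
    const words = title.split(/\s+/).filter(Boolean);
    words.forEach((word, i) => {
      if (STOP_WORDS.has(word.toLowerCase())) return;
      entries.push({
        keyword: word.toLowerCase(),
        left: words.slice(0, i).join(' '),
        right: words.slice(i).join(' '),
      });
    });
  }
  entries.sort((a, b) => a.keyword.localeCompare(b.keyword));
  const width = Math.max(0, ...entries.map((e) => e.left.length));
  return entries.map((e) => `${e.left.padStart(width)} | ${e.right}`);
}

console.log(kwic(['Information Retrieval', 'Retrieval of Punched Cards']).join('\n'));
```

Each title appears once per significant word, so both "Retrieval" entries end up next to each other in the sorted output, which is what made KWIC indexes useful for scanning.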

It was my discovery of wiki technology some time in 2002 that ended this undirected search and constituted the other fundamental change in the way I dealt with information. What I liked about it from the beginning was that it allows of easily linking bits of information and favors the breaking down of large chunks of information into smaller bits. This emphasis on the granularity of information reminded me not only of the old index card method, but it also convinced me almost immediately that it was a significant improvement over the paper-based system. I adopted this technology and I have never looked back.

Manfred Kuehn on his move from index cards to wikis in 2002.

Hunter, Estelle Belle. 1923. Modern Filing Manual. Rochester, NY: Yawman and Erbe Manufacturing Company. https://www.google.com/books/edition/Modern_Filing_Manual/F-lNAQAAMAAJ?hl=en.

We're going back to the basics today for the non-technical people, to explain what an “index” is and why indexes are important to making your search engine work cost-effectively at scale. Imagine you walked into a library back in the day, before computers, and asked the librarian to find you every book that mentioned the word "gazebo". You would probably get some pretty weird looks, because it would be horribly inefficient for the librarian to go through every single book in the library to satisfy your obscure query. It would likely take months or even years to do a single query. Now imagine you asked them for every book in the library by “Hunter S Thompson”. That would be a piece of cake, but why? Because the library maintains an index of all the books that come in, by title, author, etc. Each index is just a list of possible values that people would be searching for. In our example, the author index is an alphabetical list of author names and the specific book names/locations where you can find each whole book, so you can get all the other information contained in it. The index is built before any search is ever made. When a new book comes into the library, the librarian breaks out those old index cards and adds it to the related indexes before the book ever hits the shelves. We use this same technique when working with data at scale. Let’s circle back to that first query for the word "gazebo". Why wouldn’t the library maintain an index for literally every word ever? Imagine a library filled with more index cards than books. It would be virtually unusable. Common words like “the” would likely contain the names of every book in the library, rendering that index completely useless. I have seen databases where the indexes are twice the size of the data actually being indexed, and it quickly reaches diminishing returns.
It is a delicate balance for people like me to engineer these giant, scalable search engines to get the performance we need without flooding our virtual library (the database) with unneeded indexes.

via u/schematical at https://reddit.com/user/schematical/comments/1oe41bx/what_is_a_database_index_as_explained_to_a_1930s/
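The library analogy maps directly onto code. Here is a minimal sketch, assuming an in-memory catalog with made-up `text` snippets standing in for each book's contents: the author index is updated as each book arrives, so an author lookup is a single map access, while a search for a word like "gazebo" still has to open every book. `Catalog`, `addBook`, and the other names are hypothetical.

```javascript
// A tiny "card catalog": the index maps author -> shelf positions and is updated
// when a book arrives, so author lookups never scan the shelves.
class Catalog {
  constructor() {
    this.shelves = [];          // the "books", in arrival order
    this.byAuthor = new Map();  // author -> array of shelf positions
  }

  addBook(book) {
    const position = this.shelves.push(book) - 1;
    // File the index card before the book is available for searching.
    const cards = this.byAuthor.get(book.author) ?? [];
    cards.push(position);
    this.byAuthor.set(book.author, cards);
  }

  // Indexed lookup: one map access, regardless of how many books there are.
  findByAuthor(author) {
    return (this.byAuthor.get(author) ?? []).map((pos) => this.shelves[pos]);
  }

  // Unindexed search: must open every book, like asking for every mention of "gazebo".
  findByWord(word) {
    return this.shelves.filter((b) => b.text.toLowerCase().split(/\W+/).includes(word));
  }
}

// Hypothetical sample data; the text snippets are invented.
const catalog = new Catalog();
catalog.addBook({ title: 'Fear and Loathing in Las Vegas', author: 'Hunter S Thompson', text: 'desert road trip' });
catalog.addBook({ title: 'Garden Structures', author: 'A. N. Other', text: 'how to build a gazebo' });
catalog.addBook({ title: 'The Rum Diary', author: 'Hunter S Thompson', text: 'journalism in puerto rico' });

console.log(catalog.findByAuthor('Hunter S Thompson').map((b) => b.title));
console.log(catalog.findByWord('gazebo').map((b) => b.title));
```

Indexing every word would make `findByWord` fast too, but at the cost the post describes: an index for words like "the" would list nearly every book.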

Perhaps it's a question of the "long search" versus the "short search"? Long searches with proper connecting tissue are more often the thing that produces innovation out of serendipity, and this is the thing of greatest value, versus "What time does the Super Bowl start?". How do you build a database index to improve the "long search"?

See, for example Keith Thomas' problem: https://hyp.is/DFLyZljJEe2dD-t046xWvQ/www.lrb.co.uk/the-paper/v32/n11/keith-thomas/diary

How about using a scratch pad slightly smaller than the page-size of the book, so that the edges of the sheets won't protrude? Make your index, outlines, and even your notes on the pad, and then insert these sheets permanently inside the front and back covers of the book.

This practice is not too dissimilar to that of zettelkasten practitioners (including Niklas Luhmann), who broadly used their bibliographic cards this way.

By separating his index and ideas from the book and putting them into a physical index, it makes them easier to juxtapose with other ideas over time rather than having them anchored directly to the book itself. For academics and researchers, this tends to save the time otherwise spent constantly retrieving these portions from individual books.

https://www.etsy.com/listing/1505097692/antique-1921-meads-file-index-corrective<br /> Antique 1921 Mead's File Index Corrective Diets Infants Nursing Weight Charts Mead card index geared toward health of infants with details, nursing patterns, growth data, feeding data, case history, etc.

For example, even a read-only transaction at this level may see a control record updated to show that a batch has been completed but not see one of the detail records which is logically part of the batch because it read an earlier revision of the control record.

a repeatable read transaction cannot modify or lock rows changed by other transactions after the repeatable read transaction began.

Because Read Committed mode starts each command with a new snapshot that includes all transactions committed up to that instant, subsequent commands in the same transaction will see the effects of the committed concurrent transaction in any case. The point at issue above is whether or not a single command sees an absolutely consistent view of the database.

Thus, successive SELECT commands within a single transaction see the same data, i.e., they do not see changes made by other transactions that committed after their own transaction started.
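The difference between the two levels comes down to when the snapshot is taken. The toy model below is a simplification, not PostgreSQL's actual MVCC code: each committed write gets a sequence number, READ COMMITTED takes a fresh snapshot for every statement, and REPEATABLE READ fixes one snapshot at its first statement (PostgreSQL takes it at the first statement, not at BEGIN) and reuses it. `VersionedStore` and `beginTransaction` are hypothetical names.

```javascript
// Toy multi-version store: every committed write gets a commit sequence number.
class VersionedStore {
  constructor() {
    this.commitCounter = 0;
    this.versions = new Map(); // key -> [{ commit, value }] in commit order
  }

  // Commit a write made by some other transaction.
  commitWrite(key, value) {
    this.commitCounter += 1;
    const list = this.versions.get(key) ?? [];
    list.push({ commit: this.commitCounter, value });
    this.versions.set(key, list);
  }

  takeSnapshot() {
    return this.commitCounter;
  }

  // Latest value committed at or before the snapshot.
  readAt(key, snapshot) {
    const visible = (this.versions.get(key) ?? []).filter((v) => v.commit <= snapshot);
    return visible.length ? visible[visible.length - 1].value : undefined;
  }
}

function beginTransaction(store, isolation) {
  let txSnapshot = null; // REPEATABLE READ: fixed at the first statement
  return {
    // Each call models one SELECT statement.
    select(key) {
      let snapshot;
      if (isolation === 'repeatable read') {
        if (txSnapshot === null) txSnapshot = store.takeSnapshot();
        snapshot = txSnapshot;
      } else {
        snapshot = store.takeSnapshot(); // READ COMMITTED: new snapshot per statement
      }
      return store.readAt(key, snapshot);
    },
  };
}

const store = new VersionedStore();
store.commitWrite('balance', 100);

const rc = beginTransaction(store, 'read committed');
const rr = beginTransaction(store, 'repeatable read');
const firstReads = [rc.select('balance'), rr.select('balance')];

store.commitWrite('balance', 50); // a concurrent transaction commits

const secondReads = [rc.select('balance'), rr.select('balance')];
console.log(firstReads, secondReads); // [ 100, 100 ] [ 50, 100 ]
```

The READ COMMITTED transaction sees the concurrent commit on its next statement; the REPEATABLE READ one keeps seeing the value from its snapshot.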

Clock, Watch and Document Database by [[Bill Stoddard]]

Could National mechanized accounting save as much for you? Almost certainly! For businesses of every size and type, employing from 50 people up, report that upon mechanizing their accounting with National Accounting Machines, they effected savings up to 30%. Savings which often pay for the whole National installation in the first year, and then run on, year after year. Ask your local National representative to check your present set-up, and report specifically the savings you can expect.

advertisement on page 17 of Newsweek (US Edition) 1948-10-04: Vol 32 Iss 14<br /> https://archive.org/details/sim_newsweek-us_1948-10-04_32_14/page/16/mode/2up

Advertisement shows two female clerks at adding machines with drawers of a card index next to them as they work.

For any new environments and databases, you can just use drizzle-kit migrate, and all the migrations, together with init, will be applied.

When you run migrate on a database that already has all the tables from your schema, you need to run it with the drizzle-kit migrate --no-init flag, which will skip the init step. If you run it without this flag and get an error that such tables already exist, drizzle-kit will detect it and suggest you add this flag.

When you introspect the database, you will receive an initial migration without comments. Instead of commenting it out, we will add an init flag to its journal entry, indicating that this migration was generated by the introspect action.
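The init-flag behavior described in these notes can be sketched as a small planning function. This is a hypothetical model, not drizzle-kit's implementation or journal format: the journal flags the introspected baseline with `init: true`, already-applied tags are skipped, a `noInit` option (standing in for `--no-init`) skips the baseline, and running the baseline against existing tables raises an error that suggests the flag.

```javascript
// Hypothetical journal: the introspected baseline is flagged with init: true.
const journal = [
  { tag: '0000_baseline', init: true },
  { tag: '0001_add_email' },
  { tag: '0002_add_index' },
];

// Decide which migrations to run. `applied` holds tags already recorded in the database.
function planMigrations(journal, applied, { noInit = false, tablesExist = false } = {}) {
  const pending = journal.filter((entry) => !applied.has(entry.tag));
  const wouldRunInit = pending.some((entry) => entry.init);
  if (wouldRunInit && tablesExist && !noInit) {
    // Detect the "tables already exist" conflict and suggest the flag.
    throw new Error('tables already exist; re-run with --no-init to skip the init migration');
  }
  return pending.filter((entry) => !(noInit && entry.init)).map((entry) => entry.tag);
}

// New database: everything runs, including the baseline.
console.log(planMigrations(journal, new Set()));
// Existing database whose tables already match the schema: skip the baseline.
console.log(planMigrations(journal, new Set(), { noInit: true, tablesExist: true }));
```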

```shell
root@51a758d136a2:~/test/test-project# npx prisma migrate diff --from-empty --to-schema-datamodel prisma/schema.prisma --script > migration.sql
root@51a758d136a2:~/test/test-project# cat migration.sql
-- CreateTable
CREATE TABLE "test" (
    "id" SERIAL NOT NULL,
    "val" INTEGER,

    CONSTRAINT "test_pkey" PRIMARY KEY ("id")
);
root@51a758d136a2:~/test/test-project# mkdir -p prisma/migrations/initial
root@51a758d136a2:~/test/test-project# mv migration.sql prisma/migrations/initial/
```

While that change fixes the issue, there’s a production outage waiting to happen. When the schema change is applied, the existing GetUserActions query will begin to fail. The correct way to fix this is to deploy the updated query before applying the schema migration. sqlc verify was designed to catch these types of problems. It ensures migrations are safe to deploy by sending your current schema and queries to sqlc cloud. There, we run your existing queries against your new schema changes to find any issues.
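The idea of running existing queries against a new schema can be shown with a toy model. In this sketch each query lists the table and columns it depends on, and any dependency the migrated schema no longer provides is reported. The column rename and query metadata are hypothetical, and sqlc derives such dependencies by analyzing the SQL itself, which this sketch does not attempt.

```javascript
// Report every query whose table or columns are missing from `schema`.
function findBrokenQueries(queries, schema) {
  const problems = [];
  for (const q of queries) {
    const columns = schema[q.table];
    if (!columns) {
      problems.push(`${q.name}: table ${q.table} does not exist`);
      continue;
    }
    for (const col of q.columns) {
      if (!columns.includes(col)) problems.push(`${q.name}: column ${q.table}.${col} does not exist`);
    }
  }
  return problems;
}

// Hypothetical example: a migration renames user_actions.action to action_type.
const queries = [{ name: 'GetUserActions', table: 'user_actions', columns: ['user_id', 'action'] }];
const oldSchema = { user_actions: ['id', 'user_id', 'action'] };
const newSchema = { user_actions: ['id', 'user_id', 'action_type'] };

console.log(findBrokenQueries(queries, oldSchema)); // []
console.log(findBrokenQueries(queries, newSchema));
// [ 'GetUserActions: column user_actions.action does not exist' ]
```

Running the check before applying the migration is what surfaces the need to deploy the updated query first.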

Why another database schema migration tool? Dbmate was inspired by many other tools, primarily Active Record Migrations, with the goals of being trivial to configure, and language & framework independent. Here is a comparison between dbmate and other popular migration tools.

upsert

(Do not confuse this with the --schema option, which uses the word “schema” in a different meaning.)

Treat object-name columns in the information_schema views as being of type name, not varchar (Tom Lane). Per the SQL standard, object-name columns in the information_schema views are declared as being of domain type sql_identifier. In PostgreSQL, the underlying catalog columns are really of type name. This change makes sql_identifier be a domain over name, rather than varchar as before. This eliminates a semantic mismatch in comparison and sorting behavior, which can greatly improve the performance of queries on information_schema views that restrict an object-name column. Note however that inequality restrictions, for example

Databases that do not share information across the company with other systems are sometimes called data silos.

Bingo, we need to expand on what is and isn't a silo. The word silo comes from Spanish silo, meaning “pit for storing grain,” which traces back to Greek siros or seiros, a pit for holding grain or corn.

First used in English in the 1830s to mean underground grain storage; the metaphorical/organizational use (as in "data silo") started in the 1970s–1980s in business and IT contexts.

In theory, ER should never be a problem in a well-designed database because two entity instances should be equivalent if, and only if, they have the same primary key.

In practice, when you have three identical matches and want to select one of them, archive the others, and do other things, you do need a "forced" ID. This was my conclusion after a few rounds of prototyping.
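A small sketch of the "forced ID" conclusion, with assumed record fields (`name`, `born`): identical matches cannot be told apart by their values alone, so each one gets a surrogate `id` first, after which one can be kept and the rest archived. The keep-the-first rule and the function name are hypothetical choices for illustration.

```javascript
// Attach surrogate ids, group records by their matching attributes, keep one per group.
function dedupeWithSurrogateIds(records) {
  const withIds = records.map((r, i) => ({ id: i + 1, ...r })); // forced/surrogate key
  const groups = new Map();
  for (const r of withIds) {
    const key = JSON.stringify([r.name, r.born]); // the attributes used for matching
    if (!groups.has(key)) groups.set(key, []);
    groups.get(key).push(r);
  }
  const kept = [];
  const archived = [];
  for (const group of groups.values()) {
    const [first, ...rest] = group; // hypothetical rule: keep the first match
    kept.push(first);
    archived.push(...rest);
  }
  return { kept, archived };
}

const matches = [
  { name: 'Ada Lovelace', born: 1815 },
  { name: 'Ada Lovelace', born: 1815 },
  { name: 'Ada Lovelace', born: 1815 },
  { name: 'Charles Babbage', born: 1791 },
];
const { kept, archived } = dedupeWithSurrogateIds(matches);
console.log(kept.map((r) => r.id), archived.map((r) => r.id)); // [ 1, 4 ] [ 2, 3 ]
```

Without the surrogate ids, "archive the second and third Ada Lovelace" would have no way to name which rows to archive.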

Don't use the timestamp type to store timestamps, use timestamptz (also known as timestamp with time zone) instead.
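As a rough JavaScript analogy for the timestamptz advice: a timestamp carrying an explicit offset names exactly one instant and can be normalized to UTC, which is roughly what PostgreSQL's timestamptz stores internally, while a bare wall-clock value does not say which instant it means. The hypothetical `toUtcInstant` helper refuses offset-less input to make that ambiguity visible; PostgreSQL itself instead interprets such input for timestamptz using the session's TimeZone setting.

```javascript
// Normalize an ISO 8601 timestamp with an offset to a UTC instant.
// Hypothetical helper: rejects values without "Z" or a ±HH:MM offset.
function toUtcInstant(text) {
  if (!/(Z|[+-]\d{2}:\d{2})$/.test(text)) {
    throw new Error(`ambiguous timestamp without time zone: ${text}`);
  }
  return new Date(text).toISOString();
}

// Two different local clock readings that name the same instant.
console.log(toUtcInstant('2024-03-10T09:00:00-05:00')); // 2024-03-10T14:00:00.000Z
console.log(toUtcInstant('2024-03-10T15:00:00+01:00')); // 2024-03-10T14:00:00.000Z
```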

Use the temporal_tables extension if you are in a pinch and want row versioning to make up for missing SQL:2011 support.

Don't use the type varchar(n) by default. Consider varchar (without the length limit) or text instead.

varchar(n) is a variable-width text field that will throw an error if you try to insert a string longer than n characters (not bytes) into it.
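To see the characters-versus-bytes distinction concretely, this sketch counts code points (what a length limit in characters means for UTF-8 text) and compares that with the UTF-8 byte count. `fitsVarchar` and `utf8Bytes` are hypothetical helpers; this models the rule client-side and is not how PostgreSQL enforces it.

```javascript
// Count code points with the string iterator; .length would count UTF-16 code units.
function fitsVarchar(value, n) {
  return [...value].length <= n;
}

// Number of bytes the string occupies when encoded as UTF-8.
function utf8Bytes(value) {
  return new TextEncoder().encode(value).length;
}

const word = 'caf\u00e9';  // "café": 4 characters, 5 bytes in UTF-8
const emoji = '\u{1F600}'; // 1 character, 2 UTF-16 code units, 4 UTF-8 bytes

console.log(fitsVarchar(word, 4), utf8Bytes(word));                 // true 5
console.log(fitsVarchar(emoji, 1), emoji.length, utf8Bytes(emoji)); // true 2 4
console.log(fitsVarchar('too long', 4));                            // false
```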

Generally, there is no downside to using text in terms of performance/memory. On the contrary: text is the optimum. Other types have more or less relevant downsides. text is literally the "preferred" type among string types in the Postgres type system, which can affect function or operator type resolution.

Don't add a length modifier to varchar if you don't need it. (Most of the time, you don't.) Just use text for all character data. Make that varchar (standard SQL type) without length modifier if you need to stay compatible with RDBMS which don't have text as generic character string type.

I rant against 255 occasionally. Sure, there used to be some reasons for '255', but many are no longer valid, and even counter-productive.

You have your database schema as a source of truth

Hurd, Cuthbert C., ed. Proceedings: IBM Computation Seminar December 1949. New York: International Business Machines Corporation, 1951. http://archive.org/details/bitsavers_ibmproceedeminarDec49_14295048.

In a variety of contexts here, the idea of "cards" could be held to be synonymous with "notes".

Collision cards (though used in a physics setting) could be a bit hilarious with the idea of "atomic notes" and the idea of "combinatorial creativity".

No revolutionary schemes on a large scale are advocated at present.

Some idea of the rapidity with which the field has grown may be gained from the fact that the bibliography of uses contains 400 entries, compared with 276 entries in the first edition. This great increase is reflected in the extension of the Practical Applications Section (Part II) from 186 pages in the first edition to 295 pages in the present book.

An indication of the state-of-the-art in punch card systems from 1951 to 1958, particularly with respect to practical applications.

Casey, Robert S., James W. Perry, Madeline M. Berry, and Allen Kent. Punched Cards: Their Applications To Science And Industry. 2nd ed. 1951. Reprint, New York: Reinhold Publishing Corporation, 1958. http://archive.org/details/PunchedCardsTheirApplicationsToScienceAndIndustry.

Begun, George M. “Making Your Own Punched Cards.” Journal of Chemical Education 32, no. 6 (June 1, 1955): 328. https://doi.org/10.1021/ed032p328.

George Begun used a template of "heavy galvanized iron" to drill holes into his 5 x 8" index cards to create his own edge-notched card system for use in his chemistry work. Rather than using commercially made sorting needles, he recommended the use of an ice pick with a dulled point "for safety".

Edge-Notched Cards: A queryable database...made of paper by [[Soren Bjornstad]]
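Edge-notched cards like Begun's behave much like bitmask queries. In this sketch each hole position is a bit, and notching a hole (cutting it open to the edge) sets that bit. Pushing a needle through one hole position and lifting leaves the unnotched cards on the needle while the notched ones drop out, and repeating the pass on the dropped cards narrows the result like an AND query. The hole assignments and cards are hypothetical.

```javascript
// Hypothetical hole assignments: each topic gets one hole (bit) position.
const HOLES = { chemistry: 0, physics: 1, review: 2, before1950: 3 };

// Build a card's notch pattern as a bitmask.
function notch(...topics) {
  return topics.reduce((mask, t) => mask | (1 << HOLES[t]), 0);
}

const cards = [
  { title: 'Card A', notches: notch('chemistry', 'review') },
  { title: 'Card B', notches: notch('physics') },
  { title: 'Card C', notches: notch('chemistry', 'before1950') },
];

// One needle pass: cards notched at the hole fall, the rest stay on the needle.
function needleSort(deck, hole) {
  const bit = 1 << HOLES[hole];
  return {
    dropped: deck.filter((c) => c.notches & bit),
    kept: deck.filter((c) => !(c.notches & bit)),
  };
}

// Successive passes on the dropped cards act like an AND query.
const chem = needleSort(cards, 'chemistry').dropped;
const chemReviews = needleSort(chem, 'review').dropped;
console.log(chem.map((c) => c.title));        // [ 'Card A', 'Card C' ]
console.log(chemReviews.map((c) => c.title)); // [ 'Card A' ]
```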

Value of knowledge in a zettelkasten as a function of reference(use/look up) frequency; links to other ideas; ease of recall without needing to look up (also a measure of usefulness); others?

Define terms and create a mathematical equation of stocks and flows around this system of information. Maybe "knowledge complexity" or "information optimization"? see: https://hypothes.is/a/zejn0oscEe-zsjMPhgjL_Q

takes into account the value of information from the perspective of a particular observer<br /> relative information value

cross-reference: Umberto Eco on no piece of information is superior: https://hypothes.is/a/jqug2tNlEeyg2JfEczmepw

Inspired by idea in https://hypothes.is/a/CdoMUJCYEe-VlxtqIFX4qA

Relaxo is a transactional database built on top of git.

Relaxo uses the git persistent data structure for storing documents. This data structure exposes a file-system like interface, which stores any kind of data.

databases are not designed to be browsed.

Casey Newton makes this blanket statement. Any real evidence for this beyond his "gut"?

Many "paper machines" like Niklas Luhmann's zettelkasten were almost custom made not just for searching, but for browsing through regularly much like commonplace books.

Perhaps the question is really, how is your particular database designed?

Aurora Serverless packs a number of database instances onto a single physical machine (each isolated inside its own virtual machine using AWS’s Nitro Hypervisor). As these databases shrink and grow, resources like CPU and memory are reclaimed from shrinking workloads, pooled in the hypervisor, and given to growing workloads

Oh, wow, so the workloads themselves are dynamically scaling up and down "vertically" as opposed to "horizontally". I think this is a bit like resizing the Docker containers that host the databases without stopping them.

The perfect index for the above query would be a multi-column index spanning all three columns in matching sequence and with matching sort order:
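The quoted query is not included in this excerpt, so this sketch uses hypothetical columns (`customer`, `day`, `id`) to show why column order matters. A multi-column index is essentially the rows' keys sorted by the columns in sequence, so a query that fixes a leading prefix of those columns can binary-search one contiguous range, and the matches come back already ordered by the remaining columns. This is a simplified in-memory model, not how any particular database lays out its B-trees.

```javascript
// Compare two tuples column by column, only over their shared length.
function compareTuples(x, y) {
  for (let i = 0; i < Math.min(x.length, y.length); i++) {
    if (x[i] < y[i]) return -1;
    if (x[i] > y[i]) return 1;
  }
  return 0; // equal on the shared prefix
}

// The "index": (key tuple, row id) pairs sorted by the columns in the given order.
function buildIndex(rows, columns) {
  return rows
    .map((row, rowId) => ({ key: columns.map((c) => row[c]), rowId }))
    .sort((p, q) => compareTuples(p.key, q.key));
}

// Binary-search for the first key matching `prefix`, then read the contiguous range.
function prefixScan(index, prefix) {
  let lo = 0;
  let hi = index.length;
  while (lo < hi) {
    const mid = (lo + hi) >> 1;
    if (compareTuples(index[mid].key, prefix) < 0) lo = mid + 1;
    else hi = mid;
  }
  const out = [];
  for (let i = lo; i < index.length && compareTuples(index[i].key, prefix) === 0; i++) {
    out.push(index[i].rowId);
  }
  return out;
}

// Hypothetical rows.
const rows = [
  { customer: 2, day: '2024-01-05', id: 7 },
  { customer: 1, day: '2024-01-03', id: 3 },
  { customer: 2, day: '2024-01-02', id: 9 },
  { customer: 1, day: '2024-01-03', id: 1 },
];
const index = buildIndex(rows, ['customer', 'day', 'id']);

console.log(prefixScan(index, [2]));                // [ 2, 0 ]  (already in day order)
console.log(prefixScan(index, [1, '2024-01-03'])); // [ 3, 1 ]  (already in id order)
```

A query that filters only on `day` cannot use this index as a prefix, because rows for one day are scattered across the customer ranges.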

My fiance got a white Adler Tippa recently, but is unsure of the exact model or year. We looked up the serial number but nothing has come up even on the database. The Tippa plate just says Tippa, not Adler Tippa, so it can't be too old. Any ideas? Serial number: 10148440

reply to u/DinoPup87 at https://new.reddit.com/r/typewriters/comments/1efzeor/adler_tippa_id/

It's a common misconception that the database lists all serial numbers.

You'll need to identify the make (and preferably the model) to search the database. Then you'll want to look at the serial numbers which your serial number appears between to be able to identify the year (or month if the data is granular enough) your machine was made. Reading the notes at the header of each page will give you details for how best to read and interpret the charts for each manufacturer. Notes and footnotes will provide you with additional details when available.
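The chart-reading procedure above is a range lookup: each row marks where a year's serial numbers begin, and a machine belongs to the last row whose starting serial does not exceed its own. This minimal sketch assumes a chart already sorted by serial number. The chart values are invented round numbers, not real data for any manufacturer, so don't use them to date an actual typewriter.

```javascript
// Invented chart: first serial number produced in each year (NOT real data).
const chart = [
  { year: 1960, firstSerial: 100000 },
  { year: 1961, firstSerial: 200000 },
  { year: 1962, firstSerial: 300000 },
];

// Binary search for the last row whose firstSerial <= serial.
function yearForSerial(chart, serial) {
  let lo = 0;
  let hi = chart.length - 1;
  let found = null;
  while (lo <= hi) {
    const mid = (lo + hi) >> 1;
    if (chart[mid].firstSerial <= serial) {
      found = chart[mid].year;
      lo = mid + 1;
    } else {
      hi = mid - 1;
    }
  }
  // null means the serial predates the chart; a serial past the last row only
  // tells you "this year or later", since the chart may not record the end of production.
  return found;
}

console.log(yearForSerial(chart, 250000)); // 1961
console.log(yearForSerial(chart, 50000));  // null
```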

You can then compare your machine against others which individuals have photographed and uploaded to the database. Feel free to add your typewriter as an example by making an account of your own. Doing this is sure to help researchers and other enthusiasts in the future. Don't forget photos of your manual, tools which came with your machine, your case, and original dated purchase receipts if you have them.

For the purpose of indexing we shall divide our stock of names or terms into those of concretes, processes and countries, concretes being the commodities with which we are concerned, processes indicating their actions, and countries indicating the localities with which the concretes are connected (295 et seq.).

There are likely other metadata he may be missing here:
- in particular, dates/times/time periods, which may be useful for the historians
- others?
- general forms of useful metadata from a database perspective?

But by means of the cards, these materials can be arranged and re-arranged in almost endless variety, we may classify them roughly or minutely, we may arrange them by the alphabet, by numbers, trades or professions, territories, we may limit ourselves to certain trades or territories only

Don't worry about performance too early though - it's more important to get the design right, and "right" in this case means using the database the way a database is meant to be used, as a transactional system.

The only issue left to tackle is the performance issue. In many cases it actually turns out to be a non-issue because of the clustered index on AgreementStatus (AgreementId, EffectiveDate) - there's very little I/O seeking going on there. But if it is ever an issue, there are ways to solve that, using triggers, indexed/materialized views, application-level events, etc.
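A minimal sketch of the "event instead of delete" idea, assuming AgreementStatus rows shaped as (agreementId, effectiveDate, status): nothing is removed, a cancellation is simply a newer row, and the current state is derived from the latest row per agreement as of a given date. The sample rows and status values are hypothetical. In a real table the clustered index on (AgreementId, EffectiveDate) keeps each agreement's rows adjacent and ordered, which is why the lookup is cheap; this in-memory version just scans.

```javascript
// Append-only status history (hypothetical data). ISO dates compare correctly as strings.
const agreementStatus = [
  { agreementId: 1, effectiveDate: '2023-01-01', status: 'active' },
  { agreementId: 2, effectiveDate: '2023-02-15', status: 'active' },
  { agreementId: 1, effectiveDate: '2024-06-30', status: 'cancelled' },
];

// Derive the "current state" view: latest row per agreement on or before asOf.
function currentStatus(events, asOf) {
  const latest = new Map();
  for (const e of events) {
    if (e.effectiveDate > asOf) continue; // change not yet in effect
    const prev = latest.get(e.agreementId);
    if (!prev || e.effectiveDate > prev.effectiveDate) latest.set(e.agreementId, e);
  }
  return Object.fromEntries([...latest].map(([id, e]) => [id, e.status]));
}

console.log(currentStatus(agreementStatus, '2024-01-01')); // agreements 1 and 2 active
console.log(currentStatus(agreementStatus, '2024-12-31')); // agreement 1 cancelled, 2 active
```

Because the history is kept, the same function answers "what was the state on date X?" for any past date, which a hard delete would make impossible.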

Udi Dahan wrote about this in Don't Delete - Just Don't. There is always some sort of task, transaction, activity, or (my preferred term) event which actually represents the "delete". It's OK if you subsequently want to denormalize into a "current state" table for performance, but do that after you've nailed down the transactional model, not before. In this case you have "users". Users are essentially customers. Customers have a business relationship with you. That relationship does not simply vanish into thin air because they canceled their account. What's really happening is:

Paul Otlet, another great information visionary, to create a worldwide database for all subjects.

Otlet's effort was more than a "database for all subjects", wasn't it? This seems a bit simplistic.

Duncan, Dennis. Index, A History of the: A Bookish Adventure from Medieval Manuscripts to the Digital Age. 1st ed. London: Allen Lane, 2021. https://wwnorton.com/books/9781324002543.

annotation link: urn:x-pdf:a4bd1877f0712efcc681d33d58777e55

The smallest collection of card catalogs is near the librarian’s information desk in the Social Science/Philosophy/Religion department on lower level three. It is rarely used and usually only by librarians. It contains hundreds of cards that reflect some of the most commonly asked questions of the department librarians. Most of the departments on the lower levels have similar small collections. Card catalog behind the reference desk on lower level three, photo credit: Tina Lernø

Dr Minor would read a text not for its meaning but for its words. It was a novel approach to the task – the equivalent of cutting up a book word by word, and then placing each in an alphabetical list which helped the editors quickly find quotations. Just as Google today ‘reads’ text as a series of words or symbols that are searchable and discoverable, so with Dr Minor. A manual undertaking of this kind was laborious – he was basically working as a computer would work – but it probably resulted in a higher percentage of his quotations making it to the Dictionary page than those of other contributors.

Instance methods

Instances of Models are documents. Documents have many of their own built-in instance methods. We may also define our own custom document instance methods.

```javascript
// define a schema
const animalSchema = new Schema({ name: String, type: String },
  {
    // Assign a function to the "methods" object of our animalSchema through schema options.
    // By following this approach, there is no need to create a separate TS type to define the type of the instance functions.
    methods: {
      findSimilarTypes(cb) {
        return mongoose.model('Animal').find({ type: this.type }, cb);
      }
    }
  });

// Or, assign a function to the "methods" object of our animalSchema
animalSchema.methods.findSimilarTypes = function(cb) {
  return mongoose.model('Animal').find({ type: this.type }, cb);
};
```

Now all of our animal instances have a findSimilarTypes method available to them.

```javascript
const Animal = mongoose.model('Animal', animalSchema);
const dog = new Animal({ type: 'dog' });

dog.findSimilarTypes((err, dogs) => {
  console.log(dogs); // woof
});
```

Overwriting a default mongoose document method may lead to unpredictable results. See this for more details. The example above uses the Schema.methods object directly to save an instance method. You can also use the Schema.method() helper as described here. Do not declare methods using ES6 arrow functions (=>). Arrow functions explicitly prevent binding this, so your method will not have access to the document and the above examples will not work.
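The arrow-function warning comes down to how JavaScript binds `this`. This plain-JavaScript sketch (no Mongoose required) attaches methods to a shared prototype, which is roughly how schema methods reach documents, and shows that a `function` method sees the document as `this` while an arrow function does not. `makeDocument` and the method names are hypothetical.

```javascript
const methods = {
  // Regular function: `this` is the object the method is called on.
  describeRegular: function () {
    return `a ${this.type}`;
  },
  // Arrow function: `this` is whatever it was where the arrow was written
  // (e.g. module.exports in a CommonJS file), never the document.
  describeArrow: () => {
    return `a ${this && this.type}`;
  },
};

// Roughly what attaching schema methods does: documents inherit from a prototype.
function makeDocument(fields) {
  return Object.assign(Object.create(methods), fields);
}

const dog = makeDocument({ type: 'dog' });
console.log(dog.describeRegular()); // a dog
console.log(dog.describeArrow());   // a undefined
```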


In Mongoose, a schema is a blueprint for defining the structure of documents within a collection. When you define a schema, you can also attach methods to it. These methods become instance methods, meaning they are available on the individual documents (instances) created from that schema.

Instance methods are useful for encapsulating functionality related to a specific document or model instance. They allow you to define custom behavior that can be executed on a specific document. In the given example, the findSimilarTypes method is added to instances of the Animal model, making it easy to find other animals of the same type.

Using the methods object directly in the schema options:

```javascript
const animalSchema = new Schema(
  { name: String, type: String },
  {
    methods: {
      findSimilarTypes(cb) {
        return mongoose.model('Animal').find({ type: this.type }, cb);
      }
    }
  }
);
```

Assigning to the methods object directly on the schema:

```javascript
animalSchema.methods.findSimilarTypes = function(cb) {
  return mongoose.model('Animal').find({ type: this.type }, cb);
};
```

Using the Schema.method() helper:

```javascript
animalSchema.method('findSimilarTypes', function(cb) {
  return mongoose.model('Animal').find({ type: this.type }, cb);
});
```

Imagine you have a collection of animals in your database, and you want to find other animals of the same type. Instead of writing the same logic repeatedly, you can define a method that can be called on each animal instance to find similar types. This helps in keeping your code DRY (Don't Repeat Yourself) and makes it easier to maintain.

```javascript
const mongoose = require('mongoose');
const { Schema } = mongoose;

// Define a schema with a custom instance method
const animalSchema = new Schema({ name: String, type: String });

// Add a custom instance method to find similar types.
// Mongoose 7+ removed callback support, so return the query and await it instead.
animalSchema.methods.findSimilarTypes = function () {
  return mongoose.model('Animal').find({ type: this.type });
};

// Create the Animal model using the schema
const Animal = mongoose.model('Animal', animalSchema);

async function main() {
  // Assumes a local MongoDB server; change the URI for your setup
  await mongoose.connect('mongodb://127.0.0.1:27017/test');

  // Create an instance of Animal
  const dog = new Animal({ type: 'dog', name: 'Buddy' });

  // Use the custom method to find similar types
  const similarAnimals = await dog.findSimilarTypes();
  console.log(similarAnimals);

  await mongoose.disconnect();
}

main().catch(console.error);
```

In this example, findSimilarTypes is a custom instance method added to the Animal schema. When you create an instance of the Animal model (e.g., a dog), you can then call findSimilarTypes on that instance to find other animals with the same type. The method uses the this.type property, which refers to the type of the current animal instance. This allows you to easily reuse the logic for finding similar types across different instances of the Animal model.

A file card system took up an entire wall. It included records of previous games for endless analytical pleasure and even an index of potential competitors' tournament histories.

There will be errors in MESON – those I have copied from books, magazines and the card collections I have access to, those I have copied from the other free online databases and those I have perpetrated myself. If you find an error, do contact me about it, quoting the problem ids (PIDs).

MESON is comprised in part of card index collections of chess problems and puzzles.

http://www.bstephen.me.uk/meson/meson.pl?opt=top MESON Chess Problem Database

Compiled using a variety of sources including card indexes.

found via

As for the Pirnie collection, not counted it, but I am slowly going through it for my online #ChessProblem database: https://t.co/eTDrPnX09b . Also going through several boxes of the White-Hume Collection which I have.

— Brian Stephenson (@bstephen2) August 5, 2020

Brian Stephenson (@bstephen2), Aug 4, 2020: The Pirnie Collection of #ChessProblems is index cards in Clark's shoe boxes and is held in my house. The late JP Toft created a huge card database of #ChessProblems in Scandinavia and it is now held in a public library.

It Took Decades To Create This Chess Puzzle Database (30 Thousand), 2020. https://www.youtube.com/watch?v=Y9craX0M_2A.

A chess school named after Genrikh Kasparyan (alternately Henrik Kasparian) houses his card index of chess puzzles with over 30,000 cards.

The cards are stored in stacked wooden trays in a two door cabinet with 4 shelves.

There are at least 23 small wooden trays of cards pictured in the video, though there are possibly many more. (Possibly as many as about 35 based on the layout of the cabinet and those easily visible.)

Kasparyan's son Sergei donated the card index to the chess school.

Each index card in the collection, filed in portrait orientation, begins with the name of the puzzle composer, lists its first publication, has a chess board diagram with the pieces arranged, and beneath that the solution of the puzzle. The cards are arranged alphabetically by the name of the puzzle composer.

The individual puzzle diagrams appear to have been done with a stamp of the board done in light blue ink with darker blue (or purple?) and red inked stamped pieces arranged on top of it.

ᔥu/ManuelRodriguez331 in r/Zettelkasten - Chess players are memorizing games with index cards

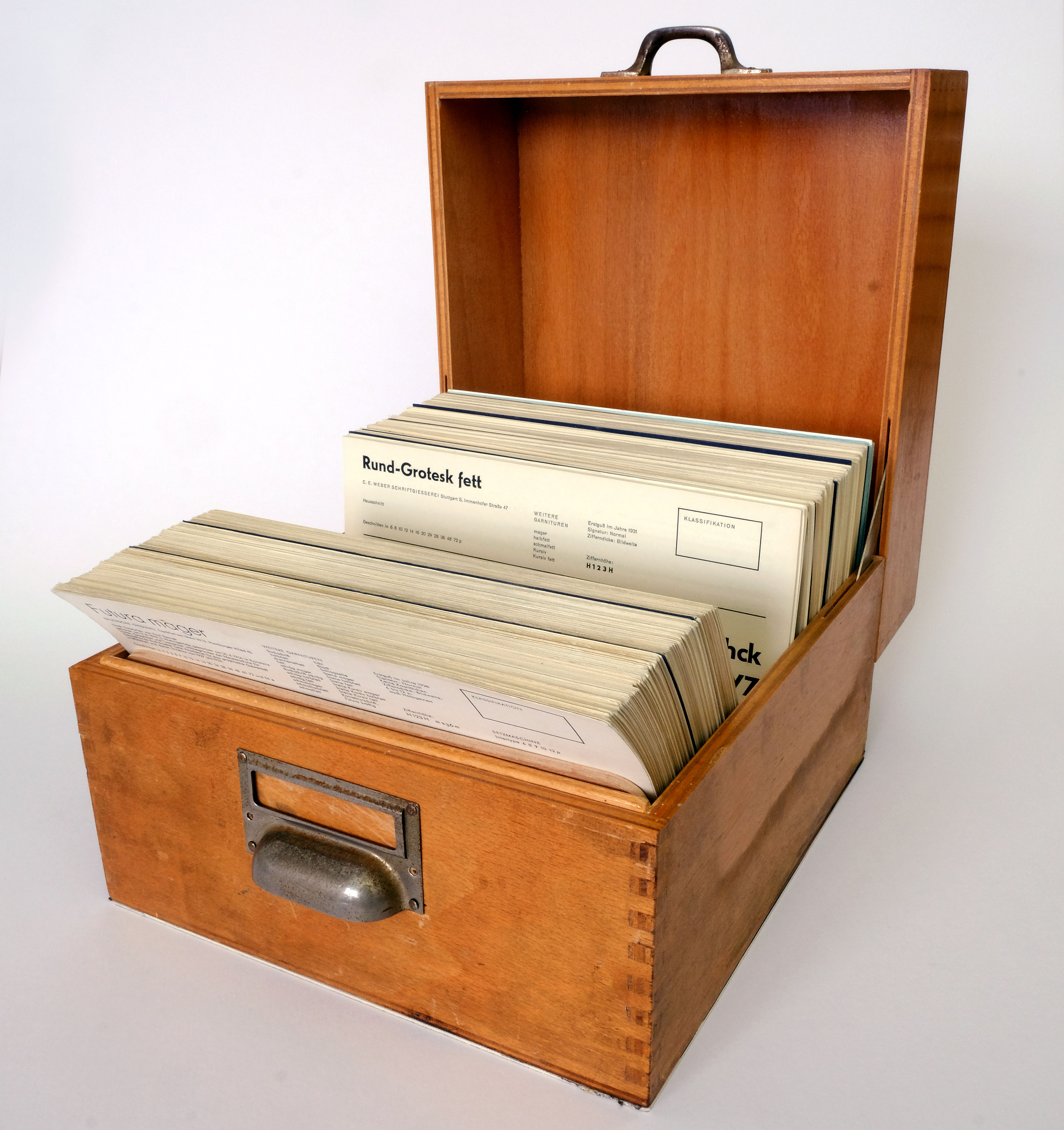

Wörgötter, Michael. “Schriftenkartei [Typeface Index], 1958–1971.” Photo sharing social website. Flickr, 2023. https://www.flickr.com/photos/letterformarchive/albums/72177720310834741. License: Creative Commons BY-NC-SA 2.0 https://creativecommons.org/licenses/by-nc-sa/2.0/

Found via: Coles, Stephen. “This Just In: Schriftenkartei, a Typeface Index.” Letterform Archive, November 3, 2023. https://letterformarchive.org/news/schriftenkartei-german-font-index/.

When Michael Wörgötter, a Munich-based designer and educator, came across his own Schriftenkartei set earlier this year, he understood their value for designers and researchers and wanted to make them as widely accessible as possible. He scanned each card at 1200 DPI, and reprinted them in two bound volumes, along with a handy supplementary guide, written in German and English, that offers historical background. The books are available for purchase directly from Wörgötter.

Munich-based designer and educator Michael Wörgötter digitally scanned and then printed bound copies of the 638 cards of the Schriftenkartei into two volumes with a supplementary guide for additional historical background. He subsequently donated the Schriftenkartei to the Letterform Archive.

Digital copies of the cards are available on Flickr (https://www.flickr.com/photos/letterformarchive/albums/72177720310834741) and the Letterform Archive intends to provide digital copies in their online archive.

Coles, Stephen. “This Just In: Schriftenkartei, a Typeface Index.” Letterform Archive, November 3, 2023. https://letterformarchive.org/news/schriftenkartei-german-font-index/.

Example of a zettelkasten covering the available typefaces produced between 1958 and 1971 in West Germany.

Father emptied a card file for Margot and me and filled it with index cards that are blank on one side. This is to become our reading file, in which Margot and I are supposed to note down the books we’ve read, the author and the date. I’ve learned two new words: “brothel” and “coquette.” I’ve bought a separate notebook for new words.

—Anne Frank (1929-1945), diary entry dated Saturday, February 27, 1943 (age 13)

Anne Frank was given an empty card file by her father who filled it with index cards that were blank on one side. They were intended to use it as a "reading file" in which she and Margot were "supposed to note down the books we've read, the author and the date."

In the same entry she mentioned that she'd bought a separate notebook for writing down new words she encountered. Recent words she mentions encountering were "brothel" and "coquette".

Envisioning the next wave of emergent AI

Are we stretching too far by saying that AI is currently emergent? Isn't this like saying that the card indexes of the early 20th century were computers? In reality they were data storage, and the "computing" took place when humans did the actual data processing/thinking to come up with new results.

Emergence would seem to actually be the point which comes about when the AI takes its own output and continues processing (successfully) on it.

I wonder what you think of a distinction between the more traditional 'scholar's box', and the proto-databases that were used to write dictionaries and then for projects such as the Mundaneum. I can't help feeling there's a significant difference between a collection of notes meant for a single person, and a collection meant to be used collaboratively. But not sure exactly how to characterize this difference. Seems to me that there's a tradition that ended up with the word processor, and another one that ended up with the database. I feel that the word processor, unlike the database, was a dead end.

reply to u/atomicnotes at https://www.reddit.com/r/Zettelkasten/comments/16njtfx/comment/k1tuc9c/?utm_source=reddit&utm_medium=web2x&context=3

u/atomicnotes, this is an excellent question. (Though I'd still like to come to terms with people who don't think it acts as a knowledge management system, there's obviously something I'm missing.)

Some of your distinction comes down to how one uses one's zettelkasten and what sorts of questions are asked of it. One of the earliest descriptions I've seen that begins to get at the difference is Beatrice Webb's description of her notes (appendix C) in My Apprenticeship. As she describes what she's doing, I get the feeling that she's taking the same broad sort of notes we're all used to, but it's obvious from her discussion that she's also using her slips as a traditional database, though she lacks the modern vocabulary to describe it as such.

Early efforts like the OED, TLL, the Wb, and even Gertrud Bauer's Coptic linguistic zettelkasten of the late 1970s were narrow enough in scope and data collected to make them almost dead simple to define, organize and use as databases on paper. Of course how they were used to compile their ultimate reference books was a bit more complex in form than the basic data from which they stemmed.

The Mundaneum had a much more complex flavor because it required a standardized system so that everyone could work in concert on much more freeform as well as more complex forms of collected data and still be able to search for the answers to specific questions. While still somewhat database flavored, it was dramatically different from the others because of its scope and the much broader sorts of questions one could ask of it. I think that if you ask yourself what sorts of affordances you get from the two different groups (databases and word processors, or even their typewriter precursors), you'll find even more answers.

Typewriters and word processors allowed one to get words down on paper an order of magnitude or two faster, and in combination with reproduction equipment, they made it a lot easier to spin off copies of a document for small-scale and local mass distribution. They do offer a few affordances like higher readability (compared with less standardized and slower handwriting), quick search (at least in the digital era), and moving pieces of text around (also in digital). Much beyond this, they aren't tremendously helpful as composition tools. As thinking tools, typewriters and word processors aren't significantly better than their analog predecessors, so you don't gain a huge amount of leverage by using them.

On the other hand, databases and their spreadsheet brethren offer a lot more, particularly in digital realms. Data collection and collation become much easier. One can also form a massive variety of queries on such collected data, not to mention making calculations on those data or subjecting them to statistical analyses. Searching, sorting, and making direct comparisons also become far easier and quicker to do once you've amassed the data you need. Here again, Beatrice Webb's early experience and descriptions are very helpful, as is Hollerith's early work with punch cards and census data and the speed with which the results could be used.

Now if you compare the affordances by each of these in the digital era and plot their shifts against increasing computer processing power, you'll see that the value of the word processor stays relatively flat while the database shows much more significant movement.

Surely there is a lot more at play, particularly at scale and when taking network effects into account, but perhaps this quick sketch may explain to you a bit of the difference you've described.

Another difference you may be seeing/feeling is that of contextualization. Databases usually cross-index much smaller and more discrete pieces of data (for example, a subject's name against their weight in pounds or kilograms). As a result, the context required to use them is dramatically lower than for the sort of material you might keep in an average atomic/evergreen note. Such a note may need to be more heavily recontextualized when you use it in conjunction with other similar notes, which may in turn need to be recontextualized before you can use them against or with one another.

Some of this is why the cards in the Thesaurus Linguae Latinae are easier to use and understand out of the box (presuming you know Latin) than those you might find in the Mundaneum. They'll also be far easier to use than a stranger's notes which will require even larger contextualization for you, especially when you haven't spent the time scaffolding the related and often unstated knowledge around them. This is why others' zettelkasten will be more difficult (but not wholly impossible) for a stranger to use. You might apply the analogy of context gaps between children and adults for a typical Disney animated movie to the situation. If you're using someone else's zettelkasten, you'll potentially be able to follow a base level story the way a child would view a Disney cartoon. Compare this to the zettelkasten's creator who will not only see that same story, but will have a much higher level of associative memory at play to see and understand a huge level of in-jokes, cultural references, and other associations that an adult watching the Disney movie will understand that the child would completely miss.

I'm curious to hear your thoughts on how this all plays out for your way of conceptualizing it.

The first thing to do is to take that four-page synopsis and make a list of all the scenes that you’ll need to turn the story into a novel. And the easiest way to make that list is . . . with a spreadsheet.

Of course spreadsheets are databases of information, and one can just as profitably put all these details onto index cards, which are just as easy (maybe even easier) to move around.

I think the problem with after_destroy is that it is triggered before the database commits. This means the change may not yet be seen by other processes querying the database; it also means the change could be rolled back, and never actually committed. Since Shrine deletes the attachment in this hook, it might delete the attachment prematurely, or even delete the attachment when the record never ends up destroyed in the database at all (in case of rollback), which would be bad. For Shrine's logic to work as expected here, it really does need to be triggered only after the DB commit in which the model destroy is committed.

These index cards are organized alphabetically by subject ranging from accessories to world affairs and covering almost everything in between.

Phyllis Diller's gag file was arranged alphabetically by subject and ranged from "accessories" to "world affairs".

Thanks Sascha for an excellent primer on the internal machinations of our favorite machines beyond the usual focus on the storage/memory and indexing portions of the process.

Said another way, a zettelkasten is part of a formal logic machine or process. Or alternately, as Markus Krajewski aptly demonstrates in Paper Machines (MIT Press, 2011), they are early analog storage devices in which the thinking and logic operations are done cerebrally and manually (by direct analogy, brain and hand) and the results subsequently noted down, which thereby makes them computers.

Just as mathematicians try to break down and define discrete primitives or building blocks upon which they can then perform operations to come up with new results, one tries to find and develop the most interesting "atomic notes" from various sources which they can place into their zettelkasten in hopes of operating on them (usually by juxtaposition, negation, union, etc.) to derive, find, and prove new insights. If done well, these newly discovered ideas can be put back into the machine as inputs to create additional newer and more complex outputs continuously. While the complexity of Lie Algebras is glorious and seems magical, it obviously helps to first understand the base level logic before one builds up to it. The same holds true of zettelkasten.

Now if I could only get the printf portion to work the way I want...

But I would do less than justice to Mr. Adler's achievement if I left the matter there. The Syntopicon is, in addition to all this, and in addition to being a monument to the industry, devotion, and intelligence of Mr. Adler and his staff, a step forward in the thought of the West. It indicates where we are: where the agreements and disagreements lie; where the problems are; where the work has to be done. It thus helps to keep us from wasting our time through misunderstanding and points to the issues that must be attacked. When the history of the intellectual life of this century is written, the Syntopicon will be regarded as one of the landmarks in it.

p xxvi

Hutchins closes his preface to his grand project with Mortimer J. Adler by giving pride of place to Adler's Syntopicon.

Adler's Syntopicon isn't just an index compiled into two books (volumes 2 and 3 of The Great Books of the Western World); it's physically a topically indexed card index of data (a grand zettelkasten surveying Western culture, if you will). Its value to readers and users is immeasurable, and it stands as a fascinating example of what a well-constructed card index might allow one to do, even for those who don't yet have their own.

Adler spoke of practicing syntopical reading, but anyone who compiles their own card index (in either analog or digital form) will realize the ultimate value in creating their own syntopical writing or what Robert Hutchins calls participating in "The Great Conversation" across twenty-five centuries of documented human communication.

See also: https://hypothes.is/a/WF4THtUNEe2dZTdlQCbmXw

The way Hutchins presents the idea of "Adler's achievement" here seems to indicate that Hutchins didn't have a direct hand in compiling or working on it.

Discussing the documentary system of surveillance, Foucault points to a “partly official, partly secret hierarchy” in Paris that had been using a card index to manage data on suspects and criminals at least since 1833.

Source apparently from: “Apparition de la fiche et constitution des sciences humaines: encore une invention que les historiens célèbrent peu.” Michel Foucault, Surveiller et punir. Naissance de la prison (Paris: Gallimard, 1975), 287, referring to A. Bonneville, De la recidive (Paris, 1844), 92–93.

Note taking can also be done to create a database (a la Beatrice Webb's scientific note taking).

Memindex Phondex Office Phone Number Organizer Styrene NOS

Memindex, Inc. of Rochester, NY manufactured a plastic "Phonedex" in the mid-20th century. It was made of Dow Chemical Styrene and sat underneath a standard rotary dial telephone and contained index cards with one's lists of phone numbers on them.

Basic statistics regarding the TLL:

- ancient Latin vocabulary words: ca. 55,000 words
- 10,000,000 slips
- ca. 6,500 boxes
- ca. 1,500 slips per box
- library: 32,000 volumes
- contributors: 375 scholars from 20 different countries
- 12 Indo-European specialists
- 8 Romance specialists
- 100 proofreaders
- ca. 44,000 words published
- published content: 70% of the entire vocabulary
- print run: 1,350
- publisher: consortium of 35 academies from 27 countries on 5 continents

Longest remaining words:

- non / 37 boxes of ca. 55,500 slips
- qui, quae, quod / 65 boxes of ca. 96,000 slips
- sum, esse, fui / 54.5 boxes of ca. 81,750 slips
- ut / 35 boxes of ca. 52,500 slips

Note that some of these words have individual zettelkasten for themselves approaching the size of some of the largest personal collections we know about!

[18:51]

The Wörterbuch der ägyptischen Sprache was an international collaborative zettelkasten project begun in 1897 and finally published as five volumes in 1926.

https://en.wikipedia.org/wiki/W%C3%B6rterbuch_der_%C3%A4gyptischen_Sprache

The starting point and center of the work on the Ancient Egyptian Dictionary is the compilation of an exhaustive corpus of Egyptian texts.

In the early twentieth century one might have created a card index to study a large textual corpus, but in the twenty first one is more likely to rely on a relational database instead.

I love the fact that the image for "Research & History" here is a six drawer card index!

Lustig, Jason. “‘Mere Chips from His Workshop’: Gotthard Deutsch’s Monumental Card Index of Jewish History.” History of the Human Sciences, vol. 32, no. 3, July 2019, pp. 49–75. SAGE Journals, https://doi.org/10.1177/0952695119830900

Cross reference preliminary notes from https://journals.sagepub.com/doi/abs/10.1177/0952695119830900

Finished reading 2023-02-21 13:04:00

urn:x-pdf:6053dd751da0fa870cad9a71a28882ba

If you already have an instance of your model, you can start a transaction and acquire the lock in one go using the following code:

```ruby
book = Book.first
book.with_lock do
  # This block is called within a transaction,
  # book is already locked.
  book.increment!(:views)
end
```

As our needs become more sophisticated we steadily move away from that model. We may want to look at the information in a different way to the record store, perhaps collapsing multiple records into one, or forming virtual records by combining information for different places. On the update side we may find validation rules that only allow certain combinations of data to be stored, or may even infer data to be stored that's different from that we provide.

Since 2015 a digitalized card index of Greek function words in Coptic is available online (as part of the DDGLC)

A digitized version of Gertrud Bauer's zettelkasten has been available online since 2015.

https://refubium.fu-berlin.de/handle/fub188/24570

Some interesting programming and structured data relating to the Gertrud Bauer Zettelkasten Online.

Richter, Tonio Sebastian. “‘Whatever in the Coptic Language Is Not Greek, Can Wholly Be Considered Ancient Egyptian’: Recent Approaches towards an Integrated View of the Egyptian-Coptic Lexicon.” Journal of the Canadian Society for Coptic Studies. Journal de La Société Canadienne Pour Les Études Coptes 9 (2017): 9–32. https://doi.org/10.11588/propylaeumdok.00004673.

Skimmed for the specifics I was looking for with respect to Gertrud Bauer's zettelkasten.

Tami Gottschalk,

As a complete aside I can't help but wonder if Tami Gottschalk is related to Louis R. Gottschalk, the historian who wrote Understanding history; a primer of historical method?

The DDGLC data are not accessible online as of yet. A migration of the database and the data into a MySQL target system is underway and will allow us to offer an online user interface by the end of 2017. What we can already offer now is a by-product of our work, the Gertrud Bauer Zettelkasten Online.61

61 Available online at http://research.uni-leipzig.de/ddglc/bauerindex.html. The Work on this parergon to the lexicographical labors of the DDGLC project was funded by the Gertrud-und Alexander Böhlig-Stiftung. The digitization of the original card index was conducted by temporary collaborators and volunteers in the DDGLC project: Jenny Böttinger, Claudia Gamma, Tami Gottschalk, Josephine Hensel, Katrin John, Mariana Jung, Christina Katsikadeli, and Elen Saif. The IT concept and programming were carried out by Katrin John and Maximilian Moller.

In 2017 the DDGLC's own data were being migrated into a MySQL database, with the intention of offering an online user interface by the end of that year; the digitized Gertrud Bauer Zettelkasten Online was already available as a by-product of that work.

After browsing through a variety of the cards in Gertrud Bauer's Zettelkasten Online it becomes obvious that the collection was created specifically as a paper-based database for search, retrieval, and research. The examples and data within it are much more narrowly circumscribed for a specific use than those of other researchers like Niklas Luhmann whose collection spanned a much broader variety of topics and areas of knowledge.

This particular use case makes the database nature of zettelkasten more apparent than some others do, particularly modern (post-2013) zettelkasten of a more personal nature.

I'm reminded here of the use case(s) described by Beatrice Webb in My Apprenticeship for scientific note taking, by which she more broadly meant database creation and use.

In summer 2010, Professor Peter Nagel of Bonn forwarded seven cardboard boxes full of lexicographical slips to the DDGLC office, which had been handed over to him in the early '90s by the late Professor Alexander Böhlig.

In the early 1990s Professor Alexander Böhlig of the University of Tuebingen gave Gertrud Bauer's zettelkasten to Professor Peter Nagel of Bonn. In summer 2010 Nagel in turn forwarded the seven cardboard boxes of slips to the Database and Dictionary of Greek Loanwords in Coptic (DDGLC) office for their use.

The original slips have been scanned and slotted into a database replicating the hierarchical structure of the original compilation. It is our pleasure to provide a new lexicographical tool to our colleagues in Coptology, Classical Studies, and Linguistics, and other interested parties.

The Database and Dictionary of Greek Loanwords in Coptic (DDGLC) has scanned and placed the original slips from Gertrud Bauer's zettelkasten into a database for scholarly use. The database replicates the hierarchical structure of Bauer's original compilation.

Until the development of new digital tools, Goitein’s index cards provided the most extensive database for the study of the documentary Geniza.

Goitein's index cards provided a database not only for his own work, but also for those who studied the documentary Geniza after him.

Goitein accumulated more than 27,000 index cards in his research work over the span of 35 years. (Approximately 2.1 cards per day.)
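The per-day figure works out as follows, assuming 365.25-day years:

```latex
\frac{27{,}000 \text{ cards}}{35 \times 365.25 \text{ days}}
  = \frac{27{,}000}{12{,}783.75} \approx 2.11 \text{ cards per day}
```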

His collection can be broken into two broad categories:

1. Approximately 20,000 cards are notes on individual topics, generally in the form of a commonplace book kept on index cards rather than in books or notebooks.
2. Over 7,000 cards each contain a description of a single fragment from the Cairo Geniza.

A large number of cards in the commonplace book section were used in the production of his magnum opus, a six volume series about aspects of Jewish life in the Middle Ages, which were published as A Mediterranean Society: The Jewish communities of the Arab World as Portrayed in the Documents of the Cairo Geniza (1967–1993).

https://genizalab.princeton.edu/resources/goiteins-index-cards

<small><cite class='h-cite via'>ᔥ <span class='p-author h-card'>u/Didactico</span> in Goitein's Index Cards : antinet (<time class='dt-published'>12/15/2022 23:12:33</time>)</cite></small>

Postgres itself is a database “server.” There are several ways to connect to Postgres via “clients,” including GUIs, CLIs, and programming languages (often via ORMs).

I work primarily on Windows, but I support my kids, who primarily use Mac for their college education. I have used DT on Mac, iPadOS, and iOS for about a year. On Windows, I have been using Kinook’s UltraRecall (UR) for the past 15 years. It is both a knowledge outliner and a document manager, built on top of an SQLite database. You can use it just like DT and way, way more. Of course, there is no mobile companion for UR. The MS Windows ecosystem in this regard is at least 12 years behind.

Reference for UltraRecall (UR) being the most DEVONthink like Windows alternative. No mobile companion for UR. Look into this being paired with Obsidian

Natto https://natto.dev<br /> built by Paul Shen https://twitter.com/_paulshen

https://www.loom.com/share/a05f636661cb41628b9cb7061bd749ae

Synopsis: Maggie Delano looks at some of the affordances supplied by Tana (compared to Roam Research) in terms of providing better block-based user interface for note type creation, search, and filtering.

These sorts of tools and programmable note implementations remind me of Beatrice Webb's idea of scientific note taking or using her note cards like a database to sort and search for data to analyze it and create new results and insight.

It would seem that many of these note taking tools like Roam and Tana are using blocks and sub blocks as a means of defining atomic notes or database-like data in a way in which sub-blocks are linked to or "filed underneath" their parent blocks. In reality it would seem that they're still using a broadly defined index card type system as used in the late 1800s/early 1900s to implement a set up that otherwise would be a traditional database in the Microsoft Excel or MySQL sort of fashion, the major difference being that the user interface is cognitively easier to understand for most people.

These allow people to take a form of structured textual notes to which might be attached other smaller data or meta data chunks that can be easily searched, sorted, and filtered to allow for quicker or easier use.

Ostensibly from a mathematical (or set theoretic and even topological) point of view there should be a variety of one-to-one and onto relationships (some might even extend these to "links") between these sorts of notes and database representations such that one should be able to implement their note taking system in Excel or MySQL and do all of these sorts of things.
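A minimal sketch of that mapping, assuming a hypothetical layout in which each block is stored as a flat row with an `id`, a `parent_id`, and its text (as it might be in a MySQL table or a spreadsheet). Rebuilding the nested outline from the rows suggests that the "filed underneath" view and the flat table carry the same information.

```ruby
# Hypothetical flat "table" of blocks: each row stores its parent's id,
# the way a relational table would (nil marks a top-level block).
Block = Struct.new(:id, :parent_id, :text)

ROWS = [
  Block.new(1, nil, "Beatrice Webb"),
  Block.new(2, 1,   "My Apprenticeship"),
  Block.new(3, 2,   "Appendix C: scientific note taking"),
  Block.new(4, nil, "Gertrud Bauer"),
  Block.new(5, 4,   "Coptic card index")
].freeze

# Rebuild the nested outline by recursively collecting each row's children.
def outline(rows, parent_id = nil, depth = 0)
  rows.select { |r| r.parent_id == parent_id }
      .flat_map { |r| ["#{'  ' * depth}- #{r.text}"] + outline(rows, r.id, depth + 1) }
end

puts outline(ROWS)
```

Going the other way (outline to rows) only requires recording each block's parent, which is why block-based tools can sit on top of an ordinary table.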

Cascading Idea Sheets or Cascading Idea Relationships

One might analogize these sorts of note taking interfaces to Cascading Style Sheets (CSS). While there is the perennial question about whether or not CSS is a programming language, if we presume that it is (and it is), then we can apply the same sorts of class, id, and inheritance structures to our notes and their meta data. Thus one could have an incredibly atomic word, phrase, or even number(s) which inherits a set of semantic relationships to those ideas which it sits below. These links and relationships then more clearly define and contextualize them with respect to other similar ideas that may be situated outside of or adjacent to them. Once one has done this then there is a variety of Boolean operations which might be applied to various similar sets and classes of ideas.
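A minimal sketch of the CSS analogy, with hypothetical note names and tags. Each note lists its own tags and the note it sits below. Its effective tags are the union of its own and everything inherited up the chain, loosely like CSS inheritance. Ruby's `Set` operators then stand in for the Boolean operations: `&` for AND, `|` for OR, `-` for AND NOT.

```ruby
require 'set'

# Hypothetical notes: each has its own tags and an optional parent it "sits below".
NOTES = {
  "history"      => { parent: nil,            tags: %w[humanities] },
  "card-indexes" => { parent: "history",      tags: %w[information] },
  "webb"         => { parent: "card-indexes", tags: %w[sociology database] },
  "luhmann"      => { parent: "card-indexes", tags: %w[sociology systems-theory] },
  "sql"          => { parent: nil,            tags: %w[database computing] }
}.freeze

# Effective tags = own tags plus everything inherited from ancestors.
def effective_tags(name)
  note = NOTES.fetch(name)
  own = note[:tags].to_set
  note[:parent] ? own | effective_tags(note[:parent]) : own
end

# All notes whose effective tags include the given tag.
def notes_with(tag)
  NOTES.keys.select { |n| effective_tags(n).include?(tag) }.to_set
end

both    = notes_with("sociology") & notes_with("database")       # AND
either  = notes_with("database") | notes_with("systems-theory")  # OR
without = notes_with("humanities") - notes_with("database")      # AND NOT

p both.to_a, either.to_a.sort, without.to_a.sort
```

Note that "luhmann" matches a "humanities" query only through inheritance, which is the point of the analogy.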

If one wanted to go a level of abstraction further, one could apply the ideas of category theory to one's notes to generate new ideas and structures. This may allow applying abstractions from one field of academic research to others much further afield.

The user interface then becomes the key differentiator when bringing these ideas to the masses. Developers and designers should be endeavoring to allow the power of complex searches, sorts, and filtering while minimizing the sorts of advanced search queries that an average person would be expected to execute for themselves while also allowing some reasonable flexibility in the sorts of ways that users might (most easily for them) add data and meta data to their ideas.

Jupyter programmable notebooks are of this sort, but do they have the same sort of hierarchical "card" type (or atomic note type) implementation?

Gotthard Deutsch (1859–1921) taught at Hebrew Union College in Cincinnati from 1891 until his death, where he produced a card index of 70,000 ‘facts’ of Jewish history.

Gotthard Deutsch (1859-1921) had a card index of 70,000 items relating to Jewish history.

There is a difference between various modes of note taking and their ultimate outcomes. Some of it is done for learning about an area and absorbing it into one's own store of general knowledge. Some is done to collect and generate new sorts of knowledge. But some may be done for raw data collection and analysis. Beatrice Webb called this "scientific note taking".

Historian Jacques Goutor talks about research preparation for this sort of data collecting and analysis though he doesn't give it a particular name. He recommends reading papers in related areas to prepare for the sort of data acquisition one may likely require so that one can plan out some of one's needs in advance. This will allow the researcher, especially in areas like history or sociology, the ability to preplan some of the sorts of data and notes they'll need to take from their historical sources or subjects in order to carry out their planned goals. (p8)

C. Wright Mills mentions (On Intellectual Craftsmanship, 1952) similar research planning whereby he writes out potential longer research methods even when he is not able to spend the time, effort, energy, or other (financial) resources to carry out such plans. He felt that just the thought experiments and exercise of doing such unfulfilled research often bore fruit in his other sociological endeavors.

Google Forms and Sheets allow users to annotate using customizable tools. Google Forms offers a graphic organizer that can prompt student-determined categorical input and then feeds the information into a Sheets database. Sheets databases are taggable, shareable, and exportable to other software, such as Overleaf (London, UK) for writing and Python for coding. The result is a flexible, dynamic knowledge base with many learning applications for individual and group work.

Who is using these forms in practice? I'd love to see some examples.

This sort of set up could be used with some outlining functionality to streamline the content creation end of common note taking practices.

Is anyone using a spreadsheet program (Excel, Google Sheets) as the basis for their zettelkasten?

Link to examples of zettelkasten as database (Webb, Seignobos suggestions)

arranged according to their subject-matter;" that "epigraphic monuments belonging to the same territory mutually explain each other when placed side by side;" and, lastly, that "while it is all but impossible to range in order of subject-matter a hundred thousand inscriptions nearly all of which belong to several categories; on the other hand, each monument has but one place, and a very definite place, in the geographical order."

Similar to the examples provided by Beatrice Webb in My Apprenticeship, the authors here are talking about a sort of scientific note taking method that is ostensibly similar to that of the use of a modern day computer database or spreadsheet function, but which had to be effected in index card form to do the sorting and compiling and analysis.

Do the authors here use the specific phrase scientific note taking? It appears that they do not.

the method of slips is the only one mechanically possible for the purpose of forming, classifying, and utilising a collection of documents of any great extent. Statisticians, financiers, and men of letters who observe, have now discovered this as well as scholars.

Moreover

By 1898 a zettelkasten-type note-taking method wasn't only popular and useful for scholars, but was also useful to "statisticians, financiers, and men of letters".

Note carefully the word "mechanically" here used in a pre-digital context. One can't easily keep large amounts of data in one's head at once to make sense of it, so having a physical and mechanical means of doing so would have been important. In 21st century contexts one would more likely use a spreadsheet or database for these types of manipulations at increasingly larger scales.

It was not until we had completely re-sorted all our innumerable sheets of paper according to subjects, thus bringing together all the facts relating to each, whatever the trade concerned, or the place or the date—and had shuffled and reshuffled these sheets according to various tentative hypotheses—that a clear, comprehensive and verifiable theory of the working and results of Trade Unionism emerged in our minds; to be embodied, after further researches by way of verification, in our Industrial Democracy (1897).

Beatrice Webb was using her custom note taking system in the lead up to the research that resulted in the publication of Industrial Democracy (1897).

Is there evidence that she was practicing this note taking/database practice earlier than this?
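Webb's re-sorting of sheets by subject, "whatever the trade concerned, or the place or the date," is roughly what a database does with a group-by. A minimal sketch with placeholder records (the fields and values are hypothetical, not Webb's data) shows the same records regrouped under two different "hypotheses" without being rewritten.

```ruby
# Hypothetical "sheets": one fact per record, as on Webb's slips.
Fact = Struct.new(:subject, :trade, :place, :year)

SHEETS = [
  Fact.new("apprenticeship", "trade A", "town X", 1880),
  Fact.new("strike pay",     "trade B", "town Y", 1885),
  Fact.new("apprenticeship", "trade C", "town Y", 1890),
  Fact.new("strike pay",     "trade A", "town Z", 1895)
].freeze

# Re-sort by subject: all facts on one subject come together,
# regardless of trade, place, or date.
by_subject = SHEETS.group_by(&:subject)

# "Reshuffle" under another hypothesis (here by trade) without touching the records.
by_trade = SHEETS.group_by(&:trade)

by_subject.each do |subject, facts|
  entries = facts.map { |f| "#{f.trade} (#{f.place}, #{f.year})" }
  puts "#{subject}: #{entries.join('; ')}"
end
puts "trade A subjects: #{by_trade['trade A'].map(&:subject).join(', ')}"
```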

Validation

Mongoose Validation. This is essential.

Chawla, D. S. (2021). Hundreds of ‘predatory’ journals indexed on leading scholarly database. Nature. https://doi.org/10.1038/d41586-021-00239-0

```ruby
after_commit { puts "We're all done!" }
```

Notice the order: this is printed last, after the outer (real) transaction is committed, not when the inner "transaction" block finishes without error.

These callbacks are smart enough to run after the final (outer) transaction* is committed. * Usually, there is one real transaction and nested transactions are implemented through savepoints (see, for example, PostgreSQL).

important qualification: the outer transaction, the (only) real transaction
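The behavior described above can be modeled in plain Ruby. This is a toy sketch, not Active Record's implementation; the `ToyConnection` class and its log strings are hypothetical. Nested `transaction` calls act like savepoints. `after_commit` blocks registered at any depth are handed up to the enclosing level and run only after the outermost (real) transaction commits. A rollback discards them.

```ruby
# Toy model (hypothetical class, not Active Record's code).
class ToyConnection
  attr_reader :log

  def initialize
    @pending = []   # one callback list per open transaction level
    @log = []
  end

  def after_commit(&block)
    raise "no open transaction" if @pending.empty?
    @pending.last << block
  end

  def transaction
    @pending.push([])
    outermost = @pending.size == 1
    @log << (outermost ? "BEGIN" : "SAVEPOINT")
    begin
      yield
    rescue StandardError
      @pending.pop                     # discard callbacks from this level
      @log << (outermost ? "ROLLBACK" : "ROLLBACK TO SAVEPOINT")
      raise
    end
    callbacks = @pending.pop
    if outermost
      @log << "COMMIT"
      callbacks.each(&:call)           # run only after the real commit
    else
      @log << "RELEASE SAVEPOINT"
      @pending.last.concat(callbacks)  # hand callbacks up to the enclosing level
    end
  end
end

conn = ToyConnection.new
conn.transaction do
  conn.transaction do
    conn.after_commit { conn.log << "We're all done!" }
  end
  conn.log << "inner block finished"
end
puts conn.log
```

The callback's message lands after `COMMIT`, not when the inner block finishes, matching the order described above.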

These callbacks are focused on the transactions, instead of specific model actions.

At least I think this is talking about this as limitation/problem.

The limitation/problem being that it's not good/useful for performing after-transaction code only for specific actions.

But the next sentence "This is beneficial..." seems contradictory, so I'm a bit confused/unclear of what the intention is...

Looking at this project more, it doesn't appear to solve the "after-transaction code only for specific actions" problem like I initially thought it did (and like https://github.com/grosser/ar_after_transaction does), so I believe I was mistaken. Still not sure what is meant by "instead of specific model actions". Are they claiming that "before_commit_on_create" for example is a "specific model action"? (hardly!) That seems almost identical to the (not specific enough) callbacks provided natively by Rails. Oh yeah, I guess they do point out that Rails 3 adds this functionality, so this gem is only needed for Rails 2.

In this case, the worker process queries the newly created notification before the main process commits the transaction, so it raises NotFoundError: the transaction in the worker process can't read the uncommitted notification from the transaction in the main process.

Generates the following SQL in sqlite3:

```sql
SELECT "patients".* FROM "patients" INNER JOIN "users" ON "users"."id" = "patients"."user_id" WHERE ("users"."name" LIKE '%query%')
```

And the following SQL in Postgres (notice the ILIKE):

```sql
SELECT "patients".* FROM "patients" INNER JOIN "users" ON "users"."id" = "patients"."user_id" WHERE ("users"."name" ILIKE '%query%')
```

This allows you to join with simplicity, but still get the abstraction of the ARel matcher to your RDBMS.

Data integrity is a good thing. Constraining the values allowed by your application at the database-level, rather than at the application-level, is a more robust way of ensuring your data stays sane.

```ruby
# Optionally, you can write a description for the migration, which you can use for
# documentation and changelogs.
describe 'The _id suffix has been removed from the author property in the Articles API.'
```

Object hierarchies are very different from relational hierarchies. Relational hierarchies focus on data and its relationships, whereas objects manage not only data, but also their identity and the behavior centered around that data.

If you need to ensure migrations run in a certain order with regular db:migrate, set up Outrigger.ordered. It can be a hash or a proc that takes a tag; either way it needs to return a sortable value:

```ruby
Outrigger.ordered = { predeploy: -1, postdeploy: 1 }
```

This will run predeploys, untagged migrations (implicitly 0), and then postdeploy migrations.
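The ordering rule can be illustrated without the gem. This sketch mimics the described behavior and is not Outrigger's code. It assumes each migration is a hypothetical `[version, tag]` pair and that missing tags rank 0. Sorting by `[rank, version]` runs predeploys, then untagged, then postdeploy migrations, keeping version order inside each group.

```ruby
# Ranking from the Outrigger.ordered example; untagged migrations rank 0.
ORDER = { predeploy: -1, postdeploy: 1 }.freeze

# Hypothetical migrations as [version, tag] pairs (nil = untagged).
MIGRATIONS = [
  [20240101, :postdeploy],
  [20240102, nil],
  [20240103, :predeploy],
  [20240104, nil],
  [20240105, :predeploy]
].freeze

# Sort by tag rank first, then by version within the same rank.
sorted = MIGRATIONS.sort_by { |version, tag| [ORDER.fetch(tag, 0), version] }

sorted.each { |version, tag| puts "#{version} #{tag || 'untagged'}" }
```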

The code will work without exception, but it doesn't set the correct association, because the defined classes are under the namespace AddStatusToUser. This is what happens in reality:

```ruby
role = AddStatusToUser::Role.create!(name: 'admin')
AddStatusToUser::User.create!(nick: '@ka8725', role: role)
```
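Why the classes end up namespaced is ordinary Ruby constant scoping. This sketch uses plain Ruby with no Active Record; the empty classes are stand-ins for the real models. A class defined inside the migration body becomes `AddStatusToUser::Role`. A bare `Role` written inside that body resolves to the nested class rather than the top-level one.

```ruby
# Stand-in for the real top-level model.
class Role; end

class AddStatusToUser
  # Defined inside the migration class, so its full name is AddStatusToUser::Role.
  class Role; end

  # Lexical constant lookup checks the enclosing namespace first,
  # so a bare `Role` here means AddStatusToUser::Role, not ::Role.
  def self.role_class
    Role
  end

  # Writing ::Role forces lookup from the top level.
  def self.top_level_role_class
    ::Role
  end
end

puts AddStatusToUser.role_class.name            # prints "AddStatusToUser::Role"
puts AddStatusToUser.top_level_role_class.name  # prints "Role"
```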

This gem promotes writing tests for data migrations by providing a way to write the code that migrates data in separate methods.

Having the code that migrates data kept separate and covered by proper tests eliminates those pesky situations with outdated migrations or corrupted data.
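A minimal sketch of the idea, with hypothetical names. It does not use or reproduce the gem's API. The data transformation lives in its own method that takes plain records, so it can be unit-tested without running the migration against a database.

```ruby
# Hypothetical data migration: the transformation is a plain method,
# kept apart from whatever code loads and saves the records.
class SplitFullNameMigration
  # Pure function over a record hash: easy to cover with tests.
  def self.migrate_record(record)
    first, last = record.fetch(:full_name).to_s.split(" ", 2)
    record.merge(first_name: first, last_name: last)
  end

  # The part that would touch storage; here it just maps over an array.
  def self.up(records)
    records.map { |r| migrate_record(r) }
  end
end

# A test for the data logic alone, independent of any database.
migrated = SplitFullNameMigration.migrate_record({ full_name: "Beatrice Webb" })
raise "split failed" unless migrated[:first_name] == "Beatrice" && migrated[:last_name] == "Webb"
puts migrated.inspect
```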

There are three keys to backfilling safely: batching, throttling, and running it outside a transaction. Use the Rails console or a separate migration with disable_ddl_transaction!.
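The batching and throttling parts can be sketched in plain Ruby. `backfill` and the block it yields to are hypothetical stand-ins: in a Rails app, each batch would presumably be something like `in_batches` plus `update_all`, run from a migration that calls `disable_ddl_transaction!` so no single transaction spans the whole backfill.

```ruby
# Hypothetical batched, throttled backfill loop.
# Yields one batch of ids at a time; returns the number of batches processed.
def backfill(ids, batch_size: 1_000, pause: 0.01)
  batches = 0
  ids.each_slice(batch_size) do |batch|
    yield batch     # each batch is its own short unit of work
    batches += 1
    sleep(pause)    # throttle so other queries (and replicas) keep up
  end
  batches
end

updated = []
count = backfill((1..2_500).to_a, batch_size: 1_000, pause: 0) { |batch| updated.concat(batch) }
p count          # → 3
p updated.size   # → 2500
```

Small batches keep each lock short, and the pause trades total runtime for less pressure on the database.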

Active Record creates a transaction around each migration, and backfilling in the same transaction that alters a table keeps the table locked for the duration of the backfill. This migration, for example, does both inside one transaction:

    class AddSomeColumnToUsers < ActiveRecord::Migration[7.0]
      def change
        add_column :users, :some_column, :text
        User.update_all some_column: "default_value"
      end
    end

Okay, so what’s the blockchain? It’s a database. Unlike most databases, it’s not controlled by one entity and it’s not easily rewritten. Instead, it’s a ledger, a permanent, examinable, public database. One can use it to record transactions of various sorts. It would be a really good way to keep track of property records, for example. Instead, we have title insurance, unsearchable folders of deeds in City Hall and often dusty tax records.
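The "not easily rewritten" property comes largely from chaining each record to the hash of the previous one. Below is a minimal sketch of just that part, assuming SHA-256 from Ruby's standard library; it has no consensus, network, or signatures, so it is a tamper-evident ledger rather than a blockchain in the full sense. The `Ledger` class and the deed strings are hypothetical.

```ruby
require "digest"

# Minimal append-only ledger: each entry stores the previous entry's hash.
class Ledger
  Entry = Struct.new(:data, :prev_hash, :hash_value)
  GENESIS = "0" * 64

  attr_reader :entries

  def initialize
    @entries = []
  end

  def append(data)
    prev = @entries.empty? ? GENESIS : @entries.last.hash_value
    @entries << Entry.new(data, prev, digest(data, prev))
    self
  end

  # Recompute every hash; editing any earlier entry breaks the chain.
  def valid?
    prev = GENESIS
    @entries.all? do |e|
      ok = e.prev_hash == prev && e.hash_value == digest(e.data, e.prev_hash)
      prev = e.hash_value
      ok
    end
  end

  private

  def digest(data, prev)
    Digest::SHA256.hexdigest("#{prev}|#{data}")
  end
end

ledger = Ledger.new.append("deed: lot 12 -> Alice").append("deed: lot 12 -> Bob")
p ledger.valid?   # → true
ledger.entries[0].data = "deed: lot 12 -> Mallory"
p ledger.valid?   # → false
```

Anyone holding a copy can recompute the hashes, which is what makes the record examinable as well as hard to rewrite quietly.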

This wrongly assumes that

The delegated types newly introduced here look like Class Table Inheritance (CTI).

A very visible aspect of the object-relational mismatch is the fact that relational databases don't support inheritance. You want database structures that map clearly to the objects and allow links anywhere in the inheritance structure. Class Table Inheritance supports this by using one database table per class in the inheritance structure.
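A minimal sketch of the mapping, using plain hashes as stand-in "tables" rather than a real database. `Player`, `Footballer`, `save_record`, and `load_record` are hypothetical; the point is that each class in the hierarchy owns one table holding only the columns it declares, and all rows for one object share the same id.

```ruby
# Stand-in "database": table name => { id => row hash }.
TABLES = Hash.new { |h, k| h[k] = {} }

class Player
  attr_accessor :id, :name
  def self.table; :players; end
  def self.columns; [:name]; end   # only the columns Player declares
end

class Footballer < Player
  attr_accessor :club
  def self.table; :footballers; end
  def self.columns; [:club]; end   # only the columns Footballer adds
end

# Classes from the concrete class up to the root of the hierarchy.
def hierarchy(klass)
  klass.ancestors.select { |k| k.is_a?(Class) && k <= Player }
end

# One row per class in the hierarchy, all keyed by the same id.
def save_record(obj)
  hierarchy(obj.class).each do |klass|
    TABLES[klass.table][obj.id] = klass.columns.to_h { |c| [c, obj.public_send(c)] }
  end
end

# Reassemble the object by "joining" its rows across the tables.
def load_record(klass, id)
  obj = klass.new
  obj.id = id
  hierarchy(klass).each do |k|
    TABLES[k.table].fetch(id).each { |col, val| obj.public_send("#{col}=", val) }
  end
  obj
end

f = Footballer.new
f.id = 1
f.name = "Marta"
f.club = "Santos"
save_record(f)
g = load_record(Footballer, 1)
p [g.name, g.club]   # → ["Marta", "Santos"]
```

The cost of this design shows up in `load_record`: reading one object touches one table per level of the hierarchy, which is the join CTI trades for a clean mapping.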

Tyler Black, MD. (2022, January 4). /1 =-=-=-=-=-=-=- Thread: Mortality in 2020 and myths =-=-=-=-=-=-=- 2020, unsurprisingly, came with excess death. There was an 18% increase in overall mortality, year on year. But let’s dive in a little bit deeper. The @CDCgov has updated WONDER, its mortality database. Https://t.co/DbbvvbTAZQ [Tweet]. @tylerblack32. https://twitter.com/tylerblack32/status/1478501508132048901

Singh Chawla, D. (2022). Massive open index of scholarly papers launches. Nature. https://doi.org/10.1038/d41586-022-00138-y

Antivaccine activists use a government database on side effects to scare the public. (n.d.). Retrieved December 7, 2021, from https://www.science.org/content/article/antivaccine-activists-use-government-database-side-effects-scare-public

Dumpster diving in the VAERS database to find more COVID-19 vaccine-associated myocarditis in children | Science-Based Medicine. (n.d.). Retrieved September 14, 2021, from https://sciencebasedmedicine.org/dumpster-diving-in-vaers-doctors-fall-into-the-same-trap-as-antivaxxers/

See how age and illnesses change the risk of dying from covid-19 | The Economist. (n.d.). Retrieved June 29, 2021, from https://www.economist.com/graphic-detail/covid-pandemic-mortality-risk-estimator

Li, X., Ostropolets, A., Makadia, R., Shoaibi, A., Rao, G., Sena, A. G., Martinez-Hernandez, E., Delmestri, A., Verhamme, K., Rijnbeek, P. R., Duarte-Salles, T., Suchard, M. A., Ryan, P. B., Hripcsak, G., & Prieto-Alhambra, D. (2021). Characterising the background incidence rates of adverse events of special interest for covid-19 vaccines in eight countries: Multinational network cohort study. BMJ, 373, n1435. https://doi.org/10.1136/bmj.n1435

For example, Database Cleaner was for a long time a must-have add-on: we couldn’t use transactions to automatically roll back the database state, because each thread used its own connection; we had to use TRUNCATE ... or DELETE FROM ... for each table instead, which is much slower. We solved this problem by using a shared connection in all threads (via the TestProf extension). Rails 5.1 shipped with similar functionality out of the box.

It’s easy to create bugs because the environment is a somewhat degenerate settings database.

I suggest making it UNIQUE, because it seems like the column should be unique.

Wadman, M. (2021). Antivaccine activists use a government database on side effects to scare the public. Science. https://doi.org/10.1126/science.abj6981

Early government intervention is key to reducing the spread of COVID-19. (2020, May 6). Science & Research News | Frontiers. https://blog.frontiersin.org/2020/05/06/early-government-intervention-is-key-to-reducing-the-spread-of-covid-19/

The Economic Impacts of COVID-19: Evidence from a New Public Database Built Using Private Sector Data. (2020, May 7). Opportunity Insights. https://opportunityinsights.org/paper/tracker/

Robert Colvile. (2021, February 16). The vaccine passports debate is a perfect illustration of my new working theory: That the most important part of modern government, and its most important limitation, is database management. Please stick with me on this—It’s much more interesting than it sounds. (1/?) [Tweet]. @rcolvile. https://twitter.com/rcolvile/status/1361673425140543490

COVID-19 Living Evidence. (2020, October 23). Weekly update of COAP As of 23.10.2020, we have indexed 80588 publications: 8902 pre-prints 71686 peer-reviewed publications Pre-prints: BioRxiv, MedRxiv Peer-reviewed: PubMed, EMBASE https://t.co/RaDy1Wm4Hq https://t.co/FYRaYPe8oG [Tweet]. @evidencelive. https://twitter.com/evidencelive/status/1319578431848353793

Else, H. (2020). How a torrent of COVID science changed research publishing—In seven charts. Nature, 588(7839), 553–553. https://doi.org/10.1038/d41586-020-03564-y

However, sometimes actions can't be rolled back, and this is unfortunately unavoidable. For example, consider sending emails during the call to `process`. If we send before saving a record and that record fails to save, what do we do? We can't unsend that email.
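One common way to narrow (not eliminate) the problem is to queue irreversible side effects and run them only after the save has succeeded, in the spirit of Rails' `after_commit` callbacks. `UnitOfWork` and its methods below are hypothetical; an email can still fail after the commit, but it is no longer sent for a record that was never saved.

```ruby
# Hypothetical unit of work that defers side effects until the work succeeds.
class UnitOfWork
  def initialize
    @after_success = []
  end

  # Register an irreversible side effect (e.g. sending an email).
  def after_success(&block)
    @after_success << block
  end

  # Runs the work; queued effects fire only if the block finishes without raising.
  def call
    begin
      yield self
    rescue StandardError
      @after_success.clear # nothing was saved, so drop the queued effects
      return false
    end
    @after_success.each(&:call)
    true
  end
end

sent = []
ok = UnitOfWork.new.call do |uow|
  uow.after_success { sent << "welcome email" }
  raise "record failed to save"
end
p ok     # → false
p sent   # → []
```

Running the effects outside the `rescue` means a failing email does not masquerade as a failed save.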

I prefer not to duplicate the table name in any of the column names (so I prefer option 1 above).

So do I.

Gen3 Data Commons overview/explained.

GDC purpose/summary: https://youtu.be/LY5SkHJplxc?t=118

IANA Time Zone Database: the main time zone database. This is where Moment TimeZone sources its data.

Every place has a history of different time zones for geographical, economic, political, and religious reasons. These rules are recorded in the IANA Time Zone Database, which also contains the rules for Daylight Saving Time (DST). Check out the map on this page: https://en.wikipedia.org/wiki/Daylight_saving_time

Databases: if database data is stored on a ZFS filesystem, it’s better to create a separate dataset with several tweaks:

`zfs create -o recordsize=8K -o primarycache=metadata -o logbias=throughput -o mountpoint=/path/to/db_data rpool/db_data`

* recordsize: match the typical RDBMS page size (8 KiB)
* primarycache: disable ZFS data caching, as RDBMSs have their own
* logbias: essentially disables log-based writes, relying on the RDBMS’s own integrity measures (see detailed Oracle post)

Interaction with stable storage in the modern world is generally mediated by systems that fall roughly into one of two categories: a filesystem or a database. Databases assume as much as they can about the structure of the data they store. The type of any given piece of data is known (e.g., an integer, an identifier, text, etc.), and the relationships between data are well defined. The database is the all-knowing and exclusive arbiter of access to data.

Unfortunately, if the user of the data wants more direct control over the data, a database is ill-suited. At the same time, it is unwieldy to interact directly with stable storage, so something light-weight in between a database and raw storage is needed. Filesystems have traditionally played this role. They present a simple container abstraction for data (a file) that is opaque to the system, and they allow a simple organizational structure for those containers (a hierarchical directory structure).

Databases and filesystems are both systems which mediate the interaction between user and stable storage.

Often, the implicit aim of a database is to capture as much as it can about the structure of the data it stores. The database is the all-knowing and exclusive arbiter of access to data.

If a user wants direct access to the data, a database isn't the right choice, but interacting directly with stable storage is too involved.

A filesystem is a lightweight container abstraction in between a database and raw storage. Its containers (files) are opaque to the system (i.e., their contents are interpreted only by the user), and it allows for a simple, hierarchical organizational structure of directories.

I've spent the last 3.5 years building a platform for "information applications". The key observation which prompted this was that hierarchical file systems didn't work well for organising information within an organisation.

However, hierarchy itself is still incredibly valuable. People think in terms of hierarchies - it's just that they think in terms of multiple hierarchies and an item will almost always belong in more than one place in those hierarchies.

If you allow users to describe items in the way which makes sense to them, and then search and browse by any of the terms they've used, then you've eliminated almost all the frustrations of a file system. In my experience of working with people building complex information applications, you need:

* deep hierarchy for classifying things
* shallow hierarchy for noting relationships (eg "parent company")
* multi-values for every single field
* controlled values (in our case by linking to other items wherever possible)

Unfortunately, none of this stuff is done well by existing database systems. Which was annoying, because I had to write an object store.
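The "multiple hierarchies plus multi-valued fields" idea can be sketched in a few lines of Ruby. This is an illustration under my own assumptions, not the commenter's object store: `Item`, `browse`, and the example paths are hypothetical. Each item carries any number of hierarchical paths, and browsing by any prefix of any path finds it.

```ruby
# Hypothetical item: a name plus any number of hierarchical paths.
Item = Struct.new(:name, :paths)

# Returns names of items with at least one path starting with `prefix`.
def browse(items, prefix)
  items.select do |item|
    item.paths.any? { |path| path.first(prefix.size) == prefix }
  end.map(&:name)
end

items = [
  Item.new("Acme Ltd", [%w[Companies Manufacturing], %w[Regions Europe UK]]),
  Item.new("Globex",   [%w[Companies Software],      %w[Regions Europe DE]]),
  Item.new("Initech",  [%w[Companies Software],      %w[Regions Americas US]])
]

p browse(items, %w[Regions Europe])      # → ["Acme Ltd", "Globex"]
p browse(items, %w[Companies Software])  # → ["Globex", "Initech"]
```

Unlike a directory tree, no item has to pick a single "true" location; every classification is just another path.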

Impressed by this comment. It foreshadows what Roam would become:

What you need to build a complex information system is:

I too have been confused by behavior like this. Perhaps a clearly defined way to isolate atomic units with synchronous reactivity would help those of us still working through the idiosyncrasies of reactivity.

Wilkinson, Jack, Kellyn F. Arnold, Eleanor J. Murray, Maarten van Smeden, Kareem Carr, Rachel Sippy, Marc de Kamps, et al. ‘Time to Reality Check the Promises of Machine Learning-Powered Precision Medicine’. The Lancet Digital Health 0, no. 0 (16 September 2020). https://doi.org/10.1016/S2589-7500(20)30200-4.

Realm is a new database module that improves the way databases are used and also supports relationships between objects. If you are part of the SQL development world, you are probably familiar with Realm.

COVID-19 reports. (n.d.). Imperial College London. Retrieved September 17, 2020, from http://www.imperial.ac.uk/medicine/departments/school-public-health/infectious-disease-epidemiology/mrc-global-infectious-disease-analysis/covid-19/covid-19-reports/

So Memex was first and foremost an extension of human memory and the associative movements that the mind makes through information: a mechanical analogue to an already mechanical model of memory. Bush transferred this idea into information management; Memex was distinct from traditional forms of indexing not so much in its mechanism or content, but in the way it organised information based on association. The design did not spring from the ether, however; the first Memex design incorporates the technical architecture of the Rapid Selector and the methodology of the Analyzer — the machines Bush was assembling at the time.

How much further would Bush have gone if he had known about graph theory? He is describing a graph database with nodes and edges; the graph model itself is the key to the memex.
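Reading the graph framing literally, a memex-style associative trail is a path through linked documents. The sketch below is a hypothetical illustration (the `link` and `trail` helpers and the bow-related document names are my own choices), using breadth-first search to recover the shortest chain of associations between two items.

```ruby
# Adjacency list: document => linked documents.
LINKS = Hash.new { |h, k| h[k] = [] }

# Associations are followed in both directions.
def link(a, b)
  LINKS[a] << b
  LINKS[b] << a
end

# Breadth-first search: returns the shortest trail from `from` to `to`, or nil.
def trail(from, to)
  previous = { from => nil }
  queue = [from]
  until queue.empty?
    node = queue.shift
    break if node == to
    LINKS[node].each do |nxt|
      next if previous.key?(nxt)
      previous[nxt] = node
      queue << nxt
    end
  end
  return nil unless previous.key?(to)

  path = [to]
  path.unshift(previous[path.first]) while previous[path.first]
  path
end

link("Turkish bow", "Crusades")
link("Crusades", "Elasticity of materials")
link("Turkish bow", "English longbow")
p trail("English longbow", "Elasticity of materials")
# → ["English longbow", "Turkish bow", "Crusades", "Elasticity of materials"]
```

Bush's trails were sequences a person built by hand; the search here only shows that, once links are stored as edges, such paths become something a machine can traverse.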

Outbreak Science Rapid PREreview • Dashboard. (n.d.). Retrieved September 11, 2020, from https://outbreaksci.prereview.org/dashboard?q=COVID-19&q=Coronavirus&q=SARS-CoV-2

In this write-up, we will discuss one such important prerequisite: the React Native database. We can call it the backbone of React Native applications.

Zheng, Q., Jones, F. K., Leavitt, S. V., Ung, L., Labrique, A. B., Peters, D. H., Lee, E. C., & Azman, A. S. (2020). HIT-COVID, a global database tracking public health interventions to COVID-19. Scientific Data, 7(1), 286. https://doi.org/10.1038/s41597-020-00610-2

Home. (n.d.). Retrieved June 14, 2020, from http://riskhomeostasis.org/

Allows batch updates by silencing notifications while the fn is running. Example:

    form.batch(() => {
      form.change('firstName', 'Erik') // listeners not notified
      form.change('lastName', 'Rasmussen') // listeners not notified
    })
    // NOW all listeners notified
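The batching mechanism can be sketched as follows. This `Form` class is hypothetical and is not final-form's implementation; it assumes a simple depth counter so that nested batches notify listeners only once, when the outermost batch finishes, and only if something actually changed.

```ruby
# Hypothetical form store with batched listener notifications.
class Form
  attr_reader :values

  def initialize
    @values = {}
    @listeners = []
    @batch_depth = 0
    @dirty = false
  end

  def subscribe(&listener)
    @listeners << listener
  end

  def change(field, value)
    @values[field] = value
    notify
  end

  # Silences notifications while the block runs; notifies once at the end.
  def batch
    @batch_depth += 1
    yield
  ensure
    @batch_depth -= 1
    notify if @batch_depth.zero? && @dirty
  end

  private

  def notify
    if @batch_depth.positive?
      @dirty = true # remember that something changed during the batch
    else
      @dirty = false
      @listeners.each { |l| l.call(@values.dup) }
    end
  end
end

form = Form.new
calls = 0
form.subscribe { calls += 1 }
form.batch do
  form.change(:first_name, "Erik")      # listeners not notified
  form.change(:last_name, "Rasmussen")  # listeners not notified
end
p calls # → 1
```

Notifying from `ensure` means listeners still hear about changes made before an exception escapes the batch.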

Preprint Servers Have Changed Research Culture in Many Fields. Will a New One for Education Catch On? - EdSurge News. (2020, August 20). EdSurge. https://www.edsurge.com/news/2020-08-20-preprint-servers-have-changed-research-culture-in-many-fields-will-a-new-one-for-education-catch-on

Living Evidence on COVID-19. (n.d.). Retrieved August 24, 2020, from https://zika.ispm.unibe.ch/assets/data/pub/search_beta/

Arqoub, O. A., Elega, A. A., Özad, B. E., Dwikat, H., & Oloyede, F. A. (2020). Mapping the Scholarship of Fake News Research: A Systematic Review. Journalism Practice, 0(0), 1–31. https://doi.org/10.1080/17512786.2020.1805791

Roll over each school to find out more information on their respective plans. (n.d.). Tableau Software. Retrieved August 2, 2020, from https://public.tableau.com/views/NESCACFallPlansMap/Dashboard1

Panda, A., Gonawela, A., Acharyya, S., Mishra, D., Mohapatra, M., Chandrasekaran, R., & Pal, J. (2020). NivaDuck—A Scalable Pipeline to Build a Database of Political Twitter Handles for India and the United States. International Conference on Social Media and Society, 200–209. https://doi.org/10.1145/3400806.3400830

Follow the money: See where $380B in Paycheck Protection Program money went. (n.d.). Retrieved July 27, 2020, from https://www.cnn.com/projects/ppp-business-loans/

Stathoulopoulos, K. (2020, March 17). Orion: An open-source tool for the science of science. Medium. https://medium.com/@kstathou/orion-an-open-source-tool-for-the-science-of-science-4259935f91d4

Submit a COVID 19 Dashboard. (n.d.). Google Docs. Retrieved July 19, 2020, from https://docs.google.com/forms/d/e/1FAIpQLSdcq-I98VZeA7NjUxBvqMGmdg5ahRucDVwxo057E-x9BmeM-Q/viewform?embedded=true&usp=embed_facebook