As our lives become ever more connected, the personal data we emit during each of our activities is becoming a considerable industrial stake.

Let us set out to discover a world built around big data.

And it constitutes an important but overlooked signpost in the 20th-century history of information, as ‘facts’ fell out of fashion but big data became big business.

Of course the hardest problem in big data has come to be realized as the issue of cleaning up messy and misleading data!

What we want is to be able to leave Facebook and still talk to our friends, instead of having many Facebooks.

What about Matrix?

AI text generator, a boon for bloggers? A test report

While I wanted to investigate AI text generators further, I ended up writing a test report. I was quite stunned because the AI text generator turns out to be able to create a fully cohesive and to-the-point article in minutes. Here is the test report.

Large enterprises and organizations use a vast variety of different information systems, databases, portals, wikis and Knowledge Bases [KBs] combining hundreds and thousands of data and information sources.

Problem statement: Big Data

Note that the cost of strong security is incredibly high. If you want to provide data on which you can perform computations in a fully homomorphic way, the size will be extremely large compared to the original data. Currently, this is not something that can be used for big-data-type computations.

Do not do big data with personal data, and use homomorphic encryption on personal data.

billing data created by electric utilities

That's really true. For example, we can use the big data of electricity to depict the resumption rate of factories after the pandemic.

Teague, S., Shatte, A. B. R., Fuller-Tyszkiewicz, M., & Hutchinson, D. M. (2021). Social media monitoring of mental health during disasters: A scoping review of methods and applications. PsyArXiv. https://doi.org/10.31234/osf.io/ykz2n

The privacy policy — unlocking the door to your profile information, geodata, camera, and in some cases emails — is so disturbing that it has set off alarms even in the tech world.

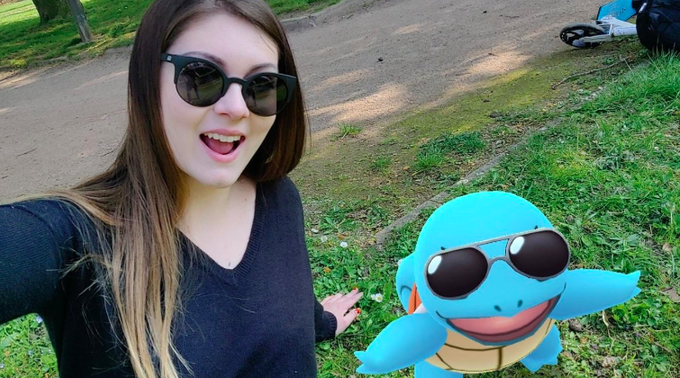

This Intercept article covers some of the specific privacy policy concerns Barron hints at here. The discussion of one of the core patents underlying the game, which is described as a “System and Method for Transporting Virtual Objects in a Parallel Reality Game”, is particularly interesting. Essentially, this system generates revenue for the company (in this case Niantic and Google) through the gamified collection of data on the real world - that selfie you took with Squirtle is starting to feel a little bit less innocent in retrospect...

Monod, Mélodie, Alexandra Blenkinsop, Xiaoyue Xi, Daniel Hebert, Sivan Bershan, Simon Tietze, Marc Baguelin, et al. ‘Age Groups That Sustain Resurging COVID-19 Epidemics in the United States’. Science 371, no. 6536 (26 March 2021). https://doi.org/10.1126/science.abe8372.

Ioannidis, John P. A. (2020) ‘The Infection Fatality Rate of COVID-19 Inferred from Seroprevalence Data’. MedRxiv. https://doi.org/10.1101/2020.05.13.20101253.

Goolsbee, Austan, and Chad Syverson. ‘Fear, Lockdown, and Diversion: Comparing Drivers of Pandemic Economic Decline 2020’. Journal of Public Economics 193 (1 January 2021): 104311. https://doi.org/10.1016/j.jpubeco.2020.104311.

Li, Y. (2020, October 18). Public health measures and R. Media Hopper Create. https://media.ed.ac.uk/media/1_1uhkv3uc

McCabe, Stefan, Leo Torres, Timothy LaRock, Syed Arefinul Haque, Chia-Hung Yang, Harrison Hartle, and Brennan Klein. ‘Netrd: A Library for Network Reconstruction and Graph Distances’. ArXiv:2010.16019 [Physics], 29 October 2020. http://arxiv.org/abs/2010.16019.

Heroy, Samuel, Isabella Loaiza, Alexander Pentland, and Neave O’Clery. ‘Controlling COVID-19: Labor Structure Is More Important than Lockdown Policy’. ArXiv:2010.14630 [Physics], 5 November 2020. http://arxiv.org/abs/2010.14630.

Li, You, Harry Campbell, Durga Kulkarni, Alice Harpur, Madhurima Nundy, Xin Wang, and Harish Nair. ‘The Temporal Association of Introducing and Lifting Non-Pharmaceutical Interventions with the Time-Varying Reproduction Number (R) of SARS-CoV-2: A Modelling Study across 131 Countries’. The Lancet Infectious Diseases 21, no. 2 (1 February 2021): 193–202. https://doi.org/10.1016/S1473-3099(20)30785-4.

Sabino, Ester C., Lewis F. Buss, Maria P. S. Carvalho, Carlos A. Prete, Myuki A. E. Crispim, Nelson A. Fraiji, Rafael H. M. Pereira, et al. ‘Resurgence of COVID-19 in Manaus, Brazil, despite High Seroprevalence’. The Lancet 0, no. 0 (27 January 2021). https://doi.org/10.1016/S0140-6736(21)00183-5.

(2021). How epidemiology has shaped the COVID pandemic. Nature, 589, 491–492. https://doi.org/10.1038/d41586-021-00183-z

MacKenna, Brian, Helen J. Curtis, Caroline E. Morton, Peter Inglesby, Alex J. Walker, Jessica Morley, Amir Mehrkar, et al. ‘Trends, Regional Variation, and Clinical Characteristics of COVID-19 Vaccine Recipients: A Retrospective Cohort Study in 23.4 Million Patients Using OpenSAFELY’. MedRxiv, 26 January 2021, 2021.01.25.21250356. https://doi.org/10.1101/2021.01.25.21250356.

Brous, P., & Janssen, M. (2020). Trusted Decision-Making: Data Governance for Creating Trust in Data Science Decision Outcomes. Administrative Sciences, 10(4), 81. https://doi.org/10.3390/admsci10040081

Elmas, T., Overdorf, R., Akgül, Ö. F., & Aberer, K. (2020). Misleading Repurposing on Twitter. ArXiv:2010.10600 [Cs]. http://arxiv.org/abs/2010.10600

AI and control of Covid-19 coronavirus. (n.d.). Artificial Intelligence. Retrieved October 15, 2020, from https://www.coe.int/en/web/artificial-intelligence/ai-and-control-of-covid-19-coronavirus

Howard, M. C. (2021). Gender, face mask perceptions, and face mask wearing: Are men being dangerous during the COVID-19 pandemic? Personality and Individual Differences, 170, 110417. https://doi.org/10.1016/j.paid.2020.110417

Non-Covid infectious disease cases down in England, data suggests. (2020, October 9). The Guardian. http://www.theguardian.com/science/2020/oct/09/non-covid-infectious-disease-cases-down-in-england-data-suggests

Dr Duncan Robertson on Twitter. (n.d.). Twitter. Retrieved October 12, 2020, from https://twitter.com/Dr_D_Robertson/status/1314544108547997703

COVID-19 and the Labor Market. (n.d.). IZA – Institute of Labor Economics. Retrieved October 11, 2020, from https://covid-19.iza.org/publications/dp13620/

COVID-19 and the Labor Market. (n.d.). IZA – Institute of Labor Economics. Retrieved October 11, 2020, from https://covid-19.iza.org/publications/dp13640/

New tool for the early detection of public health threats from Twitter data: Epitweetr. (2020, October 1). European Centre for Disease Prevention and Control. https://www.ecdc.europa.eu/en/news-events/new-tool-early-detection-public-health-threats-twitter-data-epitweetr

Davis, N., & Boseley, S. (2020, October 9). Coronavirus: At least three-quarters of people in UK fail to self-isolate. The Guardian. https://www.theguardian.com/world/2020/oct/09/covid-in-england-latest-figures-suggest

2019nCOV. (n.d.). MOBS Lab. Retrieved October 2, 2020, from https://www.mobs-lab.org/2019ncov.html

Ruktanonchai, N. W., Floyd, J. R., Lai, S., Ruktanonchai, C. W., Sadilek, A., Rente-Lourenco, P., Ben, X., Carioli, A., Gwinn, J., Steele, J. E., Prosper, O., Schneider, A., Oplinger, A., Eastham, P., & Tatem, A. J. (2020). Assessing the impact of coordinated COVID-19 exit strategies across Europe. Science, 369(6510), 1465–1470. https://doi.org/10.1126/science.abc5096

Obradovich, N., Özak, Ö., Martín, I., Ortuño-Ortín, I., Awad, E., Cebrián, M., Cuevas, R., Desmet, K., Rahwan, I., & Cuevas, Á. (2020). Expanding the measurement of culture with a sample of two billion humans [Preprint]. SocArXiv. https://doi.org/10.31235/osf.io/qkf42

Friston, K., Costello, A., & Pillay, D. (2020). Dark matter, second waves and epidemiological modelling. MedRxiv, 2020.09.01.20185876. https://doi.org/10.1101/2020.09.01.20185876

Holman, E. A., Thompson, R. R., Garfin, D. R., & Silver, R. C. (2020). The unfolding COVID-19 pandemic: A probability-based, nationally representative study of mental health in the U.S. Science Advances, eabd5390. https://doi.org/10.1126/sciadv.abd5390

Graphs and maps from EUROMOMO. (n.d.). EUROMOMO. Retrieved September 18, 2020, from https://euromomo.eu/dev-404-page/

COVID-19 reports. (n.d.). Imperial College London. Retrieved September 17, 2020, from http://www.imperial.ac.uk/medicine/departments/school-public-health/infectious-disease-epidemiology/mrc-global-infectious-disease-analysis/covid-19/covid-19-reports/

Stuart mcdonald on Twitter. (n.d.). Twitter. Retrieved September 10, 2020, from https://twitter.com/ActuaryByDay/status/1303719422595682306

Spiegelhalter, D. (2020). Use of “normal” risk to improve understanding of dangers of covid-19. BMJ, 370. https://doi.org/10.1136/bmj.m3259

Hu, Y., & Wang, R.-Q. (2020). Understanding the removal of precise geotagging in tweets. Nature Human Behaviour, 1–3. https://doi.org/10.1038/s41562-020-00949-x

COVID-19. (n.d.). Retrieved September 7, 2020, from https://covid19.healthdata.org/united-states-of-america?view=total-deaths&tab=trend

COVID Projections Tracker. (n.d.). Retrieved September 7, 2020, from https://www.covid-projections.com/

A majority of young adults in the U.S. live with their parents for the first time since the Great Depression. (n.d.). Pew Research Center. Retrieved September 7, 2020, from https://www.pewresearch.org/fact-tank/2020/09/04/a-majority-of-young-adults-in-the-u-s-live-with-their-parents-for-the-first-time-since-the-great-depression/

ReconfigBehSci on Twitter: “RT @ScottGottliebMD: See how quickly you can find Sweden on this map.... https://t.co/bhXACObtnQ” / Twitter. (n.d.). Twitter. Retrieved June 29, 2020, from https://twitter.com/scibeh/status/1276799757575315457

Spinney, L. (2020, May 31). Covid-19 expert Karl Friston: “Germany may have more immunological ‘dark matter.’” The Observer. https://www.theguardian.com/world/2020/may/31/covid-19-expert-karl-friston-germany-may-have-more-immunological-dark-matter

Sixth Report. (2020, June 19). COVID-19 Mobility Monitoring Project. https://covid19mm.github.io//in-progress/2020/06/19/sixth-report.html

Ali, A. (2020, August 28). Visualizing the Social Media Universe in 2020. Visual Capitalist. https://www.visualcapitalist.com/visualizing-the-social-media-universe-in-2020/

Bhatia, S., Walasek, L., Slovic, P., & Kunreuther, H. (2020). The More Who Die, the Less We Care: Evidence from Natural Language Analysis of Online News Articles and Social Media Posts. Risk Analysis, risa.13582. https://doi.org/10.1111/risa.13582

Chaudhry, R., Dranitsaris, G., Mubashir, T., Bartoszko, J., & Riazi, S. (2020). A country level analysis measuring the impact of government actions, country preparedness and socioeconomic factors on COVID-19 mortality and related health outcomes. EClinicalMedicine, 0(0). https://doi.org/10.1016/j.eclinm.2020.100464

Embracing the slowdown. (n.d.). Retrieved August 30, 2020, from https://marketing.twitter.com/emea/en_gb/insights/embracing-the-slowdown

Lozano, R., Fullman, N., Mumford, J. E., Knight, M., Barthelemy, C. M., Abbafati, C., Abbastabar, H., Abd-Allah, F., Abdollahi, M., Abedi, A., Abolhassani, H., Abosetugn, A. E., Abreu, L. G., Abrigo, M. R. M., Haimed, A. K. A., Abushouk, A. I., Adabi, M., Adebayo, O. M., Adekanmbi, V., … Murray, C. J. L. (2020). Measuring universal health coverage based on an index of effective coverage of health services in 204 countries and territories, 1990–2019: A systematic analysis for the Global Burden of Disease Study 2019. The Lancet, 0(0). https://doi.org/10.1016/S0140-6736(20)30750-9

Home—COVID 19 forecast hub. (n.d.). Retrieved August 28, 2020, from https://covid19forecasthub.org/

Santosh, R., Guntuku, S. C., Schwartz, H., Eichstaedt, J., & Ungar, L. (2020). Detecting Symptoms using Context-based Twitter Embeddings during COVID-19. https://openreview.net/forum?id=DFJhXXPZrM7

Holtz, D., Zhao, M., Benzell, S. G., Cao, C. Y., Rahimian, M. A., Yang, J., Allen, J., Collis, A., Moehring, A., Sowrirajan, T., Ghosh, D., Zhang, Y., Dhillon, P. S., Nicolaides, C., Eckles, D., & Aral, S. (2020). Interdependence and the cost of uncoordinated responses to COVID-19. Proceedings of the National Academy of Sciences, 117(33), 19837–19843. https://doi.org/10.1073/pnas.2009522117

Green, J., Edgerton, J., Naftel, D., Shoub, K., & Cranmer, S. J. (2020). Elusive consensus: Polarization in elite communication on the COVID-19 pandemic. Science Advances, eabc2717. https://doi.org/10.1126/sciadv.abc2717

Children and COVID-19: State-Level Data Report. (n.d.). Retrieved August 12, 2020, from http://services.aap.org/en/pages/2019-novel-coronavirus-covid-19-infections/children-and-covid-19-state-level-data-report/

Mak, H. W., & Fancourt, D. (2020). Predictors of engaging in voluntary work during the Covid-19 pandemic: Analyses of data from 31,890 adults in the UK. https://doi.org/10.31235/osf.io/er8xd

Rice, W. L., & Pan, B. (2020). Understanding drivers of change in park visitation during the COVID-19 pandemic: A spatial application of Big data [Preprint]. SocArXiv. https://doi.org/10.31235/osf.io/97qa4

COVID Recovery Dashboard. Retrieved from https://goodjudgment.io/covid-recovery/#1363 on 12/08/2020

Peterson, David, and Aaron Panofsky. ‘Metascience as a Scientific Social Movement’. Preprint. SocArXiv, 4 August 2020. https://doi.org/10.31235/osf.io/4dsqa.

Lockdown Accounting. COVID-19 and the Labor Market. (n.d.). IZA – Institute of Labor Economics. Retrieved August 1, 2020, from https://covid-19.iza.org/publications/dp13397/

Public Attention and Policy Responses to COVID-19 Pandemic. COVID-19 and the Labor Market. (n.d.). IZA – Institute of Labor Economics. Retrieved July 31, 2020, from https://covid-19.iza.org/publications/dp13427/

Urban Density and COVID-19. COVID-19 and the Labor Market. (n.d.). IZA – Institute of Labor Economics. Retrieved July 30, 2020, from https://covid-19.iza.org/publications/dp13440/

Lockdown Strategies, Mobility Patterns and COVID-19. COVID-19 and the Labor Market. (n.d.). IZA – Institute of Labor Economics. Retrieved July 29, 2020, from https://covid-19.iza.org/publications/dp13293/

Does the COVID-19 Pandemic Improve Global Air Quality? New Cross-National Evidence on Its Unintended Consequences. COVID-19 and the Labor Market. (n.d.). IZA – Institute of Labor Economics. Retrieved July 29, 2020, from https://covid-19.iza.org/publications/dp13480/

Intergenerational Residence Patterns and COVID-19 Fatalities in the EU and the US. COVID-19 and the Labor Market. (n.d.). IZA – Institute of Labor Economics. Retrieved July 29, 2020, from https://covid-19.iza.org/publications/dp13452/

Exploring the Relationship between Care Homes and Excess Deaths in the COVID-19 Pandemic: Evidence from Italy. COVID-19 and the Labor Market. (n.d.). IZA – Institute of Labor Economics. Retrieved July 27, 2020, from https://covid-19.iza.org/publications/dp13492/

Webster, G. D., Howell, J. L., Losee, J. E., Mahar, E., & Wongsomboon, V. (2020). Culture, COVID-19, and Collectivism: A Paradox of American Exceptionalism? [Preprint]. PsyArXiv. https://doi.org/10.31234/osf.io/hqcs6

Roll over each school to find out more information on their respective plans. (n.d.). Tableau Software. Retrieved August 2, 2020, from https://public.tableau.com/views/NESCACFallPlansMap/Dashboard1

Monte, F. (2020). Mobility Zones (Working Paper No. 27236; Working Paper Series). National Bureau of Economic Research. https://doi.org/10.3386/w27236

Working with Census microdata. (n.d.). Retrieved July 31, 2020, from https://walker-data.com/tidycensus/articles/pums-data.html

Follow the money: See where $380B in Paycheck Protection Program money went. (n.d.). Retrieved July 27, 2020, from https://www.cnn.com/projects/ppp-business-loans/

La, V.-P., Pham, T.-H., Ho, T. M., Hoàng, N. M., Linh, N. P. K., Vuong, T.-T., Nguyen, H.-K. T., Tran, T., Van Quy, K., Ho, T. M., & Vuong, Q.-H. (2020). Policy response, social media and science journalism for the sustainability of the public health system amid the COVID-19 outbreak: The Vietnam lessons [Preprint]. SocArXiv. https://doi.org/10.31235/osf.io/cfw8x

Motta, M., Stecula, D., & Farhart, C. E. (2020). How Right-Leaning Media Coverage of COVID-19 Facilitated the Spread of Misinformation in the Early Stages of the Pandemic [Preprint]. SocArXiv. https://doi.org/10.31235/osf.io/a8r3p

Kubinec, R., & Carvalho, L. (2020). A Retrospective Bayesian Model for Measuring Covariate Effects on Observed COVID-19 Test and Case Counts [Preprint]. SocArXiv. https://doi.org/10.31235/osf.io/jp4wk

Goldman, D. S. (2020). Initial Observations of Psychological and Behavioral Effects of COVID-19 in the United States, Using Google Trends Data. https://doi.org/10.31235/osf.io/jecqp

Bernardi, F., Cozzani, M., & Zanasi, F. (2020). Social inequality and the risk of being in a nursing home during the COVID-19 pandemic [Preprint]. SocArXiv. https://doi.org/10.31235/osf.io/ksefy

Metternich, N. W. (2020). Drawback before the wave?: Protest decline during the Covid-19 pandemic. https://doi.org/10.31235/osf.io/3ej72

Dudel, C., Riffe, T., Acosta, E., van Raalte, A. A., Strozza, C., & Myrskylä, M. (2020). Monitoring trends and differences in COVID-19 case fatality rates using decomposition methods: Contributions of age structure and age-specific fatality [Preprint]. SocArXiv. https://doi.org/10.31235/osf.io/j4a3d

Urban density not linked to higher coronavirus infection rates. (2020, July 2). The Hub. https://hub.jhu.edu/2020/07/02/urban-density-not-linked-to-higher-covid-19-infection-rates/

Fonseca, S. C., Rivas, I., Romaguera, D., Quijal-Zamorano, M., Czarlewski, W., Vidal, A., Fonseca, J. A., Ballester, J., Anto, J. M., Basagana, X., Cunha, L. M., & Bousquet, J. (2020). Association between consumption of vegetables and COVID-19 mortality at a country level in Europe. MedRxiv, 2020.07.17.20155846. https://doi.org/10.1101/2020.07.17.20155846

Weinberger, D. M., Chen, J., Cohen, T., Crawford, F. W., Mostashari, F., Olson, D., Pitzer, V. E., Reich, N. G., Russi, M., Simonsen, L., Watkins, A., & Viboud, C. (2020). Estimation of Excess Deaths Associated With the COVID-19 Pandemic in the United States, March to May 2020. JAMA Internal Medicine. https://doi.org/10.1001/jamainternmed.2020.3391

Number of people tested for coronavirus (England): 30 January to 27 May 2020. (n.d.). GOV.UK. Retrieved July 17, 2020, from https://www.gov.uk/government/publications/number-of-people-tested-for-coronavirus-england-30-january-to-27-may-2020

Kulu, H., & Dorey, P. (2020). Infection Rates from Covid-19 in Great Britain by Geographical Units: A Model-based Estimation from Mortality Data [Preprint]. SocArXiv. https://doi.org/10.31235/osf.io/84f3e

Haman, M. (2020). The use of Twitter by state leaders and its impact on the public during the COVID-19 pandemic. https://doi.org/10.31235/osf.io/u4maf

Graham, A., Cullen, F. T., Pickett, J., Jonson, C. L., Haner, M., & Sloan, M. M. (2020). Faith in Trump, Moral Foundations, and Social Distancing Defiance During the Coronavirus Pandemic [Preprint]. SocArXiv. https://doi.org/10.31235/osf.io/fudzq

Science Magazine on Twitter: “Democrats & Republicans have seemingly taken a divided stance on how to handle the #COVID19 pandemic, & their Twitter accounts might provide the best evidence to date. Watch the latest video from our #coronavirus series on research from @ScienceAdvances: https://t.co/BSCdWS011J https://t.co/sJyF357wje” / Twitter. (n.d.). Twitter. Retrieved June 27, 2020, from https://twitter.com/sciencemagazine/status/1276282194596675590

Breznau, N. (2020). The Welfare State and Risk Perceptions: The Novel Coronavirus Pandemic and Public Concern in 70 Countries. https://doi.org/10.31235/osf.io/96fd2

Burgess, M. G., Langendorf, R. E., Ippolito, T., & Pielke, R. (2020). Optimistically biased economic growth forecasts and negatively skewed annual variation [Preprint]. SocArXiv. https://doi.org/10.31235/osf.io/vndqr

Deaths registered weekly in England and Wales, provisional—Office for National Statistics. (n.d.). Retrieved July 9, 2020, from https://www.ons.gov.uk/peoplepopulationandcommunity/birthsdeathsandmarriages/deaths/bulletins/deathsregisteredweeklyinenglandandwalesprovisional/weekending26june2020

Fontana, M., Iori, M., Montobbio, F., & Sinatra, R. (2020). New and atypical combinations: An assessment of novelty and interdisciplinarity. Research Policy, 49(7), 104063. https://doi.org/10.1016/j.respol.2020.104063

Fontanet, A., Tondeur, L., Madec, Y., Grant, R., Besombes, C., Jolly, N., Pellerin, S. F., Ungeheuer, M.-N., Cailleau, I., Kuhmel, L., Temmam, S., Huon, C., Chen, K.-Y., Crescenzo, B., Munier, S., Demeret, C., Grzelak, L., Staropoli, I., Bruel, T., … Hoen, B. (2020). Cluster of COVID-19 in northern France: A retrospective closed cohort study. MedRxiv, 2020.04.18.20071134. https://doi.org/10.1101/2020.04.18.20071134

Report: COVID-19 in schools – the experience in NSW | NCIRS. (n.d.). Retrieved July 4, 2020, from http://www.ncirs.org.au/covid-19-in-schools

Sapoval, N., Mahmoud, M., Jochum, M. D., Liu, Y., Elworth, R. A. L., Wang, Q., Albin, D., Ogilvie, H., Lee, M. D., Villapol, S., Hernandez, K., Berry, I. M., Foox, J., Beheshti, A., Ternus, K., Aagaard, K. M., Posada, D., Mason, C., Sedlazeck, F. J., & Treangen, T. J. (2020). Hidden genomic diversity of SARS-CoV-2: Implications for qRT-PCR diagnostics and transmission. BioRxiv, 2020.07.02.184481. https://doi.org/10.1101/2020.07.02.184481

COVID-19: Data Summary—NYC Health. (n.d.). Retrieved July 3, 2020, from https://www1.nyc.gov/site/doh/covid/covid-19-data.page

Lavezzo, E., Franchin, E., Ciavarella, C., Cuomo-Dannenburg, G., Barzon, L., Del Vecchio, C., Rossi, L., Manganelli, R., Loregian, A., Navarin, N., Abate, D., Sciro, M., Merigliano, S., De Canale, E., Vanuzzo, M. C., Besutti, V., Saluzzo, F., Onelia, F., Pacenti, M., … Crisanti, A. (2020). Suppression of a SARS-CoV-2 outbreak in the Italian municipality of Vo’. Nature, 1–1. https://doi.org/10.1038/s41586-020-2488-1

Vaughan, A. (n.d.). R number. New Scientist. Retrieved June 29, 2020, from https://www.newscientist.com/term/r-number/

Rosenberg, E. S., Tesoriero, J. M., Rosenthal, E. M., Chung, R., Barranco, M. A., Styer, L. M., Parker, M. M., John Leung, S.-Y., Morne, J. E., Greene, D., Holtgrave, D. R., Hoefer, D., Kumar, J., Udo, T., Hutton, B., & Zucker, H. A. (2020). Cumulative incidence and diagnosis of SARS-CoV-2 infection in New York. Annals of Epidemiology. https://doi.org/10.1016/j.annepidem.2020.06.004

Bulbulia, J., Barlow, F., Davis, D. E., Greaves, L., Highland, B., Houkamau, C., Milfont, T. L., Osborne, D., Piven, S., Shaver, J., Troughton, G., Wilson, M., Yogeeswaran, K., & Sibley, C. G. (2020). National Longitudinal Investigation of COVID-19 Lockdown Distress Clarifies Mechanisms of Mental Health Burden and Relief [Preprint]. PsyArXiv. https://doi.org/10.31234/osf.io/cswde

Per capita: COVID-19 tests vs. Confirmed deaths. (n.d.). Our World in Data. Retrieved June 23, 2020, from https://ourworldindata.org/grapher/covid-19-tests-deaths-scatter-with-comparisons

COVID-19 Regional Safety Assessment | DKG. (n.d.). DKV. Retrieved June 17, 2020, from https://www.dkv.global/covid-safety-assessment-200-regions

COVID-19: Review of disparities in risks and outcomes. (n.d.). GOV.UK. Retrieved June 15, 2020, from https://www.gov.uk/government/publications/covid-19-review-of-disparities-in-risks-and-outcomes

Ziems, C., He, B., Soni, S., & Kumar, S. (2020). Racism is a Virus: Anti-Asian Hate and Counterhate in Social Media during the COVID-19 Crisis. ArXiv:2005.12423 [Physics]. http://arxiv.org/abs/2005.12423

Gozzi, N., Tizzani, M., Starnini, M., Ciulla, F., Paolotti, D., Panisson, A., & Perra, N. (2020). Collective response to the media coverage of COVID-19 Pandemic on Reddit and Wikipedia. ArXiv:2006.06446 [Physics]. http://arxiv.org/abs/2006.06446

Chu, D. K., Akl, E. A., Duda, S., Solo, K., Yaacoub, S., Schünemann, H. J., Chu, D. K., Akl, E. A., El-harakeh, A., Bognanni, A., Lotfi, T., Loeb, M., Hajizadeh, A., Bak, A., Izcovich, A., Cuello-Garcia, C. A., Chen, C., Harris, D. J., Borowiack, E., … Schünemann, H. J. (2020). Physical distancing, face masks, and eye protection to prevent person-to-person transmission of SARS-CoV-2 and COVID-19: A systematic review and meta-analysis. The Lancet, 0(0). https://doi.org/10.1016/S0140-6736(20)31142-9

Pueyo, T. (2020, June 12). Coronavirus: Should We Aim for Herd Immunity Like Sweden? Medium. https://medium.com/@tomaspueyo/coronavirus-should-we-aim-for-herd-immunity-like-sweden-b1de3348e88b

Boustan, L. P., Kahn, M. E., Rhode, P. W., & Yanguas, M. L. (2020). The Effect of Natural Disasters on Economic Activity in US Counties: A Century of Data. Journal of Urban Economics, 103257. https://doi.org/10.1016/j.jue.2020.103257

Murphy, C., Laurence, E., & Allard, A. (2020). Deep learning of stochastic contagion dynamics on complex networks. ArXiv:2006.05410 [Cond-Mat, Physics:Physics, Stat]. http://arxiv.org/abs/2006.05410

Layer, R. M., Fosdick, B., Larremore, D. B., Bradshaw, M., & Doherty, P. (2020). Case Study: Using Facebook Data to Monitor Adherence to Stay-at-home Orders in Colorado and Utah. MedRxiv, 2020.06.04.20122093. https://doi.org/10.1101/2020.06.04.20122093

Hsiang, S., Allen, D., Annan-Phan, S., Bell, K., Bolliger, I., Chong, T., Druckenmiller, H., Huang, L. Y., Hultgren, A., Krasovich, E., Lau, P., Lee, J., Rolf, E., Tseng, J., & Wu, T. (2020). The effect of large-scale anti-contagion policies on the COVID-19 pandemic. Nature, 1–9. https://doi.org/10.1038/s41586-020-2404-8

Efron, B. (2020). Prediction, Estimation, and Attribution. Journal of the American Statistical Association, 115(530), 636–655. https://doi.org/10.1080/01621459.2020.1762613

Oliver, N., Lepri, B., Sterly, H., Lambiotte, R., Deletaille, S., Nadai, M. D., Letouzé, E., Salah, A. A., Benjamins, R., Cattuto, C., Colizza, V., Cordes, N. de, Fraiberger, S. P., Koebe, T., Lehmann, S., Murillo, J., Pentland, A., Pham, P. N., Pivetta, F., … Vinck, P. (2020). Mobile phone data for informing public health actions across the COVID-19 pandemic life cycle. Science Advances, 6(23), eabc0764. https://doi.org/10.1126/sciadv.abc0764

Steinmetz, H., Batzdorfer, V., & Bosnjak, M. (2020). The ZPID lockdown measures dataset for Germany [Report]. ZPID (Leibniz Institute for Psychology Information). http://dx.doi.org/10.23668/psycharchives.3019

Kempfert, K., Martinez, K., Siraj, A., Conrad, J., Fairchild, G., Ziemann, A., Parikh, N., Osthus, D., Generous, N., Del Valle, S., & Manore, C. (2020). Time Series Methods and Ensemble Models to Nowcast Dengue at the State Level in Brazil. ArXiv:2006.02483 [q-Bio, Stat]. http://arxiv.org/abs/2006.02483

Blackman, J., & Goldenstein, T. (2020, May 21). Using cellphone data, national study predicts huge June spike in Houston coronavirus cases. HoustonChronicle.Com. https://www.houstonchronicle.com/news/houston-texas/houston/article/Using-cellphone-data-national-study-predicts-15286096.php

hedonometer on Twitter: “Yesterday was the saddest day in the history of @Twitter https://t.co/91VP3Ywtnr” / Twitter. (n.d.). Twitter. Retrieved June 1, 2020, from https://twitter.com/hedonometer/status/1266774310565425154

Zdeborová, L. (2020). Understanding deep learning is also a job for physicists. Nature Physics, 1–3. https://doi.org/10.1038/s41567-020-0929-2

The Ethics of Online Research. (2018, August 30). Leeds University Library Blog. https://leedsunilibrary.wordpress.com/2018/08/30/the-ethics-of-online-research/

A flood of coronavirus apps are tracking us. Now it’s time to keep track of them. (n.d.). MIT Technology Review. Retrieved May 12, 2020, from https://www.technologyreview.com/2020/05/07/1000961/launching-mittr-covid-tracing-tracker/

Countries are using apps and data networks to keep tabs on the pandemic. (2020, March 26). The Economist. https://www.economist.com/briefing/2020/03/26/countries-are-using-apps-and-data-networks-to-keep-tabs-on-the-pandemic?fsrc=newsletter&utm_campaign=the-economist-today&utm_medium=newsletter&utm_source=salesforce-marketing-cloud&utm_term=2020-05-07&utm_content=article-link-1

Drew, D. A., Nguyen, L. H., Steves, C. J., Menni, C., Freydin, M., Varsavsky, T., Sudre, C. H., Cardoso, M. J., Ourselin, S., Wolf, J., Spector, T. D., Chan, A. T., & Consortium, C. (2020). Rapid implementation of mobile technology for real-time epidemiology of COVID-19. Science. https://doi.org/10.1126/science.abc0473

r/BehSciResearch—Social Licensing of Privacy-Encroaching Policies to Address COVID. (n.d.). Reddit. Retrieved April 27, 2020, from https://www.reddit.com/r/BehSciResearch/comments/fq0rvm/social_licensing_of_privacyencroaching_policies/

Gao, S., Rao, J., Kang, Y., Liang, Y., & Kruse, J. (2020). Mapping county-level mobility pattern changes in the United States in response to COVID-19. ArXiv:2004.04544 [Physics, q-Bio]. http://arxiv.org/abs/2004.04544

Editor, A. (2020, March 31). UCLA Researchers Use Big Data Expertise to Create a News Media Resource on the COVID-19 Crisis. LA Social Science. https://lasocialscience.ucla.edu/2020/03/31/ucla-researchers-use-big-data-expertise-to-create-a-news-media-resource-on-the-covid-19-crisis/

One important aspect of critical social media research is the study of not just ideologies of the Internet but also ideologies on the Internet. Critical discourse analysis and ideology critique as research method have only been applied in a limited manner to social media data. Majid KhosraviNik (2013) argues in this context that ‘critical discourse analysis appears to have shied away from new media research in the bulk of its research’ (p. 292). Critical social media discourse analysis is a critical digital method for the study of how ideologies are expressed on social media in light of society’s power structures and contradictions that form the texts’ contexts.

It has, for example, been common to study contemporary revolutions and protests (such as the 2011 Arab Spring) by collecting large amounts of tweets and analysing them. Such analyses can, however, tell us nothing about the degree to which activists use social and other media in protest communication, what their motivations are to use or not use social media, what their experiences have been, what problems they encounter in such uses and so on. If we only analyse big data, then the one-sided conclusion that contemporary rebellions are Facebook and Twitter revolutions is often the logical consequence (see Aouragh, 2016; Gerbaudo, 2012). Digital methods do not outdate but require traditional methods in order to avoid the pitfall of digital positivism. Traditional sociological methods, such as semi-structured interviews, participant observation, surveys, content and critical discourse analysis, focus groups, experiments, creative methods, participatory action research, statistical analysis of secondary data and so on, have not lost importance. We do not just have to understand what people do on the Internet but also why they do it, what the broader implications are, and how power structures frame and shape online activities.

Challenging big data analytics as the mainstream of digital media studies requires us to think about theoretical (ontological), methodological (epistemological) and ethical dimensions of an alternative paradigm.

Making the case for the need for digitally native research methodologies.

Who communicates what to whom on social media with what effects? It forgets users’ subjectivity, experiences, norms, values and interpretations, as well as the embeddedness of the media into society’s power structures and social struggles. We need a paradigm shift from administrative digital positivist big data analytics towards critical social media research. Critical social media research combines critical social media theory, critical digital methods and critical-realist social media research ethics.

de-emphasis of philosophy, theory, critique and qualitative analysis advances what Paul Lazarsfeld (2004 [1941]) termed administrative research, research that is predominantly concerned with how to make technologies and administration more efficient and effective.

Big data analytics’ trouble is that it often does not connect statistical and computational research results to a broader analysis of human meanings, interpretations, experiences, attitudes, moral values, ethical dilemmas, uses, contradictions and macro-sociological implications of social media.

Such funding initiatives privilege quantitative, computational approaches over qualitative, interpretative ones.

There is a tendency in Internet Studies to engage with theory only on the micro- and middle-range levels that theorize single online phenomena but neglect the larger picture of society as a totality (Rice and Fuller, 2013). Such theories tend to be atomized. They just focus on single phenomena and miss society’s big picture.

A final word: when we do not understand something, it does not look like there is anything to be understood at all - it just looks like random noise. Just because it looks like noise does not mean there is no hidden structure.

Excellent statement! Could this be the guiding principle of the current big data boom in biology?

The Chinese place a higher value on community good versus individual rights, so most feel that, if social credit will bring a safer, more secure, more stable society, then bring it on

Thus it becomes possible to see how questions around data use need to shift from asking what is in the data, to include discussions of how the data is structured, and how this structure codifies value systems and social practices, subject positions and forms of visibility and invisibility (and thus forms of surveillance), along with the very ideas of crisis, risk governance and preparedness. Practices around big data produce and perpetuate specific forms of social engagement as well as understandings of the areas affected and the people being served.

How data structure influences value systems and social practices is a much-needed topic of inquiry.

Big data is not just about knowing more. It could be – and should be – about knowing better or about changing what knowing means. It is an ethico-episteme-ontological-political matter. The ‘needle in the haystack’ metaphor conceals the fact that there is no such thing as one reality that can be revealed. But multiple, lived realities are made through mediations and human and technological assemblages. Refugees’ realities of intersecting intelligences are shaped by the ethico-episteme-ontological politics of big data.

Big, sweeping statement that helps frame how big data could be better conceptualized as a complex, socially contextualized, temporal artifact.

Burns (2015) builds on this to investigate how within digital humanitarianism discourses, big data produce and perform subjects ‘in need’ (individuals or communities affected by crises) and a humanitarian ‘saviour’ community that, in turn, seeks answers through big data

I don't understand what Burns is arguing here. Who does he say is claiming that DHN is a "savior" or "the solution" to crisis response?

"Big data should therefore be conceptualized as a framing of what can be known about a humanitarian crisis, and how one is able to grasp that knowledge; in short, it is an epistemology. This epistemology privileges knowledges and knowledge-based practices originating in remote geographies and de-emphasizes the connections between multiple knowledges.... Put another way, this configuration obscures the funding, resource, and skills constraints causing imperfect humanitarian response, instead positing volunteered labor as ‘the solution.’ This subjectivity formation carves a space in which digital humanitarians are necessary for effective humanitarian activities." (Burns 2015: 9–10)

Crises are often not a crisis of information. It is often not a lack of data or capacity to analyse it that prevents ‘us’ from preventing disasters or responding effectively. Risk management fails because there is a lack of a relational sense of responsibility. But this does not have to be the case. Technologies that are designed to support collaboration, such as what Jasanoff (2007) terms ‘technologies of humility’, can be better explored to find ways of framing data and correlations that elicit a greater sense of relational responsibility and commitment.

Is it "a lack of relational sense of responsibility" in crisis response (state vs private sector vs public) or is it the wicked problem of power, class, social hierarchies, etc.?

"... ways of framing data and correlations that elicit a greater sense of responsibility and commitment."

That could have a temporal component to it to position urgency, timescape, horizon, etc.

In some ways this constitutes the production of ‘liquid resilience’ – a deflection of risk to the individuals and communities affected which moves us from the idea of an all-powerful and knowing state to that of a ‘plethora of partial projects and initiatives that are seeking to harness ICTs in the service of better knowing and governing individuals and populations’ (Ruppert 2012: 118)

This critique addresses surveillance state concerns about gluing datasets together to form a broader understanding of aggregate social behavior without the necessary constraints/warnings about social contexts and discontinuity between data.

Skimmed the Ruppert paper, sadly doesn't engage with time and topologies.

Indeed, as Chandler (2015: 9) also argues, crowdsourcing of big data does not equate to a democratisation of risk assessment or risk governance:

Beyond this quote, Chandler (in engaging crisis/disaster scenarios) argues that Big Data may be more appropriately framed as community reflexive knowledge than causal knowledge. That's an interesting idea.

*"Thus, it would be more useful to see Big Data as reflexive knowledge rather than as causal knowledge. Big Data cannot help explain global warming but it can enable individuals and households to measure their own energy consumption through the datafication of household objects and complex production and supply chains. Big Data thereby datafies or materialises an individual or community’s being in the world. This reflexive approach works to construct a pluralised and multiple world of self-organising and adaptive processes. The imaginary of Big Data is that the producers and consumers of knowledge and of governance would be indistinguishable; where both knowing and governing exist without external mediation, constituting a perfect harmonious and self-adapting system: often called ‘community resilience’. In this discourse, increasingly articulated by governments and policy-makers, knowledge of causal connections is no longer relevant as communities adapt to the real-time appearances of the world, without necessarily understanding them."

"Rather than engaging in external understandings of causality in the world, Big Data works on changing social behaviour by enabling greater adaptive reflexivity. If, through Big Data, we could detect and manage our own biorhythms and know the effects of poor eating or a lack of exercise, we could monitor our own health and not need costly medical interventions. Equally, if vulnerable and marginal communities could ‘datafy’ their own modes of being and relationships to their environments they would be able to augment their coping capacities and resilience without disasters or crises occurring. In essence, the imaginary of Big Data resolves the essential problem of modernity and modernist epistemologies, the problem of unintended consequences or side-effects caused by unknown causation, through work on the datafication of the self in its relational-embeddedness. This is why disasters in current forms of resilience thinking are understood to be ‘transformative’: revealing the unintended consequences of social planning which prevented proper awareness and responsiveness. Disasters themselves become a form of ‘datafication’, revealing the existence of poor modes of self-governance."*

Downloaded Chandler paper. Cites Meier quite a bit.

However, with these big data collections, the focus becomes not the individual’s behaviour but social and economic insecurities, vulnerabilities and resilience in relation to the movement of such people. The shift acknowledges that what is surveilled is more complex than an individual person’s movements, communications and actions over time.

The shift from INGO emergency response/logistics to state-sponsored, individualized resilience via the private sector seems profound here.

There's also a subtle temporal element here of surveilling need and collecting data over time.

Again, raises serious questions about the use of predictive analytics, data quality/classification, and PII ethics.

Andrejevic and Gates (2014: 190) suggest that ‘the target becomes the hidden patterns in the data, rather than particular individuals or events’. National and local authorities are not seeking to monitor individuals and discipline their behaviour but to see how many people will reach the country and when, so that they can accommodate them, secure borders, and identify long-term social outlooks such as education, civil services, and impacts upon the host community (Pham et al. 2015).

This seems like a terribly naive conclusion about mass data collection by the state.

Also:

"Yet even if capacities to analyse the haystack for needles more adequately were available, there would be questions about the quality of the haystack, and the meaning of analysis. For ‘Big Data is not self-explanatory’ (Bollier 2010: 13, in boyd and Crawford 2012). Neither is big data necessarily good data in terms of quality or relevance (Lesk 2013: 87) or complete data (boyd and Crawford 2012)."

as boyd and Crawford argue, ‘without taking into account the sample of a data set, the size of the data set is meaningless’ (2012: 669). Furthermore, many techniques used by the state and corporations in big data analysis are based on probabilistic prediction which, some experts argue, is alien to, and even incomprehensible for, human reasoning (Heaven 2013). As Mayer-Schönberger stresses, we should be ‘less worried about privacy and more worried about the abuse of probabilistic prediction’ as these processes confront us with ‘profound ethical dilemmas’ (in Heaven 2013: 35).

Primary problems to resolve regarding the use of "big data" in humanitarian contexts: dataset size/sample, predictive analytics are contrary to human behavior, and ethical abuses of PII.
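
To make the dataset size/sample point concrete for myself, here is a minimal Python sketch. Everything in it is an invented assumption for illustration (the two groups, their opinion rates, the platform adoption rates, the function name `compare_samples`); none of it comes from boyd and Crawford or from real data. It compares a very large but self-selected "platform" dataset with a small random sample drawn from the same hypothetical population.

```python
import random


def compare_samples(seed=0, population_size=100_000, random_sample_size=500):
    """Compare a large self-selected dataset with a small random sample.

    All rates below are hypothetical and chosen only to illustrate the idea.
    """
    rng = random.Random(seed)
    population = []
    for _ in range(population_size):
        # Assumption: half the population belongs to group A, half to group B.
        in_group_a = rng.random() < 0.5
        # Assumption: group A holds the opinion more often than group B.
        holds_opinion = rng.random() < (0.7 if in_group_a else 0.3)
        # Assumption: group A is far more likely to be on the platform.
        on_platform = rng.random() < (0.8 if in_group_a else 0.2)
        population.append((holds_opinion, on_platform))

    # Ground truth, which a real analyst would not have access to.
    true_share = sum(opinion for opinion, _ in population) / population_size

    # "Big data": every person who happens to be on the platform.
    platform_data = [opinion for opinion, on in population if on]
    platform_share = sum(platform_data) / len(platform_data)

    # Small data: a simple random sample of the whole population.
    random_sample = rng.sample(population, random_sample_size)
    random_share = sum(opinion for opinion, _ in random_sample) / random_sample_size

    return {
        "true_share": true_share,
        "platform_share": platform_share,
        "platform_n": len(platform_data),
        "random_share": random_share,
        "random_n": random_sample_size,
    }


if __name__ == "__main__":
    for name, value in compare_samples().items():
        print(f"{name}: {value}")
```

Under these assumptions the platform dataset is many times larger than the random sample, yet its estimate sits further from the true share, because who ends up in the data is correlated with what is being measured. Adding more platform users would not fix that.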

We show how the rise of large datasets, in conjunction with a rising interest in data as scholarly output, contributes to the advent of data sharing platforms in a field traditionally organized by infrastructures.

What does this paper mean by infrastructures? Perhaps this is a reference to the traditional scholarly journals and monographs.

Human cognition loses its personal character. Individuals turn into data, and data become regnant

Reminds me of The End of Theory. But if we lose the theory, the human understanding, what will be the consequences?

Get the best explanation of Talend through a training and tutorial course with real-time experience, exercises and real-time projects, for better hands-on practice from scratch to an advanced level.

So check this link and learn: https://www.youtube.com/watch?v=lhTPrpBvakw

big data solutions for cardiovascular and oncological research.

patient adherence

Patient adherence strategy and Big Data

reliability and accessibility of big data will help facilitate increased reliance upon outcomes-based contracting and alternative payment models.

We also downloaded Twitter user profiles, such as the size of followers, along with their profile description.

I wonder how many profiles there are in the 3,389 tweets? Did they automate the review and capture of the details? Or did they review each profile by hand?

These digital traces are often referred to as big data and are popularly discussed as a resource, a raw material with qualities to be mined and capitalized, the new oil to be tapped to spur economies. Through a variety of practices of valuation, corporations not only exploit the digital traces of their customers to maximize their operations but also sell those traces to others. For that reason, citizen subjects who use platforms such as Google are sometimes referred to not as its customers but as its product.

The company's API for scoring toxicity in online discussions already behaves like a racist hand dryer.

this is just so awful.

what are our best, shared hopes for DH? What tasks and projects might we take up, or tie in? What are our functions—or, if you prefer, our vocations, now

The Digital Recovery of Texts. Due to computer-assisted approaches to paleography (the study of ancient writing systems and the deciphering and dating of historical manuscripts) and the steady advances in the field of digital preservation: "Resurrection can be grisly work; I think we come to understand extinction better in our struggles."

DH has a public and transformative role to play: Big Data and the Longue Durée

Unable to look at the article by Armitage, D. & Guldi, J. on The Return of the Longue Durée: hit by a paywall each time.

Look at the great article on WWW.WIRED.COM.

https://www.wired.com/2014/01/return-of-history-long-timescales/

"The return of the longue durée is intimately connected to changing questions of scale. In a moment of ever-growing inequality, amid crises of global governance, and under the impact of anthropogenic climate change, even a minimal understanding of the conditions shaping our lives demands a scaling-up of our inquiries. "

What does she mean by BIG DATA? Read Samuel Arbesman's article in The Washington Post for an easy explanation: https://www.washingtonpost.com/opinions/five-myths-about-big-data/2013/08/15/64a0dd0a-e044-11e2-963a-72d740e88c12_story.html?utm_term=.54ff7fdf82fe

A data lake management service with an Apache licence. I am particularly interested in how well the monitoring features of this platform work.

In fact, academics now regularly tap into the reservoir of digitized material that Google helped create, using it as a dataset they can query, even if they can’t consume full texts.

It's good to understand that exploring a corpus for "brainstorming" or discovering heretofore unseen connections is different from a discovery query that is meant to give access to an entire text.

‘Precision Education’ Hopes to Apply Big Data to Lift Diverse Student Groups

literature became data

Doesn't this obfuscate the process? Literature became digital. Digital enables a wide range of further activity to take place on top of literature, including, perhaps, its datafication.

volume, velocity, and variety

Volume: the actual size of the traffic.

Velocity: how fast the traffic shows up.

Variety: refers to data that can be unstructured, semi-structured or multi-structured.

By producing free (or very accessible), high-performing services that add high value to the data they generate, these companies capture a gigantic share of users' digital activities. They thereby become the main service providers that governments must deal with if they want to enforce the law, particularly in the context of population surveillance and security operations.

That is why the GAFAM are as powerful as states (or even more so).

How Uber Uses Psychological Tricks to Push Its Drivers’ Buttons

Persuasion

Corporate thought leaders have now realized that it is a much greater challenge to actually apply that data. The big takeaways in this topic are that data has to be seen to be acknowledged, tangible to be appreciated, and relevantly presented to have an impact. Connecting data on the macro level across an organization and then bringing it down to the individual stakeholder on the micro level seems to be the key in getting past the fact that right now big data is one thing to have and quite another to unlock.

Simply possessing pools of data is of limited utility. It's like having a space ship but your only access point to it is through a pin hole in the garage wall that lets you see one small, random glint of ship; you (think you) know there's something awesome inside but that sense is really all you've got. Margaret points out that it has to be seen (data visualization), it has to be tangible (relevant to audience) and connected at micro and macro levels (storytelling). For all of the machine learning and AI that helps us access the spaceship, these key points are (for now) human-driven.

Either we own political technologies, or they will own us. The great potential of big data, big analysis and online forums will be used by us or against us. We must move fast to beat the billionaires.

Not in the right major. Not in the right class. Not in the right school. Not in the right country.

There's a bit of a slippery slope here, no? Maybe it's Audrey on that slope, maybe it's data-happy schools/companies. In either case, I wonder if it might be productive to lay claim to some space on that slope, short of the dangers below, aware of them, and working to responsibly leverage machine intelligence alongside human understanding.

Ed-Tech in a Time of Trump

in order to facilitate advisors holding more productive conversations about potential academic directions with their advisees.

Conversations!

Each morning, all alerts triggered over the previous day are automatically sent to the advisor assigned to the impacted students, with a goal of advisor outreach to the student within 24 hours.

Key that there's still a human and human relationships in the equation here.

A single screen for each student offers all of the information that advisors reported was most essential to their work,

Did students have access to the same data?

and Georgia State's IT and legal offices readily accepted the security protocols put in place by EAB to protect the student data.

So it's not as if this was done willy-nilly.

Machine learning:

The importance of models may need to be underscored in this age of “big data” and “data mining”. Data, no matter how big, can only tell you what happened in the past. Unless you’re a historian, you actually care about the future — what will happen, what could happen, what would happen if you did this or that. Exploring these questions will always require models. Let’s get over “big data” — it’s time for “big modeling”.

Page 14

Rockwell and Sinclair note that corporations are mining text including our email; as they say here:

more and more of our private textual correspondence is available for large-scale analysis and interpretation. We need to learn more about these methods to be able to think through the ethical, social, and political consequences. The humanities have traditions of engaging with issues of literacy, and big data should not be an exception. How to analyze, interpret, and exploit big data are big problems for the humanities.

big data

Algorithms need supposedly neutral data... this rather goes along with the promotional discourse surrounding these algorithms and recommendation services, which treats their data as "natural", without intrinsic value (see Bonenfant 2015).

Data are not useful in and of themselves. They only have utility if meaning and value can be extracted from them. In other words, it is what is done with data that is important, not simply that they are generated. The whole of science is based on realising meaning and value from data. Making sense of scaled small data and big data poses new challenges. In the case of scaled small data, the challenge is linking together varied datasets to gain new insights and opening up the data to new analytical approaches being used in big data. With respect to big data, the challenge is coping with its abundance and exhaustivity (including sizeable amounts of data with low utility and value), timeliness and dynamism, messiness and uncertainty, high relationality, semi-structured or unstructured nature, and the fact that much of big data is generated with no specific question in mind or is a by-product of another activity. Indeed, until recently, data analysis techniques have primarily been designed to extract insights from scarce, static, clean and poorly relational datasets, scientifically sampled and adhering to strict assumptions (such as independence, stationarity, and normality), and generated and analysed with a specific question in mind.

Good discussion of the different approaches allowed/required by small v. big data.

We should have control of the algorithms and data that guide our experiences online, and increasingly offline. Under our guidance, they can be powerful personal assistants.

Big business has been very militant about protecting their "intellectual property". Yet they regard every detail of our personal lives as theirs to collect and sell at whim. What a bunch of little darlings they are.

The New Politics of Educational Data

Ranty Blog Post about Big Data, Learning Analytics, & Higher Ed

As Big-Data Companies Come to Teaching, a Pioneer Issues a Warning

50 Years of Data Science, David Donoho, 2015, 41 pages

This paper reviews some ingredients of the current "Data Science moment", including recent commentary about data science in the popular media, and about how/whether Data Science is really different from Statistics.

The now-contemplated field of Data Science amounts to a superset of the fields of statistics and machine learning which adds some technology for 'scaling up' to 'big data'.

The idea was to pinpoint the doctors prescribing the most pain medication and target them for the company’s marketing onslaught. That the databases couldn’t distinguish between doctors who were prescribing more pain meds because they were seeing more patients with chronic pain or were simply looser with their signatures didn’t matter to Purdue.

Shared information

The “social”, with an embedded emphasis on the data part of knowledge building and a nod to solidarity. Cloud computing does go well with collaboration and spelling out the difference can help lift some confusion.

Big data to knowledge (BD2K)

Would like to know more about this term and the HHS initiative.

the critical role that big data open source projects play in the Internet of Things (IoT).